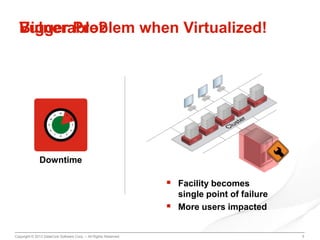

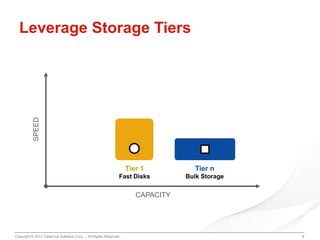

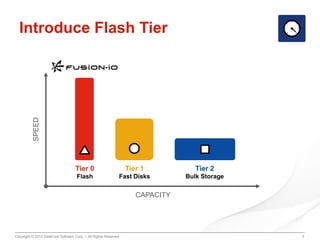

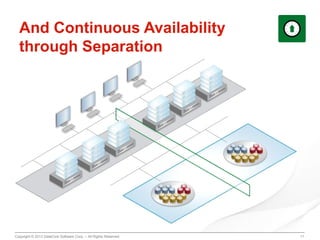

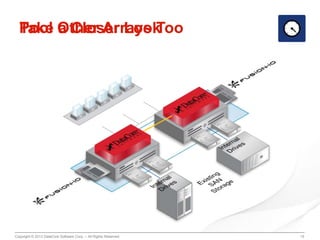

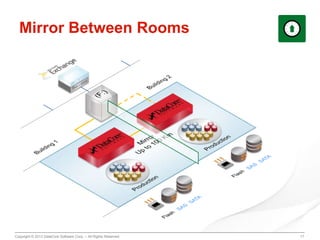

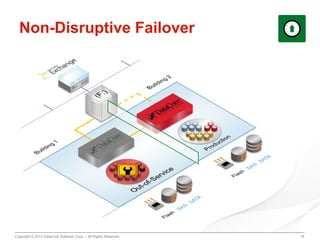

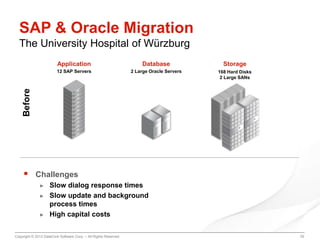

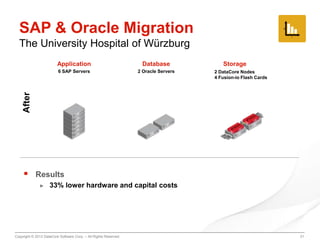

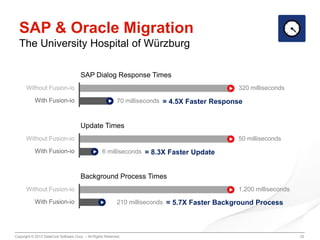

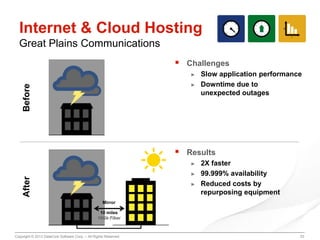

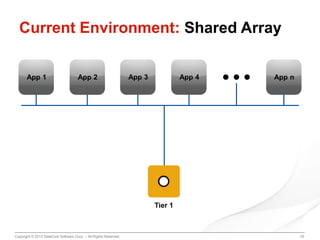

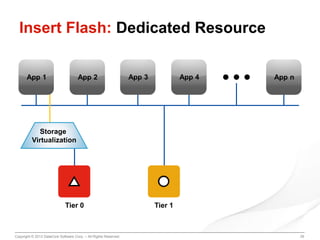

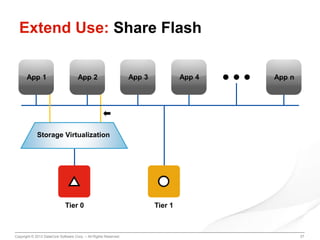

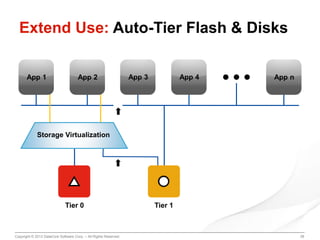

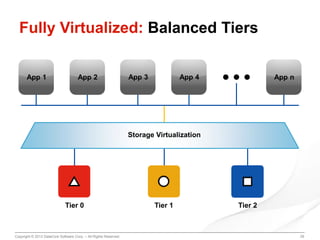

The document discusses using flash storage to improve the performance of virtualized workloads. It describes three main pain points: weak performance caused by I/O bottlenecks, service interruptions caused by downtime, and complex data management. According to the document, introducing a flash tier can deliver 2-5x faster performance and keep services continuously available even if the main facility suffers an outage. Case studies show flash storage improving response times for SAP and Oracle databases and providing 99.999% uptime for an internet hosting company. The document closes with a blueprint for adopting flash storage: first dedicate it to specific workloads, then extend its use across more applications and storage tiers.
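The 99.999% ("five nines") uptime figure is easier to judge when converted into the maximum downtime it allows. The following sketch assumes an average 365.25-day year. The helper name `max_downtime_minutes_per_year` is hypothetical and not taken from the document.

```python
# Minutes in an average year, assuming 365.25 days (to account for leap years).
MINUTES_PER_YEAR = 365.25 * 24 * 60


def max_downtime_minutes_per_year(availability_pct: float) -> float:
    """Return the downtime (minutes per year) permitted by an availability percentage.

    Hypothetical helper for illustration only; not from the source document.
    """
    if not 0.0 <= availability_pct <= 100.0:
        raise ValueError("availability_pct must be between 0 and 100")
    # Fraction of the year the service is allowed to be unavailable.
    unavailable_fraction = (100.0 - availability_pct) / 100.0
    return MINUTES_PER_YEAR * unavailable_fraction


if __name__ == "__main__":
    # Compare common availability targets.
    for pct in (99.9, 99.99, 99.999):
        print(f"{pct}% uptime -> {max_downtime_minutes_per_year(pct):.2f} min/year")
```

Under this assumption, five nines allows roughly 5.26 minutes of downtime per year, compared with about 8.8 hours per year at 99.9%.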