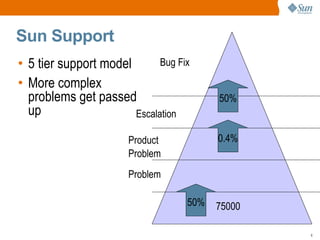

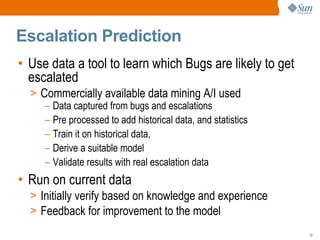

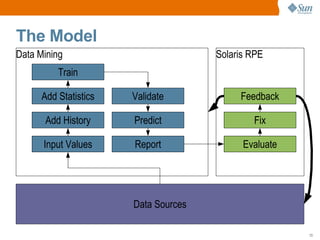

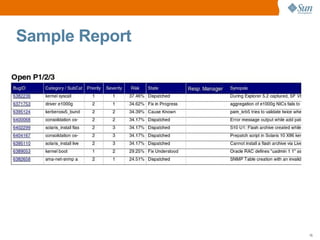

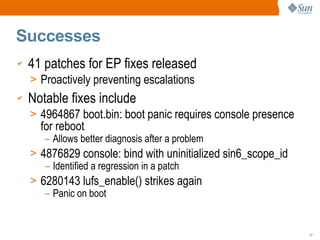

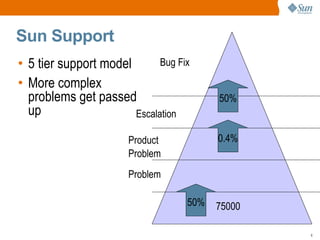

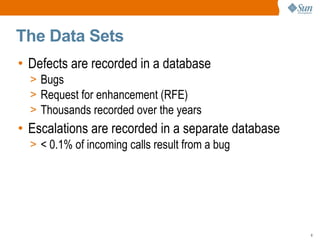

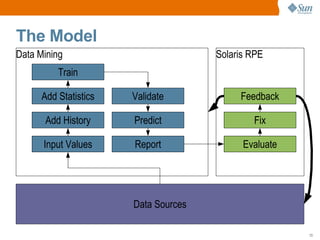

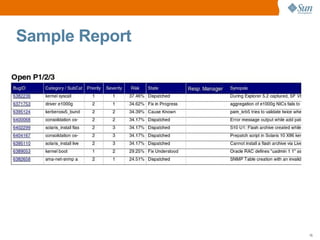

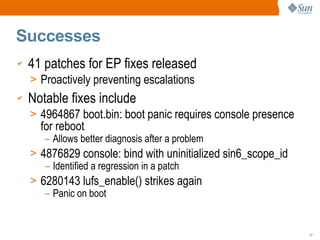

Sun Microsystems built a defect prediction model from historical bug and escalation data to identify bugs likely to escalate, so that they could be fixed proactively. The effort succeeded at first, producing 41 patches that prevented escalations, along with some notable fixes. Over time, however, the model declined for lack of resources, expertise, and executive support. The lessons learned were to ring-fence resources, keep the model simple, and improve it continually. Sun now relies on regular release criteria and incoming bug triage to identify bugs to fix proactively, and is considering upgraded tools and run-time checking as a possible way to revisit defect prediction.