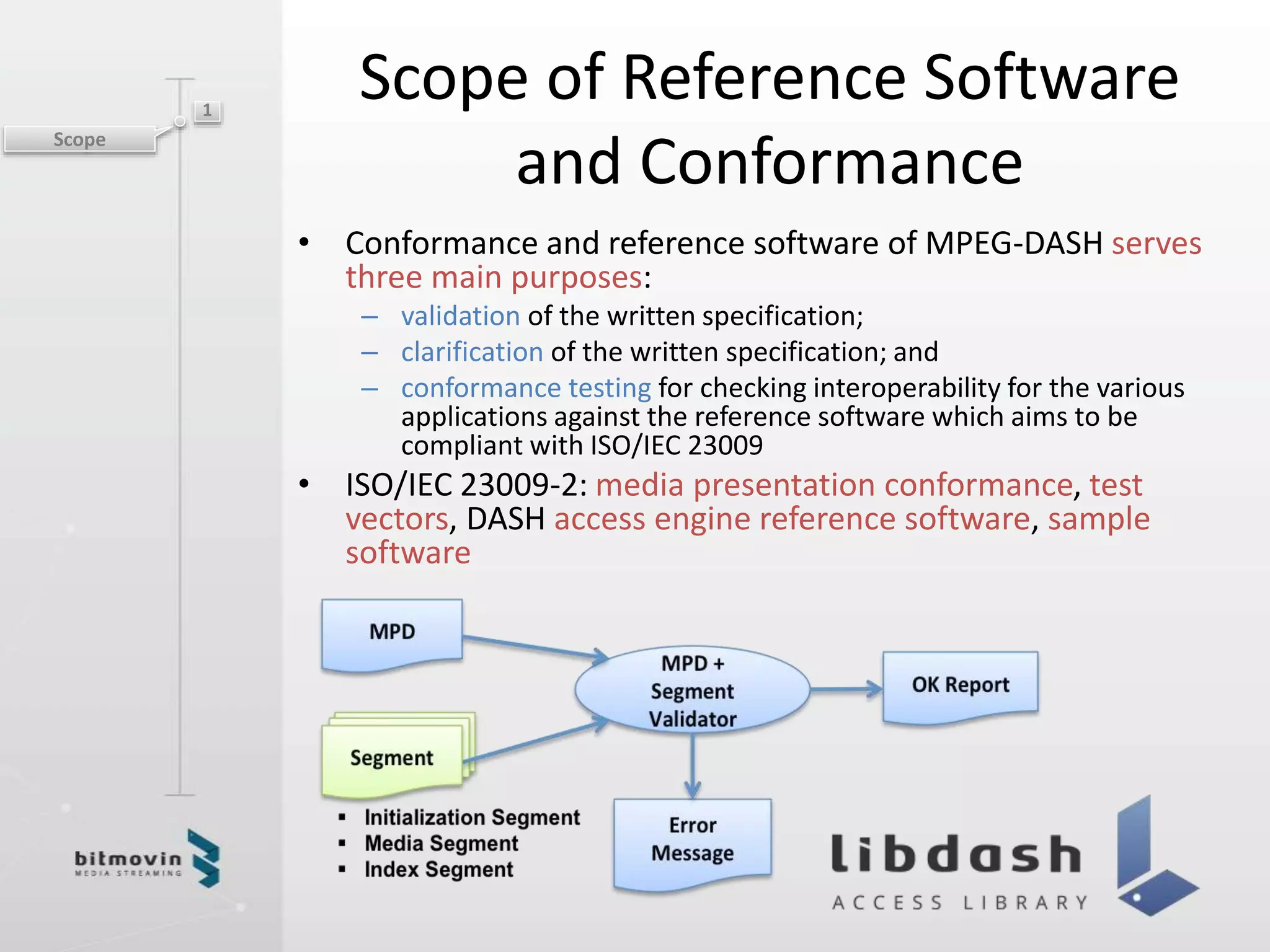

This document covers the MPEG-DASH reference software and conformance tools. The reference software serves three purposes: validating the specification, clarifying ambiguities in it, and testing interoperability between implementations. Its components are an MPD validator, a segment conformance checker, a dynamic service validator, DASH access client software, and sample players and encoding tools. Together these form a toolset for conformance testing and verification of the DASH specification.
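To give a rough idea of what the MPD validator component checks, here is a minimal sketch in Python. It is not the reference software and does not perform full schema validation. It only tests a few structural facts about an MPD: the root element and namespace, the two required root attributes (`profiles` and `minBufferTime`), and the presence of at least one `Period`. The function name `check_mpd` and the `SAMPLE_MPD` manifest are hypothetical and made up for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace of the MPD schema defined in ISO/IEC 23009-1.
DASH_NS = "urn:mpeg:dash:schema:mpd:2011"

# Hypothetical, heavily trimmed manifest used only for this example.
SAMPLE_MPD = """<MPD xmlns="urn:mpeg:dash:schema:mpd:2011"
     type="static"
     profiles="urn:mpeg:dash:profile:isoff-on-demand:2011"
     minBufferTime="PT2S"
     mediaPresentationDuration="PT30S">
  <Period>
    <AdaptationSet mimeType="video/mp4">
      <Representation id="v1" bandwidth="500000"/>
    </AdaptationSet>
  </Period>
</MPD>"""


def check_mpd(xml_text):
    """Return a list of problems; an empty list means the basic checks passed."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as exc:
        return [f"not well-formed XML: {exc}"]

    # The root must be MPD in the DASH namespace; stop early if it is not.
    if root.tag != f"{{{DASH_NS}}}MPD":
        return [f"unexpected root element: {root.tag}"]

    problems = []
    # Both attributes are required on the MPD element by the schema.
    for attr in ("profiles", "minBufferTime"):
        if attr not in root.attrib:
            problems.append(f"missing required attribute: {attr}")

    # An MPD must contain at least one Period as a direct child.
    if root.find(f"{{{DASH_NS}}}Period") is None:
        problems.append("no Period element")
    return problems


if __name__ == "__main__":
    print(check_mpd(SAMPLE_MPD))
```

A real validator would go much further, for example by validating against the full XML schema and applying the additional rules that go beyond what the schema can express. The sketch only shows the general shape of such a check: parse, inspect, and report a list of violations.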