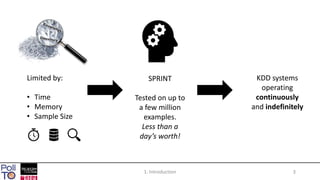

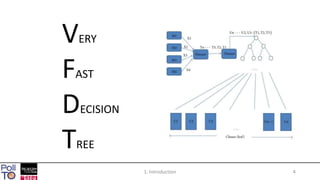

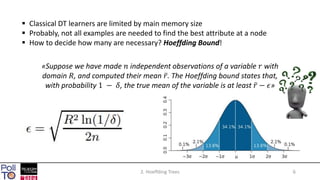

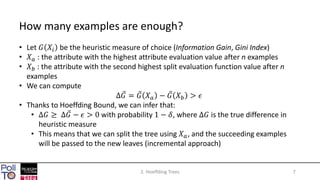

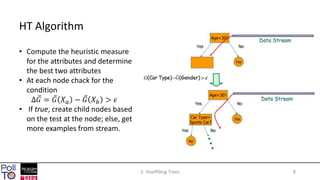

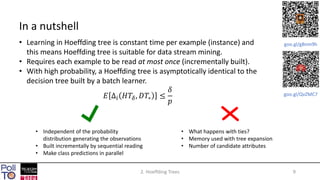

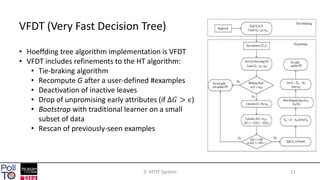

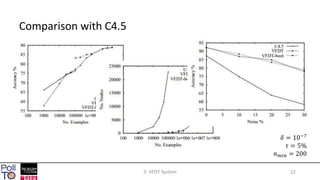

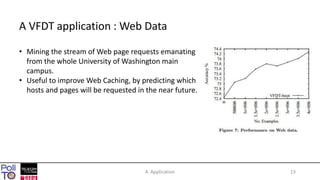

The document discusses mining high-speed data streams with Hoeffding Trees and the Very Fast Decision Tree (VFDT) algorithm, which supports efficient, incremental mining of data streams. Hoeffding Trees use the Hoeffding bound to decide how many examples a leaf must see before it can choose a split attribute with high confidence. Because each example only updates counts kept at a leaf, learning takes constant time per example. The document also highlights an application of VFDT to web data analysis and outlines directions for future research.
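The split test can be illustrated with a minimal Python sketch. It assumes discrete attributes, information gain as the split criterion (so the range is $R = \log_2(\text{number of classes})$), and a user-chosen confidence parameter $\delta$. With probability $1-\delta$, the observed mean of $n$ samples lies within $\epsilon = \sqrt{R^2 \ln(1/\delta) / (2n)}$ of the true mean. A leaf therefore splits once the best attribute's gain beats the runner-up's by more than $\epsilon$. The names `LeafStats`, `info_gain`, and `try_split` are hypothetical. VFDT refinements such as tie-breaking and checking for splits only every few examples are left out.

```python
import math
from collections import defaultdict


def hoeffding_bound(value_range, delta, n):
    """Epsilon such that, with probability 1 - delta, the true mean is within
    epsilon of the mean observed over n independent examples."""
    return math.sqrt(value_range ** 2 * math.log(1.0 / delta) / (2.0 * n))


def entropy(class_counts):
    """Entropy (in bits) of a {class: count} mapping."""
    total = sum(class_counts.values())
    if total == 0:
        return 0.0
    return -sum((c / total) * math.log2(c / total)
                for c in class_counts.values() if c > 0)


class LeafStats:
    """Hypothetical per-leaf sufficient statistics: counts of
    (attribute index, attribute value, class)."""

    def __init__(self, n_attributes):
        self.n = 0
        self.class_counts = defaultdict(int)
        self.counts = [defaultdict(lambda: defaultdict(int))
                       for _ in range(n_attributes)]

    def update(self, x, y):
        # Cost depends only on the number of attributes, not on how many
        # examples have been seen: constant time per example.
        self.n += 1
        self.class_counts[y] += 1
        for i, v in enumerate(x):
            self.counts[i][v][y] += 1

    def info_gain(self, i):
        remainder = 0.0
        for value_counts in self.counts[i].values():
            size = sum(value_counts.values())
            remainder += size / self.n * entropy(value_counts)
        return entropy(self.class_counts) - remainder

    def try_split(self, delta, n_classes):
        """Return the attribute to split on, or None if more examples are needed."""
        if self.n == 0:
            return None
        gains = sorted(((self.info_gain(i), i) for i in range(len(self.counts))),
                       reverse=True)
        best_gain, best_attr = gains[0]
        second_gain = gains[1][0] if len(gains) > 1 else 0.0
        eps = hoeffding_bound(math.log2(n_classes), delta, self.n)
        return best_attr if best_gain - second_gain > eps else None


if __name__ == "__main__":
    leaf = LeafStats(n_attributes=2)
    for k in range(1000):
        # Attribute 0 determines the class; attribute 1 is constant noise.
        leaf.update((k % 2, 0), k % 2)
    print(leaf.try_split(delta=1e-7, n_classes=2))  # → 0
```

With $R = 1$ and $\delta = 10^{-7}$, $\epsilon \approx 0.09$ after 1000 examples, so the perfectly predictive attribute 0 is selected. After only two examples, $\epsilon \approx 2.0$ exceeds any possible gain difference, so the leaf keeps waiting for more data.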