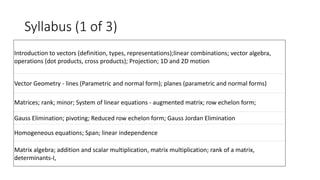

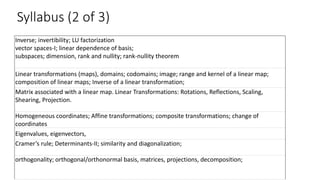

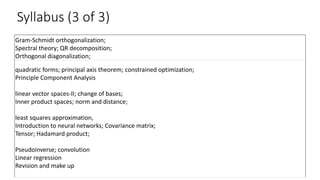

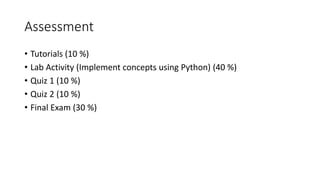

This document outlines the syllabus and curriculum for a linear algebra course covering vectors, matrices, linear transformations, eigenvalues, and tensor applications. The course is assessed through tutorials, lab activities, quizzes, and a final exam. Linear algebra concepts are applied in fields such as image processing, cryptography, machine learning, and engineering. Tensors generalize vectors and matrices, providing a way to represent higher-dimensional data.
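
As a rough illustration of how tensors represent higher-dimensional data, the sketch below stores a vector, a matrix, and a tiny RGB image as nested Python lists and reports their shapes. It is not taken from the course material: the helper names `shape` and `matvec` and all sample values are hypothetical, chosen only for illustration, and a real course would more likely use a numerical library.

```python
def shape(t):
    """Return the dimensions of a rectangular, non-empty nested list."""
    dims = []
    while isinstance(t, list):
        dims.append(len(t))
        t = t[0]  # assumes every level is non-empty and rectangular
    return tuple(dims)


def matvec(m, v):
    """Apply the linear transformation given by matrix m to vector v."""
    return [sum(a * x for a, x in zip(row, v)) for row in m]


# Order-1 tensor: a vector with 3 components.
vector = [1.0, 2.0, 3.0]

# Order-2 tensor: a 2 x 3 matrix, i.e. a linear map from R^3 to R^2.
matrix = [[1, 0, 0],
          [0, 2, 0]]

# Order-3 tensor: a 2 x 2 pixel image with 3 color channels per pixel.
image = [
    [[255, 0, 0], [0, 255, 0]],
    [[0, 0, 255], [255, 255, 255]],
]

transformed = matvec(matrix, vector)

print(shape(vector), shape(matrix), shape(image))
print(transformed)
```

The number of entries in each shape tuple is the order of the tensor: one index for a vector, two for a matrix, and three for the image (row, column, channel).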