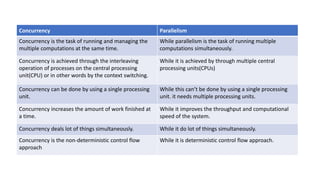

Concurrency means making progress on multiple tasks during overlapping time periods. On a single processing unit this is done by interleaving the tasks through fast context switching, which creates the illusion that they run at the same time. Parallelism means executing multiple tasks at the same instant on multiple processing units, such as separate CPUs or cores, to improve computational speed and throughput. Concurrency is therefore possible on a single CPU, whereas parallelism requires more than one. Concurrency overlaps tasks so that more work gets done over a given period, for example by running one task while another waits; parallelism shortens the time the computation itself takes.
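The distinction can be sketched in Python. This is a minimal illustration under stated assumptions, not a benchmark: the names `worker`, `run_concurrently`, `square`, and `run_in_parallel` are hypothetical, `asyncio` stands in for single-threaded interleaving, and `ProcessPoolExecutor` stands in for work spread across processes that the operating system may schedule on separate cores.

```python
import asyncio
from concurrent.futures import ProcessPoolExecutor


async def worker(name, steps, log):
    """Record each step, yielding to the event loop between steps."""
    for i in range(steps):
        log.append(f"{name}{i}")
        await asyncio.sleep(0)  # hand control back so another task can run


async def run_concurrently():
    """Interleave two tasks on one thread: concurrency without parallelism."""
    log = []
    await asyncio.gather(worker("A", 3, log), worker("B", 3, log))
    return log


def square(n):
    """Stand-in for CPU-bound work (hypothetical example task)."""
    return n * n


def run_in_parallel(values):
    """Distribute the work over two worker processes."""
    with ProcessPoolExecutor(max_workers=2) as pool:
        return list(pool.map(square, values))
```

Calling `asyncio.run(run_concurrently())` returns `['A0', 'B0', 'A1', 'B1', 'A2', 'B2']`: the two tasks take turns on a single thread, so only one of them runs at any instant. `run_in_parallel([1, 2, 3, 4])` hands the same kind of work to separate processes instead; whether those actually run at the same time depends on the machine having more than one core. When running it as a script, call it under an `if __name__ == "__main__":` guard, which process pools need on platforms that start workers by re-importing the main module.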