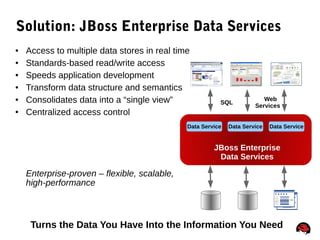

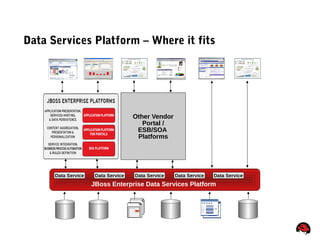

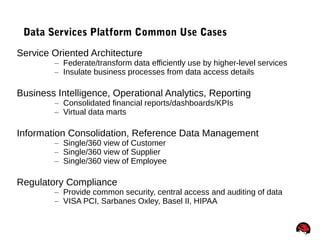

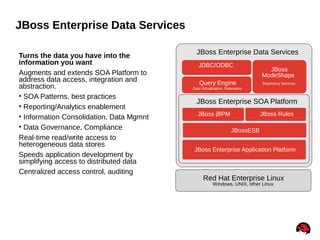

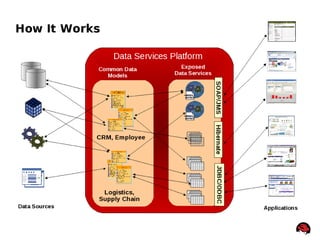

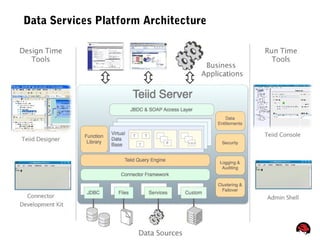

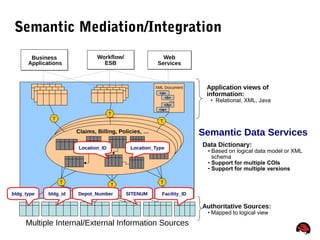

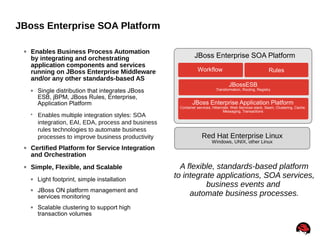

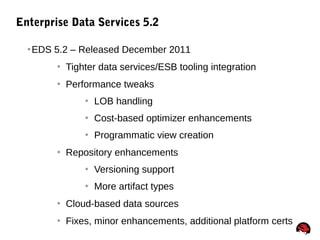

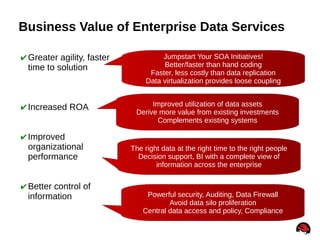

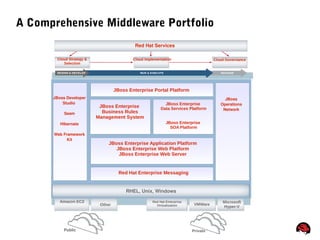

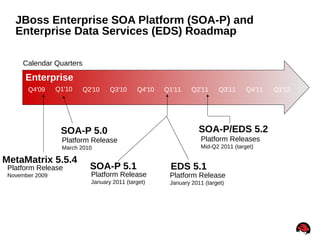

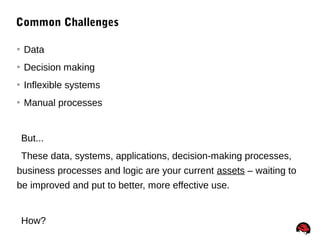

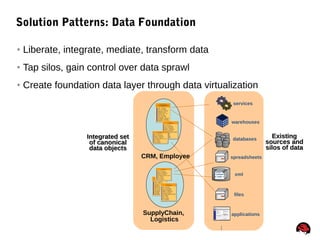

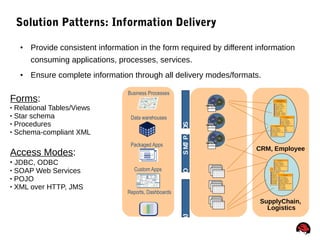

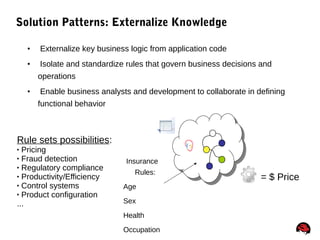

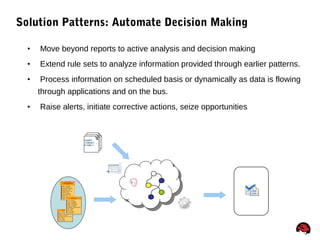

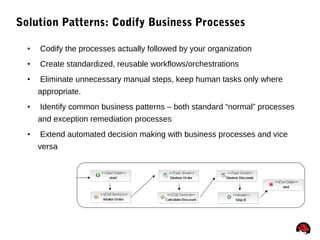

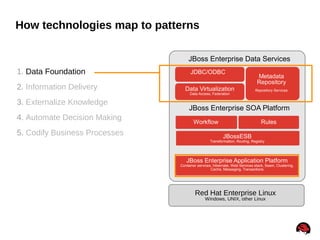

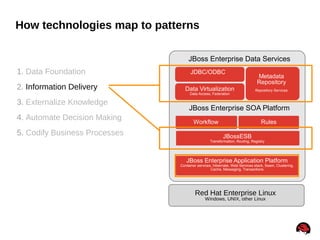

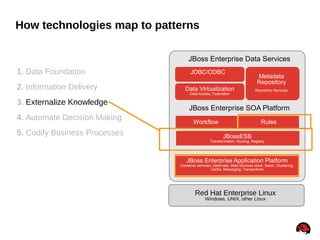

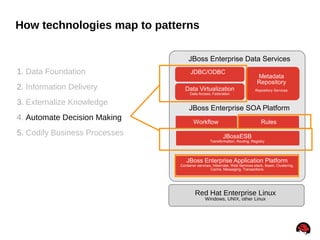

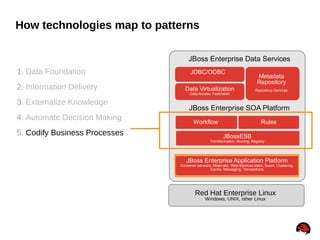

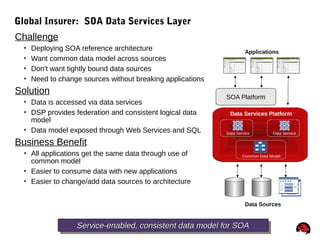

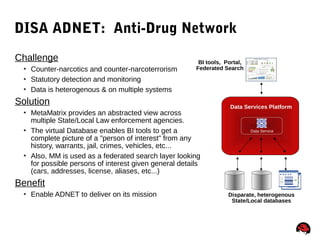

The document discusses how JBoss Enterprise Data Services and the JBoss Enterprise SOA Platform can address common business challenges involving data, decision making, inflexible systems, and manual processes. It presents five solution patterns (data foundation, information delivery, externalizing knowledge, automating decision making, and codifying business processes). It then shows how the technologies in the JBoss platforms map onto these patterns to generate new business value from existing assets.

![73

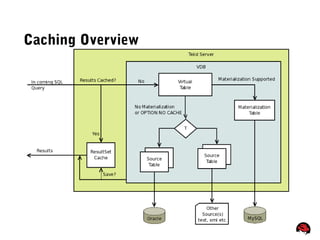

Cache Hints – How They Are Used

• Indicate that a user query is eligible for result set caching

• Set the result set query cache entry memory preference or

time to live

• Set the materialized view memory preference, time to live, or

updatability

/*+ cache[([pref_mem] [ttl:n] [updatable])] */

– pref_mem - if present indicates that the cached results

should prefer to remain in memory

– ttl - if present n indicates the time to live value in milliseconds

– updatable - if present indicates that the cached results can

be updated](https://image.slidesharecdn.com/edsdeepdive-150729035136-lva1-app6892/85/JBoss-Enterprise-Data-Services-Data-Virtualization-70-320.jpg)
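The hint grammar above can be illustrated with a small Python sketch. It builds a hint comment from the three options, prefixes the comment to a query, and parses it back. This is a hypothetical helper and not part of the JBoss Enterprise Data Services API. The names `CacheHint`, `with_cache_hint`, and `parse_cache_hint` and the `Customers` table are invented for illustration. The sketch also assumes that the hint is placed at the very start of the query text.

```python
import re
from dataclasses import dataclass
from typing import Optional


# Hypothetical helper for illustration; not a product API.
@dataclass(frozen=True)
class CacheHint:
    """Options of /*+ cache[([pref_mem] [ttl:n] [updatable])] */."""
    pref_mem: bool = False        # cached results should prefer to stay in memory
    ttl_ms: Optional[int] = None  # time to live in milliseconds; None means not set
    updatable: bool = False       # cached results can be updated

    def render(self) -> str:
        """Render the hint comment, omitting options that are not set."""
        opts = []
        if self.pref_mem:
            opts.append("pref_mem")
        if self.ttl_ms is not None:
            if self.ttl_ms < 0:
                raise ValueError("ttl must be non-negative")
            opts.append(f"ttl:{self.ttl_ms}")
        if self.updatable:
            opts.append("updatable")
        # The parenthesized option list is optional in the grammar.
        body = f"cache({' '.join(opts)})" if opts else "cache"
        return f"/*+ {body} */"


# Matches a leading /*+ cache ... */ comment; group 1 is the option list, if any.
_HINT_RE = re.compile(r"^\s*/\*\+\s*cache(?:\(([^)]*)\))?\s*\*/")


def parse_cache_hint(sql: str) -> Optional[CacheHint]:
    """Return the cache hint at the start of sql, or None if there is none."""
    m = _HINT_RE.match(sql)
    if m is None:
        return None
    pref_mem = updatable = False
    ttl_ms = None
    for token in (m.group(1) or "").split():
        if token == "pref_mem":
            pref_mem = True
        elif token == "updatable":
            updatable = True
        elif token.startswith("ttl:") and token[4:].isdigit():
            ttl_ms = int(token[4:])
        else:
            raise ValueError(f"unknown cache hint option: {token!r}")
    return CacheHint(pref_mem, ttl_ms, updatable)


def with_cache_hint(query: str, hint: CacheHint) -> str:
    """Prefix a user query with the hint to mark it eligible for result set caching."""
    return f"{hint.render()} {query}"


if __name__ == "__main__":
    q = with_cache_hint("SELECT * FROM Customers", CacheHint(pref_mem=True, ttl_ms=60000))
    print(q)  # /*+ cache(pref_mem ttl:60000) */ SELECT * FROM Customers
    print(parse_cache_hint(q))  # CacheHint(pref_mem=True, ttl_ms=60000, updatable=False)
```

Rendering the options in a fixed order keeps hinted query strings stable. Rejecting unknown options catches typos such as `ttl:1m` before the query is sent.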