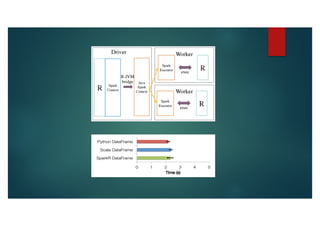

SparkR is an R package that provides an interface to Apache Spark, enabling large-scale data analysis from R. It introduces distributed data frames, which let users manipulate large datasets using familiar R syntax. SparkR improves performance on large datasets by evaluating operations lazily and passing them through Spark's relational query optimizer. It also supports more than 100 functions on data frames for tasks such as statistical analysis, string manipulation, and date operations.
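To make the idea of lazy evaluation concrete, here is a minimal sketch in plain Python. It is not SparkR code and does not use any Spark API: the `LazyFrame` class, its methods, and the sample `people` data are hypothetical names invented for illustration. The sketch only shows the general pattern, in which operations on a data frame are recorded as a plan and nothing is computed until a result is requested.

```python
from typing import Callable, Dict, List, Tuple

Row = Dict[str, object]
Step = Tuple[str, object]


class LazyFrame:
    """Toy, single-machine stand-in for a lazily evaluated data frame (hypothetical)."""

    def __init__(self, rows: List[Row], plan: Tuple[Step, ...] = ()):
        self._rows = rows
        self._plan = plan  # recorded operations, not yet executed

    def filter(self, predicate: Callable[[Row], bool]) -> "LazyFrame":
        # Record the filter; no rows are touched here.
        return LazyFrame(self._rows, self._plan + (("filter", predicate),))

    def select(self, *columns: str) -> "LazyFrame":
        # Record the projection; no rows are touched here.
        return LazyFrame(self._rows, self._plan + (("select", columns),))

    def pending_steps(self) -> List[str]:
        # Names of the operations waiting to run.
        return [name for name, _ in self._plan]

    def collect(self) -> List[Row]:
        # Only now is the plan executed, in a single pass over the rows.
        result = []
        for row in self._rows:
            keep = True
            for name, arg in self._plan:
                if name == "filter":
                    if not arg(row):
                        keep = False
                        break
                else:  # "select": build a new row with only the requested columns
                    row = {column: row[column] for column in arg}
            if keep:
                result.append(row)
        return result


# Made-up sample data for the illustration.
people = [
    {"name": "Ann", "age": 34, "city": "Oslo"},
    {"name": "Bob", "age": 19, "city": "Lima"},
    {"name": "Cy", "age": 52, "city": "Oslo"},
]

# Building the query does no work; it only extends the plan.
query = LazyFrame(people).filter(lambda r: r["age"] > 30).select("name", "age")

# The plan runs when results are actually requested.
result = query.collect()
```

Because the whole plan is known before anything runs, an engine is free to rearrange or combine the steps first. This toy version simply runs them in one pass without building intermediate tables; a real query optimizer such as Spark's does considerably more.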