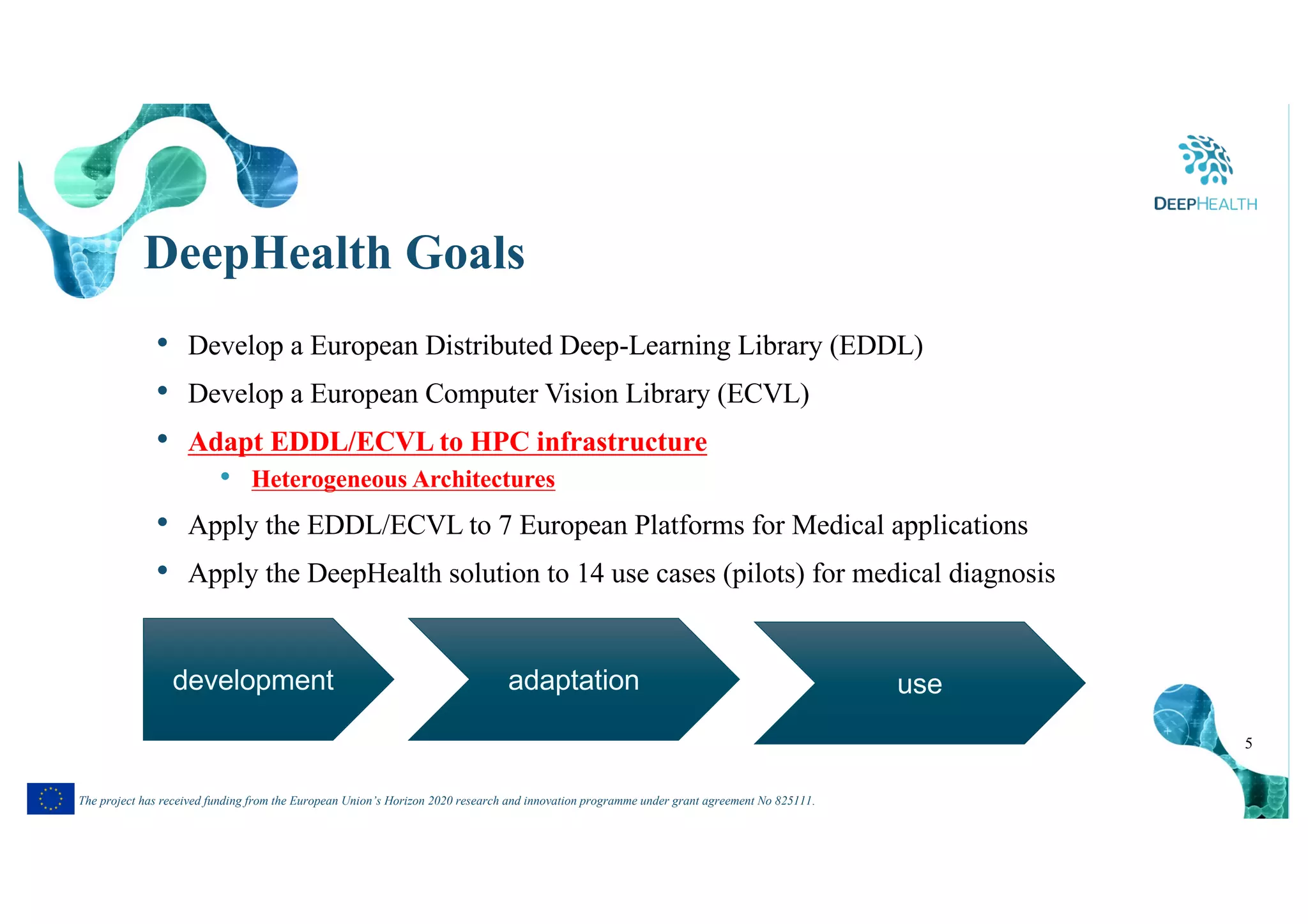

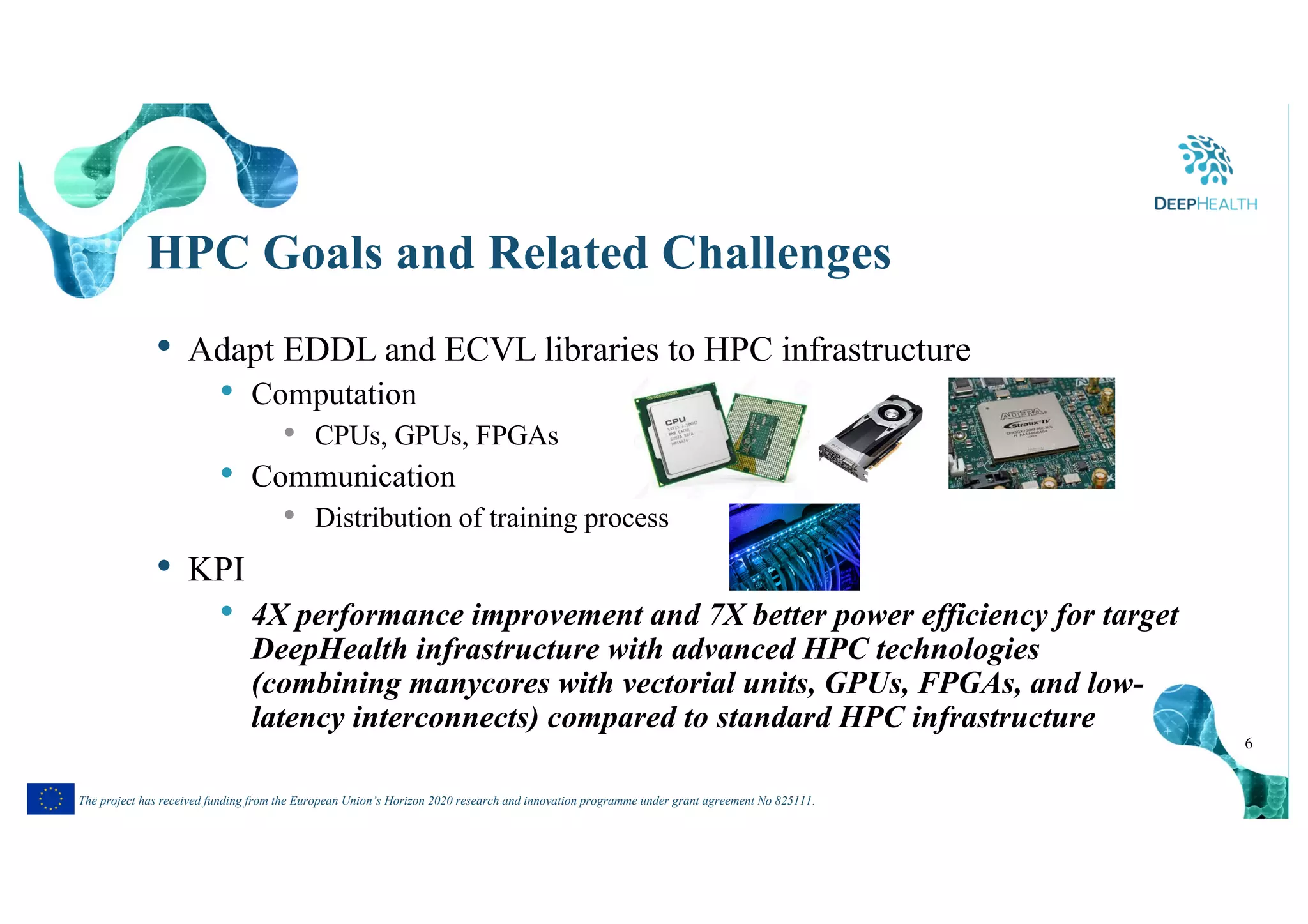

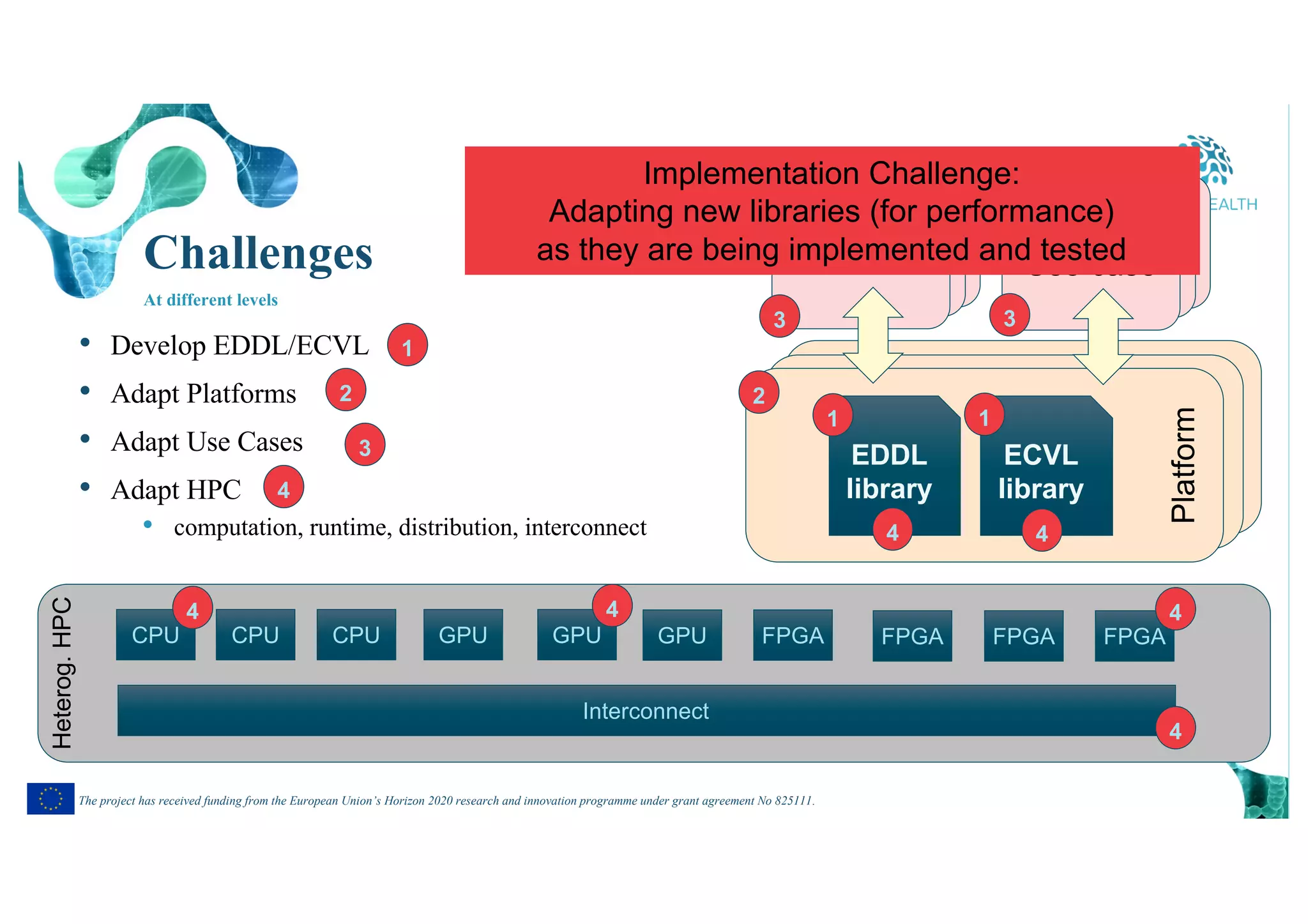

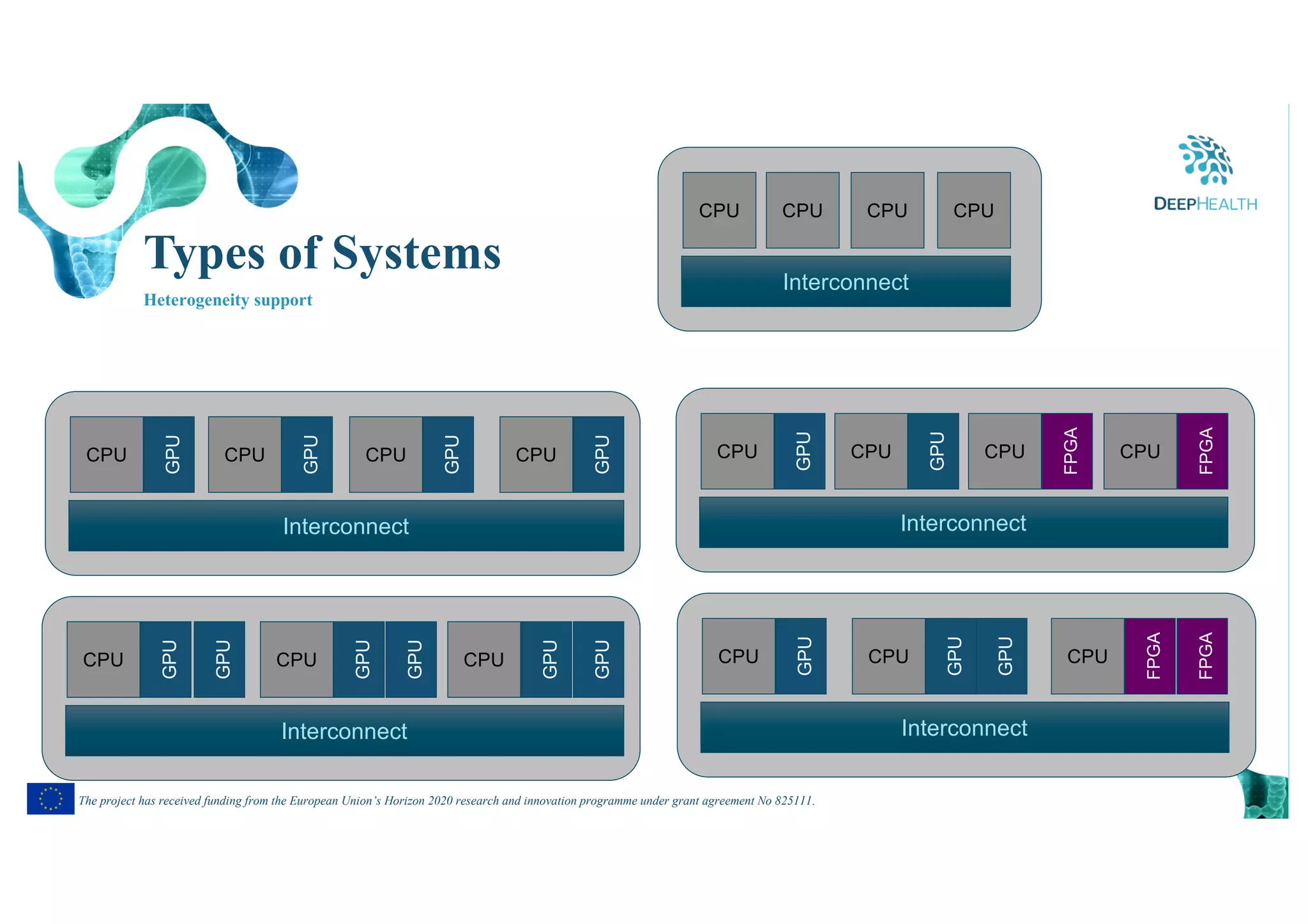

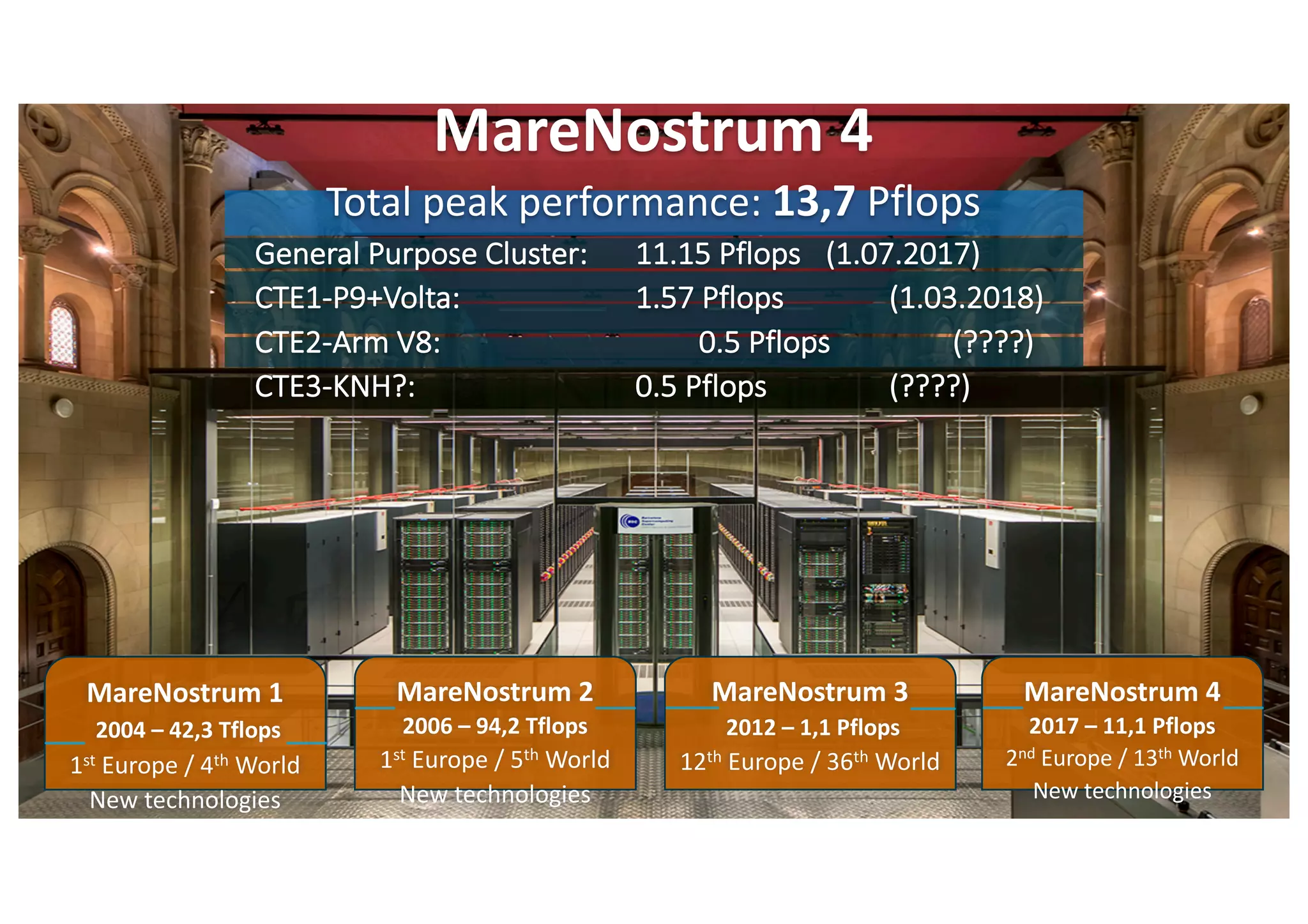

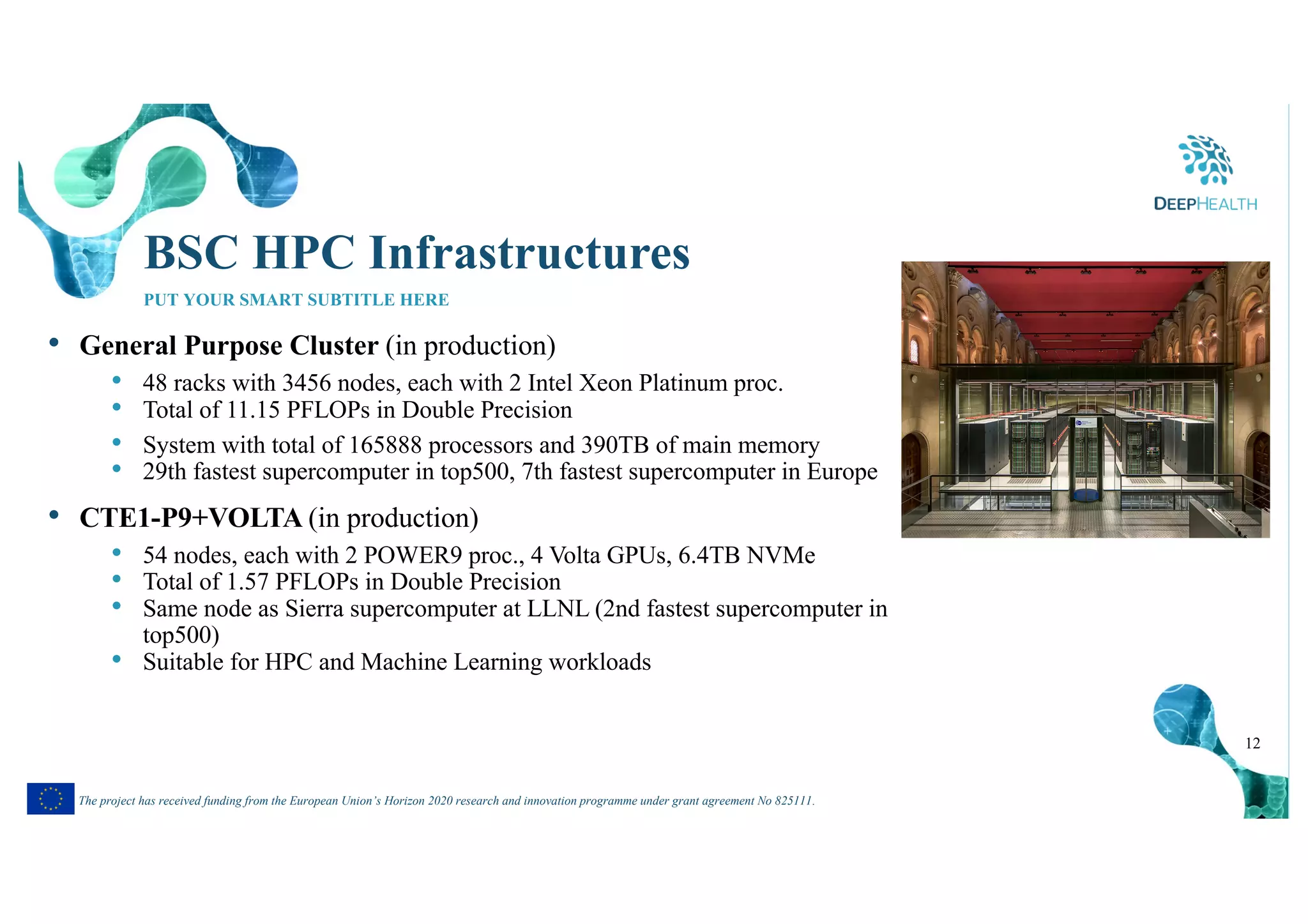

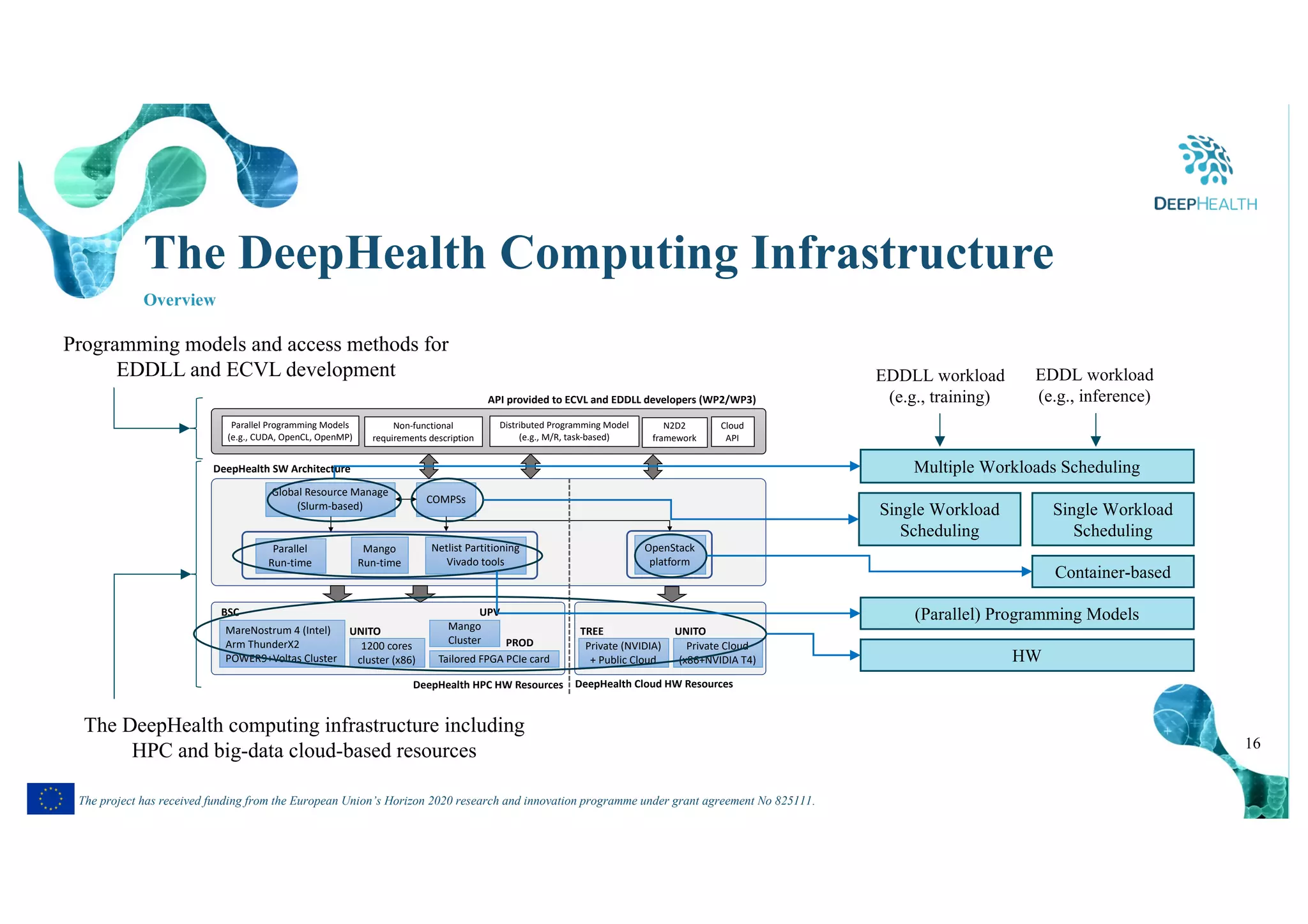

The document discusses the DeepHealth project, which aims to facilitate medical image processing and predictive model development through a unified framework that can exploit heterogeneous HPC and big data architectures. The project involves developing the European Distributed Deep Learning Library (EDDLL) and European Computer Vision Library (ECVL) to run algorithms on hybrid systems. It will apply these libraries to 7 medical software platforms and 14 pilot testbeds covering neurological diseases, tumor detection, and digital pathology. The project goals include adapting the libraries for HPC, applying them to use cases, and improving performance and efficiency on target systems like the MareNostrum supercomputer.

![17

The project has received funding from the European Union’s Horizon 2020 research and innovation programme under grant agreement No 825111.

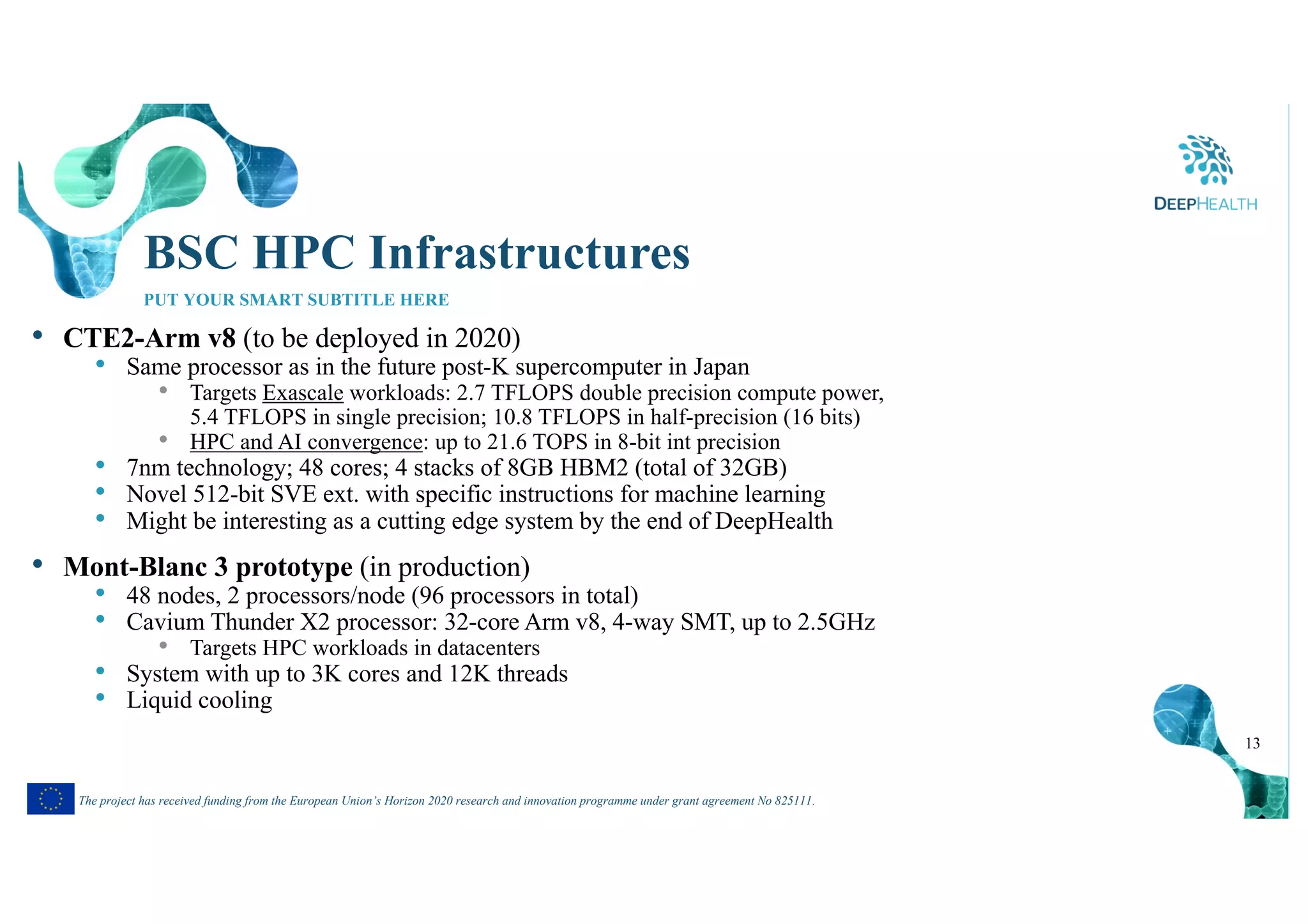

COMPSs

• Framework (programming model + runtime system) to develop parallel

applications for distributed infrastructures

• Abstract model: exposes parallelism while hides the infrastructure

• Agnostic of computing platform

• Task-based programming model build on top of general purpose sequential

programming languages (Python, C, C++, Java)

def display(c):

…

def add(a, b, c):

c = a + b

for i in range(MSIZE):

add(A[i],B[i],C[i])

display(C)

@task(c=INOUT)

def display(c):

…

@task(a=IN,b=IN,c=OUT)

def add(a, b, c):

c = a + b

for i in range(MSIZE):

add(A[i],B[i],C[i])

display(C)

ad

d

ad

d

ad

d

dis

pla

y

…

MSIZE](https://image.slidesharecdn.com/ebdvf19-deephealth-191105095333/75/Heterogeneous-HPC-Computing-in-the-DeepHealth-Project-17-2048.jpg)
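The task-based idea on this slide can be tried out without a COMPSs installation. The following is a minimal sketch using only the Python standard library, not the COMPSs API: the `task` decorator, the `wait_on` helper, the `_POOL` thread pool, and the sample values of `A` and `B` are all hypothetical stand-ins introduced for illustration. It also departs from the slide in one respect: `add` returns its result instead of writing to an `OUT` parameter, because rebinding `c` inside a plain Python function would not be visible to the caller.

```python
from concurrent.futures import Future, ThreadPoolExecutor
from functools import wraps

# Hypothetical stand-in for a runtime's worker pool; not part of COMPSs.
_POOL = ThreadPoolExecutor(max_workers=4)


def task(func):
    """Toy decorator: a call submits `func` to the pool and returns a Future."""
    @wraps(func)
    def submit(*args):
        return _POOL.submit(func, *args)
    return submit


def wait_on(obj):
    """Block until a Future (or every Future in a list) has a value."""
    if isinstance(obj, list):
        return [wait_on(item) for item in obj]
    return obj.result() if isinstance(obj, Future) else obj


@task
def add(a, b):
    # Returns the sum instead of writing to an OUT parameter as on the slide.
    return a + b


def display(c):
    # Returns the text as well as printing it, so the result can be checked.
    message = "C = " + str(c)
    print(message)
    return message


MSIZE = 4
A = [1, 2, 3, 4]      # sample data, chosen only for this sketch
B = [10, 20, 30, 40]

# The loop reads like sequential code, but each call only schedules a task.
# The MSIZE additions are independent of each other and may run concurrently.
C = [add(A[i], B[i]) for i in range(MSIZE)]

# Synchronize before the main program uses the values.
C = wait_on(C)
message = display(C)  # prints: C = [11, 22, 33, 44]

_POOL.shutdown(wait=True)
```

The main program stays sequential in appearance, and the only explicit coordination point is the `wait_on` call. A real runtime would additionally track data dependencies between tasks from the declared parameter directions and place tasks on distributed resources, which this thread-pool sketch does not attempt.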