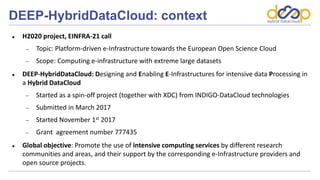

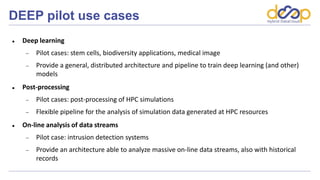

DEEP-Hybrid-DataCloud is a Horizon 2020 project that aims to promote intensive computing services for analyzing large datasets through a hybrid cloud approach. It received funding from the European Union to develop specialized computing infrastructure and to integrate intensive computing services. The consortium comprises nine academic partners and one industrial partner across six European countries. The project will define a "DEEP as a Service" solution and evolve existing INDIGO components to better support intensive computing workloads and specialized hardware.