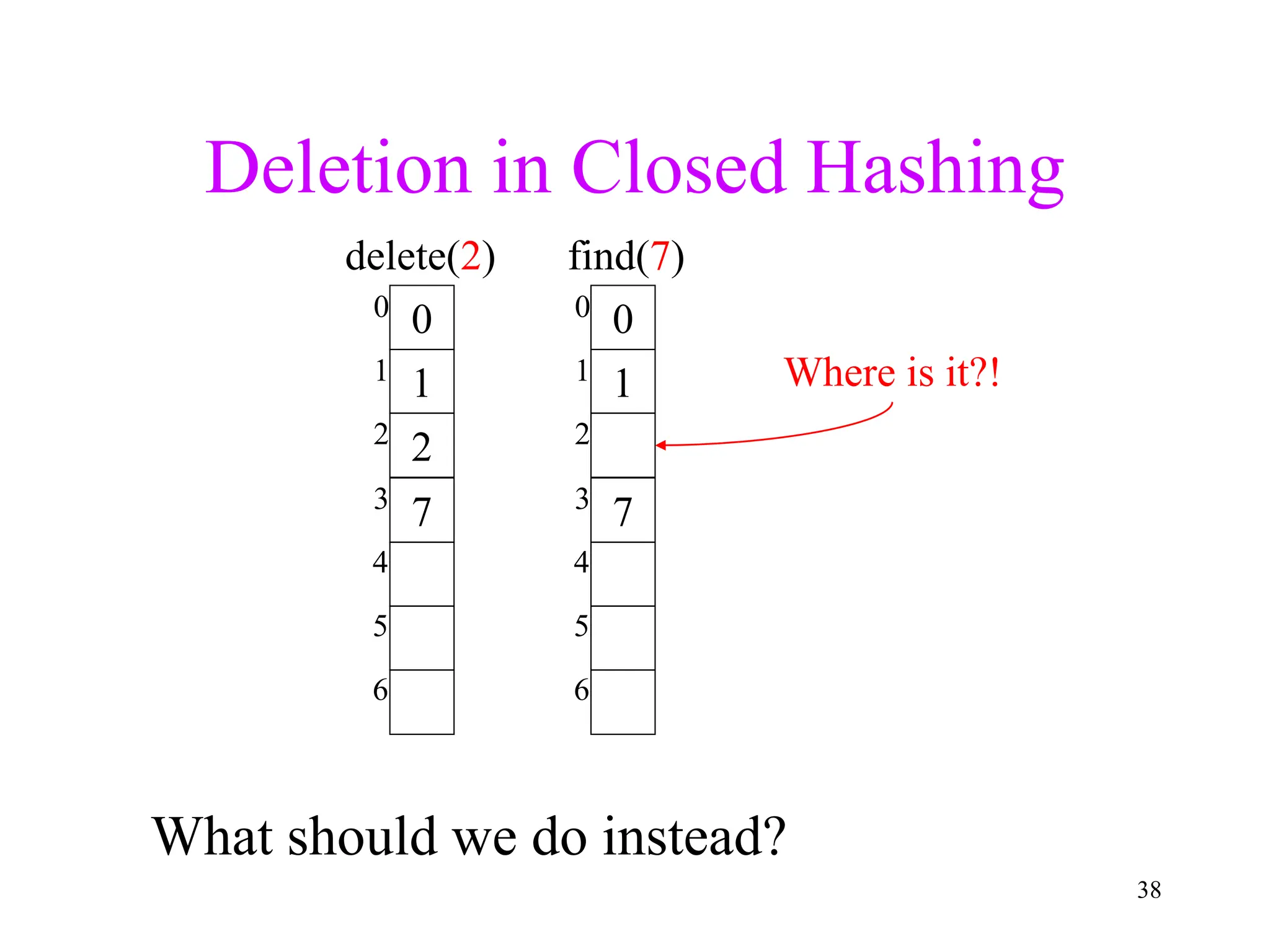

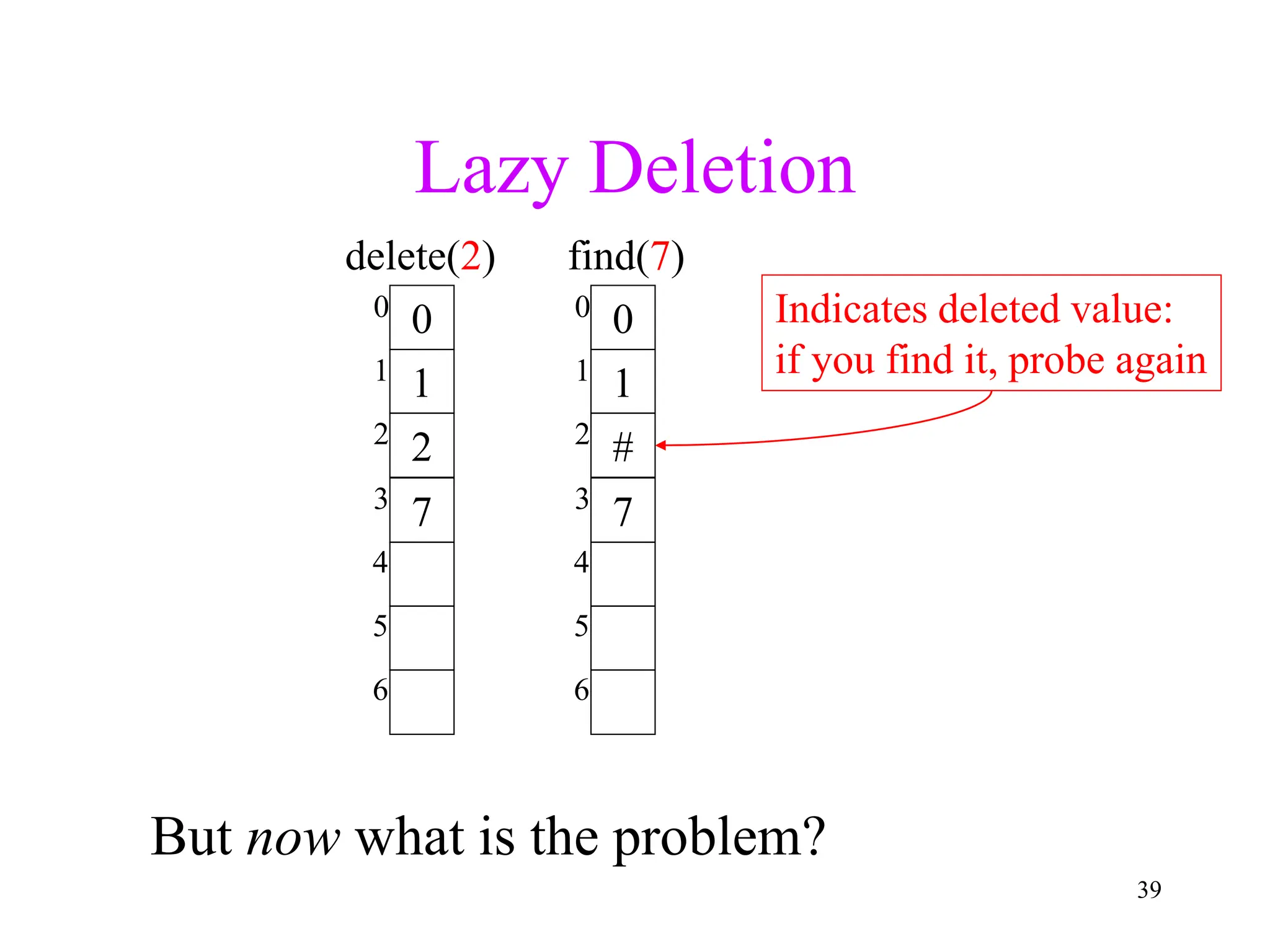

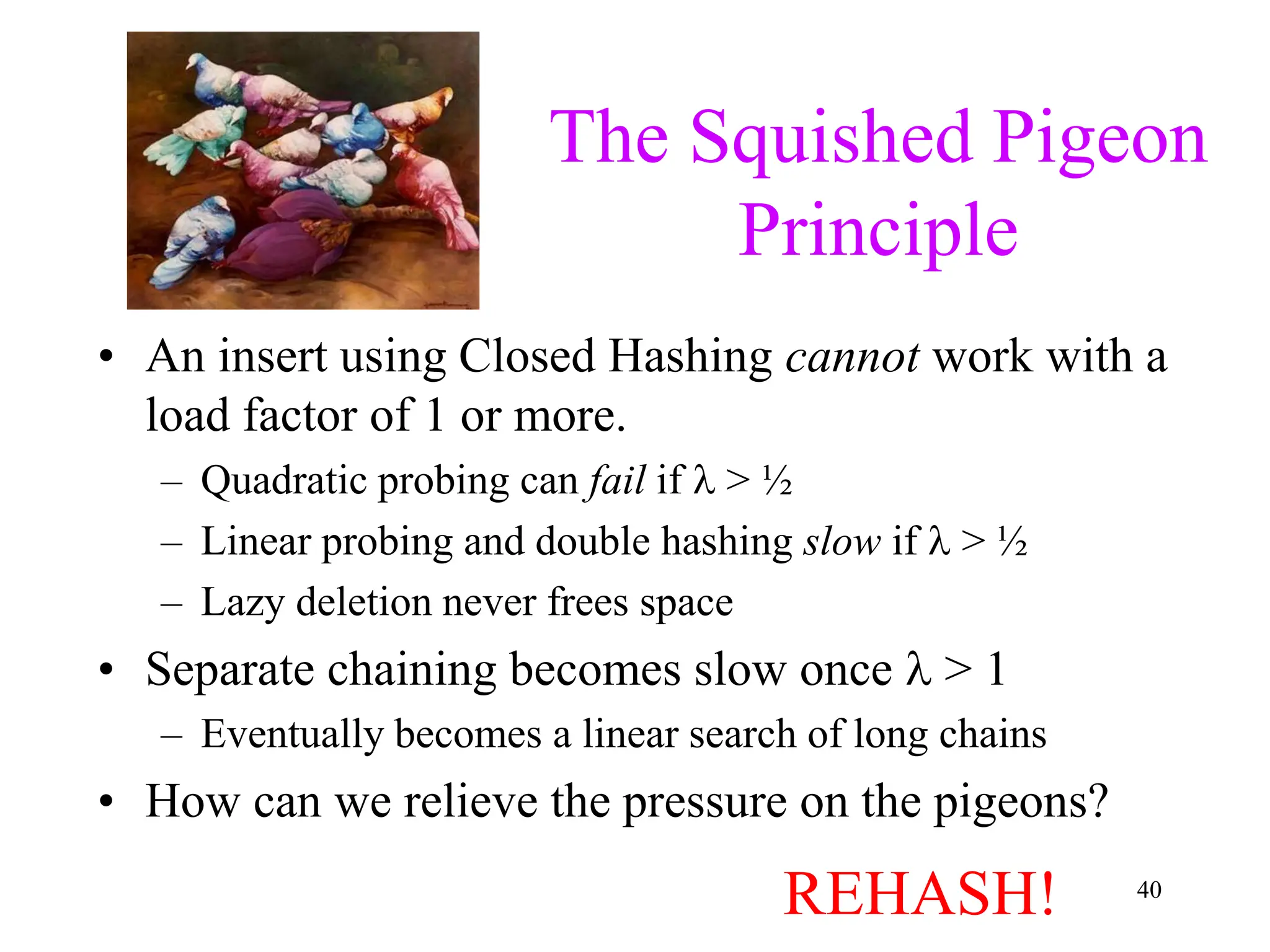

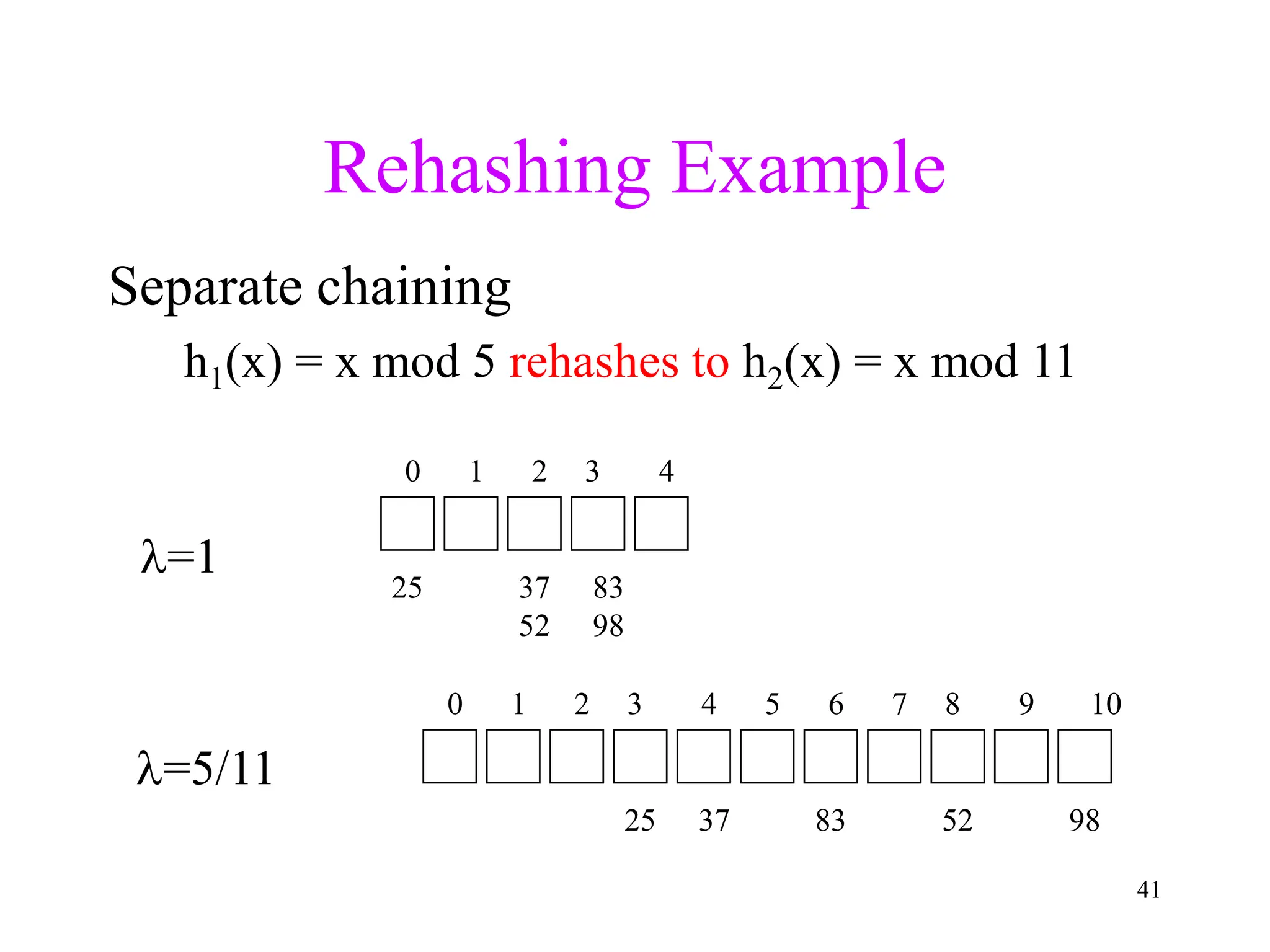

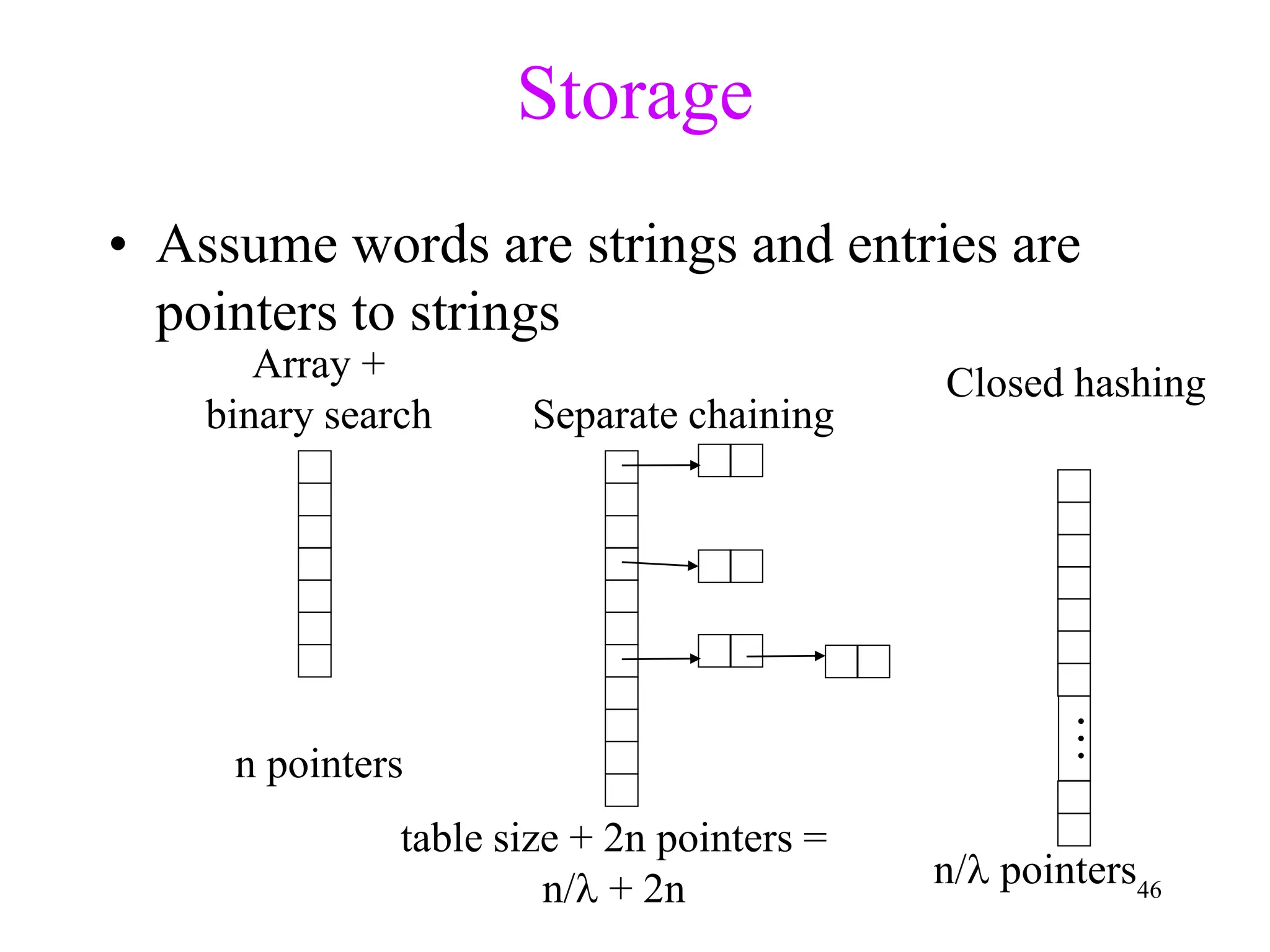

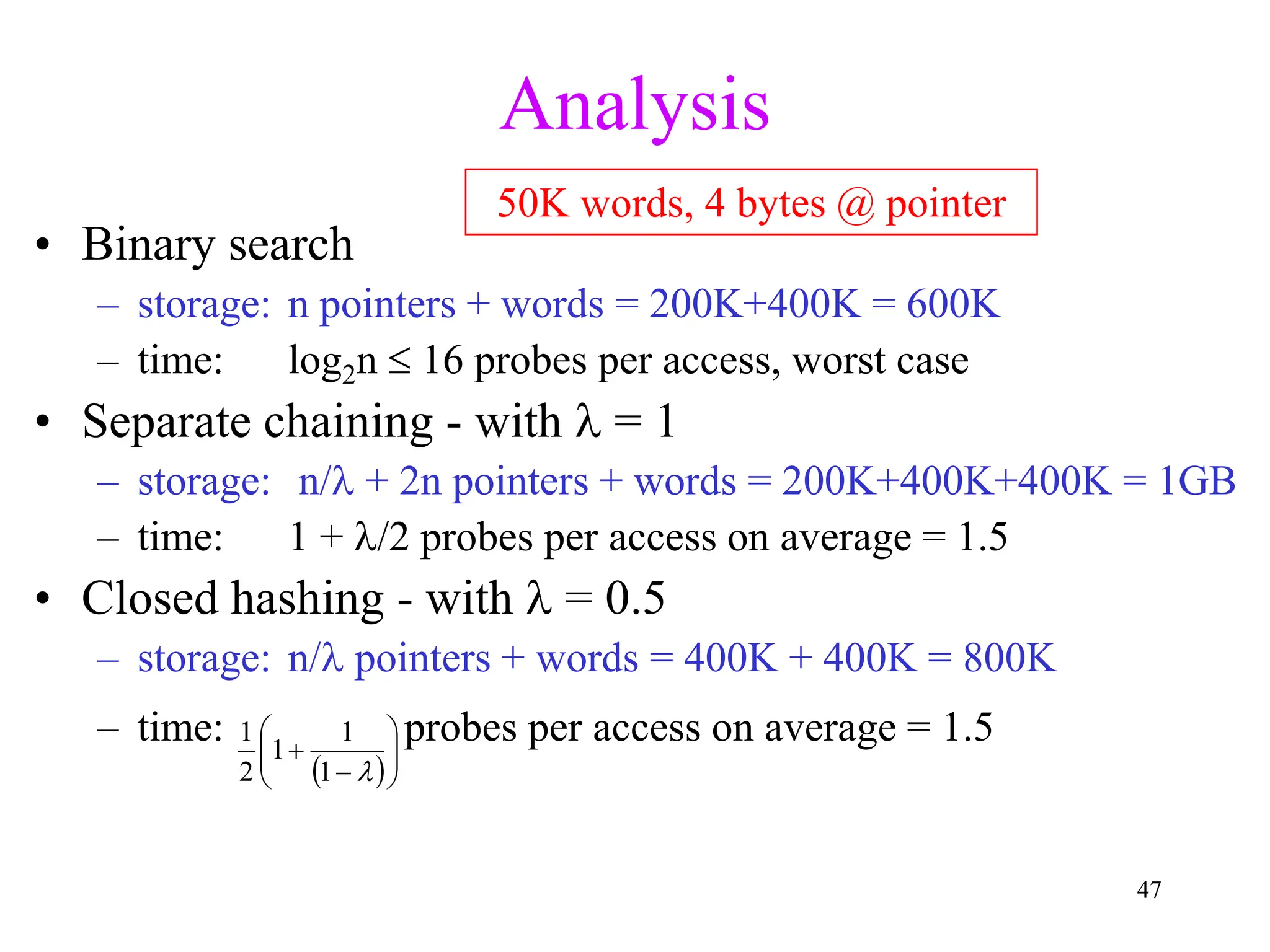

1. The document discusses hashing techniques for implementing dictionaries and search data structures, including separate chaining and closed hashing (linear probing, quadratic probing, and double hashing).

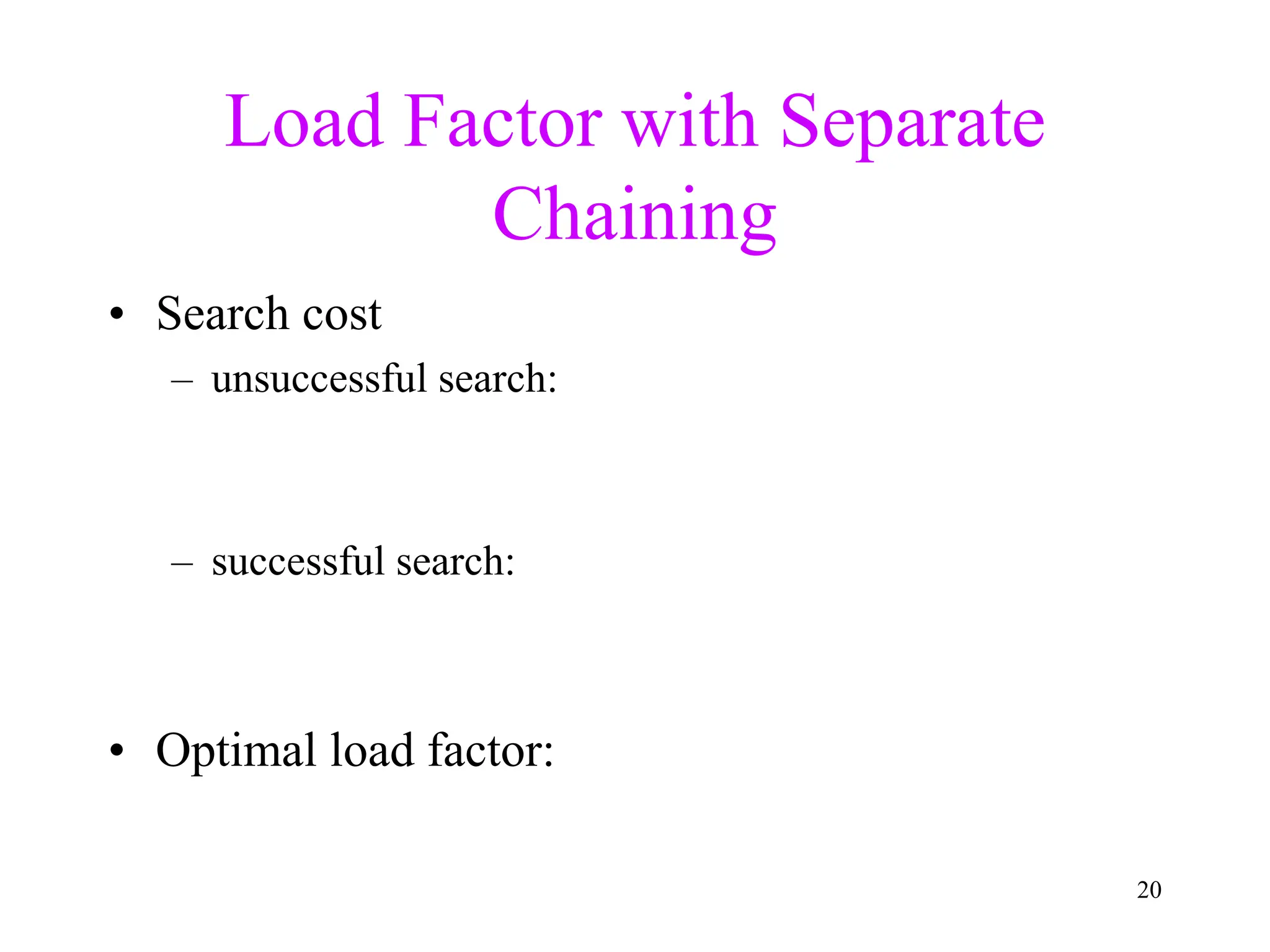

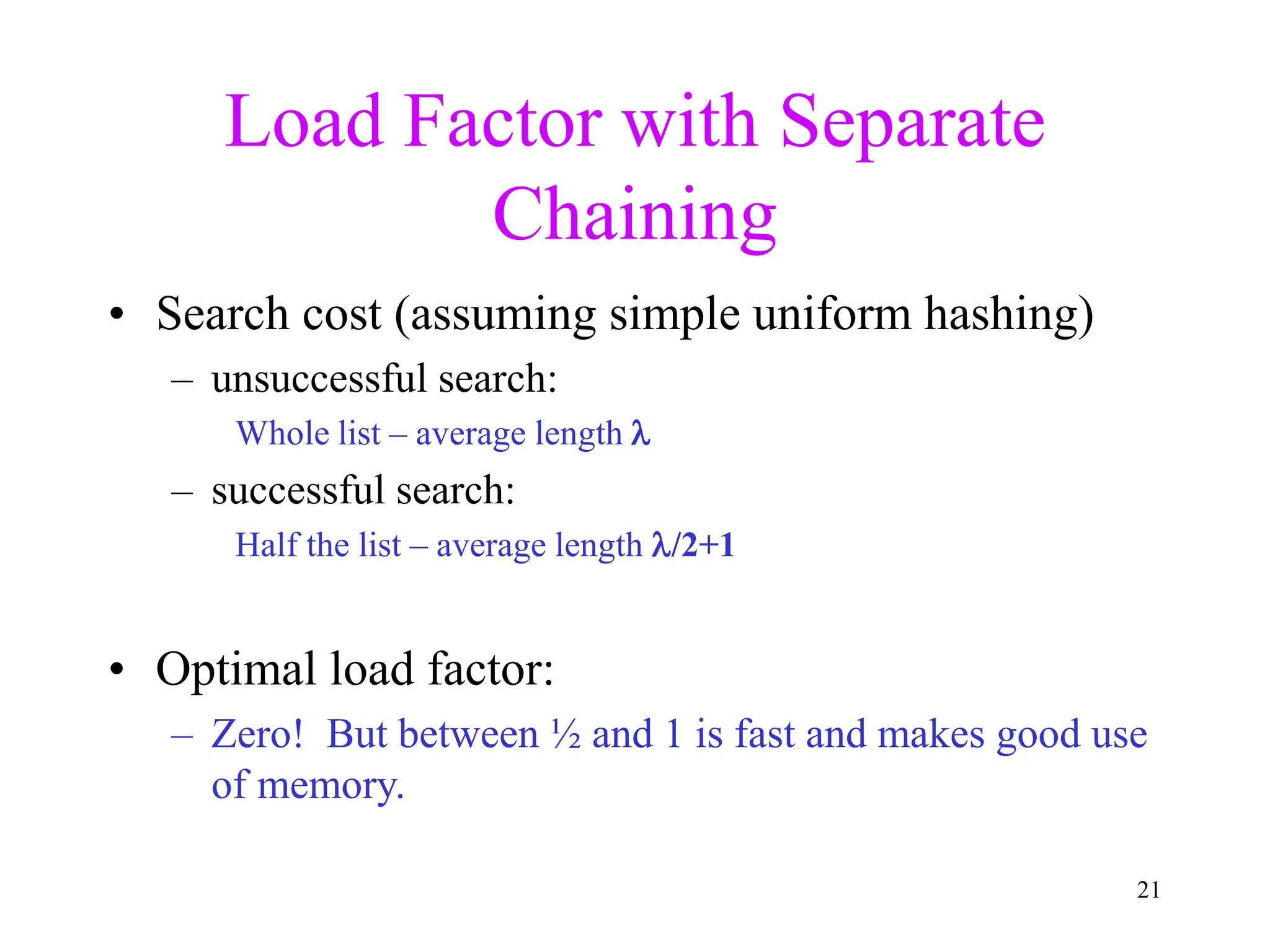

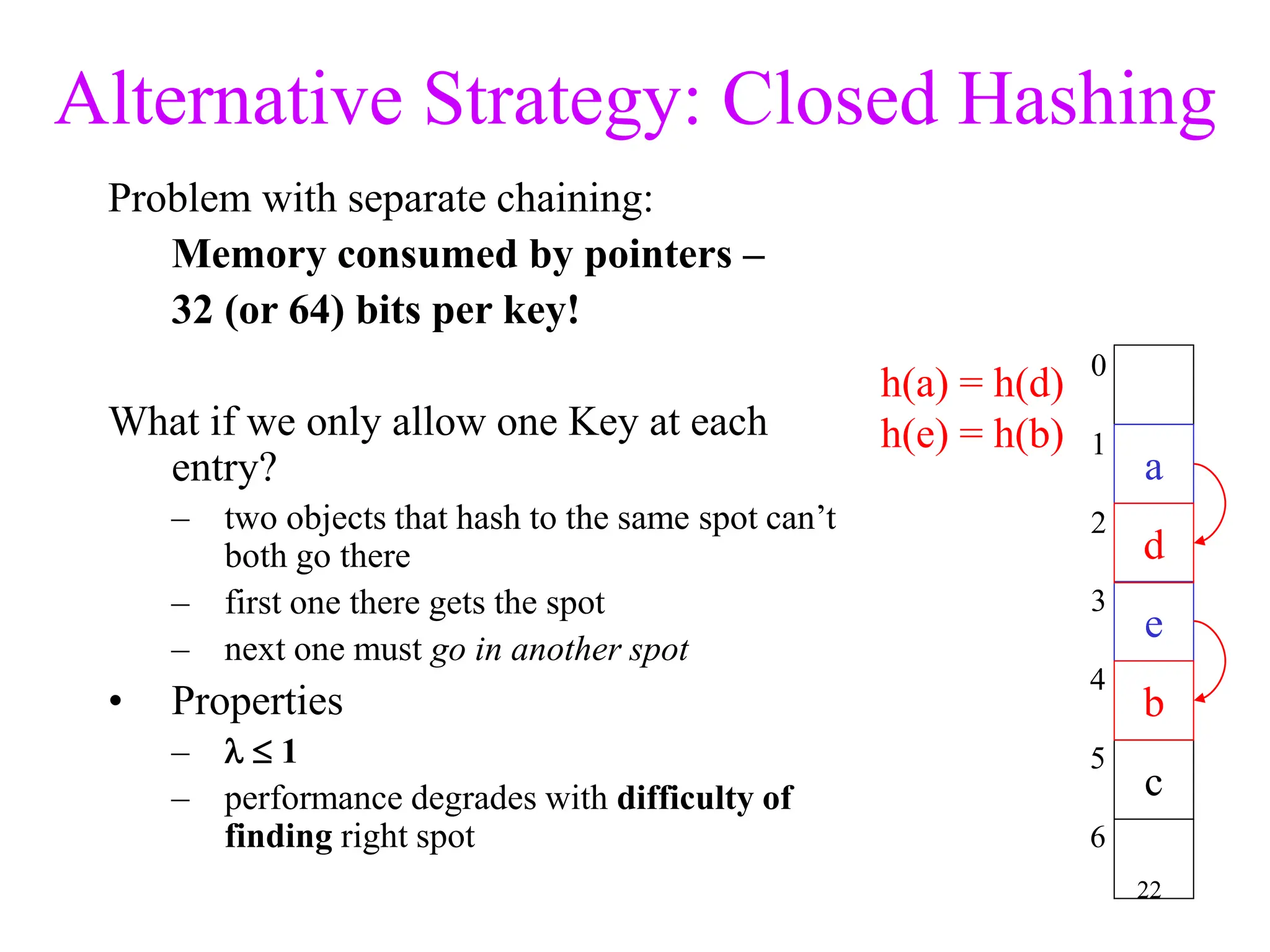

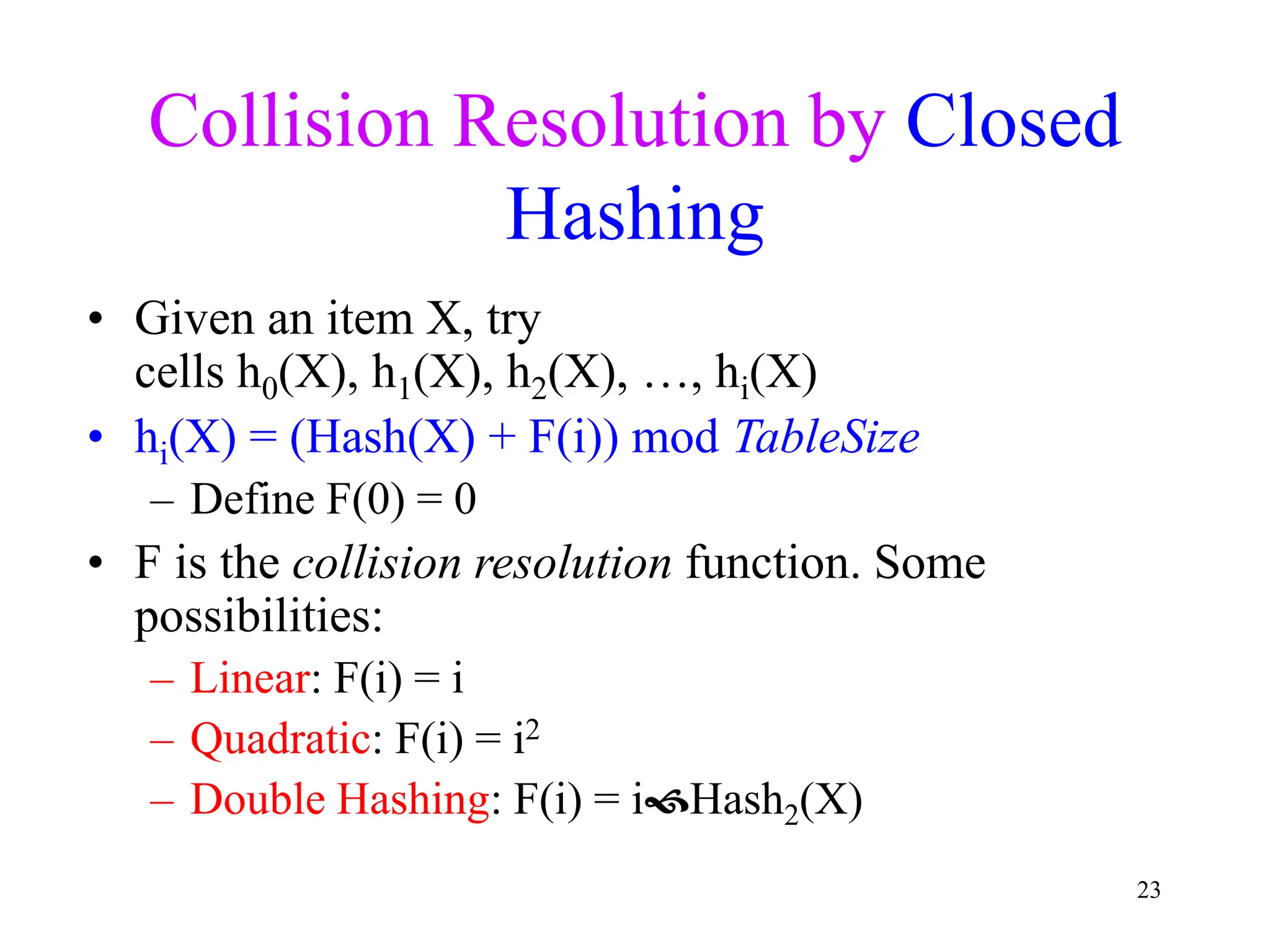

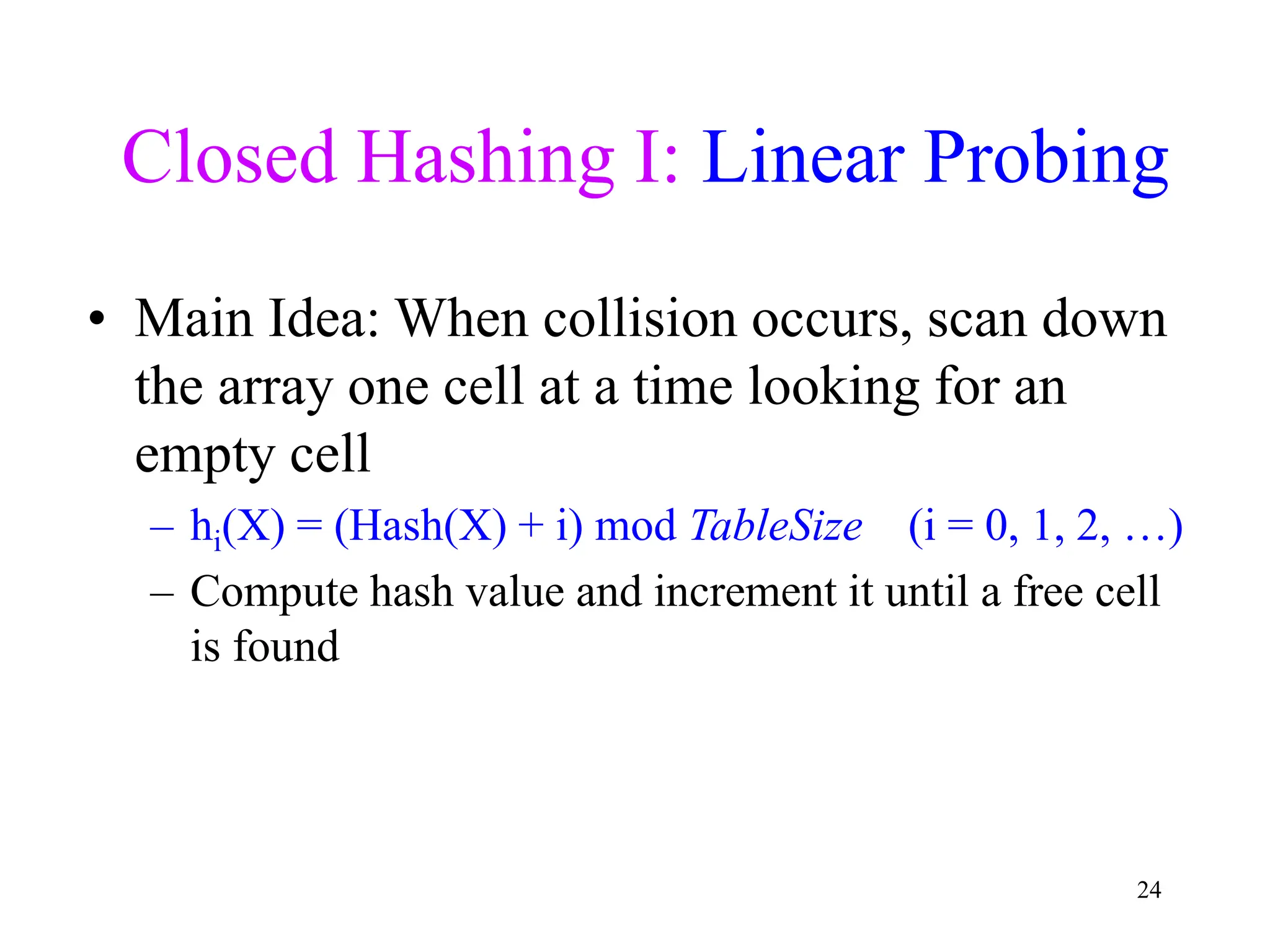

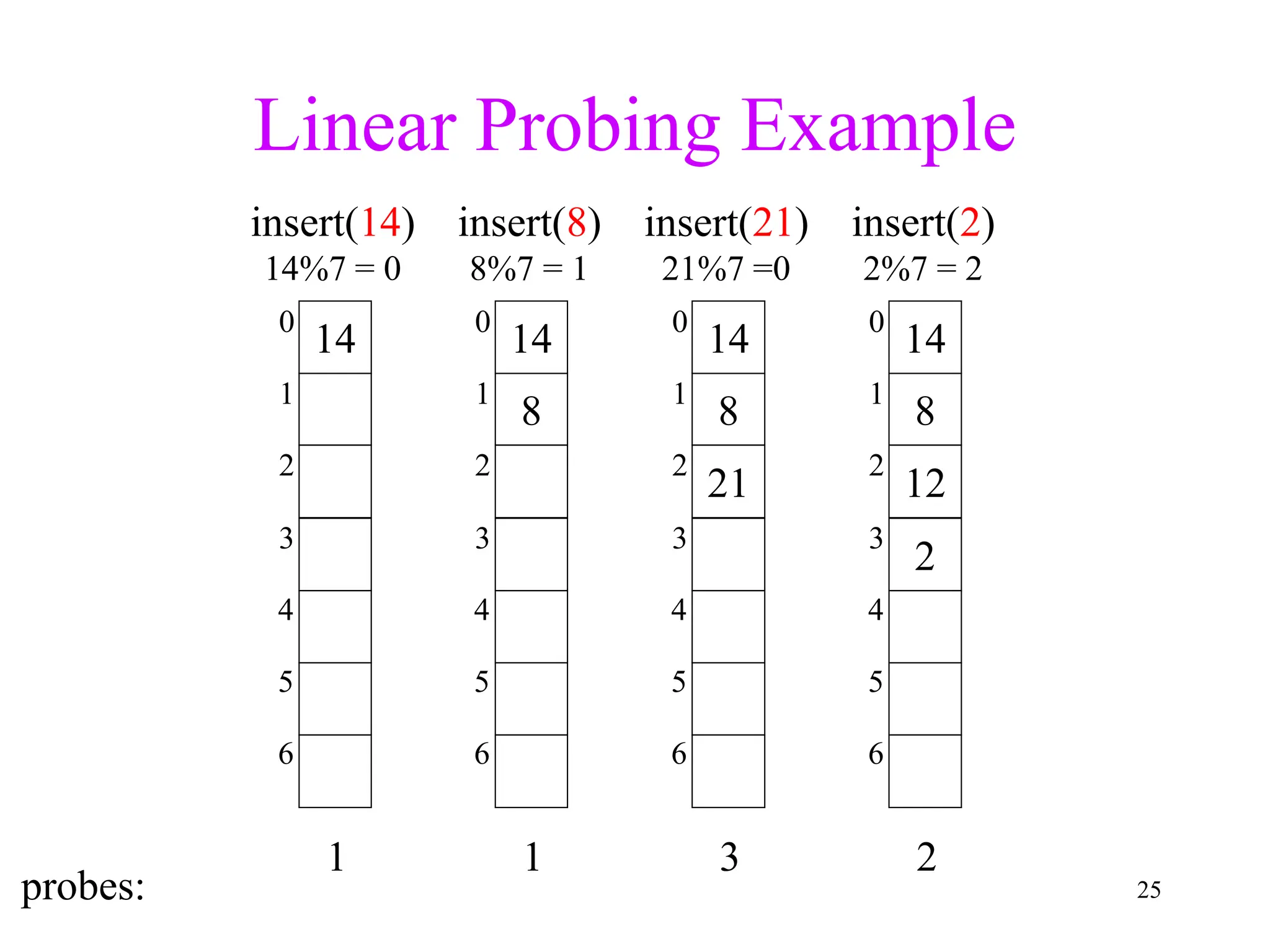

2. Separate chaining uses a linked list at each index to handle collisions, while closed hashing searches for empty slots using a collision resolution function.

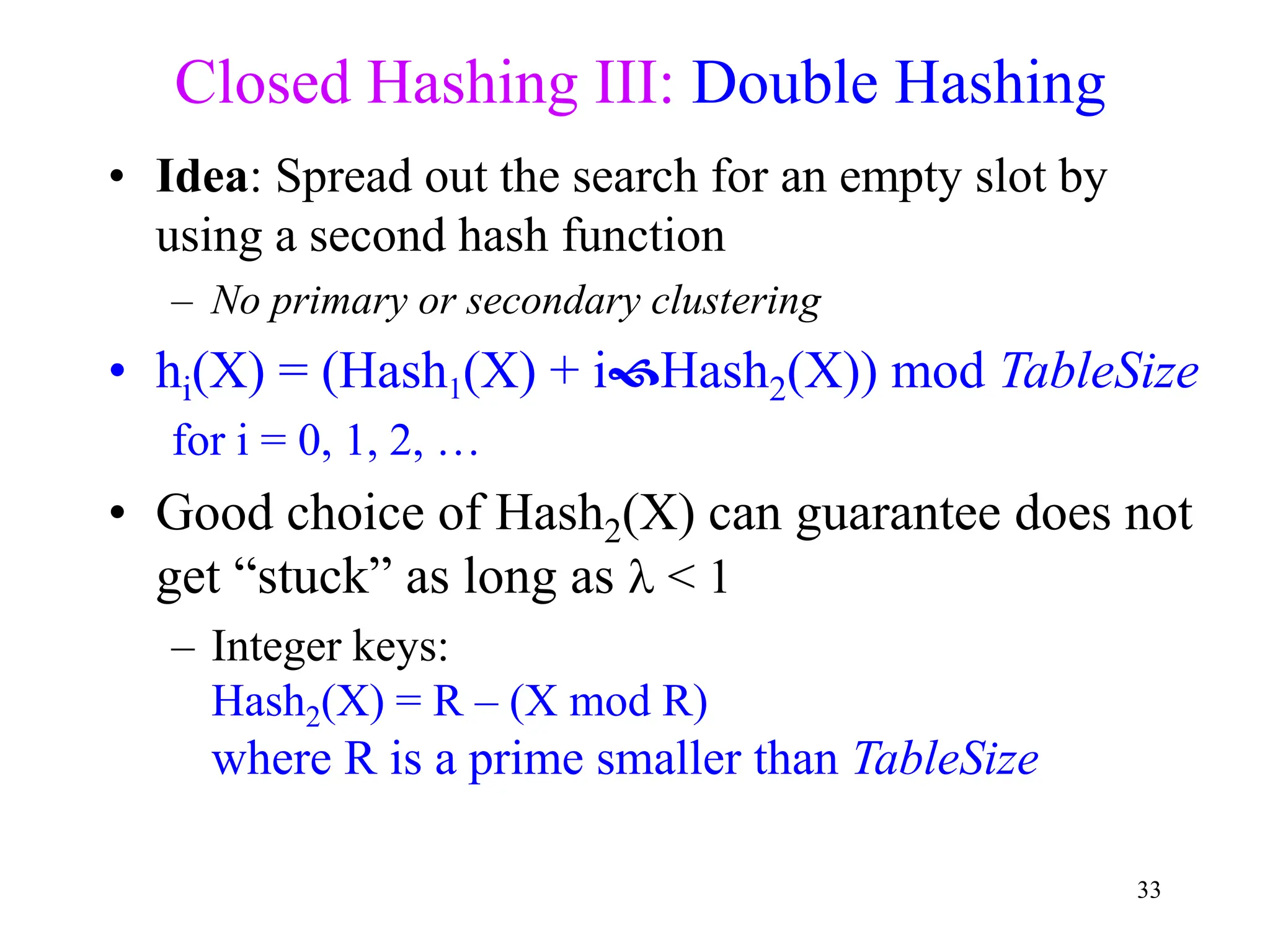

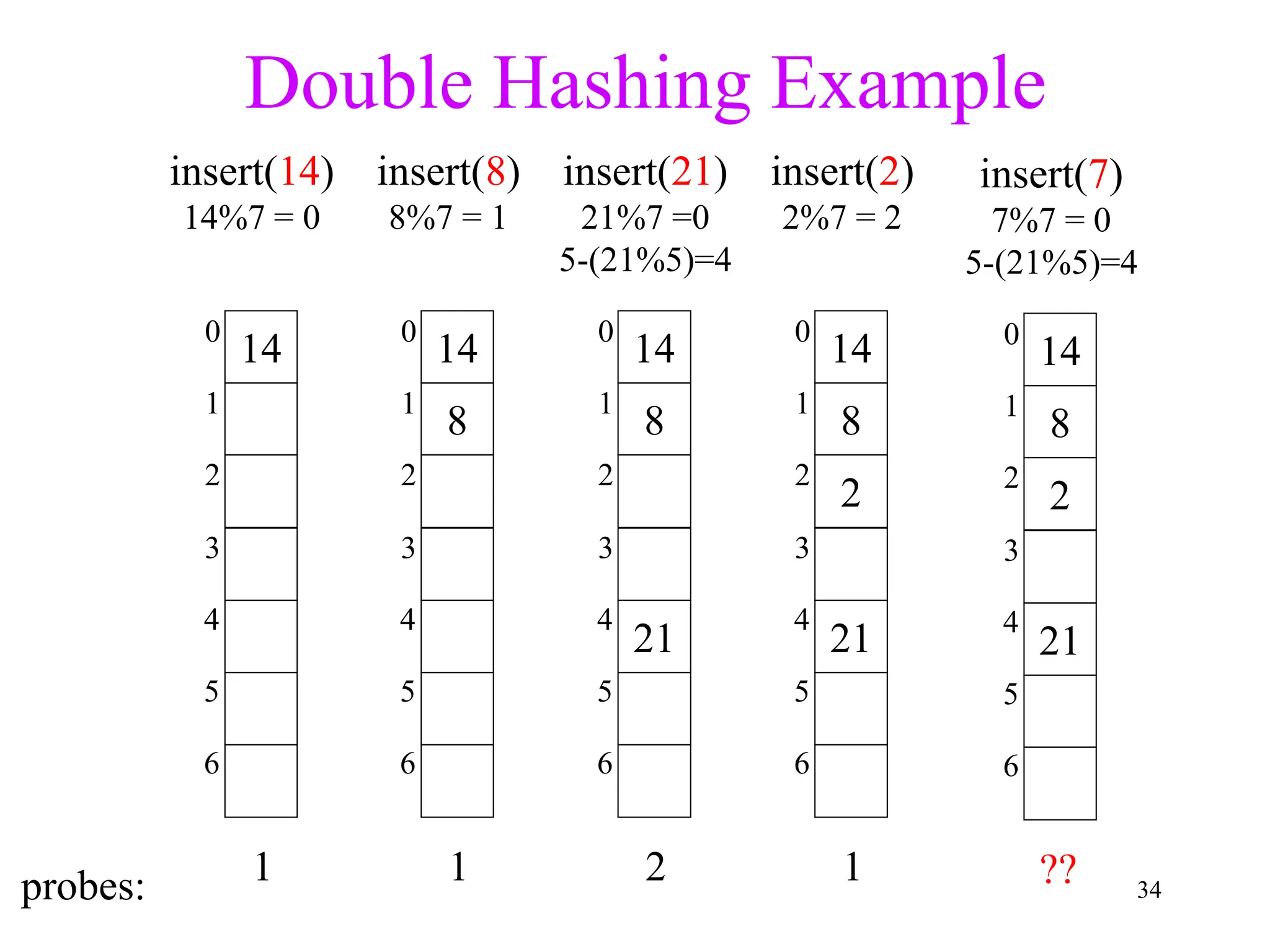

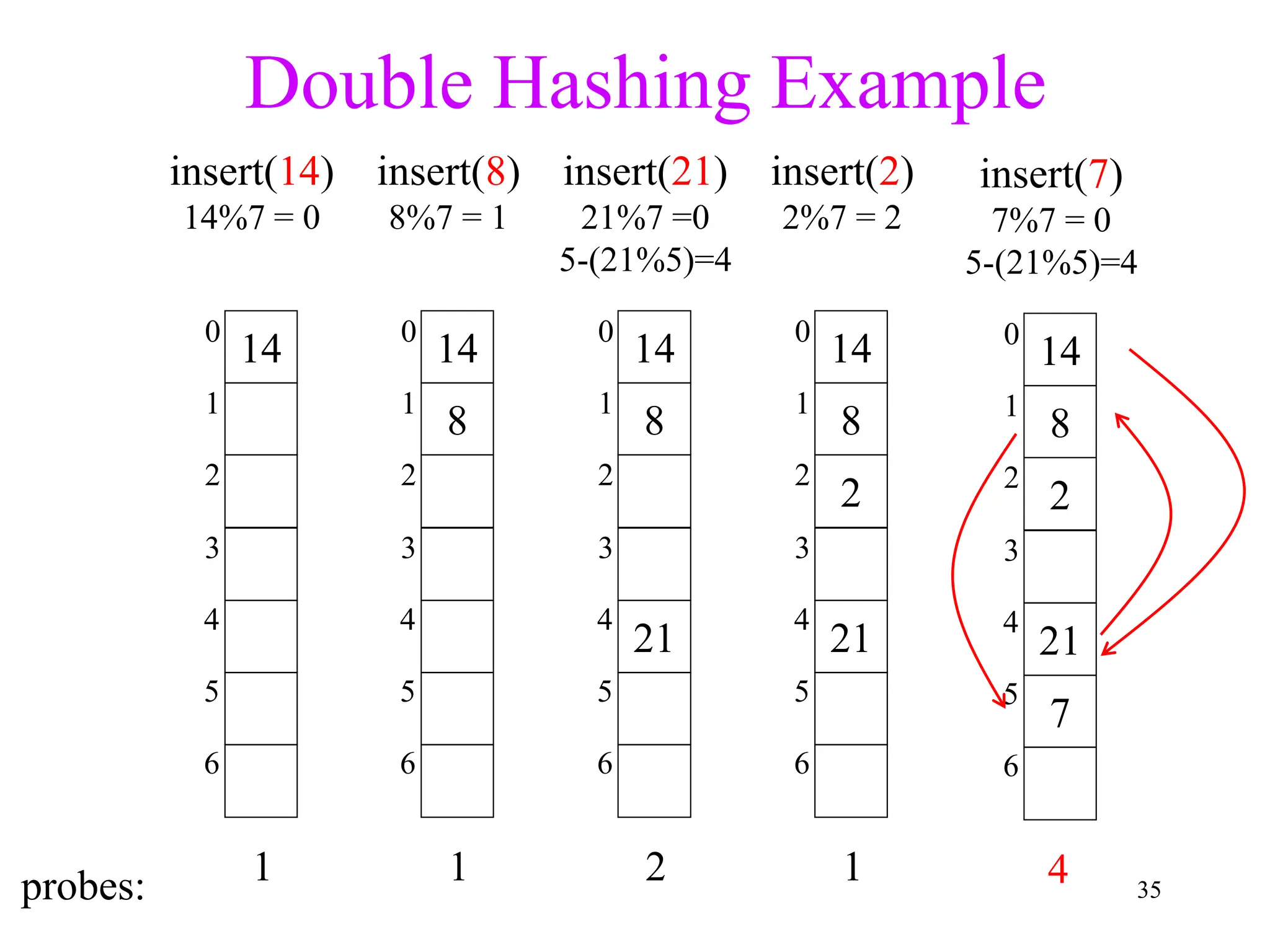

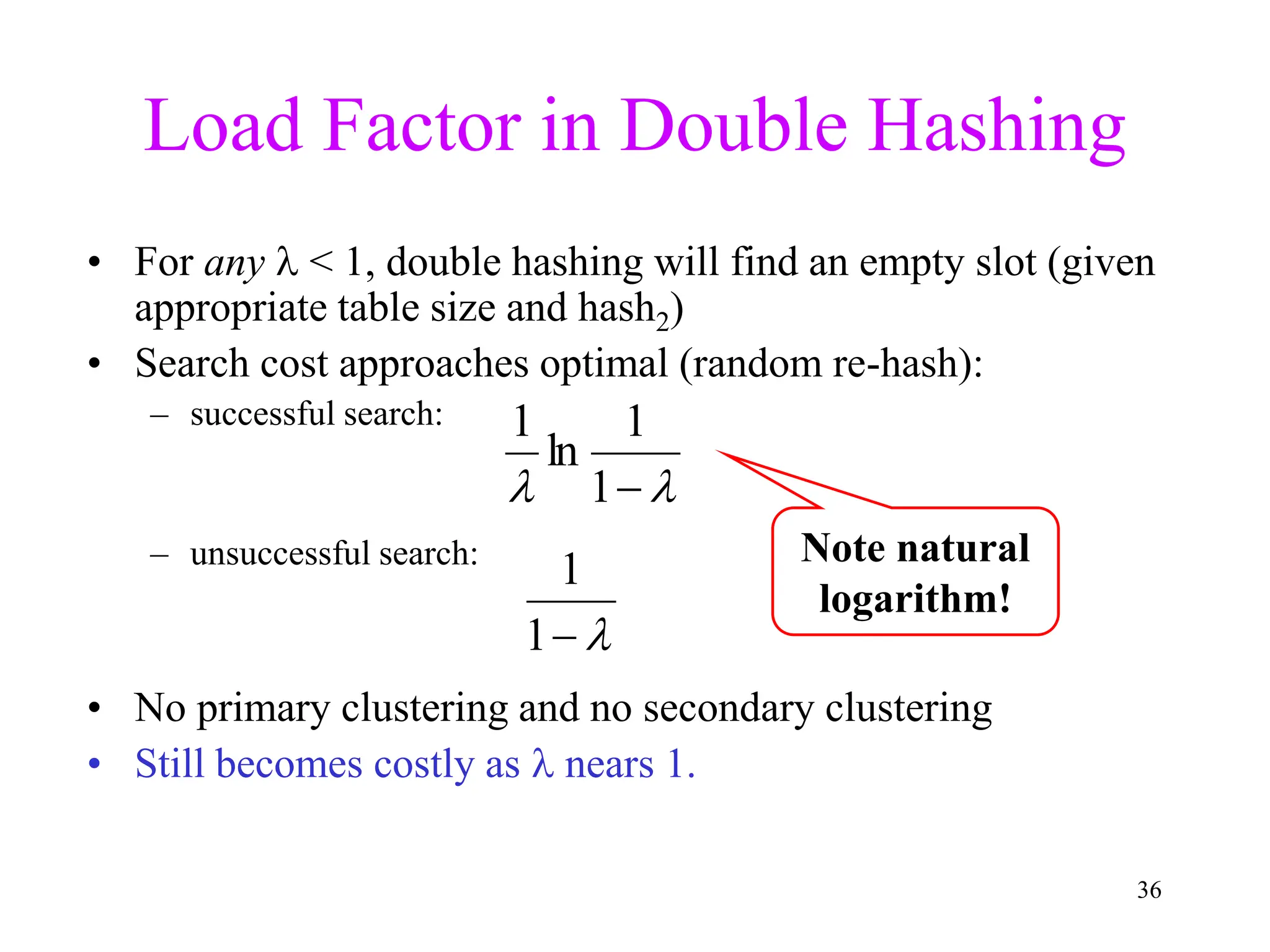

3. Double hashing is described as the best closed hashing technique: it uses a second hash function to choose a key-dependent probe step, which spreads keys out and largely avoids the clustering seen with linear and quadratic probing.
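The collision strategies named in the summary above can be compared side by side. What follows is a minimal sketch, not the slides' own code: it assumes integer keys, a primary hash of `key % m`, a second hash of `1 + key % (m - 1)`, and Python lists standing in for linked-list chains. All function names are hypothetical.

```python
def linear_probe(h, i, key, m):
    # Try the next slot each time: h, h+1, h+2, ...
    return (h + i) % m

def quadratic_probe(h, i, key, m):
    # Jump by growing squares: h, h+1, h+4, h+9, ...
    return (h + i * i) % m

def double_hash_probe(h, i, key, m):
    # Assumed second hash: a step in 1..m-1 (never 0).
    # With a prime table size, any such step eventually visits every slot.
    step = 1 + key % (m - 1)
    return (h + i * step) % m

def insert_closed(table, key, probe):
    """Closed hashing: probe until an empty slot is found, then store the key."""
    m = len(table)
    h = key % m  # assumed primary hash
    for i in range(m):
        slot = probe(h, i, key, m)
        if table[slot] is None:
            table[slot] = key
            return slot
    # Quadratic probing in particular may give up before the table is full.
    raise RuntimeError("no free slot found within m probes")

def place(keys, probe, m=7):
    """Insert keys into a fresh table of size m and report the slot each one got."""
    table = [None] * m
    return [insert_closed(table, k, probe) for k in keys]

def insert_chained(buckets, key):
    """Separate chaining: colliding keys share one bucket (a list here)."""
    buckets[key % len(buckets)].append(key)

# 10, 17 and 24 all hash to slot 3 when m = 7, so every insert after the first collides.
colliding = [10, 17, 24]
linear_slots = place(colliding, linear_probe)        # [3, 4, 5]
quadratic_slots = place(colliding, quadratic_probe)  # [3, 4, 0]
double_slots = place(colliding, double_hash_probe)   # [3, 2, 4]

chains = [[] for _ in range(7)]
for k in colliding:
    insert_chained(chains, k)
```

With linear probing the three colliding keys land in adjacent slots, which is how clusters start. The double hashing run gives 17 and 24 different step sizes, so they scatter instead of following the same path.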

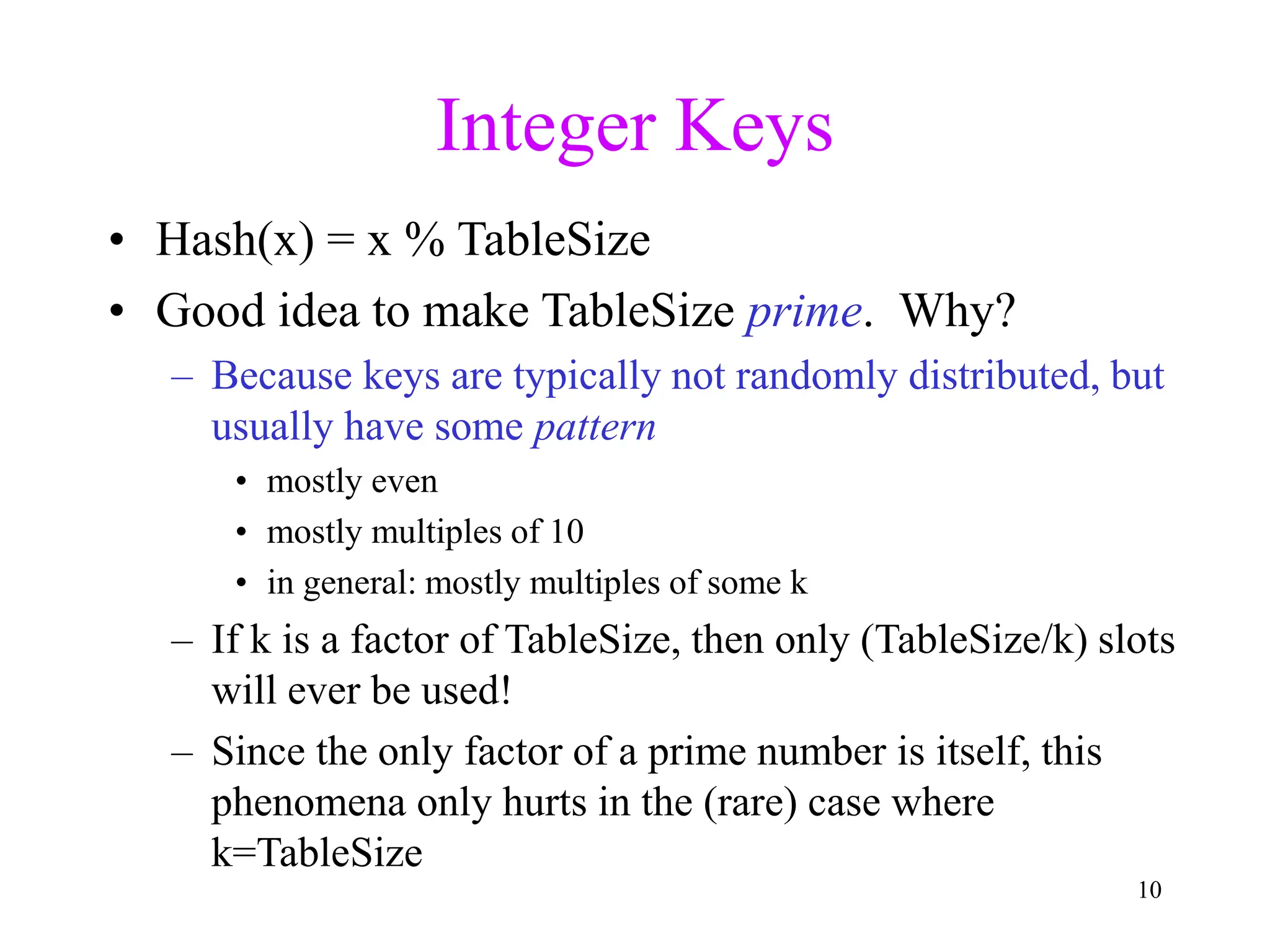

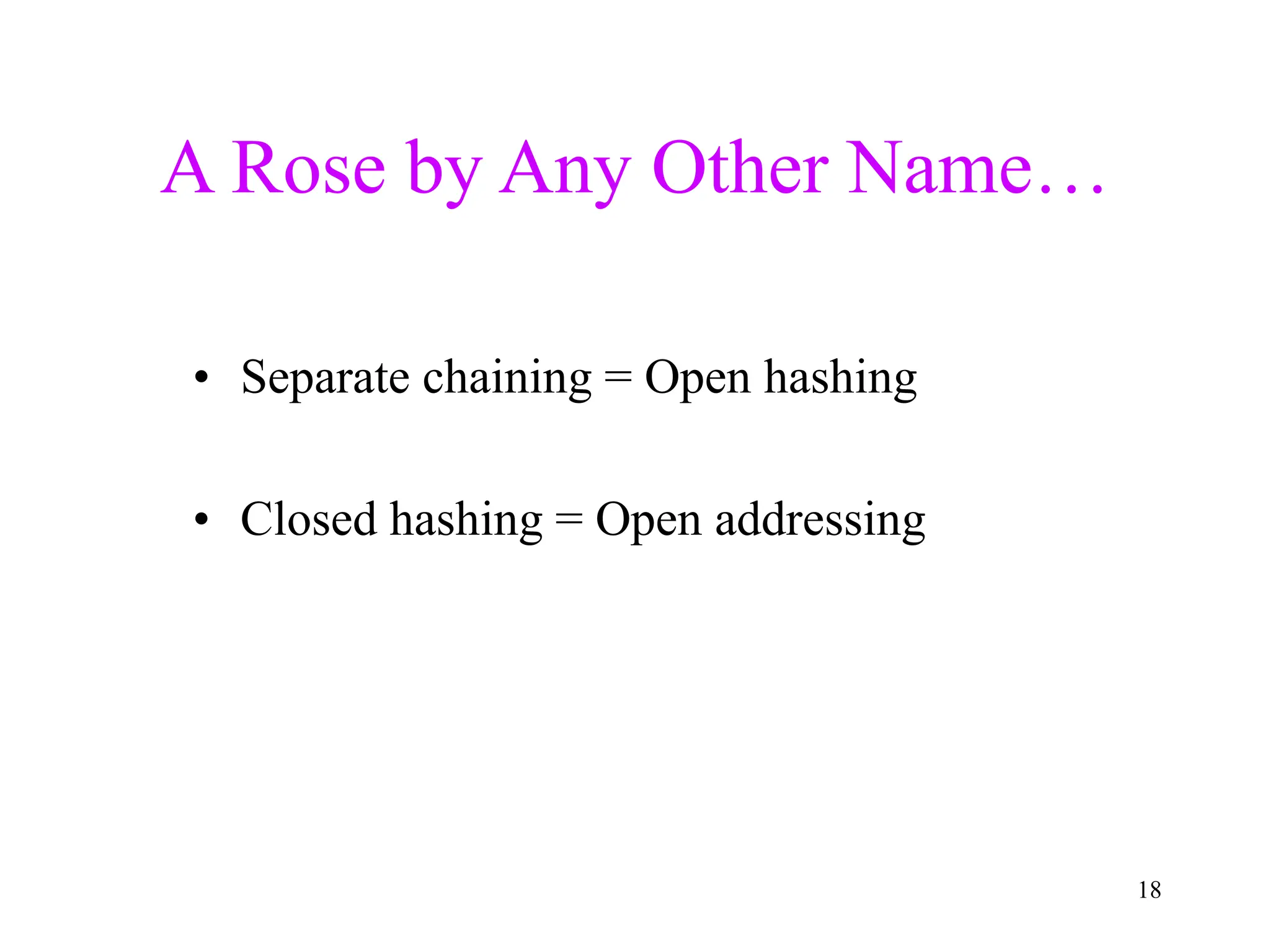

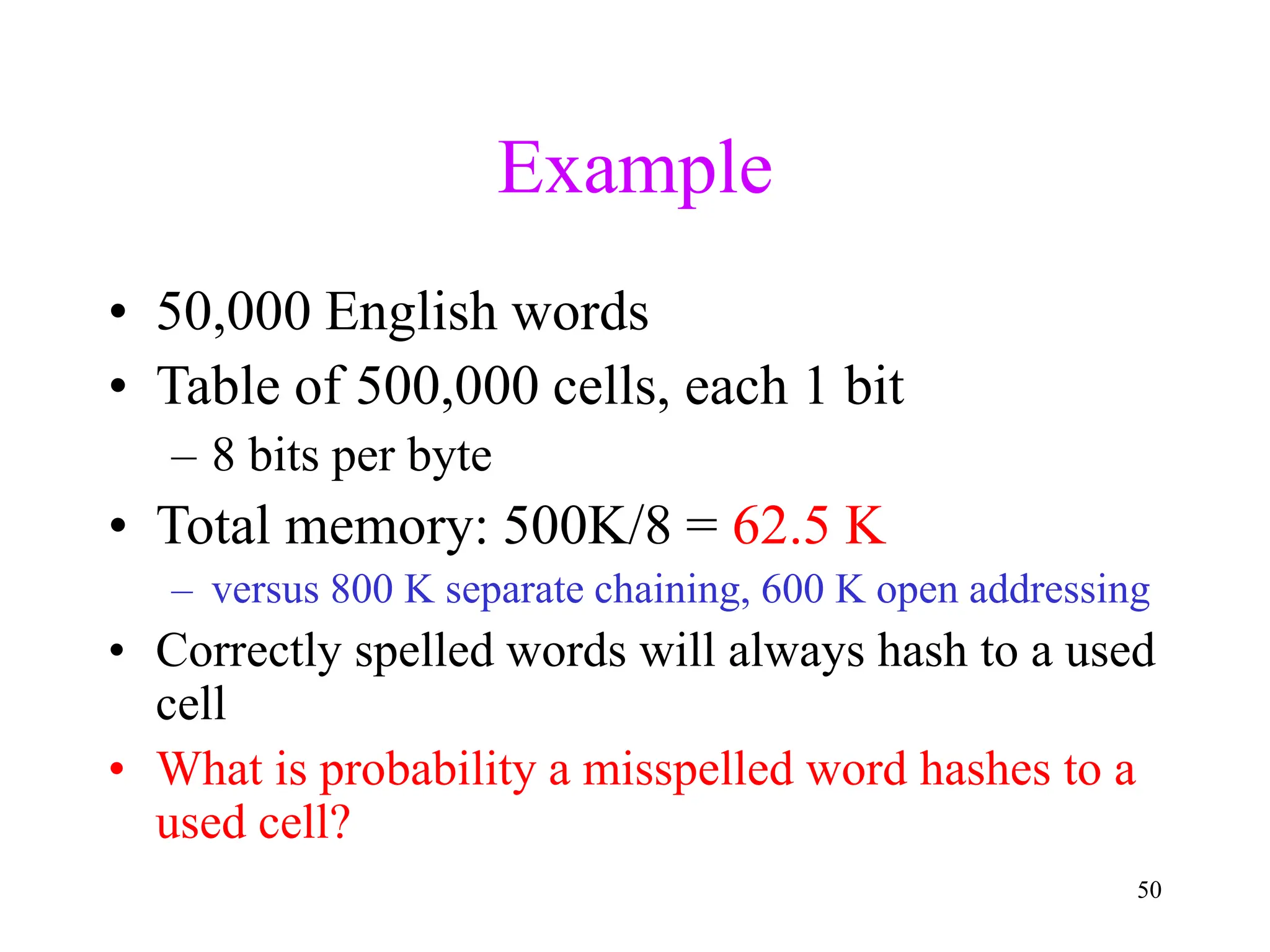

![5

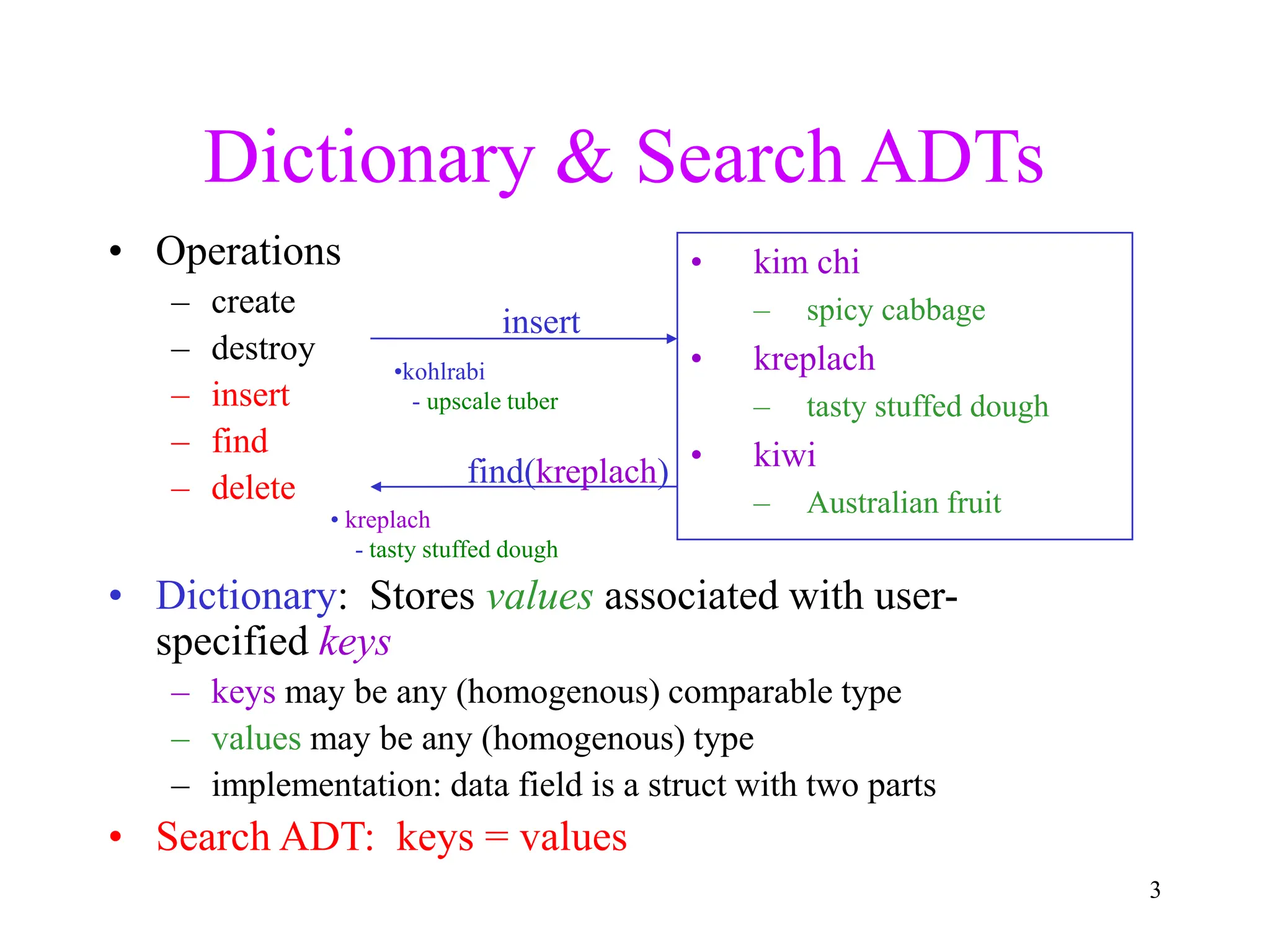

Hash Tables: Basic Idea

• Use a key (arbitrary string or number) to index

directly into an array – O(1) time to access records

– A[“kreplach”] = “tasty stuffed dough”

– Need a hash function to convert the key to an integer

Key Data

0 kim chi spicy cabbage

1 kreplach tasty stuffed dough

2 kiwi Australian fruit](https://image.slidesharecdn.com/part5-hashing-240320060630-dba28dc3/75/Hashing-In-Data-Structure-Download-PPT-i-5-2048.jpg)
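The slide's point that a hash function must convert the key to an integer can be sketched as follows. This is an illustration under stated assumptions rather than the slides' implementation: the base-31 polynomial hash, the table size of 11, and the `TinyHashTable` class are all hypothetical choices, and the indices it produces will not match the 0, 1, 2 shown in the slide's table.

```python
def hash_key(key: str, table_size: int) -> int:
    """Assumed polynomial hash: read the characters as digits in base 31."""
    h = 0
    for ch in key:
        h = (h * 31 + ord(ch)) % table_size
    return h

class TinyHashTable:
    """Array indexed by hash_key; each slot holds a small list of (key, value) pairs."""

    def __init__(self, size: int = 11):
        self.buckets = [[] for _ in range(size)]

    def put(self, key: str, value: str) -> None:
        bucket = self.buckets[hash_key(key, len(self.buckets))]
        for i, (existing, _) in enumerate(bucket):
            if existing == key:
                bucket[i] = (key, value)  # overwrite an existing key
                return
        bucket.append((key, value))

    def get(self, key: str) -> str:
        bucket = self.buckets[hash_key(key, len(self.buckets))]
        for existing, value in bucket:
            if existing == key:
                return value
        raise KeyError(key)

A = TinyHashTable()
A.put("kim chi", "spicy cabbage")
A.put("kreplach", "tasty stuffed dough")
A.put("kiwi", "Australian fruit")
```

Lookup cost depends only on the length of the key and the length of one bucket, not on how many records the table holds, which is where the O(1) access claim comes from when buckets stay short.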

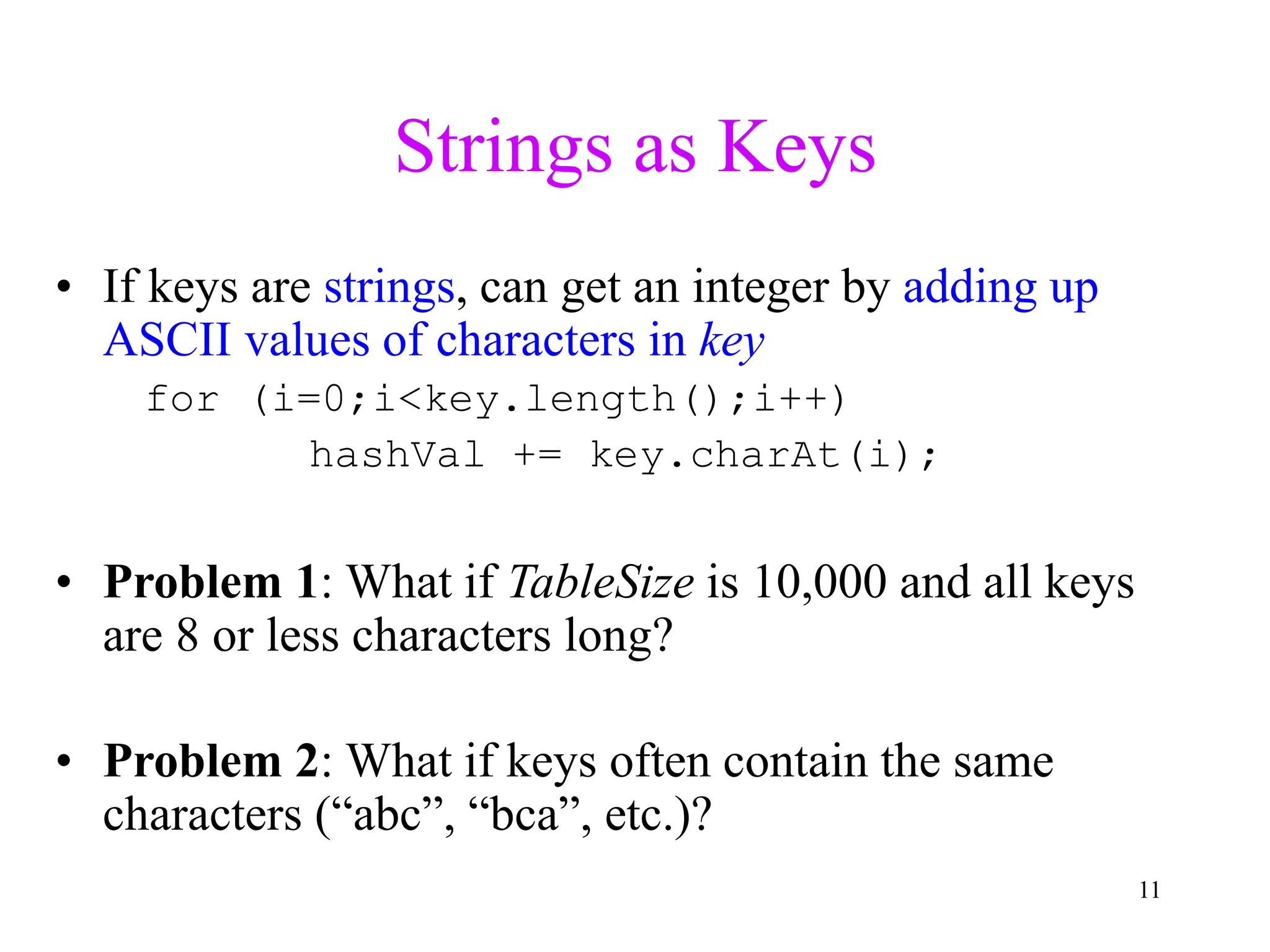

![55

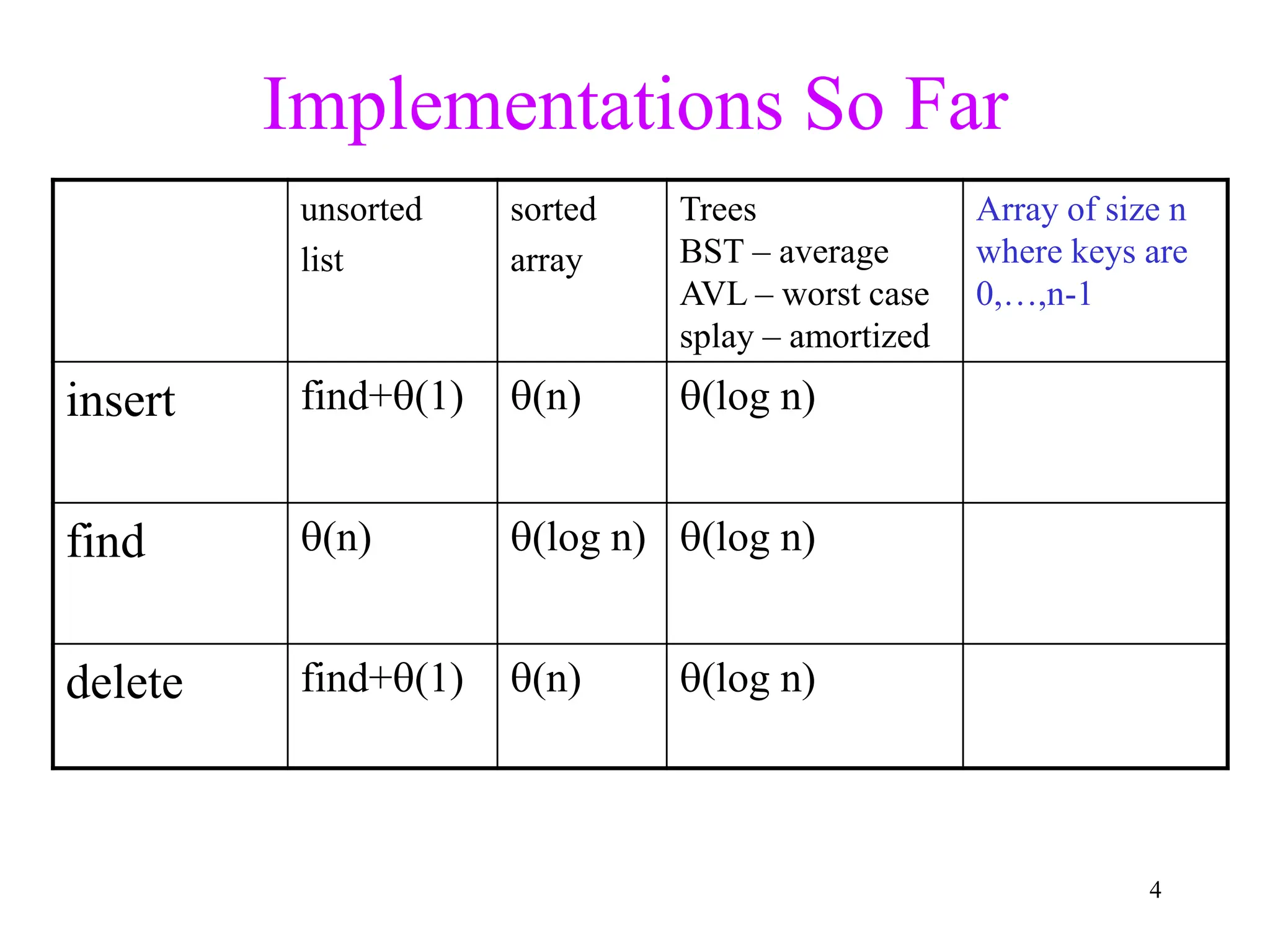

Databases

• A database is a set of records, each a tuple

of values

– E.g.: [ name, ss#, dept., salary ]

• How can we speed up queries that ask for

all employees in a given department?

• How can we speed up queries that ask for

all employees whose salary falls in a given

range?](https://image.slidesharecdn.com/part5-hashing-240320060630-dba28dc3/75/Hashing-In-Data-Structure-Download-PPT-i-55-2048.jpg)
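The slide leaves both questions open. One plausible reading, assumed here rather than taken from the slides, is that an exact-match query such as "all employees in a department" suits a hash-based index, while a range query needs an index that keeps keys in order, because hashing scatters neighbouring values. The sample records and function names below are made up for illustration.

```python
import bisect
from collections import defaultdict

# Hypothetical records in the slide's layout: (name, ss#, dept, salary)
employees = [
    ("Ann", "000-00-0001", "sales", 52000),
    ("Bob", "000-00-0002", "eng", 81000),
    ("Cy", "000-00-0003", "sales", 47000),
    ("Dee", "000-00-0004", "eng", 95000),
]

def build_dept_index(records):
    """Hash index: department -> list of records (dict is Python's hash table)."""
    index = defaultdict(list)
    for rec in records:
        index[rec[2]].append(rec)
    return index

def build_salary_index(records):
    """Ordered index: records sorted by salary, plus a parallel list of the salaries."""
    by_salary = sorted(records, key=lambda rec: rec[3])
    salaries = [rec[3] for rec in by_salary]
    return salaries, by_salary

def salary_range(salary_index, low, high):
    """Return records with low <= salary <= high using two binary searches."""
    salaries, by_salary = salary_index
    start = bisect.bisect_left(salaries, low)
    end = bisect.bisect_right(salaries, high)
    return by_salary[start:end]

dept_index = build_dept_index(employees)
salary_index = build_salary_index(employees)

sales_names = [rec[0] for rec in dept_index["sales"]]
mid_names = [rec[0] for rec in salary_range(salary_index, 50000, 85000)]
```

Both indexes avoid scanning every record per query: the department lookup is a single hash probe, and the salary query costs two binary searches plus the size of the result.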