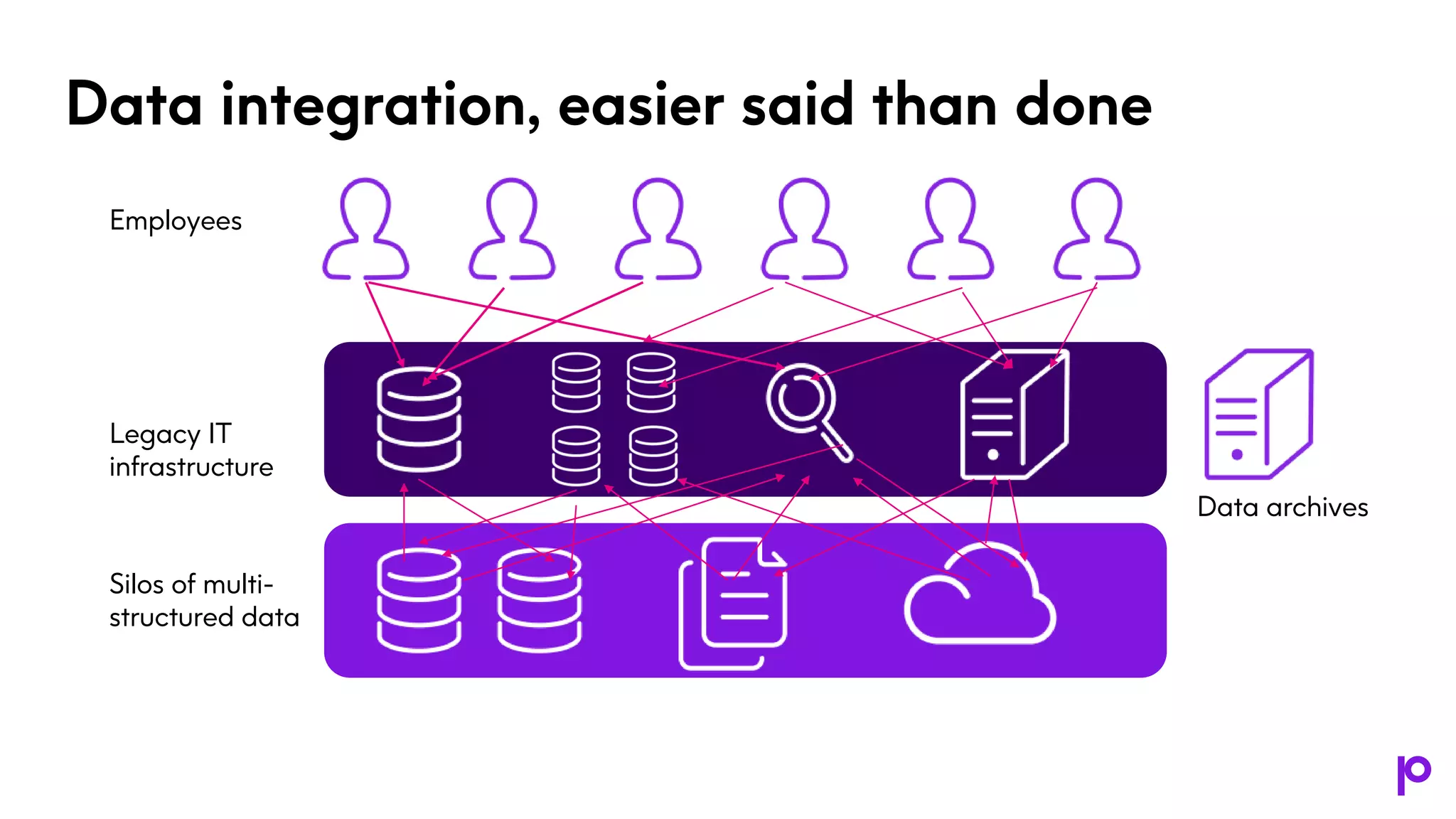

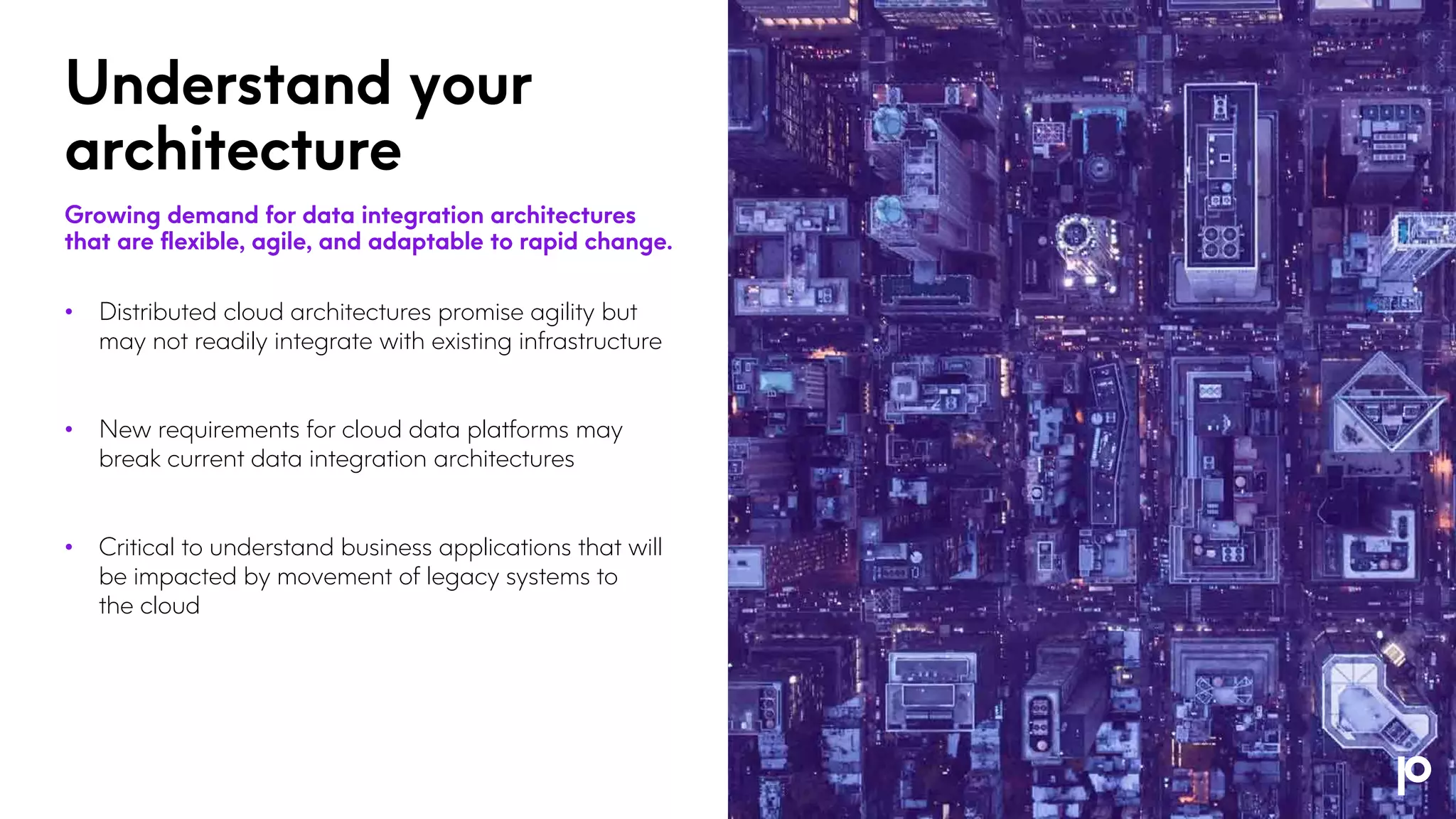

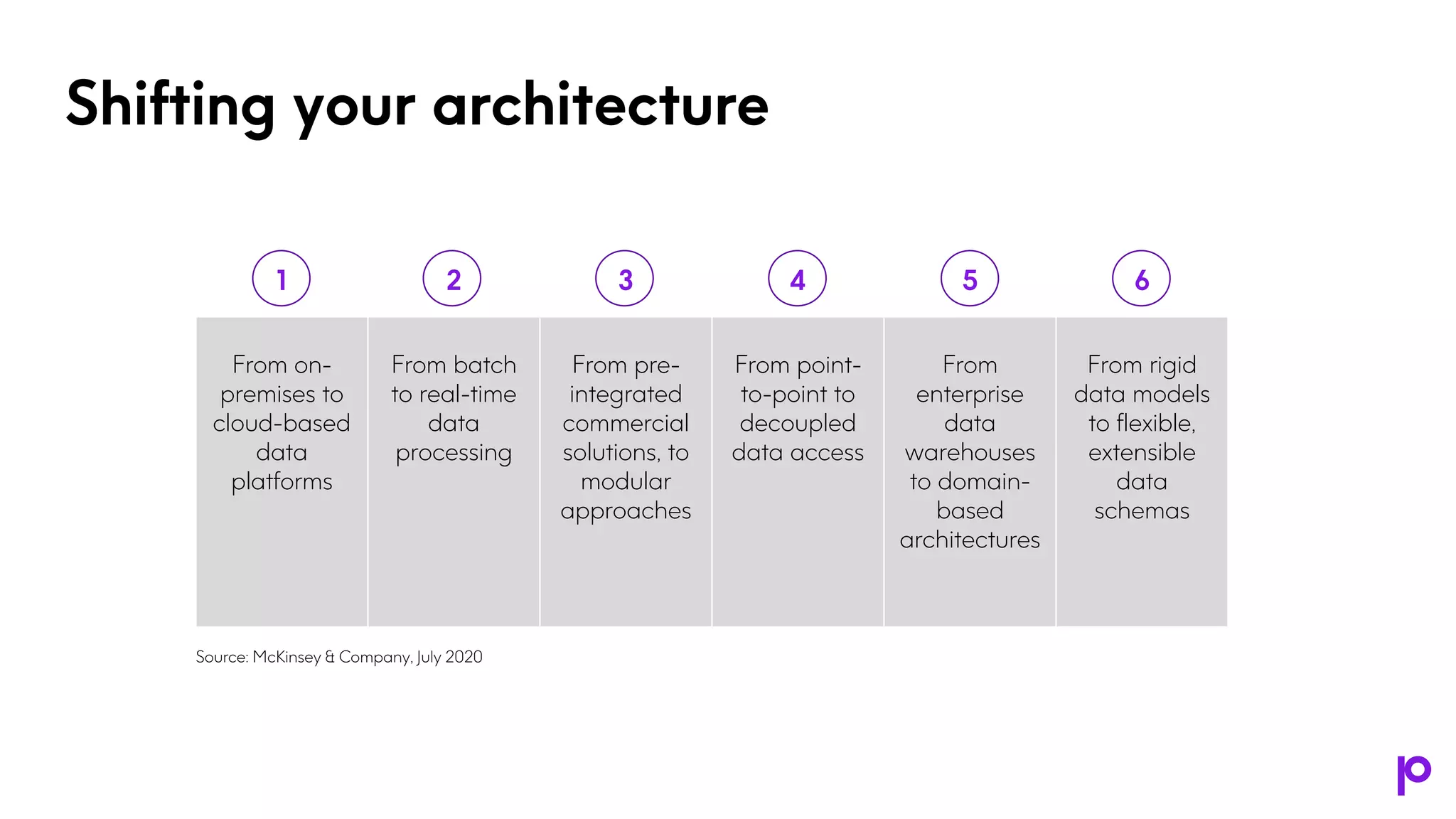

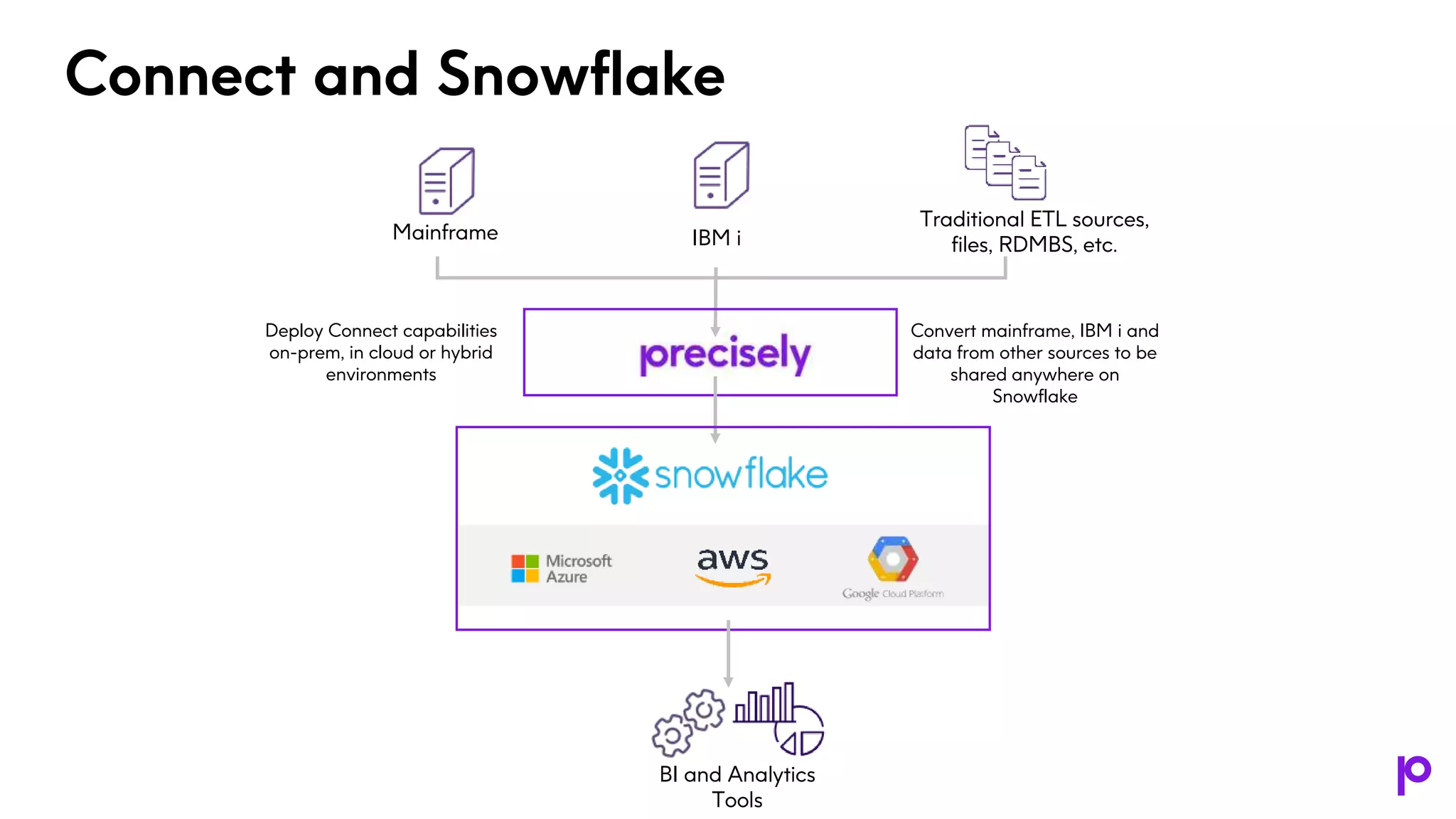

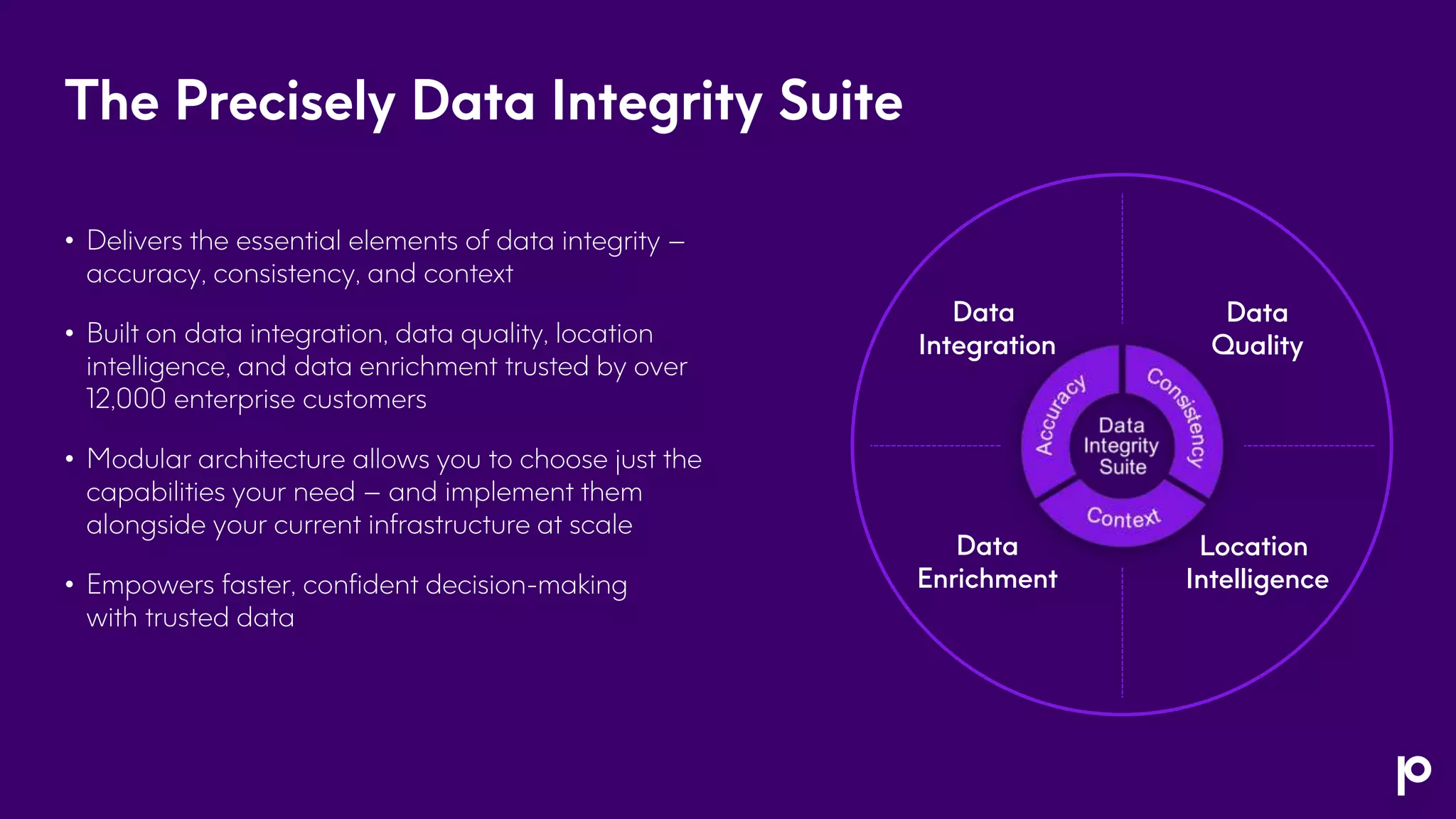

The document discusses foundational strategies for achieving trusted data integration. It emphasizes the importance of visibility, real-time data sharing, and the removal of data silos as enterprises move from on-premises to cloud environments. It highlights challenges such as legacy IT infrastructure and the need for adaptable data architectures that can support new technologies like artificial intelligence (AI) and machine learning (ML). Ultimately, the document recommends defining clear business cases and building scalable integration solutions that maximize the value of legacy data while preserving data integrity.