1) The document describes a workshop on research synthesis and reproducibility.

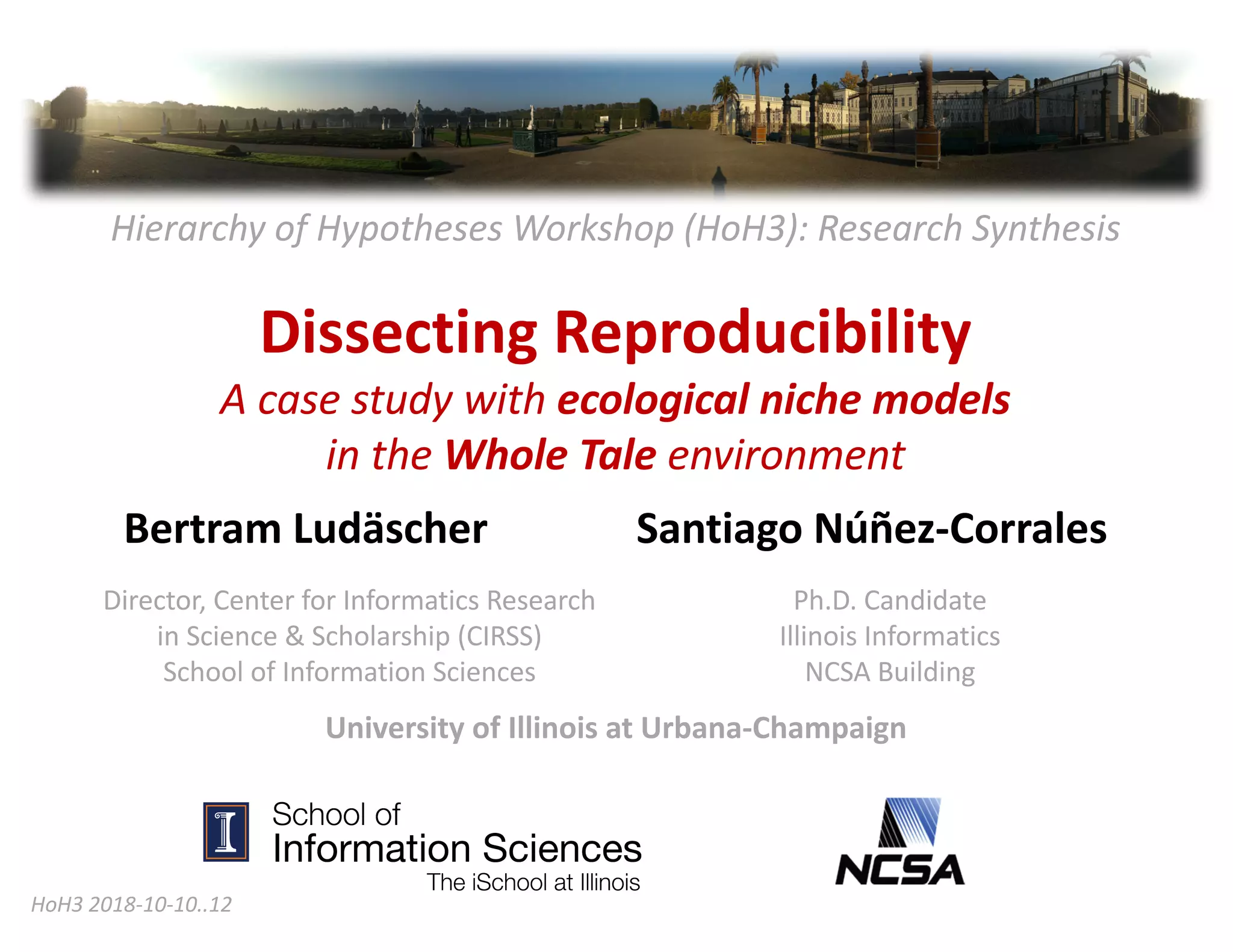

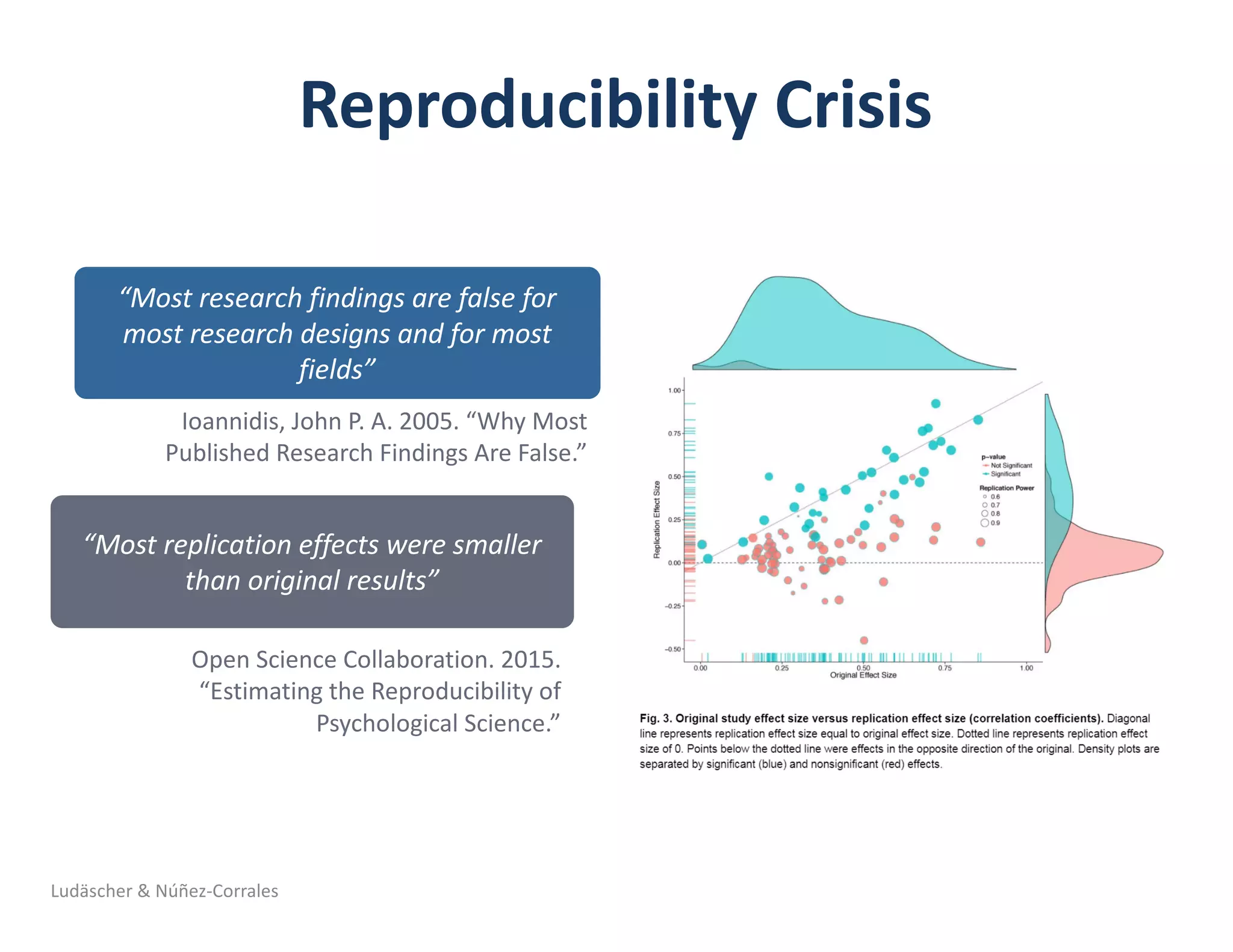

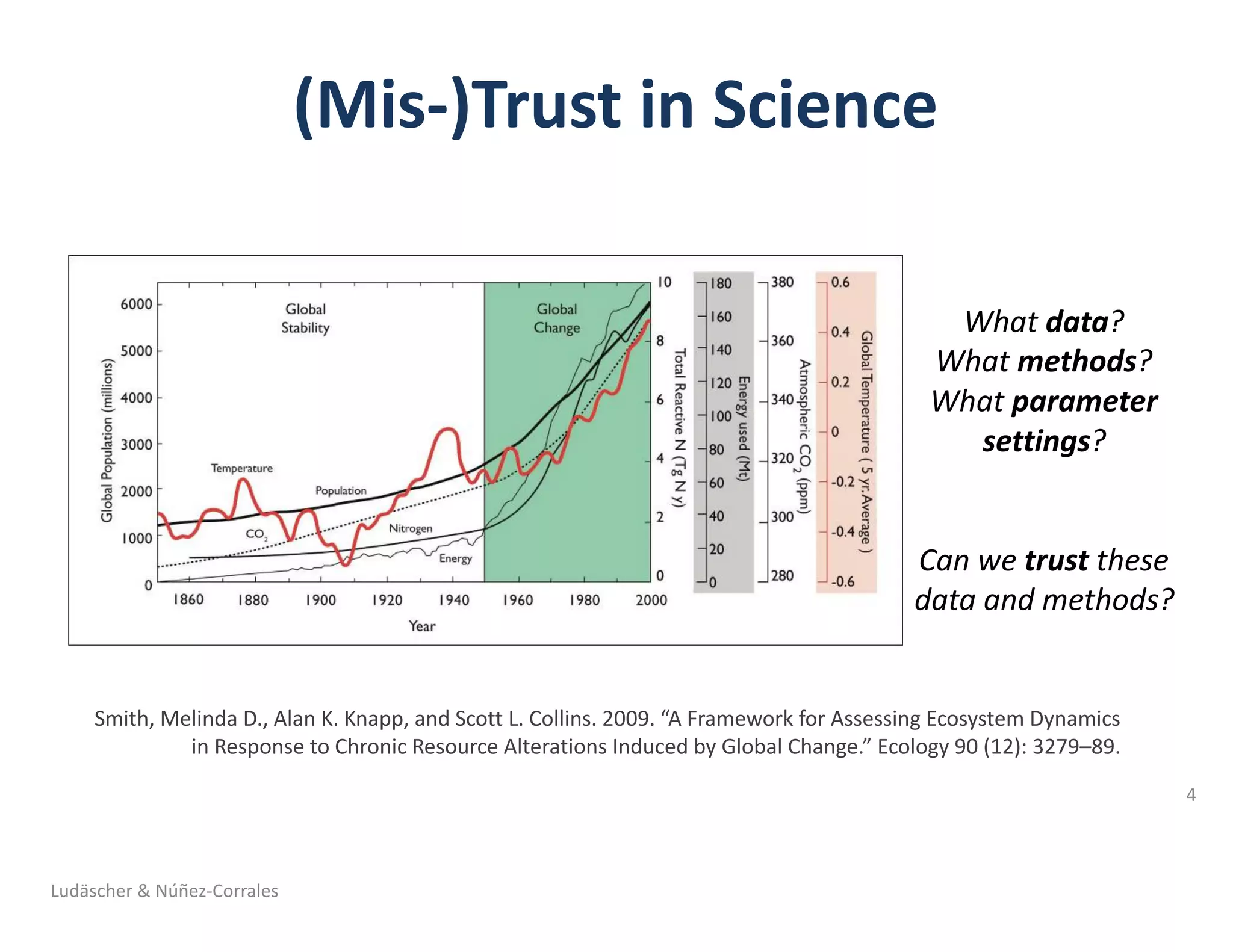

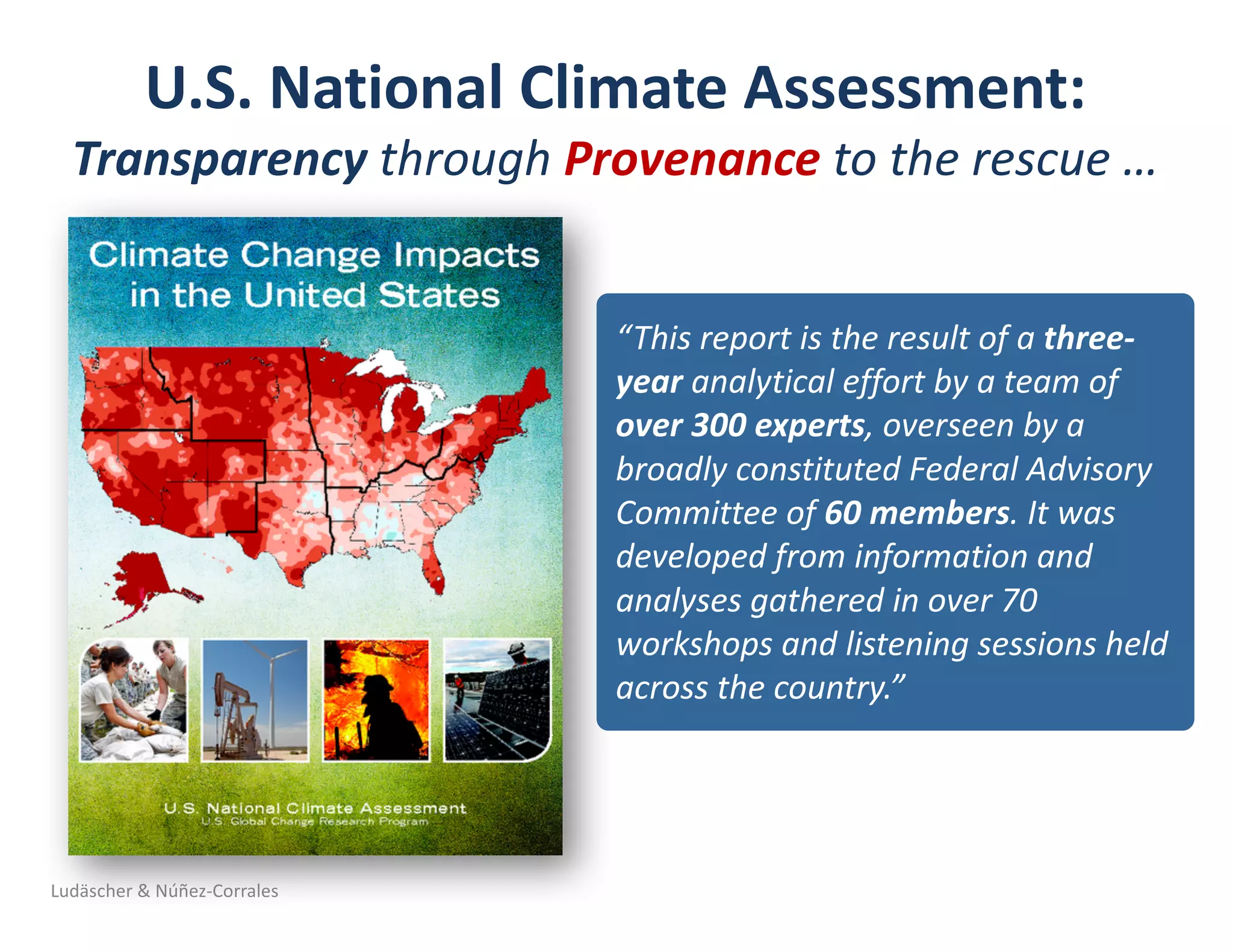

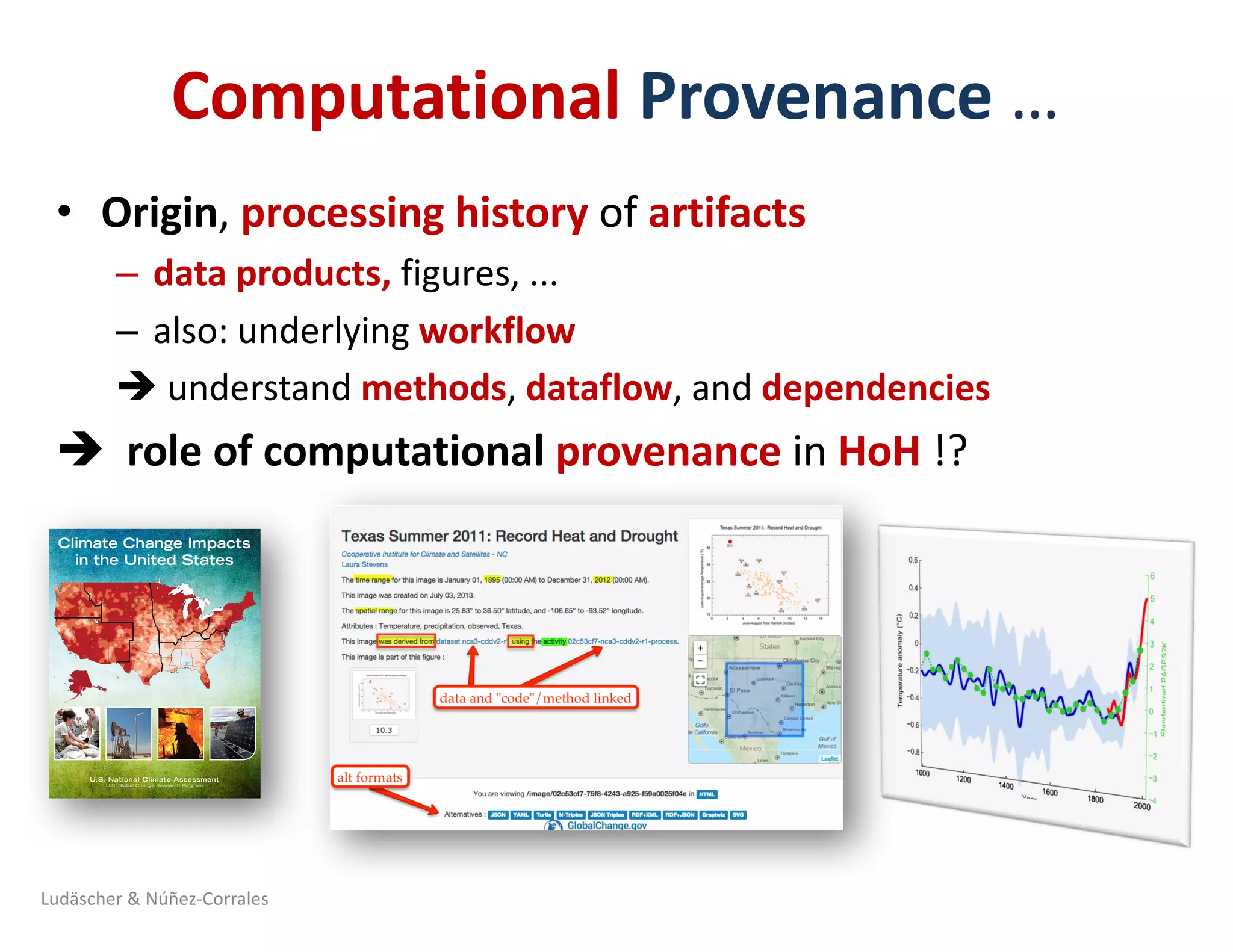

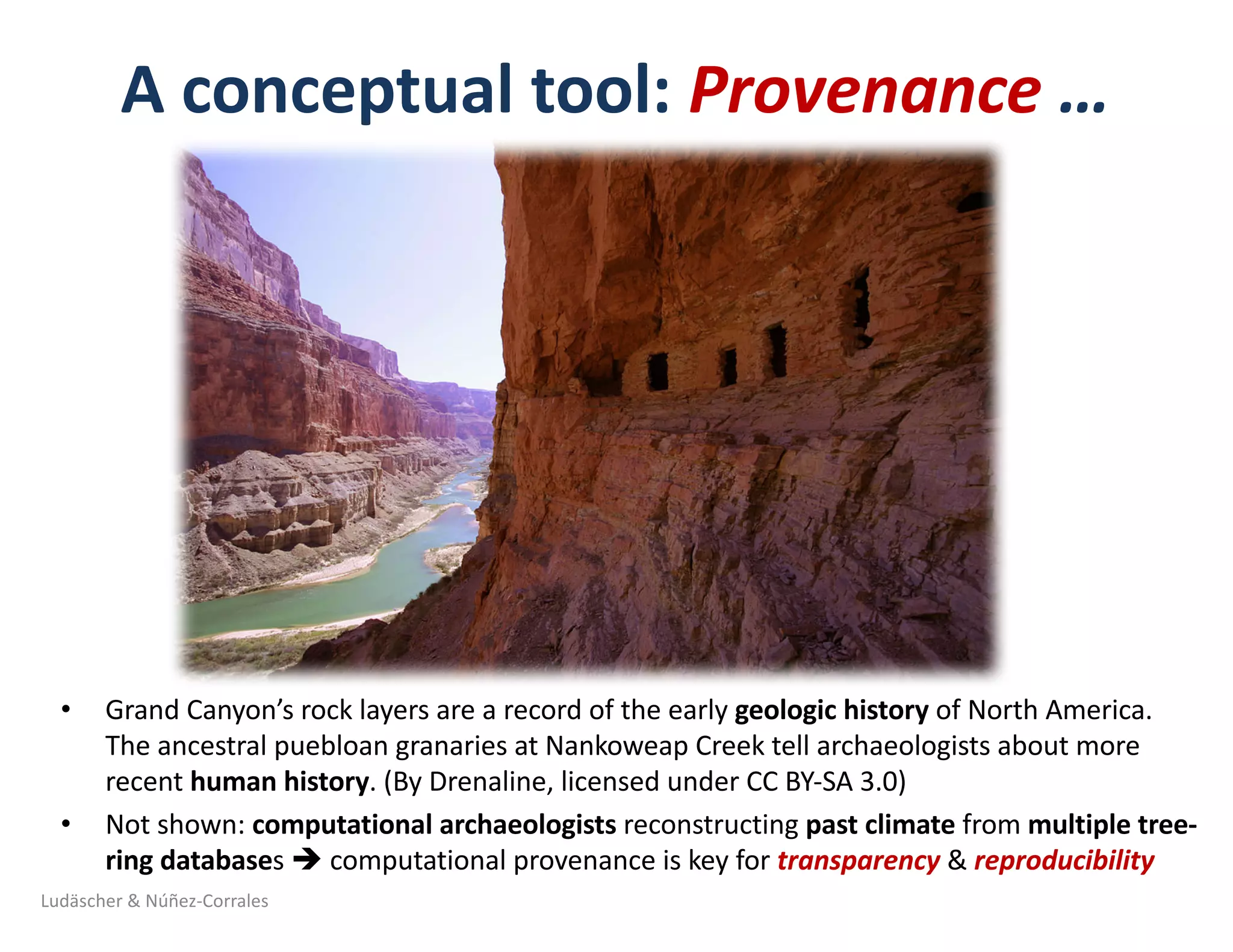

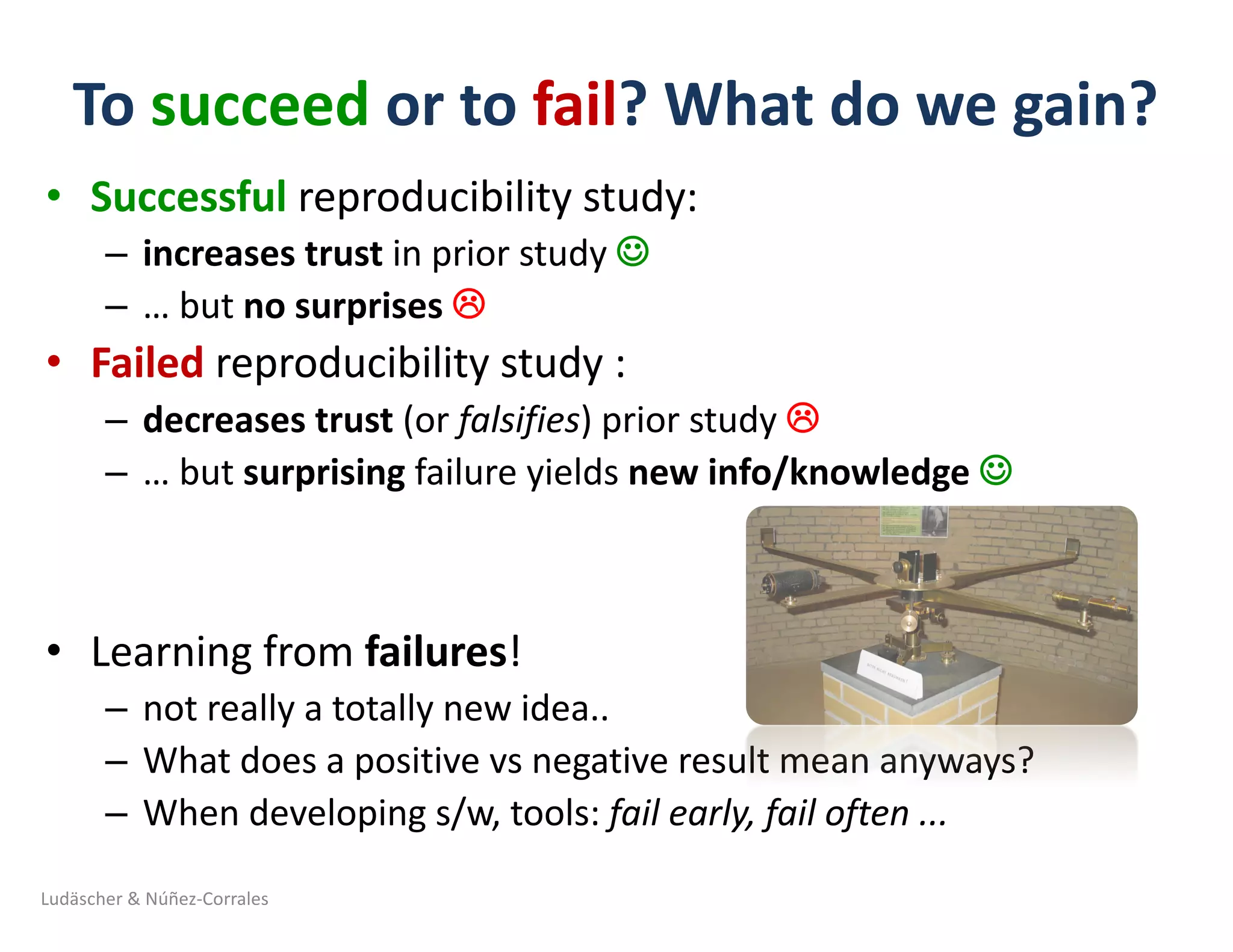

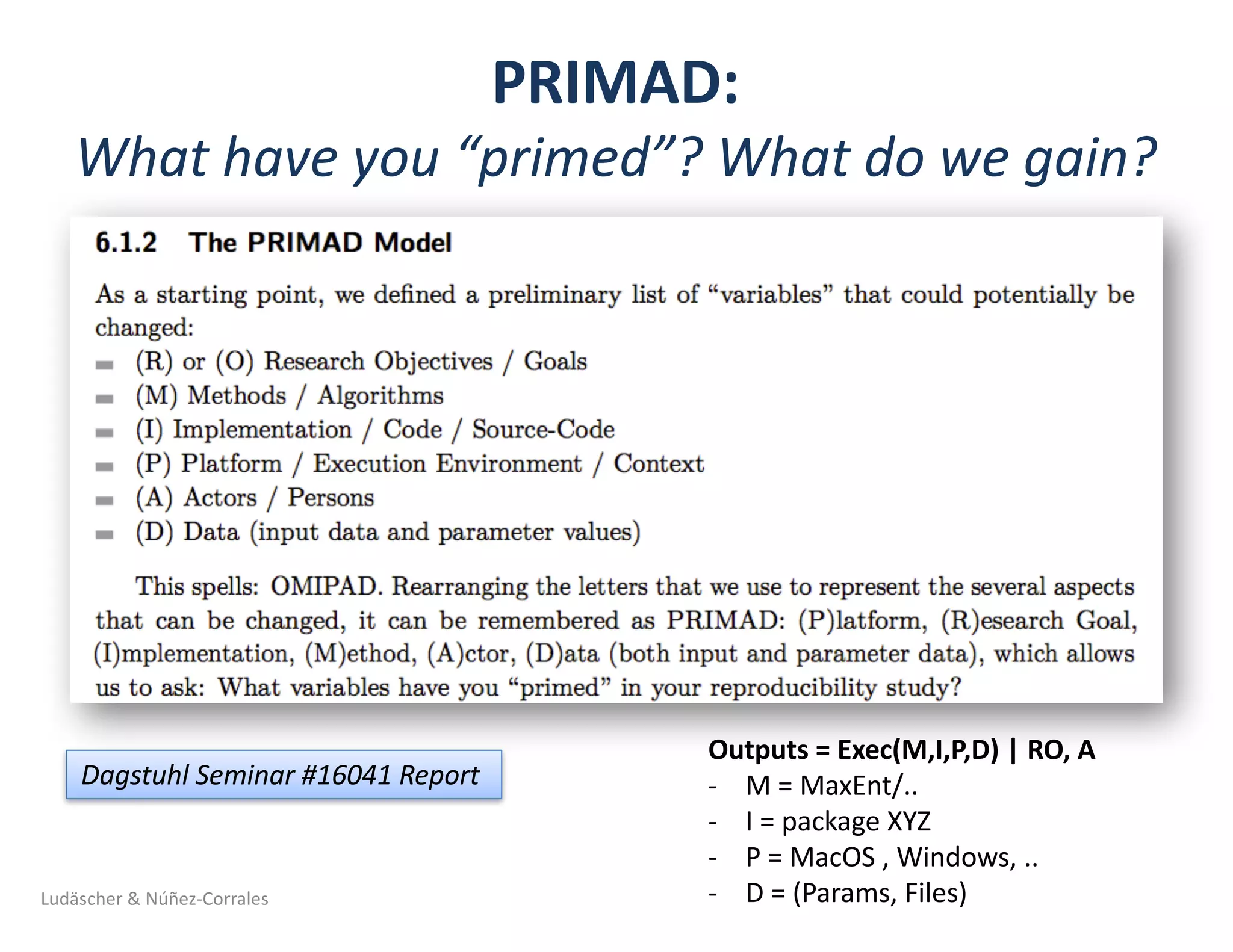

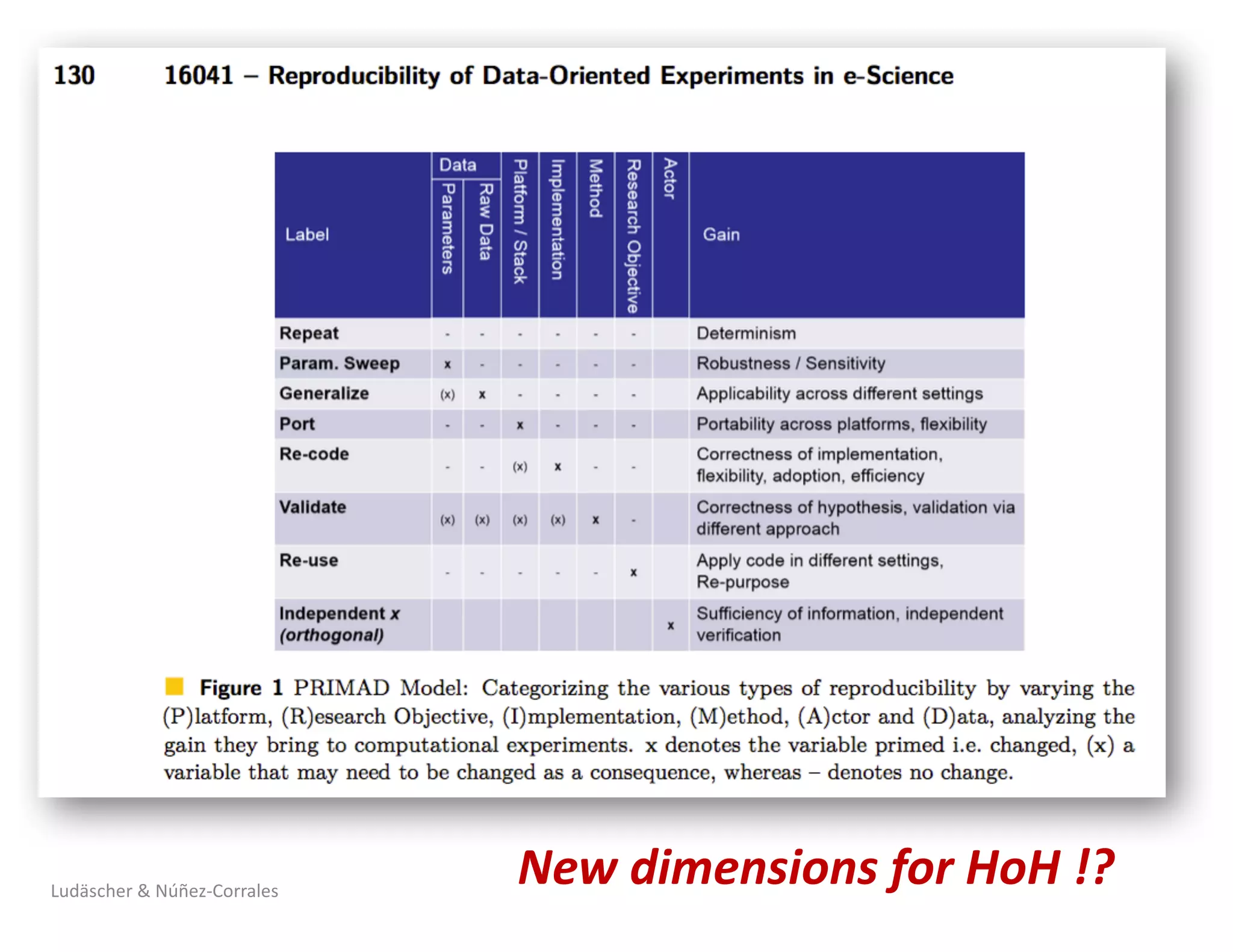

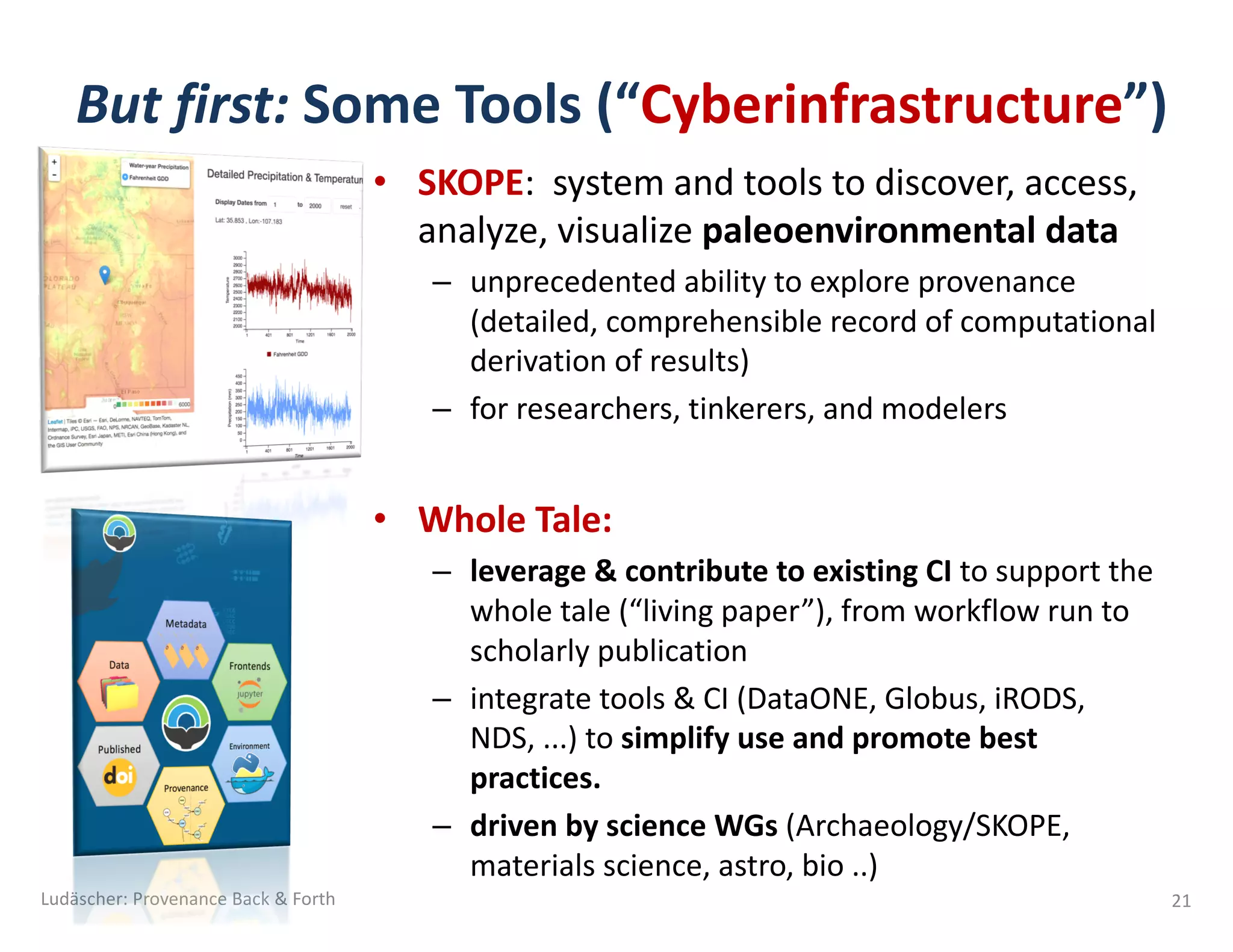

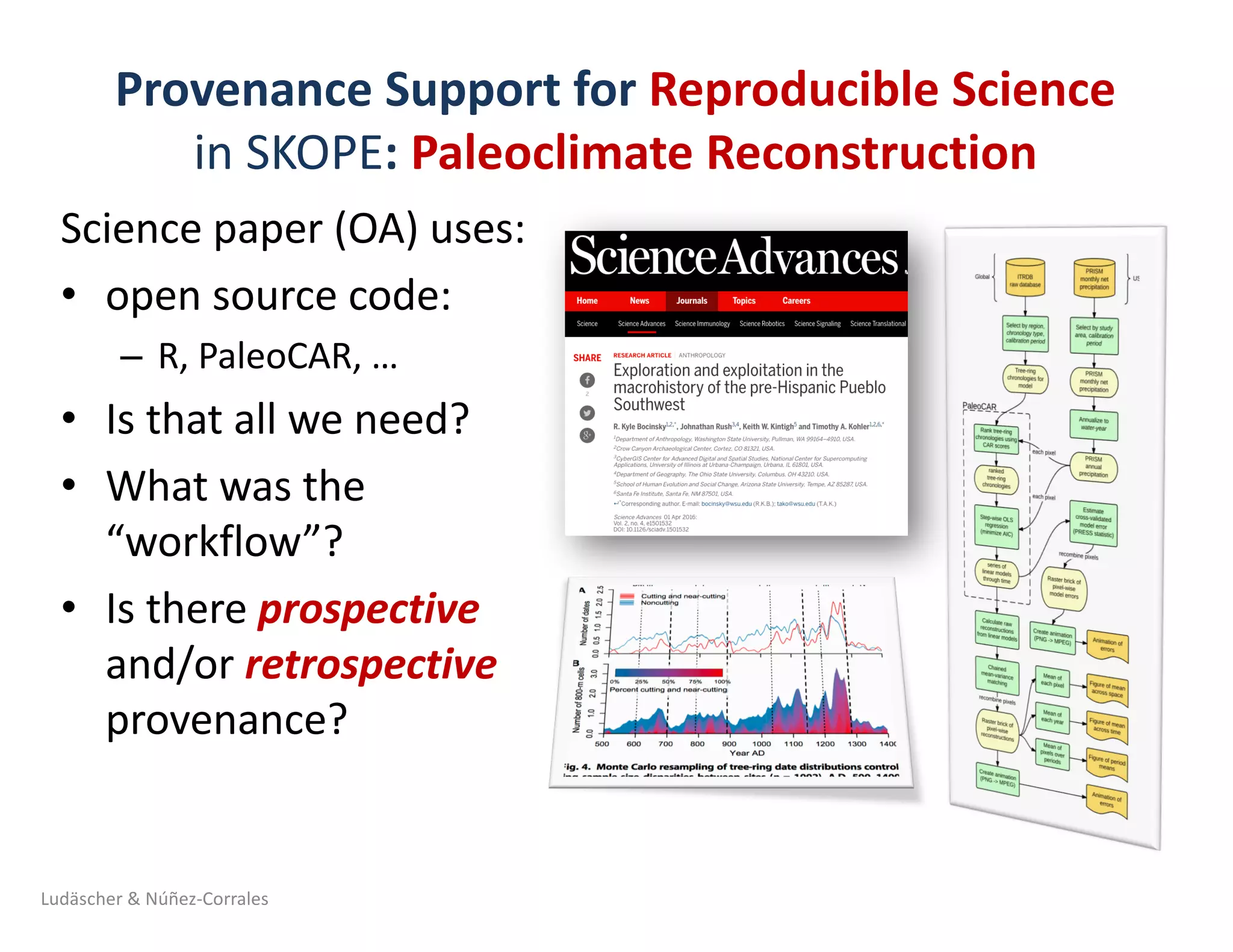

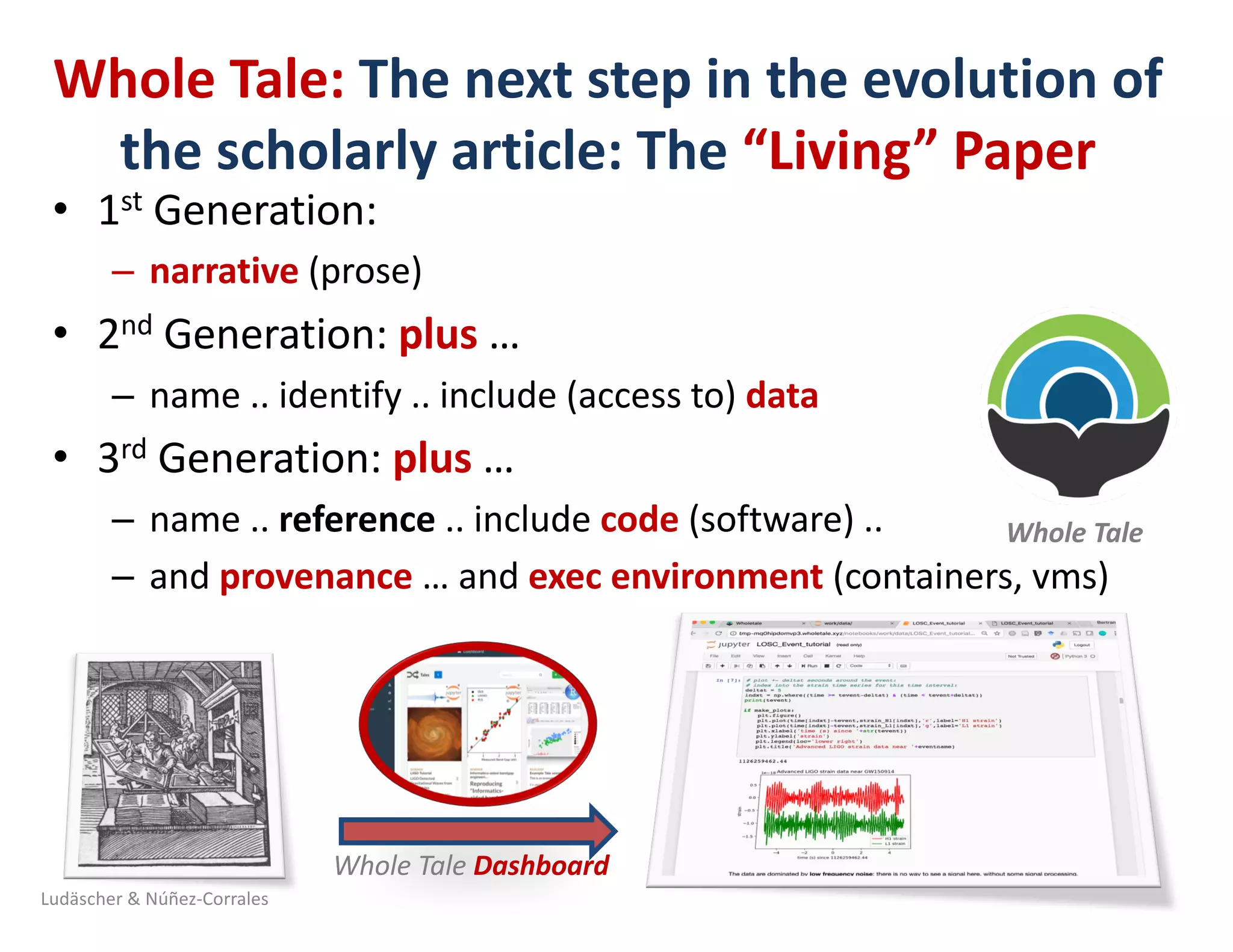

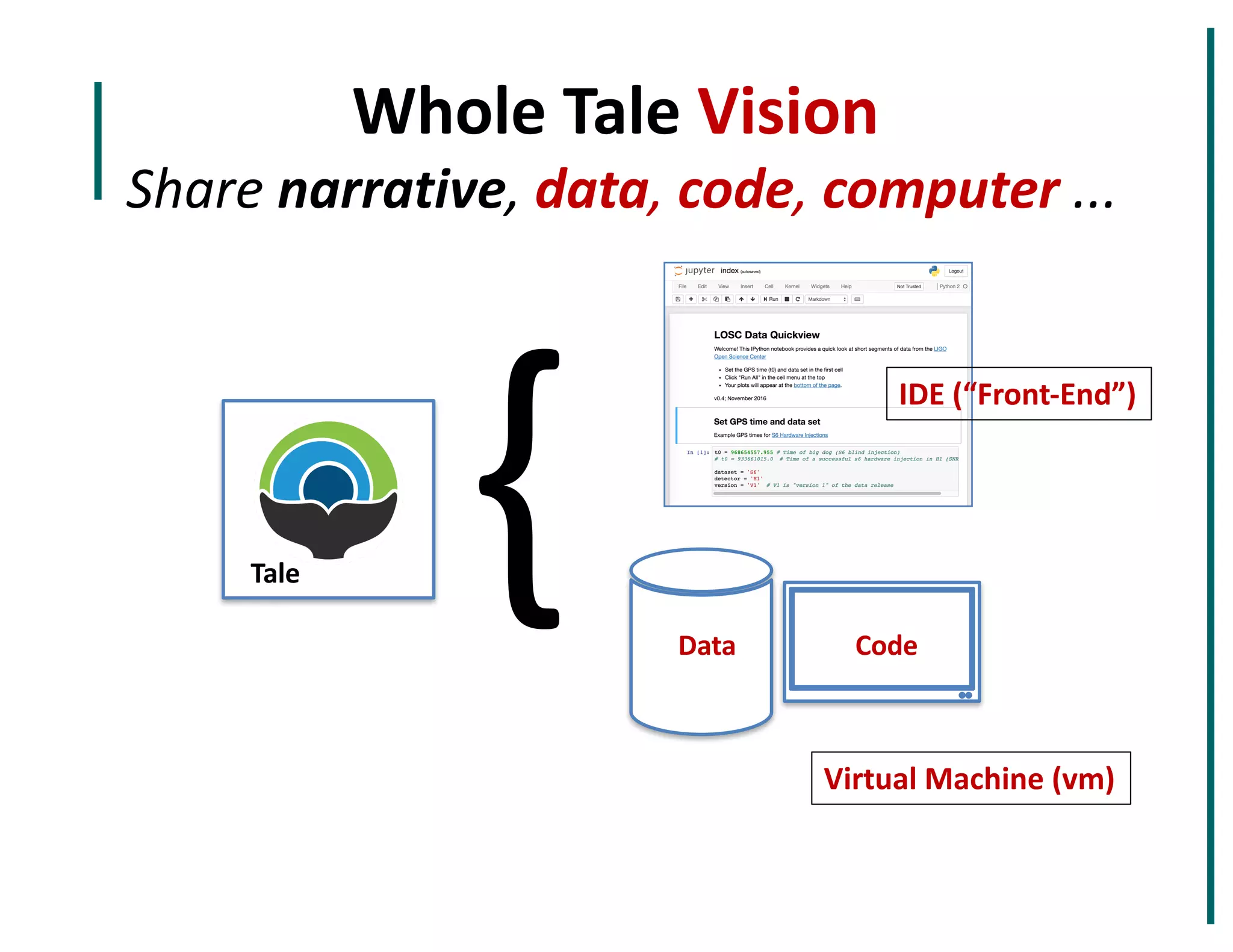

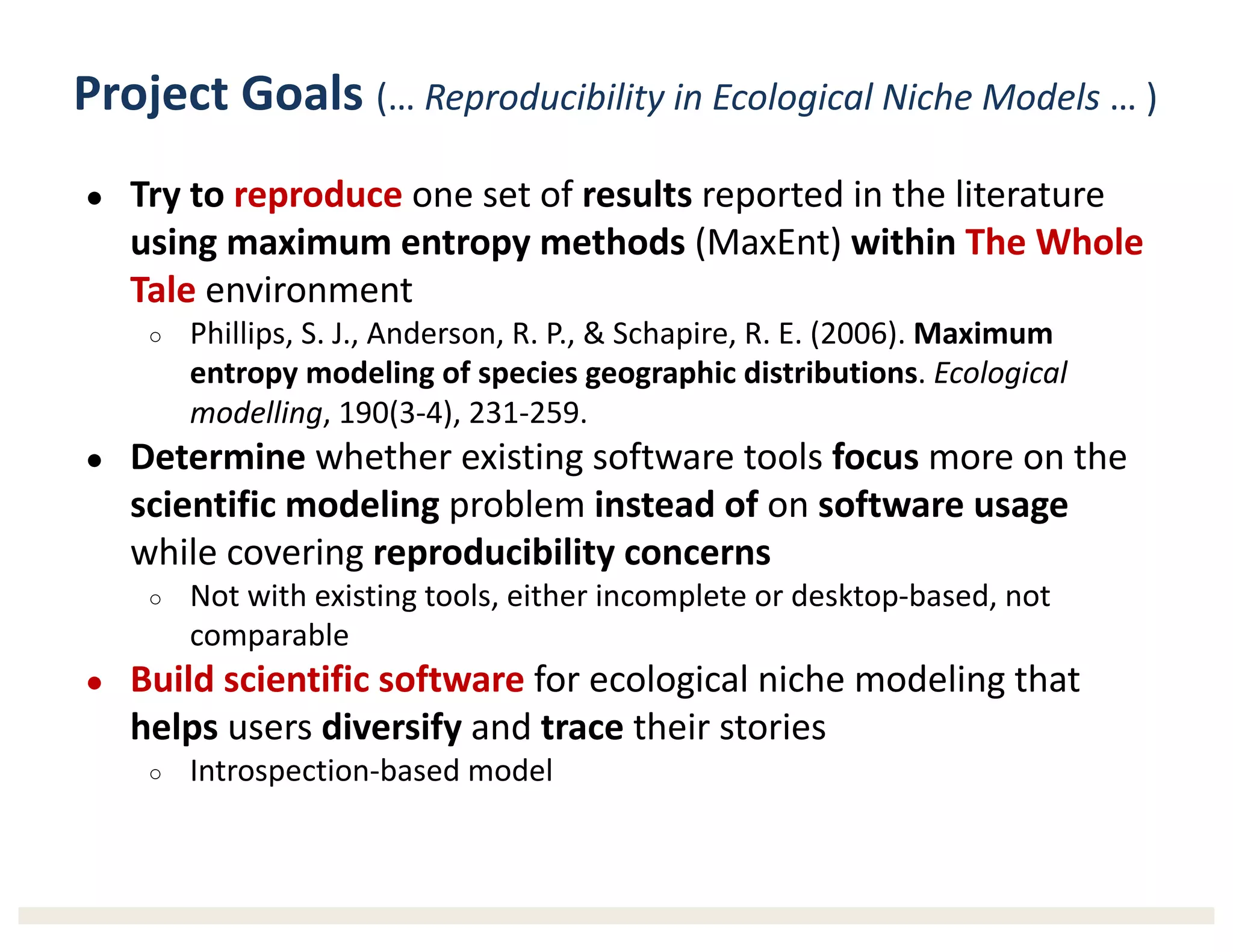

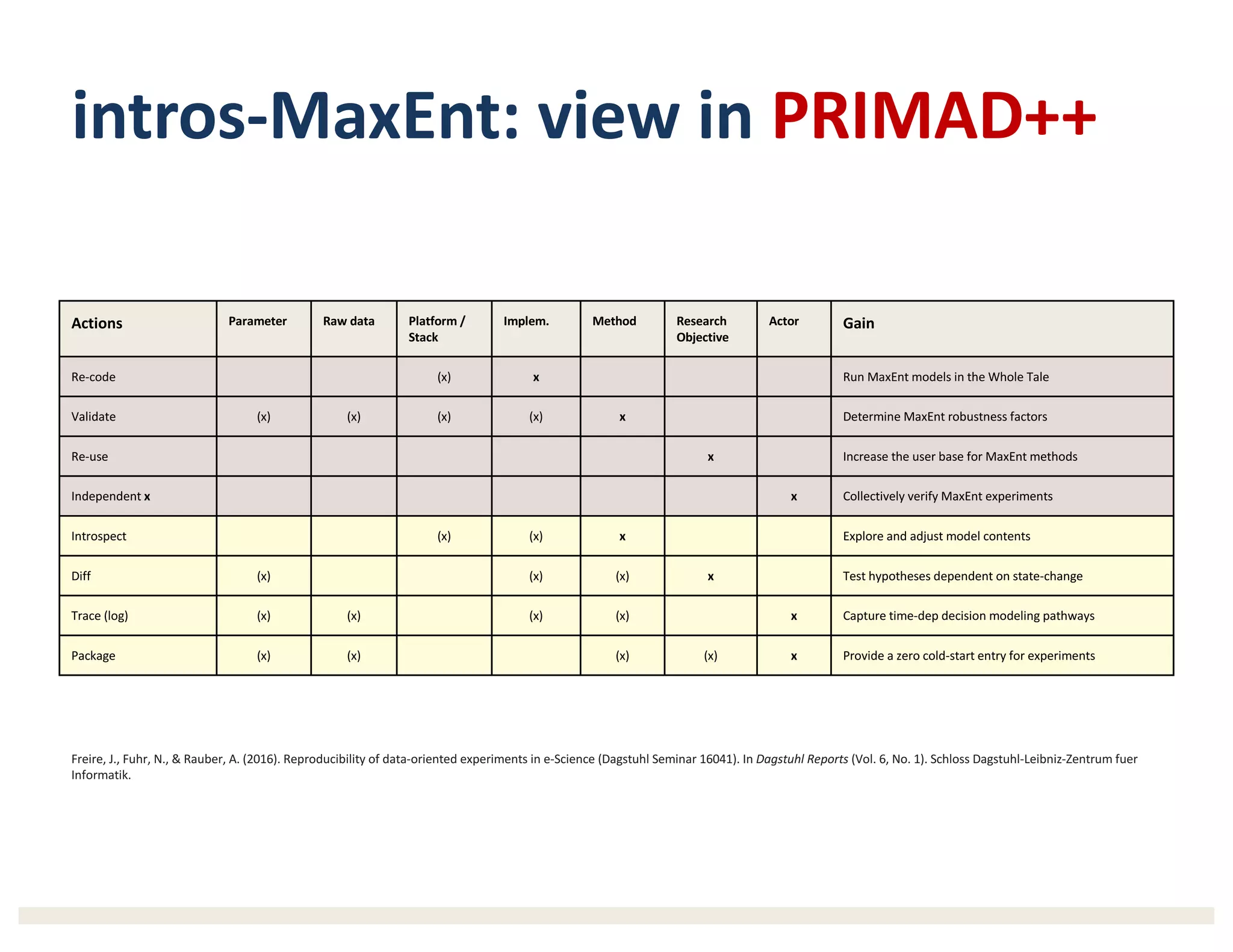

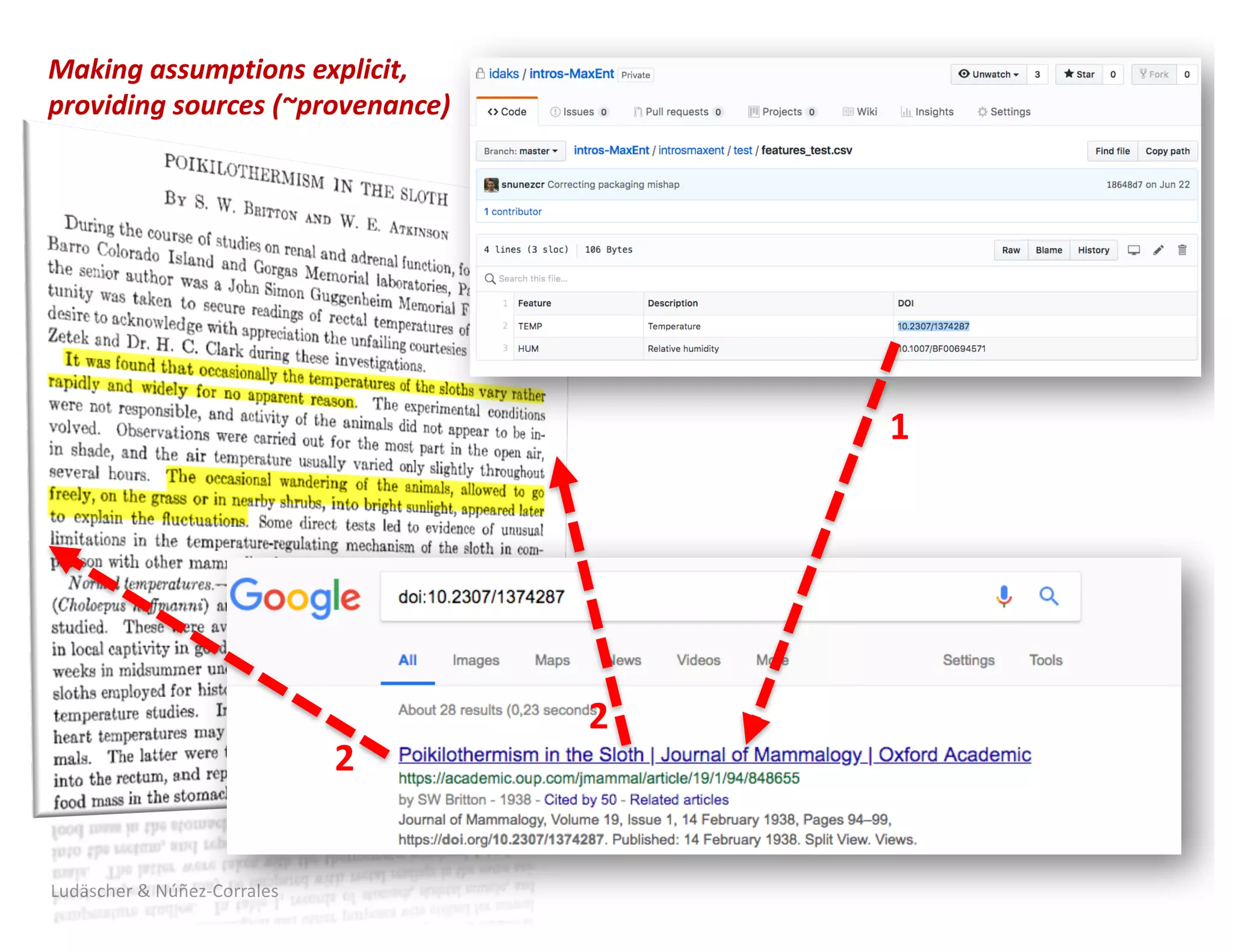

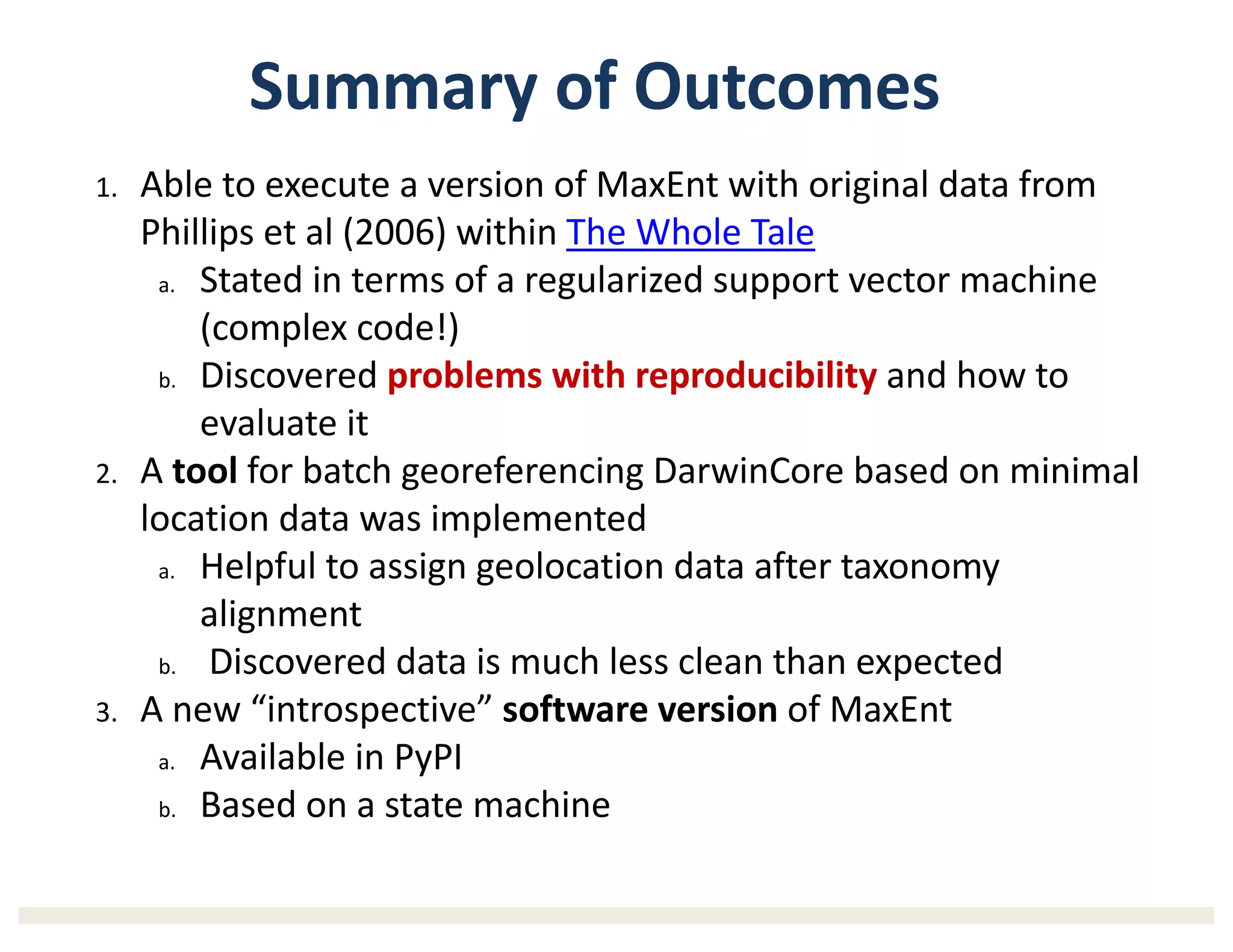

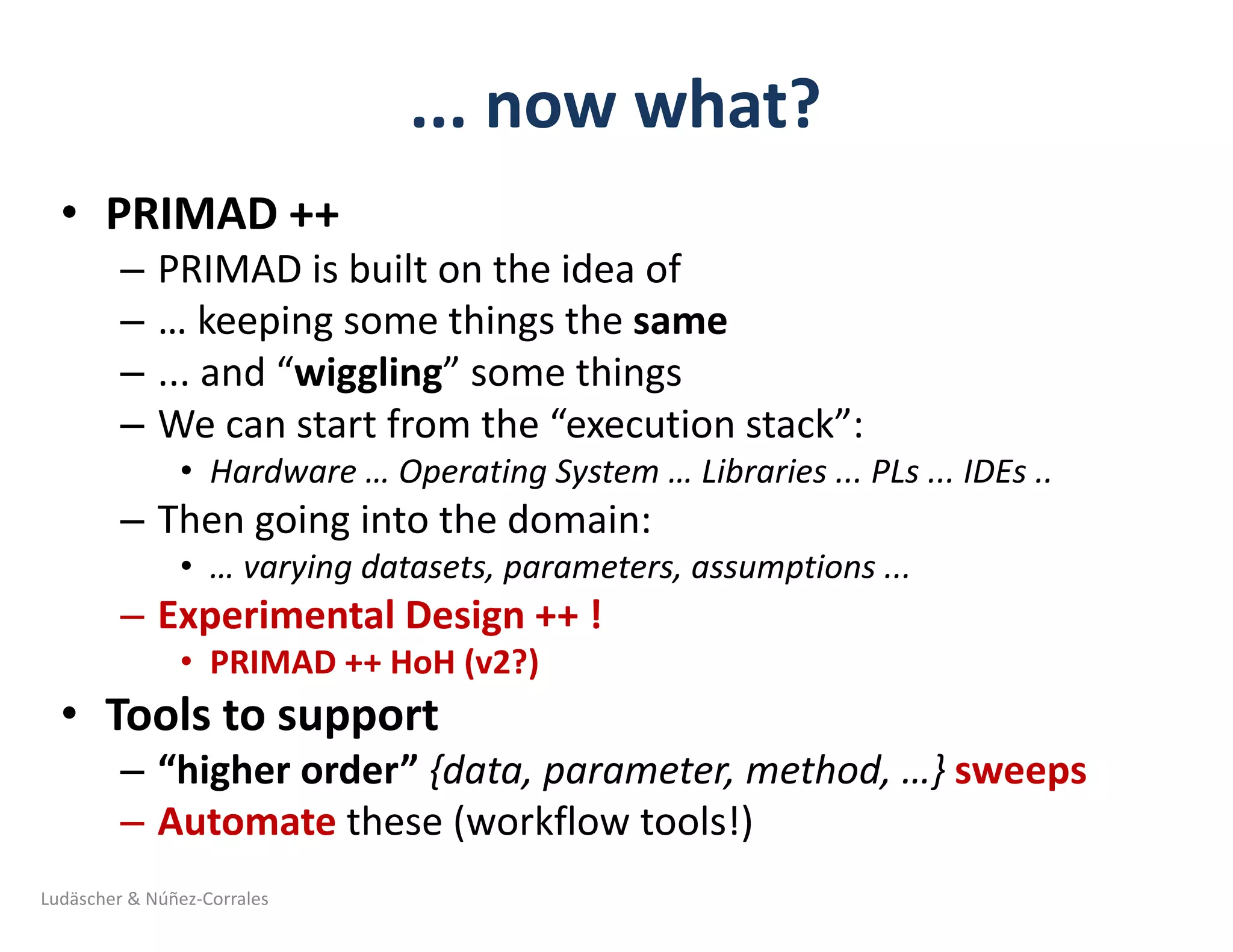

2) It discusses reproducibility challenges in science and proposes provenance and conceptual tools such as PRIMAD to help address them.

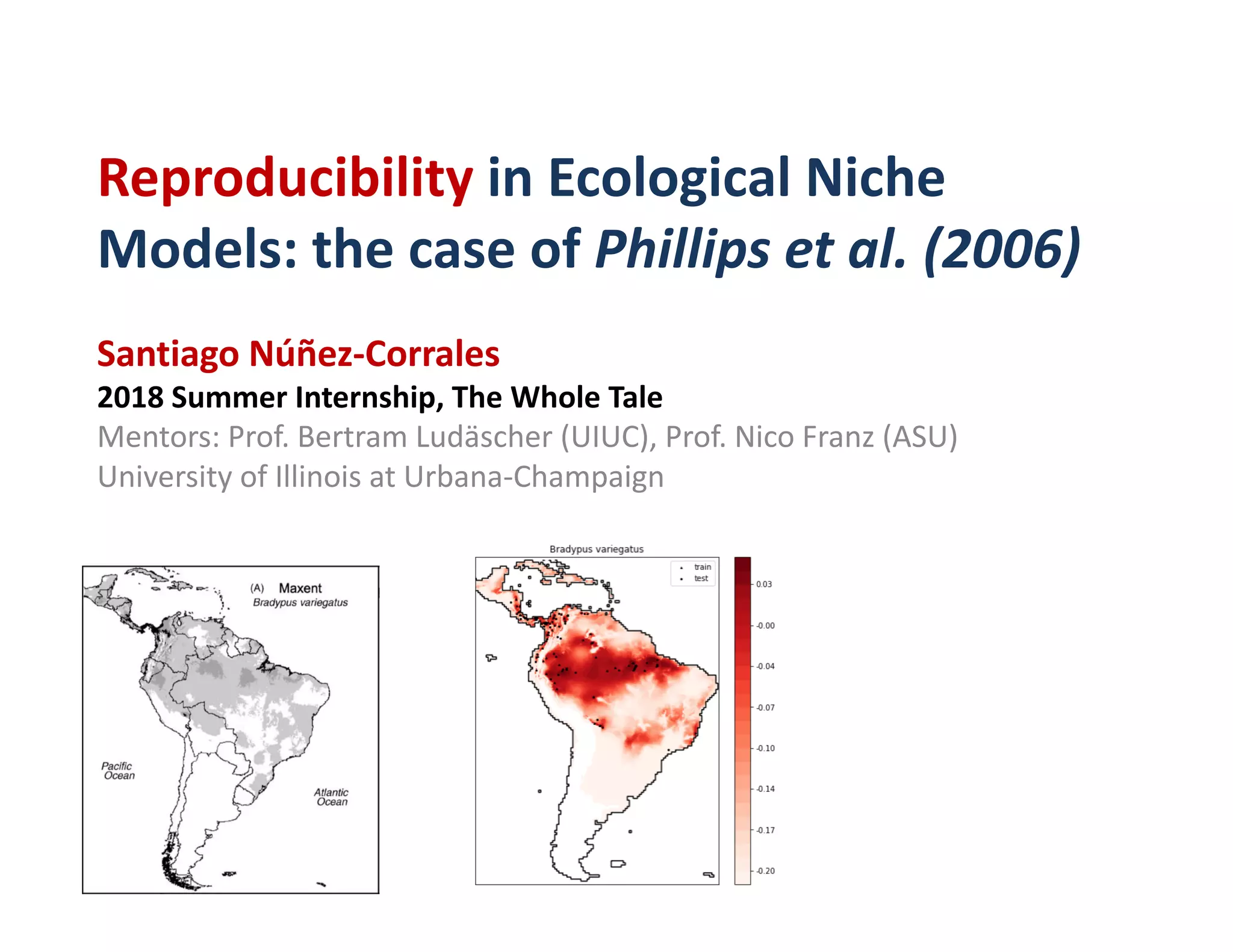

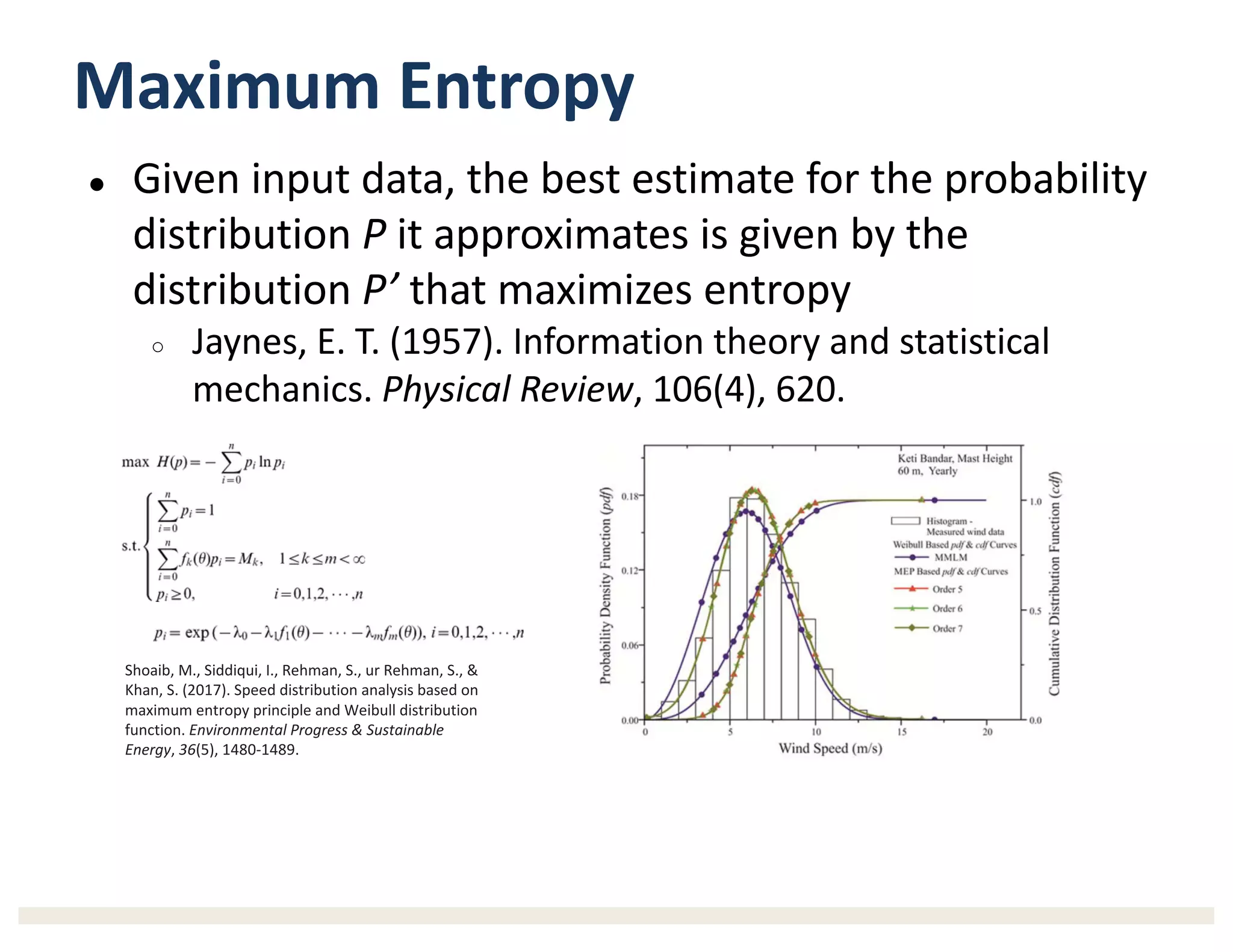

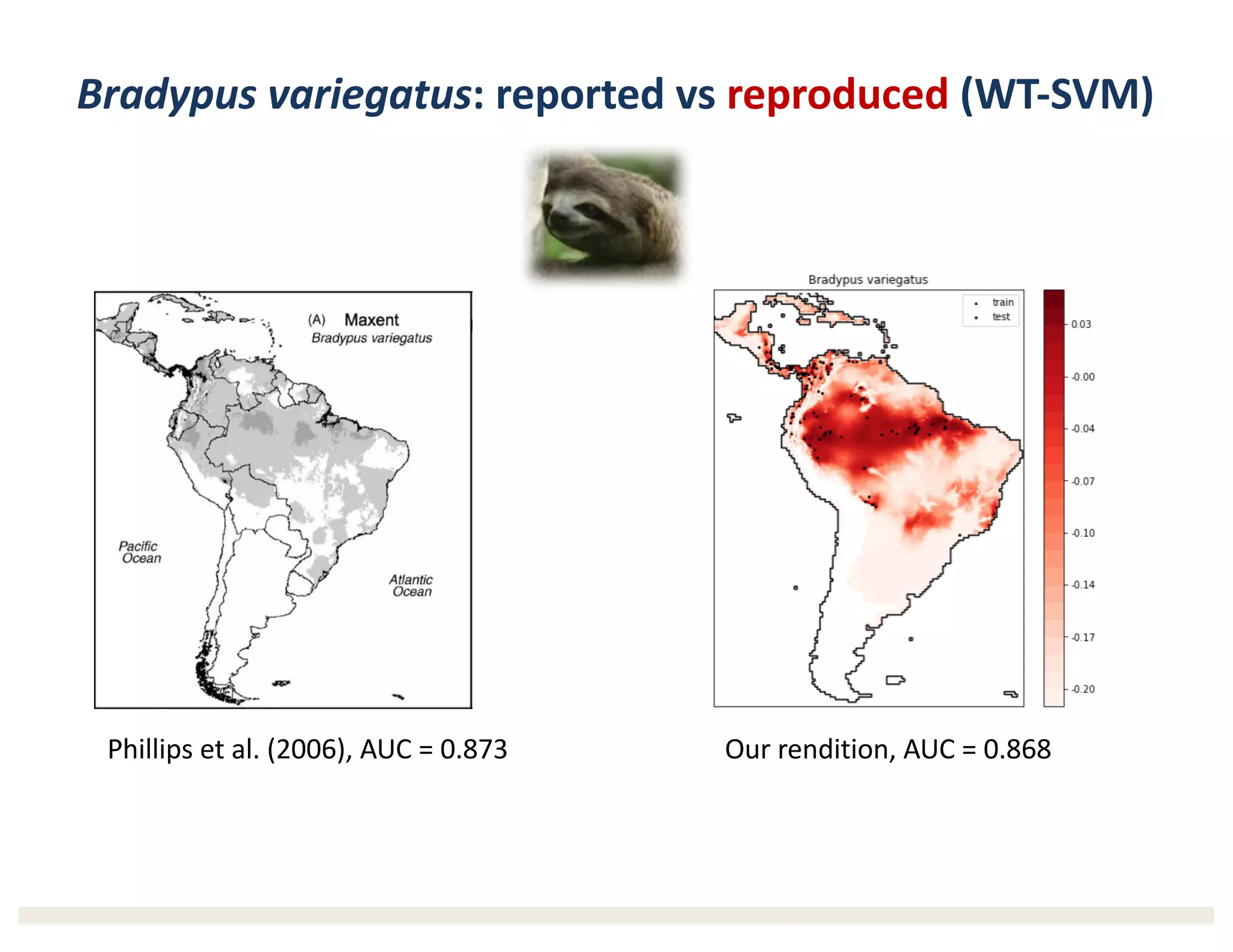

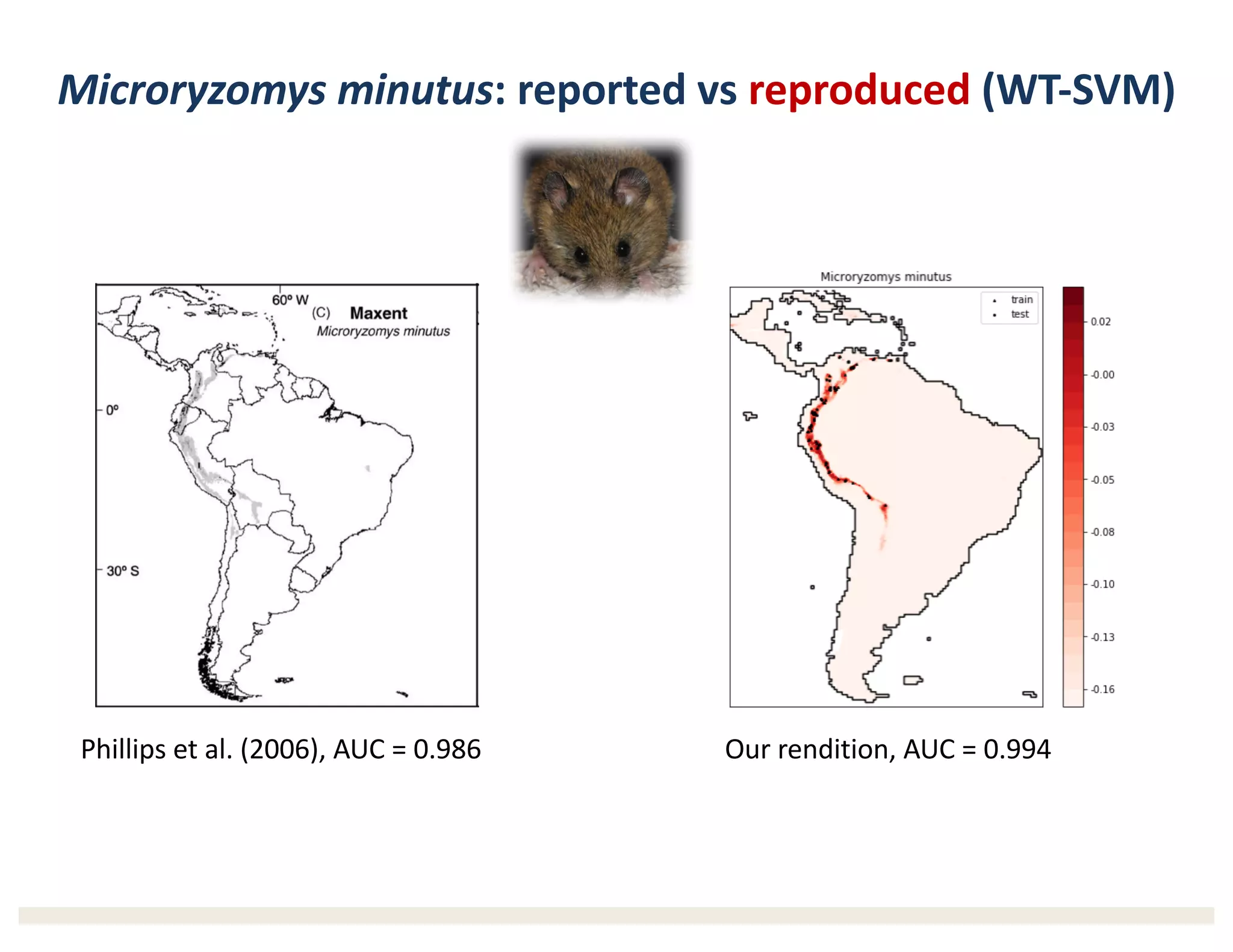

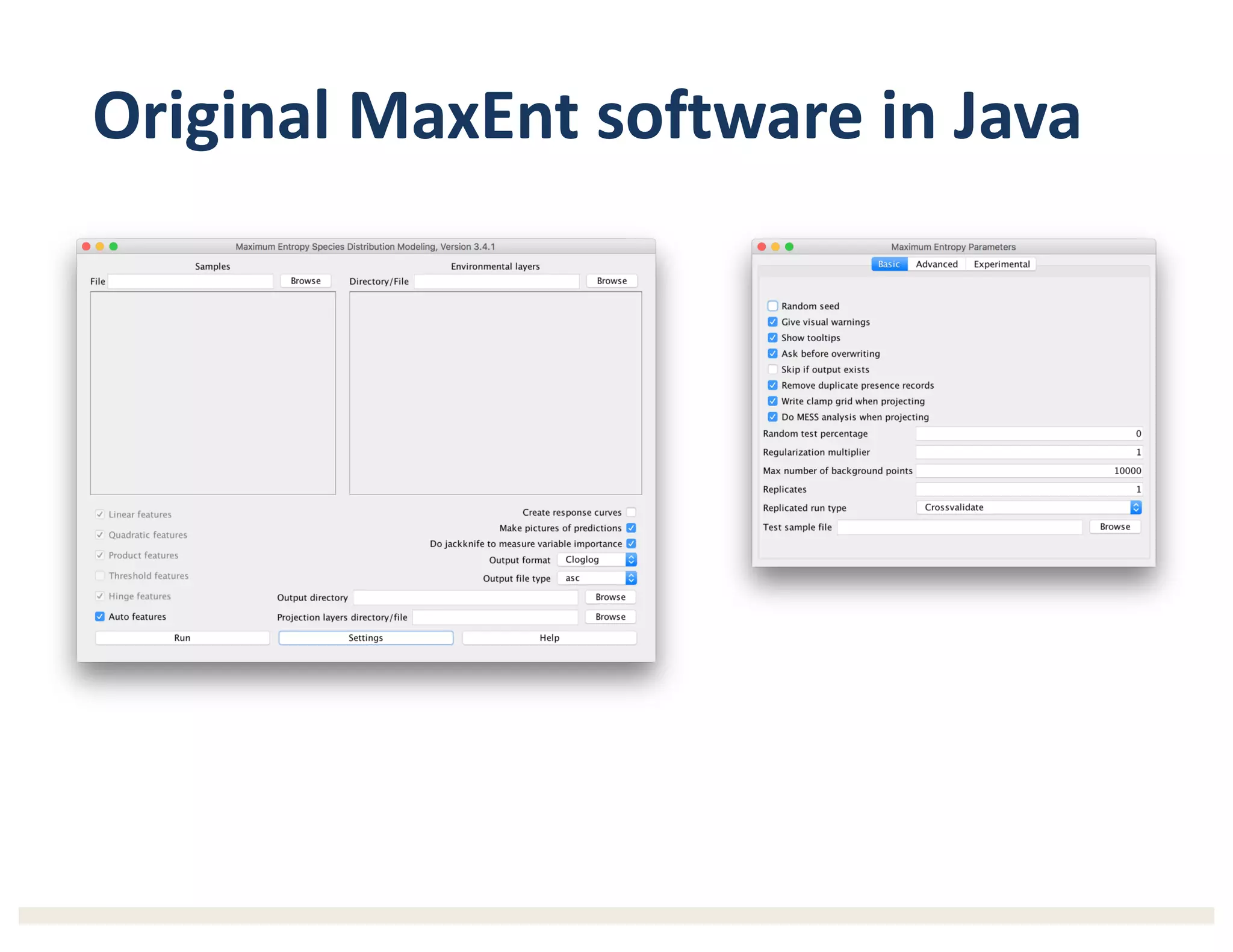

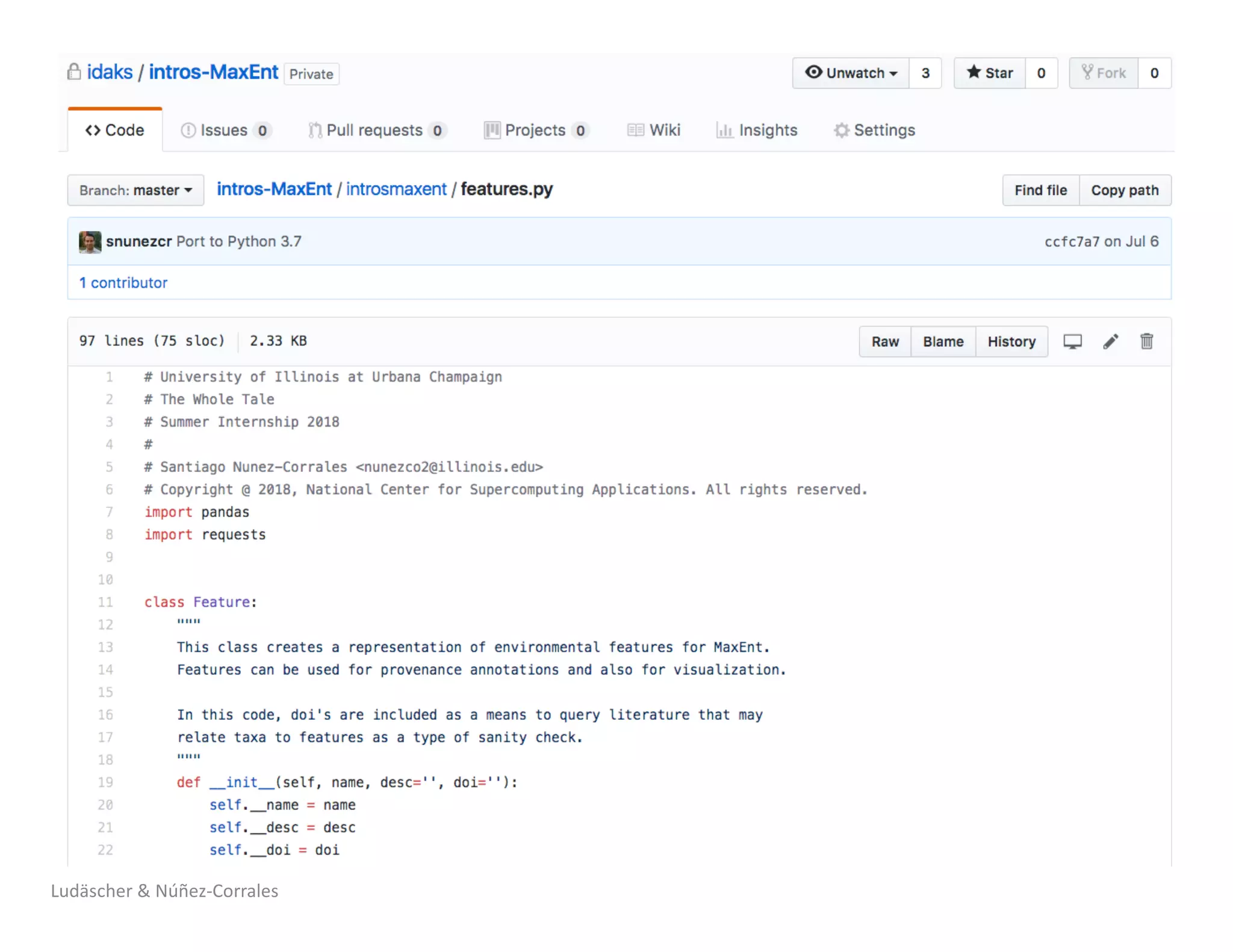

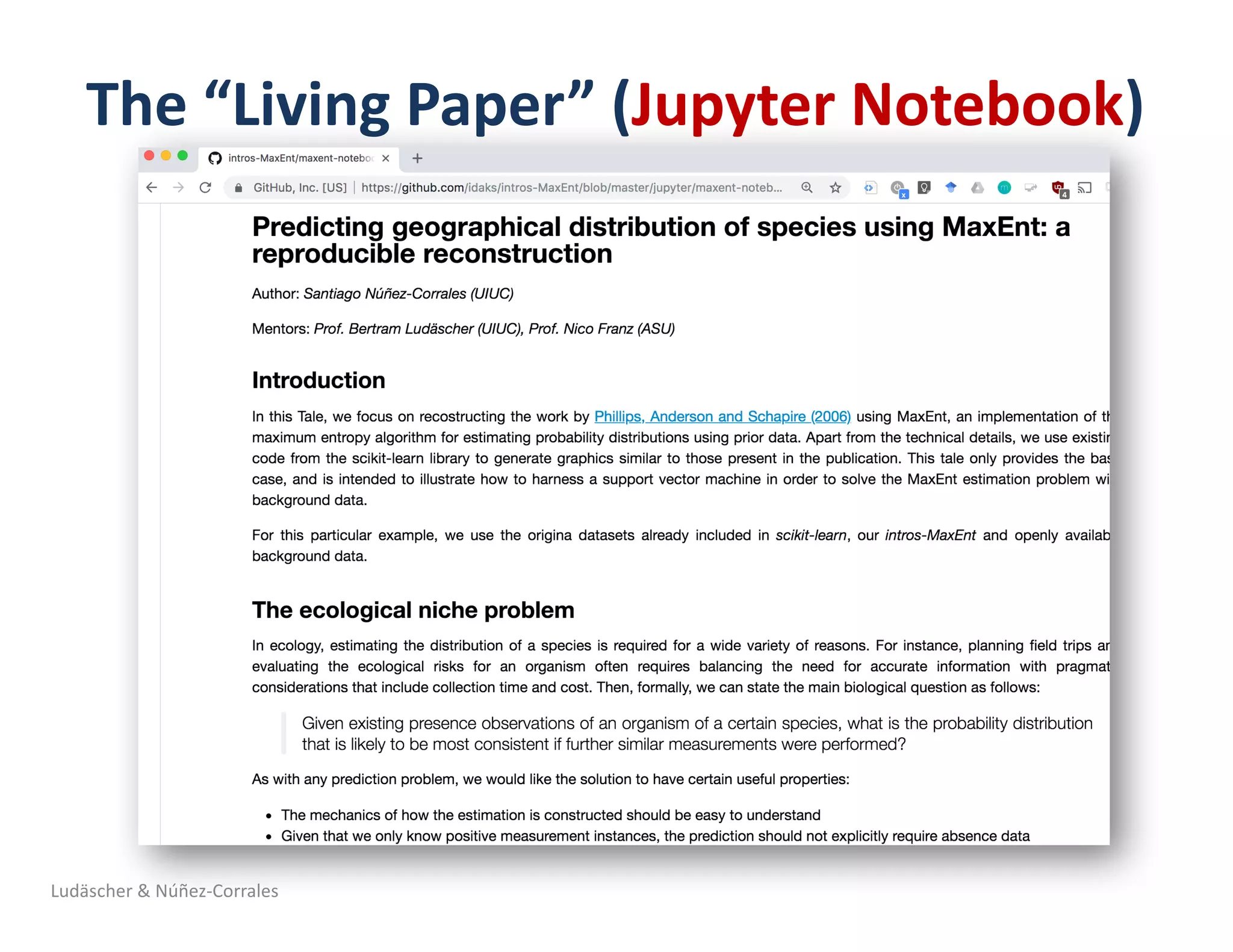

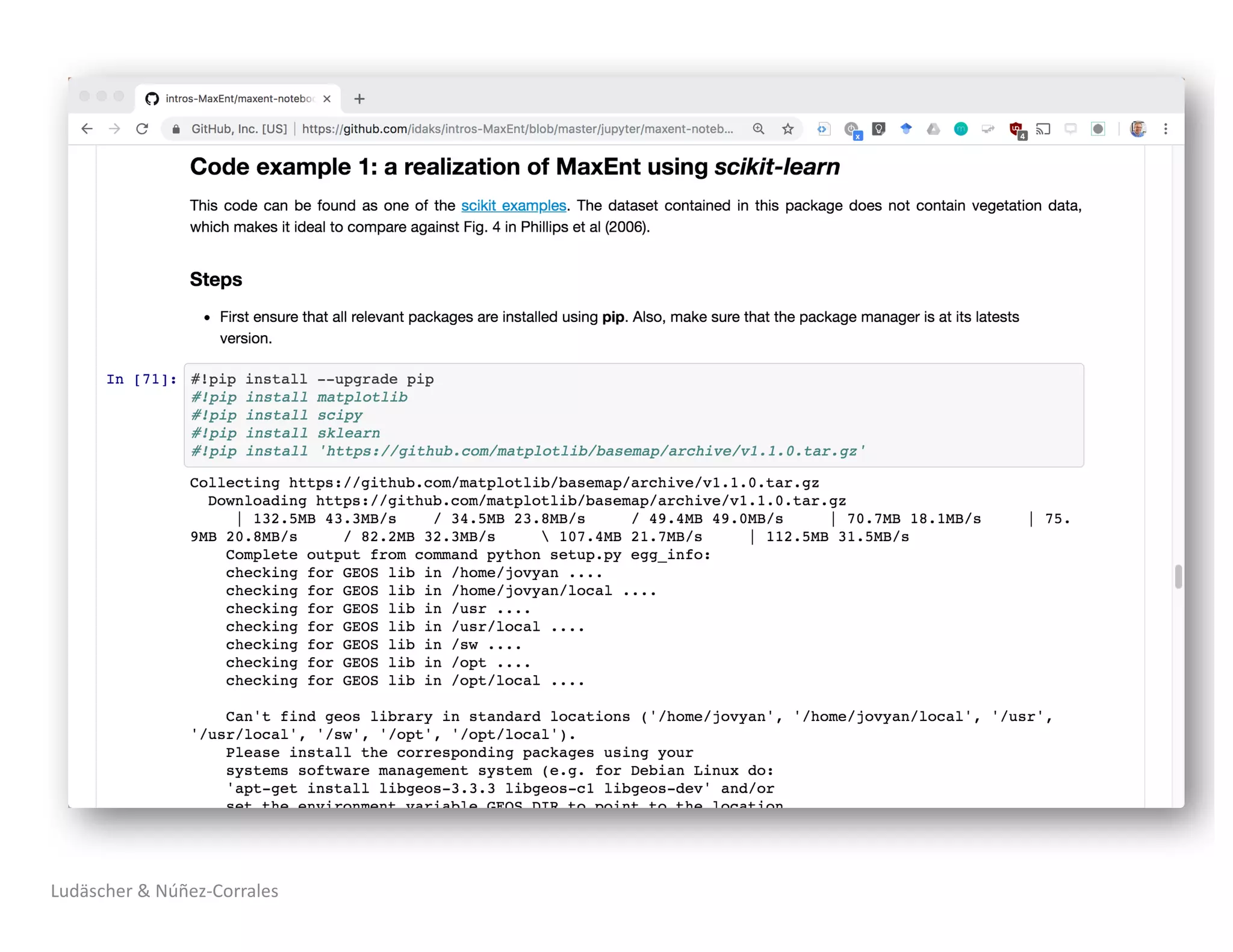

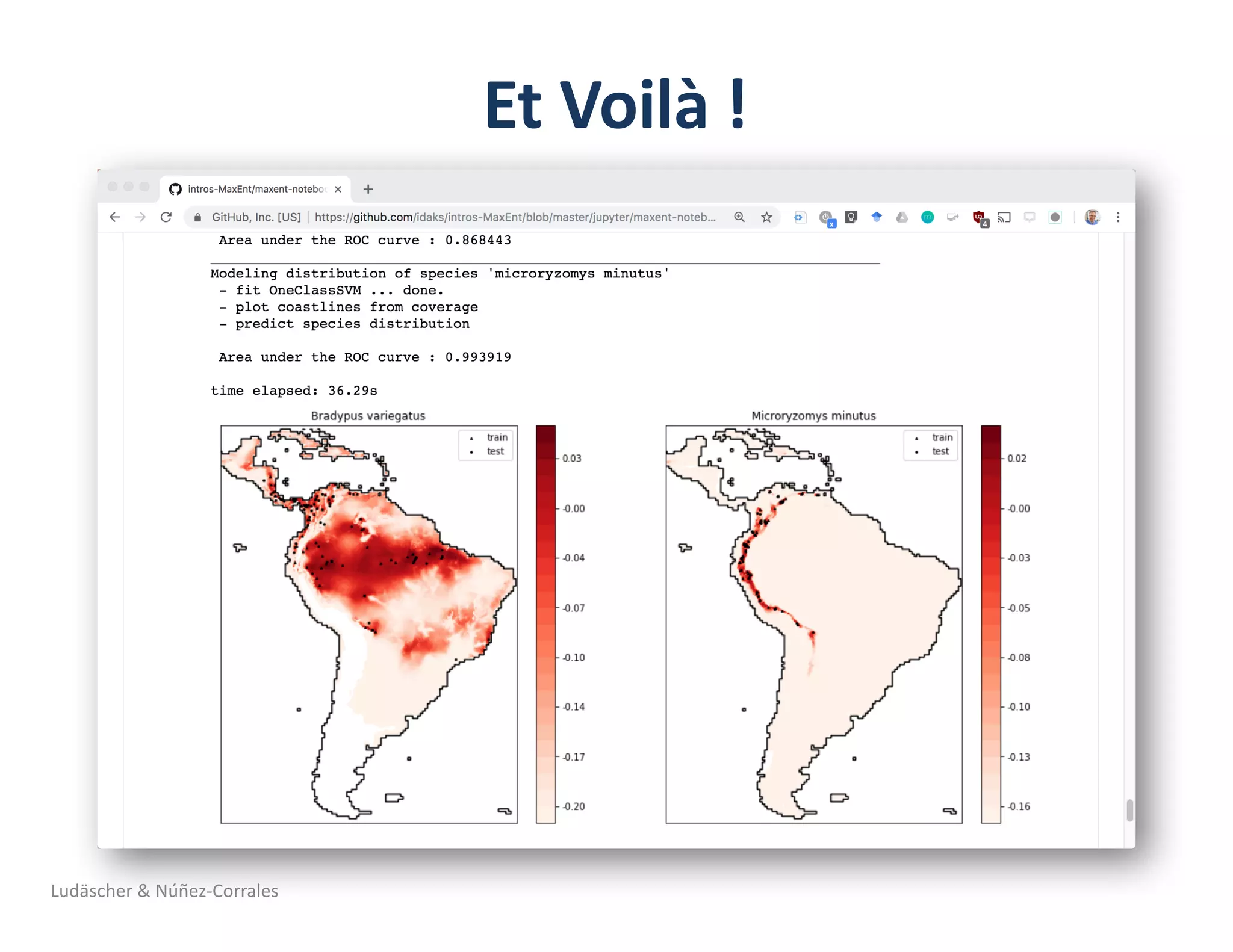

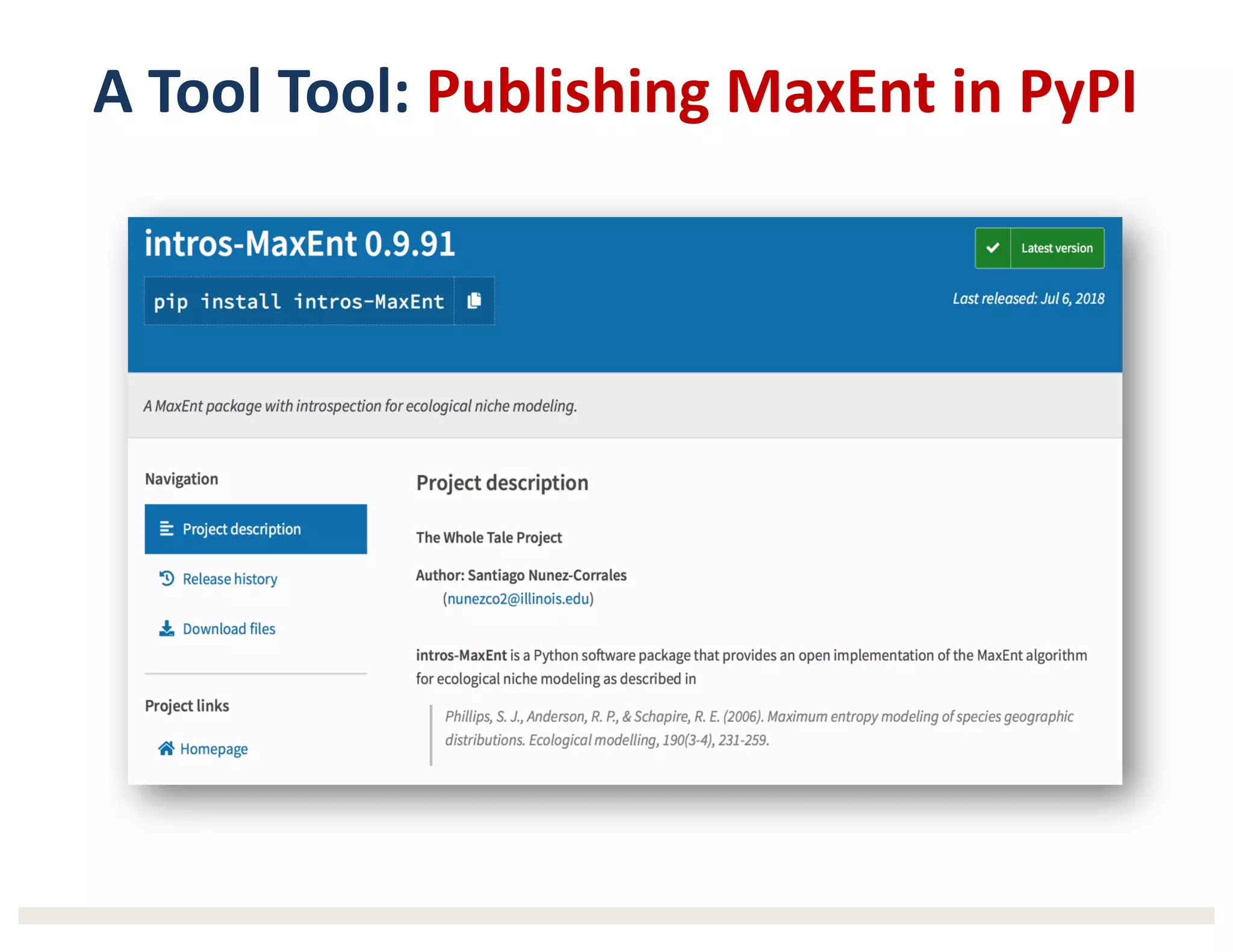

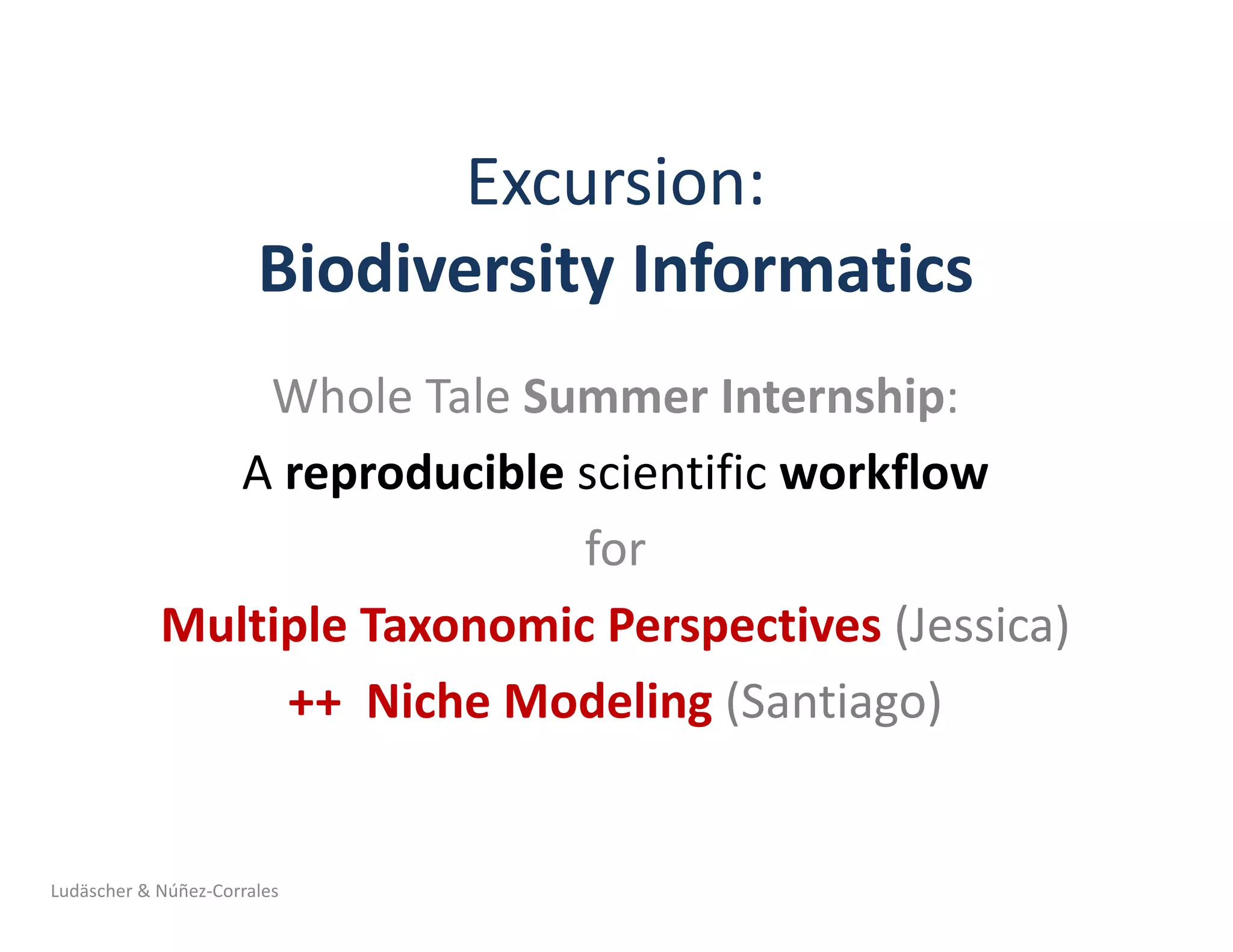

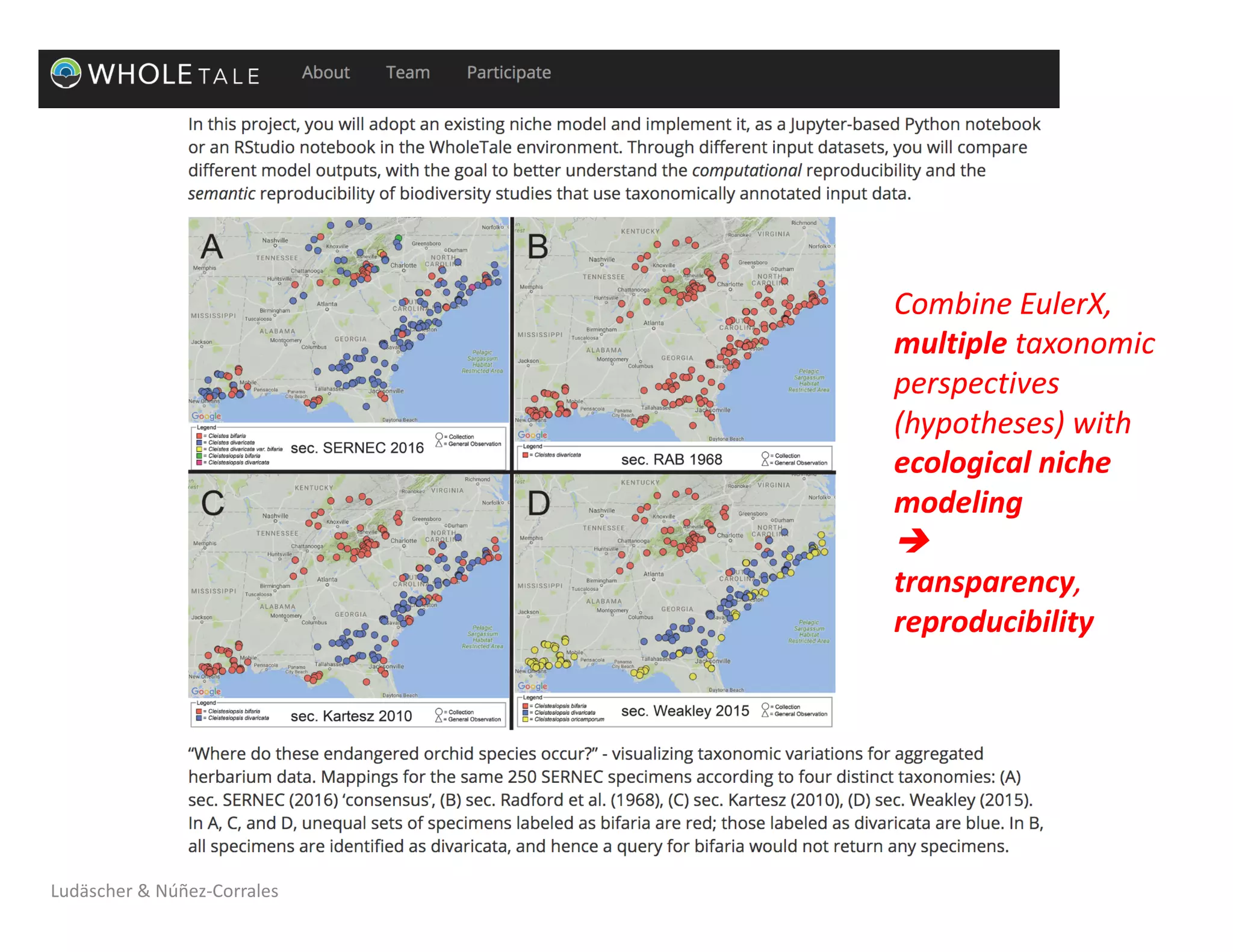

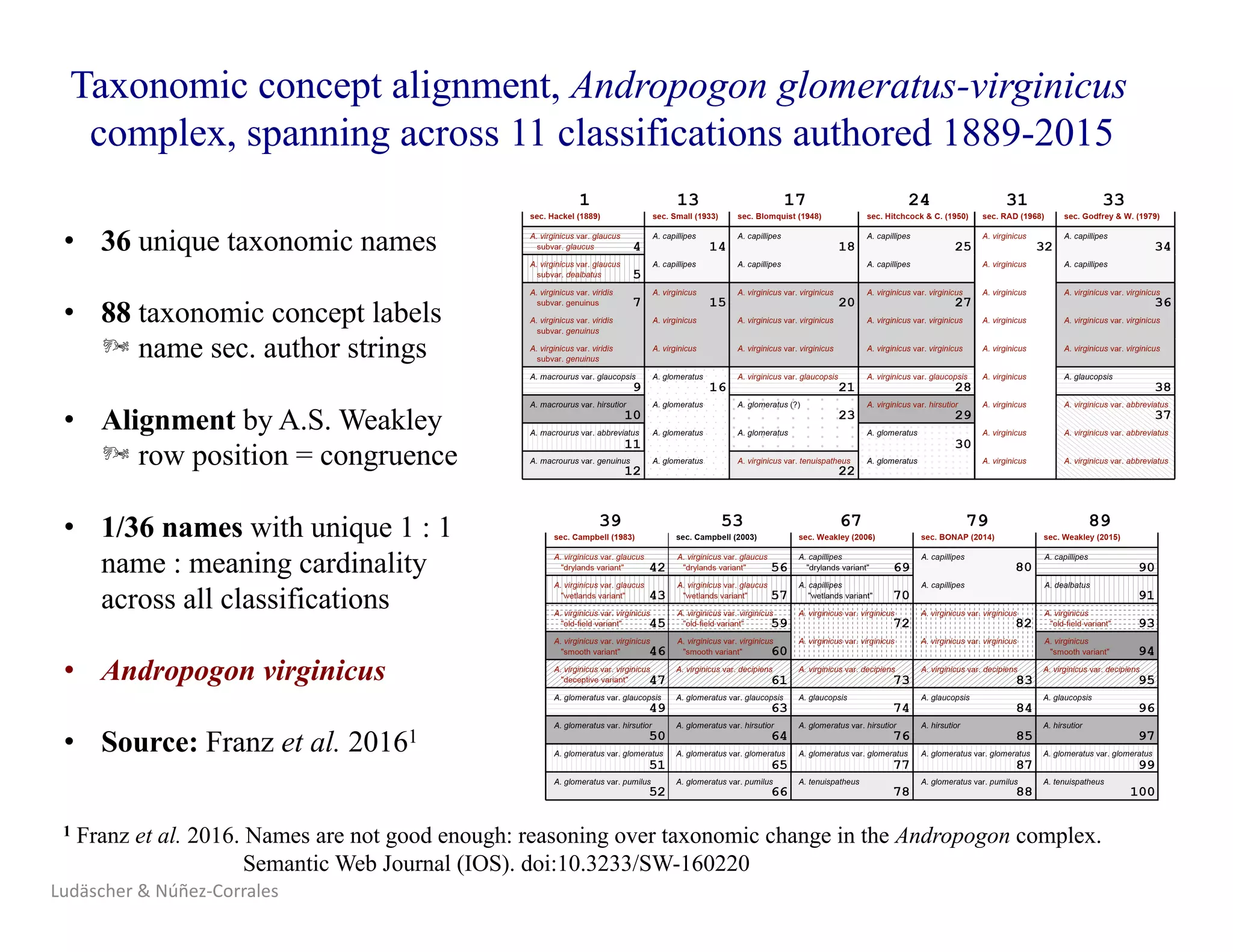

3) The document presents a case study in which an intern reproduced the results of a 2006 ecological niche modeling paper using the Whole Tale environment and MaxEnt software, demonstrating computational reproducibility.

![Reproduce,

Replicate,

Repeat …

Wait!

Mind your

vocabulary!

Ludäscher & Núñez-Corrales

Barba, Lorena A. 2018. “Terminologies for Reproducible Research.”

ArXiv:1802.03311 [Cs], February. http://arxiv.org/abs/1802.03311.](https://image.slidesharecdn.com/2018-10-hoh3-ludaescher-181012092954/75/Dissecting-Reproducibility-A-case-study-with-ecological-niche-models-in-the-Whole-Tale-environment-14-2048.jpg)

![Ludäscher & Núñez-Corrales

Barba, Lorena A. 2018. “Terminologies for Reproducible Research.”

ArXiv:1802.03311 [Cs], February. http://arxiv.org/abs/1802.03311.](https://image.slidesharecdn.com/2018-10-hoh3-ludaescher-181012092954/75/Dissecting-Reproducibility-A-case-study-with-ecological-niche-models-in-the-Whole-Tale-environment-15-2048.jpg)

![Ludäscher & Núñez-Corrales

Plesser, Hans E. 2018. “Reproducibility vs.

Replicability: A Brief History of a Confused

Terminology.” Frontiers in Neuroinformatics 11.

https://doi.org/10.3389/fninf.2017.00076.

Barba, Lorena A. 2018. “Terminologies for

Reproducible Research.” ArXiv:1802.03311 [Cs],

February. http://arxiv.org/abs/1802.03311.](https://image.slidesharecdn.com/2018-10-hoh3-ludaescher-181012092954/75/Dissecting-Reproducibility-A-case-study-with-ecological-niche-models-in-the-Whole-Tale-environment-16-2048.jpg)

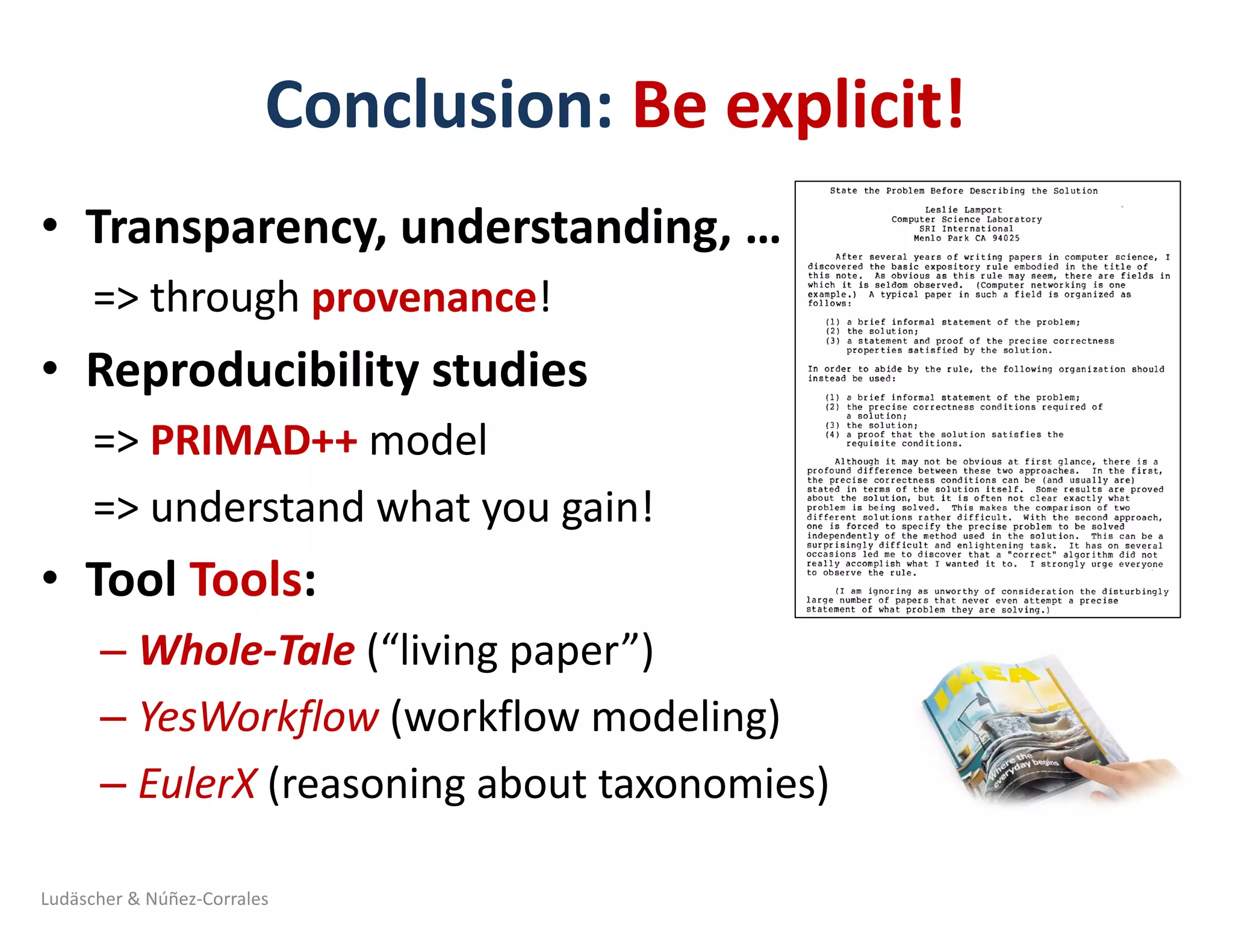

![Half-Smokes in DC: Typical for the Northeast?

… or the South !? (A tale of two taxonomies: NDC vs CEN)

“…in the face of incompatible information or data structures among users or among those specifying

the system, attempts to create unitary knowledge categories are futile. Rather, parallel or multiple

representational forms are required” [Bowker & Star, 2000, p.159]

NDC regions: West, Southwest, Southeast, Midwest, Northeast

CEN regions: West, South, Midwest, Northeast

National Diversity Council map (NDC); US Census Bureau map (CEN)

Source: Yi-Yun (Jessica) Cheng (PhD student, iSchool @ Illinois)

Ludäscher & Núñez-Corrales](https://image.slidesharecdn.com/2018-10-hoh3-ludaescher-181012092954/75/Dissecting-Reproducibility-A-case-study-with-ecological-niche-models-in-the-Whole-Tale-environment-45-2048.jpg)