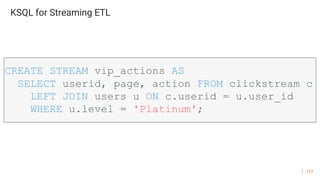

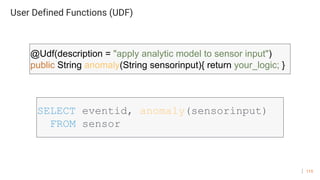

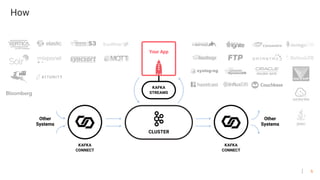

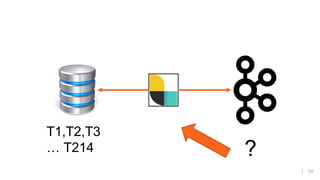

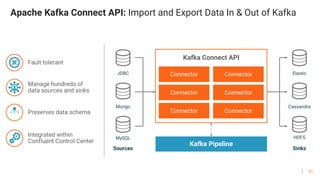

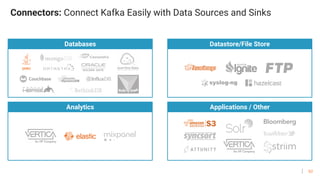

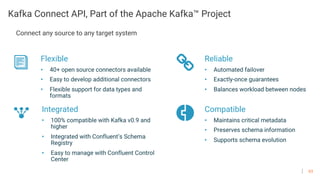

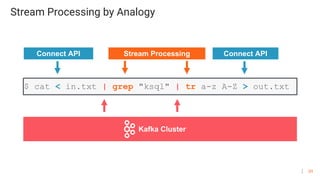

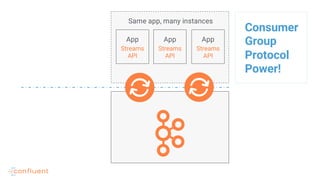

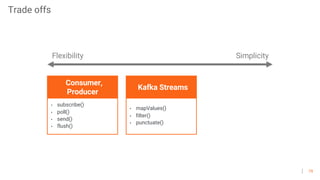

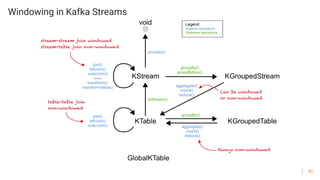

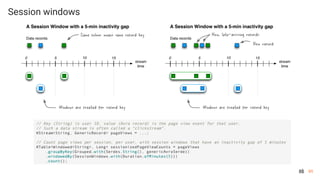

Kafka can be used to build real-time streaming applications that process large volumes of data. It provides a publish-subscribe messaging model based on streams of records. Kafka Connect links Kafka to other data systems and formats through reusable connectors. Kafka Streams is a library for building streaming applications that process data in Kafka with operators such as map, filter, and windowing.
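To make the operator names concrete, here is a minimal sketch in plain Python of a filter, a map, and a tumbling-window count over a small in-memory list. It is not the Kafka Streams API (which is a Java library); the record layout, the 10-second window size, and the helper `window_start` are all assumptions made for illustration.

```python
from collections import Counter

# Hypothetical records: (timestamp_seconds, key, value). Not real Kafka data.
records = [
    (0, "clicks", 3),
    (4, "views", 10),
    (7, "clicks", 1),
    (12, "clicks", 5),
    (15, "views", 0),
]

WINDOW_SECONDS = 10  # assumed tumbling-window size


def window_start(ts):
    """Return the start of the fixed-size window that contains ts."""
    return ts - ts % WINDOW_SECONDS


# filter: drop records whose value is zero
filtered = (r for r in records if r[2] > 0)

# map: re-key each record by (window start, original key)
mapped = ((window_start(ts), key) for ts, key, _ in filtered)

# windowed aggregation: count records per (window, key)
counts = Counter(mapped)

for (start, key), n in sorted(counts.items()):
    print(f"window [{start}, {start + WINDOW_SECONDS}) key={key} count={n}")
```

A real stream processor applies the same steps continuously to an unbounded stream and has to deal with late or out-of-order records, which this toy version ignores.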

![94

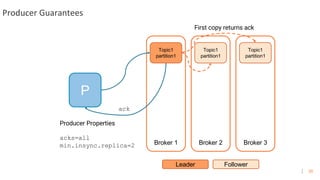

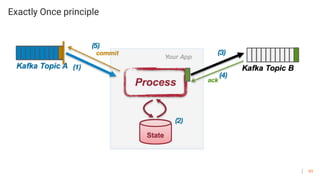

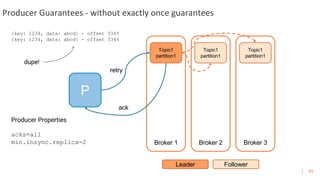

Producer Guarantees - with exactly once guarantees

P

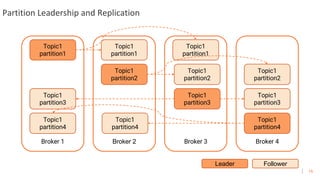

Broker 1 Broker 2 Broker 3

Topic1

partition1

Leader Follower

Topic1

partition1

Topic1

partition1

Producer Properties

enable.idempotence=true

max.inflight.requests.per.connection=1

acks = “all”

retries > 0 (preferably MAX_INT)

(pid, seq) [payload]

(100, 1) {key: 1234, data: abcd} - offset 3345

(100, 1) {key: 1234, data: abcd} - rejected, ack re-sent

(100, 2) {key: 5678, data: efgh} - offset 3346

retry

ack

no dupe!](https://image.slidesharecdn.com/devoxxkafkauniversity-190416195241/85/Devoxx-university-Kafka-de-haut-en-bas-94-320.jpg)
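The slide's producer properties and its (pid, seq) sequence can be sketched as follows. The dictionary uses the configuration key names of the Apache Kafka Java producer. The `ToyPartitionLeader` class is a hypothetical stand-in written for this example, not real broker code: it only shows how remembering the (producer id, sequence number) pairs already written lets a leader acknowledge a retry without appending the record twice.

```python
from dataclasses import dataclass, field

# Producer settings from the slide, as plain strings.
producer_config = {
    "enable.idempotence": "true",
    "max.in.flight.requests.per.connection": "1",
    "acks": "all",
    "retries": str(2**31 - 1),  # the slide's MAX_INT (Java Integer.MAX_VALUE)
}


@dataclass
class ToyPartitionLeader:
    """Hypothetical, simplified model of a partition leader's dedup logic."""
    next_offset: int = 0
    log: list = field(default_factory=list)    # (offset, payload) pairs
    acked: dict = field(default_factory=dict)  # (pid, seq) -> offset

    def append(self, pid, seq, payload):
        key = (pid, seq)
        if key in self.acked:
            # Retry of a record that was already written: do not append
            # again, just acknowledge with the original offset.
            return ("duplicate", self.acked[key])
        offset = self.next_offset
        self.log.append((offset, payload))
        self.acked[key] = offset
        self.next_offset += 1
        return ("appended", offset)


# Replay the sequence shown on the slide, starting at offset 3345.
leader = ToyPartitionLeader(next_offset=3345)
first = leader.append(100, 1, {"key": 1234, "data": "abcd"})
retry = leader.append(100, 1, {"key": 1234, "data": "abcd"})  # ack was lost
second = leader.append(100, 2, {"key": 5678, "data": "efgh"})

print(first, retry, second, len(leader.log))
```

This simplification keeps every (pid, seq) pair forever and does not check for gaps in the sequence; it is only meant to show why the retried record ends up in the log once.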