DataModeling.pptx

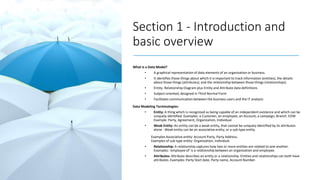

- 1. Section 1 – Introduction and basic overview
  - What is a Data Model?
    - A graphical representation of the data elements of an organization or business.
    - It identifies the things about which it is important to track information (entities), the details about those things (attributes), and how those things are related to one another (relationships).
    - An Entity-Relationship Diagram plus entity and attribute data definitions.
    - Subject-oriented and designed in Third Normal Form.
    - Facilitates communication between business users and IT analysts.
  - Data Modeling Terminology:
    - Entity: A thing that is capable of independent existence and can be uniquely identified. Examples: a Customer, an Employee, an Account, a Campaign, a Branch. EDW examples: Party, Agreement, Organization, Individual.
    - Weak Entity: An entity that cannot be uniquely identified by its own attributes alone. A weak entity can be an associative entity or a subtype entity. Examples of associative entities: Account Party, Party Address. Examples of subtype entities: Organization, Individual.
    - Relationship: Captures how two or more entities are related to one another. Example: ‘employee of’ is a relationship between an Organization and an Employee.
    - Attribute: Describes an entity or a relationship; both entities and relationships can have attributes. Examples: Party Start Date, Party Name, Account Number.
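The terminology above can be illustrated with a minimal Python sketch. The entity names (Party, Account, Account Party) come from the examples above; the class names, key fields (`party_id`, `account_num`) and the `role_cd` relationship attribute are hypothetical and do not reflect the actual FSLDM table definitions.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class Party:
    """Entity: uniquely identified by its own key attribute."""
    party_id: int          # hypothetical key attribute
    party_name: str        # attribute
    party_start_dt: date   # attribute


@dataclass(frozen=True)
class Account:
    """Entity: uniquely identified by its account number."""
    account_num: str


@dataclass(frozen=True)
class AccountParty:
    """Weak (associative) entity: identified only by the keys of the Account
    and Party it connects, plus the role the party plays on the account."""
    account_num: str       # borrowed from Account
    party_id: int          # borrowed from Party
    role_cd: str           # attribute describing the relationship itself


@dataclass
class Model:
    parties: dict[int, Party] = field(default_factory=dict)
    accounts: dict[str, Account] = field(default_factory=dict)
    account_parties: set[AccountParty] = field(default_factory=set)

    def link(self, account_num: str, party_id: int, role_cd: str) -> AccountParty:
        # An associative row cannot exist without both parent entities.
        if account_num not in self.accounts or party_id not in self.parties:
            raise KeyError("both the Account and the Party must exist first")
        row = AccountParty(account_num, party_id, role_cd)
        self.account_parties.add(row)
        return row

    def parties_of(self, account_num: str) -> list[int]:
        # Traverse the relationship from Account to Party.
        return sorted({ap.party_id for ap in self.account_parties
                       if ap.account_num == account_num})


model = Model()
model.parties[1] = Party(1, "Alice", date(2020, 1, 1))
model.accounts["A-100"] = Account("A-100")
model.link("A-100", 1, "OWNER")
print(model.parties_of("A-100"))  # [1]
```

The associative entity carries no key of its own, which is exactly what makes it "weak": remove either parent and the row has no identity.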

- 2. Section 2a – Data Modeling & Data Mapping guidelines: Upstream
  - EDW Upstream Data Model: In the EDW, Upstream is the combination of layers (processes, databases, jobs, and decoupling views) through which extracted source system files are loaded from the Extraction Layer into the Staging (or Loading) Layer and then transformed via the Transform Layer into the Core EDW Layer.
  - Upstream Model standards:
    - Model and extend using the Teradata FSLDM as the base.
    - Tables must be in Third Normal Form, as per the Teradata FSLDM.
    - Jobs must follow the Primary Key defined by the OCBC Data Modeler.
  - Prerequisites:
    - The User Requirement or Functional Spec document, to determine the data elements to bring into the EDW.
    - The Early Data Inventory (EDI), to understand the source system that contains the required data elements. The EDI has to explain:
      - the business attributes,
      - the primary keys of each table,
      - the relationships between the tables,
      - whether the source system has files that relate to other systems.
    - Data Profiling documents, to understand the data demographics and integrity of the new data elements.
    - An understanding of the existing EDW model or the Teradata FSLDM.

- 3. Section 2a – Data Modeling & Data Mapping guidelines: Upstream (contd.)
  - Upstream Logical Data Modeling Process: Once the data elements to be brought into the EDW are finalized and the EDI for the corresponding source system is available, follow the process below to prepare the data model.
    1. Identify the data entities: Based on the EDI and data profiling, identify the data entity corresponding to each data element that has to be brought into the EDW, e.g. Customer, Account, Branch, Employee, Transaction, Campaign, Product, Collateral.
    2. Group the attributes of each entity that the EDW is interested in. Attributes describe data entities, e.g. Account (entity): Status, Account Number, Account Open Date.
    3. Refer to the FSLDM guidelines to map the identified entities to the OCBC FSLDM:
       - As per the FSLDM, Customer, Branch, and Employee are Party.
       - Transaction is an Event.
       - Collateral is a Party Asset.
    4. Extend the OCBC FSLDM model if a required entity doesn't fit the existing model, subject to the approval of the OCBC Data Modeler.
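Step 3 of this process can be sketched as a lookup from identified business entities to FSLDM subject areas. Only the mappings stated on this slide are included; the function name `map_to_fsldm` and its return shape are hypothetical. An entity missing from the lookup is not automatically an extension: it still has to be checked against the full FSLDM, and only if nothing fits does step 4 (extension with the OCBC Data Modeler's approval) apply.

```python
# Mappings stated in the guidelines; the real FSLDM has many more subject areas.
FSLDM_SUBJECT_AREA = {
    "customer": "Party",
    "branch": "Party",
    "employee": "Party",
    "transaction": "Event",
    "collateral": "Party Asset",
}


def map_to_fsldm(entities):
    """Split identified entities into known FSLDM mappings and unmapped ones."""
    mapped, unmapped = {}, []
    for entity in entities:
        area = FSLDM_SUBJECT_AREA.get(entity.strip().lower())
        if area is None:
            unmapped.append(entity)   # review against the full FSLDM, then step 4
        else:
            mapped[entity] = area
    return mapped, unmapped


mapped, unmapped = map_to_fsldm(["Customer", "Transaction", "Campaign"])
print(mapped)    # {'Customer': 'Party', 'Transaction': 'Event'}
print(unmapped)  # ['Campaign']
```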

- 4. Section 2a – Data Modeling & Data Mapping guidelines: Upstream (contd.)
  - Upstream Physical Data Model creation: Physical data models are derived from the logical model's entity definitions and relationships, and are constructed so that data is stored and managed in physical structures that operate effectively on the Teradata database platform. The PDM creation guidelines are:
    - Primary Index: Target tables should use the best candidate for the Primary Index (Unique or Non-Unique), taking into account the access path, data distribution, join criteria, etc. Note: the Primary Index and the Primary Key are different concepts. The Primary Key logically identifies a row, whereas the Primary Index determines how rows are distributed across AMPs and is the primary access path.
    - Partitioned Primary Index (PPI): PPI can be implemented to improve the performance of large tables. Refer to the Teradata technical documentation for PPI guidelines.
    - Default Values: Default values can be defined to populate an attribute when no value is passed to it.
    - ETL Control Framework Attributes: All EDW target tables (except b-key, b-map, and reference tables) have the following seven control framework attributes:
      1. Start_Dt
      2. End_Dt
      3. Data_Source_Cd
      4. Record_Deleted_Flag
      5. BusinessDate
      6. Ins_Txf_BatchID
      7. Upd_Txf_BatchID
    - Data Type Assignment: Data types should be assigned as part of physicalisation.
    - Null / Not Null Handling: Each attribute should be designated as NULL or NOT NULL.
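The control framework rule lends itself to an automated check on a physical model before DDL generation. This is a minimal sketch assuming the column list of a table is available as plain strings; the `table_kind` labels and the sample column names (`Party_Id`, `Party_Name`, `Cntry_Cd`) are hypothetical.

```python
# The seven control framework attributes listed in the guidelines.
CONTROL_COLUMNS = (
    "Start_Dt", "End_Dt", "Data_Source_Cd", "Record_Deleted_Flag",
    "BusinessDate", "Ins_Txf_BatchID", "Upd_Txf_BatchID",
)

# Table kinds exempt from the rule; these labels are hypothetical.
EXEMPT_KINDS = {"b-key", "b-map", "reference"}


def missing_control_columns(columns, table_kind="target"):
    """Return the control framework columns a table definition lacks, in order."""
    if table_kind in EXEMPT_KINDS:
        return []
    present = set(columns)
    return [col for col in CONTROL_COLUMNS if col not in present]


print(missing_control_columns(["Party_Id", "Party_Name", "Start_Dt", "End_Dt"]))
# ['Data_Source_Cd', 'Record_Deleted_Flag', 'BusinessDate', 'Ins_Txf_BatchID', 'Upd_Txf_BatchID']
print(missing_control_columns(["Cntry_Cd"], table_kind="reference"))  # []
```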

- 5. Section 2a – Data Modeling & Data Mapping guidelines: Upstream (contd.)
  - There are two Data Model deliverables in Upstream:
    - T - EDW Platform Upstream Data Architecture: The SDLC standard document for the data model. It describes all the business data entities and attributes and how the data elements are modeled in the OCBC EDW. It should also include all model design decisions and any deviations. The data architecture document should contain the logical and physical model diagrams with relationships, primary keys, primary indexes, and other physical design considerations.
    - T - EDW Platform Upstream Source Target Mapping: The data architecture document is the input for the source-to-target mapping document, which captures the detailed business rules describing how the source business attributes are transformed into EDW data elements. It is the reference document for subsequent ETL development.

- 6. Section 2b – Data Modeling & Data Mapping guidelines: Downstream
  - EDW Downstream Data Model: In the EDW, Downstream is the combination of layers (processes, databases, tables, and views) through which user requirements are modeled and met by applying business and technical transformation rules to data read via the customized OCBC FSLDM.
  - Downstream Model standards:
    - Model to support user requirements, and ensure the model is scalable.
    - Model so that it is easy to maintain and tuned for performance to meet the SLA.
    - Model with enterprise-level reporting (including geographies) in mind.
    - Model the various security attributes at the most granular level.
    - Tables must not be skewed.
    - Jobs must follow the Primary Key defined by the OCBC Data Modeler.
    - Target tables should use the best candidate for the Primary Index (Unique or Non-Unique), taking into account the access path, data distribution, join criteria, etc.
  - Prerequisite: The User Requirement or Functional Spec document, to determine the data elements to be reported to users. It:
    - should explain the business rules to derive the reporting data elements,
    - should specify the reporting level of each data element,
    - should give details of the reporting hierarchies.
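The "tables must not be skewed" rule can be checked early by estimating how a candidate Primary Index spreads rows across AMPs. This sketch uses `zlib.crc32` as a stand-in for Teradata's row-hash function (the real hash is different, so only relative comparisons between candidate columns are meaningful) and the commonly used skew factor, (1 − average/maximum rows per AMP) × 100. The AMP count and sample values are hypothetical.

```python
import zlib
from collections import Counter


def rows_per_amp(pi_values, amp_count):
    """Assign each row to an AMP by hashing its Primary Index value.

    zlib.crc32 is only a stand-in; it is NOT Teradata's row-hash function.
    """
    counts = Counter(zlib.crc32(str(v).encode()) % amp_count for v in pi_values)
    return [counts.get(amp, 0) for amp in range(amp_count)]


def skew_factor(per_amp_rows):
    """Skew factor in percent: 0 is perfectly even, higher means more skew."""
    peak = max(per_amp_rows)
    if peak == 0:
        return 0.0
    avg = sum(per_amp_rows) / len(per_amp_rows)
    return (1 - avg / peak) * 100


# A low-cardinality column (e.g. a status code) makes a poor Primary Index:
# every row hashes to the same AMP.
status_skew = skew_factor(rows_per_amp(["ACTIVE"] * 1000, amp_count=4))
# A high-cardinality key such as an account number spreads rows far more evenly.
account_skew = skew_factor(rows_per_amp(range(1000), amp_count=4))
print(status_skew)  # 75.0
print(round(account_skew, 1))
```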

- 7. Section 2b – Data Modeling & Data Mapping guidelines: Downstream (contd.)
  - There are two Data Model deliverables in Downstream:
    - T - EDW Platform Downstream Data Architecture: The SDLC standard document for the data model. It describes all the business data entities and attributes and how the data elements are modeled in the OCBC EDW downstream data mart to support the user reporting requirements. It should also include all model design decisions and any deviations. The data architecture document should contain the logical and physical model diagrams with relationships, primary keys, primary indexes, and other physical design considerations.
    - T - EDW Platform Downstream Source Target Mapping: The data architecture document is the input for the source-to-target mapping document, which captures the detailed business rules describing how the source business attributes are transformed into EDW data elements. It is the reference document for subsequent ETL development.

- 8. Section 3a – Naming standards and conventions (contd.)
  - Column Naming Convention: Column names follow the rules below.
    1. Keep the column name the same as the parent column in Core EDW.
    2. Remove the vowels (a, e, i, o, u), but do not remove a vowel that is the first letter of a word, for example the "A" in Amount. If the column name is still longer than 30 characters, contact the architecture or the standards and guidelines team.
    3. Keep the column name in mixed case (first letter of each word capitalized), for example Data_Source_Cd instead of DATA_SOURCE_CD or Data_SOURCE_Cd.
    4. Bring the control columns as-is from Core EDW; the above rules do not apply to them.
  - Example: the measure Count of Transaction is not part of Core EDW, so rule 1 cannot be applied.
    - Column name: Count_Of_Transaction / Transaction_Count
    - After removing vowels (rule 2): Cnt_Of_Trnsctn / Trnsctn_Cnt
    - After applying mixed case (rule 3): Cnt_Of_Trnsctn / Trnsctn_Cnt
    - Control column rule (rule 4): not applicable, because this is not a control column. A control column such as Data_Source_Cd is brought as-is.
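The naming rules can be sketched as a small function, which is handy for checking a whole model at once. This assumes words are separated by underscores and that only a, e, i, o, u count as vowels, as the rule states. The `core_edw_columns` parameter is a hypothetical way to supply the parent Core EDW names for rule 1, and raising `ValueError` for names over 30 characters stands in for "contact the architecture team".

```python
VOWELS = set("aeiouAEIOU")

# Control framework columns are brought over unchanged from Core EDW (rule 4).
CONTROL_COLUMNS = {
    "Start_Dt", "End_Dt", "Data_Source_Cd", "Record_Deleted_Flag",
    "BusinessDate", "Ins_Txf_BatchID", "Upd_Txf_BatchID",
}
MAX_COLUMN_LENGTH = 30


def abbreviate_word(word):
    """Drop every vowel except a leading one, then apply mixed case."""
    if not word:
        return word
    kept = word[0] + "".join(ch for ch in word[1:] if ch not in VOWELS)
    return kept[0].upper() + kept[1:].lower()


def column_name(name, core_edw_columns=frozenset()):
    """Apply the column naming rules to a proposed downstream column name."""
    # Rules 1 and 4: Core EDW columns and control columns keep their names.
    if name in core_edw_columns or name in CONTROL_COLUMNS:
        return name
    # Rules 2 and 3: remove non-leading vowels word by word, in mixed case.
    result = "_".join(abbreviate_word(w) for w in name.split("_"))
    if len(result) > MAX_COLUMN_LENGTH:
        raise ValueError(f"{result!r} exceeds {MAX_COLUMN_LENGTH} characters; "
                         "escalate to the architecture / standards team")
    return result


print(column_name("Count_Of_Transaction"))  # Cnt_Of_Trnsctn
print(column_name("Transaction_Count"))     # Trnsctn_Cnt
print(column_name("Data_Source_Cd"))        # Data_Source_Cd
```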

- 9. Section 4b – Best Practices (contd.)
  - Data Model Creation: The key steps in data modeling are:
    - Identify entities
    - Identify attributes of entities
    - Identify relationships
    - Apply naming conventions
    - Assign keys and indexes
    - Normalize to reduce data redundancy
    - Denormalize to improve performance
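The last two steps pull in opposite directions, and a small sketch makes the trade-off concrete. It uses Python dicts and tuples as stand-ins for tables, with hypothetical sample data: normalizing stores each party name once (so a conflicting duplicate is detected instead of silently kept), while denormalizing joins the pieces back into wide rows, as a reporting data mart might.

```python
# Flat rows as they might arrive from a source extract (hypothetical data):
# the party name is repeated on every account the party holds.
flat_rows = [
    {"account_num": "A-100", "party_id": 1, "party_name": "Alice", "role_cd": "OWNER"},
    {"account_num": "A-200", "party_id": 1, "party_name": "Alice", "role_cd": "OWNER"},
    {"account_num": "A-200", "party_id": 2, "party_name": "Bob", "role_cd": "JOINT"},
]


def normalize(rows):
    """Split flat rows into Party and Account Party so each fact is stored once."""
    party = {}           # party_id -> party_name
    account_party = []   # (account_num, party_id, role_cd)
    for r in rows:
        name = party.setdefault(r["party_id"], r["party_name"])
        if name != r["party_name"]:
            # Redundant copies that disagree: the anomaly normalization prevents.
            raise ValueError(f"conflicting names for party {r['party_id']}")
        account_party.append((r["account_num"], r["party_id"], r["role_cd"]))
    return party, account_party


def denormalize(party, account_party):
    """Join the tables back into wide rows, trading redundancy for fewer joins."""
    return [
        {"account_num": acct, "party_id": pid, "party_name": party[pid], "role_cd": role}
        for acct, pid, role in account_party
    ]


party, account_party = normalize(flat_rows)
print(party)                                           # {1: 'Alice', 2: 'Bob'}
print(denormalize(party, account_party) == flat_rows)  # True
```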

- 10. Section 5 – Check list / Self Review Process
  - Data Mapping:
    - Start the self-review after completing the data mapping document. Every column in the document should provide useful, meaningful information, and the transformation rules should contain the information the OCBC reviewer expects.
    - Once the self-review is done, have an internal review by the Data Mapper before proceeding to the OCBC BADM team review, to avoid rework and escalations.
  - Data Modeling:
    - Check closely that all names follow the correct naming conventions.
    - Create domains to provide the flexibility of changing a data type.
    - Create the indexes as per the OCBC standards.
    - Assign data types to all columns.
    - Provide table and column definitions for all tables.
    - Assign NULL / NOT NULL to all columns.
    - Create default values, if any.
    - Attach the abbreviation file listing all the meaningful abbreviations used in table and column names.
    - Generate the DDLs from Erwin, add the datamart database name, and send them to the OCBC data modeler for review. Once he/she approves, he/she will ask the DBA to deploy them in the respective environment.
    - Make the data model presentable to the client / business users by coloring the entities, relationships, and columns.
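Several of the data modeling checklist items (data types, NULL / NOT NULL, table and column definitions) are mechanical and can be scripted. This is a minimal sketch assuming the model can be exported to simple column records; the `Column` and `Table` classes and the sample table `Accnt_Smmry` are hypothetical and do not reflect any Erwin export format.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Column:
    name: str
    data_type: Optional[str] = None   # e.g. "VARCHAR(20)"; None means not yet assigned
    nullable: Optional[bool] = None   # None means NULL / NOT NULL not yet decided
    definition: str = ""


@dataclass
class Table:
    name: str
    definition: str
    columns: list = field(default_factory=list)


def review_table(table):
    """Return self-review findings for one table; an empty list means it passes."""
    findings = []
    if not table.definition.strip():
        findings.append(f"{table.name}: missing table definition")
    for col in table.columns:
        if not col.data_type:
            findings.append(f"{table.name}.{col.name}: no data type")
        if col.nullable is None:
            findings.append(f"{table.name}.{col.name}: NULL / NOT NULL not assigned")
        if not col.definition.strip():
            findings.append(f"{table.name}.{col.name}: missing column definition")
    return findings


table = Table("Accnt_Smmry", "Daily account summary", [
    Column("Accnt_Nmbr", "VARCHAR(20)", False, "Account number"),
    Column("Trnsctn_Cnt", "INTEGER"),
])
for finding in review_table(table):
    print(finding)
# Accnt_Smmry.Trnsctn_Cnt: NULL / NOT NULL not assigned
# Accnt_Smmry.Trnsctn_Cnt: missing column definition
```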

- 11. Section 6 – DOs and DON’Ts
  - Do a self-review after completing the Data Model and Data Mapping. All standards should be met as per the client's expectations.
    - For Data Modeling, check all data types, NULL / NOT NULL fields, and indexes; generate the DDLs and pass them to the OCBC DM for approval, who will then pass them to the DBA to create the table structures.
    - For Data Mapping, all columns should be filled in, with proper formatting (coloring if any, font, size, uppercase / lowercase, etc.).
    - Every page of the mapping document should be updated with the mapping name.
    - Extraction criteria should be clearly stated.
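Two of the data mapping items ("all the columns should be filled" and "extraction criteria should be clearly mentioned") can be checked automatically; formatting, coloring, and fonts cannot. This sketch assumes each mapping row has been exported as a dict; the field names in `REQUIRED_FIELDS`, the mapping name `m_acct_load`, and the sample source and target columns are all hypothetical.

```python
# Hypothetical layout of one source-to-target mapping row.
REQUIRED_FIELDS = ("source_table", "source_column", "target_table",
                   "target_column", "transformation_rule")


def incomplete_rows(mapping_rows, required=REQUIRED_FIELDS):
    """Return (row number, missing fields) for every mapping row with blanks."""
    problems = []
    for i, row in enumerate(mapping_rows, start=1):
        missing = [f for f in required if not str(row.get(f) or "").strip()]
        if missing:
            problems.append((i, missing))
    return problems


def check_mapping(mapping_name, extraction_criteria, mapping_rows):
    """Collect self-review issues for one mapping document."""
    issues = []
    if not extraction_criteria.strip():
        issues.append(f"{mapping_name}: extraction criteria not stated")
    for row_no, missing in incomplete_rows(mapping_rows):
        issues.append(f"{mapping_name} row {row_no}: missing {', '.join(missing)}")
    return issues


rows = [
    {"source_table": "SRC_ACCT", "source_column": "ACCT_NO",
     "target_table": "Agreement", "target_column": "Account_Num",
     "transformation_rule": "Straight move; trim spaces"},
    {"source_table": "SRC_ACCT", "source_column": "OPEN_DT",
     "target_table": "Agreement", "target_column": "Agreement_Open_Dt",
     "transformation_rule": ""},
]
for issue in check_mapping("m_acct_load", "", rows):
    print(issue)
# m_acct_load: extraction criteria not stated
# m_acct_load row 2: missing transformation_rule
```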

- 12. THANK YOU