DataHandlingStatistics.ppt

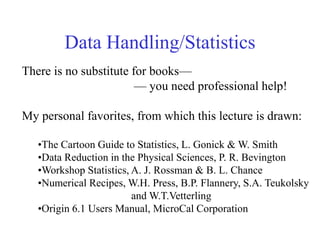

- 1. Data Handling/Statistics There is no substitute for books: you need professional help! My personal favorites, from which this lecture is drawn:
  - The Cartoon Guide to Statistics, L. Gonick & W. Smith
  - Data Reduction and Error Analysis for the Physical Sciences, P. R. Bevington
  - Workshop Statistics, A. J. Rossman & B. L. Chance
  - Numerical Recipes, W. H. Press, B. P. Flannery, S. A. Teukolsky and W. T. Vetterling
  - Origin 6.1 User's Manual, MicroCal Corporation

- 2. Outline
  - Our motto
  - What those books look like
  - Stuff you need to be able to look up
    - Samples & Populations
    - Mean, Standard Deviation, Standard Error
    - Probability
    - Random Variables
    - Propagation of Errors
  - Stuff you must be able to do on a daily basis
    - Plot
    - Fit
    - Interpret

- 3. Our Motto That which can be taught can be learned. The “progress” of civilization relies on being able to do more and more things while thinking less and less about them. An opposing, non-CMC IGERT viewpoint

- 4. What those books look like The Cartoon Guide to Statistics

- 5. The Cartoon Guide to Statistics In this example, the author provides step-by-step analysis of the statistics of a poll. Similar logic and style show you how to tell two populations apart, whether your measly five replicate runs truly represent the situation, etc. The Cartoon Guide gives an enjoyable account of statistics in scientific and everyday life.

- 6. Bevington Bevington is really good at introducing basic concepts, along with simple code that really, really works. Our lab uses a lot of Bevington code, often translated from Fortran to Visual Basic.

- 7. “Workshop Statistics” This book has a website full of data that it tells you how to analyze. The test cases are often pretty interesting, too. Many little shadow boxes provide info.

- 8. “Numerical Recipes” A more modern and thicker version of Bevington. Code comes in Fortran, C, Basic (others?). Includes advanced topics like digital filtering, but harder to read on the simpler things. With this plus Bevington and a lot of time, you can fit, smooth, filter practically anything.

- 9. Stuff you need to be able to look up Samples vs. Populations. Samples: the world as we understand it, based on science. Populations: the world as God understands it, based on omniscience. Statistics is not art but artifice: a bridge to help us understand phenomena, based on limited observations.

- 10. Our problem Sitting behind the target, can we say with some specific level of confidence whether a circle drawn around this single arrow (a measurement) hits the bullseye (the population mean)? Measuring a molecular weight by one Zimm plot, can we say with any certainty that we have obtained the same answer God would have gotten?

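- 11. Sample View vs. Population View

  | Quantity | Sample view | Population view |
  |---|---|---|
  | Average | $\bar{x} = \frac{x_1 + x_2 + x_3 + \dots + x_n}{n} = \frac{1}{n}\sum_{i=1}^{n} x_i$ | $\mu = E(x)$ |
  | Variance | $s^2 = \frac{1}{n-1}\sum_{i=1}^{n} (x_i - \bar{x})^2$ | $\sigma^2$ |
  | Standard deviation | $s = \sqrt{s^2}$ | $\sigma$ |
  | Standard error of mean | $\mathrm{SEM} = \frac{s}{\sqrt{n}}$ | $\sigma(\bar{x}) = \frac{\sigma}{\sqrt{n}}$ |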
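
The sample-view column can be tried directly. The sketch below uses only Python's standard library; the five replicate values are hypothetical, invented purely for illustration.

```python
import math
import statistics


def summarize(data):
    """Return (mean, variance, standard deviation, SEM) for a sample."""
    n = len(data)
    mean = statistics.fmean(data)
    variance = statistics.variance(data)  # uses the n - 1 denominator
    stdev = math.sqrt(variance)
    sem = stdev / math.sqrt(n)            # standard error of the mean
    return mean, variance, stdev, sem


# Hypothetical replicate measurements (not from the lecture).
measurements = [9.8, 10.1, 10.0, 9.9, 10.2]
mean, variance, stdev, sem = summarize(measurements)
print(f"mean = {mean:.3f}, s^2 = {variance:.4f}, s = {stdev:.4f}, SEM = {sem:.4f}")
```

Note that `statistics.variance` is the sample variance; `statistics.pvariance` would divide by n instead of n - 1.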

- 12. Sample View: direct, experimental, tangible The single most important thing about this is the reduction in the standard deviation of the mean (the standard error of the mean) according to the inverse root of n: $\mathrm{SEM} = s/\sqrt{n} \sim 1/\sqrt{n}$ (for large n). Three times better takes 9 times longer (or costs 9 times more, or takes 9 times more disk space). If you remembered nothing else from this lecture, it would be a success!
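
A minimal sketch of the "three times better takes 9 times longer" arithmetic. The function names are made up for this illustration, and the scaling assumes the sample standard deviation s stays roughly constant as more runs are added.

```python
import math


def sem(s, n):
    """Standard error of the mean for sample standard deviation s and n runs."""
    return s / math.sqrt(n)


def runs_needed(n_now, improvement):
    """Runs required to shrink the SEM by the factor `improvement`."""
    return n_now * improvement ** 2


# Going from 5 runs to a three-times-smaller SEM takes 5 * 3**2 runs.
print(runs_needed(5, 3))
print(sem(1.0, 5) / sem(1.0, 45))
```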

- 13. Population View: conceptual, layered with arcana! The purple equation in the table is an expression of the central limit theorem. If we measure many averages, we do not always get the same average: $\bar{x}$ is itself a random variable! “If one takes random samples of size $n$ from a population with mean $\mu$ and standard deviation $\sigma$, then (for large $n$) $\bar{x}$ itself approaches a normal distribution with mean $\mu$ and standard deviation $\sigma/\sqrt{n}$” (from “Cartoon...”).
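
One way to see this is to simulate it. The sketch below is an illustration, not part of the lecture: it assumes a uniform population on [0, 1), for which $\mu = 0.5$ and $\sigma = 1/\sqrt{12}$, takes many averages of n points each, and compares their spread with $\sigma/\sqrt{n}$.

```python
import math
import random
import statistics

SIGMA_UNIFORM = 1 / math.sqrt(12)  # population sigma of the uniform [0, 1) distribution


def spread_of_averages(n, trials=4000, seed=1):
    """Take `trials` averages of n uniform random numbers each.

    Returns (mean of the averages, standard deviation of the averages).
    """
    rng = random.Random(seed)
    averages = [
        statistics.fmean([rng.random() for _ in range(n)])
        for _ in range(trials)
    ]
    return statistics.fmean(averages), statistics.stdev(averages)


for n in (4, 25, 100):
    mean_of_means, sd_of_means = spread_of_averages(n)
    predicted = SIGMA_UNIFORM / math.sqrt(n)
    print(f"n = {n:3d}: observed {sd_of_means:.4f}, predicted {predicted:.4f}")
```

The observed and predicted spreads should agree to within the statistical noise of a finite number of trials.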

- 14. Huh? It means… if you want to estimate $\sigma$, which only God really knows, you should measure many averages, each involving n data points, figure their standard deviation, and multiply by $n^{1/2}$. This is hard work! A lot of times, $\sigma$ is approximated by $s$. If you wanted to estimate the population average $\mu$, the best you can do is to measure many averages and average those. A lot of times $\mu$ is approximated by $\bar{x}$. IT’S HARD TO KNOW WHAT GOD DOES. I think the $\sigma$ in the purple equation should be an $s$, but the equation only works in the limit of large n anyhow, so there is no difference.

- 15. You got to compromise, fool! The t-distribution was invented by a statistician named Gosset, who was forced by his employer (the Guinness brewery!) to publish under a pseudonym. He chose “Student” and his t-distribution is known as Student’s t. Student’s t-distribution helps us assign confidence in our imperfect experiments on small samples. Input: desired confidence level, estimate of population mean (or estimated probability), estimated error of the mean (or probability). Output: ± something
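
A minimal sketch of that input/output recipe for a 95% confidence level. Assumptions: the data are hypothetical, and the two-sided critical values are hard-coded from a standard t-table for a few small degrees of freedom rather than computed; look up other cases in one of the books.

```python
import math
import statistics

# Two-sided 95% critical values of Student's t, keyed by degrees of freedom (n - 1).
# Copied from a standard t-table; only a few small cases are listed here.
T_CRIT_95 = {1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571,
             6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262, 10: 2.228}


def confidence_interval_95(data):
    """Return (mean, half_width) so that the interval is mean ± half_width."""
    n = len(data)
    mean = statistics.fmean(data)
    sem = statistics.stdev(data) / math.sqrt(n)
    t = T_CRIT_95[n - 1]  # KeyError means: go look it up in a bigger table
    return mean, t * sem


# Hypothetical replicate measurements (not from the lecture).
mean, half_width = confidence_interval_95([9.8, 10.1, 10.0, 9.9, 10.2])
print(f"{mean:.2f} ± {half_width:.2f} (95% confidence)")
```

With only five runs the multiplier is 2.776 rather than the large-sample value of about 1.96, which is the price of a small sample.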

- 16. Probability …is another arcane concept in the “population” category: something we would like to know but cannot. As a concept, it’s wonderful. The true mean of a distribution of mass is given as the probability of that mass times the mass, summed over all masses. The standard deviation follows a similarly simple rule. In what follows, F means a normalized frequency (think mole fraction!) and P is a probability density. P(x)dx represents the fraction of things (think molecules) with property x (think mass) between x − dx/2 and x + dx/2.
  - Discrete system: $\mu = \sum_{\text{all } x} x F(x)$ and $\sigma^2 = \sum_{\text{all } x} (x - \mu)^2 F(x)$
  - Continuous system: $\mu = \int x P(x)\,dx$ and $\sigma^2 = \int (x - \mu)^2 P(x)\,dx$
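
The discrete rule is easy to check by hand or by machine. In this sketch the three masses and their normalized frequencies are hypothetical numbers chosen so the arithmetic comes out clean.

```python
import math


def discrete_mean_and_sigma(values, fractions):
    """Mean and standard deviation of a discrete distribution.

    `fractions` are normalized frequencies F(x) and must sum to 1.
    """
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must be normalized (sum to 1)")
    mu = sum(x * f for x, f in zip(values, fractions))
    variance = sum((x - mu) ** 2 * f for x, f in zip(values, fractions))
    return mu, math.sqrt(variance)


# Hypothetical mixture: masses (arbitrary units) and their mole fractions.
masses = [100.0, 200.0, 300.0]
mole_fractions = [0.2, 0.5, 0.3]
mu, sigma = discrete_mean_and_sigma(masses, mole_fractions)
print(f"mu = {mu:.1f}, sigma = {sigma:.1f}")
```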

- 17. Here’s a normal probability density distribution from “Workshop…” where you use actual data to discover. [Figure: bell curve marked with $\pm\sigma$ (68% of results) and $\pm 2\sigma$ (95% of results).]

- 18. What it means Although you don’t usually know the distribution (either $\mu$ or $\sigma$), about 68% of your measurements will fall within 1$\sigma$ of $\mu$… if the distribution is a “normal”, bell-shaped curve. t-tests allow you to kinda play this backwards: given a finite sample size, with some average, $\bar{x}$, and standard deviation, $s$ (inferior to $\mu$ and $\sigma$, respectively), how far away do we think the true $\mu$ is?
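
The 68% and 95% figures can be recovered from the normal distribution itself. This sketch uses `statistics.NormalDist` from the Python standard library (available from Python 3.8); the helper name is made up for the illustration.

```python
from statistics import NormalDist


def fraction_within(k):
    """Fraction of a normal population lying within k standard deviations of the mean."""
    standard_normal = NormalDist(mu=0.0, sigma=1.0)
    return standard_normal.cdf(k) - standard_normal.cdf(-k)


for k in (1, 2, 3):
    print(f"within {k} sigma: {100 * fraction_within(k):.1f}%")
```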

- 19. Details No way I could do it better than “Cartoon…” or “Workshop…” Remember…this is the part of the lecture entitled “things you must be able to look up.”

- 20. Propagation of errors Suppose you give 30 people a ruler and ask them to measure the length and width of a room. Owing to general incompetence, otherwise known as human nature, you will get not one answer but many. Your averages will be L and W, with standard deviations $s_L$ and $s_W$. Now, you want to buy carpet, so you need the area A = L·W. What is the uncertainty in A due to the measurement errors in L and W? Answer! There is no telling… but you have several options to estimate it.

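- 21. A = L·W example Here are your measured data: $L = 30 \pm 1$ ft and $W = 19 \pm 2$ ft. You can consider “most” and “least” cases:
  - $A_{\max} = L \cdot W = 31\ \mathrm{ft} \times 20\ \mathrm{ft} = 620\ \mathrm{ft}^2$
  - $A_{\min} = L \cdot W = 29\ \mathrm{ft} \times 17\ \mathrm{ft} \approx 490\ \mathrm{ft}^2$
  - $A_{\mathrm{average}} = (A_{\max} + A_{\min})/2 \approx 557\ \mathrm{ft}^2$
  - estimated uncertainty: $(620 - 490)/2 = 65\ \mathrm{ft}^2$
  - reported area: $(560 \pm 65)\ \mathrm{ft}^2$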

- 22. Another way We can use a formula for how $\sigma$ propagates. Suppose some function y (think area) depends on two measured quantities t and s (think length & width). Then the variance in y follows this rule: $\sigma_y^2 = \left(\frac{\partial y}{\partial t}\right)^2 \sigma_t^2 + \left(\frac{\partial y}{\partial s}\right)^2 \sigma_s^2$. Aren’t you glad you took partial differential equations? What??!! You didn’t? Well, sign up. PDE is the bare minimum math for scientists.
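
If taking the partial derivatives by hand is a nuisance, they can be estimated numerically. The sketch below is one possible implementation, not the lecture's: it uses central finite differences, and the function name, step size, and test values (30 ± 1 and 19 ± 2, read from the room example) are assumptions.

```python
import math


def propagate(f, values, sigmas, rel_step=1e-6):
    """Propagate uncertainties through y = f(*values).

    Sums (dy/dx_i)^2 * sigma_i^2 over the inputs, with each partial
    derivative estimated by a central finite difference.
    """
    variance = 0.0
    for i, (v, sigma) in enumerate(zip(values, sigmas)):
        h = abs(v) * rel_step if v != 0 else rel_step
        up = list(values)
        down = list(values)
        up[i] = v + h
        down[i] = v - h
        dy_dx = (f(*up) - f(*down)) / (2 * h)
        variance += (dy_dx * sigma) ** 2
    return math.sqrt(variance)


def area(length, width):
    return length * width


sigma_area = propagate(area, [30.0, 19.0], [1.0, 2.0])
print(f"area = {area(30.0, 19.0):.0f} ± {sigma_area:.0f} square feet")
```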

- 23. Translation in our case, where A = L·W: $\sigma_A^2 = \left(\frac{\partial A}{\partial L}\right)^2 \sigma_L^2 + \left(\frac{\partial A}{\partial W}\right)^2 \sigma_W^2 = W^2 \sigma_L^2 + L^2 \sigma_W^2$. Problem: we don’t know W, L, $\sigma_L$ or $\sigma_W$! These are population numbers we could only get if we had the entire planet measure this particular room. We therefore assume that our measurement set is large enough (n=30) that we can use our measured averages for W and L and our standard deviations for $\sigma_L$ and $\sigma_W$.
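
As a worked instance, take the measured values as read from the room example, $L = 30 \pm 1$ ft and $W = 19 \pm 2$ ft, and substitute the sample numbers for the population ones as just described:

```latex
\begin{align*}
\sigma_A^2 &\approx W^2 s_L^2 + L^2 s_W^2
            = (19)^2 (1)^2 + (30)^2 (2)^2
            = 361 + 3600
            = 3961\ \mathrm{ft}^4 \\
\sigma_A   &\approx \sqrt{3961}\ \mathrm{ft}^2 \approx 63\ \mathrm{ft}^2 \\
A          &= L \cdot W = 570\ \mathrm{ft}^2
\quad\Rightarrow\quad A \approx (570 \pm 63)\ \mathrm{ft}^2
\end{align*}
```

This is close to the ±65 ft² obtained from the “most” and “least” cases, which is reassuring for two rather different estimates.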

- 25. Error propagation caveats The equation, $\sigma_y^2 = \left(\frac{\partial y}{\partial t}\right)^2 \sigma_t^2 + \left(\frac{\partial y}{\partial s}\right)^2 \sigma_s^2$, assumes normal behavior. Large systematic errors (for example, 3 euroguys who report their values in metric units) are not taken into consideration properly. In many cases, there will be good a priori knowledge about the uncertainty in one or more parameters: in photon counting, if N is the number of photons detected, then $\sigma_N = N^{1/2}$. Systematic error is not included in this estimate, so photon folk are well advised to just repeat experiments to determine real standard deviations that do take systematic errors into account.
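
The photon-counting rule makes for quick arithmetic. A small sketch, with a made-up function name and hypothetical count totals, covering counting noise only and none of the systematic error mentioned above:

```python
import math


def counting_uncertainty(n_photons):
    """Return (sigma_N, relative uncertainty) for N detected photons."""
    sigma = math.sqrt(n_photons)
    return sigma, sigma / n_photons


for n in (100, 10_000, 1_000_000):
    sigma, relative = counting_uncertainty(n)
    print(f"N = {n:>9,d}: sigma_N = {sigma:.0f}, relative = {100 * relative:.1f}%")
```

The relative uncertainty falls as $1/\sqrt{N}$, the same inverse-root behavior as the standard error of the mean.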

- 26. Stuff you must know how to do on a daily basis: Plot!!!

  [Plot: $\Gamma$/Hz vs. $q^2/10^{10}\,\mathrm{cm}^{-2}$, labeled “Larger Particle 30.9 g/ml”, with linear fit.]

  | Parameter | Value | Error |
  |---|---|---|
  | A | -0.00267 | 44.94619 |
  | B | 2.25237E-7 | 8.46749E-10 |

  | R | SD | N | P |
  |---|---|---|---|
  | 0.99987 | 118.8859 | 21 | <0.0001 |

  r = 0.99987, r² = 0.9997: 99.97% of the trend can be explained by the fitted relation. Intercept = -0.003 ± 45 (i.e., zero!)

- 27. The same data

  [Plot: $D_{app}$ / cm² s⁻¹ vs. $q^2/10^{10}\,\mathrm{cm}^{-2}$, labeled “Larger Particle 30.9 g/ml”, with linear fit. The plot also carries the label “twilight users rcueto e739”: how to find this file!]

  | Parameter | Value | Error |
  |---|---|---|
  | A | 2.2725E-7 | 7.62107E-10 |
  | B | -3.09723E-20 | 1.43575E-20 |

  | R | SD | N | P |
  |---|---|---|---|
  | -0.44355 | 2.01583E-9 | 21 | 0.044 |

  r = -0.444, r² = 0.20: only 20% of the variation in the data can be explained by the line! While $\Gamma$ depended on $q^2$, $D_{app}$ does not!

- 28. Plot showing 95% confidence limits. Excel doesn’t excel at this!

  [Plot: $R_h$/nm vs. c/g-ml⁻¹, with linear fit and 95% confidence limits. Annotations: “Range of Rg values observed in MALLS” and “(3/5)^{1/2} Rh”.]

  [6/7/01 13:44 "/Rhapp" (2452067)] Linear Regression for BigSilk_Ravgnm: Y = A + B * X

  | Parameter | Value | Error |
  |---|---|---|
  | A | 20.88925 | 0.19213 |
  | B | 0.01762 | 0.01105 |

  | R | SD | N | P |
  |---|---|---|---|
  | 0.62332 | 0.28434 | 6 | 0.18611 |

- 29. Interpreting data: Life on the bleeding edge of cutting technology. Or is that bleating edge?

  [Plot: $R_g$/nm vs. M. Annotations: n = 0.324 +/- 0.04, df = 3.12 +/- 0.44.]

  The noise level in individual runs is much less than the run-to-run variation. That’s why many runs are a good idea. More would be good here, but we are still overcoming the shock that we can do this at all!

- 30. Correlation Caveat! Correlation ≠ Cause. No, Correlation = Association.

  [Chart: Life Expectancy vs. TV’s per person, with fitted line y = 35.441x + 57.996, R² = 0.5782.]

  | Country | Life Expectancy | People per TV | TV’s per person |
  |---|---|---|---|
  | Angola | 44 | 200 | 0.0050 |
  | Australia | 76.5 | 2 | 0.5000 |
  | Cambodia | 49.5 | 177 | 0.0056 |
  | Canada | 76.5 | 1.7 | 0.5882 |
  | China | 70 | 8 | 0.1250 |
  | Egypt | 60.5 | 15 | 0.0667 |
  | France | 78 | 2.6 | 0.3846 |
  | Haiti | 53.5 | 234 | 0.0043 |
  | Iraq | 67 | 18 | 0.0556 |
  | Japan | 79 | 1.8 | 0.5556 |
  | Madagascar | 52.5 | 92 | 0.0109 |
  | Mexico | 72 | 6.6 | 0.1515 |
  | Morocco | 64.5 | 21 | 0.0476 |
  | Pakistan | 56.5 | 73 | 0.0137 |
  | Russia | 69 | 3.2 | 0.3125 |
  | South Africa | 64 | 11 | 0.0909 |
  | Sri Lanka | 71.5 | 28 | 0.0357 |
  | Uganda | 51 | 191 | 0.0052 |
  | United Kingdom | 76 | 3 | 0.3333 |
  | United States | 75.5 | 1.3 | 0.7692 |
  | Vietnam | 65 | 29 | 0.0345 |
  | Yemen | 50 | 38 | 0.0263 |

  58% of the variation in life expectancy is associated with TV’s. Would we save lives by sending TV’s to Uganda? Excel does not automatically provide estimates!
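
The chart's trend line can be recomputed from the table. This is a sketch with a hand-rolled least-squares helper (the name `linear_fit` is invented here); it takes TV's per person as 1 / (people per TV), so the last digits may differ slightly from a fit to the rounded column.

```python
import math

# (country, life expectancy, people per TV), copied from the slide's table.
DATA = [
    ("Angola", 44.0, 200.0), ("Australia", 76.5, 2.0), ("Cambodia", 49.5, 177.0),
    ("Canada", 76.5, 1.7), ("China", 70.0, 8.0), ("Egypt", 60.5, 15.0),
    ("France", 78.0, 2.6), ("Haiti", 53.5, 234.0), ("Iraq", 67.0, 18.0),
    ("Japan", 79.0, 1.8), ("Madagascar", 52.5, 92.0), ("Mexico", 72.0, 6.6),
    ("Morocco", 64.5, 21.0), ("Pakistan", 56.5, 73.0), ("Russia", 69.0, 3.2),
    ("South Africa", 64.0, 11.0), ("Sri Lanka", 71.5, 28.0), ("Uganda", 51.0, 191.0),
    ("United Kingdom", 76.0, 3.0), ("United States", 75.5, 1.3),
    ("Vietnam", 65.0, 29.0), ("Yemen", 50.0, 38.0),
]


def linear_fit(xs, ys):
    """Unweighted least-squares line y = intercept + slope * x; returns (slope, intercept, r)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / math.sqrt(sxx * syy)
    return slope, intercept, r


life = [row[1] for row in DATA]
tvs_per_person = [1.0 / row[2] for row in DATA]
slope, intercept, r = linear_fit(tvs_per_person, life)
print(f"life = {slope:.3f} * (TVs per person) + {intercept:.3f}, r^2 = {r * r:.4f}")
```

The result should land close to the chart's y = 35.441x + 57.996 with R² = 0.5782. It still says nothing about cause.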

- 31. Linearize it! Observant scientists are adept at seeing curvature. Train your eye by looking for defects in wallpaper, door trim, lumber bought at Home Depot, etc. And try to straighten out your data, rather than let the computer fit a nonlinear form, which it is quite happy to do!

  [Chart: Life Expectancy vs. People per TV, with fitted line y = -0.1156x + 70.717, R² = 0.6461.]

  Linearity is improved by plotting life expectancy vs. people per TV rather than TV’s per person.
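
The same comparison can be made numerically: fit life expectancy against both choices of x and compare r². This sketch repeats the country data from the correlation slide so that it runs on its own; the helper name `r_squared` is made up for the illustration.

```python
import math

# (life expectancy, people per TV) for the 22 countries in the lecture's table.
LIFE_AND_PEOPLE_PER_TV = [
    (44.0, 200.0), (76.5, 2.0), (49.5, 177.0), (76.5, 1.7), (70.0, 8.0),
    (60.5, 15.0), (78.0, 2.6), (53.5, 234.0), (67.0, 18.0), (79.0, 1.8),
    (52.5, 92.0), (72.0, 6.6), (64.5, 21.0), (56.5, 73.0), (69.0, 3.2),
    (64.0, 11.0), (71.5, 28.0), (51.0, 191.0), (76.0, 3.0), (75.5, 1.3),
    (65.0, 29.0), (50.0, 38.0),
]


def r_squared(xs, ys):
    """Squared correlation coefficient of an unweighted straight-line fit."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy * sxy / (sxx * syy)


life = [row[0] for row in LIFE_AND_PEOPLE_PER_TV]
people_per_tv = [row[1] for row in LIFE_AND_PEOPLE_PER_TV]
tvs_per_person = [1.0 / p for p in people_per_tv]

print(f"r^2 vs. people per TV:  {r_squared(people_per_tv, life):.4f}")
print(f"r^2 vs. TVs per person: {r_squared(tvs_per_person, life):.4f}")
```

A higher r² is only a rough proxy for "straighter"; looking at the plot, as the slide urges, remains the real test.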

- 32. These 4 plots all have the same slopes, intercepts and r values! Plots are pictures of science, worth thousands of words in boring tables.
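
The slide's four plots are not recoverable from this transcript. A well-known data set with exactly this property is Anscombe's quartet, and the sketch below assumes that is what was shown (or something very like it). The values are the commonly tabulated ones; the helper `linear_fit` is written out here for the illustration.

```python
import math

# Anscombe's quartet (assumed stand-in for the slide's four plots).
X_123 = [10, 8, 13, 9, 11, 14, 6, 4, 12, 7, 5]
QUARTET = {
    "I": (X_123, [8.04, 6.95, 7.58, 8.81, 8.33, 9.96, 7.24, 4.26, 10.84, 4.82, 5.68]),
    "II": (X_123, [9.14, 8.14, 8.74, 8.77, 9.26, 8.10, 6.13, 3.10, 9.13, 7.26, 4.74]),
    "III": (X_123, [7.46, 6.77, 12.74, 7.11, 7.81, 8.84, 6.08, 5.39, 8.15, 6.42, 5.73]),
    "IV": ([8, 8, 8, 8, 8, 8, 8, 19, 8, 8, 8],
           [6.58, 5.76, 7.71, 8.84, 8.47, 7.04, 5.25, 12.50, 5.56, 7.91, 6.89]),
}


def linear_fit(xs, ys):
    """Unweighted least-squares line; returns (slope, intercept, r)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x, sxy / math.sqrt(sxx * syy)


for name, (xs, ys) in QUARTET.items():
    slope, intercept, r = linear_fit(xs, ys)
    print(f"{name:>3}: slope = {slope:.3f}, intercept = {intercept:.2f}, r = {r:.3f}")
```

The fitted numbers agree to about two decimal places across all four sets, yet the plots look nothing alike, which is the whole argument for plotting first.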

- 33. From whence do those lines come? Least squares fitting. “Linear fits”: the fitted coefficients appear linearly in the expression, e.g., $y = a + bx + cx^2 + dx^3$. An analytical “best fit” exists! “Nonlinear fits”: at least some of the fitted coefficients appear in transcendental arguments, e.g., $y = a + be^{-cx} + d\cos(ex)$. Best fit found by trial & error. Beware false solutions! Try several initial guesses!
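
For the simplest linear fit, a straight line $y = a + bx$ with all points weighted equally, the analytical best fit can be written out in a few lines. This is the standard textbook result, sketched here for reference:

```latex
\begin{align*}
S(a, b) &= \sum_{i=1}^{n} \left( y_i - a - b x_i \right)^2
  && \text{sum of squared residuals to minimize} \\
\frac{\partial S}{\partial a} &= -2 \sum_{i=1}^{n} \left( y_i - a - b x_i \right) = 0,
\qquad
\frac{\partial S}{\partial b} = -2 \sum_{i=1}^{n} x_i \left( y_i - a - b x_i \right) = 0 \\
b &= \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2},
\qquad
a = \bar{y} - b\,\bar{x}
\end{align*}
```

Because the two conditions are linear in a and b, there is exactly one solution and no initial guess is needed. That is not true once a coefficient sits inside an exponential or cosine.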

- 34. All data points are not created equal. Since that one point has so much error (or noise), should we really worry about minimizing its square? No. We should minimize “chi-squared”: $\chi^2 = \sum_{i=1}^{n} \frac{(y_i - y_{\mathrm{fit},i})^2}{\sigma_i^2}$. The goodness-of-fit parameter that should be unity for a “fit within error” is $\chi^2_{\mathrm{reduced}} = \frac{1}{\nu} \sum_{i=1}^{n} \frac{(y_i - y_{\mathrm{fit},i})^2}{\sigma_i^2}$, where $\nu$ is the # of degrees of freedom: $\nu = n -$ # of parameters fitted.
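
A minimal sketch of both quantities. The data, fitted values, and error bars below are hypothetical, chosen so that every point sits exactly one error bar away from the fit.

```python
def chi_squared(ys, fits, sigmas):
    """Sum of squared residuals, each weighted by 1 / sigma_i^2."""
    return sum(((y - f) / s) ** 2 for y, f, s in zip(ys, fits, sigmas))


def reduced_chi_squared(ys, fits, sigmas, n_params):
    """Chi-squared divided by the degrees of freedom, nu = n - n_params."""
    nu = len(ys) - n_params
    if nu <= 0:
        raise ValueError("need more data points than fitted parameters")
    return chi_squared(ys, fits, sigmas) / nu


# Hypothetical measurements, straight-line fit values, and error bars.
y_measured = [1.1, 1.9, 3.2, 3.9, 5.1]
y_fit = [1.0, 2.0, 3.0, 4.0, 5.0]
sigma = [0.1, 0.1, 0.2, 0.1, 0.1]

print(chi_squared(y_measured, y_fit, sigma))
print(reduced_chi_squared(y_measured, y_fit, sigma, n_params=2))
```

The noisy third point has twice the residual of the others but contributes the same amount to chi-squared, because its error bar is twice as large.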

- 35. $\chi^2$ caveats
  - Chi-square lower than unity is meaningless… if you trust your $\sigma^2$ estimates in the first place.
  - Fitting too many parameters will lower $\chi^2$ but this may be just doing a better and better job of fitting the noise!
  - A fit should go smoothly THROUGH the noise, not follow it!
  - There is such a thing as enforcing a “parsimonious” fit by minimizing a quantity a bit more complicated than $\chi^2$. This is done when you have a priori information that the fitted line must be “smooth”.

- 36. Achtung! Warning! This lecture is an example of a very dangerous phenomenon: “what you need to know.” Before you were born, I took a statistics course somewhere in undergraduate school. Most of this stuff I learned from experience….um… experiments. A proper math course, or a course from LSU’s Department of Experimental Statistics would firm up your knowledge greatly. AND BUY THOSE BOOKS! YOU WILL NEED THEM!