Data Quality & Metadata

•

0 likes•4 views

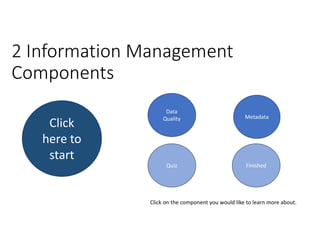

In this learning pack, you will get a high-level overview of two information management components: Data Quality and Metadata.


Recommended

5 Best Practices of Effective Data Quality Management

An article from Data Entry India Outsource on five best practices for effective data quality management and a focused data governance plan. For more information, see https://www.dataentryindiaoutsource.com/blog/5-best-practices-effective-data-quality-management/

Automated Survey Data Received and Sync From Field

This document provides instructions for various user management and data transfer functions in a survey application. It includes steps for changing passwords, downloading and uploading questions and survey data between a web server and laptop, and downloading data from a connected PDA device. The document is organized into sections covering user management, downloading/uploading questions and survey results, and viewing survey data on the laptop.

6 Steps to Data Quality in Marketing Automation

Taking the following 6 steps can help improve data quality:

1. Perform a data audit to identify errors and gaps in current data.

2. Conduct a systems audit to ensure tools are integrated and functioning properly.

3. Revise data capture processes like forms and surveys to reduce errors.

4. Correct existing data errors by cleaning, deduplicating, and standardizing records.

5. Implement email alerts and reports to monitor data quality over time.

6. Manage data quality practices across departments to maintain high standards.
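Step 4 (cleaning, deduplicating, and standardizing records) can be illustrated with a minimal Python sketch. The field names (`name`, `email`, `phone`) and the choice of email as the match key are assumptions made for illustration; they are not part of the original checklist.

```python
import re


def standardize(record: dict) -> dict:
    """Normalize the fields used for matching (assumed fields: name, email, phone)."""
    return {
        # Collapse repeated whitespace and use title case for names.
        "name": " ".join(record.get("name", "").split()).title(),
        # Emails compare case-insensitively, so trim and lowercase them.
        "email": record.get("email", "").strip().lower(),
        # Keep only digits so "(555) 010-2000" and "555.010.2000" compare equal.
        "phone": re.sub(r"\D", "", record.get("phone", "")),
    }


def deduplicate(records: list) -> list:
    """Standardize every record, then keep the first one seen for each email."""
    seen = set()
    result = []
    for rec in map(standardize, records):
        key = rec["email"]
        if key in seen:
            continue  # duplicate of an earlier record
        if key:
            seen.add(key)  # records without an email cannot be matched, so they are all kept
        result.append(rec)
    return result


raw = [
    {"name": "  ada   lovelace ", "email": "Ada@Example.com ", "phone": "(555) 010-2000"},
    {"name": "Ada Lovelace", "email": "ada@example.com", "phone": "555.010.2000"},
    {"name": "alan turing", "email": "alan@example.com", "phone": ""},
]
clean = deduplicate(raw)
```

Standardizing before deduplicating matters: the first two records only become recognizable as the same person once case, spacing, and phone punctuation are normalized.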

Data quality testing – a quick checklist to measure and improve data quality

Don't wait for a data migration event to test your data quality. Perform data quality tests now, before it is too late. Here's everything you need to know!

https://dataladder.com/data-quality-test-checklist/

Data Cleansing The Never Ending Quest for Lead Generation.pdf

Effective lead generation relies heavily on the quality of the data being used. Data cleansing is a critical process that ensures accurate, reliable, and high-quality data for lead generation strategies.

Optimize Your Healthcare Data Quality Investment: Three Ways to Accelerate Ti...

Healthcare organizations increasingly rely on data to inform strategic decisions. This growing dependence makes it more critical than ever to ensure that data across the organization is fit for purpose. Decision-making challenges associated with pandemic-driven urgency, the variety of data, and a lack of resources have further highlighted the critical importance of healthcare data quality and prompted more focus and investment. However, many data quality initiatives are too narrow in focus, are reactive in nature, or take longer than expected to demonstrate value. This leaves organizations unprepared for future events, like COVID-19, that require a rapid enterprise-wide analytic response.

What are some actionable ways you can help your organization guard against the data quality challenges uncovered this past year and better prepare to respond in the future? Join Taylor Larsen, Director of Data Quality for Health Catalyst, to learn more.

What You’ll Learn

- How data profiling and data quality assessments, in combination with your data catalog, can increase data quality transparency, expedite root cause analysis, and close data quality monitoring gaps.

- How to leverage AI to reduce data quality monitoring configuration and maintenance time and improve accuracy.

- How defining data quality based on its measurable utility (i.e., whether the data represents information that supports better decisions) can provide a scalable way to ensure data are fit for purpose and keep costs from outstripping returns.
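As a rough illustration of the data profiling mentioned above, the following Python sketch computes a few basic per-column statistics: missing values, completeness, distinct count, and most frequent value. The function names and the chosen metrics are assumptions; real profiling tools and data catalogs report far more.

```python
from collections import Counter


def profile_column(values: list) -> dict:
    """Basic profile of one column; None and empty strings count as missing."""
    present = [v for v in values if v not in (None, "")]
    counts = Counter(present)
    return {
        "rows": len(values),
        "missing": len(values) - len(present),
        "completeness": len(present) / len(values) if values else 0.0,
        "distinct": len(counts),
        "top_value": counts.most_common(1)[0][0] if counts else None,
    }


def profile_table(rows: list) -> dict:
    """Profile every column that appears in any row (absent keys count as missing)."""
    columns = sorted({col for row in rows for col in row})
    return {col: profile_column([row.get(col) for row in rows]) for col in columns}


profile = profile_table([
    {"state": "CA", "zip": "94105"},
    {"state": "NY", "zip": ""},
    {"state": "CA"},
])
```

A profile like this is a starting point for a data quality assessment: a column with low completeness or an unexpected number of distinct values is a candidate for root cause analysis.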

SDM Presentation V1.0

This presentation contains our view on how data can be strategically managed and stewarded in an organization, and the categories where rules can be applied to facilitate that process.

thegrowingimportanceofdatacleaning-211202141902.pptx

The global data cleaning tools market is growing due to increased digitization from the COVID-19 pandemic. Data cleaning is the process of removing duplicate, inaccurate, or incomplete data from databases. It is important for obtaining clean data that can be analyzed without false conclusions. The benefits of data cleaning include removing errors, better reporting, and increased productivity from high-quality data.


How to Make the Metadata Model | EWSolutions

In the ever-changing context of data management, building a strong metadata model is essential for businesses seeking valuable insights from their massive data warehouses. Metadata is an essential part of improving data governance, quality, and comprehension.

ObservePoint - The Digital Data Quality Playbook

There is a big difference between having data and having correct data. But collecting correct, compliant digital data is a journey, not a destination. Here are ten steps to get you to data quality nirvana.

Data quality management best practices

This document provides an overview of data quality management best practices. It discusses conducting data quality assessments, building a data quality firewall, unifying data management and business intelligence, making business users data stewards, and creating a data governance board. A variety of quality management tools are also listed, including check sheets, control charts, Pareto charts, scatter plots, Ishikawa diagrams, histograms, and other quality management topics such as systems, courses, techniques, standards, and strategies. The document emphasizes the importance of data governance and ongoing quality improvement processes involving all organizational levels.
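A Pareto chart ranks defect categories so that effort goes to the "vital few" that account for most problems. The Python sketch below computes the ranking and cumulative share behind such a chart; the 80% cutoff and the example categories are assumptions, not values from the document.

```python
def pareto(defect_counts: dict, cutoff: float = 0.8) -> list:
    """Rank categories by count and return (category, count, cumulative share)
    for the smallest set of top categories that reaches `cutoff` of all defects."""
    total = sum(defect_counts.values())
    if total == 0:
        return []
    ranked = sorted(defect_counts.items(), key=lambda kv: kv[1], reverse=True)
    vital, running = [], 0
    for category, count in ranked:
        running += count
        vital.append((category, count, running / total))
        if running / total >= cutoff:
            break  # the remaining categories are the "trivial many"
    return vital


vital_few = pareto({"invalid date": 8, "bad email": 30, "duplicate": 12, "missing postcode": 50})
```

With these hypothetical counts, two of the four categories already account for 80% of the defects, which is where a cleanup effort would start.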

The Growing Importance of Data Cleaning

Data cleaning is the process of transforming data from a raw format into a format that is compatible with your use case.

Read More: https://expressanalytics.com/blog/growing-importance-of-data-cleaning/
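Transforming raw data into a use-case-ready format often means parsing text into typed values. The minimal Python sketch below assumes hypothetical raw rows with `customer`, `order_date` (ISO `YYYY-MM-DD`), and `amount` text fields; rows that cannot be parsed are returned as `None` so they can be reviewed rather than silently loaded.

```python
from datetime import datetime
from decimal import Decimal, InvalidOperation
from typing import Optional


def clean_row(raw: dict) -> Optional[dict]:
    """Convert one raw text row into typed fields, or return None if it cannot be parsed."""
    try:
        # Assumed input format: ISO dates such as "2024-06-12".
        order_date = datetime.strptime(raw["order_date"].strip(), "%Y-%m-%d").date()
        # Drop thousands separators before converting to an exact decimal amount.
        amount = Decimal(raw["amount"].replace(",", "").strip())
    except (KeyError, ValueError, InvalidOperation):
        return None  # leave unparseable rows for manual review
    return {
        "customer": " ".join(raw.get("customer", "").split()).title(),
        "order_date": order_date,
        "amount": amount,
    }


row = clean_row({"customer": " acme corp ", "order_date": "2024-06-12", "amount": "1,250.50"})
```

`Decimal` is used instead of `float` so that monetary amounts keep their exact value after cleaning.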

The Key Reason Why Your DG Program is Failing

Key takeaways:

- Identify the key reasons Data Governance initiatives fail

- Uncover commonly used Data Governance terms and their meanings

- Learn the framework for a successful Data Governance Program

A simplified approach for quality management in data warehouse

Data warehousing is continuously gaining importance as organizations realize the benefits of decision-oriented databases. However, the stumbling block to this rapid development is data quality issues at various stages of data warehousing. Quality can be defined as a measure of excellence or a state free from defects. Users appreciate quality products, and the available literature suggests that many organizations have significant data quality problems with substantial social and economic impacts. A metadata-based quality system is introduced to manage the quality of data in a data warehouse. The approach analyzes the quality of a data warehouse system by comparing the expected values of quality parameters with their actual values. It is supported by a metadata framework that can store additional information for analyzing the quality parameters whenever required.
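The metadata-based approach described in the abstract compares expected values of quality parameters with their actual values. The Python sketch below is one way to picture that idea, not the paper's actual framework: the `QUALITY_METADATA` structure, the two parameters (completeness and uniqueness), and their target values are all hypothetical.

```python
# Hypothetical quality metadata for one table: the record key, the fields that
# must be filled, and the expected (minimum) value of each quality parameter.
QUALITY_METADATA = {
    "customers": {
        "key": "customer_id",
        "required": ["customer_id", "email"],
        "expected": {"completeness": 0.98, "uniqueness": 1.0},
    },
}


def measure(rows: list, key: str, required: list) -> dict:
    """Actual completeness (rows with all required fields) and uniqueness (distinct keys / rows)."""
    if not rows:
        return {"completeness": 0.0, "uniqueness": 0.0}
    complete = sum(all(row.get(f) not in (None, "") for f in required) for row in rows)
    distinct_keys = len({row.get(key) for row in rows})
    return {"completeness": complete / len(rows), "uniqueness": distinct_keys / len(rows)}


def quality_report(table: str, rows: list) -> dict:
    """Compare each actual quality parameter with the expected value stored in the metadata."""
    meta = QUALITY_METADATA[table]
    actual = measure(rows, meta["key"], meta["required"])
    return {
        param: {"expected": target, "actual": actual[param], "ok": actual[param] >= target}
        for param, target in meta["expected"].items()
    }


rows = [
    {"customer_id": 1, "email": "a@example.com"},
    {"customer_id": 2, "email": ""},               # incomplete
    {"customer_id": 3, "email": "c@example.com"},
    {"customer_id": 3, "email": "d@example.com"},  # duplicate key
]
report = quality_report("customers", rows)
```

Keeping the expected values in metadata rather than in code means new tables or stricter targets can be added without changing the checking logic.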

Tom Kunz

- A professional data organization can exist within a large company like Shell by managing data as a process across the organization and aligning roles and responsibilities.

- Metadata can accelerate data quality improvement by providing information about the contents, location, and attributes of data that can help identify issues and opportunities to reduce errors.

- Applying techniques from Six Sigma and Lean can help solve data quality issues by structuring improvement efforts, prioritizing projects, and quantifying the costs and risks of poor quality data to motivate necessary changes.

Enterprise information flow and data management

The document discusses the importance of aligning master data management, master data governance, and business process management for effective enterprise information management and decision making. It states that bad data costs businesses 10-20% of annual revenue. The document provides a framework for assessing the maturity of these initiatives and advancing them in a synchronized manner from the initial configuration stage through facilitating, delivering, evaluating, and changing stages. It identifies key factors for evaluating solutions for master data management, governance, and business process management.

Is Your Data Ready to Drive Your Company's Future?

Before investing the time and money to implement a reporting and analytics solution to guide you out of the current economic crisis, make sure that your data is prepared to lead the way.

Join Edgewater Technology for a step-by-step approach to readying your data to support enterprise reporting and analytics applications.

DATA QUALITY MANAGEMENT

This presentation has the following agenda:

- Data quality management.

- Why do you need data quality management?

- Major causes of poor data quality.

- Essential factors for clean data.

- How to maintain clean data?

- Best data quality tools.

Master Your Data. Master Your Business

Suresh Menon, Vice President, Product Management - Information Quality Solutions at Informatica, shares how to master your data and your business from the 2015 Informatica Government Summit.

How to choose the right Martech stack and Data for your organization

There are 3,874 vendors listed in the 2016 Marketing Technology Landscape, and the phrase “MarTech stack” yields over 50,000 Google results. What’s a rational way to decide what you actually need?

Join experts from DemandGen and Openprise as they provide a strategic framework for deciding what systems and what data you need to be successful.

E-commerce (System Analysis and Design)

Our project is about an e-commerce system, so we are trying to meet all of the necessary requirements for the e-commerce site.

The dependent relationship of data quality & data governance

This short deck is an overview of how data quality can be used to create a better data governance initiative.

Quality management best practices

This document discusses quality management best practices and provides resources on the topic. It outlines six common quality management tools: check sheets, control charts, Pareto charts, scatter plots, Ishikawa diagrams, and histograms. These tools can be used to collect and analyze quality data. The document also lists additional quality management topics and provides links to download related PDF files.

How analytics should be used in controls testing instead of sampling

Sampling has existed as a standard for controls testing since controls testing began. We’ve developed algorithms to tell us how many samples we should pull and how many errors we can have and still pass the control. We’ve even developed algorithms to tell us how many more samples we can test if the control didn’t pass the first time.

If your goal is simply to do the minimum to pass a SOX audit, then these behaviors should probably continue. If your goals also include genuinely strengthening the organization's operations, then a more holistic approach is needed, such as analyzing 100% of the population rather than a small sample.

Most controls analytics do not require a degree in data science, but they do require the controls team to begin changing its behaviors. Join us to understand what it takes to begin this change; it's not as challenging as you might think.

Learning Objectives

- Understanding the advantages of analytics vs. sampling

- How to identify controls where analytics can be applied

- Real-life examples of controls and their associated analytics

- How to effect a change
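To contrast with sampling, the sketch below tests a control against every transaction rather than a sample. The control itself (payments at or above a hypothetical `APPROVAL_THRESHOLD` need an approver other than the requester) and the `Payment` fields are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

APPROVAL_THRESHOLD = 10_000  # hypothetical policy limit


@dataclass
class Payment:
    payment_id: str
    amount: float
    requested_by: str
    approved_by: Optional[str] = None


def control_exceptions(payments: list) -> list:
    """Test the control on 100% of payments and return the IDs that fail it."""
    return [
        p.payment_id
        for p in payments
        if p.amount >= APPROVAL_THRESHOLD
        and (p.approved_by is None or p.approved_by == p.requested_by)
    ]


payments = [
    Payment("P1", 5_000, "kim"),                      # below threshold: no approval needed
    Payment("P2", 12_000, "lee", approved_by="lee"),  # self-approved: exception
    Payment("P3", 15_000, "kim", approved_by="lee"),  # independently approved: passes
    Payment("P4", 20_000, "ana"),                     # missing approval: exception
]
exceptions = control_exceptions(payments)
```

Because every record is checked, the output is the complete list of exceptions rather than an estimate of the error rate, which is the main advantage over sampling that the session describes.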


Data quality management system

This document discusses data quality management systems. It provides information on tools, strategies, and best practices for data quality management. Some key points include:

- Conducting a data quality assessment to understand current data quality issues.

- Building a "data quality firewall" to detect and prevent bad data from entering systems.

- Unifying data management and business intelligence so the highest priority data can be cleansed and analyzed.

- Making business users responsible for data quality as "data stewards".

- Creating a data governance board to set policies and resolve data issues.
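A "data quality firewall" can be pictured as a set of validation rules applied before records enter a system. In this minimal Python sketch, the `RULES` table, the field names, the allowed country codes, and the deliberately simple email pattern are all hypothetical; failing records are quarantined together with the fields that failed.

```python
import re

# Deliberately simple pattern: something@something.something, no spaces.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Hypothetical rules: each field name maps to a predicate its value must satisfy.
RULES = {
    "customer_id": lambda v: isinstance(v, str) and v.strip() != "",
    "email": lambda v: isinstance(v, str) and EMAIL_RE.match(v) is not None,
    "country": lambda v: v in {"CA", "GB", "US"},
}


def firewall(records: list) -> tuple:
    """Split incoming records into accepted ones and quarantined (record, failed_fields) pairs."""
    accepted, quarantined = [], []
    for rec in records:
        failures = [field for field, ok in RULES.items() if not ok(rec.get(field))]
        if failures:
            quarantined.append((rec, failures))
        else:
            accepted.append(rec)
    return accepted, quarantined


incoming = [
    {"customer_id": "C1", "email": "a@b.com", "country": "US"},
    {"customer_id": "", "email": "not-an-email", "country": "US"},
    {"customer_id": "C3", "email": "c@d.org", "country": "XX"},
]
accepted, quarantined = firewall(incoming)
```

Quarantining instead of discarding keeps the rejected records available for the data stewards who are responsible for resolving them.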

Data Governance with Profisee, Microsoft & CCG

1. The workshop agenda covers data governance fundamentals, assessing an organization's data governance maturity using the CCGDG framework, and prioritizing a roadmap for improvement.

2. The Profisee presentation promotes their master data management solution for enabling digital transformation by providing a single view of critical data across systems.

3. Profisee's solution focuses on five key areas: stewardship, matching configuration, adjusting the configuration, operational matching, and workflow management to ensure data quality.
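Profisee's matching engine is not described here, so the following is only a generic illustration of record matching using Python's standard `difflib.SequenceMatcher`. The 0.85 threshold and the normalization step are assumptions; production matching typically adds blocking and field-specific comparisons.

```python
from difflib import SequenceMatcher
from itertools import combinations


def _normalize(s: str) -> str:
    """Case-fold and collapse whitespace so formatting differences do not affect the score."""
    return " ".join(s.lower().split())


def similarity(a: str, b: str) -> float:
    """Similarity ratio in [0, 1] between two normalized strings."""
    return SequenceMatcher(None, _normalize(a), _normalize(b)).ratio()


def candidate_matches(names: list, threshold: float = 0.85) -> list:
    """Compare every pair of names and return those similar enough for steward review."""
    pairs = []
    for a, b in combinations(names, 2):
        score = similarity(a, b)
        if score >= threshold:
            pairs.append((a, b, round(score, 2)))
    return pairs


matches = candidate_matches(["Acme Corporation", "ACME  Corporation", "Globex Inc"])
```

Comparing every pair grows quadratically with the number of records, which is why real matching configurations usually restrict comparisons to records that share a blocking key.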

Data quality management

This document provides information about data quality management including tools, strategies, and best practices. It discusses conducting data quality assessments, building a data quality firewall, unifying data management and business intelligence, making business users data stewards, and creating a data governance board as five best practices for data governance and quality management. It also outlines several quality management tools including check sheets, control charts, Pareto charts, scatterplot methods, and Ishikawa diagrams that can be used to determine if a process is in statistical control.
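Control charts test whether a process is in statistical control. As one concrete example, the Python sketch below builds an individuals (XmR) chart: control limits come from a baseline period (mean plus or minus three sigma, with sigma estimated as the average moving range divided by the constant d2 = 1.128), and new points outside the limits are flagged. The baseline data and the clamping of the lower limit at zero (for counts that cannot be negative) are assumptions.

```python
from statistics import mean

D2 = 1.128  # bias-correction constant for moving ranges of two consecutive points


def xmr_limits(baseline: list) -> tuple:
    """Return (lower limit, center line, upper limit) for an individuals chart."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline points")
    center = mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    sigma = mean(moving_ranges) / D2
    lcl = max(0.0, center - 3 * sigma)  # error counts cannot go below zero
    return lcl, center, center + 3 * sigma


def out_of_control(values: list, limits: tuple) -> list:
    """Indexes of points that fall outside the control limits."""
    lcl, _, ucl = limits
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


# Hypothetical daily counts of records failing validation.
baseline = [10, 12, 11, 13, 12, 11, 12, 10]
limits = xmr_limits(baseline)
flagged = out_of_control([11, 14, 25, 9], limits)
```

Computing the limits from a stable baseline, rather than from data that already contains the spike, keeps an outlier from widening the limits that are supposed to detect it.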


06-12-2024-BudapestDataForum-BuildingReal-timePipelineswithFLaNK AIM

by Timothy Spann, Principal Developer Advocate

https://budapestdata.hu/2024/en/

https://budapestml.hu/2024/en/

tim.spann@zilliz.com

https://www.linkedin.com/in/timothyspann/

https://x.com/paasdev

https://github.com/tspannhw

https://www.youtube.com/@flank-stack

milvus, vector database, gen ai, generative ai, deep learning, machine learning, apache nifi, apache pulsar, apache kafka, apache flink




Data Quality & Metadata

- 1. 2 Information Management Components. Menu: Data Quality, Metadata, Quiz, Finished. Click on the component you would like to learn more about, or click here to start.

- 2. Click here to continue or skip introduction

- 3. Menu (as on slide 1): Data Quality, Metadata, Quiz, Finished. Click on the component you would like to learn more about.

- 4. Components of Data Quality: Privacy, Completeness, Currency. Click on the component you would like to learn more about.

- 5. Importance of Data Quality: Data quality matters because decisions are made on the basis of reports. Incomplete or incorrect data leads to wrong decision making.

- 6. Components of Metadata: Business, Technical & Operational, Data Stewardship, Process, Input, Output. Click on the component you would like to learn more about.

- 7. Importance of Metadata: Metadata creates an understanding of the data that is being stored in the database. Without metadata, the organisation cannot monitor its data or share it among users. Continue to Data Quality.

- 8. Quiz: Select the correct option.
  1. Completeness is a component of the Data Quality information management practice. (True / False)
  2. Which of the following is not a component of Metadata? (Currency / Process / Data Stewardship / Technical and Operational)
  3. Incomplete or incorrect data would lead to ________ decision making. (Wrong / Good enough / Correct)
  4. Metadata creates an ___________ of the data that is being stored in the database. (Idea / Understanding / Overview)

- 9. Feedback (correct answer): Well done, your answer is correct! Back to quiz.

- 11. Finished: You have reached the end of the learning pack. We hope you now have a good overview of the 2 Information Management components, Data Quality and Metadata.

- 12. References & License • DAMA International (2010). DAMA Guide to the Data Management Body of Knowledge. Technics Publications, LLC, pp. 1-6, 259-317.
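The slides name the Data Quality and Metadata components without showing how they might be used in practice. Below is a minimal Python sketch, under stated assumptions, of one way they could fit together: a metadata record holding business, technical, stewardship and process metadata is used to monitor two Data Quality components (completeness and currency) and to flag columns that raise privacy concerns. Every class, field, function name and the seven-day refresh threshold here is hypothetical and chosen for illustration; none of it comes from the learning pack or the DAMA guide.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ColumnMetadata:
    """Technical metadata for one column (hypothetical structure)."""
    name: str
    required: bool = True   # completeness rule: must this field be populated?
    personal: bool = False  # privacy flag: does the field hold personal data?


@dataclass
class DatasetMetadata:
    """Hypothetical metadata record touching several metadata components."""
    business_definition: str       # business metadata: what the data means
    columns: list[ColumnMetadata]  # technical & operational metadata
    steward: str                   # data stewardship metadata: who is accountable
    max_age_days: int              # process metadata: assumed refresh interval


def completeness(rows: list[dict], meta: DatasetMetadata) -> float:
    """Share of required fields that are populated (not None and not empty)."""
    required = [c.name for c in meta.columns if c.required]
    total = len(rows) * len(required)
    if total == 0:
        return 1.0  # nothing required, so nothing is missing
    filled = sum(
        1
        for row in rows
        for name in required
        if row.get(name) not in (None, "")
    )
    return filled / total


def is_current(last_updated: date, meta: DatasetMetadata, today: date) -> bool:
    """Currency: data counts as current if refreshed within max_age_days."""
    return today - last_updated <= timedelta(days=meta.max_age_days)


def personal_columns(meta: DatasetMetadata) -> list[str]:
    """Privacy: columns the metadata flags as holding personal data."""
    return [c.name for c in meta.columns if c.personal]


# Hypothetical example data.
meta = DatasetMetadata(
    business_definition="Active customers and their contact details",
    columns=[
        ColumnMetadata("customer_id"),
        ColumnMetadata("email", personal=True),
        ColumnMetadata("phone", required=False, personal=True),
    ],
    steward="Customer data steward",
    max_age_days=7,
)
rows = [
    {"customer_id": 1, "email": "a@example.com", "phone": None},
    {"customer_id": 2, "email": "", "phone": "555-0100"},
]

print(completeness(rows, meta))                                    # 0.75
print(is_current(date(2024, 6, 1), meta, today=date(2024, 6, 5)))  # True
print(personal_columns(meta))                                      # ['email', 'phone']
```

Keeping the rules (which fields are required, which hold personal data, how old the data may be) in the metadata rather than hard-coding them in the checks is one way to illustrate the slide 7 point that metadata lets the organisation monitor its data: the same checks can be reused for any dataset that carries such a record.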