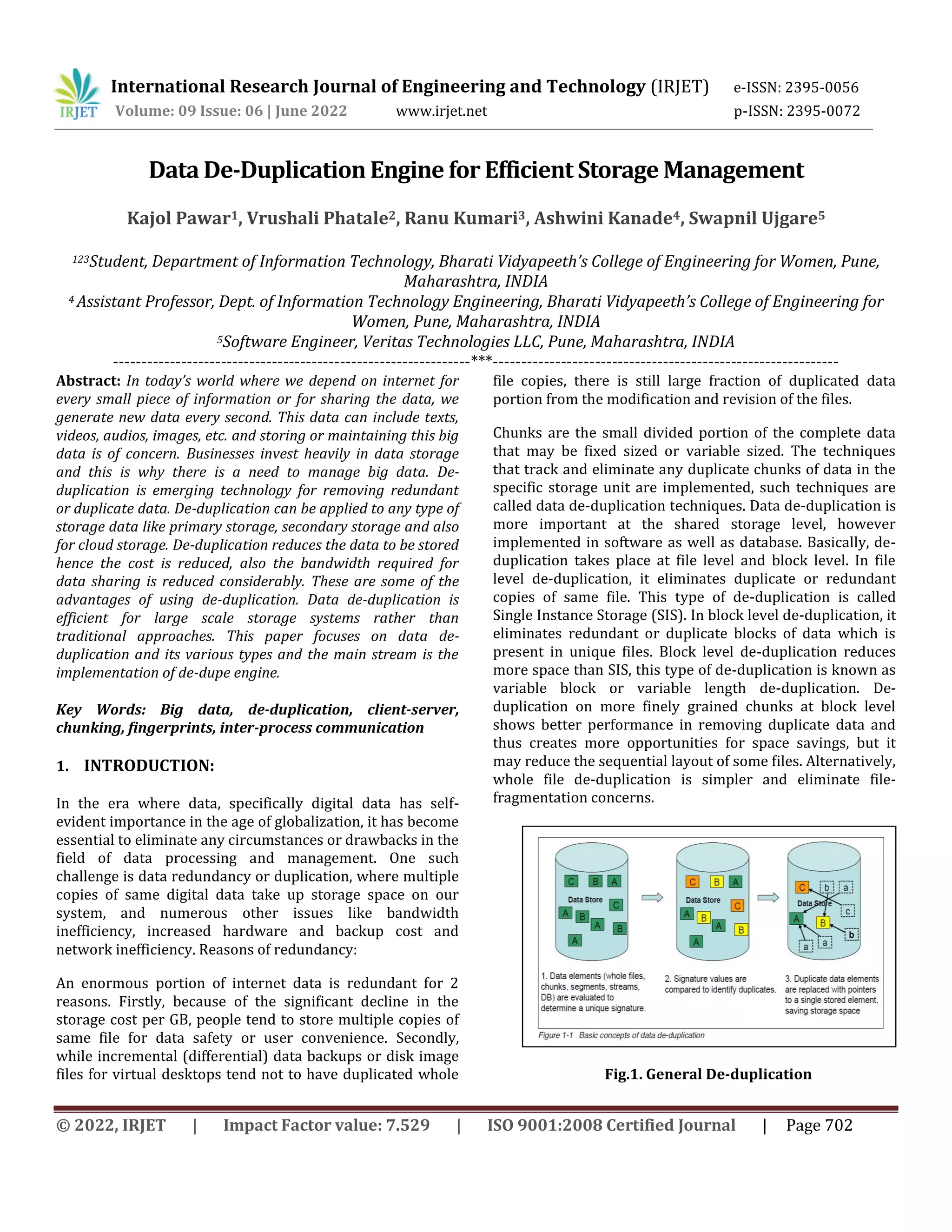

This document describes a data de-duplication engine for efficient storage management. It explains how de-duplication eliminates redundant data and thereby reduces storage requirements, and it outlines the methodology used to implement a client-server engine that identifies unique data chunks, writes metadata to track duplicates, and can restore files using only the metadata. Key aspects covered include source versus target de-duplication, inline versus post-processing de-duplication, file-level versus block-level de-duplication, and the data structures and algorithms used.
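The chunk-and-metadata idea summarized above can be illustrated with a minimal sketch. It is not the engine's actual implementation: the fixed 4-byte block size, the SHA-256 digest, the in-memory `store` dictionary, and the function names `dedupe` and `restore` are all assumptions chosen for illustration. The per-file metadata here is simply the ordered list of chunk digests, which is enough to look each chunk up again in the store.

```python
import hashlib

# Hypothetical block size, kept tiny so the example is easy to follow.
CHUNK_SIZE = 4


def dedupe(data, store, chunk_size=CHUNK_SIZE):
    """Split data into fixed-size blocks and keep each unique block once.

    `store` maps a chunk digest to the chunk bytes (an assumed layout).
    Returns the file's metadata: the ordered list of chunk digests.
    """
    recipe = []
    for offset in range(0, len(data), chunk_size):
        chunk = data[offset:offset + chunk_size]
        digest = hashlib.sha256(chunk).hexdigest()
        # Only the first occurrence of a chunk is written to the store;
        # later duplicates are recorded in the metadata alone.
        store.setdefault(digest, chunk)
        recipe.append(digest)
    return recipe


def restore(recipe, store):
    """Rebuild the original bytes by following the metadata in order."""
    return b"".join(store[digest] for digest in recipe)


store = {}
data = b"ABCDABCDEFGHABCD"
recipe = dedupe(data, store)
restored = restore(recipe, store)
```

In this run the input splits into four blocks, three of which are identical, so the store ends up holding two chunks while the metadata holds four digests. A production engine would likely persist the store and metadata on the server side and might use variable-size chunking; those choices are outside what this sketch shows.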