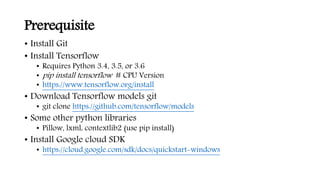

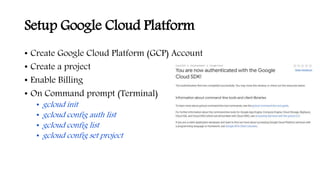

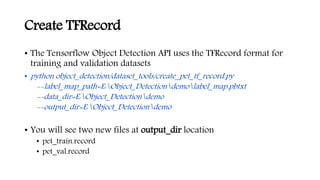

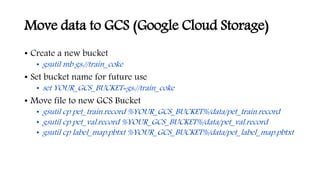

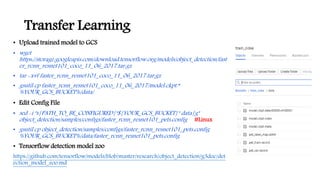

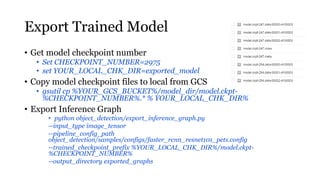

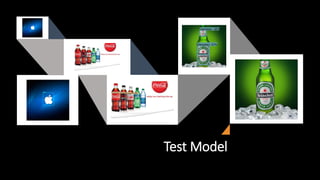

This document outlines the steps to train a custom object detection model using TensorFlow and Google Cloud Platform:

1. Set up the prerequisites, such as TensorFlow and the Google Cloud SDK.
2. Create a training dataset of images and annotations.
3. Convert the data to TFRecord format.
4. Train a model with transfer learning on Cloud ML Engine.
5. Export the trained model.
6. Test the model.
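The TFRecord conversion step is easier to follow once you see what each record holds. Below is a minimal, stdlib-only sketch of the preparation work, assuming Pascal VOC-style annotations in pixel coordinates. It builds a label map in the `.pbtxt` text format used by the TensorFlow Object Detection API, with class IDs starting at 1 because 0 is reserved for background. It then collects the per-image values under the feature keys that API's conversion scripts use, with bounding boxes normalized to [0, 1]. The helper names (`Box`, `build_label_map`, `label_map_pbtxt`, `to_feature_values`) and the example classes are hypothetical. The sketch also leaves out the encoded image bytes (`image/encoded`) and other fields a real conversion script writes.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Box:
    """One annotated object in pixel coordinates (hypothetical helper type)."""
    label: str
    xmin: float
    ymin: float
    xmax: float
    ymax: float


def build_label_map(class_names: List[str]) -> Dict[str, int]:
    """Assign class IDs starting at 1; ID 0 is reserved for the background class."""
    return {name: i + 1 for i, name in enumerate(class_names)}


def label_map_pbtxt(label_map: Dict[str, int]) -> str:
    """Render the label map as `item { id: ... name: ... }` blocks, ordered by ID."""
    items = [
        f"item {{\n  id: {idx}\n  name: '{name}'\n}}"
        for name, idx in sorted(label_map.items(), key=lambda kv: kv[1])
    ]
    return "\n".join(items) + "\n"


def to_feature_values(filename: str, width: int, height: int,
                      boxes: List[Box], label_map: Dict[str, int]) -> dict:
    """Collect the per-image values a TFRecord conversion script would serialize.

    Box corners are divided by the image width/height so they lie in [0, 1].
    Image bytes and format fields are deliberately omitted from this sketch.
    """
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    return {
        "image/filename": filename,
        "image/width": width,
        "image/height": height,
        "image/object/bbox/xmin": [b.xmin / width for b in boxes],
        "image/object/bbox/xmax": [b.xmax / width for b in boxes],
        "image/object/bbox/ymin": [b.ymin / height for b in boxes],
        "image/object/bbox/ymax": [b.ymax / height for b in boxes],
        "image/object/class/text": [b.label for b in boxes],
        "image/object/class/label": [label_map[b.label] for b in boxes],
    }


if __name__ == "__main__":
    labels = build_label_map(["cat", "dog"])
    print(label_map_pbtxt(labels), end="")
    values = to_feature_values(
        "img_001.jpg", width=640, height=480,
        boxes=[Box("dog", 64, 48, 320, 240)], label_map=labels,
    )
    print(values["image/object/bbox/xmin"], values["image/object/class/label"])
```

In an actual pipeline, each list in this dictionary would be wrapped in a `tf.train.Feature` inside a `tf.train.Example`, together with the encoded image bytes. The serialized examples would then be written with `tf.io.TFRecordWriter`. Keeping the normalization separate from the TensorFlow calls makes it easy to check the box arithmetic before generating large record files.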