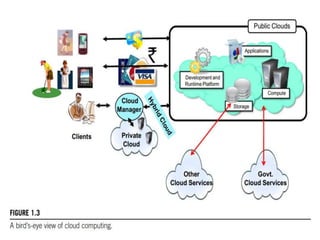

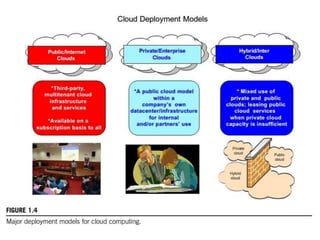

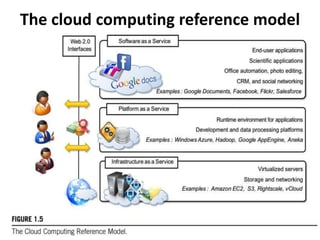

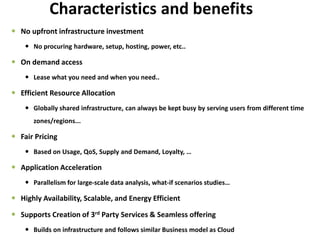

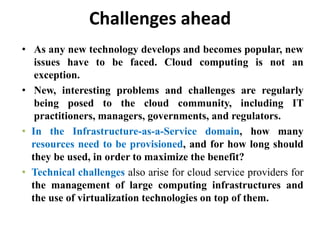

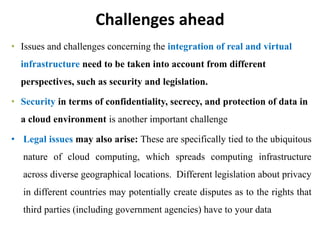

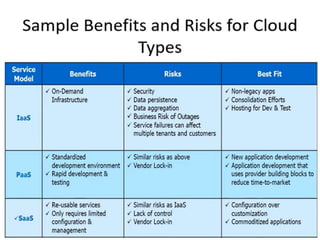

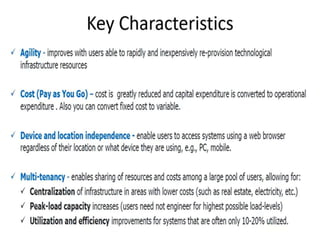

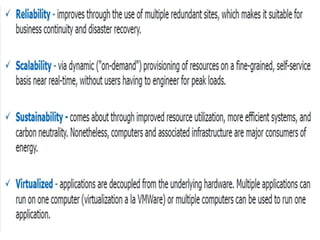

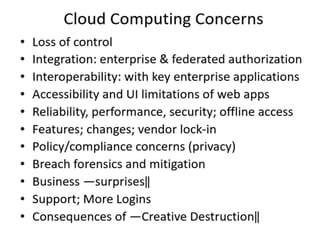

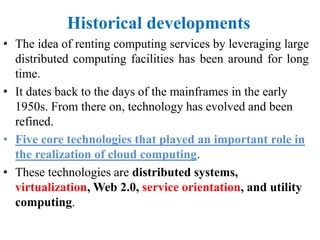

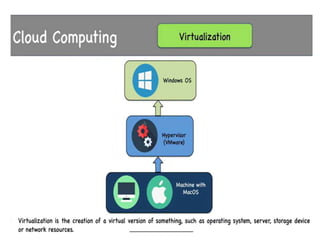

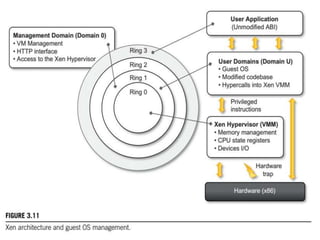

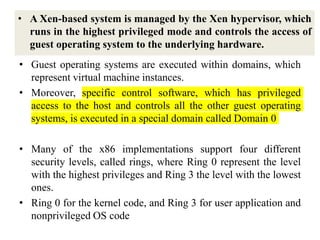

Cloud computing lets users access computing resources such as servers, storage, databases, networking, and software over the internet. These resources are available on demand and can be provisioned quickly with minimal management effort. Cloud services are delivered through three major models: Infrastructure as a Service (IaaS), Platform as a Service (PaaS), and Software as a Service (SaaS). IaaS provides virtualized computing resources, PaaS provides platforms on which to build applications, and SaaS provides ready-to-use applications. Cloud computing offers advantages such as little or no upfront cost, flexibility, scalability, and efficiency. However, security, legal, and technical challenges need to be addressed for cloud computing to reach its full potential.