Chapter 13: here are the PowerPoint slides for this chapter, pictures included.

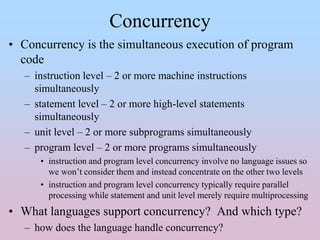

- 1. Concurrency
  - Concurrency is the simultaneous execution of program code:
    - instruction level: 2 or more machine instructions simultaneously
    - statement level: 2 or more high-level statements simultaneously
    - unit level: 2 or more subprograms simultaneously
    - program level: 2 or more programs simultaneously
  - Instruction-level and program-level concurrency involve no language issues, so we won't consider them and instead concentrate on the other two levels
  - Instruction-level and program-level concurrency typically require parallel processing, while statement-level and unit-level concurrency merely require multiprocessing
  - What languages support concurrency, and which type?
    - How does each language handle concurrency?

- 2. Categories of Concurrency
  - Physical concurrency: program code executed in parallel on multiple processors
  - Logical concurrency: program code executed in an interleaved fashion on a single processor, with the OS or the language responsible for switching from one piece of code to another
    - to a programmer, physical and logical concurrency are the same; the language implementor must map logical concurrency onto the underlying hardware
    - a thread of control is the path taken through the code, that is, the sequence of program points reached
  - Unit-level logical concurrency is implemented through the coroutine
    - a form of subroutine, but with a different behavior from the subroutines seen previously
    - as with normal subroutines, only one coroutine executes at a time, but coroutines execute in an interleaved fashion rather than in LIFO order
    - a coroutine can suspend itself to start up another coroutine
      - for instance, a called function may stop to let the calling function execute, and then resume at the same spot in the called function later

- 3. Subprogram-Level Concurrency
  - Task: a program unit that can execute concurrently with another program unit
    - tasks differ from subroutines because
      - they may be started implicitly, whereas a subprogram must be called explicitly
      - a unit that invokes a task does not have to wait for the task to complete but may continue executing
      - control may or may not return to the calling task
    - tasks may communicate through
      - shared memory (non-local variables), parameters, or message passing
    - PL/I was the first language to offer subprogram-level concurrency, via its “call task” and “wait(event)” statements
      - programmers can specify the priority of each task so that waiting routines are prioritized
      - wait forces a routine to wait until the routine it is waiting on has finished
  - Disjoint tasks: tasks that do not affect each other and share no memory
    - most tasks are not disjoint but instead share information
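The task behavior above (the invoker keeps running, then waits for the task to finish) can be sketched in Java with an `ExecutorService`. This is only a rough analogy, not PL/I: `submit` plays the part of “call task” and `Future.get()` the part of “wait(event)”. The class name `TaskDemo` and the summing work are hypothetical.

```java
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

class TaskDemo {
    // Hypothetical example: a task sums 1..n while the caller keeps working.
    static long runTask(int n) {
        ExecutorService pool = Executors.newSingleThreadExecutor();
        try {
            // Rough analog of "call task": the task starts and runs concurrently
            Future<Long> task = pool.submit(() -> {
                long sum = 0;
                for (int i = 1; i <= n; i++) sum += i;
                return sum;
            });

            // The caller does not wait here; this loop stands in for its own work
            long callerWork = 0;
            for (int i = 0; i < 1_000; i++) callerWork += i % 7;

            // Rough analog of "wait(event)": block until the task has finished
            return task.get();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        } catch (ExecutionException e) {
            throw new IllegalStateException(e.getCause());
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println(runTask(100)); // 5050
    }
}
```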

- 4. Example: Card Game
  - Four players, each using the same strategy
  - The card game is simulated as follows:
    - the master unit creates 4 coroutines
    - the master unit initializes each coroutine so that each starts with a different set of cards (perhaps an array generated randomly)
  - The master unit selects one of the 4 coroutines to start the game and resumes it
    - the coroutine runs its routine to decide what it will play and then resumes the next coroutine in order
    - after the 4th coroutine executes its “play”, it resumes the 1st one to start the next turn
      - notice that this is not like a normal function call, where the caller is resumed once the function terminates!
    - this continues until one coroutine wins, at which point that coroutine returns control to the master unit
      - notice that here control always passes around the hands in a fixed order; this is only one form of concurrent control
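Java has no built-in coroutines, so the card game above is sketched here by emulating them: each player is a thread, but a single "baton" passed through semaphores means only one player ever runs at a time, and "resume" becomes releasing the next player's semaphore. The game rules are a hypothetical simplification (a hand is just a card count, each play removes one card, and the first player to empty its hand wins).

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Semaphore;

class CardGame {
    private final int[] cardsLeft;                       // hypothetical: a hand is a card count
    private final Semaphore[] resume;                    // releasing resume[i] resumes player i
    private final Semaphore master = new Semaphore(0);   // releasing it resumes the master unit
    private final List<String> plays = new ArrayList<>();
    private volatile boolean gameOver = false;
    private volatile int winner = -1;

    CardGame(int[] handSizes) {
        cardsLeft = handSizes.clone();
        resume = new Semaphore[handSizes.length];
        for (int i = 0; i < resume.length; i++) resume[i] = new Semaphore(0);
    }

    // The body of one "coroutine"
    private void player(int id) {
        try {
            while (true) {
                resume[id].acquire();                  // suspended until someone resumes us
                if (gameOver) return;                  // the game ended while we were suspended
                cardsLeft[id]--;                       // "play" one card
                plays.add("P" + id);
                if (cardsLeft[id] == 0) {              // this player wins
                    winner = id;
                    gameOver = true;
                    for (Semaphore s : resume) s.release(); // let the others see gameOver and exit
                    master.release();                  // return control to the master unit
                    return;
                }
                resume[(id + 1) % resume.length].release(); // resume the next player in order
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    // The master unit: creates the players, resumes the first one, waits for a winner
    int play(int first) {
        Thread[] players = new Thread[resume.length];
        for (int i = 0; i < players.length; i++) {
            final int id = i;
            players[i] = new Thread(() -> player(id));
            players[i].start();
        }
        try {
            resume[first].release();
            master.acquire();
            for (Thread t : players) t.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return winner;
    }

    String playLog() { return String.join(" ", plays); }

    public static void main(String[] args) {
        CardGame game = new CardGame(new int[] {3, 2, 4, 5});
        System.out.println("winner: P" + game.play(0)); // winner: P1
        System.out.println(game.playLog());             // P0 P1 P2 P3 P0 P1
    }
}
```

Because each player blocks on its own semaphore until resumed, control moves P0, P1, P2, P3, P0, ... rather than returning to a caller, which is the coroutine behavior the slide describes.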

- 5. More on Tasks
  - A heavyweight task executes in its own address space, with its own run-time stack
  - Lightweight tasks share the same address space with each other (each still has its own run-time stack)
    - a lightweight task is often called a thread
  - Non-disjoint tasks must communicate with each other
    - this requires synchronization:
      - cooperative synchronization (producer-consumer relationship)
      - competitive synchronization (access to a shared critical section)
    - synchronization methods:
      - monitors
      - semaphores
      - message passing
  - Without synchronization, shared data can become corrupt
    - here, TOTAL should end up as 8 (if A completes before B fetches TOTAL) or 7 (if B completes before A fetches it); if both tasks fetch TOTAL before either stores its result, one update is lost
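The TOTAL example above can be sketched in Java. The starting values are an assumption taken from the textbook figure, which is not reproduced here: TOTAL starts at 3, task A adds 1, and task B doubles it (consistent with the 8 and 7 above). `runSynchronized` uses `synchronized` methods for competitive synchronization; `lostUpdate` replays one unsynchronized interleaving step by step, without threads, so its result is deterministic.

```java
class Total {
    // Assumed setup (from the figure): TOTAL = 3, A adds 1, B doubles
    private int total = 3;

    // Each read-modify-write runs as one indivisible step
    synchronized void addOne()   { total = total + 1; }
    synchronized void doubleIt() { total = total * 2; }
    synchronized int get()       { return total; }

    // Runs A and B concurrently: the result is 8 (A first) or 7 (B first)
    static int runSynchronized() {
        Total t = new Total();
        Thread a = new Thread(t::addOne);
        Thread b = new Thread(t::doubleIt);
        a.start();
        b.start();
        try {
            a.join();
            b.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return t.get();
    }

    // Without synchronization: both tasks fetch TOTAL before either stores
    static int lostUpdate() {
        int total = 3;
        int fetchedByA = total;   // A fetches 3
        int fetchedByB = total;   // B fetches 3
        total = fetchedByA + 1;   // A stores 4
        total = fetchedByB * 2;   // B stores 6, overwriting A's update
        return total;
    }

    public static void main(String[] args) {
        System.out.println(runSynchronized()); // 7 or 8
        System.out.println(lostUpdate());      // 6
    }
}
```

If the two stores happened in the opposite order, the lost-update result would be 4 instead of 6; with the synchronized methods, neither 4 nor 6 can occur.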

- 6. Liveness and Deadlock
  - These two terms describe whether a concurrent task (or a multitasking system) can make progress
  - A process that is not making progress toward completion may be in a state of:
    - deadlock: the process holds resources other processes need, while those processes hold resources this process needs
    - starvation: the process is continually unable to access a resource because others get to it first
  - Liveness is when a task is in a state that will eventually allow it to complete execution and terminate
    - meaning that the task is not suffering from, and will not suffer from, either deadlock or starvation
    - without a fair selection mechanism for the next concurrent task, a process could easily wind up starving, and without adequate OS protection, a process could wind up in deadlock
      - these issues are studied in operating systems, so we won't discuss them in much more detail in this chapter
  - Note that without concurrency or multitasking, deadlock cannot arise and starvation should not arise
    - unless resources are unavailable (off-line, not functioning)

- 7. Design Issues for Concurrency
  - Unit-level concurrency is supported by synchronization
    - two forms: competitive and cooperative
  - How is synchronization implemented?
    - semaphores
    - monitors
    - message passing
    - threads
  - How and when do tasks begin and end execution?
  - How and when are tasks created (statically or dynamically)?
  - Synchronization governs the execution of coroutines
    - coroutines have these features:
      - multiple entry points
      - a means to maintain status information when inactive
      - invoked by a “resume” instruction (rather than a call)
      - may have “initialization code” that is executed only when the coroutine is created
    - typically, all coroutines are created by a non-coroutine program unit, often called a master unit
    - if a coroutine reaches the end of its code, control is transferred to the master unit
      - otherwise, control is determined by one coroutine resuming another

- 8. Semaphores
  - A data structure that provides synchronization through mutually exclusive access
    - in its simplest (binary) form, a semaphore just stores an int value: 1 means the shared resource is available, 0 means it is unavailable
    - the semaphore provides two operations: wait and release
      - when a process needs to access the shared resource, it executes wait
      - when the process is done with the shared resource, it executes release
    - for the semaphore to work, wait and release cannot be interrupted (e.g., by a task switch)
      - so wait and release are often implemented with the machine's atomic instructions

```
void wait(semaphore s) {
    if (s.value > 0)
        s.value--;
    else
        place the calling process in a wait queue, s.queue;
}

void release(semaphore s) {
    if (s.queue is not empty)
        wake up the first process in s.queue;
    else
        s.value++;
}
```

A simpler form of the semaphore uses a while loop instead of a queue; that is, the process stays in a busy-wait loop as long as s.value <= 0.
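The wait/release pseudocode above can be sketched as a counting semaphore built on a Java monitor. Two assumptions to note: `Object.wait()` is final in Java, so the operations are named `semWait` and `semRelease` here, and the object's built-in wait set stands in for `s.queue`, although Java does not promise FIFO wake-up order the way the slide's queue does. The `demo` method is a hypothetical usage example.

```java
class CountingSemaphore {
    private int value;

    CountingSemaphore(int initial) {
        if (initial < 0) throw new IllegalArgumentException("initial < 0");
        value = initial;
    }

    // wait: take a permit if one is free; otherwise block in this object's wait set
    synchronized void semWait() throws InterruptedException {
        while (value == 0) {
            wait();          // the loop also guards against spurious wake-ups
        }
        value--;
    }

    // release: return the permit and wake one blocked thread, if any
    synchronized void semRelease() {
        value++;
        notify();
    }

    // Hypothetical demo: two threads each add 10,000 to a shared counter inside
    // a critical section protected by a binary semaphore (initial value 1)
    static int demo() {
        CountingSemaphore mutex = new CountingSemaphore(1);
        int[] counter = {0};
        Runnable worker = () -> {
            for (int i = 0; i < 10_000; i++) {
                try {
                    mutex.semWait();
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                    return;
                }
                try {
                    counter[0]++;    // critical section
                } finally {
                    mutex.semRelease();
                }
            }
        };
        Thread t1 = new Thread(worker);
        Thread t2 = new Thread(worker);
        t1.start();
        t2.start();
        try {
            t1.join();
            t2.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return counter[0];
    }

    public static void main(String[] args) {
        System.out.println(demo()); // 20000
    }
}
```

Unlike the slide's release, which hands the permit directly to the first waiter, this version increments `value` and lets the woken thread decrement it; the count of permits is the same, but a newly arriving thread may take the permit first.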

- 9. Using the Semaphore

Producer-consumer code, first version:

```
semaphore full, empty;
full.value = 0;
empty.value = BUFFER_LENGTH;  // must be nonzero, or producer and consumer both block forever

// producer code:
while (1) {
    // produce value
    wait(empty);
    insert(value);
    release(full);
}

// consumer code:
while (1) {
    wait(full);
    retrieve(value);
    release(empty);
    // consume value
}
```

Second version:

```
semaphore access, full, empty;
access.value = 1;
full.value = 0;
empty.value = BUFFER_LENGTH;

// producer code:
while (1) {
    // produce value
    wait(empty);
    wait(access);
    insert(value);
    release(access);
    release(full);
}

// consumer code:
while (1) {
    wait(full);
    wait(access);
    retrieve(value);
    release(access);
    release(empty);
    // consume value
}
```

In the first version, the consumer must wait until the producer produces (cooperative synchronization). In the second version, as long as a product is available, any consumer can consume it, and any producer can produce if there is room in the buffer; the access semaphore adds competitive synchronization.

NOTE: access ensures that two producers or two consumers never access the buffer at the same time.
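The second producer-consumer version above can be sketched with `java.util.concurrent.Semaphore`, where `acquire` plays the role of wait and `release` the role of release. The buffer length of 4, the circular-array buffer, and the `sumThroughBuffer` driver (one producer sending 1..n to one consumer) are assumptions for illustration.

```java
import java.util.concurrent.Semaphore;

class SemaphoreBuffer {
    static final int BUFFER_LENGTH = 4;   // assumed buffer size

    private final int[] buffer = new int[BUFFER_LENGTH];
    private int nextIn = 0, nextOut = 0;

    private final Semaphore access = new Semaphore(1);             // mutual exclusion
    private final Semaphore full = new Semaphore(0);               // filled slots
    private final Semaphore empty = new Semaphore(BUFFER_LENGTH);  // free slots

    void produce(int value) throws InterruptedException {
        empty.acquire();               // wait(empty)
        access.acquire();              // wait(access)
        buffer[nextIn] = value;        // insert(value)
        nextIn = (nextIn + 1) % BUFFER_LENGTH;
        access.release();              // release(access)
        full.release();                // release(full)
    }

    int consume() throws InterruptedException {
        full.acquire();                // wait(full)
        access.acquire();              // wait(access)
        int value = buffer[nextOut];   // retrieve(value)
        nextOut = (nextOut + 1) % BUFFER_LENGTH;
        access.release();              // release(access)
        empty.release();               // release(empty)
        return value;
    }

    // Hypothetical driver: one producer sends 1..n, one consumer adds them up
    static long sumThroughBuffer(int n) {
        SemaphoreBuffer buf = new SemaphoreBuffer();
        long[] total = {0};
        Thread producer = new Thread(() -> {
            try {
                for (int i = 1; i <= n; i++) buf.produce(i);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        Thread consumer = new Thread(() -> {
            try {
                for (int i = 1; i <= n; i++) total[0] += buf.consume();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        producer.start();
        consumer.start();
        try {
            producer.join();
            consumer.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return total[0];
    }

    public static void main(String[] args) {
        System.out.println(sumThroughBuffer(1000)); // 500500
    }
}
```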

- 10. Evaluation of Semaphores
  - Binary semaphores were first implemented in PL/I, which was the first language to offer concurrency
    - the binary semaphore version had no queue, so a waiting coroutine might not gain access in a timely fashion
      - in fact, there was no guarantee that a coroutine would not starve
      - so the semaphore's use in PL/I was limited
    - ALGOL 68 offered compound-level concurrency and had a built-in data type called sema (semaphore)
  - Unfortunately, semaphore use can lead to disastrous results if not checked carefully
    - misuse can lead to deadlock or can permit access that is not mutually exclusive (as shown in the previous slide's notes page)
      - in general, there is no way to check semaphore usage for correctness, so offering built-in semaphores in a language does not necessarily help matters
    - instead, we now turn to a better synchronization construct: the monitor

- 11. Monitors
  - Introduced in Concurrent Pascal (1975) and later used in Modula and other languages
    - Concurrent Pascal is Pascal plus classes (from Simula 67), tasks (for concurrency), and monitors
    - the general form of the Concurrent Pascal monitor is given below
  - The monitor is an abstract data type (ADT), but one that allows shared access to its data structure through synchronization
  - One can use the monitor to implement cooperative or competitive synchronization without semaphores

```
type monitor_name = monitor(params)
    --- declaration of shared vars ---
    --- definitions of local procedures ---
    --- definitions of exported procedures ---
    --- initialization code ---
end
```

Exported procedures are those that can be referenced externally, i.e., the public portion of the monitor.

Because the monitor is built into the language itself as a program unit type, the language enforces mutually exclusive access to its data, so it cannot be misused the way semaphores can, at least for competitive synchronization.
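In Java, a class whose exported methods are all `synchronized` behaves much like a monitor: at most one thread at a time executes inside it. The sketch below labels its parts after the Concurrent Pascal template; the page-hit counter is a hypothetical example. One difference worth noting is that Java does not enforce the monitor discipline: the programmer must remember to mark every exported method `synchronized`.

```java
import java.util.HashMap;
import java.util.Map;

class HitCounter {
    // --- declaration of shared vars (private, so reachable only through the monitor) ---
    private final Map<String, Integer> hits = new HashMap<>();
    private int total;

    // --- initialization code ---
    HitCounter() {
        total = 0;
    }

    // --- local procedure (not exported; only called from synchronized methods) ---
    private void bump(String page) {
        hits.merge(page, 1, Integer::sum);
        total++;
    }

    // --- exported procedures (the public portion of the monitor) ---
    public synchronized void record(String page) { bump(page); }
    public synchronized int hitsFor(String page) { return hits.getOrDefault(page, 0); }
    public synchronized int total() { return total; }

    // Hypothetical demo: two threads record 1,000 hits each on the same page
    static int demo() {
        HitCounter counter = new HitCounter();
        Runnable visitor = () -> {
            for (int i = 0; i < 1_000; i++) counter.record("index");
        };
        Thread a = new Thread(visitor);
        Thread b = new Thread(visitor);
        a.start();
        b.start();
        try {
            a.join();
            b.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return counter.hitsFor("index");
    }

    public static void main(String[] args) {
        System.out.println(demo()); // 2000
    }
}
```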

- 12. Competitive and Cooperative Synchronization
  - Access to the shared data of a monitor is automatically synchronized through the monitor
    - competitive synchronization needs no further mechanisms
    - cooperative synchronization requires further communication, so that one task can alert another task to continue once it has performed its operation
  - Here, different tasks communicate through a shared buffer, inserting data into and removing data from the buffer
  - Through the use of continue(process), one process can alert another when the datum is ready
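The cooperative synchronization described above can be sketched in Java with a lock and two condition variables. As a rough, non-exact analogy, `Condition.await()` plays the role of delay and `Condition.signal()` the role of continue in a Concurrent Pascal monitor (unlike continue, `signal` does not hand control over immediately). The one-slot `Mailbox` class is a hypothetical example.

```java
import java.util.concurrent.locks.Condition;
import java.util.concurrent.locks.Lock;
import java.util.concurrent.locks.ReentrantLock;

class Mailbox {
    private final Lock lock = new ReentrantLock();          // monitor-style mutual exclusion
    private final Condition notFull = lock.newCondition();  // producers wait here
    private final Condition notEmpty = lock.newCondition(); // consumers wait here
    private Integer item = null;                            // null means the slot is empty

    void put(int value) throws InterruptedException {
        lock.lock();
        try {
            while (item != null) notFull.await();   // wait until the slot is free
            item = value;
            notEmpty.signal();                      // alert a waiting consumer
        } finally {
            lock.unlock();
        }
    }

    int take() throws InterruptedException {
        lock.lock();
        try {
            while (item == null) notEmpty.await();  // wait until a datum is ready
            int value = item;
            item = null;
            notFull.signal();                       // alert a waiting producer
            return value;
        } finally {
            lock.unlock();
        }
    }

    // Hypothetical driver: a producer thread passes 1..n to the calling thread
    static long transfer(int n) {
        Mailbox box = new Mailbox();
        Thread producer = new Thread(() -> {
            try {
                for (int i = 1; i <= n; i++) box.put(i);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        producer.start();
        long sum = 0;
        try {
            for (int i = 1; i <= n; i++) sum += box.take();
            producer.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return sum;
    }

    public static void main(String[] args) {
        System.out.println(transfer(100)); // 5050
    }
}
```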

- 13. Message Passing
  - While the monitor approach is safer than semaphores, it does not work for a concurrent system with distributed memory
    - message passing solves this problem
  - Message passing uses a transmission from one process to another called a rendezvous
    - either synchronous or asynchronous message passing can be used
      - both approaches have been implemented in Ada
        - synchronous message passing in Ada 83
        - asynchronous message passing in Ada 95
    - both of these are rather complex, so we are going to skip them; the message passing model is also seen in OOP, so you should be familiar with the idea even if you do not understand the implementation
      - you can explore the details on pages 591-598 if you wish
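The message passing idea above can be sketched in Java with blocking queues used as channels; this is not Ada's rendezvous, only an analogy. A `SynchronousQueue` has no capacity, so `put` blocks until another thread calls `take`, roughly like synchronous message passing, while a `LinkedBlockingQueue` lets the sender continue at once, roughly like asynchronous message passing. The `exchange` helper is hypothetical.

```java
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.SynchronousQueue;

class MessagePassing {
    // The calling thread sends each message; a receiver thread collects them.
    // The two threads share nothing except the channel.
    static String exchange(BlockingQueue<String> channel, String... messages) {
        StringBuilder received = new StringBuilder();   // read only after join()
        Thread receiver = new Thread(() -> {
            try {
                for (int i = 0; i < messages.length; i++) {
                    received.append(channel.take());    // blocks until a message arrives
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        receiver.start();
        try {
            for (String m : messages) {
                // SynchronousQueue: waits for the receiver; LinkedBlockingQueue: returns at once
                channel.put(m);
            }
            receiver.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return received.toString();
    }

    public static void main(String[] args) {
        System.out.println(exchange(new SynchronousQueue<>(), "a", "b", "c"));     // abc
        System.out.println(exchange(new LinkedBlockingQueue<>(), "x", "y", "z")); // xyz
    }
}
```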

- 14. Threads
  - The concurrent unit in Java and C# is the thread
    - threads are lightweight tasks (as opposed to Ada's heavyweight tasks)
    - threads in the same program share an address space, but each thread has its own run-time stack and local variables
  - Java threads have at least two methods
    - run: describes the actions of the thread
    - start: starts the thread as a concurrent unit, but control also returns immediately to the caller, which continues executing (somewhat like fork in Unix)
  - Every Java program runs in at least one thread, the one executing main
    - to define your own thread, extend the Thread class (or implement the Runnable interface and pass it to a Thread)
  - If the program has multiple threads, a scheduler must decide which thread runs at any given time
    - different OSs schedule threads in different ways, so the order in which threads execute is somewhat unpredictable
  - Additional thread methods include:
    - yield (voluntarily give up the processor)
    - sleep (block itself for a given number of milliseconds)
    - join (wait for another thread to terminate)
    - stop, suspend, and resume (now deprecated because they are unsafe)
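The run/start/join methods above can be sketched as follows, showing both ways of supplying a thread's `run` method: subclassing `Thread` and passing a `Runnable`. The class names and the arithmetic the threads perform are hypothetical.

```java
// Style 1: subclass Thread and override run
class Counter extends Thread {
    private final int limit;
    private long sum;                  // written by this thread, read after join()

    Counter(int limit) { this.limit = limit; }

    @Override
    public void run() {                // run: the actions of the thread
        for (int i = 1; i <= limit; i++) sum += i;
    }

    long sum() { return sum; }
}

class ThreadDemo {
    static long runBoth() {
        Counter c = new Counter(100);                 // sums 1..100
        long[] product = {1};
        Thread r = new Thread(() -> {                 // style 2: pass a Runnable
            for (int i = 1; i <= 10; i++) product[0] *= i;
        });

        c.start();                                    // start returns immediately,
        r.start();                                    // so the caller keeps running
        try {
            c.join();                                 // join: wait for each thread to end
            r.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return c.sum() + product[0];                  // 5050 + 3628800
    }

    public static void main(String[] args) {
        System.out.println(runBoth()); // 3633850
    }
}
```

Calling `run()` directly instead of `start()` would execute the same code in the caller's own thread, with no concurrency at all.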

- 15. Synchronization With Threads
  - Competitive synchronization is implemented by
    - specifying that one method's code must run completely before a competitor's runs
    - this is done with the synchronized modifier
      - in the code below, the two methods are synchronized for access to buf
  - Cooperative synchronization uses the methods wait, notify, and notifyAll of the Object class
    - notify wakes one (arbitrarily chosen) thread waiting on the object to tell it that an event has occurred; notifyAll wakes all threads in the object's wait list
    - wait puts a thread to sleep until it is notified

```java
class ManageBuffer {
    private int[] buf = new int[100];
    …
    public synchronized void deposit(int item) {…}
    public synchronized int fetch() {…}
    …
}
```

- 16. Partial Example

```java
class Queue {
    private int[] queue;
    private int nextIn, nextOut, filled, size;
    // constructor omitted

    public synchronized void deposit(int item) {
        try {
            while (filled == size)
                wait();                          // buffer full: wait for a fetch
            queue[nextIn] = item;
            nextIn = (nextIn + 1) % size;        // wrap around using 0-based indexes
            filled++;
            notifyAll();                         // wake threads waiting to fetch
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();  // preserve the interrupt status
        }
    }

    public synchronized int fetch() {
        int item = 0;
        try {
            while (filled == 0)
                wait();                          // buffer empty: wait for a deposit
            item = queue[nextOut];
            nextOut = (nextOut + 1) % size;
            filled--;
            notifyAll();                         // wake threads waiting to deposit
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return item;
    }
} // end class
```

We would create Producer and Consumer classes that extend Thread and contain the Queue as a shared data structure (create a single Queue object and use it when initializing both the Producer object and the Consumer object). See pages 603-606 for the full example.
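The Producer/Consumer arrangement described above can be sketched as a complete program. This is not the book's full example: the constructor, the `Producer`, `Consumer`, and `QueueDemo` classes, and the buffer size of 10 are assumptions, and here `deposit` and `fetch` declare `InterruptedException` instead of catching it, so an interrupted thread simply stops.

```java
class Queue {
    private final int[] queue;
    private final int size;
    private int nextIn = 0, nextOut = 0, filled = 0;

    Queue(int size) {                       // assumed constructor
        this.size = size;
        queue = new int[size];
    }

    public synchronized void deposit(int item) throws InterruptedException {
        while (filled == size) wait();      // buffer full: wait for a fetch
        queue[nextIn] = item;
        nextIn = (nextIn + 1) % size;
        filled++;
        notifyAll();                        // wake threads waiting to fetch
    }

    public synchronized int fetch() throws InterruptedException {
        while (filled == 0) wait();         // buffer empty: wait for a deposit
        int item = queue[nextOut];
        nextOut = (nextOut + 1) % size;
        filled--;
        notifyAll();                        // wake threads waiting to deposit
        return item;
    }
}

class Producer extends Thread {
    private final Queue buffer;
    private final int count;
    Producer(Queue buffer, int count) { this.buffer = buffer; this.count = count; }

    @Override
    public void run() {
        try {
            for (int i = 1; i <= count; i++) buffer.deposit(i);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }
}

class Consumer extends Thread {
    private final Queue buffer;
    private final int count;
    private long total;                     // read by the caller only after join()
    Consumer(Queue buffer, int count) { this.buffer = buffer; this.count = count; }

    @Override
    public void run() {
        try {
            for (int i = 0; i < count; i++) total += buffer.fetch();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    long total() { return total; }
}

class QueueDemo {
    static long run(int count) {
        Queue shared = new Queue(10);       // one Queue object shared by both threads
        Producer p = new Producer(shared, count);
        Consumer c = new Consumer(shared, count);
        p.start();
        c.start();
        try {
            p.join();
            c.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return c.total();
    }

    public static void main(String[] args) {
        System.out.println(run(1000)); // 500500
    }
}
```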

- 17. C# Threads
  - A modest improvement over Java threads
    - any method can run in its own thread, by creating a Thread object
    - Thread objects are instantiated with a ThreadStart delegate, which is passed the method that implements the action of the new Thread object
  - C#, like Java, has methods for Start, Join, and Sleep, and it includes an Abort method that makes thread termination superior to Java's
  - In C#, threads can be synchronized by
    - using the Monitor class (to create an ADT with a critical section, as in Concurrent Pascal)
    - using the Interlocked class (used only when synchronizing access to a datum that is to be incremented or decremented)
    - using the lock statement (to mark access to a critical section inside a thread)
  - C# threads are not as flexible as Ada tasks, but they are simpler to use and implement

- 18. Statement-Level Concurrency
  - From a language designer's viewpoint, it is important to have constructs that let a programmer tell the compiler how a program can be mapped onto a multiprocessor
    - there are many ways to parallelize code; the book only covers methods for a SIMD architecture
  - High-Performance FORTRAN is an extension to FORTRAN 90 that allows programmers to specify statement-level concurrency
    - PROCESSORS is a specification that describes the number of processors available for the program; it is used with other specifications to tell the compiler how data is to be distributed
    - the DISTRIBUTE statement specifies what data is to be distributed (e.g., an array)
    - the ALIGN statement relates the distribution of arrays to each other
    - the FORALL statement lists those statements that can be executed concurrently
      - details can be found on pages 609-610
  - Other languages are available to implement statement-level concurrency, such as Cn (a variation of C), Parallaxis-III (a variation of Modula-2), and Vector Pascal
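Java has no FORALL statement, but a parallel `IntStream` expresses a similar idea, sketched below as an analogy rather than HPF itself: every iteration is independent, so the runtime may spread them across processors. The `vectorAdd` method is hypothetical and assumes both arrays have the same length.

```java
import java.util.Arrays;
import java.util.stream.IntStream;

class ForallDemo {
    // Rough analog of the HPF statement:  FORALL (I = 1:N) C(I) = A(I) + B(I)
    // Assumes a and b have the same length.
    static int[] vectorAdd(int[] a, int[] b) {
        int[] c = new int[a.length];
        IntStream.range(0, a.length)
                 .parallel()                          // iterations may run concurrently
                 .forEach(i -> c[i] = a[i] + b[i]);   // each writes a distinct element
        return c;
    }

    public static void main(String[] args) {
        int[] c = vectorAdd(new int[] {1, 2, 3, 4}, new int[] {10, 20, 30, 40});
        System.out.println(Arrays.toString(c)); // [11, 22, 33, 44]
    }
}
```

Because each iteration writes a different element of `c`, no synchronization is needed; the programmer, not the compiler, is asserting that the iterations are independent, much as with FORALL.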