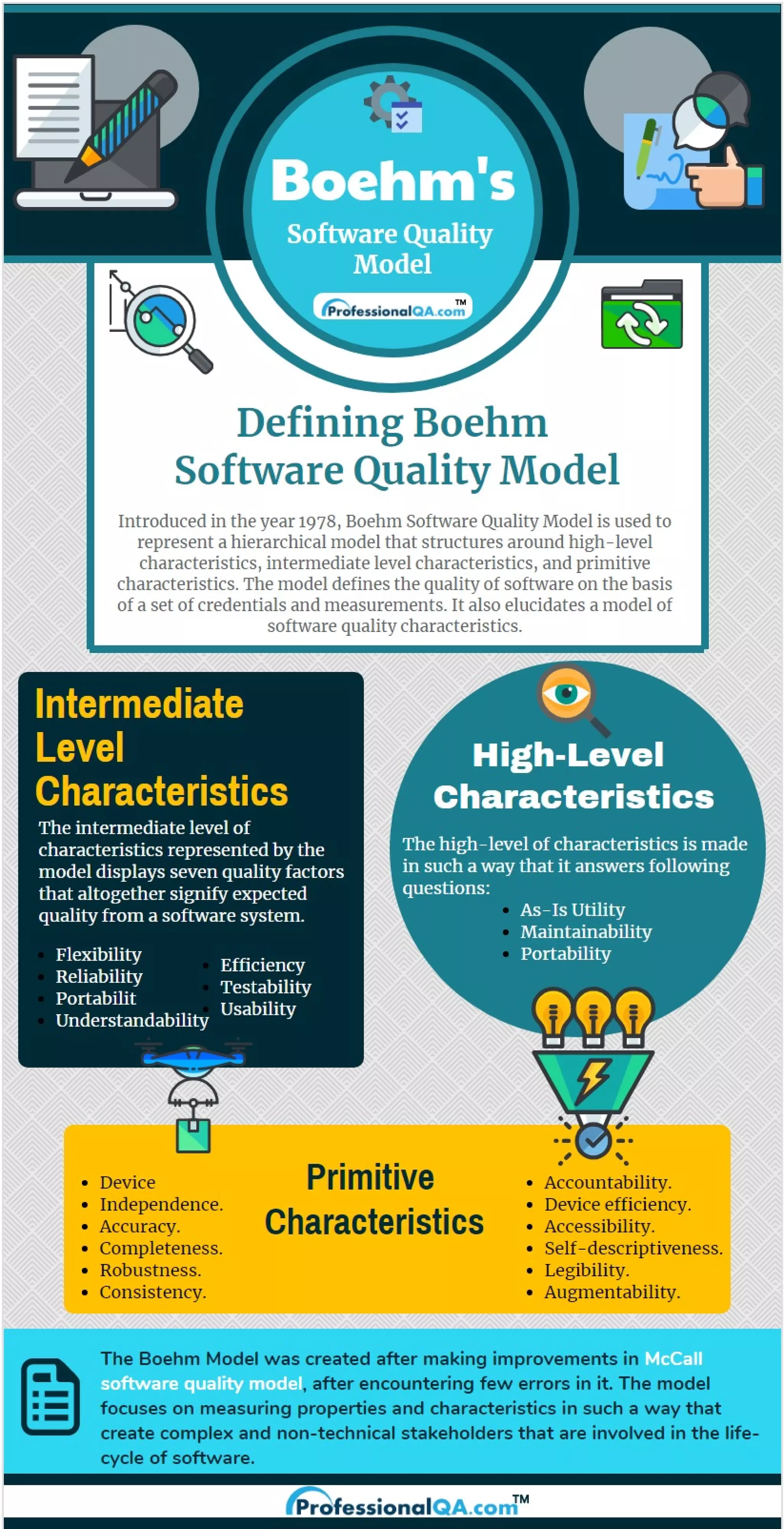

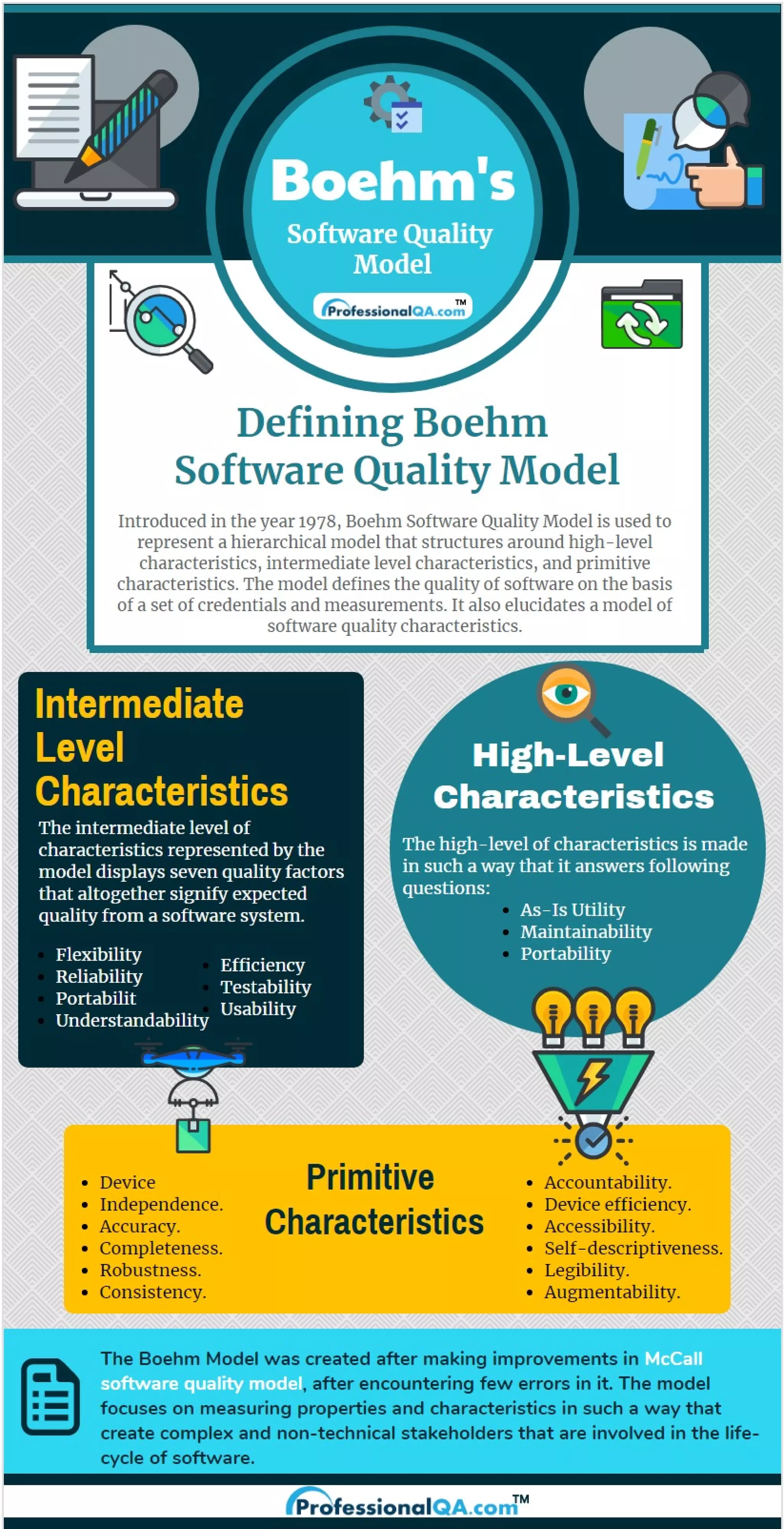

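The Boehm Software Quality Model is one of the early hierarchical models of software quality. Introduced by Barry Boehm and colleagues in 1978, it describes a product's quality through three high-level characteristics: as-is utility (how usable the product is in its current form), maintainability, and portability. Learn more: www.professionalqa.com/boehm-software-quality-model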