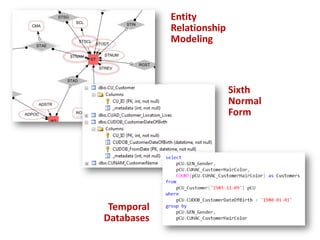

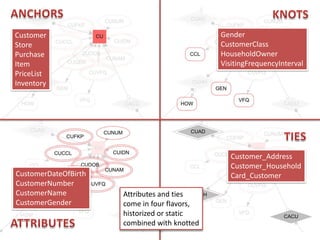

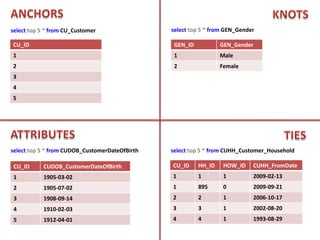

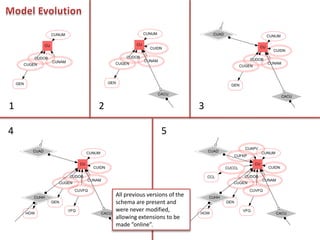

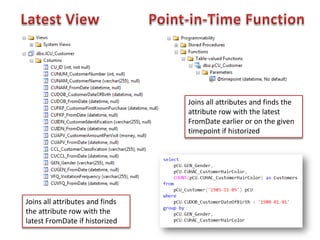

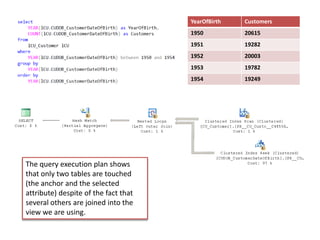

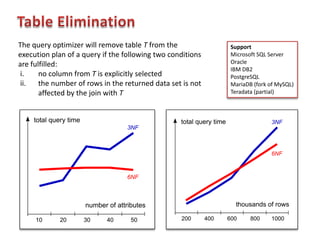

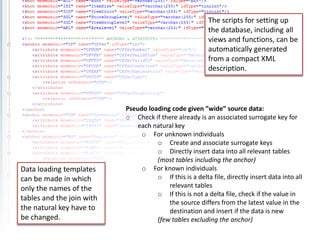

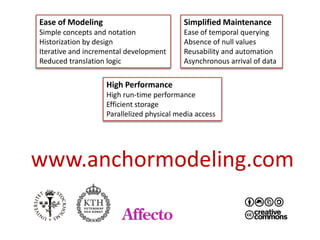

This presentation introduces an agile modeling technique that uses the sixth normal form (6NF) to manage data that evolves both structurally and temporally. It covers entity-relationship modeling, attributes, and query execution, and emphasizes ease of modeling, simplified maintenance, and high performance. It also shows how temporal data can be handled efficiently by combining historical and current attributes.
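As a rough illustration of the idea of historical and current attribute values, the following minimal sketch stores an entity's identity in one table and a time-stamped attribute in a separate table, in the spirit of 6NF. The table and column names (`actor`, `actor_name`, `changed_at`) and the helper functions are hypothetical and are not taken from the slides; SQLite is used only to keep the example self-contained.

```python
import sqlite3


def build_demo():
    """Create an in-memory database with one identity table and one
    historized attribute table (all names are illustrative assumptions)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        -- Identity only: one row per entity, no descriptive columns.
        CREATE TABLE actor (
            actor_id INTEGER PRIMARY KEY
        );
        -- One attribute per table; each change adds a new row.
        CREATE TABLE actor_name (
            actor_id   INTEGER NOT NULL REFERENCES actor(actor_id),
            name       TEXT    NOT NULL,
            changed_at TEXT    NOT NULL,  -- ISO 8601 date string
            PRIMARY KEY (actor_id, changed_at)
        );
        """
    )
    conn.execute("INSERT INTO actor VALUES (1)")
    conn.executemany(
        "INSERT INTO actor_name VALUES (?, ?, ?)",
        [
            (1, "Old Name", "2001-01-01"),
            (1, "New Name", "2005-06-15"),
        ],
    )
    return conn


def name_as_of(conn, actor_id, as_of):
    """Return the name in effect at the given ISO date, or None if the
    attribute had no value yet."""
    row = conn.execute(
        """
        SELECT name
        FROM actor_name
        WHERE actor_id = ? AND changed_at <= ?
        ORDER BY changed_at DESC
        LIMIT 1
        """,
        (actor_id, as_of),
    ).fetchone()
    return row[0] if row else None


if __name__ == "__main__":
    conn = build_demo()
    print(name_as_of(conn, 1, "2003-01-01"))  # historical value
    print(name_as_of(conn, 1, "9999-12-31"))  # current value
```

Because every change is a new row, the current value is simply the most recent one, and a value at any earlier point in time is found with the same query and a different date. Adding a new attribute later means adding a new table rather than altering an existing one, which is one way to read the "structurally evolving" part of the title.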

![AN AGILE MODELING TECHNIQUE USING THE SIXTH NORMAL FORM

FOR STRUCTURALLY AND TEMPORALLY EVOLVING DATA

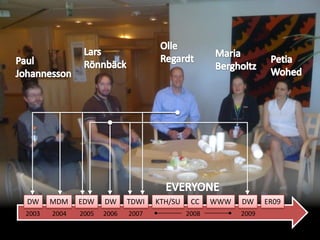

Lars Rönnbäck

[ER09]](https://image.slidesharecdn.com/anchormodelinger09presentation-100909130056-phpapp02/75/Anchor-Modeling-1-2048.jpg)