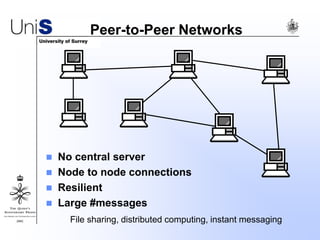

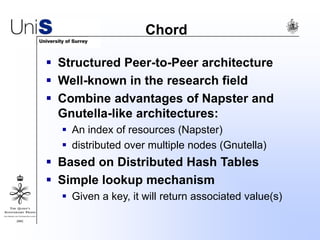

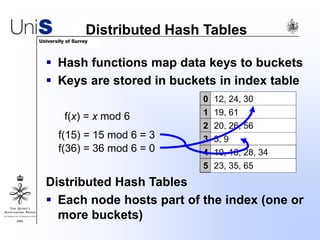

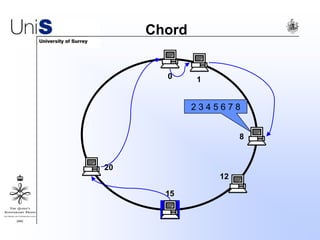

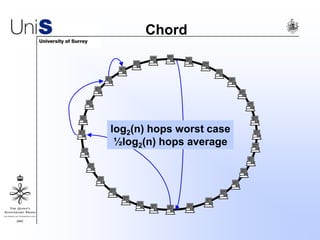

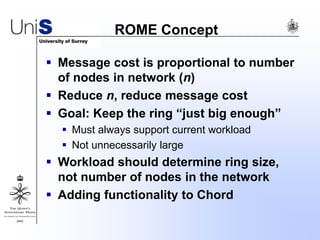

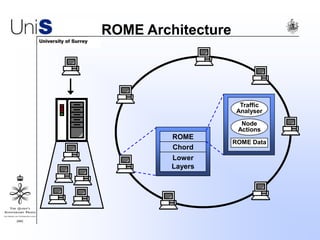

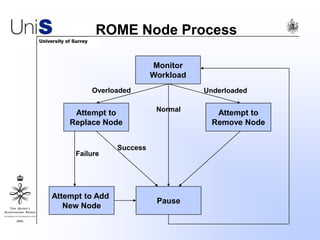

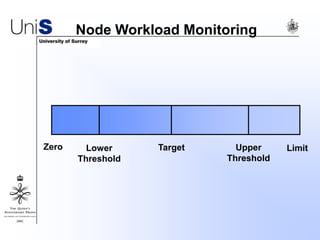

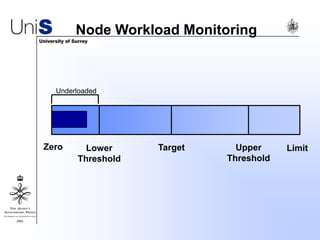

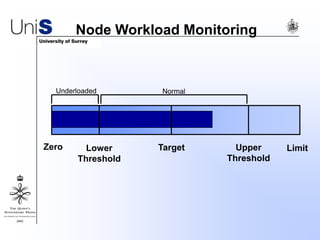

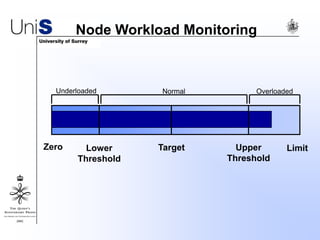

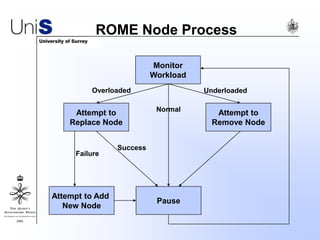

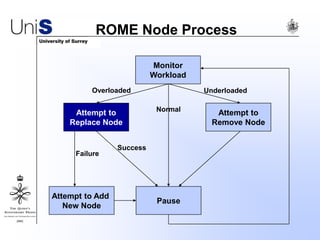

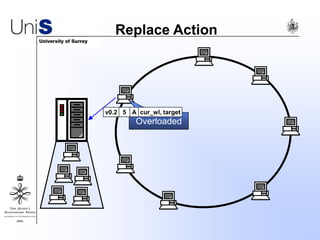

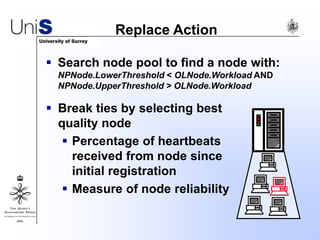

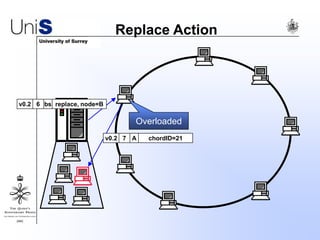

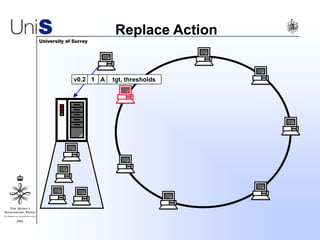

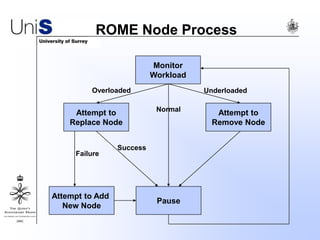

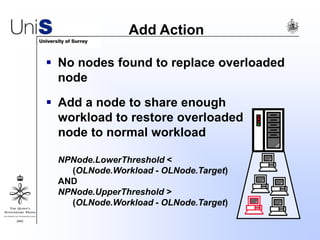

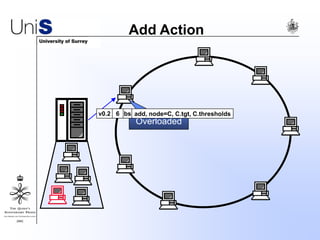

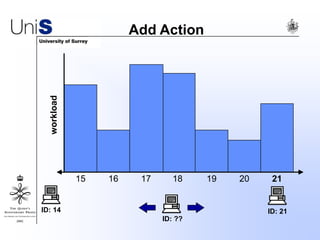

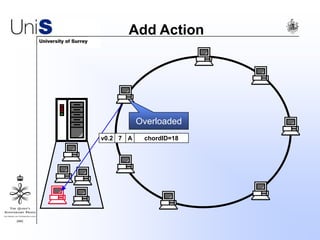

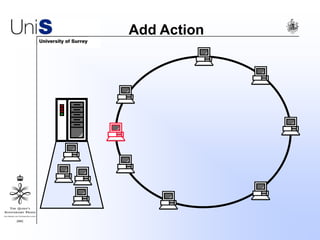

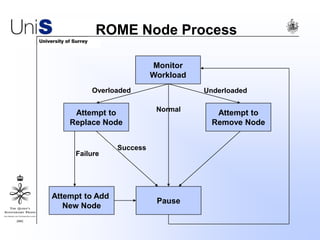

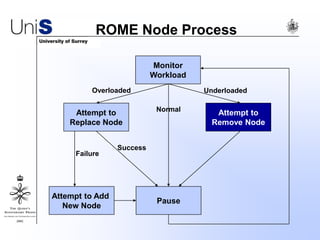

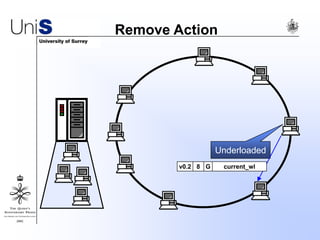

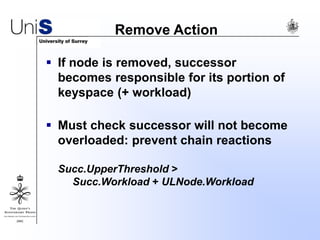

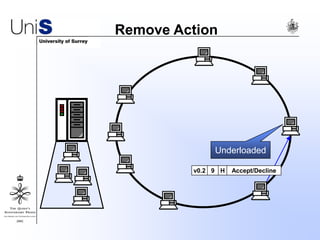

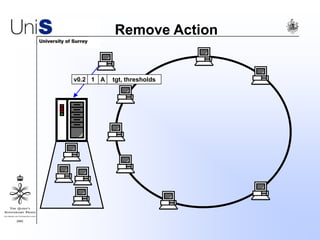

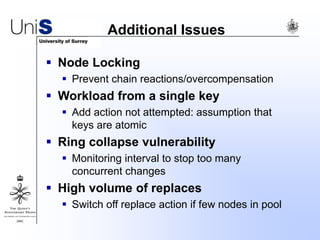

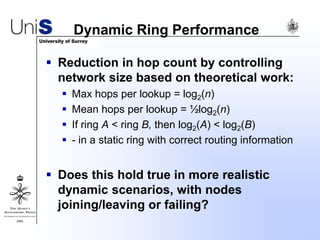

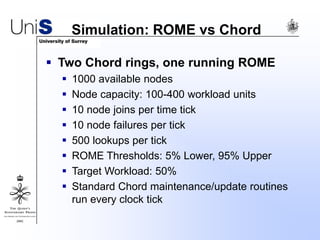

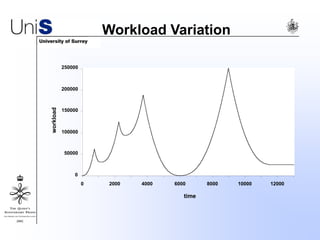

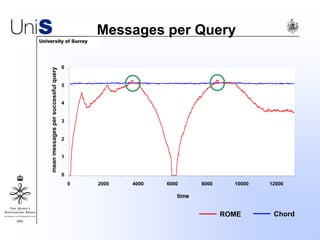

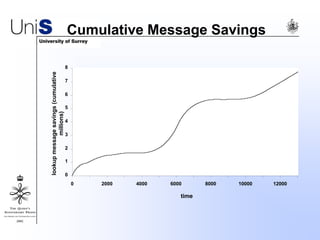

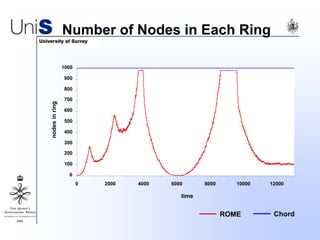

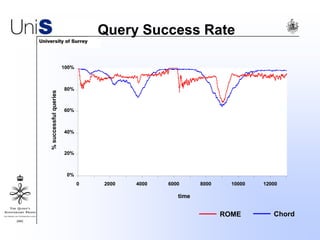

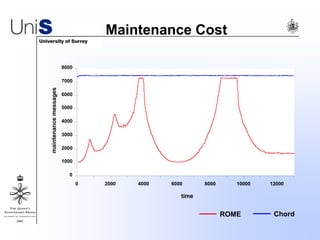

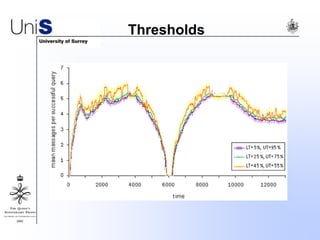

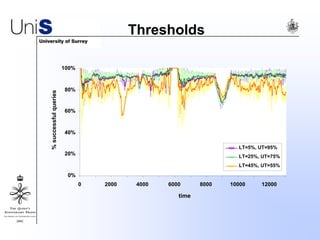

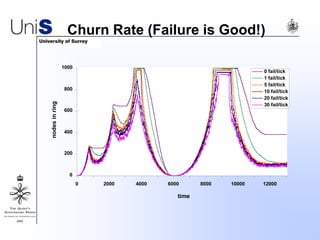

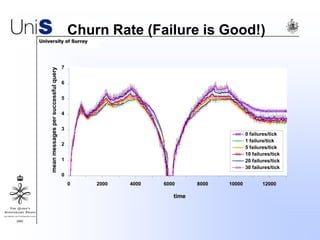

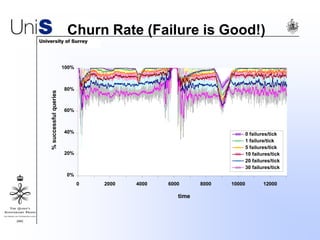

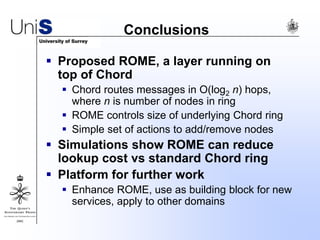

This document summarizes a thesis titled "An Efficient Reactive Model for Resource Discovery in DHT-Based Peer-to-Peer Networks". The thesis introduces ROME, a new architecture that runs on top of Chord and dynamically controls the size of the Chord ring according to workload. The ROME node process monitors workload and, in response, attempts actions such as replacing overloaded nodes, adding nodes, or removing underloaded nodes, so that the ring stays at an optimal size. Simulations show that ROME can reduce lookup costs compared with a standard Chord ring under changing network conditions such as node joins and failures.
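The reactive behavior described above can be illustrated with a minimal sketch. It is not the thesis's implementation: the utilization thresholds (`low`, `high`), the `NodeState` fields, and the rule of preferring a replacement over an addition are all hypothetical choices made for illustration. The helper `expected_chord_hops` uses the commonly cited estimate that an average Chord lookup takes about half of log2(N) hops, which suggests why a smaller ring can lower lookup cost.

```python
import math
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NONE = "none"
    REPLACE = "replace"  # swap an overloaded node for a more capable one
    ADD = "add"          # grow the ring to spread the load
    REMOVE = "remove"    # shrink the ring by dropping an underloaded node


@dataclass
class NodeState:
    # All fields are hypothetical; the summary does not specify ROME's metrics.
    workload: float          # current load, in the same units as capacity
    capacity: float          # maximum load this node can sustain
    standby_capacity: float  # capacity of the best standby node, 0 if none


def choose_action(node: NodeState, low: float = 0.2, high: float = 0.8) -> Action:
    """Pick a ring-size action from a node's utilization.

    The thresholds and the replace-before-add ordering are assumptions.
    """
    utilization = node.workload / node.capacity
    if utilization > high:
        # Prefer a stronger replacement because it keeps the ring size fixed.
        if node.standby_capacity > node.capacity:
            return Action.REPLACE
        return Action.ADD
    if utilization < low:
        return Action.REMOVE
    return Action.NONE


def expected_chord_hops(ring_size: int) -> float:
    """Approximate average Chord lookup path length: (1/2) * log2(N)."""
    return 0.5 * math.log2(ring_size) if ring_size > 1 else 0.0


if __name__ == "__main__":
    print(choose_action(NodeState(workload=90, capacity=100, standby_capacity=200)))
    print(choose_action(NodeState(workload=10, capacity=100, standby_capacity=0)))
    print(expected_chord_hops(1024), expected_chord_hops(64))
```

Under this estimate, shrinking a ring from 1024 to 64 nodes cuts the average path from about 5 hops to about 3, which is the kind of saving a workload-sized ring aims for.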