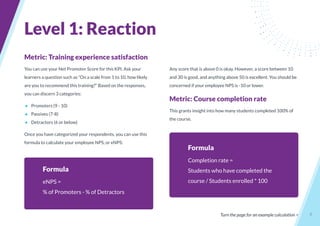

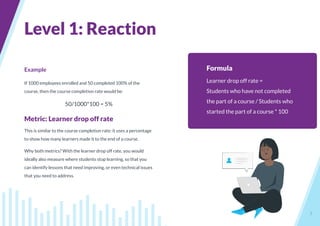

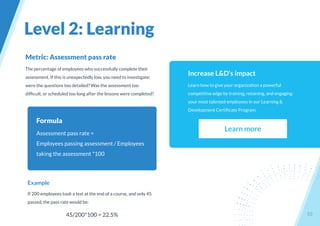

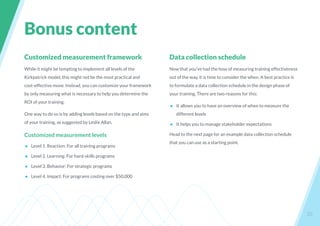

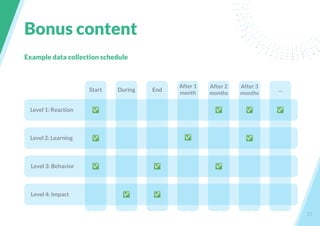

This document explains how to measure the effectiveness of learning and development (L&D) programs using metrics and key performance indicators (KPIs). It is organized around the four levels of the Kirkpatrick evaluation model: reaction, learning, behavior, and results. For each level, it covers the evaluation goals, data collection methods, example metrics, and the formulas used to calculate them. The aim is to help L&D professionals quantify the value of their initiatives with data.