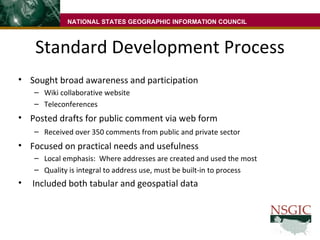

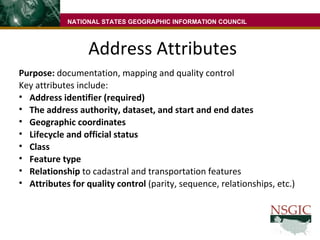

This document summarizes a draft United States address data standard being submitted to the Federal Geographic Data Committee. The standard was developed through a collaborative process and provides guidelines for address element definitions, address classes, quality assurance testing, and data exchange formats. Implementing the standard will help organizations manage and share address data consistently.

![Questions? For access to the current draft of the Address Standard, please send your name and email address to: [email_address]. You will receive an email with your user name and password, and the URL for the site. You may also leave a business card with “address standard access” written on it. National States Geographic Information Council](https://image.slidesharecdn.com/addressstandardpresentationnsgicmwells20091006-091009094146-phpapp02/85/Address-Standard-19-320.jpg)