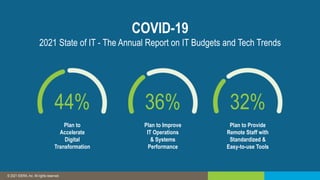

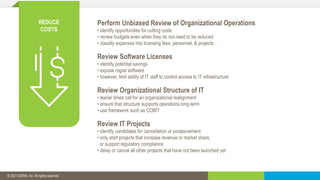

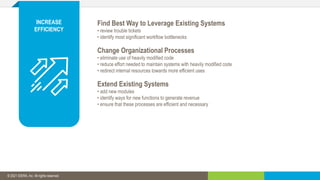

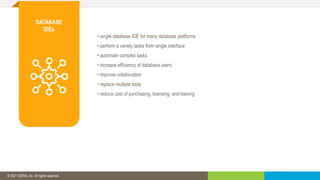

The document discusses how organizations are looking to cut costs and improve efficiency in response to economic uncertainty, and argues that CIOs must do both at once. It recommends cost-reduction measures such as performing budget reviews and improving processes. It also recommends investing in a database IDE, which makes database users more efficient by letting them perform multiple tasks from a single interface.