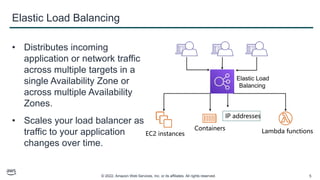

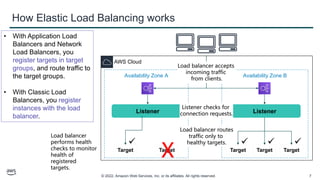

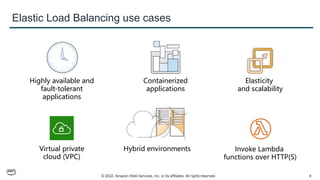

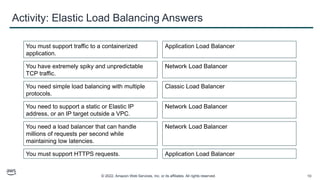

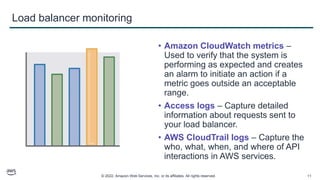

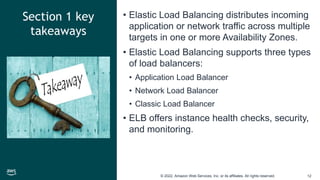

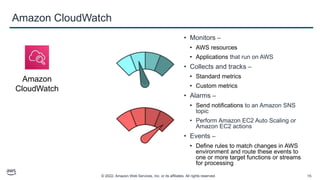

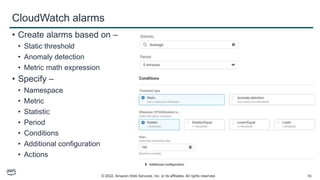

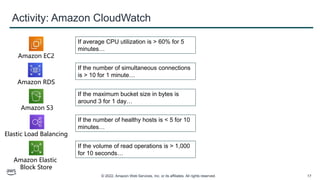

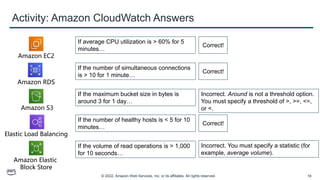

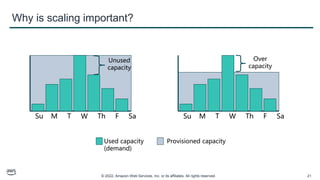

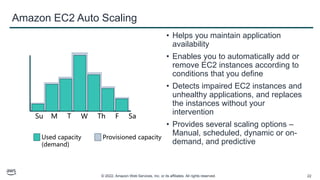

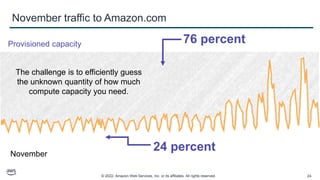

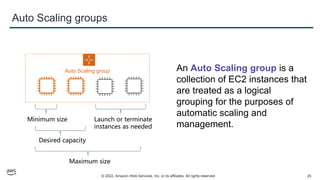

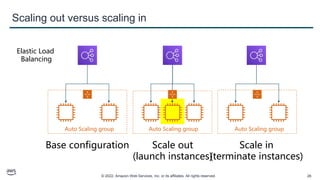

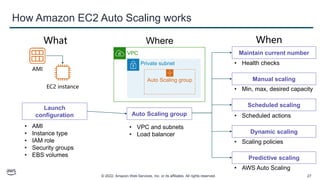

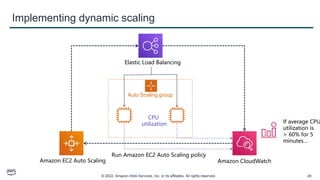

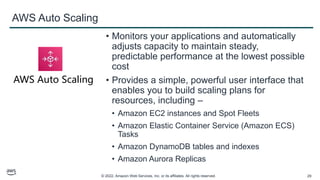

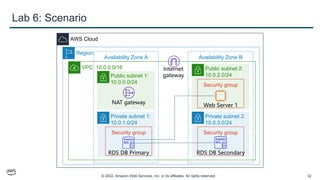

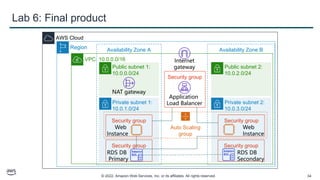

This document provides an overview of automatic scaling and monitoring on AWS. It covers three services: Elastic Load Balancing (ELB), which distributes incoming traffic across instances; Amazon CloudWatch, which monitors resources in real time; and Amazon EC2 Auto Scaling, which launches and terminates instances in response to workload changes. The learning objectives are to distribute traffic with ELB, monitor resources with CloudWatch, and scale capacity automatically with EC2 Auto Scaling.
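
To make the three ideas concrete, the following is a minimal toy model in plain Python. It does not call any AWS API. The function names `distribute` and `desired_capacity`, the CPU thresholds (70% and 30%), and the capacity bounds are all assumptions chosen for illustration, not values taken from the slides or from AWS defaults. It sketches round-robin traffic distribution (standing in for a load balancer) and a threshold-based scaling decision driven by a monitored metric (standing in for a CloudWatch alarm feeding an Auto Scaling policy).

```python
from itertools import cycle


def distribute(requests, instances):
    """Assign requests to instances in round-robin order.

    Toy stand-in for a load balancer; real ELB routing also considers
    health checks and other factors.
    """
    assignment = {name: [] for name in instances}
    for request, name in zip(requests, cycle(instances)):
        assignment[name].append(request)
    return assignment


def desired_capacity(current, avg_cpu, scale_out_at=70.0, scale_in_at=30.0,
                     minimum=1, maximum=4):
    """Return the new instance count for a monitored average CPU percentage.

    Hypothetical thresholds: add one instance above scale_out_at, remove one
    below scale_in_at, and always stay within [minimum, maximum].
    """
    if avg_cpu > scale_out_at:
        return min(current + 1, maximum)
    if avg_cpu < scale_in_at:
        return max(current - 1, minimum)
    return current


if __name__ == "__main__":
    print(distribute(range(6), ["i-a", "i-b", "i-c"]))
    print(desired_capacity(current=2, avg_cpu=85.0))  # high load: scale out
    print(desired_capacity(current=2, avg_cpu=10.0))  # low load: scale in
```

In this sketch the scaling decision depends only on the monitored metric and the configured bounds, which mirrors the division of labor the overview describes: monitoring supplies the signal, and the scaling policy turns it into a capacity change.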