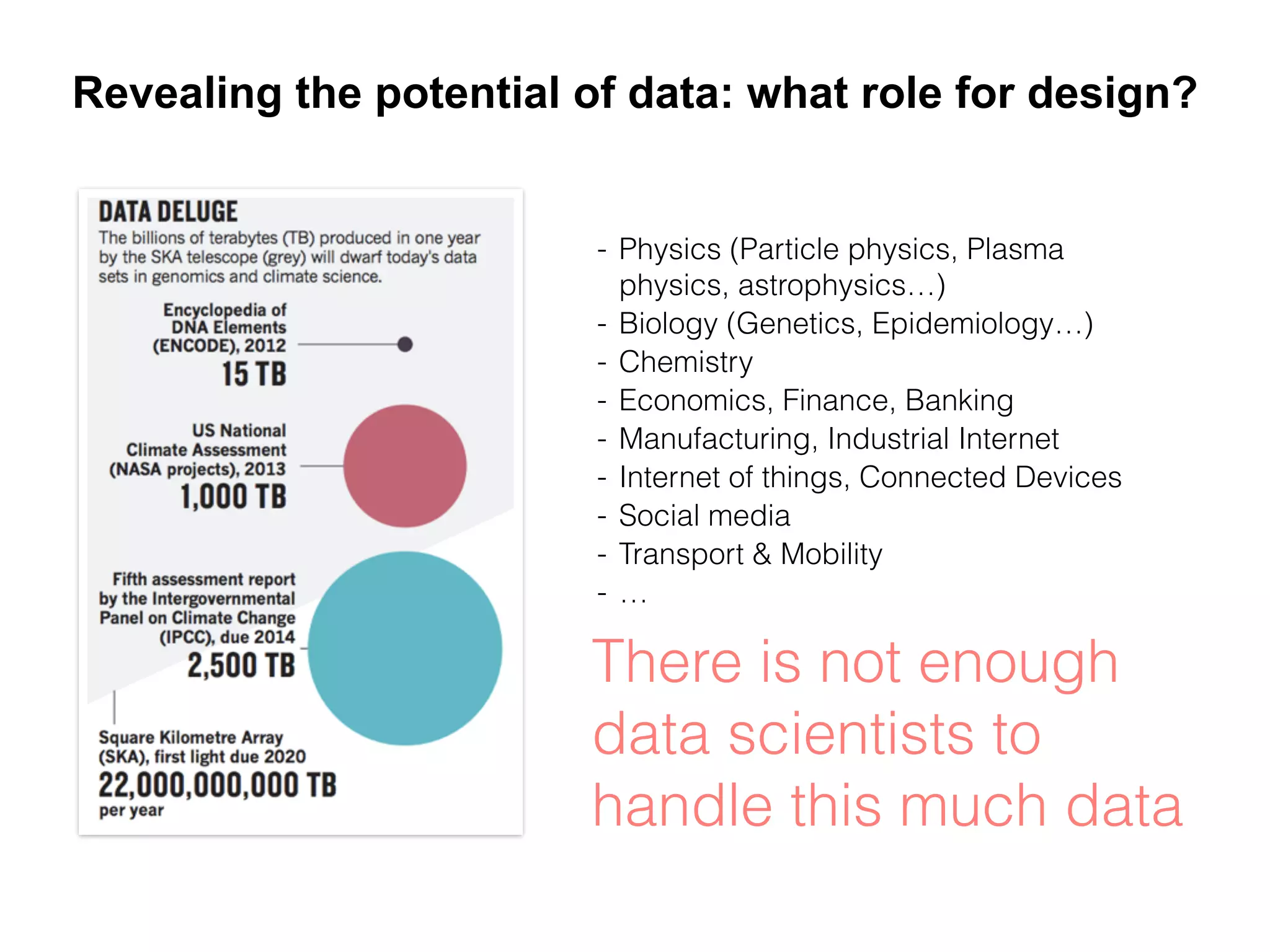

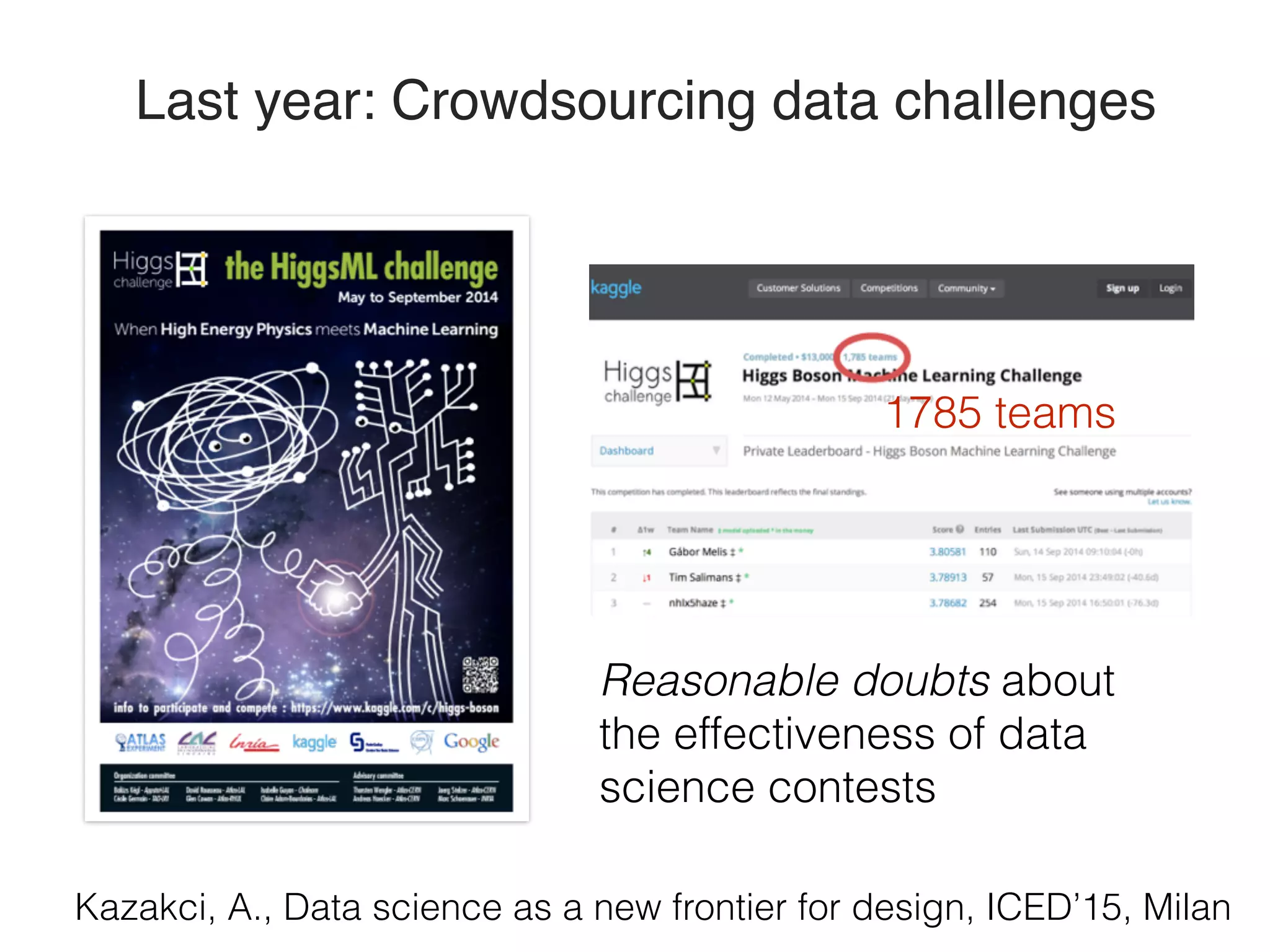

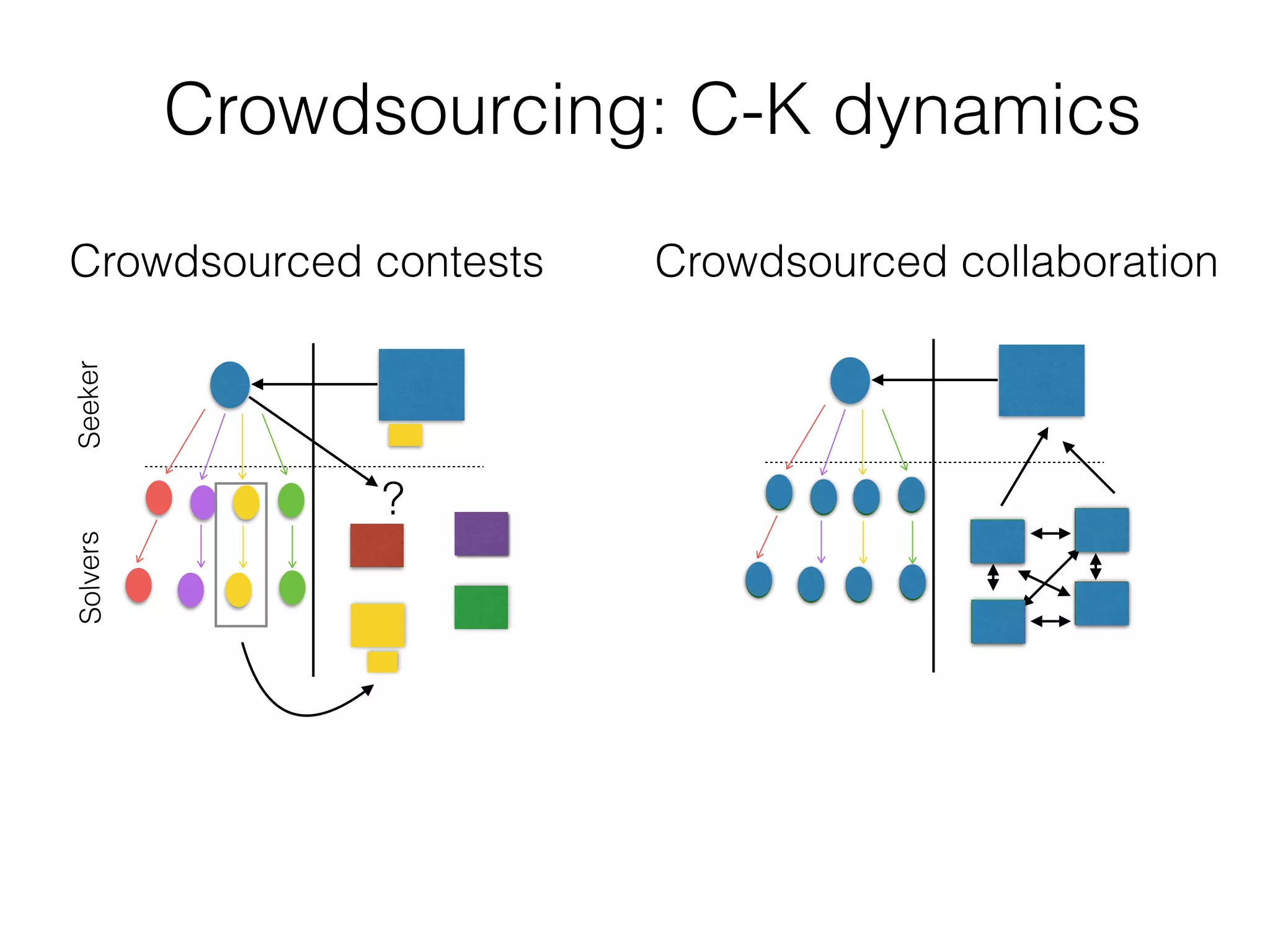

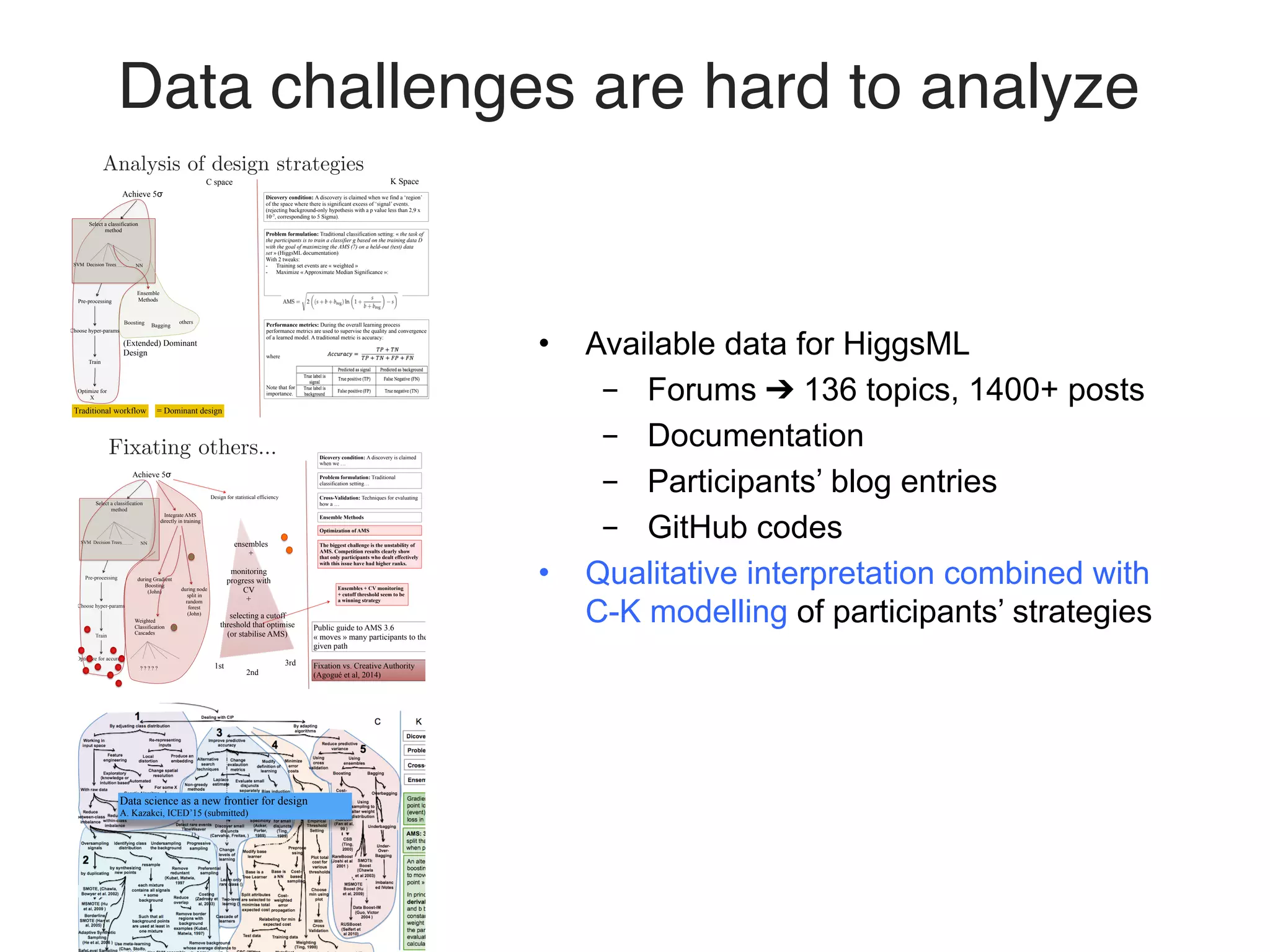

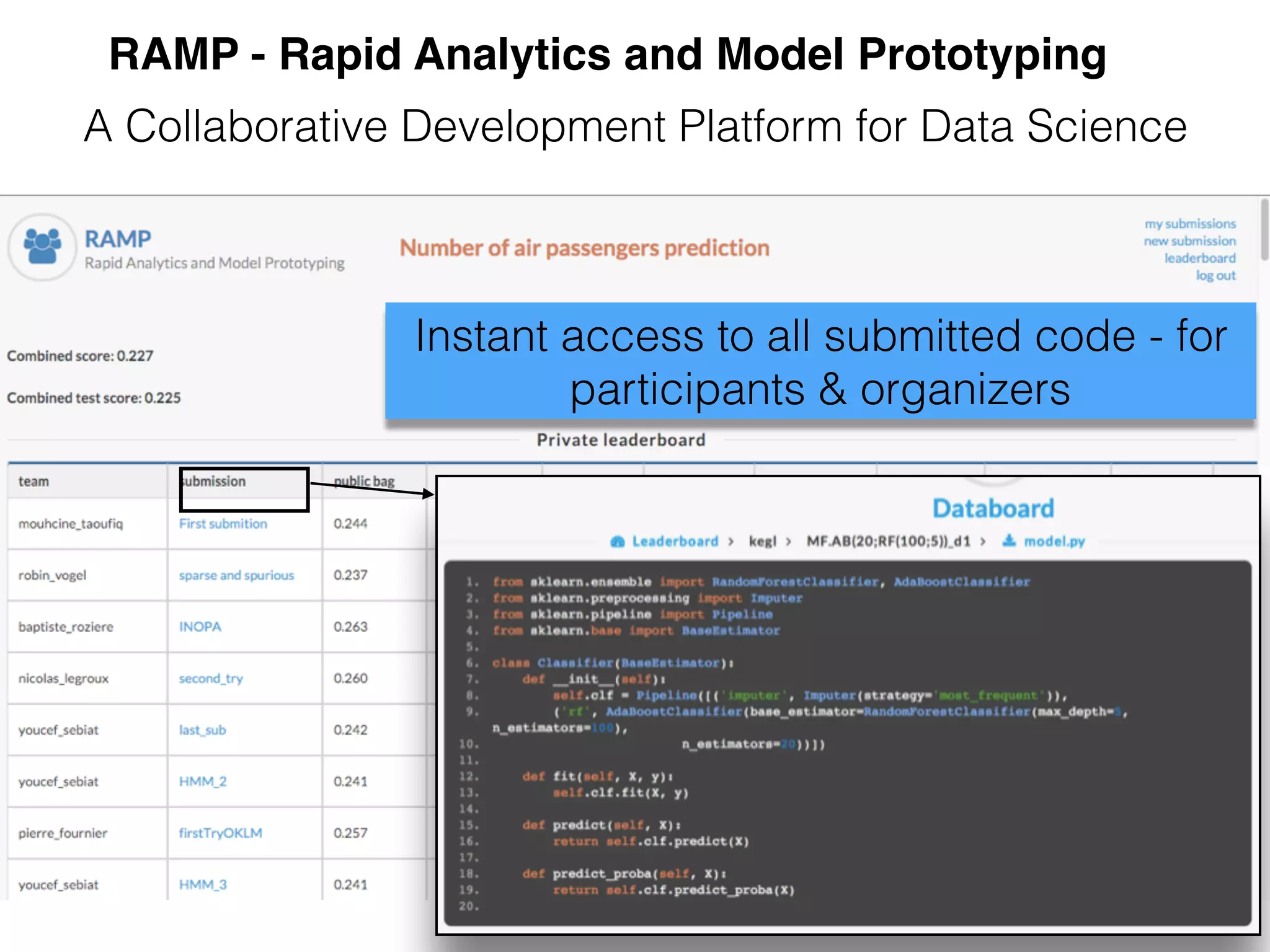

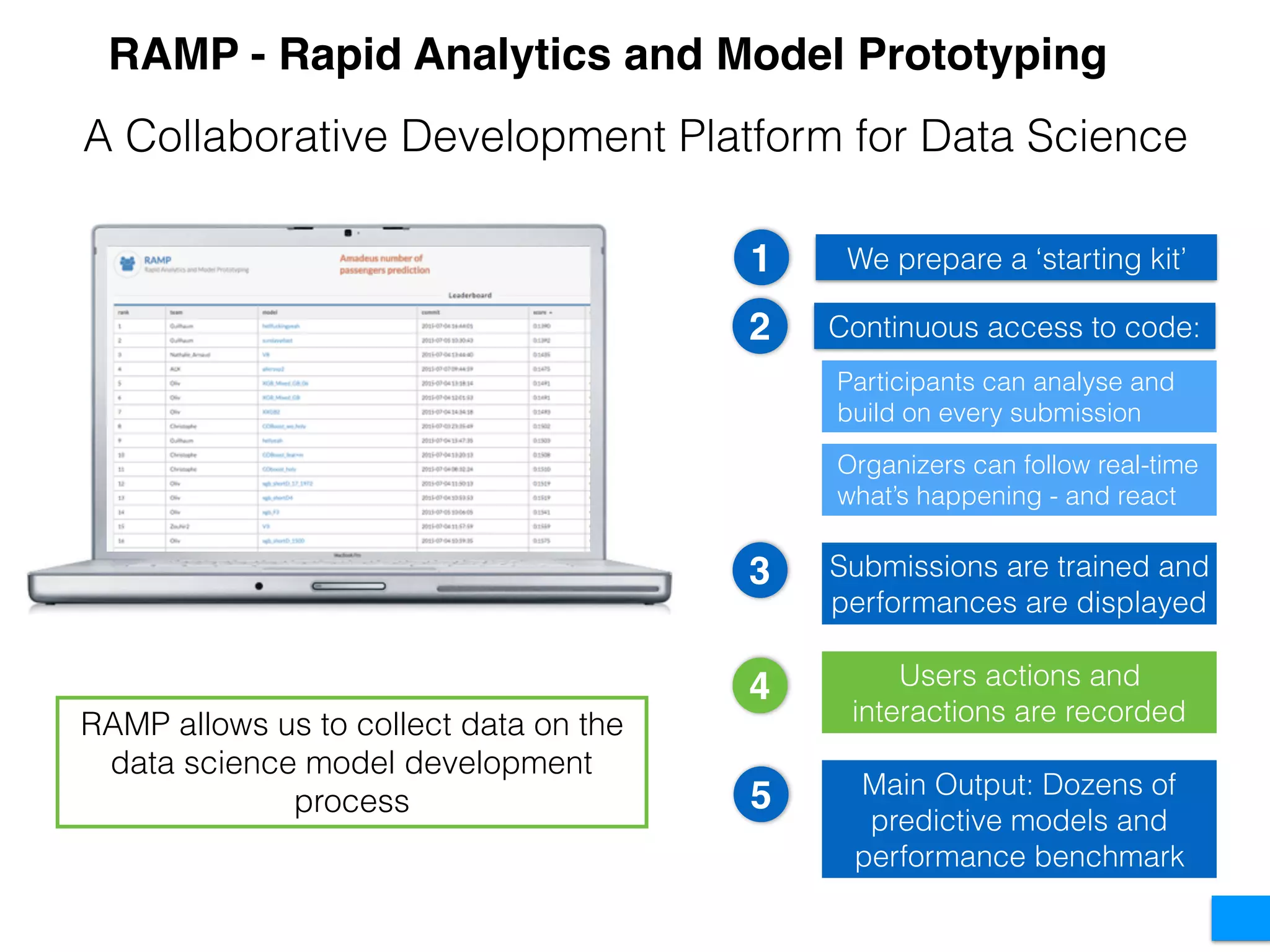

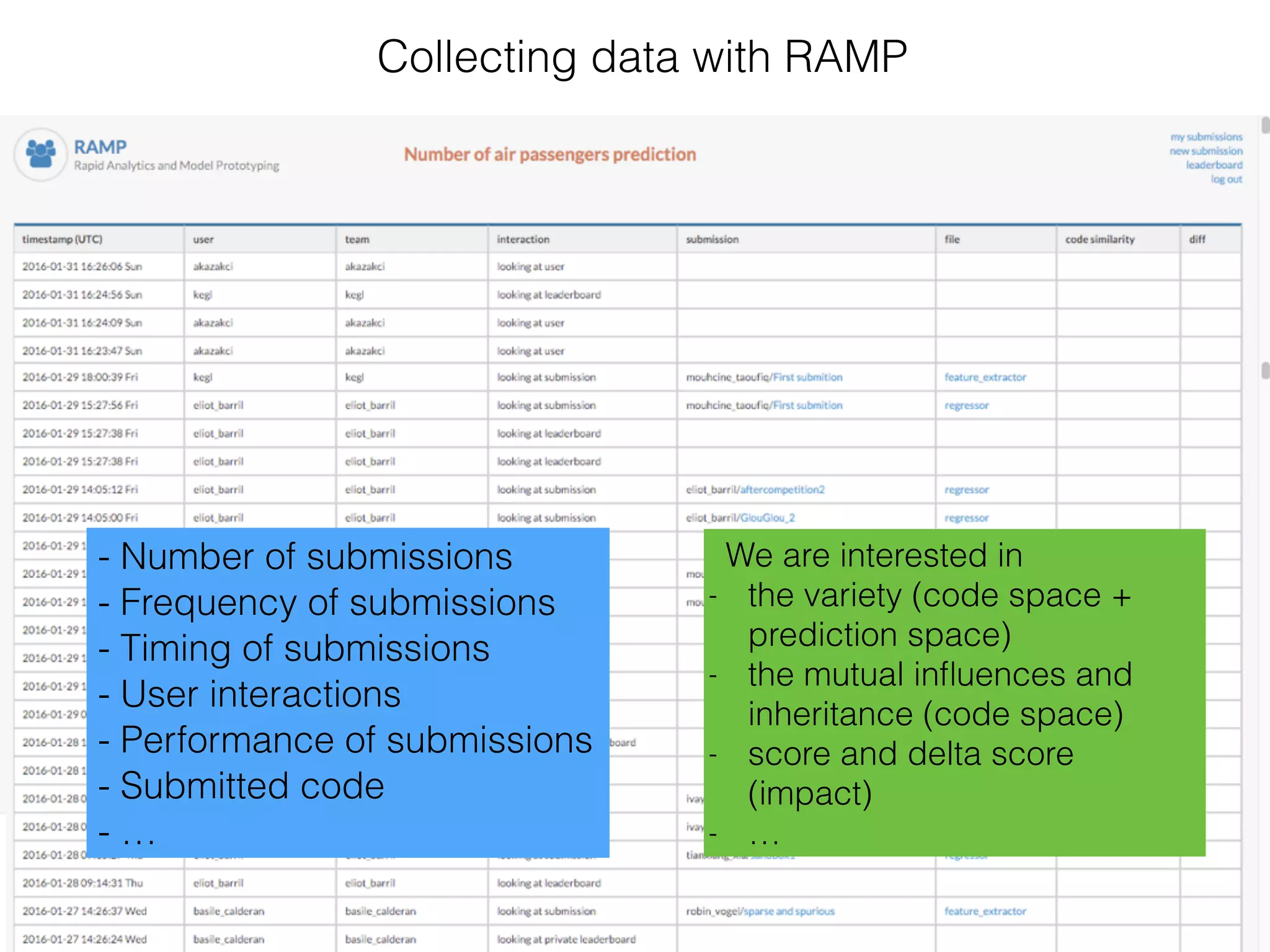

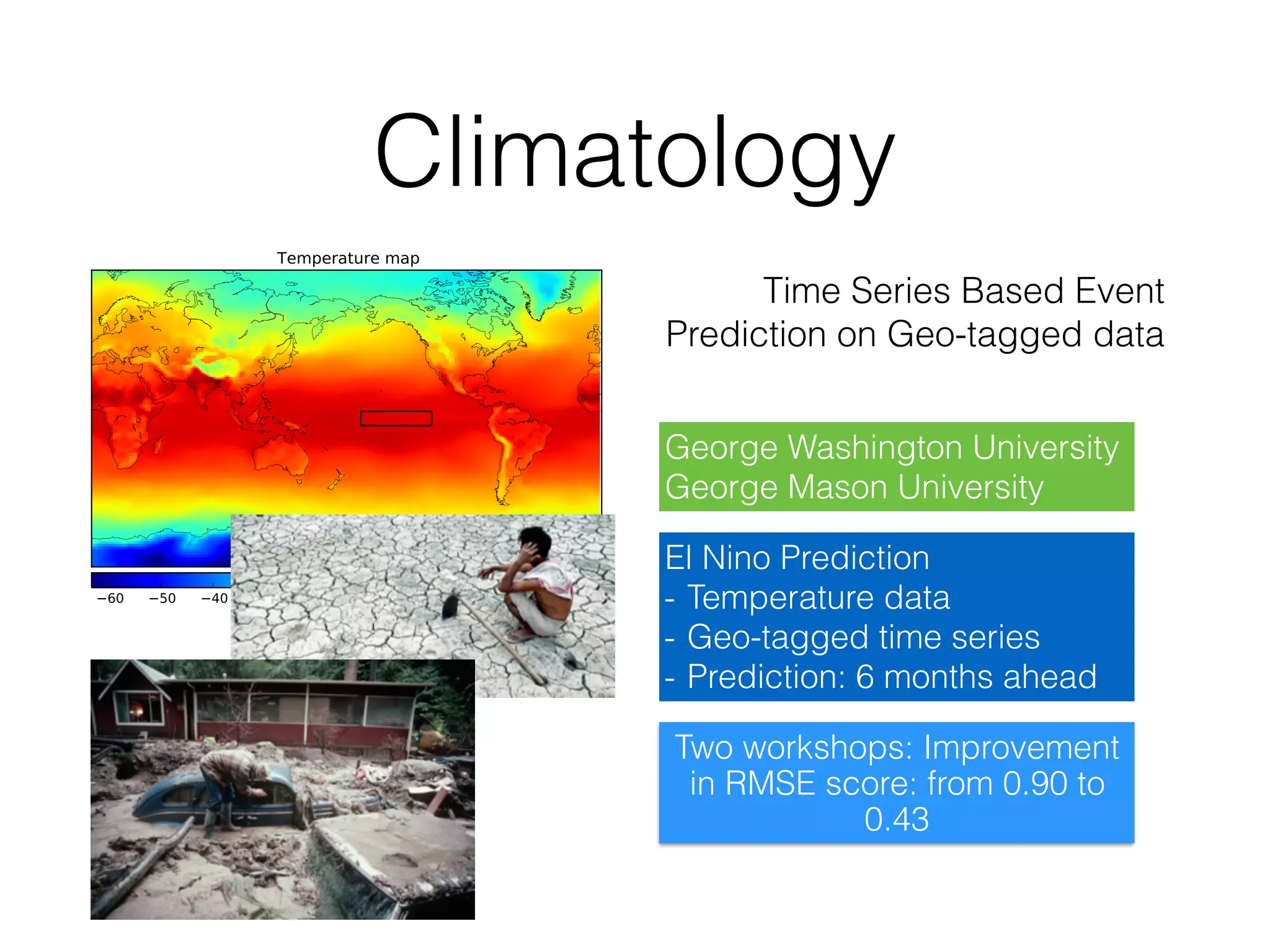

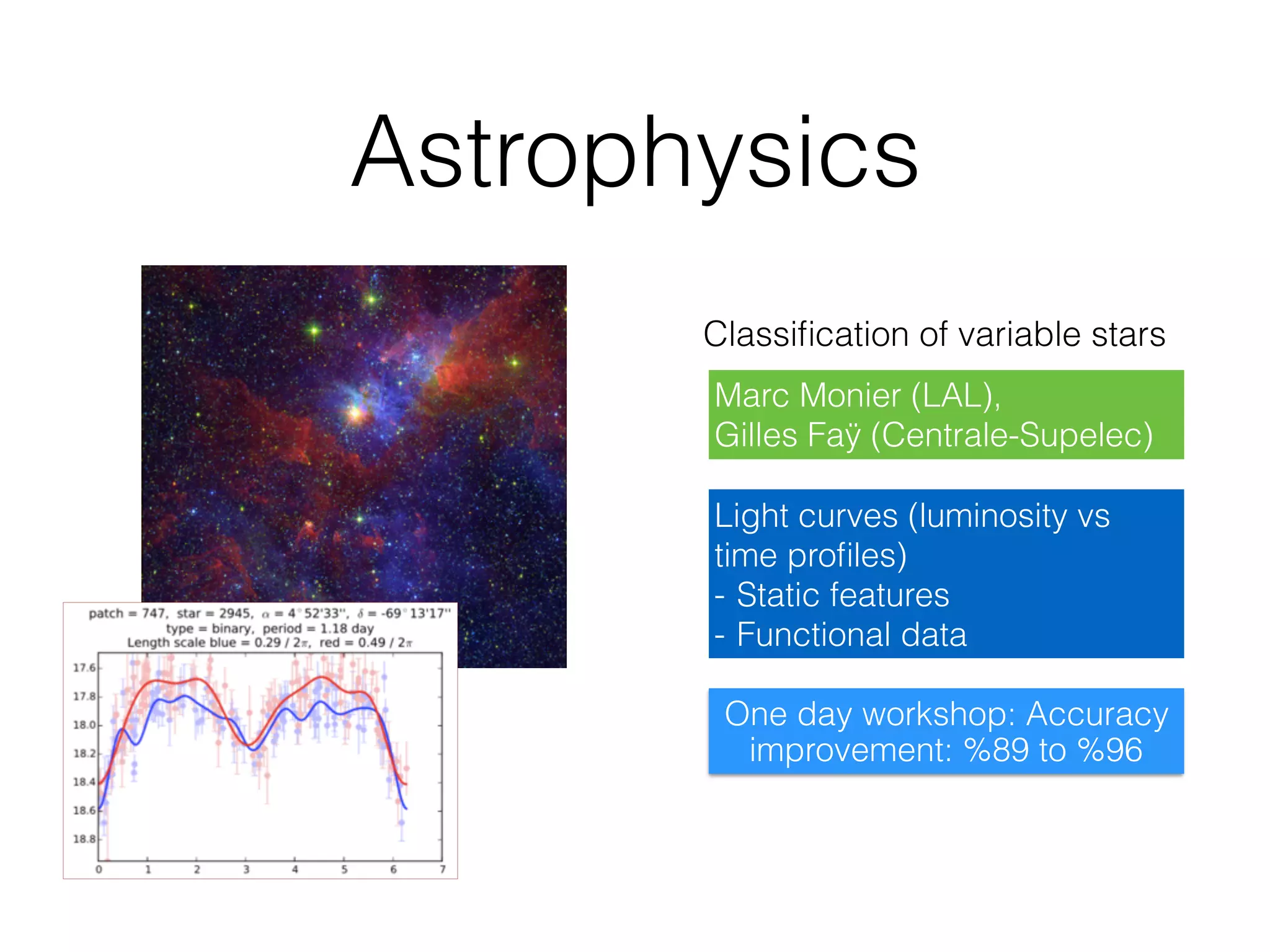

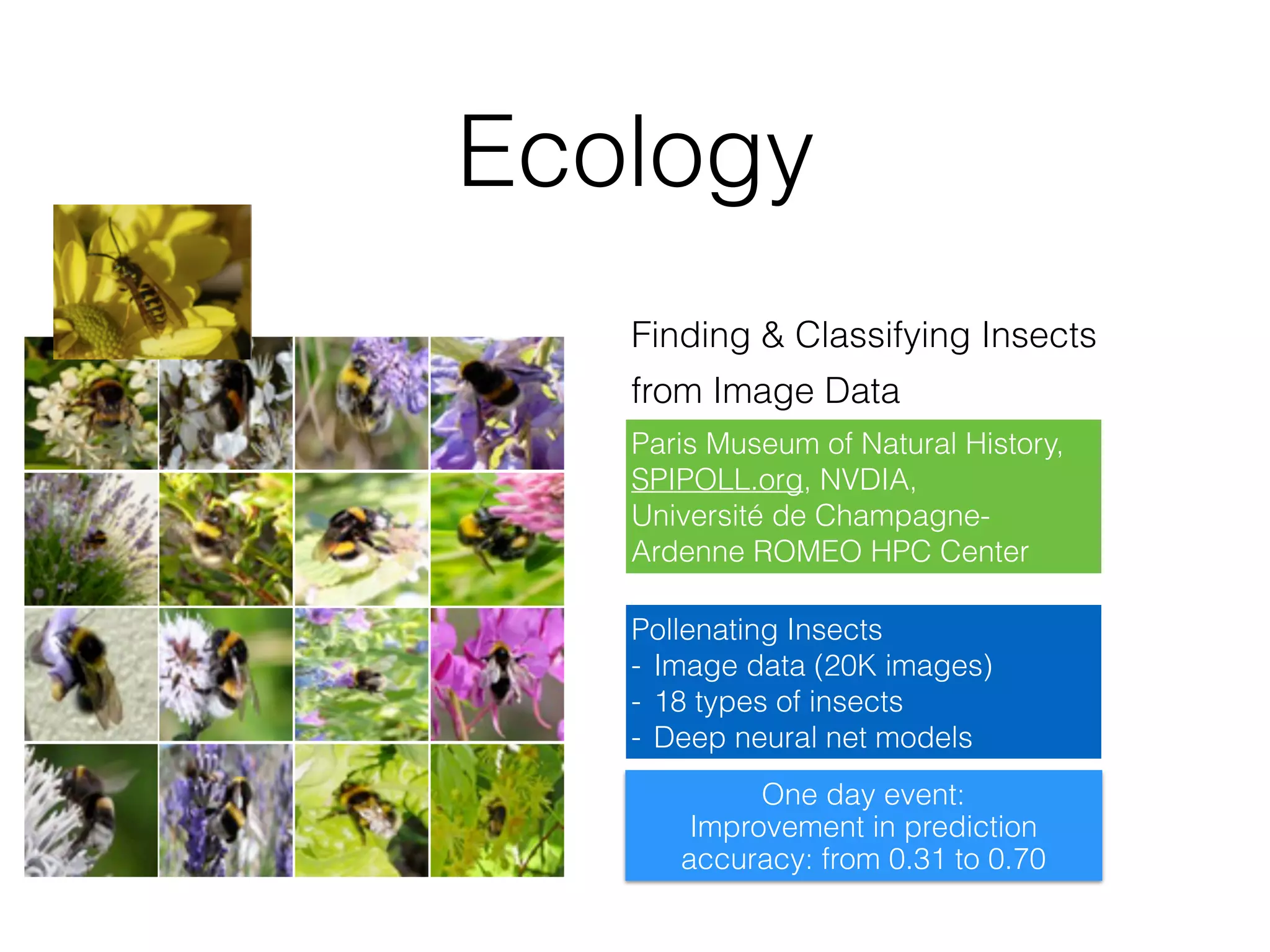

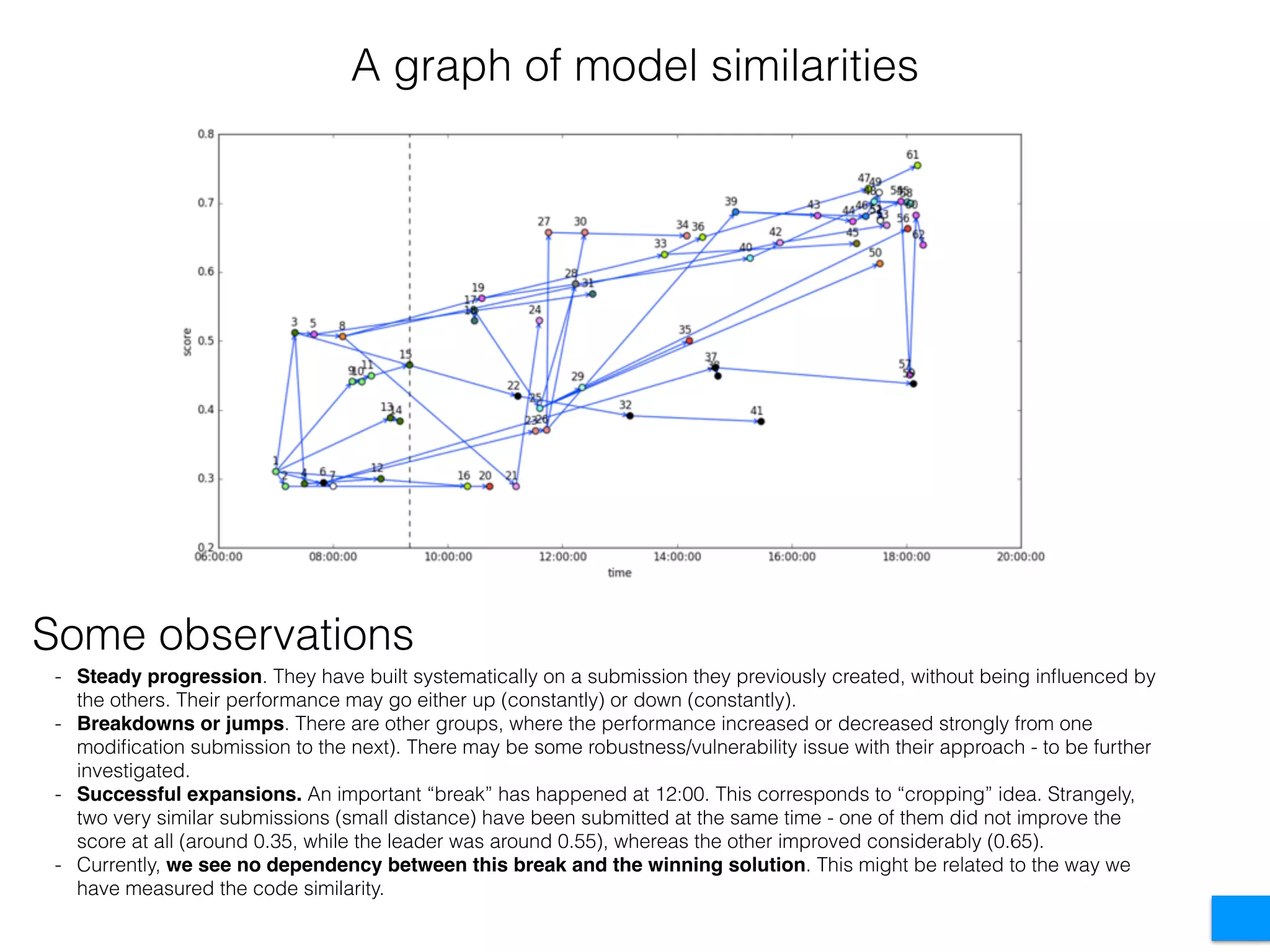

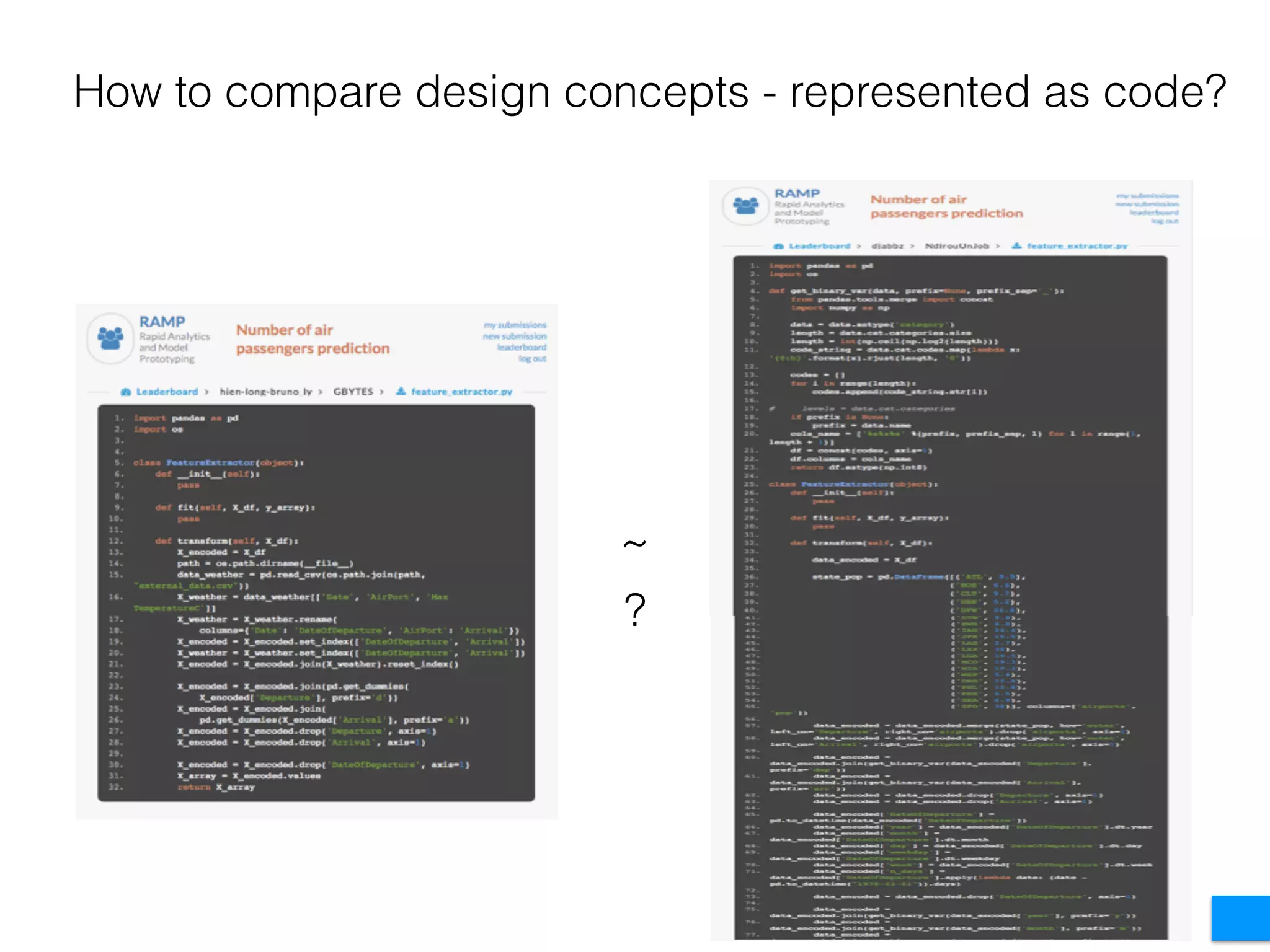

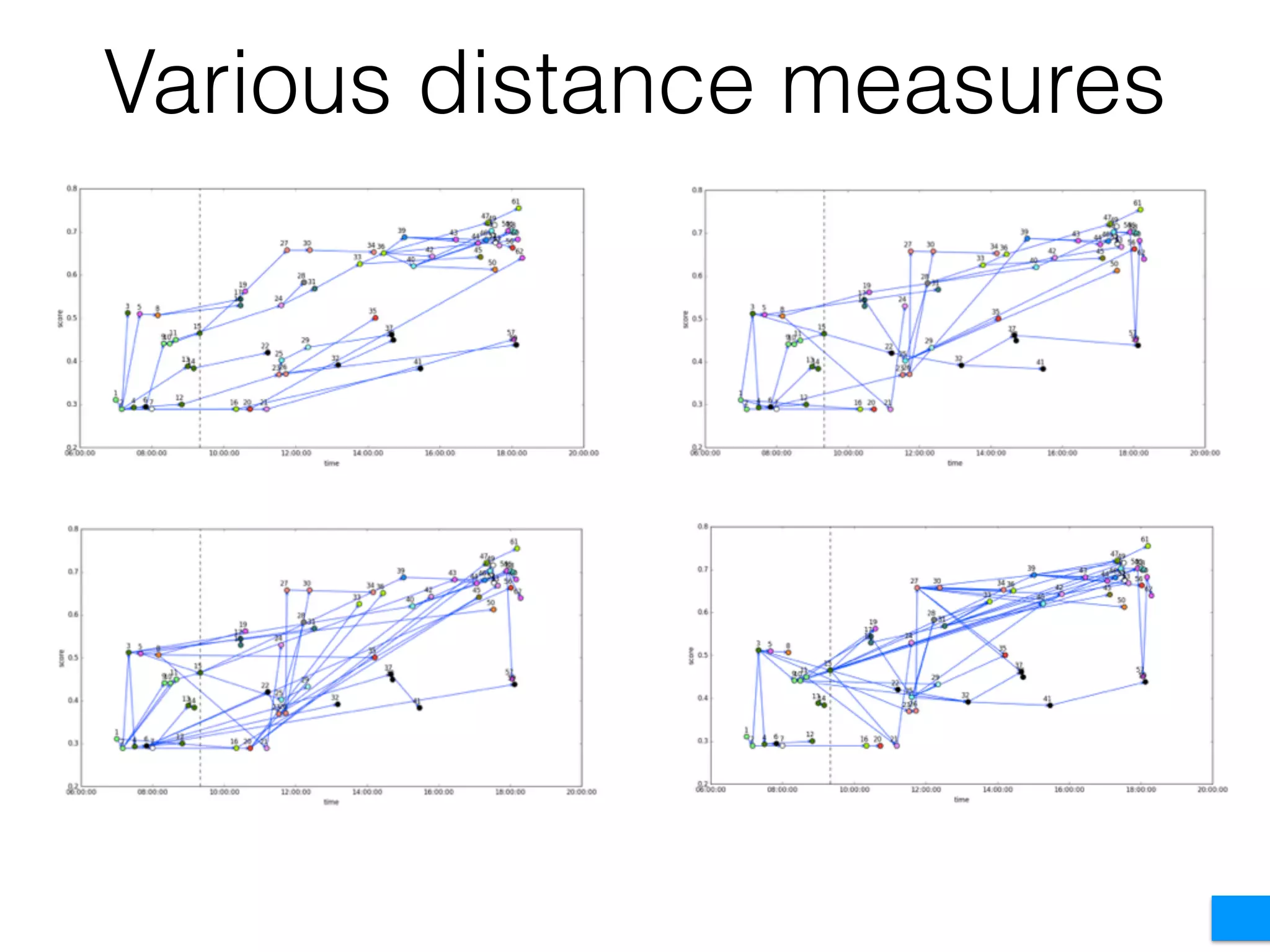

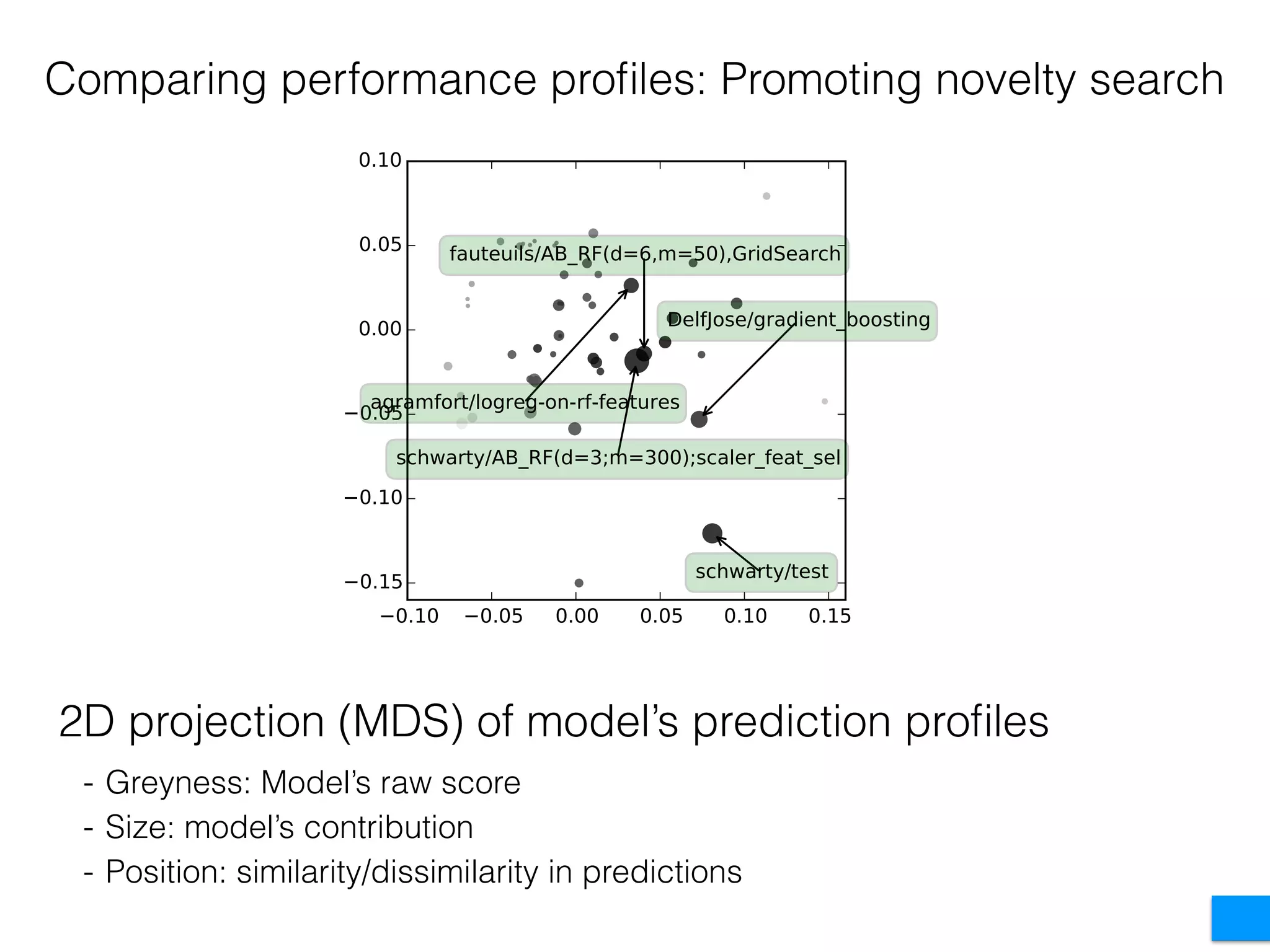

The document discusses challenges in data science and design, highlighting the problem of inbreeding and the need for innovative approaches. It introduces RAMP, a collaborative development platform that aims to improve data science modeling and evaluation through shared submissions and performance metrics. It also explores applications in fields such as climatology, astrophysics, and ecology, emphasizing improvements in prediction accuracy achieved through collaborative effort.