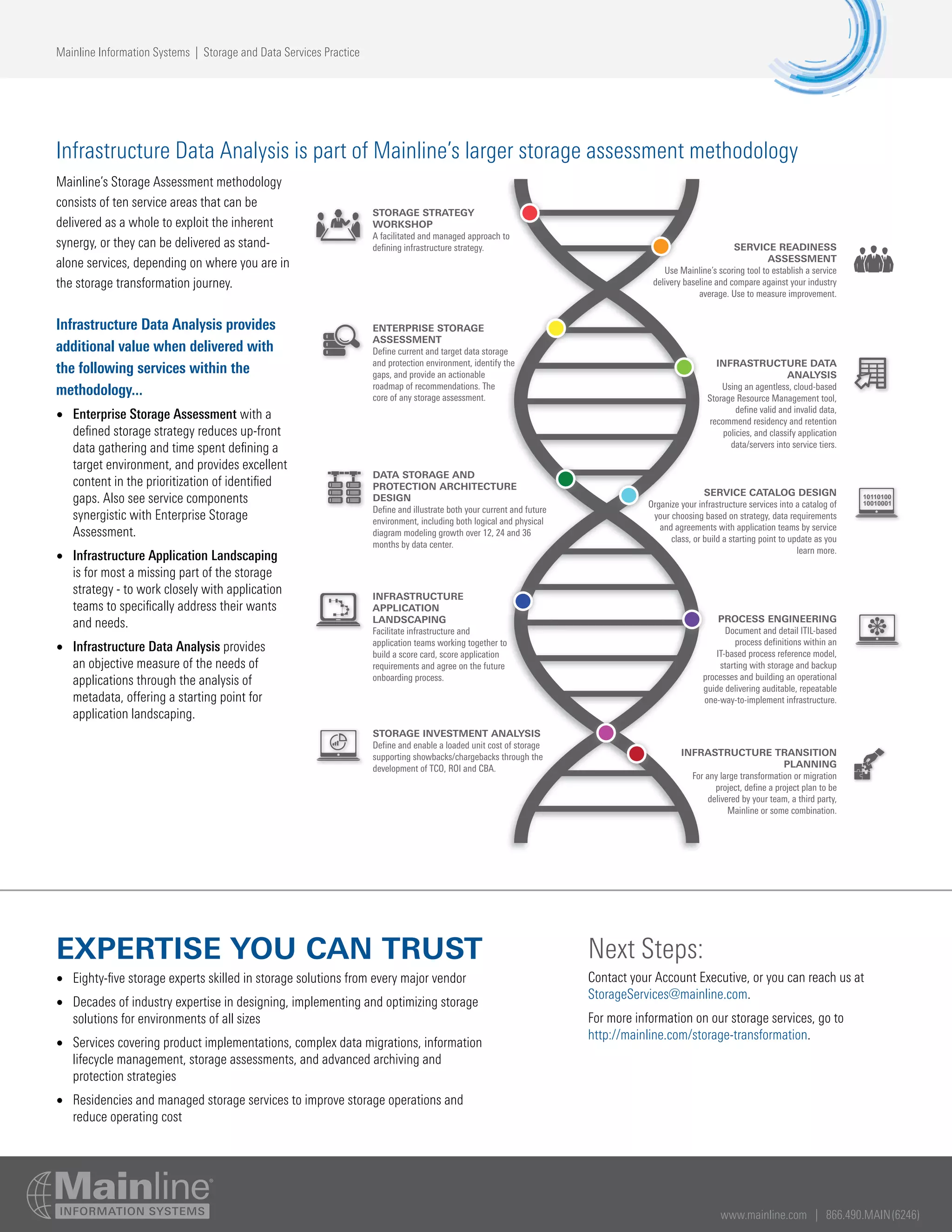

Mainline provides an Infrastructure Data Analysis service that identifies invalid or unnecessary data stored on clients' storage systems, recommends proper placement of valid data across storage tiers, and defines retention policies. The service analyzes metadata with a cloud-based tool to profile data usage and characteristics. Deliverables include a storage capacity analysis, data cleanup opportunities, and data placement recommendations. Together, these help clients optimize storage usage, reduce costs, and ensure that critical business data is stored appropriately.
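To make the idea of metadata-based profiling concrete, the following is a minimal sketch of how file metadata alone (size and last-access time) could be used to group capacity by a suggested storage tier. It is not Mainline's tool or method. The `FileRecord` type, the tier names (`performance`, `capacity`, `archive`), and the 90-day and 365-day thresholds are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class FileRecord:
    """Hypothetical metadata record: no file contents are read."""
    path: str
    size_bytes: int
    last_accessed: datetime


def recommend_tier(record, now, warm_after_days=90, cold_after_days=365):
    """Suggest a tier from last-access age (thresholds are assumptions)."""
    age = now - record.last_accessed
    if age >= timedelta(days=cold_after_days):
        return "archive"      # candidate for cleanup or retention review
    if age >= timedelta(days=warm_after_days):
        return "capacity"     # rarely used, lower-cost storage
    return "performance"      # recently used, keep on fast storage


def profile_capacity(records, now):
    """Total bytes per suggested tier: a simple capacity analysis."""
    summary = {"performance": 0, "capacity": 0, "archive": 0}
    for record in records:
        summary[recommend_tier(record, now)] += record.size_bytes
    return summary
```

In practice, a real analysis would likely weigh more attributes than access age (owner, file type, duplication, business criticality), so the thresholds here should be read as placeholders rather than recommended policy.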