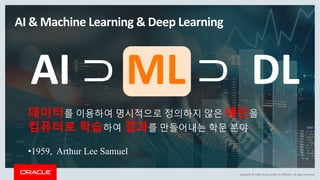

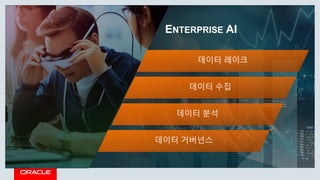

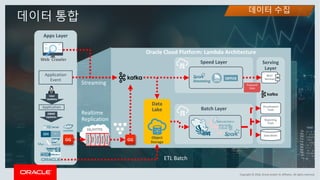

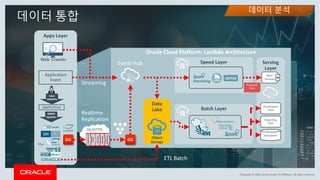

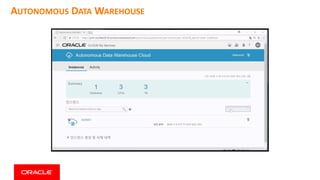

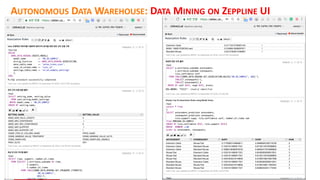

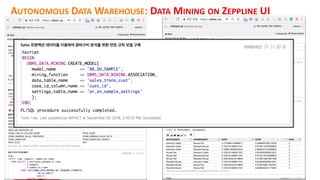

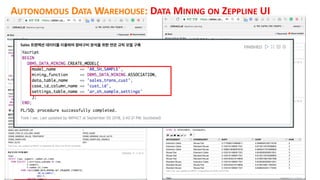

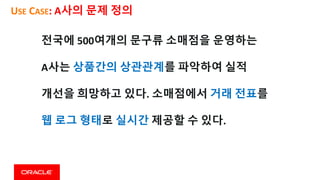

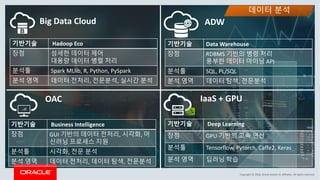

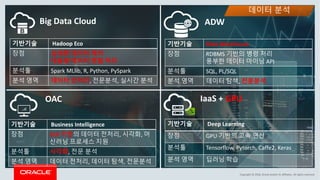

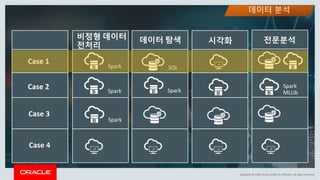

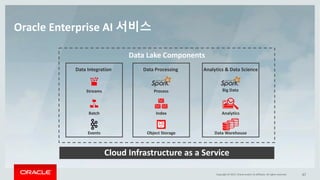

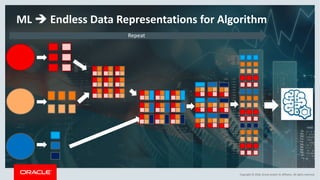

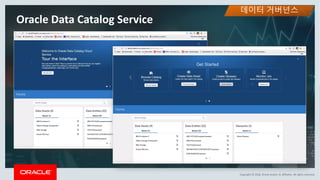

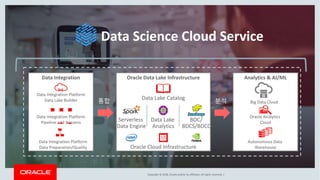

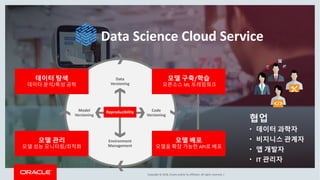

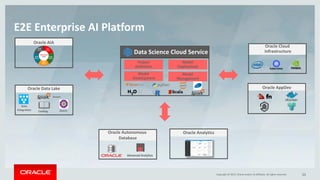

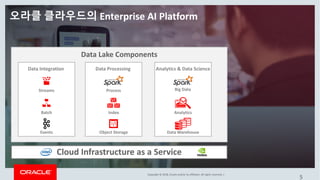

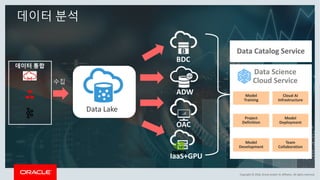

This document discusses enterprise artificial intelligence (AI) and Oracle's cloud AI platform. It opens with background on the AI revolution and the growing volume of generated data, then describes the platform's services for enterprise AI: a data lake, data integration, analysis, and machine learning and deep learning tools. As a worked example, it outlines how the platform can be used for product association analysis on transaction log data from retail stores. It emphasizes that the platform offers tools and services suited to different types of data and analysis.
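
The summary does not say how the product association analysis is carried out, so the following is only a minimal sketch of one common approach: counting how often pairs of products appear on the same receipt and scoring each pair by support, confidence, and lift. The transaction data, the function name `pair_associations`, and the rule fields are all hypothetical and are not taken from the slides or from any Oracle service.

```python
from collections import Counter
from itertools import combinations

# Hypothetical transaction log: each inner list is one store receipt.
transactions = [
    ["bread", "milk"],
    ["bread", "butter", "milk"],
    ["butter", "milk"],
    ["bread", "butter"],
]


def pair_associations(transactions):
    """Score every ordered product pair (x -> y) seen in the same basket.

    support    = share of baskets containing both x and y
    confidence = share of baskets with x that also contain y
    lift       = confidence divided by the overall share of baskets with y
    """
    n = len(transactions)
    item_counts = Counter()
    pair_counts = Counter()

    for basket in transactions:
        items = sorted(set(basket))  # ignore duplicates within a receipt
        item_counts.update(items)
        pair_counts.update(combinations(items, 2))

    rules = []
    for (a, b), count in pair_counts.items():
        support = count / n
        # Emit the rule in both directions, since confidence is asymmetric.
        for x, y in ((a, b), (b, a)):
            confidence = count / item_counts[x]
            lift = confidence / (item_counts[y] / n)
            rules.append(
                {
                    "if": x,
                    "then": y,
                    "support": support,
                    "confidence": confidence,
                    "lift": lift,
                }
            )
    return rules


if __name__ == "__main__":
    for rule in sorted(pair_associations(transactions), key=lambda r: -r["lift"]):
        print(rule)
```

A lift above 1 suggests the two products are bought together more often than chance would predict, and a lift below 1 suggests the opposite. On real transaction logs this pairwise counting is usually replaced by a frequent-itemset algorithm that can handle larger product combinations and prune rare items.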