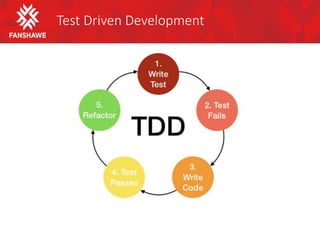

Test-driven development (TDD) means writing a test before writing the code that makes it pass, so that code is built to satisfy explicit requirements. The TDD cycle has three steps: write a failing test, write just enough code to pass it, then refactor the code while the tests stay green. This improves quality by catching defects early and keeps code maintainable through extensive automated testing. Acceptance TDD applies the same cycle at the system level to make sure the right functionality is built.
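
As an illustration of the cycle, here is a minimal sketch in Python using the standard `unittest` module. The `leap_year` function and its test names are hypothetical, chosen only for this example; they do not come from the original material. The comments mark where each step of the cycle would have happened.

```python
import unittest


def leap_year(year: int) -> bool:
    """Return True if `year` is a leap year in the Gregorian calendar."""
    # Step 2 (pass the test) could start as simply `return year % 4 == 0`.
    # The century tests below would then fail, forcing this fuller rule,
    # which step 3 (refactor) tidied into a single expression.
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


class LeapYearTest(unittest.TestCase):
    # Step 1 (failing test): each test is written before the code it checks.
    def test_year_divisible_by_4_is_leap(self):
        self.assertTrue(leap_year(2024))

    def test_common_year_is_not_leap(self):
        self.assertFalse(leap_year(2023))

    def test_century_not_divisible_by_400_is_not_leap(self):
        self.assertFalse(leap_year(1900))

    def test_century_divisible_by_400_is_leap(self):
        self.assertTrue(leap_year(2000))
```

Saved to a file, the tests can be run with `python -m unittest <module name>`. In practice each test would be added one at a time, seen to fail, and only then made to pass, so the final function is the result of several short cycles rather than one.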