Character Identification on TV Shows via Coreference Resolution

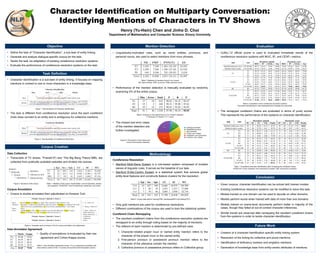

- 1. Character Identification on Multiparty Conversation: Identifying Mentions of Characters in TV Shows Henry (Yu-Hsin) Chen and Jinho D. Choi Department of Mathematics and Computer Science, Emory University • Define the task of “Character Identification”, a sub-task of entity linking. • Generate and analyze dialogue-specific corpus for the task. • Tackle the task via adaptation of existing coreference resolution systems. • Evaluate the performance of coreference resolution systems on the task. Objective • Given corpora, character identification can be solved with trained models • Existing coreference resolution systems can be modified to solve this task. • Models trained on one domain can be used to decode on other domains. • Models perform worse when trained with data of more than one domains. • Models trained on scene-level documents perform better in majority of the cases, though they failed at out-of-context character inferences. • Similar trends are observed after remapping the resultant coreferent chains from the systems in order to tackle character identification. Conclusion • Creation of a character identification specific entity linking system. • Resolution of the linking for collective and plural mentions. • Identification of disfluency markers and singleton mentions. • Generation of knowledge base from entity-centric attributes of mentions. Future Work Data Collection • Transcripts of TV shows, “Friends”(F) and “The Big Bang Theory”(BB), are collected from publically available websites and divided into scenes. Corpus Annotation • Corpus is double-annotated then adjudicated on Amazon Turk. Inter-Annotator Agreement • Quality of annotations is evaluated by their raw agreement and Cohen-Kappa scores. Corpus Creation Season Episode Scene Utterance Speaker Statement(s) Utterance text Figure 3. Structure of the corpus. Table 1. Statistics of the corpus. Epi/Sce/Spk: count of episodes, scenes, and speakers. UC/SC/WC: count of utterances, sentences, and words Figure 4. Template used on Amazon Turk for corpus annotation and adjudication Table 2. Inter-Annotator Agreement scores. F1p is a preliminary annotation trial done without context of the +-2 scenes and dynamic inferred speaker options • Character Identification is a sub-task of entity linking. It focuses on mapping mentions in context to one or more characters in a knowledge base. • The task is different from coreference resolution since the each coreferent chain does connect to an entity and is ambiguous for collective mentions. Task Definition Ross I told mom and dad last night, they seemed to take it pretty well. Monica Oh really, so that hysterical phone call I got from a woman at sobbing 3:00 A.M., "I'll never have grandchildren, I'll never have grandchildren." was what? A wrong number? MonicaJack JudyRoss Character Identification Figure 1. Task illustration of Character Identification. Ross I told mom and dad last night, they seemed to take it pretty well. Monica Oh really, so that hysterical phone call I got from a woman at sobbing 3:00 A.M., "I'll never have grandchildren, I'll never have grandchildren." was what? A wrong number? Coreference Resolution Figure 2. Task illustration of Coreference Resolution. • Linguistically-motivated rules, such as name entities, pronouns, and personal nouns, are used to select mentions from noun phrases. • Performance of the mention detection is manually evaluated by randomly examining 5% of the entire corpus. • The missed and error cases of the mention detection are further investigated. Mention Detection Table 3. Statistics of mentions found in our corpus. NE: Name entities. PRP: pronouns. PNN: personal nouns Table 4. Analysis on the performance of our mention detection. P: Precision. R: Recall. F: F-1 score. Table 1 Analogous phrases 2.06% 2 Misspelled pronouns 5.15% 5 Non-nominals 7.21% 7 Proper noun misses 9.28% 9 Interjection use of pronouns 14.43% 14 Common noun misses 14.43% 14 27% 27% 18% 14% 10% 4% Analogous phrases Misspelled pronouns Non-nominals Proper noun misses Interjection use of pronouns Common noun misses 1 Figure 5. Proportions of the misses and errors of the mention detection. Coreference Resolution • Stanford Multi-Sieve System is a rule-based system composed of multiple sieves of linguistic rules. It serves as the baseline of our task. • Stanford Entity-Centric System is a statistical system that extracts global entity-level features and constructs feature clusters for the resolution. • Only gold mentions are used for coreference resolutions. • Different combinations of the corpus are used to train the statistical system. Coreferent Chain Remapping • The resultant coreferent chains from the coreference resolution systems are remapped to an entity through voting based on the majority of mentions. • The referent of each mention is determined by pre-defined rules: 1. Character-related proper noun or named entity mention refers to the character of the proper noun or the named entity. 2. First-person pronoun or possessive pronoun mention refers to the character of the utterance contain the mention. 3. Collective pronoun or possessive pronoun refers to Collective group. Methodology Table 5. Corpus data split for training(TRN), developing(DEV) and testing(TST) • CoNLL’12 official scorer is used to evaluated immediate results of the coreference resolution systems with MUC, B3, and CEAFm metrics. • The remapped coreferent chains are evaluated in terms of purity scores. This represents the performance of the systems on character identification. Evaluation Table 6. Evaluations of the coreference resolution systems. Document episode/scene: each episode/scene is treated as a document. Table 7. Evaluations character identification after remapping the coreferent chains. FC/EC/UC: Found, expected, and unknown(%) clusters. UM: unknown(%) mentions.