Pneumonia diagnosis tool Case Study

Elinext developed a tool for automated image analysis that detects signs of pneumonia in lung X-ray images. Source: https://www.elinext.com/case-study/web/pneumonia-diagnosis-tool/

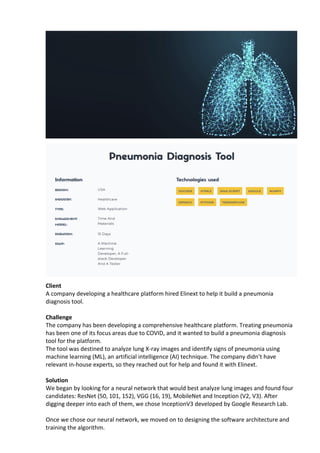

- 1. Client: A company developing a healthcare platform hired Elinext to help it build a pneumonia diagnosis tool.

Challenge: The company has been developing a comprehensive healthcare platform. Because of COVID-19, treating pneumonia became one of its focus areas, and it wanted to add a pneumonia diagnosis tool to the platform. The tool was intended to analyze lung X-ray images and identify signs of pneumonia using machine learning (ML), an artificial intelligence (AI) technique. The company had no relevant in-house experts, so it reached out for help and found it with Elinext.

Solution: We began by looking for a neural network that would best analyze lung images and found four candidate families: ResNet (50, 101, 152), VGG (16, 19), MobileNet and Inception (V2, V3). After digging deeper into each of them, we chose InceptionV3, developed by Google. Once we had chosen our neural network, we moved on to designing the software architecture and training the model.

- 2. Architecture: The software is web-based and can be integrated into other systems such as desktop applications and mobile apps. We used publicly available frameworks, libraries and technologies to develop it. To serve a static HTML5 web page, we deployed a web server in a Docker container. On that page, a user can upload a lung image and get feedback. The image is sent for processing over HTTP.

Training: Training is the most challenging part of building ML algorithms. Your ability to source enough data, avoid errors and be consistent throughout the process can make or break the algorithm. Manual training is often inconsistent: you may forget which steps you have taken and in which order, or accidentally delete logs. As a result, you won’t be able to accurately repeat a training session. Therefore, we automated the process from start to finish.

We needed to train complex models on huge datasets fast. To do that, we rented an Amazon Web Services (AWS) g3s.xlarge instance and used the Deep Learning Base AMI (Ubuntu 18.10). The instance is a powerful machine boasting 16GB of RAM, a 4-core CPU and an Nvidia Tesla M60 GPU. It was a perfect fit for the task.

Once we had chosen the technology, the training could begin. We built a clean Docker container to isolate the model from outside influences and downloaded a large set of lung images from Kaggle. To be able to work with the images, we subsampled them, narrowing them down to a relevant and consistent selection. The dataset and training environment were ready.

The training began. We faced the challenge of overtraining (overfitting), whereby the model memorizes the training images and as a result fails to accurately analyze new images. Our solution was to slightly modify the images’ width, height, graininess and some other parameters. We also launched TensorBoard to monitor training metrics.
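The image modifications described above are a form of data augmentation. The sketch below is only an illustration of the idea, not the pipeline used in the project: it assumes a grayscale image stored as nested lists of floats in [0, 1], reads "width" and "height" changes as small horizontal and vertical shifts, and models graininess as Gaussian noise. The function name `augment` and its parameters are hypothetical.

```python
import random


def augment(image, max_shift=2, noise_std=0.02, rng=None):
    """Return a slightly shifted, noisier copy of a grayscale image.

    `image` is a list of rows, each a list of floats in [0, 1].
    All parameter names and defaults are illustrative assumptions.
    """
    rng = rng or random.Random()
    height, width = len(image), len(image[0])
    dx = rng.randint(-max_shift, max_shift)  # horizontal shift in pixels
    dy = rng.randint(-max_shift, max_shift)  # vertical shift in pixels
    augmented = []
    for y in range(height):
        row = []
        for x in range(width):
            # Read the shifted source pixel, clamping at the image borders.
            src_y = min(max(y - dy, 0), height - 1)
            src_x = min(max(x - dx, 0), width - 1)
            # Add a little Gaussian noise to imitate graininess.
            value = image[src_y][src_x] + rng.gauss(0.0, noise_std)
            row.append(min(max(value, 0.0), 1.0))  # keep values in [0, 1]
        augmented.append(row)
    return augmented


if __name__ == "__main__":
    sample = [[0.5] * 4 for _ in range(3)]
    variant = augment(sample, rng=random.Random(0))
    print(len(variant), len(variant[0]))  # same shape as the input
```

Because each training pass sees a slightly different variant of every image, the model has less opportunity to memorize individual pictures. In practice a deep learning framework's built-in augmentation utilities would normally do this work on real image tensors.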
At the final stages, we exported the model to an H5 (HDF5) file, a format commonly used across industries from healthcare to aerospace, for testing. We tested it both manually and automatically, using preset scripts.

Accuracy: The model we’ve developed uses binary classification with a confidence threshold. What does this mean? If the model is at least 80% confident that the lungs are unaffected, it reports them as healthy. If the figure is below 80%, it assumes the lungs might be affected and require medical attention.

How It Works: The user opens the web application in their browser, uploads a lung image, sends it to the service and receives feedback. The feedback shows whether the lungs are healthy or whether a doctor should take a look at the image.

Result: The tool we’ve built can help reduce human error in identifying pneumonia. This is particularly useful during the pandemic, when doctors are overloaded and might overlook some signs of illness. We can also scale the model up to identify other diseases. Scaling the model down will make it easier to integrate into other systems, speed up processing and allow multiple images to be analyzed simultaneously.
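The 80% rule from the Accuracy section can be written down as a small decision function. This is a minimal sketch under assumptions: it supposes the model outputs a single probability that the lungs are healthy, and the names `classify` and `HEALTHY_THRESHOLD` are hypothetical rather than taken from the project.

```python
HEALTHY_THRESHOLD = 0.80  # the 80% cut-off described in the case study


def classify(p_healthy, threshold=HEALTHY_THRESHOLD):
    """Map the model's confidence that the lungs are healthy to feedback.

    `p_healthy` is assumed to be a probability in [0, 1].
    """
    if not 0.0 <= p_healthy <= 1.0:
        raise ValueError("p_healthy must be between 0 and 1")
    if p_healthy >= threshold:
        return "healthy"
    # Below the threshold the tool does not diagnose; it asks for a doctor.
    return "needs medical review"


if __name__ == "__main__":
    for score in (0.93, 0.80, 0.42):
        print(score, classify(score))
```

Setting the bar at 80% rather than 50% biases the tool toward referring borderline images to a doctor, which suits a screening aid where a missed case costs more than an extra review.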