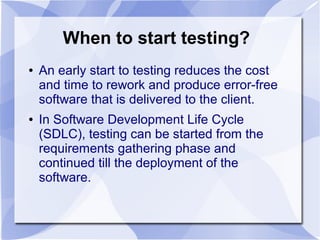

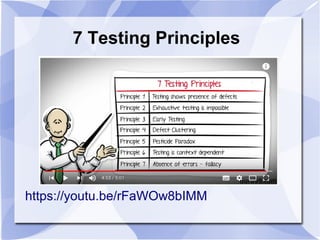

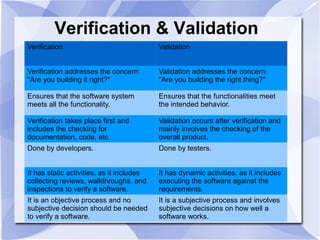

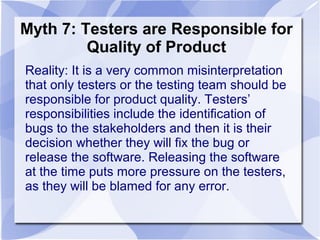

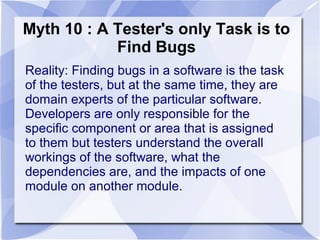

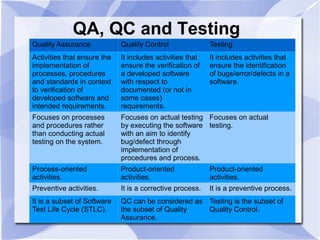

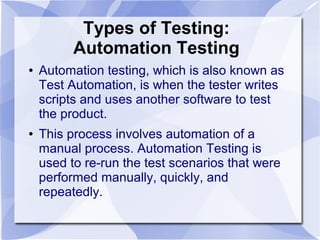

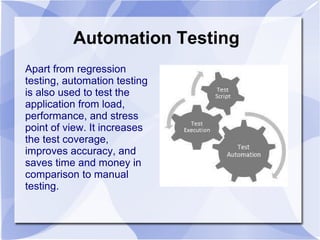

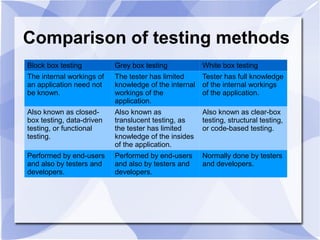

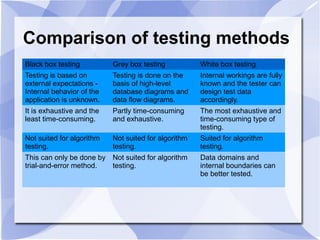

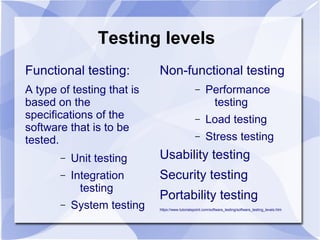

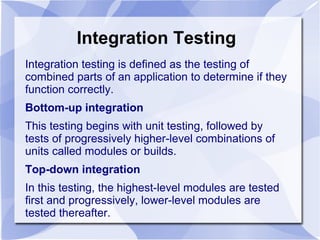

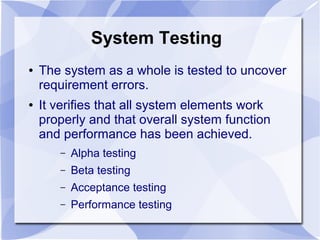

The document covers software testing: types of testing (manual vs. automated), testing methods (black-box, white-box, grey-box), testing levels (unit, integration, system), and common myths about testing. It defines each technique with examples and clarifies misunderstandings about the responsibilities and goals of testing. Embedded videos further explain key concepts such as unit testing, integration testing, and the differences between testing approaches.