Ranking Twitter Conversations

Web Mining Course Project Presentation

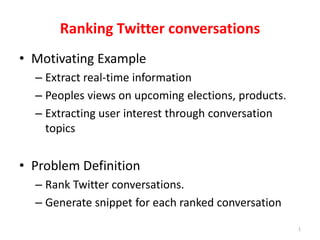

Ranking Twitter Conversations

- 1. Ranking Twitter conversations
     - Motivating example
       - Extract real-time information
       - People's views on upcoming elections and products
       - Extract user interests through conversation topics
     - Problem definition
       - Rank Twitter conversations
       - Generate a snippet for each ranked conversation

- 2. Related work
     - Wang, Hao, Zhengdong Lu, Hang Li, and Enhong Chen. "A Dataset for Research on Short-Text Conversations." In EMNLP, pp. 935-945. 2013.
     - Key idea
       - Retrieval-based response model for short-text conversation
     - Their solution
       - Considered a few selected topics from Sina Weibo
       - Semantic matching between post and response
       - Post-response similarity
     - Their results
       - Mean average precision: 0.621
       - Retrieval is fairly effective at capturing semantic relevance, but relatively weak at modeling logical consistency
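
For readers unfamiliar with the metric quoted above, mean average precision (MAP) averages, over queries, the precision measured at each rank where a relevant item appears. The sketch below is a generic illustration with invented relevance labels, not the evaluation code from the cited paper. The function names are hypothetical, and it assumes every relevant item appears somewhere in the ranked list.

```python
from typing import List


def average_precision(relevance: List[bool]) -> float:
    """Average precision for one ranked list of relevance labels.

    Assumes every relevant item appears somewhere in the list.
    """
    hits = 0
    precision_sum = 0.0
    for rank, is_relevant in enumerate(relevance, start=1):
        if is_relevant:
            hits += 1
            # Precision at this rank: relevant items seen so far / rank.
            precision_sum += hits / rank
    return precision_sum / hits if hits else 0.0


def mean_average_precision(runs: List[List[bool]]) -> float:
    """Mean of the per-query average precision values."""
    if not runs:
        return 0.0
    return sum(average_precision(run) for run in runs) / len(runs)


if __name__ == "__main__":
    # Two toy queries with invented relevance judgments.
    toy_runs = [[True, False, True], [False, True]]
    print(mean_average_precision(toy_runs))
```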

- 3. Our methodology
     - Key idea
       - In addition to using an inverted index, give an importance score to each tweet based on its position and to each user based on their appearances.
     - Solution description
       - Filter tweets
       - Create a word index
       - Account for SMS language
       - Score tweets according to TF, tweet score, and user score
       - Weight the TF score by word type
         - Hashtag, user mention, other words
       - Generate snippets
     - Our approach ranks Twitter conversations rather than just finding responses to tweets.
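
The slides do not give the scoring formulas, so the following is only a minimal sketch of how TF, word type, tweet position, and user appearances might be combined. Everything concrete in it is an assumption made for illustration: the weights in `TYPE_WEIGHTS`, the rule that earlier tweets score higher, the rule that a user's score is their share of the conversation's tweets, and the way the parts are multiplied and summed.

```python
from collections import Counter
from typing import Dict, List, Tuple

# Hypothetical weights per word type; the slides do not state values.
TYPE_WEIGHTS = {"hashtag": 3.0, "mention": 2.0, "word": 1.0}

Tweet = Tuple[str, str]  # (user, text)


def word_type(token: str) -> str:
    """Classify a token as hashtag, user mention, or ordinary word."""
    if token.startswith("#"):
        return "hashtag"
    if token.startswith("@"):
        return "mention"
    return "word"


def tweet_position_score(position: int) -> float:
    """Assumed rule: earlier tweets in a conversation count more."""
    return 1.0 / (position + 1)


def user_scores(conversation: List[Tweet]) -> Dict[str, float]:
    """Assumed rule: a user's score is their share of the tweets."""
    counts = Counter(user for user, _ in conversation)
    return {user: n / len(conversation) for user, n in counts.items()}


def conversation_score(conversation: List[Tweet], query: List[str]) -> float:
    """Sum, over tweets, of type-weighted TF times (tweet score + user score).

    Query terms are expected in lowercase.
    """
    users = user_scores(conversation)
    total = 0.0
    for position, (user, text) in enumerate(conversation):
        tf = Counter(text.lower().split())
        weighted_tf = sum(tf[q] * TYPE_WEIGHTS[word_type(q)] for q in query)
        total += weighted_tf * (tweet_position_score(position) + users[user])
    return total
```

Under these assumptions, conversations are ranked by sorting on `conversation_score` in descending order. A hashtag match in the opening tweet by a frequent participant contributes the most.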

- 4. Processing steps
     1. Parse Twitter data
     2. Filter valid tweets
     3. Extract conversations
     4. Remove stop words
     5. Remove duplicate words within a tweet
     6. Create the inverted word index
     7. Calculate user and tweet scores
     8. Get the query
     9. Parse the words in the query
     10. Expand SMS words
     11. Calculate each conversation's score from TF, tweet score, and user score
     12. Generate snippets and display the results
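
Several of these steps (stop-word removal, de-duplication, the inverted index, SMS expansion, and snippet generation) can be sketched in a few lines. This is an illustration under stated assumptions, not the project's code: the stop-word and SMS lists are tiny invented stand-ins, all function names are hypothetical, and the snippet rule (the first tweet containing a query term, truncated) is a guess at one reasonable behavior.

```python
from collections import defaultdict
from typing import Dict, List, Set

# Tiny illustrative lists; the project's own 119 stop words are not in the slides.
STOP_WORDS = {"the", "a", "is", "to", "of"}
SMS_WORDS = {"gr8": "great", "2moro": "tomorrow", "u": "you"}  # hypothetical


def tokenize(text: str) -> List[str]:
    """Lowercase, drop stop words, and drop repeated words, keeping order."""
    seen: Set[str] = set()
    tokens: List[str] = []
    for token in text.lower().split():
        if token in STOP_WORDS or token in seen:
            continue
        seen.add(token)
        tokens.append(token)
    return tokens


def build_index(conversations: Dict[int, List[str]]) -> Dict[str, Set[int]]:
    """Map each word to the ids of the conversations that contain it."""
    index: Dict[str, Set[int]] = defaultdict(set)
    for conv_id, tweets in conversations.items():
        for tweet in tweets:
            for token in tokenize(tweet):
                index[token].add(conv_id)
    return index


def expand_query(query: str) -> List[str]:
    """Replace SMS shorthand with full words, then tokenize."""
    expanded = " ".join(SMS_WORDS.get(w, w) for w in query.lower().split())
    return tokenize(expanded)


def search(index: Dict[str, Set[int]], query: str) -> Set[int]:
    """Ids of conversations containing at least one expanded query term."""
    matches: Set[int] = set()
    for term in expand_query(query):
        matches |= index.get(term, set())
    return matches


def snippet(tweets: List[str], terms: List[str], width: int = 60) -> str:
    """Assumed rule: first tweet mentioning any query term, truncated."""
    wanted = set(terms)
    for tweet in tweets:
        if wanted & set(tweet.lower().split()):
            return tweet[:width]
    return tweets[0][:width] if tweets else ""
```

The candidate set returned by `search` would then be scored and sorted before snippets are generated for display.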

- 5. Dataset and experimental settings
     - Dataset details
       - 12077 tweets
       - 4521 conversations (length >= 2)
       - 119 stop words
     - Experimental settings
       - Toggle the following constraints on and off
         - Duplicate words
         - Stop words
         - Tweet/user score
       - Expand SMS words in the query
     - Evaluation metric
       - Results were judged subjectively and obtained iteratively

- 6. Results and summary
     - Results and analysis
       - Subjective in nature. Accuracy could not be measured without knowing the context of each conversation.
     - What we learned
       - A basic understanding of how documents can be ranked for a given query
     - Future work
       - Infer the context of the conversation
       - Calculate precision and recall by programmatically tagging tweets
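
Once tweets or conversations carry relevance tags, the precision and recall mentioned under future work reduce to simple set arithmetic. The sketch below assumes the tags are available as a set of relevant conversation ids; the function name and the example ids are hypothetical.

```python
from typing import Set, Tuple


def precision_recall(retrieved: Set[int], relevant: Set[int]) -> Tuple[float, float]:
    """Precision and recall of a retrieved set against tagged relevant ids."""
    true_positives = len(retrieved & relevant)
    # Guard against empty sets to avoid division by zero.
    precision = true_positives / len(retrieved) if retrieved else 0.0
    recall = true_positives / len(relevant) if relevant else 0.0
    return precision, recall


if __name__ == "__main__":
    # Invented ids: four conversations returned, three tagged relevant.
    print(precision_recall({1, 2, 3, 4}, {2, 4, 5}))
```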