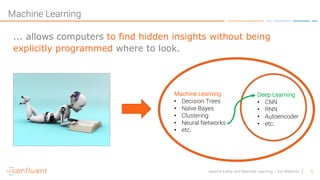

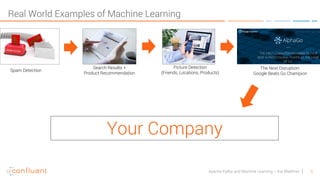

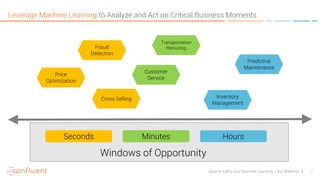

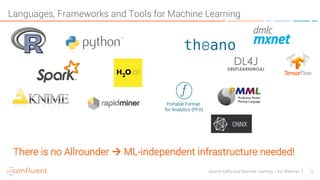

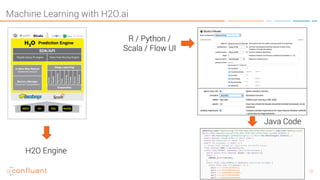

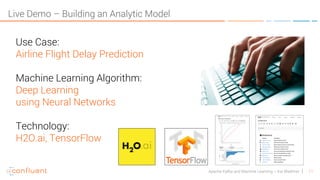

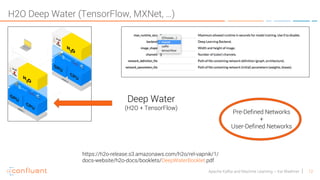

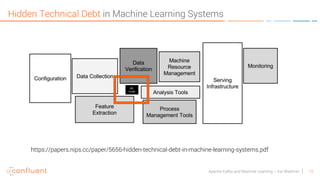

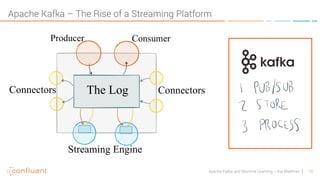

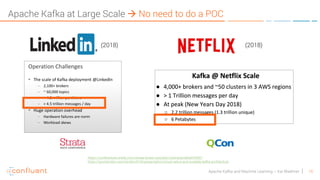

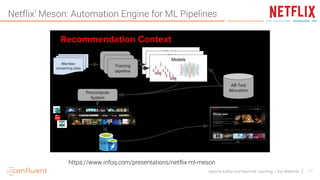

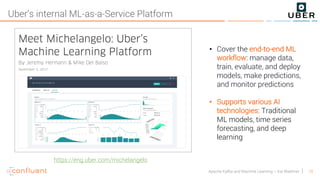

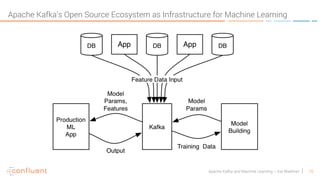

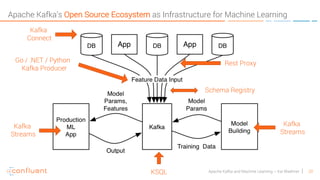

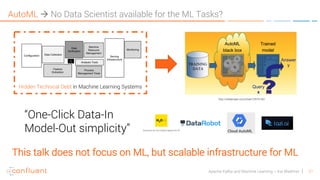

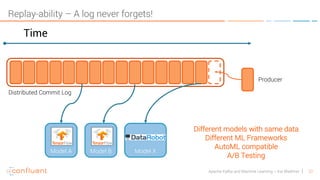

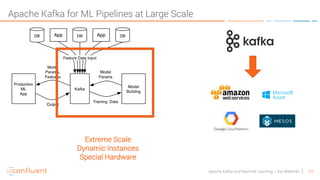

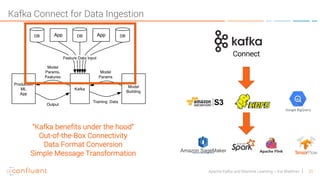

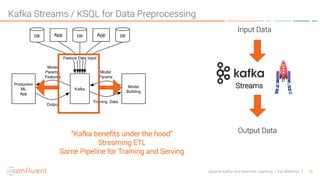

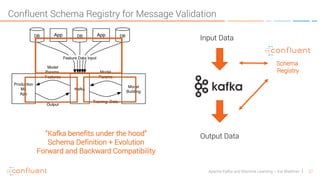

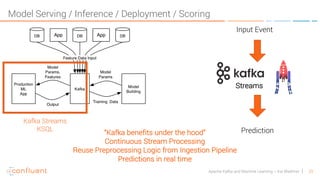

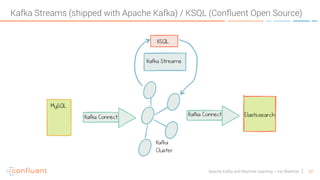

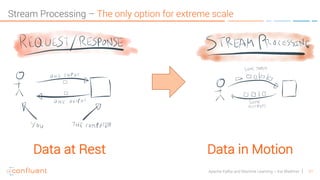

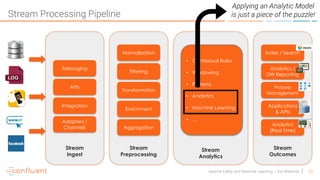

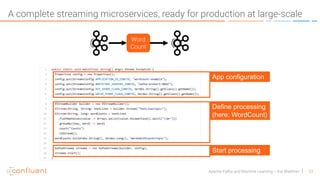

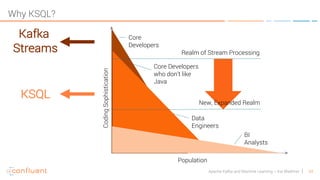

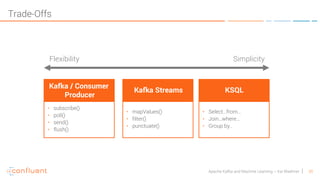

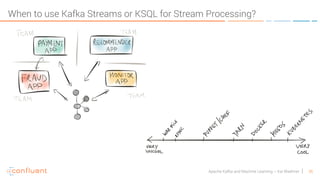

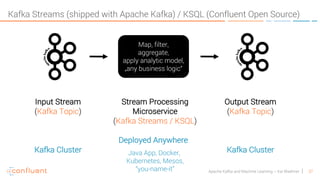

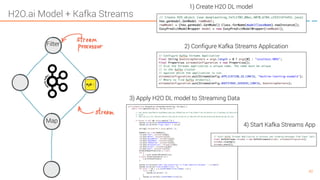

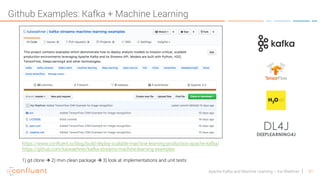

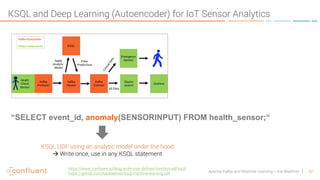

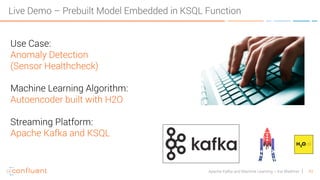

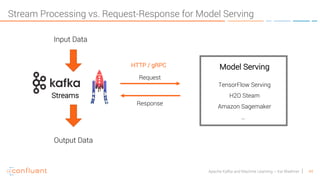

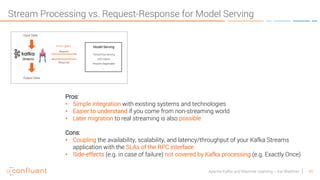

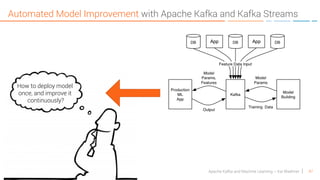

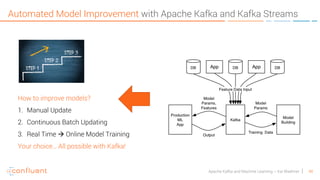

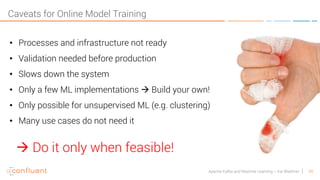

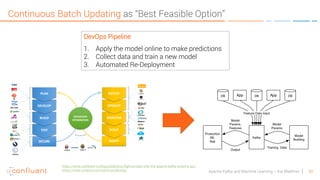

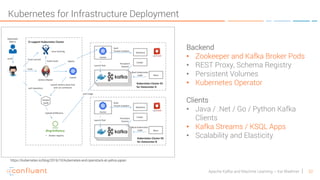

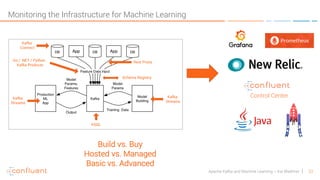

The document discusses how to use the Apache Kafka ecosystem to build machine learning infrastructure at extreme scale, covering key components such as data ingestion, real-time predictions, and monitoring. It emphasizes the value of machine learning across a range of business applications and outlines how different machine learning frameworks and tools fit into that infrastructure. It also presents real-world examples and use cases, including anomaly detection and flight delay prediction, to show how Kafka supports scalable machine learning workflows.
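
To make the anomaly detection use case more concrete, the following is a minimal sketch of record-at-a-time scoring, the pattern a stream processor would apply to each incoming event. It is an illustration under stated assumptions, not the method used in the document: the rolling z-score rule, the function name `score_stream`, the `window` and `threshold` parameters, and the sample values are all hypothetical. A plain Python list stands in for an input topic, so no Kafka client or broker is involved.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Iterable, Iterator, Tuple


def score_stream(
    readings: Iterable[float],
    window: int = 5,
    threshold: float = 3.0,
) -> Iterator[Tuple[float, bool]]:
    """Yield (value, is_anomaly) for each reading, one record at a time.

    Hypothetical rule: a value is anomalous when it lies more than
    `threshold` standard deviations from the mean of the last `window`
    normal values.
    """
    history = deque(maxlen=window)  # keeps only the most recent normal values
    for value in readings:
        is_anomaly = False
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            # Skip the check when the window has no variance at all.
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                is_anomaly = True
        yield value, is_anomaly
        # Only normal values update the baseline, so a spike does not
        # distort the statistics used for later records.
        if not is_anomaly:
            history.append(value)


# Assumed sample data standing in for records read from an input topic.
sensor_values = [10.0, 10.2, 9.9, 10.1, 10.0, 10.3, 55.0, 10.1]
flagged = [v for v, bad in score_stream(sensor_values) if bad]
```

In a real deployment the list would be replaced by records consumed from a topic, and each `(value, is_anomaly)` pair would be written to an output topic for monitoring or alerting. The generator keeps only a small window of state, which is what allows this kind of scoring to run continuously on an unbounded stream.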