
Investigating Performance Using Apache Tez

Ace Haidrey, Ad-Analytics Team - Engineering

July 28, 2016

Abstract

Big data has recently become a common buzzword in the industry, but few understand the true extent of the term. Big data refers to massive data sets with large, complex, and varied structure, which makes them difficult to store and to analyze for results or further processing. Much research today aims to reveal hidden patterns or correlations within these data sets to gain richer and deeper insights. These insights are extremely valuable to companies for multiple reasons, such as gaining an advantage over competitors and making their engineering teams more productive. One big data framework, Hadoop MapReduce, has become the de facto standard for processing voluminous data on a large cluster of machines. However, some jobs do not map naturally onto this programming model. This poster examines the shortcomings of Hadoop MapReduce and explores an alternative programming model: Apache Tez.

Hadoop was created to process large-scale data on clusters of computers. It is built on two components: the Hadoop Distributed File System (HDFS) and MapReduce (MR). Hadoop distributes the data across the nodes in its cluster and then computes on that data locally on each node. It maintains reliability by replicating data across multiple nodes, so that no data is lost if a machine fails.

The MapReduce component provides developers with a higher-level abstraction. While it hides the complexity of writing a distributed program, it severely restricts the input/output model and how problems can be composed: every problem must be formulated as a rigid three-stage process of Map, Shuffle/Sort, and Reduce. This can be seen in the figure below. MR also allows many of these jobs to be chained for sequential execution, which simplifies development, but it is clearly not suitable for all problems. For example, it is a poor fit for iterative workloads, such as machine learning tasks that optimize cost functions, and for interactive workloads such as streaming data processing or data mining. Beyond the rigidity of the model, MR has other shortcomings, such as writing intermediate outputs to disk, which hurts performance on very large data sets. A new model for processing data on Hadoop was therefore needed.
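To make the three stages concrete, here is a minimal single-process sketch of the classic word-count job in Python. It only mimics Map, Shuffle/Sort, and Reduce in memory; it is not Hadoop code, and the function names are illustrative.

```python
from itertools import groupby
from operator import itemgetter


def map_phase(lines):
    """Map: emit a (word, 1) pair for every word in every input line."""
    for line in lines:
        for word in line.split():
            yield (word.lower(), 1)


def shuffle_sort(pairs):
    """Shuffle/Sort: order pairs by key and group their values, as happens between map and reduce."""
    ordered = sorted(pairs, key=itemgetter(0))
    for key, group in groupby(ordered, key=itemgetter(0)):
        yield key, [value for _, value in group]


def reduce_phase(grouped):
    """Reduce: combine all values for one key into a single result."""
    for key, values in grouped:
        yield key, sum(values)


def word_count(lines):
    """Run the three stages in sequence, the only shape a single MR job allows."""
    return dict(reduce_phase(shuffle_sort(map_phase(lines))))


if __name__ == "__main__":
    print(word_count(["big data is big", "data is everywhere"]))
```

Chaining several such jobs means the output of one reduce becomes the input of the next map; in Hadoop that hand-off goes through HDFS, which is the intermediate-write cost described above.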

Hadoop MapReduce

Apache Tez

My Project: Adding the Execution Option

Apache Tez uses directed acyclic graphs (DAGs) and can express everything MapReduce does, usually with better performance. A MapReduce job can be thought of as a DAG with a fixed shape (a map stage feeding a reduce stage through the shuffle), whereas Tez allows as many vertices as a job needs. Data moves between vertices through shared memory, files, or network pipelines. One reason Tez works so well for Hive is that it is a more natural fit for SQL query plans: Tez models an application as a dataflow graph in which vertices represent processing logic and edges represent the flow of data between them, much like a query plan. Tez also supports dynamic graph reconfiguration, changing the number of mapper and reducer tasks on the fly as the estimated size of the data output grows or shrinks. Another benefit is that Tez is data-type agnostic: the execution engine is only concerned with moving data through the pipelines, not with the data format (tuple-oriented formats, key-value pairs, CSV, etc.).
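The difference in shape can be sketched as a toy model in Python: a single MapReduce job is always a map → reduce pair, while one Tez DAG can contain, for example, two scans feeding a join, an aggregation, and a sort. This uses only the standard-library `graphlib` module (Python 3.9+); the vertex names are hypothetical, it is not the Tez API, and `estimate_tasks` is a simplified stand-in for runtime reconfiguration, not Tez's actual algorithm.

```python
import math
from graphlib import TopologicalSorter

# Each key is a vertex; its value is the set of upstream vertices it reads from.
# One MapReduce job always has this fixed shape: map feeds reduce via the shuffle.
MAPREDUCE_JOB = {
    "map": set(),
    "reduce": {"map"},
}

# A hypothetical Hive query plan expressed as one Tez DAG:
# two scans feed a join, which feeds an aggregation and then a final sort.
TEZ_QUERY_DAG = {
    "scan_impressions": set(),
    "scan_ads": set(),
    "join": {"scan_impressions", "scan_ads"},
    "aggregate": {"join"},
    "sort": {"aggregate"},
}


def execution_order(dag):
    """Return one valid order in which the vertices can run (producers first)."""
    return list(TopologicalSorter(dag).static_order())


def dataflow_edges(dag):
    """List the (producer, consumer) edges along which data moves."""
    return sorted((src, dst) for dst, srcs in dag.items() for src in srcs)


def estimate_tasks(estimated_bytes, bytes_per_task, max_tasks):
    """Toy model of runtime reconfiguration: size a vertex's task count from its
    estimated input, clamped to [1, max_tasks]. Hypothetical, not Tez's algorithm."""
    return max(1, min(max_tasks, math.ceil(estimated_bytes / bytes_per_task)))
```

Expressed as a chain of MapReduce jobs, the same plan would need several jobs with HDFS round trips between them; inside one DAG those hand-offs become edges within a single job.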

The most convenient advantage of Apache Tez for developers is that it is entirely a client-side application that leverages Apache Hadoop YARN local resources and distributed caches. To use Tez on a cluster, nothing needs to be deployed besides uploading the relevant Tez jars to HDFS. Best of all, Tez can run any MR job without modification, which allows projects that currently depend on MR to be migrated in stages. My project aims to take advantage of this migration path within the Ad-Analytics team and eventually expand to other teams as well.

Over the past 7 weeks, I’ve been working on incorporating the Tez execution engine into existing Hive scripts. Upon further investigation, I found that Hive currently supports three execution engines: MapReduce, Tez, and Spark. I created two ways to set this option for jobs in our Analytics module, which is used by many teams.

The first option is to set the parameter in the VM:

SET_HIVE_EXECUTION_ENGINE = 'TEZ'

The second option is to add the parameter to the job URL:

http://drake-ahaidrey-1.savagebeast.com:9001/analytics/job_control.vm?action=start&name=BrandHiveJob&set_execution_engine=tez

If the option is set in the VM, every job and task runs with that engine; if it is set in the URL, it applies only to that job. There is also an option to leave the Hive execution engine already set in the script unchanged. I am proud to say that after writing unit tests and going through multiple iterations of code review, I was able to commit and merge my changes into the main-insights, release-insights, and main branches.
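The selection logic above can be sketched in Python, assuming the per-job URL parameter takes precedence over the VM-wide setting and that "unset" is represented by `None`. The function names are hypothetical and this is not the Analytics module's code; the only real Hive detail is the `hive.execution.engine` property, whose values are `mr`, `tez`, and `spark`.

```python
import re
from urllib.parse import parse_qs, urlparse

VALID_ENGINES = {"mr", "tez", "spark"}
# Matches an existing "SET hive.execution.engine=..." line in a Hive script.
_ENGINE_SET = re.compile(r"\s*SET\s+hive\.execution\.engine\s*=", re.IGNORECASE)


def engine_from_url(url):
    """Read the set_execution_engine query parameter from a job URL, or None."""
    values = parse_qs(urlparse(url).query).get("set_execution_engine")
    return values[0].lower() if values else None


def resolve_engine(vm_setting, url_setting):
    """Pick the engine for one job; None means 'leave the script unchanged'.

    Assumption: the per-job URL parameter wins over the VM-wide setting.
    """
    for candidate in (url_setting, vm_setting):
        if candidate:
            engine = candidate.lower()
            if engine not in VALID_ENGINES:
                raise ValueError(f"unknown execution engine: {candidate!r}")
            return engine
    return None


def apply_engine(script, engine):
    """Replace any engine SET line in the script with the chosen one (hypothetical helper)."""
    if engine is None:
        return script
    kept = [line for line in script.splitlines() if not _ENGINE_SET.match(line)]
    return "\n".join([f"SET hive.execution.engine={engine};"] + kept)
```

Removing the script's own `SET` line matters because Hive applies `SET` statements in order, so a later one in the script would otherwise silently override the injected engine.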

My Project: Tracking Performance

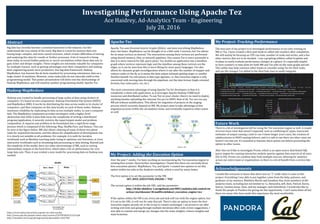

The next part of my project is to investigate the performance of our jobs running on MR vs. Tez. I have created a Hive post hook that collects job counters after each job completes. We will mainly focus on CPU time, the number of reads and writes, and a few other metrics yet to be decided. I use the graphing utilities Graphite and Grafana to evaluate performance changes at a glance. It’s especially helpful to plot counters over time for both the MR and Tez runs of the same job on one graph. This utility may help convince other teams to consider Tez for their tasks and to integrate it easily using the changes I’ve added to the HiveTask class.
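Graphite's plaintext protocol accepts one `metric.path value timestamp` line per data point (by default on TCP port 2003), so the counter-reporting step can be sketched in Python as below. The real hook runs inside Hive (registered through the `hive.exec.post.hooks` property) and is not reproduced here; the `hive.jobs.<job>.<engine>` naming scheme and the counter values are hypothetical.

```python
import socket
import time


def sanitize(part):
    """Graphite uses '.' as its path separator, so replace dots and spaces inside one component."""
    return part.replace(".", "_").replace(" ", "_")


def to_graphite_lines(job_name, engine, counters, timestamp=None):
    """Format job counters as Graphite plaintext lines: '<path> <value> <timestamp>'."""
    ts = int(timestamp if timestamp is not None else time.time())
    # Hypothetical naming scheme: one subtree per job, one branch per engine.
    prefix = f"hive.jobs.{sanitize(job_name)}.{sanitize(engine)}"
    return [
        f"{prefix}.{sanitize(name)} {value} {ts}"
        for name, value in sorted(counters.items())
    ]


def send_to_graphite(lines, host, port=2003):
    """Send newline-terminated lines to a Carbon plaintext listener."""
    payload = "".join(line + "\n" for line in lines).encode("ascii")
    with socket.create_connection((host, port), timeout=5) as sock:
        sock.sendall(payload)
```

Because MR and Tez runs of the same job land under one job prefix, a Grafana panel can overlay the `...mr.*` and `...tez.*` series for that job, which gives the side-by-side view described above.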

Future Work

There is still a lot to investigate about the Tez execution engine. A few unexpected errors have arisen, such as conflicting GC types, inaccurate output estimates causing a task to run 4 times longer (a rare case), and the creation of subdirectories in HDFS causing failures; there are very likely other cases we haven’t run into yet. It’s essential to iron out these issues before presenting the option to other teams.

After this, we’d like to investigate Presto, an open-source distributed SQL query engine for running interactive analytic queries against data sources of all sizes (GB to PB). Presto can combine data from multiple sources, enabling analytics across our entire team or organization, so a service like this could offer a lot of benefit.

Acknowledgements

I would like everyone to know that there was really no “I” in this project. Everything I was able to put together came from the help, patience, and guidance of my mentors, Matthieu Martin and Jonathan Hsu, and of members of the analytics team, including but not limited to Li, Alexandra, Jeff, Dom, Patrick, Karen, Saurav, Gautam, Jenny, Sean, and my manager, Jack Schonbrun. I would also like to thank the people at Pandora for giving me this opportunity. I can’t name them all, but many of them made this experience so worthwhile.
