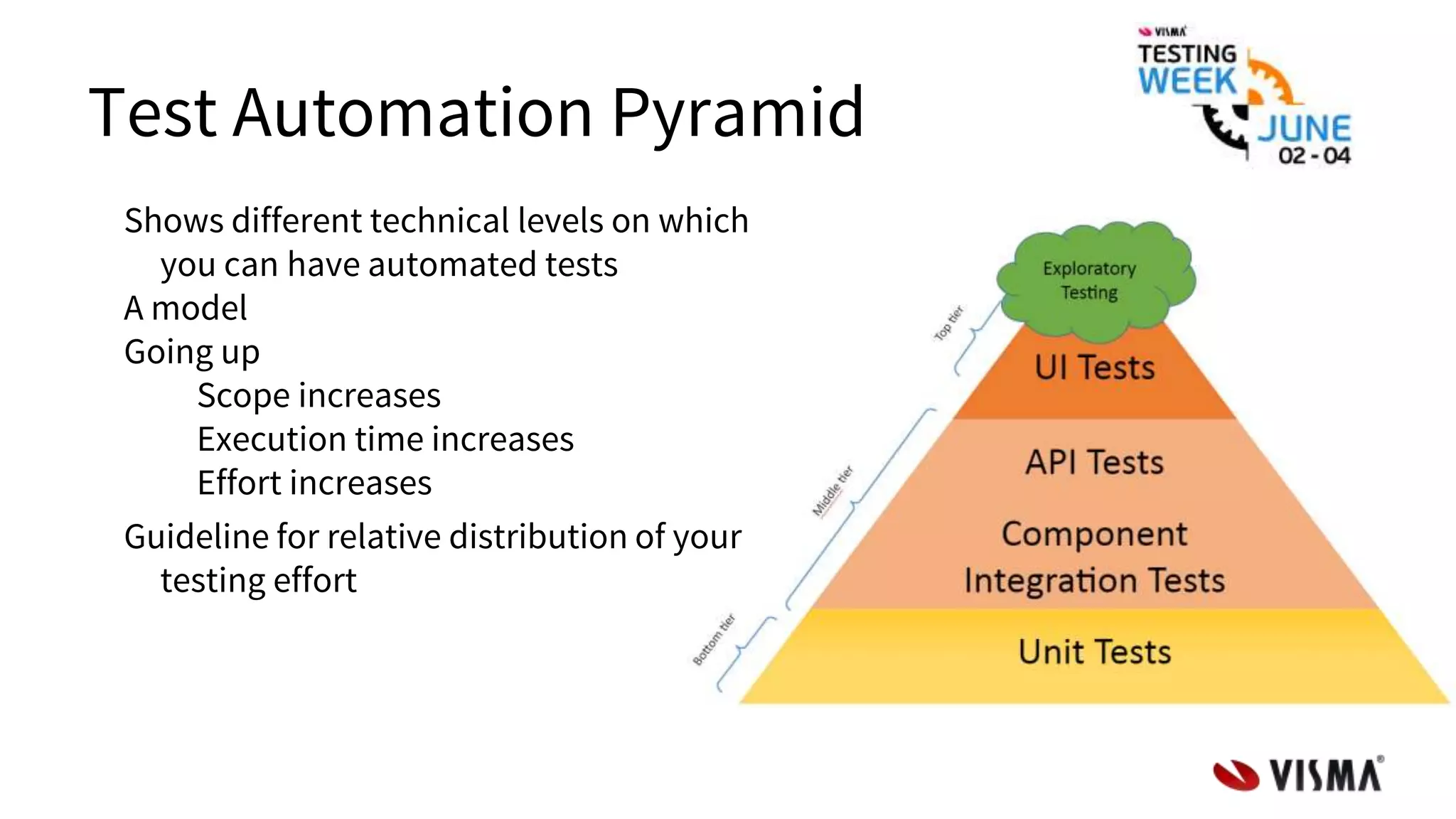

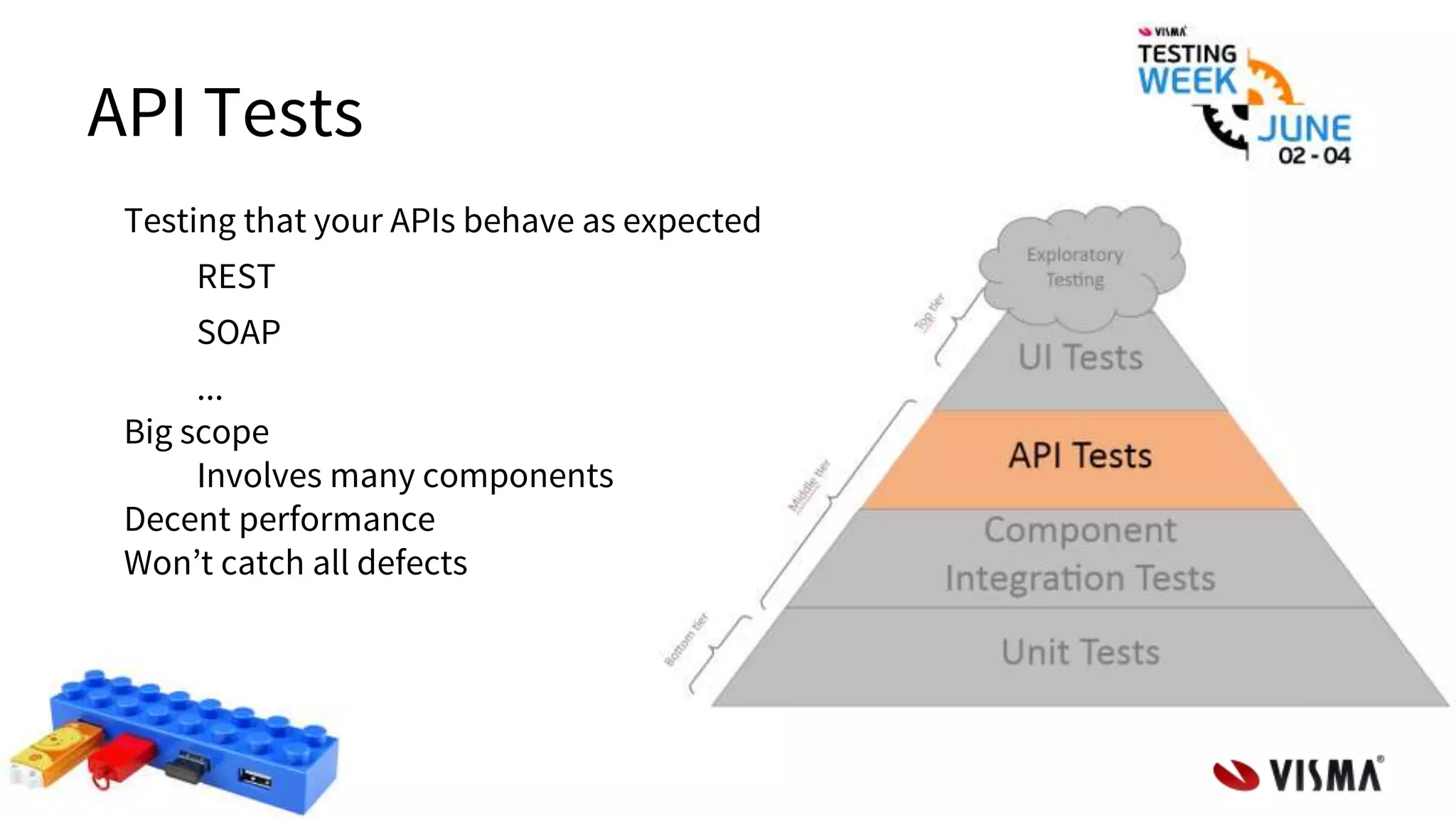

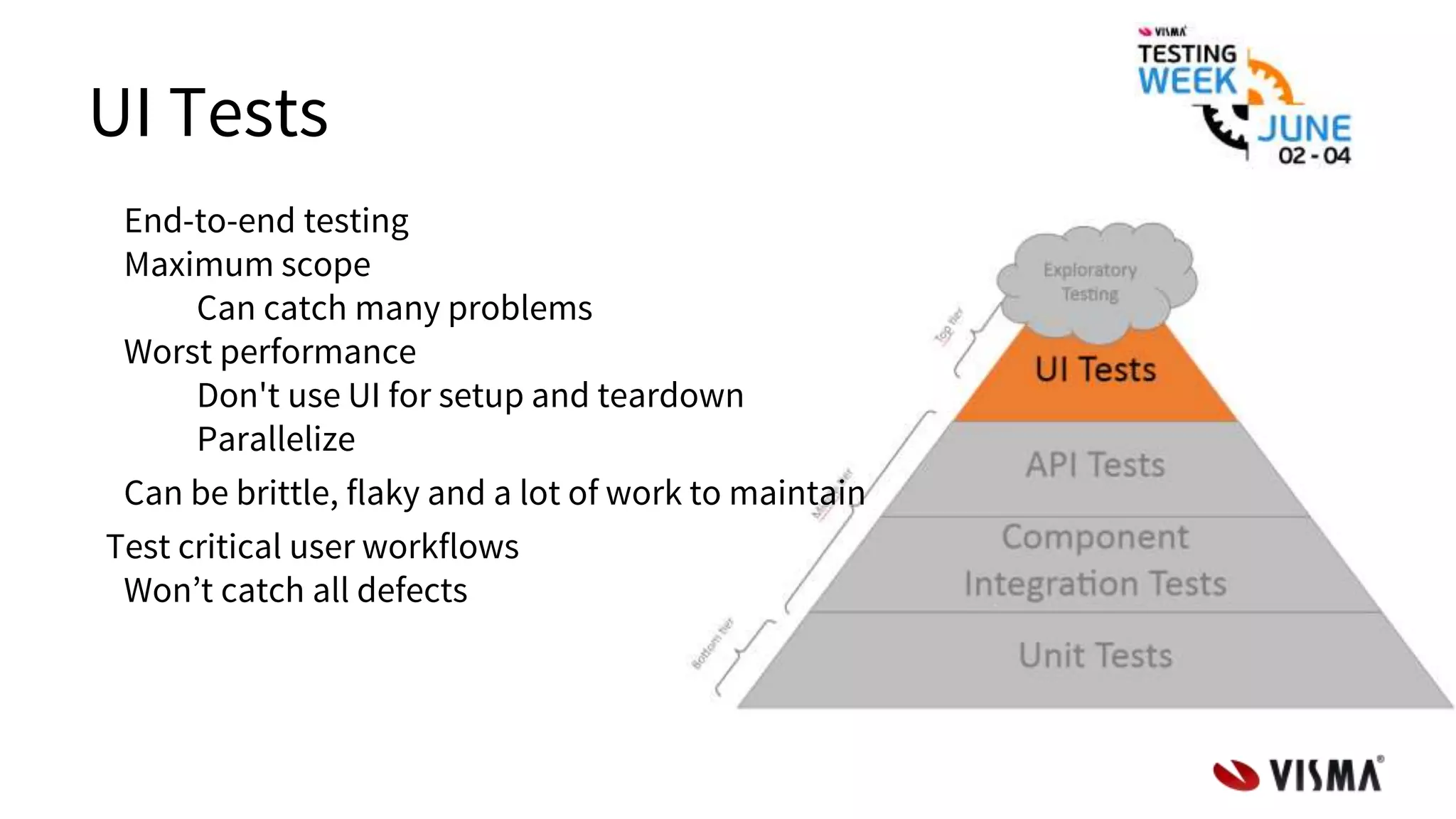

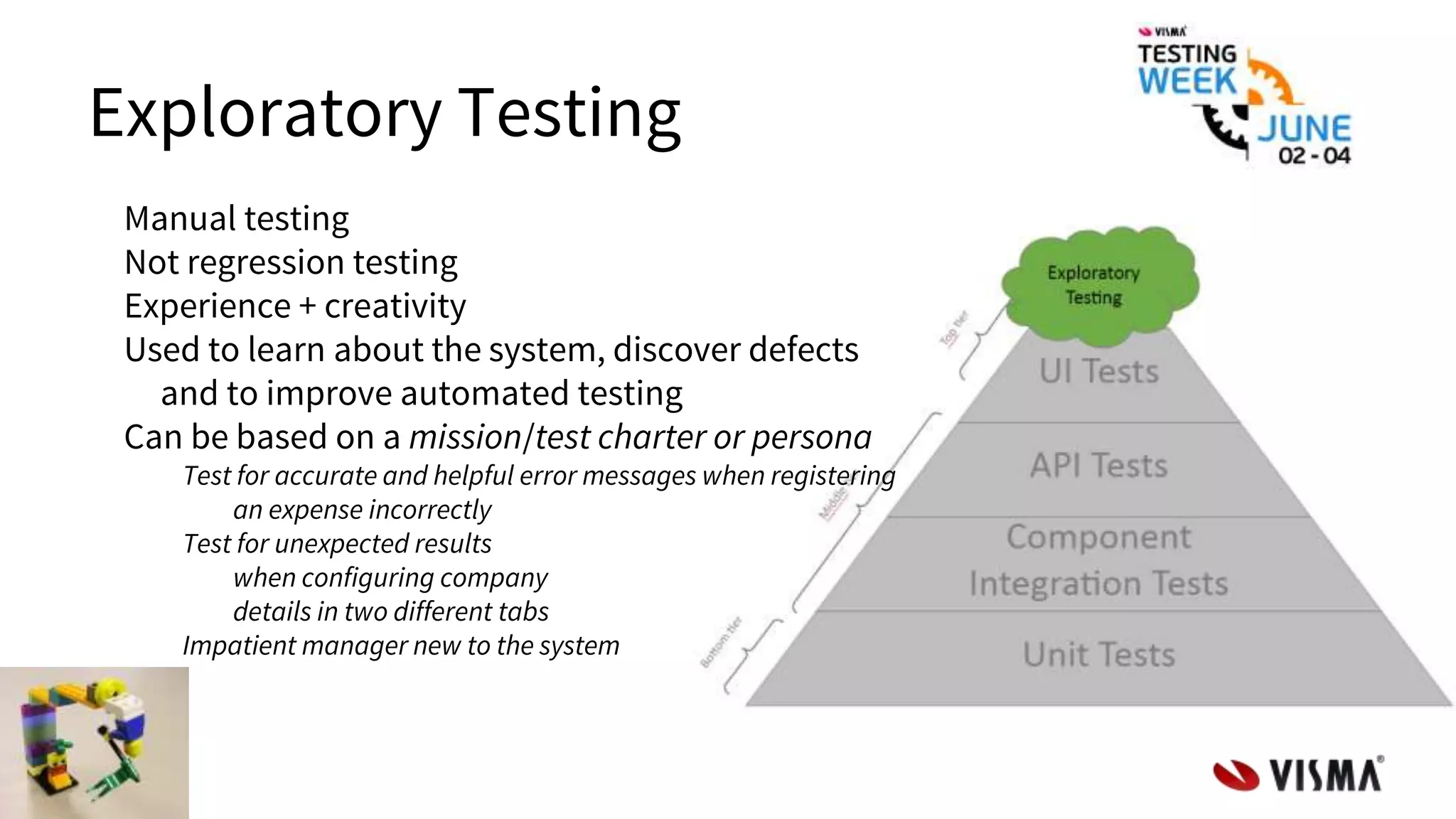

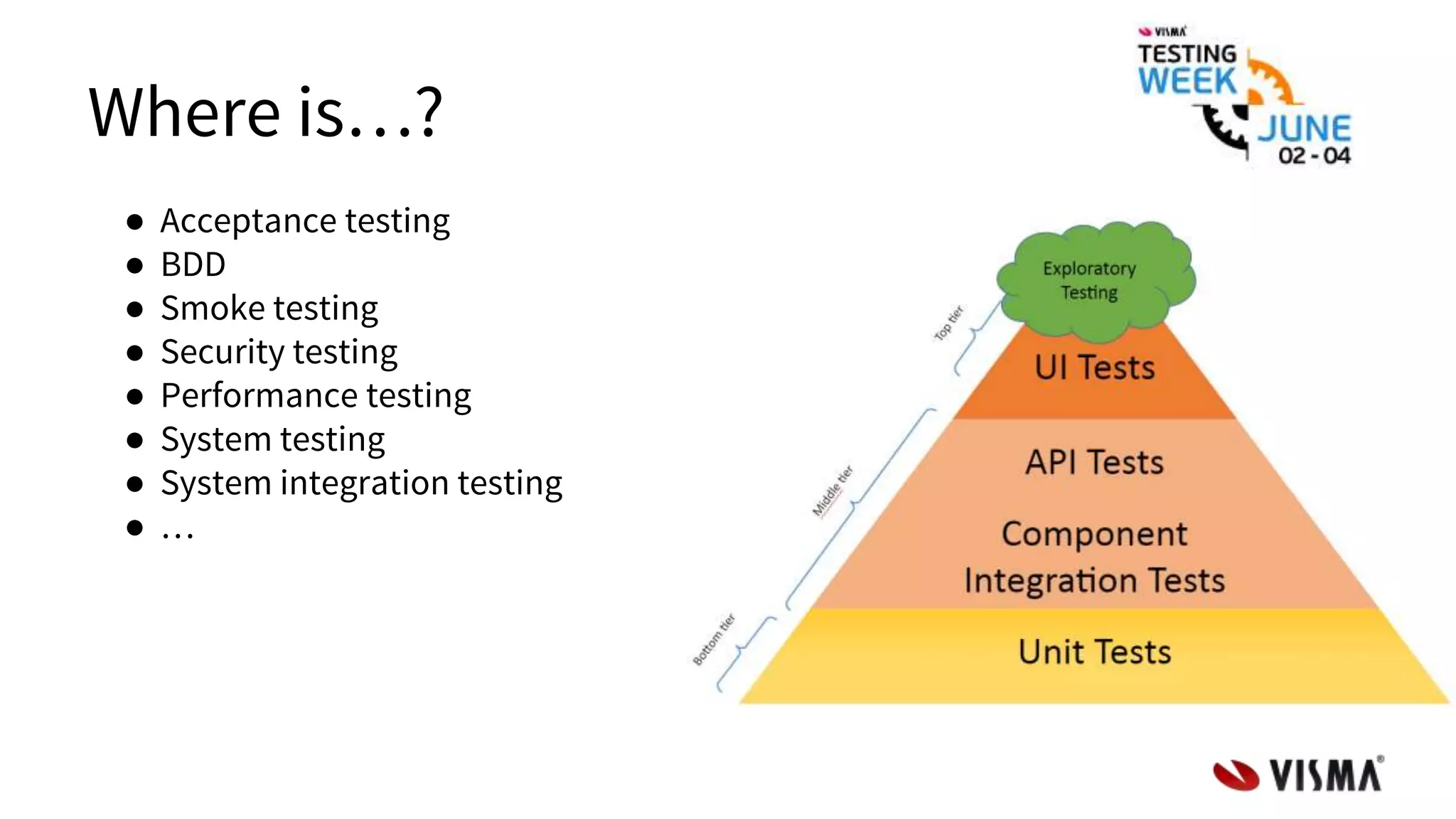

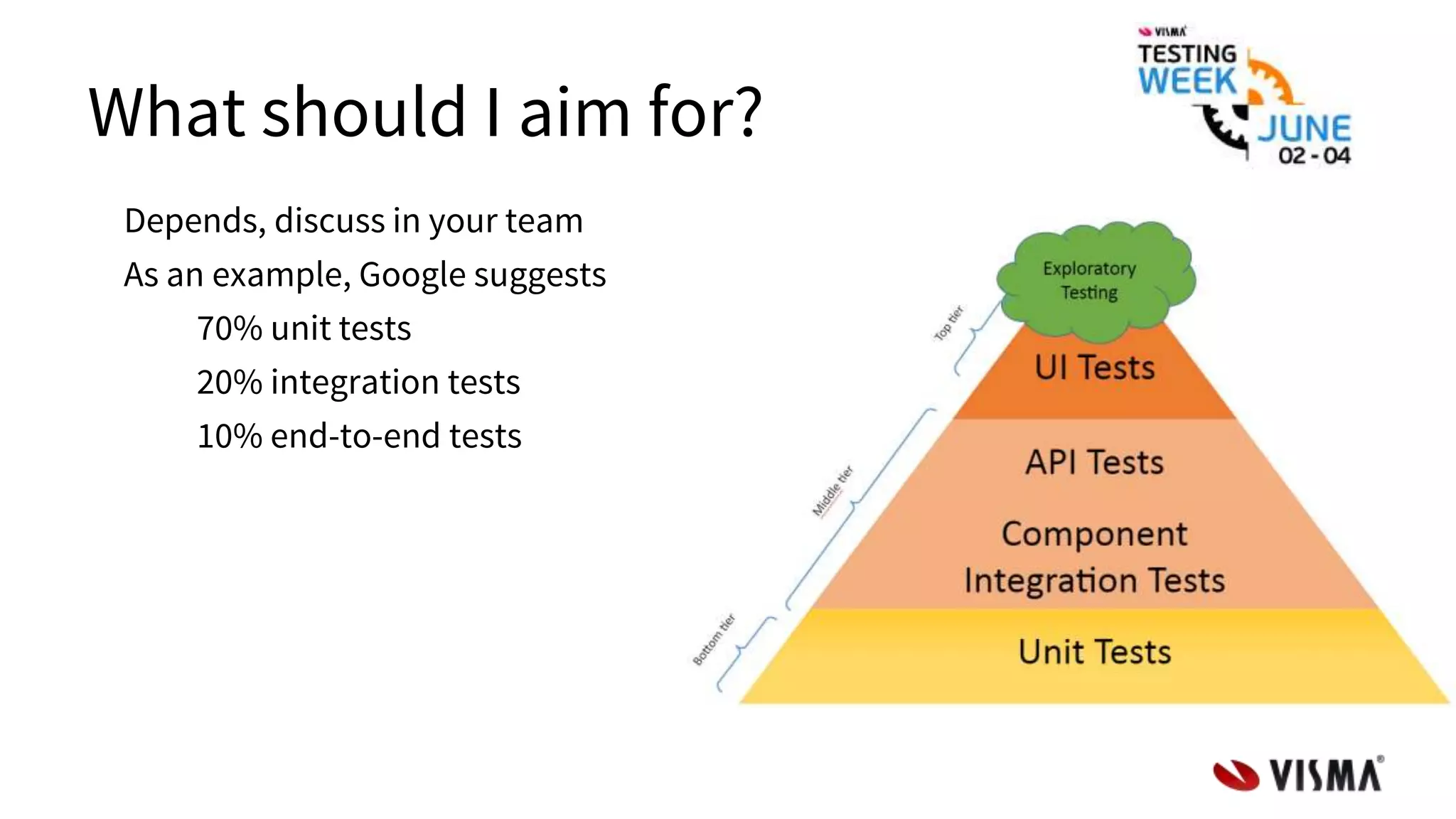

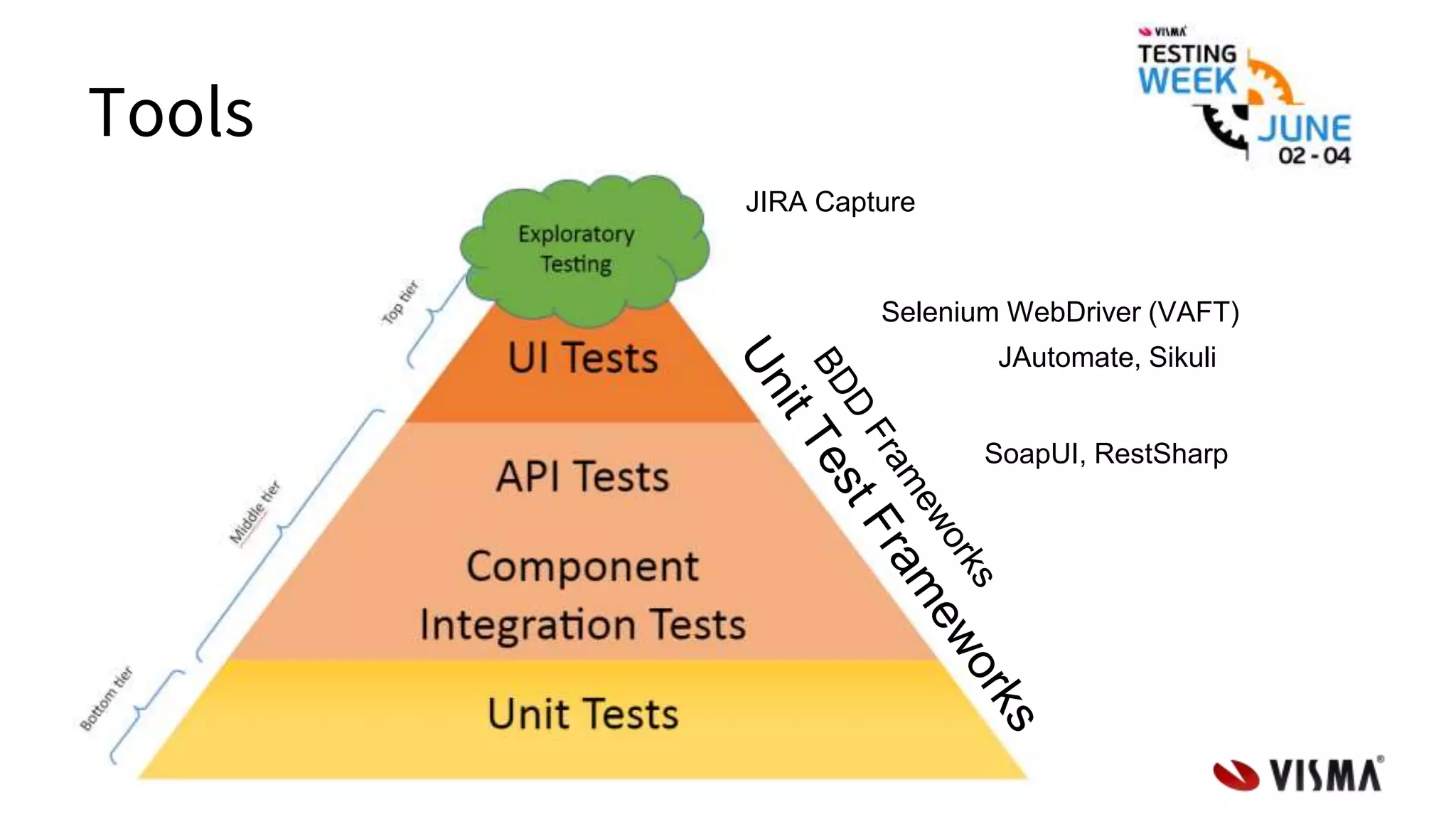

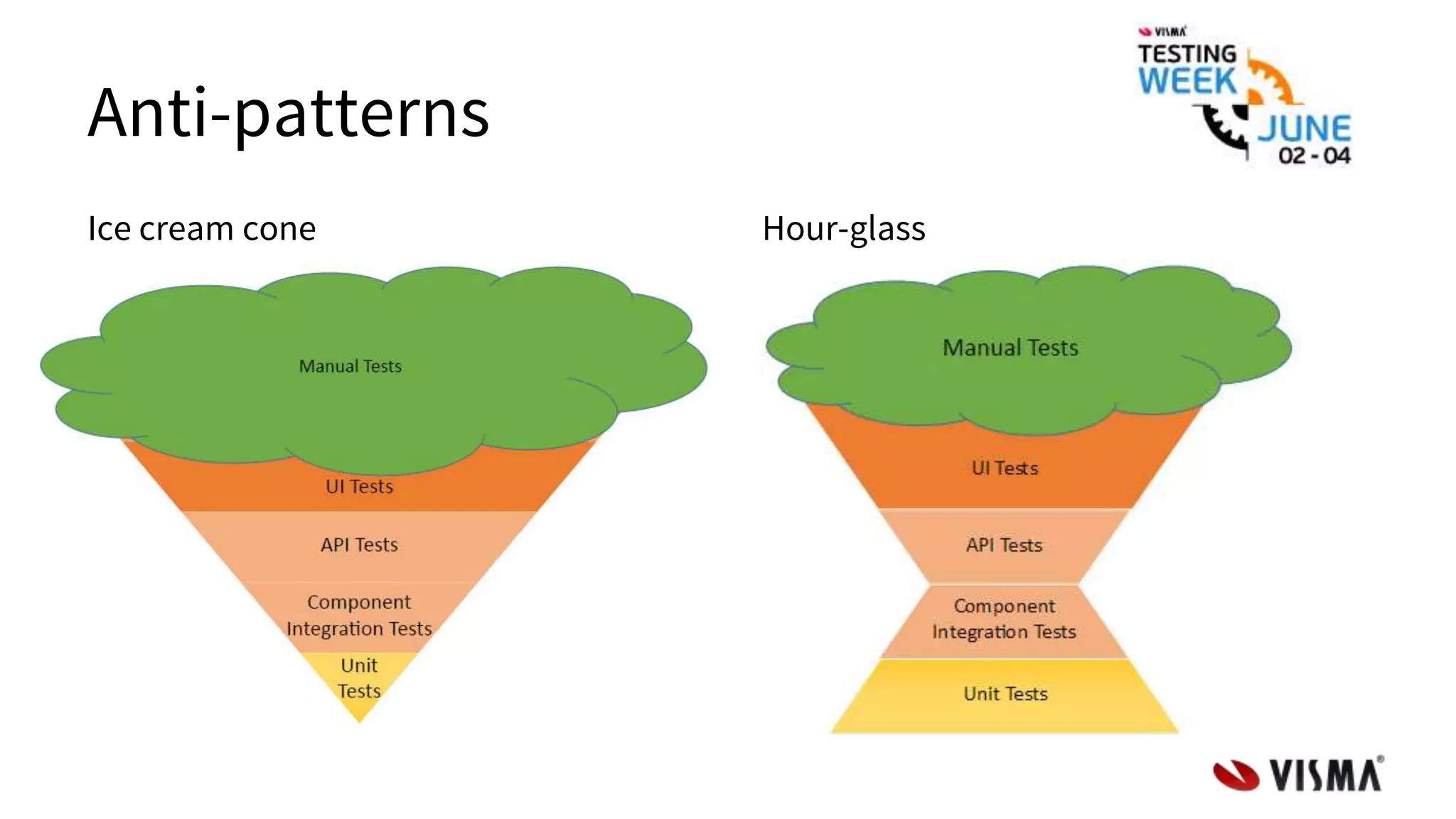

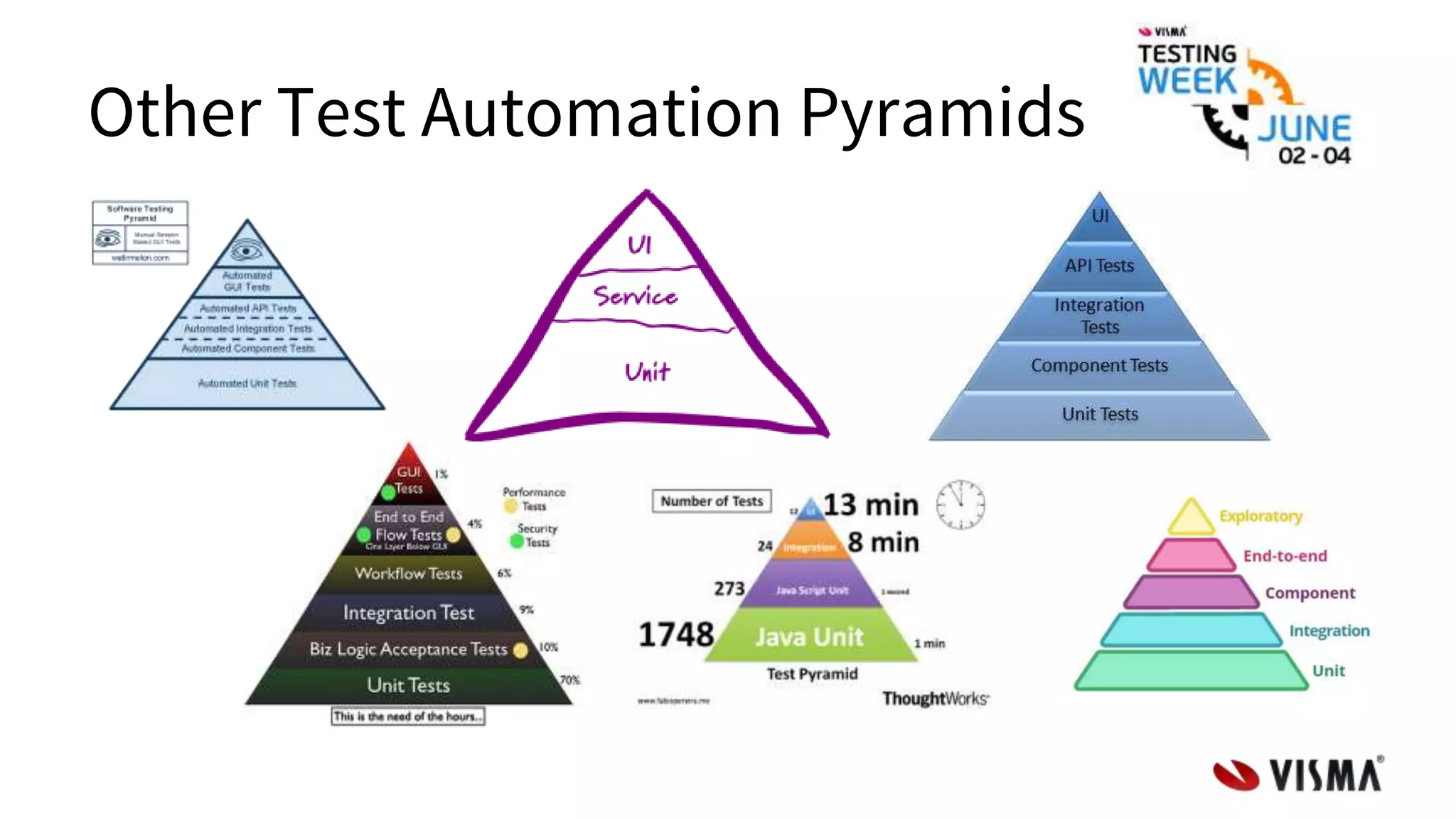

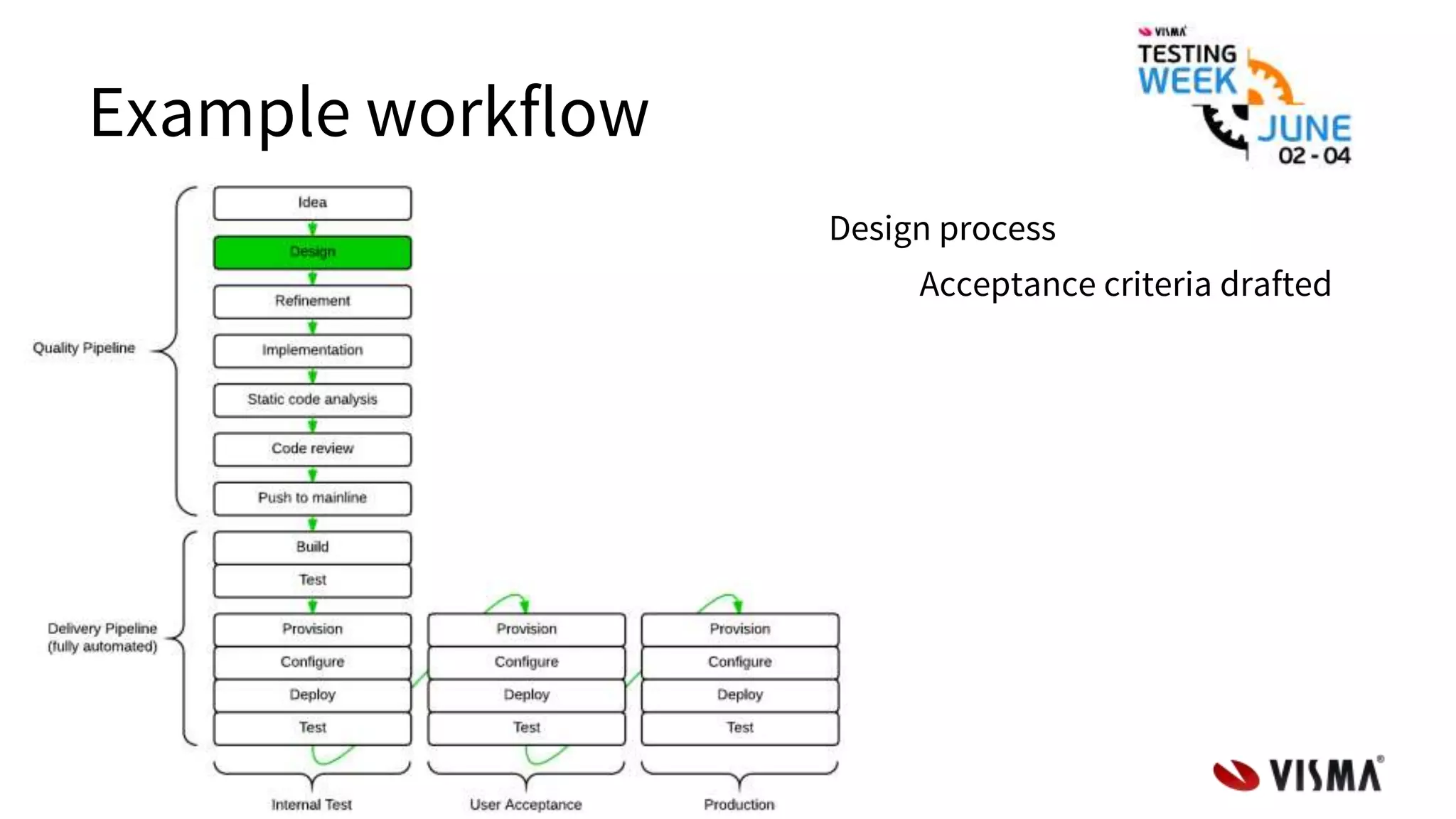

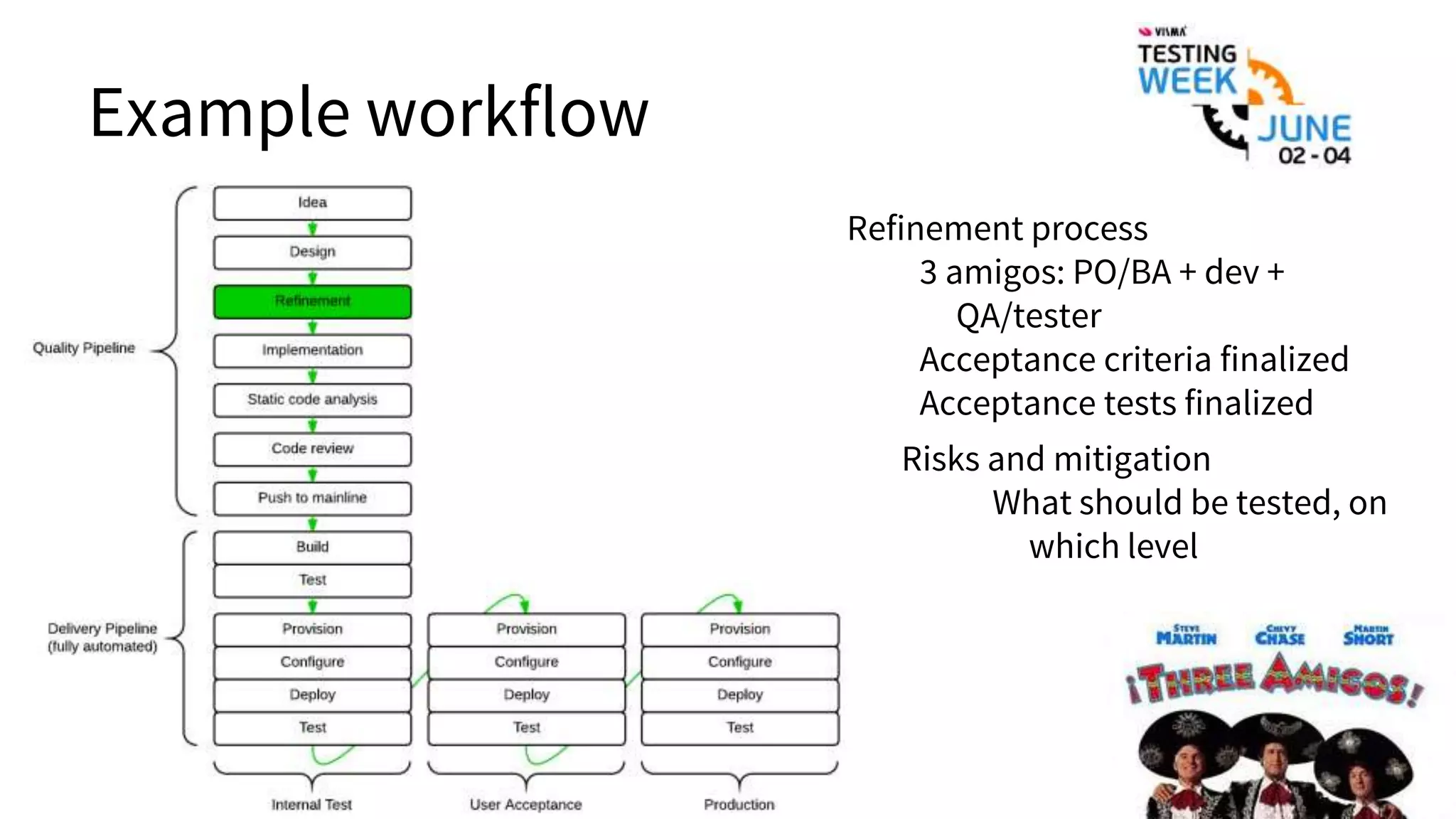

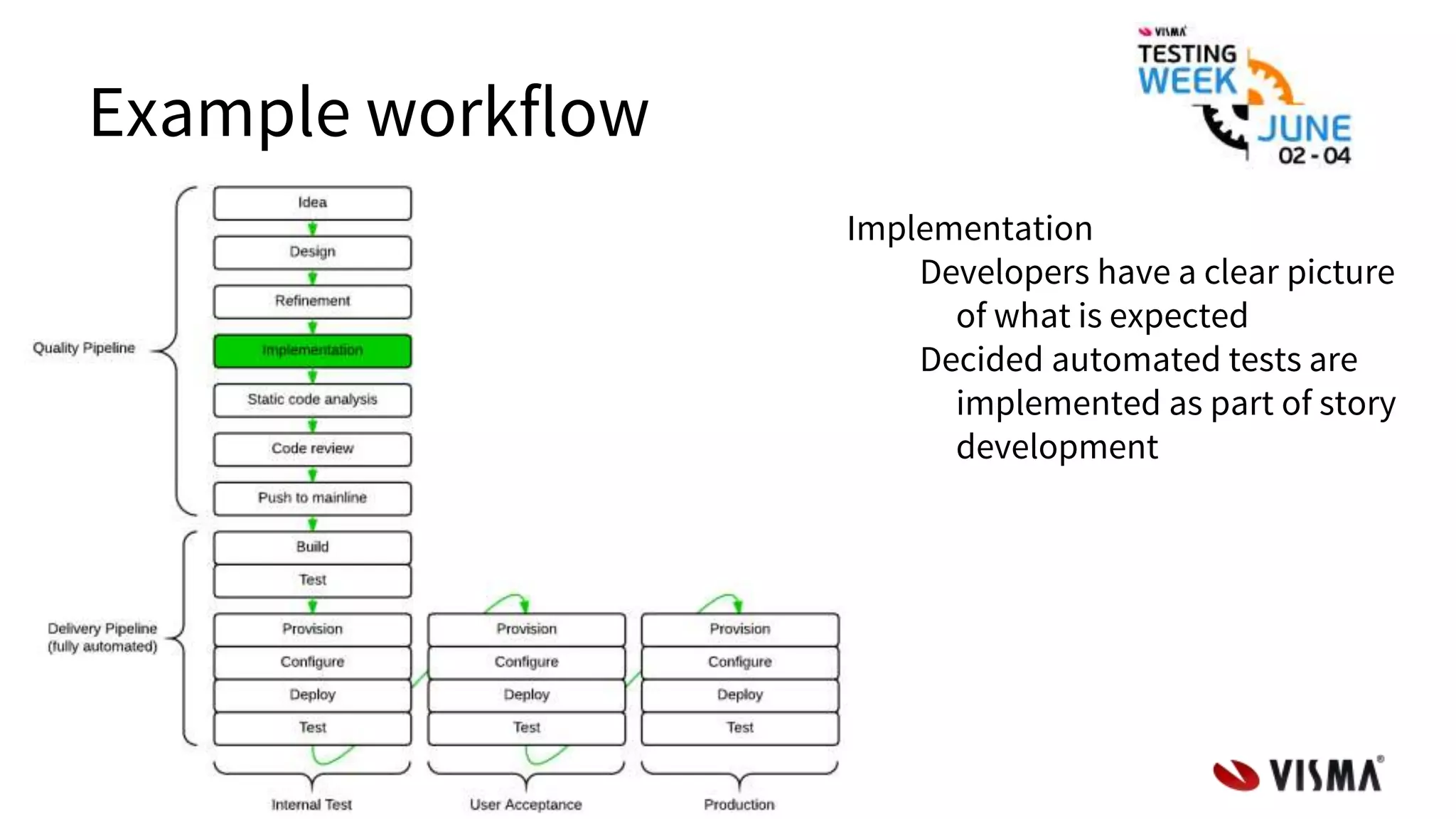

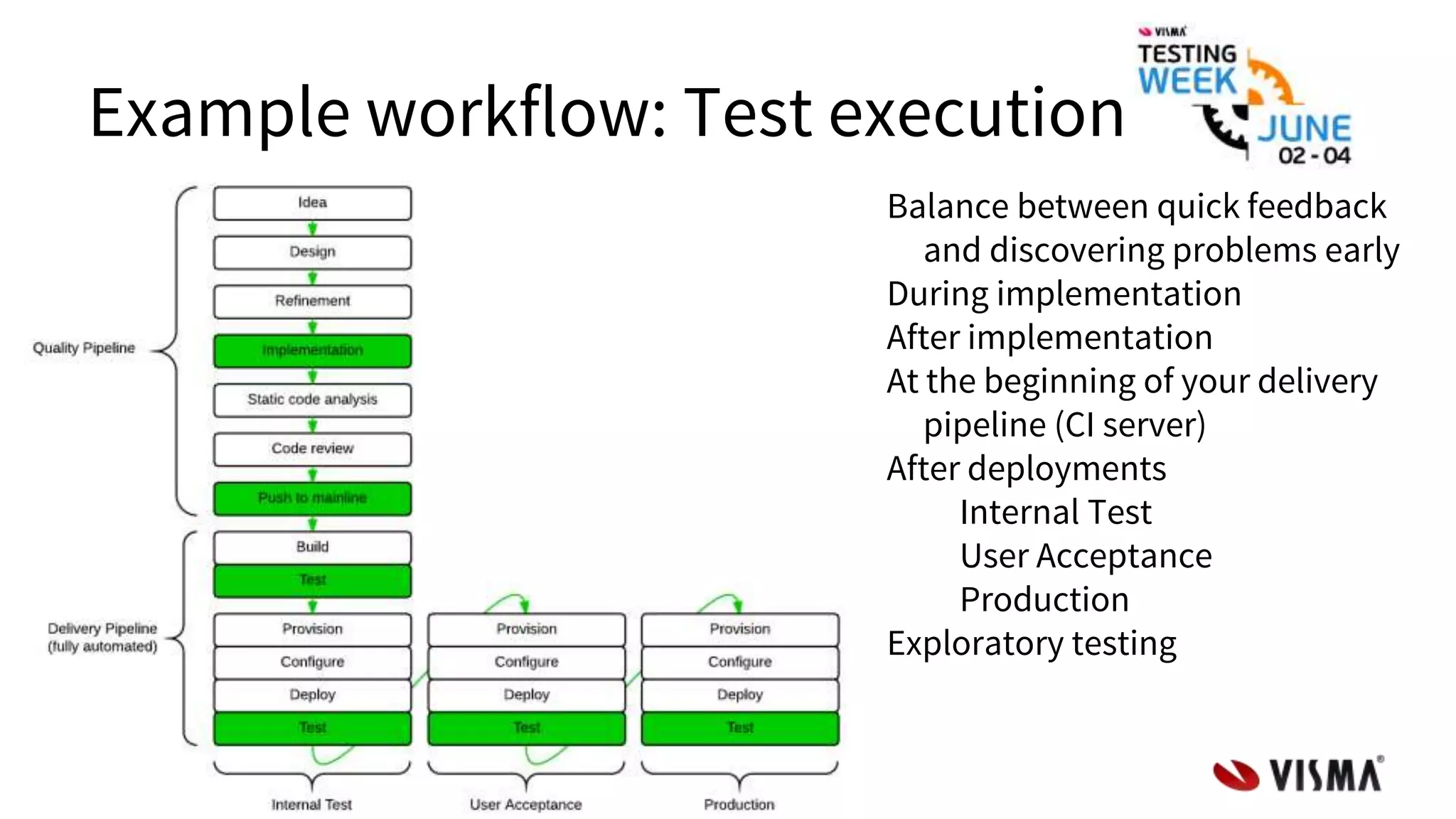

The document discusses the test automation pyramid and its role in improving software quality through efficient testing practices. It outlines the different testing levels (unit, component integration, API, UI, and exploratory tests) and highlights the scope, performance, and limitations of each. The pyramid serves as a guideline for distributing testing effort across these levels so that teams can balance quality against delivery velocity.