Week10 Web Presentation

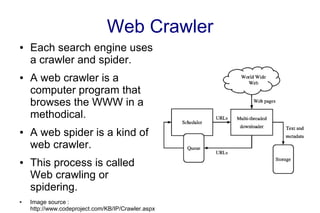

A web crawler is a program that systematically browses websites to index them. It gathers information from web pages using spiders, which are specialized crawlers. The spiders pass retrieved data to applications that index the content and build searchable databases from keywords and terms. When a user searches online, the search engine checks its database of indexed web pages to return relevant results.

- 1. Web Crawler ● Each search engine uses a crawler or spider. ● A web crawler is a computer program that browses the WWW in a methodical manner. ● A web spider is a kind of web crawler. ● This process is called web crawling or spidering. ● Image source: http://www.codeproject.com/KB/IP/Crawler.aspx

- 2. Spider A spider is a program that crawls the Internet in a specific way for a specific purpose. Spiders are the basis for modern search engines, such as Google and AltaVista. These spiders automatically retrieve data from the Web and pass it on to other applications that index the contents of the Web site for the best set of search terms. Source : http://www.ibm.com/developerworks/linux/library/l-spider/
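The crawling process described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular search engine works: the `crawl` function, the `LinkExtractor` class, and the in-memory `SITE` dictionary (standing in for real HTTP requests to a made-up `example.test` host) are all assumptions introduced for the example. It visits pages breadth-first, follows every link it finds, and hands back the retrieved pages so that another application could index them.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start_url, fetch, max_pages=100):
    """Visit pages breadth-first from start_url and return {url: html}.

    fetch is a caller-supplied function that maps a URL to its HTML
    text, or returns None when the page cannot be retrieved.
    """
    seen = {start_url}
    queue = deque([start_url])
    pages = {}
    while queue and len(pages) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue  # skip pages that could not be retrieved
        pages[url] = html
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            link = urljoin(url, href)  # resolve relative links
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return pages


# Hypothetical three-page site held in memory, so no network is needed.
SITE = {
    "http://example.test/": '<a href="/a">A</a> <a href="/b">B</a>',
    "http://example.test/a": '<a href="/">home</a>',
    "http://example.test/b": '<a href="/a">A</a> <a href="/missing">gone</a>',
}

pages = crawl("http://example.test/", SITE.get)
print(sorted(pages))
```

A real spider would replace `SITE.get` with a function that downloads each page, and would usually also honor `robots.txt` and limit its request rate.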

- 3. Information Indexing Documents from an agent are indexed by indexing software, which extracts words or other terms and stores them in a database. (Diagram labels: Agents, Documents, Indexing Software, Index, Database.) ● Information is put into a database. ● There are many different types of indexing. ● The kind of index built determines how the information will be displayed.
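One common type of index is an inverted index, which maps each word to the documents that contain it. The sketch below is only an illustration of that idea; the slides do not say which kind of index is meant, and the `tokenize` and `build_index` functions and the sample `DOCS` are assumptions made for the example.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(documents):
    """Map each word to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for word in tokenize(text):
            index[word].add(doc_id)
    return index


# Hypothetical documents, as an agent might hand them to the indexer.
DOCS = {
    "doc1": "A web crawler browses the Web.",
    "doc2": "A spider is a kind of web crawler.",
    "doc3": "Search engines index documents.",
}

index = build_index(DOCS)
print(sorted(index["crawler"]))  # ['doc1', 'doc2']
print(sorted(index["index"]))    # ['doc3']
```

Note that the index stores only words and document ids, not the documents themselves.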

- 4. Searching and Visiting To find web pages related to your search keywords, you type the keywords into a search engine's web page. Some search engines allow you to use several keywords in one search.

- 5. Searching The engine searches the database for your keywords. Results are returned as an HTML document, along with some additional information.
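The search step can be sketched as a lookup against a word-to-page index, with the hits formatted as HTML. This is a simplified illustration under stated assumptions: the `search` and `render_results` functions, the tiny `INDEX`, and the `TITLES` table are all hypothetical, and requiring every keyword to match is just one possible way to combine several keywords.

```python
from html import escape


def search(index, query):
    """Return the URLs of pages that contain every keyword in the query."""
    keywords = query.lower().split()
    if not keywords:
        return []
    matches = set(index.get(keywords[0], set()))
    for word in keywords[1:]:
        matches &= index.get(word, set())  # keep pages matching all words
    return sorted(matches)


def render_results(urls, titles):
    """Format the hits as an HTML list of links."""
    items = [
        f'<li><a href="{escape(url)}">{escape(titles[url])}</a></li>'
        for url in urls
    ]
    return "<ul>" + "".join(items) + "</ul>"


# Hypothetical index and page titles; only words and URLs are stored.
INDEX = {
    "web": {"http://example.test/a", "http://example.test/b"},
    "crawler": {"http://example.test/a"},
    "spider": {"http://example.test/b"},
}
TITLES = {
    "http://example.test/a": "Web Crawler",
    "http://example.test/b": "Web Spider",
}

hits = search(INDEX, "web crawler")
print(render_results(hits, TITLES))
```

Each result is only a link back to the original site, so the user's browser fetches the page from there when the link is clicked.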

- 6. Visiting If you are interested in a title on the results page, you click its link and go directly to that page. Search engines and their databases do not store the documents of the indexed sites.