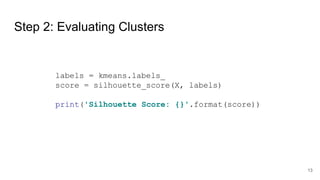

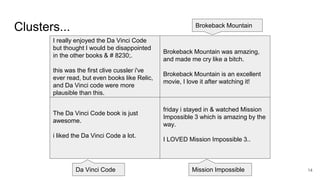

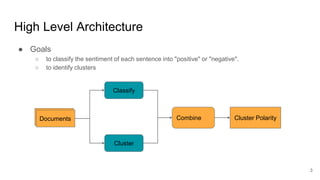

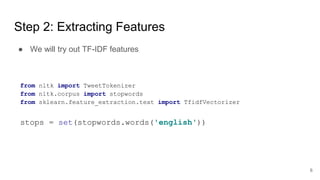

The document outlines a sentiment analysis demonstration focused on classifying sentiment as either 'positive' or 'negative' and identifying clusters using a dataset from Kaggle. It details the required tools, data loading, feature extraction using tf-idf, training a support vector classifier, and evaluation through cross-validation and clustering with K-means. Additionally, it discusses techniques for combining the classification and clustering results.
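The steps named in this summary can be sketched end to end with scikit-learn. This is a minimal illustration under stated assumptions, not the presenter's code: the toy corpus, the variable names, and the choices of two cross-validation folds and two clusters are all hypothetical stand-ins for the Kaggle data and the deck's actual settings.

```python
from sklearn.cluster import KMeans
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.svm import LinearSVC

# Hypothetical toy corpus standing in for the Kaggle dataset.
texts = [
    "I love this movie", "what a great film",
    "truly wonderful acting", "I really enjoyed it",
    "I hate this movie", "what a terrible film",
    "truly awful acting", "I really disliked it",
]
labels = [1, 1, 1, 1, 0, 0, 0, 0]  # 1 = positive, 0 = negative

# Classification: TF-IDF features feeding a linear support vector classifier,
# scored by (stratified) 2-fold cross-validation.
classifier = make_pipeline(TfidfVectorizer(), LinearSVC())
scores = cross_val_score(classifier, texts, labels, cv=2)

# Clustering: K-means on the same kind of TF-IDF features, ignoring the labels.
features = TfidfVectorizer().fit_transform(texts)
kmeans = KMeans(n_clusters=2, n_init=10, random_state=0)
cluster_ids = kmeans.fit_predict(features)

print("accuracy per fold:", scores)
print("cluster per text:", cluster_ids)
```

Putting the vectorizer inside the pipeline means each cross-validation fold fits its TF-IDF vocabulary on its own training portion only, which avoids leaking test text into the features.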

Step 1: Loading Dataset

    def read_dataset():
        # Each line of the file has the form "<label>\t<text>".
        with open('../resc/data/training.txt', 'r', encoding='utf-8') as f:
            # Split each line on the tab character, then transpose rows into columns.
            records = list(zip(*[line.rstrip('\n').split('\t') for line in f]))
        # Column 1 holds the text, column 0 the labels.
        return records[1], records[0]

    train_text, train_labels = read_dataset()
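The loading step relies on `zip(*rows)` to turn a list of `[label, text]` rows into one tuple of labels and one tuple of texts. The sketch below shows that transpose on an in-memory sample so it can be run without the data file. The helper name `parse_labeled_lines` and the two sample lines are hypothetical, and the tab-separated `label<TAB>text` layout is inferred from the order of the values the slide returns.

```python
import io


def parse_labeled_lines(lines):
    """Parse 'label<TAB>text' lines into (texts, labels)."""
    # Skip blank lines, drop the trailing newline, split on the first tab only
    # so that any tab inside the text stays part of the text.
    rows = [line.rstrip("\n").split("\t", 1) for line in lines if line.strip()]
    # zip(*rows) transposes the rows: first column = labels, second = texts.
    labels, texts = zip(*rows)
    return list(texts), list(labels)


# Hypothetical sample in the assumed file layout.
sample = io.StringIO("1\tI love this movie\n0\tThis was awful\n")
texts, labels = parse_labeled_lines(sample)

print(texts)
print(labels)
```

Note that the labels come back as strings (`'1'`, `'0'`), not integers, which matters for any later step that expects numeric labels.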

Step 4: Training the Classifier

● Oops!

    ValueError: pos_label=1 is not a valid label: array(['0', '1'], dtype='<U1')

● Fix: the labels were read from the file as the strings '0' and '1', so encode them as integers.

    from sklearn.preprocessing import LabelEncoder
    from sklearn.svm import LinearSVC

    # Map the string labels '0'/'1' to the integers 0/1.
    le = LabelEncoder()
    y = le.fit_transform(train_labels)

    # X_train and y_train are assumed to be the training split from steps not shown here.
    svc = LinearSVC()
    svc.fit(X_train, y_train)
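The `pos_label=1` error is what scikit-learn's binary metrics raise when the labels are the strings `'0'` and `'1'` but the metric looks for the integer `1` as the positive class. The sketch below reproduces that situation with `f1_score` and then applies the `LabelEncoder` fix. The label and prediction lists are hypothetical, and using `f1_score` as the trigger is an assumption, since the slide does not show which call raised the error.

```python
from sklearn.metrics import f1_score
from sklearn.preprocessing import LabelEncoder

# Hypothetical labels and predictions, stored as strings as they would be
# after reading them from a text file.
raw_labels = ["1", "0", "1", "0"]
raw_predictions = ["1", "0", "0", "0"]

# With string labels, the default pos_label=1 (an integer) matches nothing.
try:
    f1_score(raw_labels, raw_predictions)
    error_message = None
except ValueError as exc:
    error_message = str(exc)

# Fix: encode the strings as integers; classes are sorted, so '0' -> 0, '1' -> 1.
encoder = LabelEncoder()
y_true = encoder.fit_transform(raw_labels)
y_pred = encoder.transform(raw_predictions)
score = f1_score(y_true, y_pred)

print("error with string labels:", error_message)
print("encoded labels:", y_true)
print("F1 after encoding:", score)
```

An alternative that leaves the labels as strings is to pass `pos_label='1'` to the metric, but encoding once up front keeps every later step consistent.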