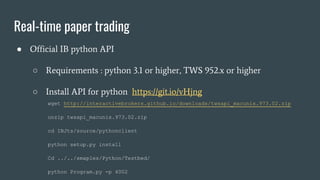

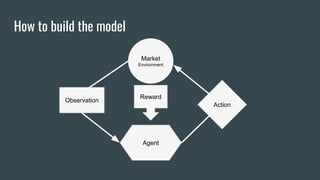

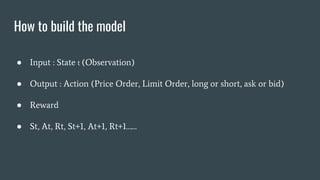

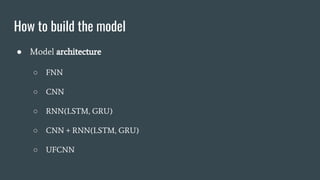

This document discusses how to build an agent for automated trading using reinforcement learning. It covers modeling the market environment and agent, training the agent using backtesting and paper trading, and integrating with real trading APIs. Specific techniques mentioned include neural networks, gym environments, rule-based strategies, and the Interactive Brokers API.

![Trading Gym](https://image.slidesharecdn.com/trainingtheagentfortradingusingibapi-170619145429/85/Training-the-agent-for-trading-use-Interactive-Broker-python-api-8-320.jpg)

● https://github.com/Yvictor/TradingGym

```python
import random

import pandas as pd

import trading_env

# Load the tick data used by the environment.
df = pd.read_hdf('dataset/SGXTW.h5', 'STW')

# Each observation holds 256 rows of the listed features; each step advances 128 rows.
env = trading_env.make(obs_data_len=256, step_len=128,
                       df=df, fee=0.1, max_position=5, deal_col_name='Price',
                       feature_names=['Price', 'Volume', 'Ask_price', 'Bid_price',
                                      'Ask_deal_vol', 'Bid_deal_vol', 'Bid/Ask_deal', 'Updown'])

env.reset()
env.render()

state, reward, done, info = env.step(random.randrange(3))

# Take random actions and show the transaction details.
for i in range(500):
    state, reward, done, info = env.step(random.randrange(3))
    env.render()
    if done:
        break

print(env.transaction_details)
```
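The `reset`/`step` loop on this slide follows the usual gym pattern, which can be tried without the dataset. The following is a minimal sketch, not TradingGym's implementation: `ToyTradingEnv`, its reward rule (position times price change, minus a fee on each trade), and the action meanings (0 = hold, 1 = buy, 2 = sell) are all assumptions made for illustration.

```python
import random


class ToyTradingEnv:
    """Hypothetical stand-in for a trading environment (NOT TradingGym itself)."""

    HOLD, BUY, SELL = 0, 1, 2  # assumed action encoding

    def __init__(self, prices, obs_len=3, fee=0.1, max_position=5):
        self.prices = prices
        self.obs_len = obs_len
        self.fee = fee
        self.max_position = max_position

    def reset(self):
        # Start at the first index that has a full observation window behind it.
        self.t = self.obs_len - 1
        self.position = 0
        return self._state()

    def _state(self):
        # The observation is simply the last `obs_len` prices.
        return self.prices[self.t - self.obs_len + 1:self.t + 1]

    def step(self, action):
        cost = 0.0
        if action == self.BUY and self.position < self.max_position:
            self.position += 1
            cost = self.fee
        elif action == self.SELL and self.position > -self.max_position:
            self.position -= 1
            cost = self.fee
        self.t += 1
        # Reward: profit or loss of the held position over this step, minus the fee.
        price_change = self.prices[self.t] - self.prices[self.t - 1]
        reward = self.position * price_change - cost
        done = self.t == len(self.prices) - 1
        return self._state(), reward, done, {"position": self.position}


random.seed(0)
env = ToyTradingEnv(prices=[100, 101, 102, 101, 103, 104, 102, 105])
state = env.reset()
total_reward, steps, done = 0.0, 0, False
while not done:
    # Same random policy as on the slide: pick one of three actions.
    state, reward, done, info = env.step(random.randrange(3))
    total_reward += reward
    steps += 1
print(steps, total_reward, info)
```

The point of the sketch is the interface: an agent only ever sees `state`, `reward`, `done`, and `info`, so the same loop works whether the environment replays historical data or is wired to a paper-trading account.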

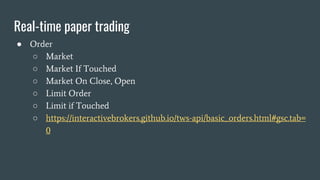

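![Trading Gym](https://image.slidesharecdn.com/trainingtheagentfortradingusingibapi-170619145429/85/Training-the-agent-for-trading-use-Interactive-Broker-python-api-11-320.jpg)

● Rule-based usage

```python
# Each observation holds the last 10 rows of [Price, MA]; each step advances one row.
env = trading_env.make(obs_data_len=10, step_len=1,
                       df=df, fee=0.1, max_position=5, deal_col_name='Price',
                       feature_names=['Price', 'MA'],
                       fluc_div=100.0)


class MaAgent:
    def __init__(self):
        pass

    def choice_action(self, state):
        # state[-1] is the latest row and state[-2] the one before; column 0 is Price, column 1 is MA.
        if state[-1][0] > state[-1][1] and state[-2][0] <= state[-2][1]:
            # Price has just crossed above the moving average.
            return 1
        elif state[-1][0] < state[-1][1] and state[-2][0] >= state[-2][1]:
            # Price has just crossed below the moving average.
            return 2
        else:
            # No crossover.
            return 0

# Then run the same reset/step loop as above, choosing actions with
# MaAgent().choice_action(state) instead of random.randrange(3).
```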
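To see what the crossover rule does without the dataset, it can be run over a short hand-made price series. This is a sketch under stated assumptions: the `rolling_states` helper, the 3-period moving average, and the price list are hypothetical, and reading action 1 as "buy" and 2 as "sell" is inferred from the shape of the rule rather than confirmed by the slide.

```python
class MaAgent:
    """Same rule as on the slide: act only when price crosses its moving average."""

    def choice_action(self, state):
        if state[-1][0] > state[-1][1] and state[-2][0] <= state[-2][1]:
            return 1  # price crossed above the MA (assumed: buy)
        elif state[-1][0] < state[-1][1] and state[-2][0] >= state[-2][1]:
            return 2  # price crossed below the MA (assumed: sell)
        else:
            return 0  # no crossover (assumed: hold)


def rolling_states(prices, ma_window=3, obs_len=2):
    """Hypothetical helper: yield windows of [price, moving_average] rows."""
    rows = []
    for i in range(ma_window - 1, len(prices)):
        ma = sum(prices[i - ma_window + 1:i + 1]) / ma_window
        rows.append([prices[i], ma])
        if len(rows) >= obs_len:
            yield rows[-obs_len:]


prices = [10, 10, 10, 9, 8, 9, 11, 12, 11, 9, 8]
agent = MaAgent()
actions = [agent.choice_action(state) for state in rolling_states(prices)]
print(actions)  # [2, 0, 1, 0, 0, 2, 0, 0]
```

The agent emits a non-zero action only on the step where the crossover happens and returns 0 while the price stays on one side of the average, so it trades rarely compared with the random policy.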