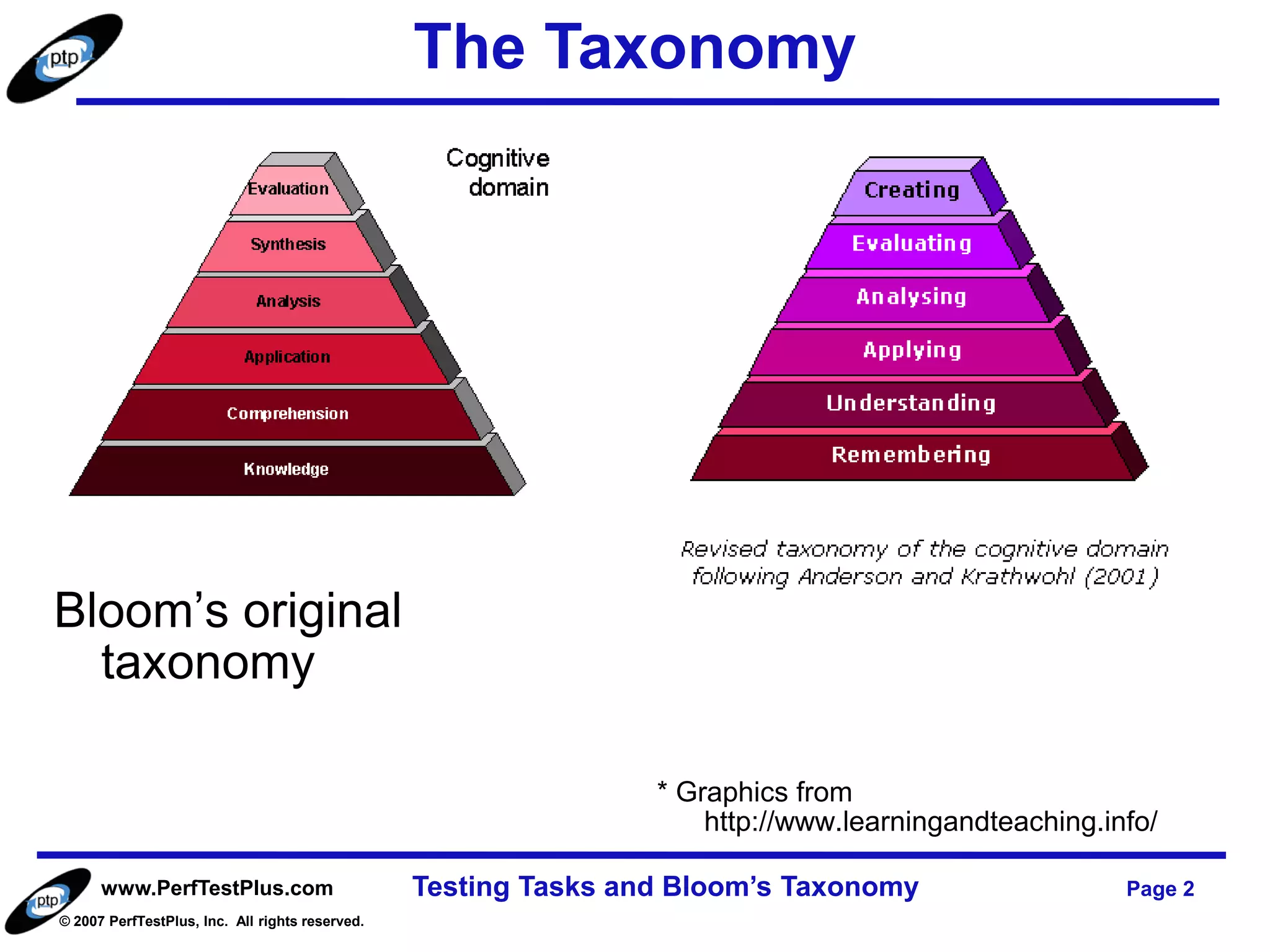

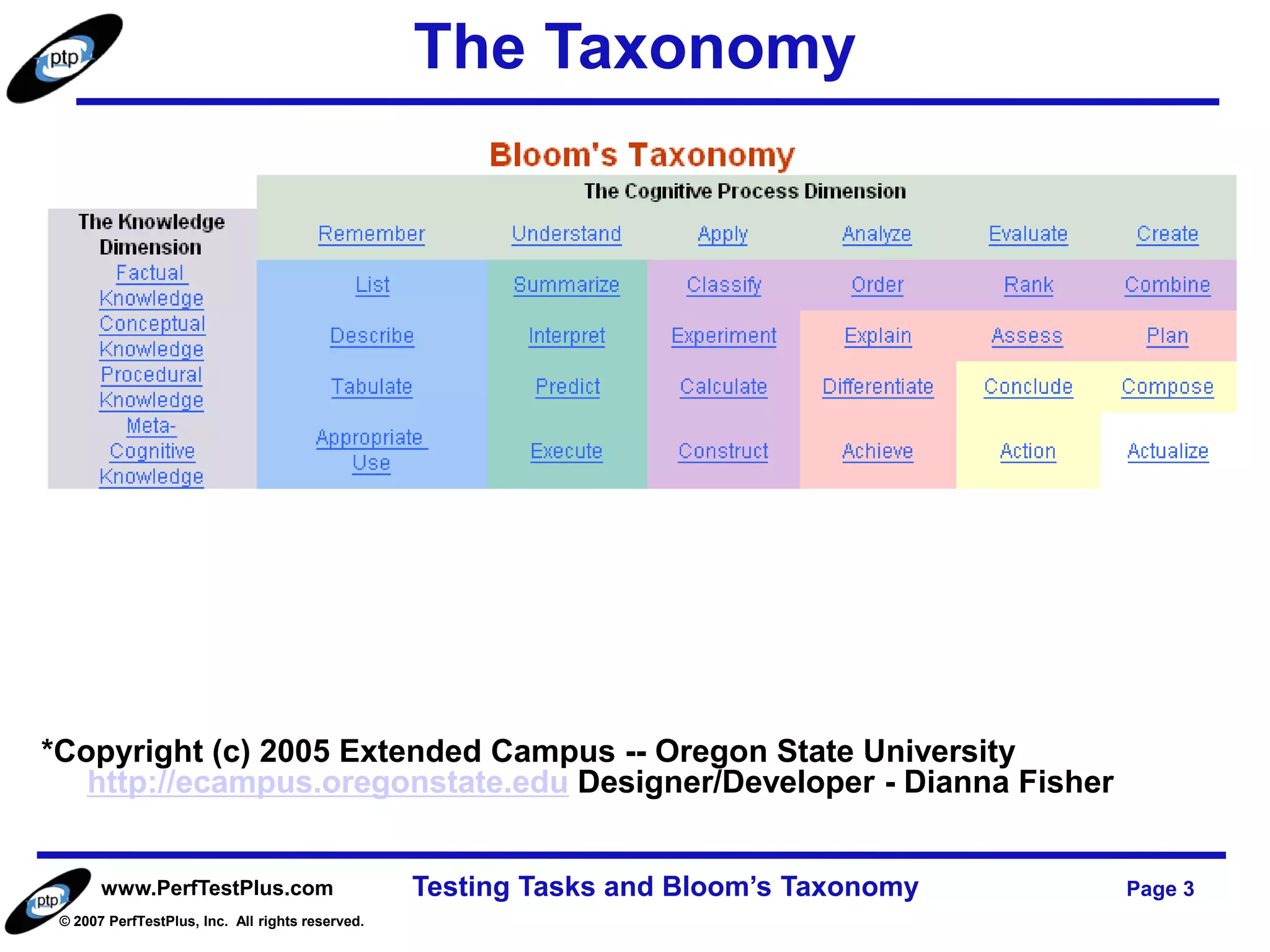

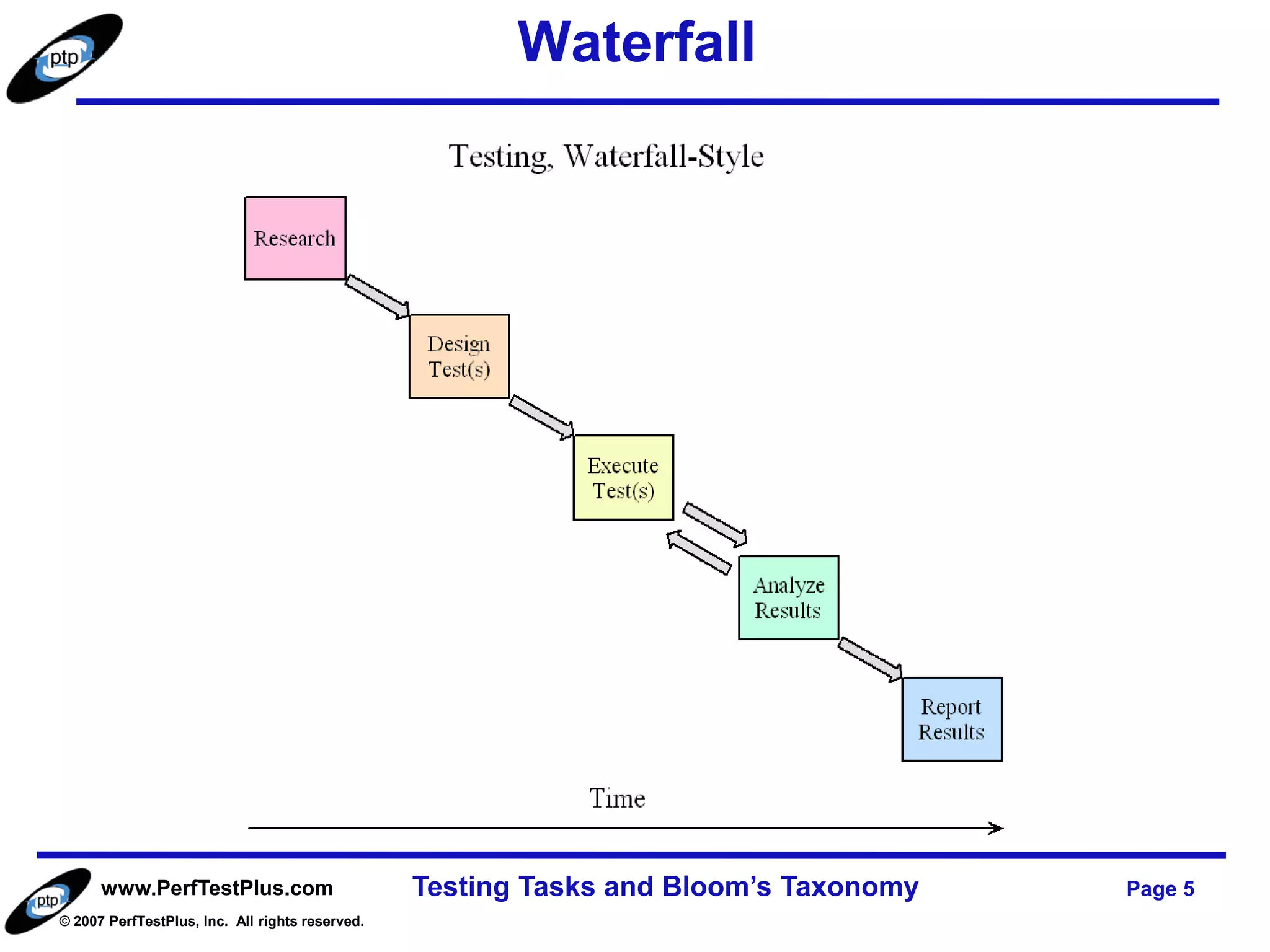

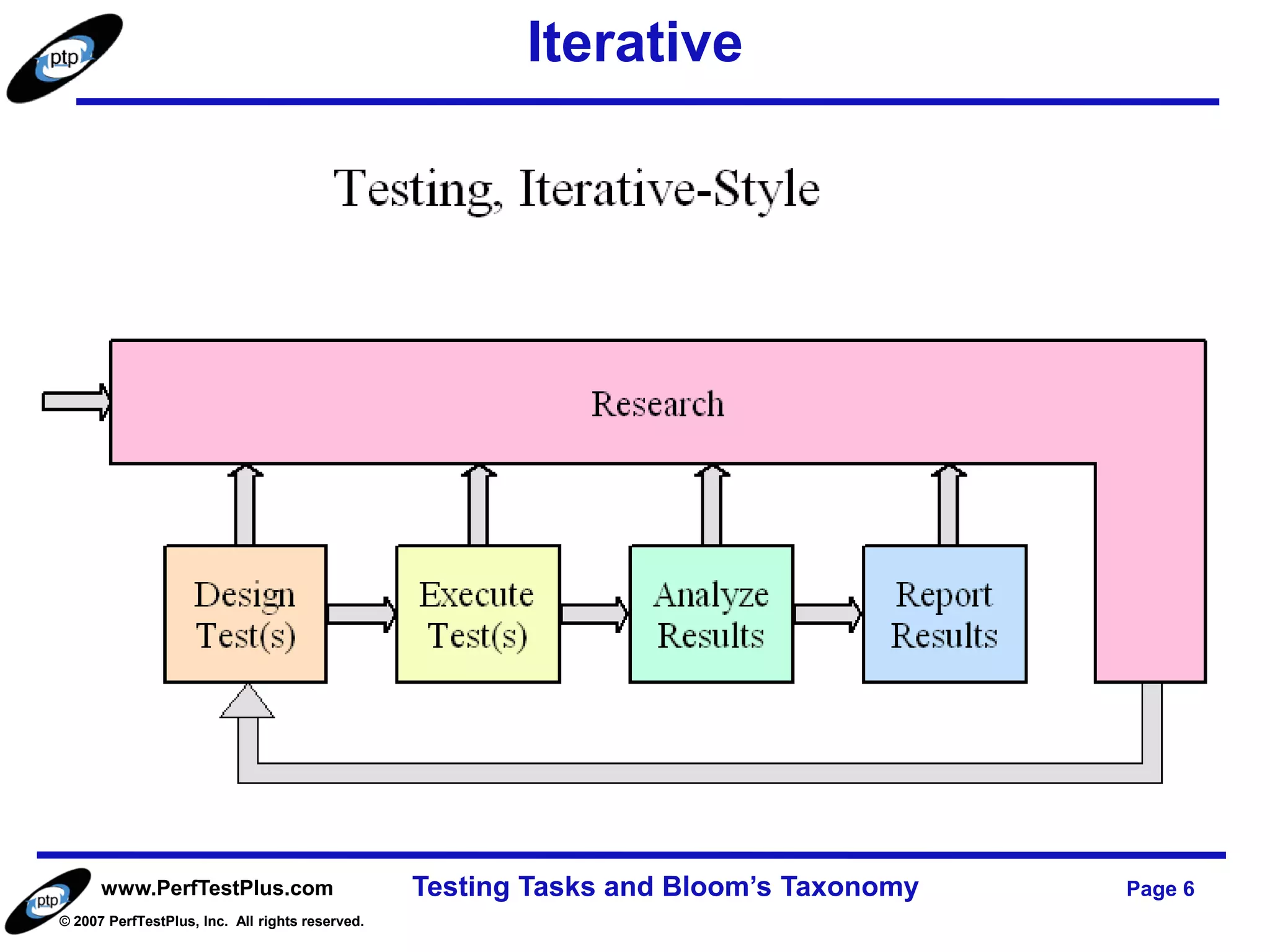

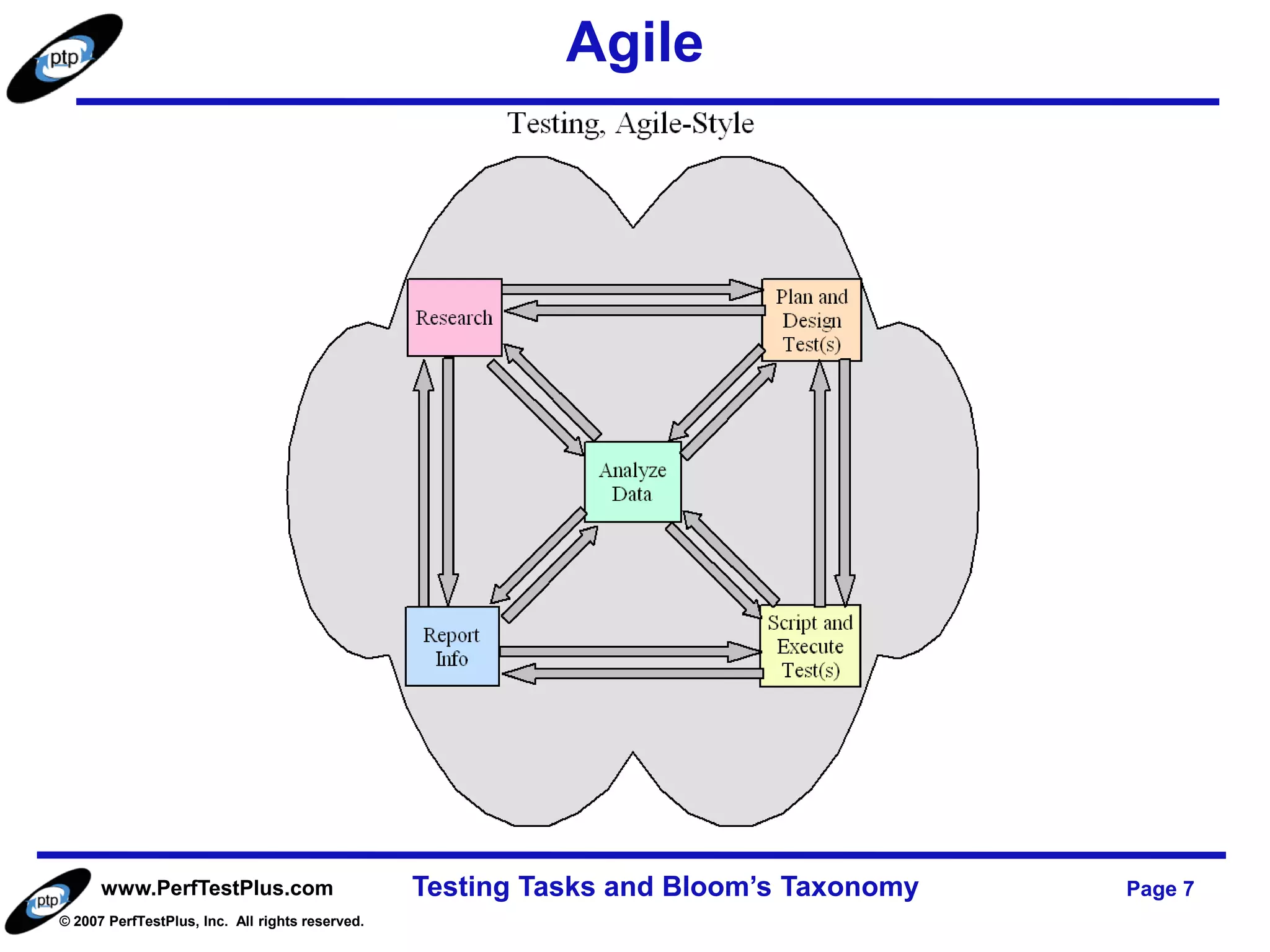

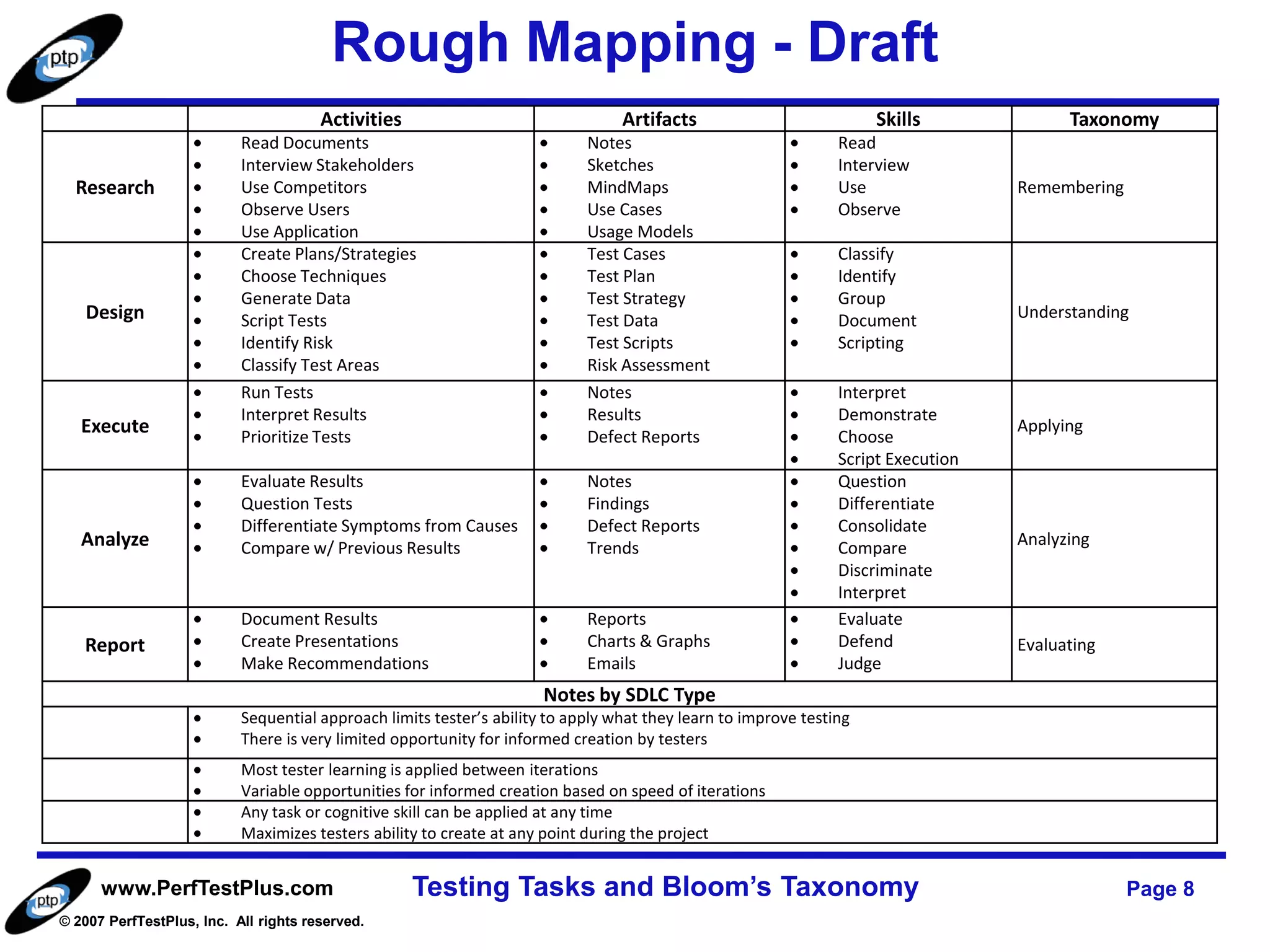

The document discusses Bloom's Taxonomy in the context of testing tasks, covering its six cognitive levels: remembering, understanding, applying, analyzing, evaluating, and creating. It emphasizes the importance of these skills across testing methodologies, including sequential, iterative, and agile approaches. It also considers how each methodology affects what testers learn and what opportunities they have for informed creation throughout the testing process.