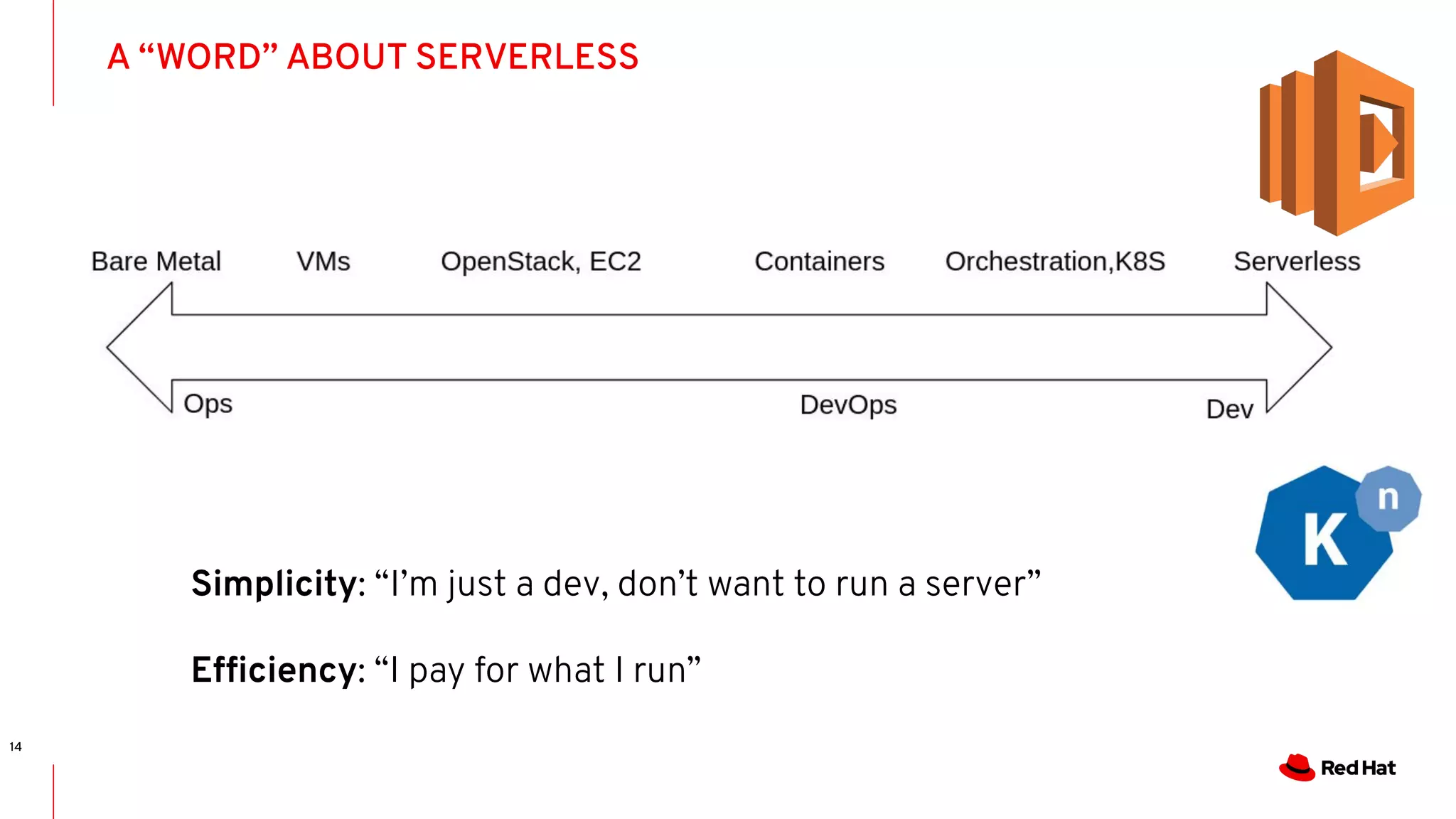

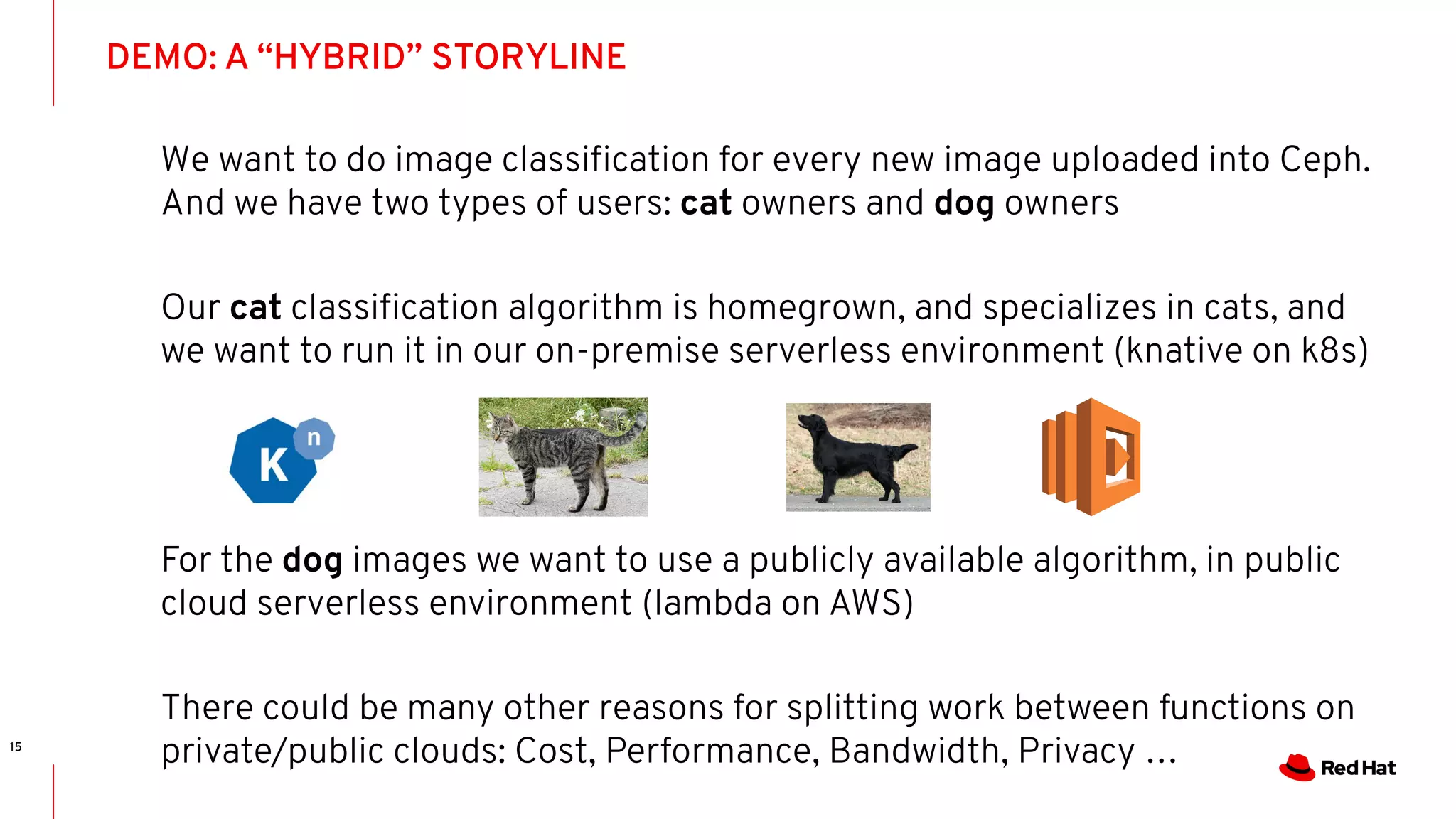

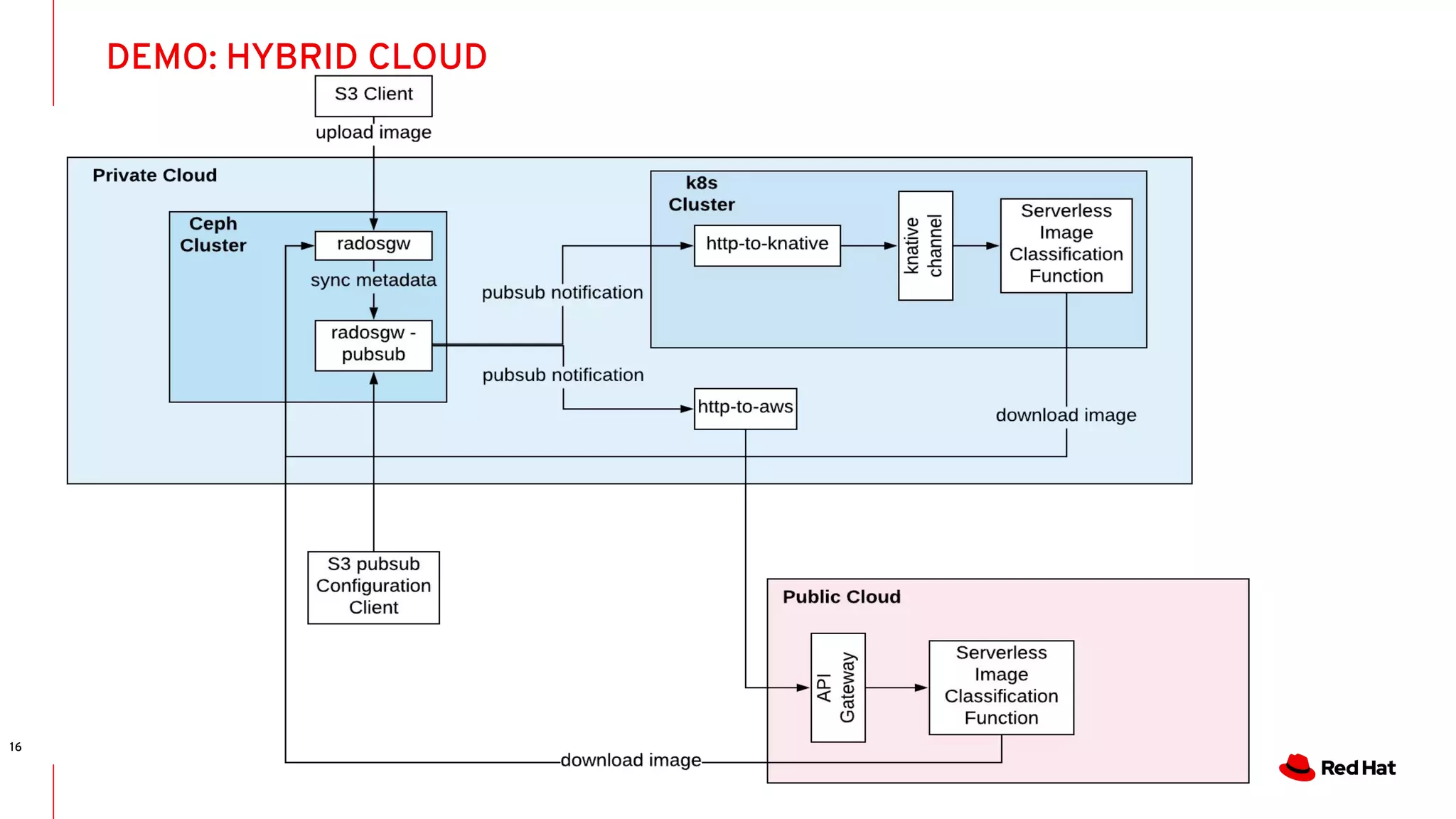

This document summarizes a presentation about hybrid cloud storage using Ceph and serverless functions. The presentation demonstrates setting up Ceph to store images in two buckets, one for cats and one for dogs. When a new image is uploaded, a Ceph bucket notification triggers either an on-premises Knative function to classify cat images or an AWS Lambda function to classify dog images, splitting the work between private and public clouds. The live demo shows how to configure Ceph notifications, format the events for Knative and Lambda, and run functions in different clouds that classify images stored in Ceph.
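The split between the two clouds can be illustrated with a minimal Python sketch. It is not the presenters' code: it assumes the notification payload follows the S3-style `Records` layout, and the bucket names `cats` and `dogs`, the `TARGETS` mapping, and the `route_event` helper are all hypothetical. In the setup described above, the routing comes from the notification configuration on each bucket rather than from a function like this one.

```python
import json

# Hypothetical mapping from bucket name to the classifier that handles it.
# The bucket names and target labels are assumptions for illustration only.
TARGETS = {
    "cats": "knative (on-premises)",
    "dogs": "aws-lambda (public cloud)",
}


def route_event(payload):
    """Return a (bucket, key, target) tuple for each record in the event.

    Assumes an S3-style notification body: a JSON object with a "Records"
    list whose items carry s3.bucket.name and s3.object.key.
    """
    event = json.loads(payload)
    routed = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        key = record["s3"]["object"]["key"]
        # Buckets without a configured classifier are marked "unrouted".
        target = TARGETS.get(bucket, "unrouted")
        routed.append((bucket, key, target))
    return routed


# Made-up sample event with one upload to each bucket.
sample = json.dumps({
    "Records": [
        {"eventName": "ObjectCreated:Put",
         "s3": {"bucket": {"name": "cats"}, "object": {"key": "tabby.jpg"}}},
        {"eventName": "ObjectCreated:Put",
         "s3": {"bucket": {"name": "dogs"}, "object": {"key": "beagle.jpg"}}},
    ]
})

if __name__ == "__main__":
    for bucket, key, target in route_event(sample):
        print(f"{bucket}/{key} -> {target}")
```

Keeping the mapping in a single dictionary makes the private/public split explicit and easy to change, for example if both classifiers were later moved to the same cloud.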