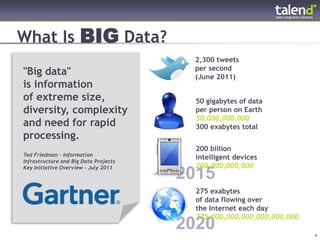

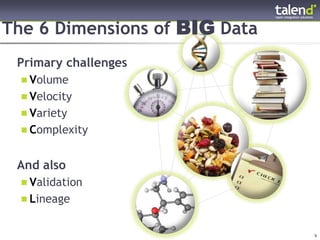

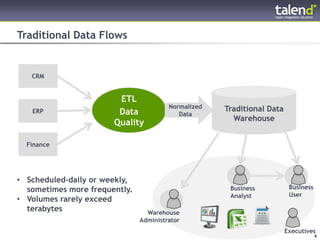

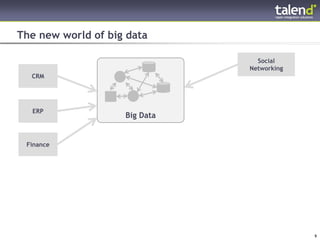

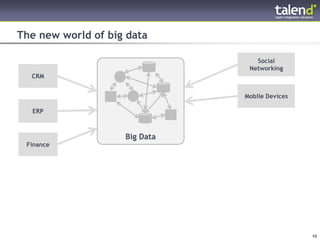

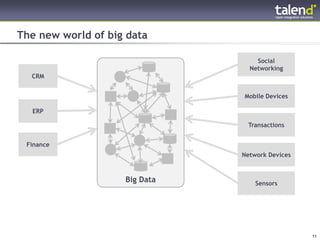

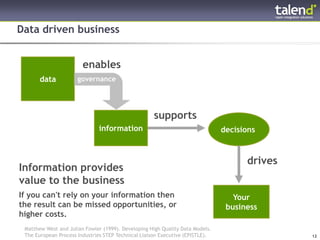

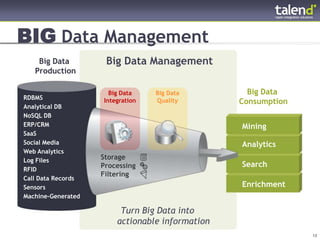

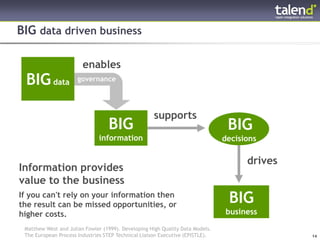

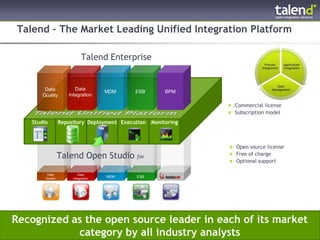

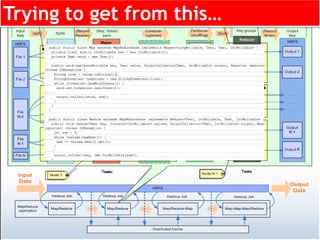

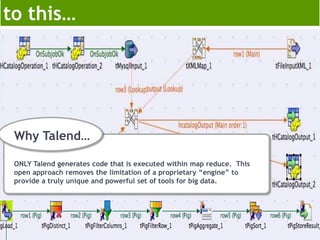

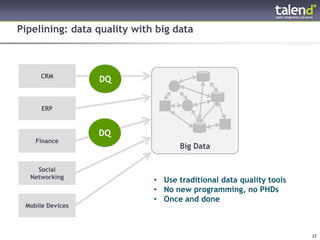

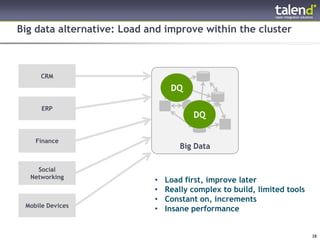

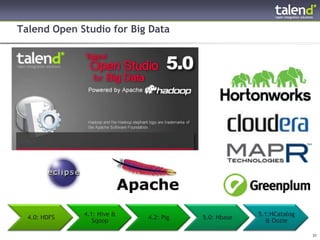

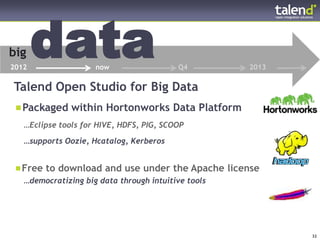

This document discusses big data and Talend's goal of democratizing big data through its open source integration platform. It begins by defining big data and explaining the challenges it poses in terms of volume, velocity, variety, and other factors. It then outlines Talend's aim of providing intuitive graphical tools for designing and running big data jobs within Hadoop, abstracting away the underlying code generation. The document stresses that data quality is especially important for big data and explains how Talend supports data quality checks, either as part of loading data into Hadoop or as a separate job after loading. Finally, it gives an overview of Talend's roadmap for adding support for additional Hadoop technologies over time, such as HCatalog and Oozie, among others.