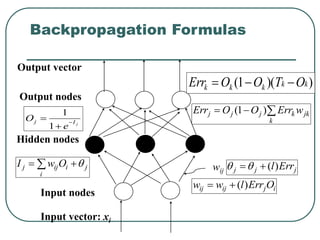

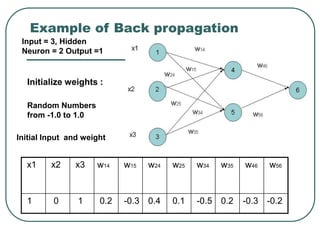

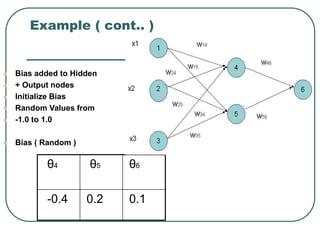

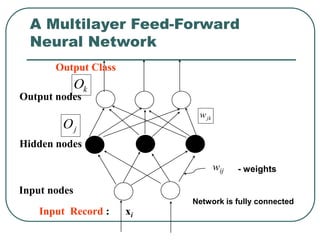

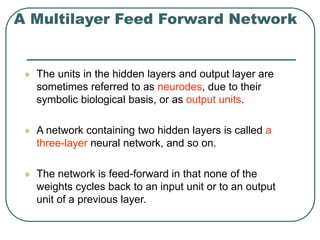

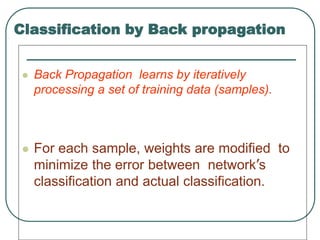

The document provides an overview of neural networks and the backpropagation algorithm for training neural networks. It defines the basic components of a neural network including neurons, layers, weights, and biases. It then explains how a multilayer feedforward network is structured and how backpropagation works by propagating errors backward from the output to earlier layers to update weights and biases to minimize classification errors on training data. The process involves feeding inputs forward, calculating outputs at each layer, computing errors at the output layer, and propagating errors back to update the weights.

![References

Data Mining: Concepts and Techniques (Chapter 7.5) [Jiawei Han, Micheline Kamber / Morgan Kaufmann Publishers, 2002]

Professor Anita Wasilewska’s lecture notes

www.cs.vu.nl/~elena/slides03/nn_1light.ppt

Xin Yao, Evolving Artificial Neural Networks

http://www.cs.bham.ac.uk/~xin/papers/published_iproc_sep99.pdf

informatics.indiana.edu/larryy/talks/S4.MattI.EANN.ppt

www.cs.appstate.edu/~can/classes/5100/Presentations/DataMining1.ppt

www.comp.nus.edu.sg/~cs6211/slides/blondie24.ppt

www.public.asu.edu/~svadrevu/UMD/ThesisTalk.ppt

www.ctrl.cinvestav.mx/~yuw/file/afnn1_nnintro.PPT](https://image.slidesharecdn.com/neural1-231017235619-1dc97124/85/neural-1-ppt-2-320.jpg)

![Neural Network Classifier

Input: Classification data

It contains a classification attribute.

Data is divided, as in any classification problem, into training data and testing data.

All data must be normalized (i.e. all attribute values in the database are rescaled to lie in the interval [0,1] or [-1,1]).

A neural network can work with data in the range (0,1) or (-1,1).

Two basic normalization techniques:

[1] Max-Min normalization

[2] Decimal Scaling normalization](https://image.slidesharecdn.com/neural1-231017235619-1dc97124/85/neural-1-ppt-10-320.jpg)

![Data Normalization

[1] The Max-Min normalization formula is as follows:

$$v' = \frac{v - \min_A}{\max_A - \min_A}\,(\mathrm{new\_max}_A - \mathrm{new\_min}_A) + \mathrm{new\_min}_A$$

Here minA and maxA are the minimum and maximum values of the attribute A.

Max-Min normalization maps a value v of A to v’ in the range [new_minA, new_maxA].](https://image.slidesharecdn.com/neural1-231017235619-1dc97124/85/neural-1-ppt-11-320.jpg)

![Example of Max-Min Normalization

Max-Min normalization formula:

$$v' = \frac{v - \min_A}{\max_A - \min_A}\,(\mathrm{new\_max}_A - \mathrm{new\_min}_A) + \mathrm{new\_min}_A$$

Example: We want to normalize data to the interval [0,1].

We set new_maxA = 1 and new_minA = 0.

Say maxA was 100 and minA was 20 (the maximum and minimum values of the attribute).

Now, if v = 40 (the attribute value for this particular pattern), v’ is calculated as v’ = (40-20) x (1-0) / (100-20) + 0

=> v’ = 20 x 1/80

=> v’ = 0.25](https://image.slidesharecdn.com/neural1-231017235619-1dc97124/85/neural-1-ppt-12-320.jpg)
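The Max-Min example above can be checked with a few lines of Python. This is a minimal sketch and not part of the original slides; the function name `min_max_normalize` and its parameter names are assumptions chosen for illustration. Evaluating the slide's expression (40-20) x (1-0) / (100-20) + 0 gives 0.25.

```python
def min_max_normalize(v, min_a, max_a, new_min=0.0, new_max=1.0):
    """Map v from [min_a, max_a] to [new_min, new_max] (Max-Min normalization)."""
    if max_a == min_a:
        # The formula divides by (max_a - min_a), so a constant attribute is rejected.
        raise ValueError("max_a and min_a must differ")
    return (v - min_a) * (new_max - new_min) / (max_a - min_a) + new_min


# Slide example: attribute range [20, 100], target range [0, 1], v = 40.
print(min_max_normalize(40, 20, 100))  # 0.25
```

Passing `new_min=-1.0, new_max=1.0` gives the other target interval mentioned in the slides, [-1,1].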

![Decimal Scaling Normalization

[2] Decimal Scaling Normalization

Normalization by decimal scaling normalizes by moving the

decimal point of values of attribute A.

$$v' = \frac{v}{10^j}$$

Here j is the smallest integer such that max|v’| < 1.

Example:

The values of A range from -986 to 917, so max|v| = 986 and j = 3 (divide by 1000).

v = -986 normalizes to v’ = -986/1000 = -0.986](https://image.slidesharecdn.com/neural1-231017235619-1dc97124/85/neural-1-ppt-13-320.jpg)
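Decimal scaling can be sketched in Python as follows. This is an illustrative sketch rather than code from the slides; the function name `decimal_scaling` is an assumption, and the search for j starts at 0, which assumes j is a non-negative integer.

```python
def decimal_scaling(values):
    """Divide every value by 10**j, with j the smallest j >= 0 making max|v'| < 1."""
    max_abs = max(abs(v) for v in values)
    j = 0
    # Increase j until the largest magnitude drops below 1.
    while max_abs / 10 ** j >= 1:
        j += 1
    return [v / 10 ** j for v in values], j


# Slide example: values of A range from -986 to 917.
scaled, j = decimal_scaling([-986, 917])
print(j, scaled)  # 3 [-0.986, 0.917]
```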

![Steps in Backpropagation Algorithm

STEP ONE: initialize the weights and biases.

The weights in the network are initialized to random numbers from the interval [-1,1].

Each unit has a BIAS associated with it.

The biases are similarly initialized to random numbers from the interval [-1,1].

STEP TWO: feed the training sample.](https://image.slidesharecdn.com/neural1-231017235619-1dc97124/85/neural-1-ppt-26-320.jpg)
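Step one above might look like this in Python. It is a minimal sketch under stated assumptions: the helper `init_layer`, the 3-2-1 layer sizes, the uniform distribution, and the fixed seed are all hypothetical choices for illustration, since the slides only say that weights and biases are random numbers from [-1,1].

```python
import random


def init_layer(n_inputs, n_units, rng):
    """Draw weights and biases for one layer uniformly from [-1, 1]."""
    # One row of weights per unit, one weight per incoming connection.
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_inputs)]
               for _ in range(n_units)]
    # Each unit has exactly one bias.
    biases = [rng.uniform(-1.0, 1.0) for _ in range(n_units)]
    return weights, biases


rng = random.Random(0)  # fixed seed only so the sketch is repeatable
# Hypothetical 3-2-1 network: 3 inputs, 2 hidden units, 1 output unit.
hidden_w, hidden_b = init_layer(3, 2, rng)
output_w, output_b = init_layer(2, 1, rng)
```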