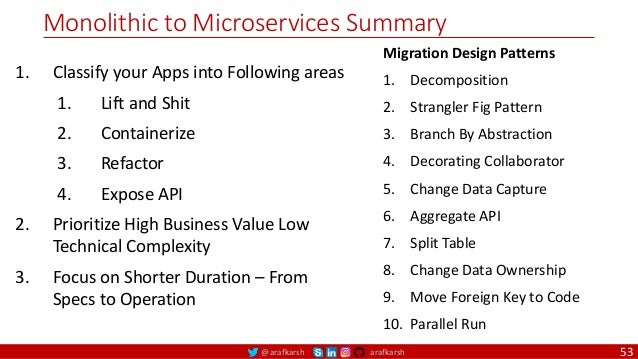

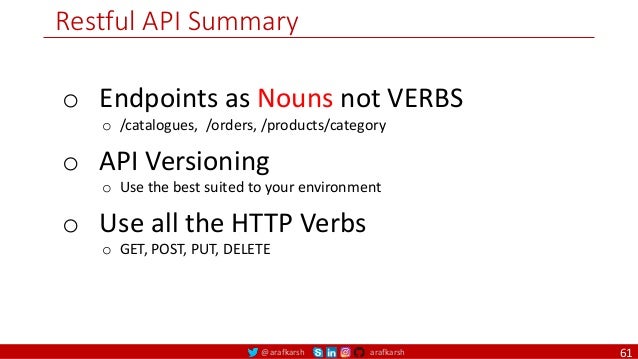

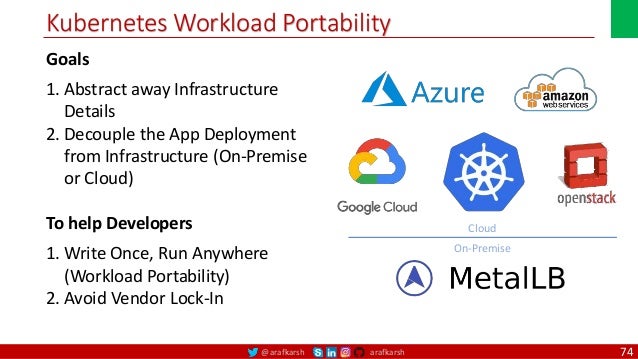

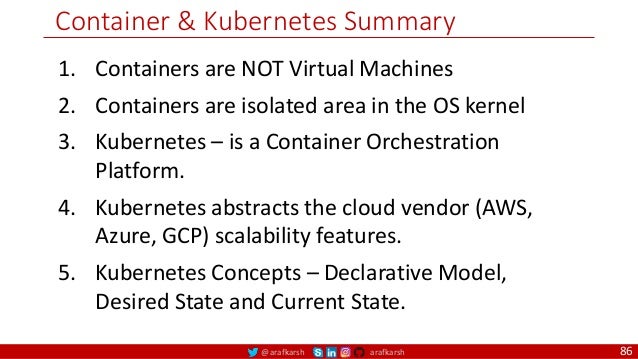

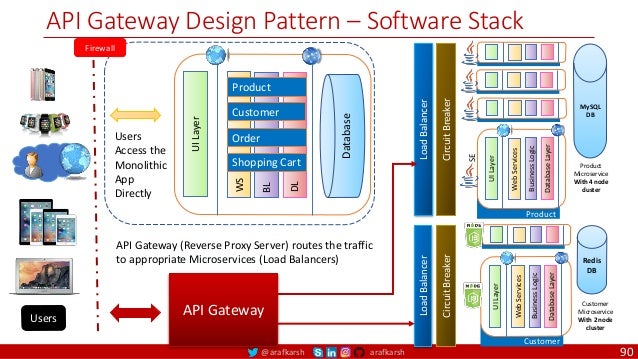

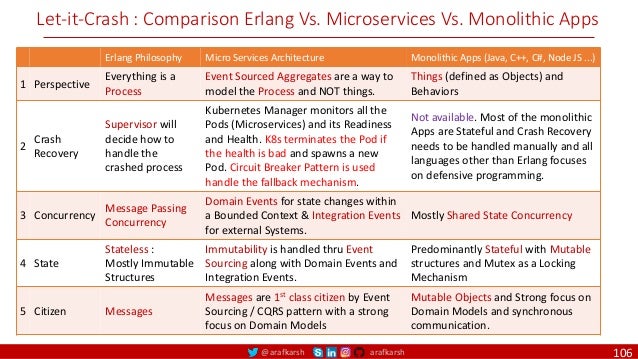

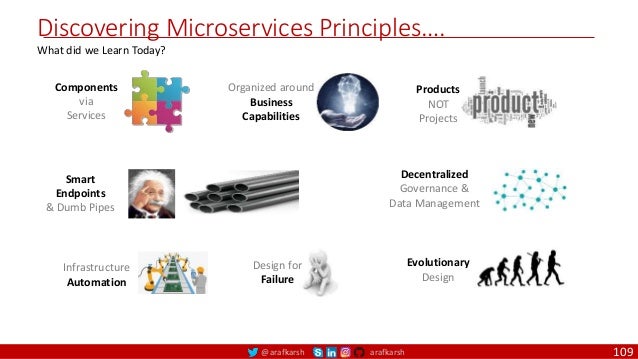

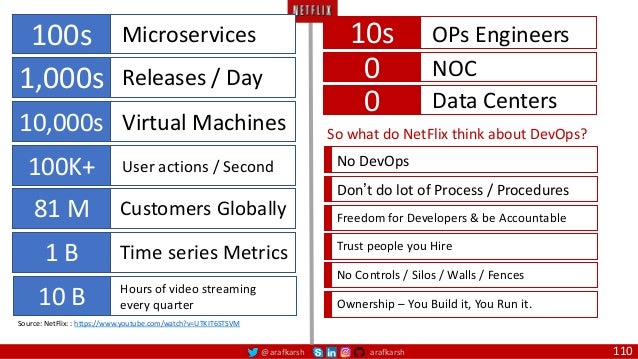

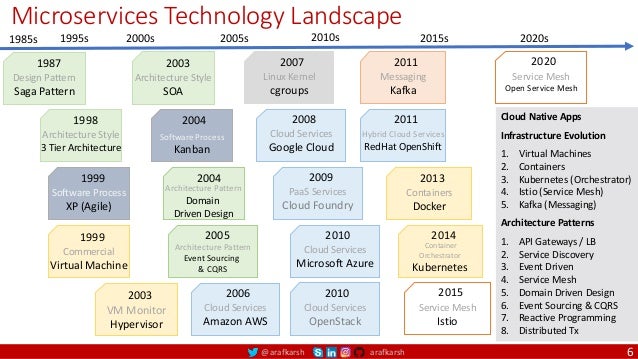

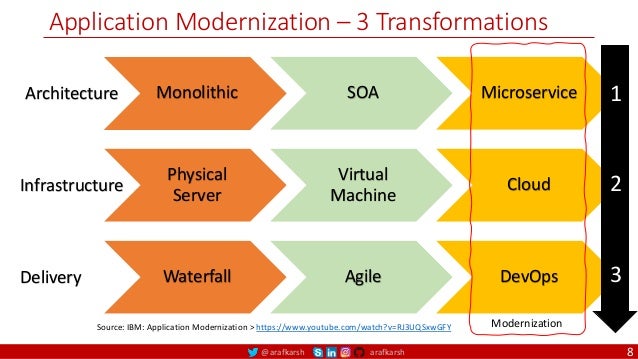

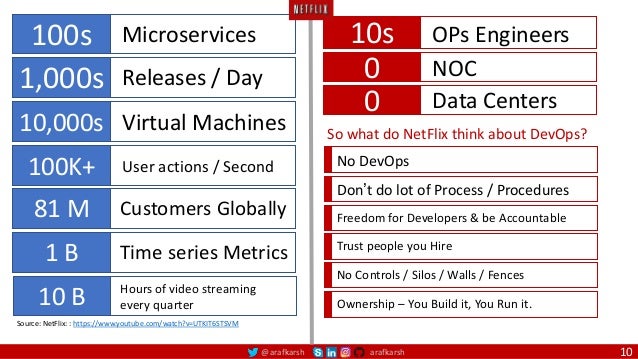

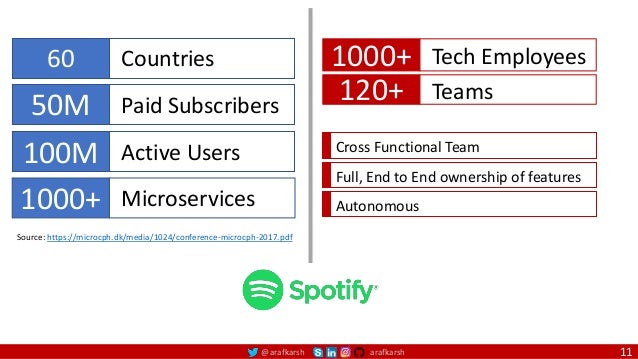

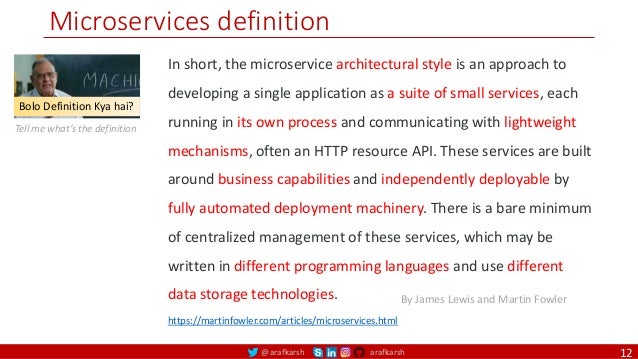

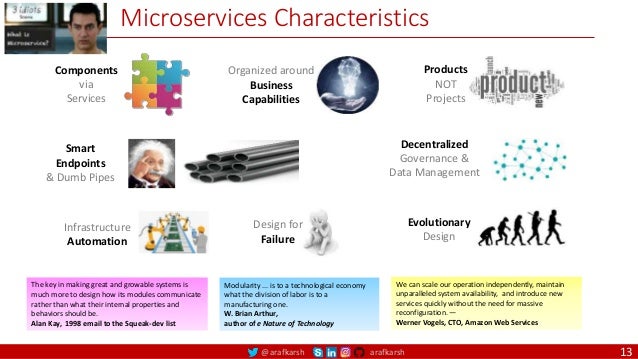

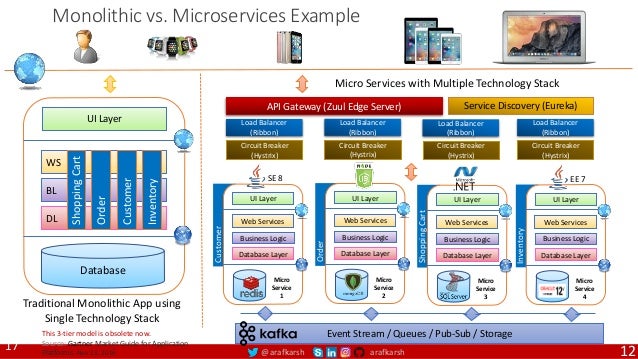

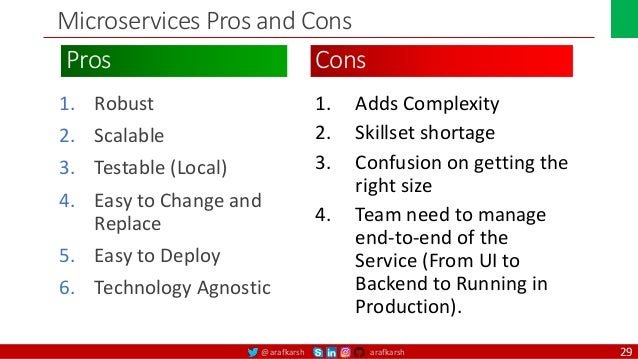

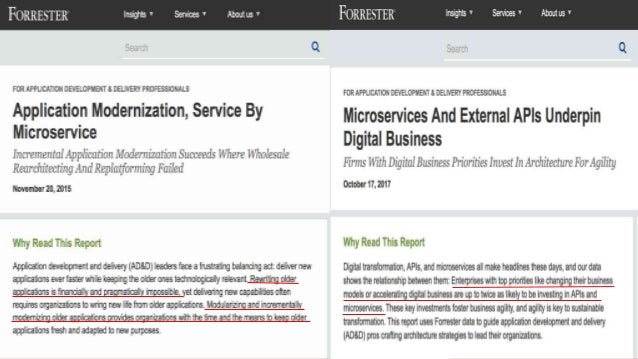

This deck is a guide to microservices architecture, with a focus on migrating from monolithic systems to microservices for cloud-native applications. It covers the principles, design patterns, and deployment strategies of microservices, along with their pros and cons compared to traditional approaches. It also discusses key transformation strategies for application modernization and examples of successful microservices implementations, such as Netflix.

![@arafkarsh arafkarsh

Agile

Scrum (4-6 Weeks)

Developer Journey

Monolithic

Domain Driven Design

Event Sourcing and CQRS

Waterfall

Optional

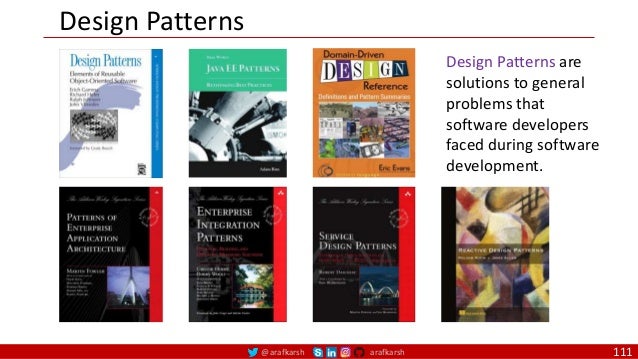

Design

Patterns

Continuous Integration (CI)

6/12 Months

Enterprise Service Bus

Relational Database [SQL] / NoSQL

Development QA / QC Ops

5

Microservices

Domain Driven Design

Event Sourcing and CQRS

Scrum / Kanban (1-5 Days)

Mandatory

Design

Patterns

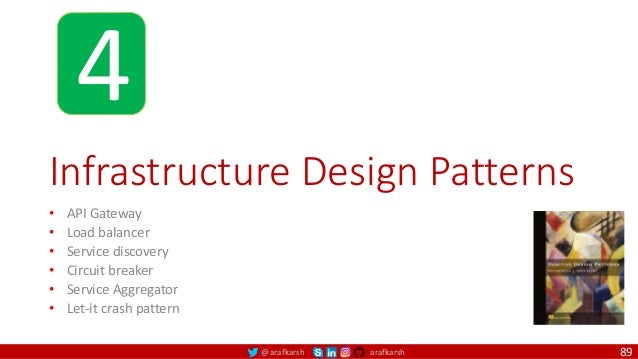

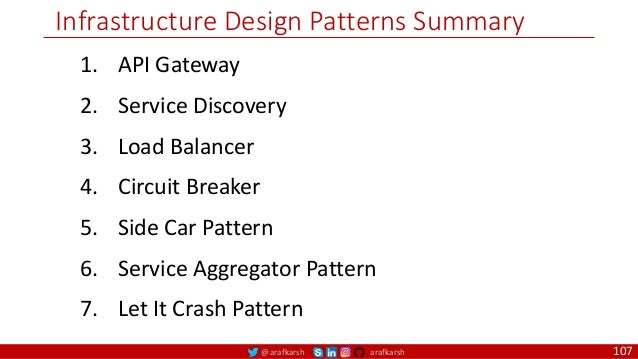

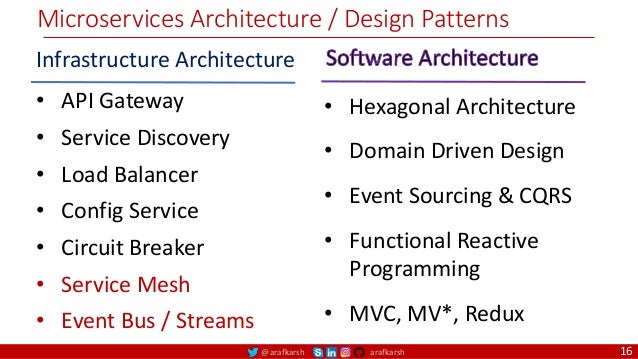

Infrastructure Design Patterns

CI

DevOps

Event Streaming / Replicated Logs

SQL NoSQL

CD

Container Orchestrator Service Mesh](https://image.slidesharecdn.com/ms-5-microservices-monolith-infrapatterns-220104100300/95/Microservices-Architecture-Monolith-Migration-Patterns-5-638.jpg)

![Slide 33 (@arafkarsh), Developer Journey. Monolithic: Waterfall or Agile Scrum (4-6 weeks); optional design patterns (Domain Driven Design, Event Sourcing and CQRS); Continuous Integration (CI); 6/12 months; Enterprise Service Bus; relational database (SQL); Development, QA / QC, Ops. Microservices: Scrum / Kanban (1-5 days); mandatory design patterns (Domain Driven Design, Event Sourcing and CQRS); infrastructure design patterns; CI, CD, DevOps; Event Streaming / Replicated Logs; SQL, NoSQL; Container Orchestrator, Service Mesh.](https://image.slidesharecdn.com/ms-5-microservices-monolith-infrapatterns-220104100300/95/Microservices-Architecture-Monolith-Migration-Patterns-33-638.jpg)