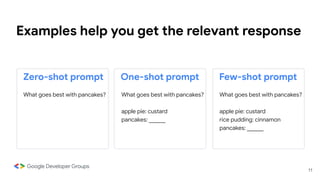

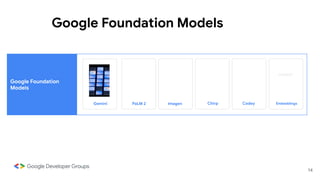

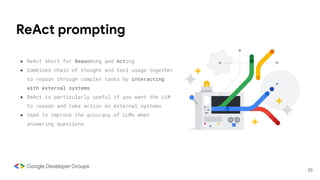

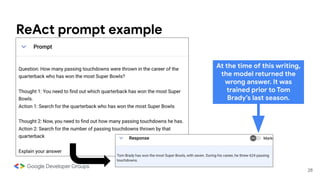

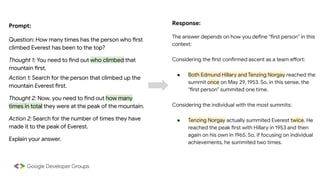

This deck introduces large language models (LLMs) and their use in generating text, code, and images. It covers prompting techniques such as few-shot prompting and chain-of-thought reasoning, stresses the role of context and examples in steering model behavior, and presents ReAct prompting for complex, multi-step tasks. It also touches on best practices for fine-tuning and on responsible AI usage.

![Slide 6: What does an LLM do?](https://image.slidesharecdn.com/buildwithai-dipietrog-240325042655-e65c33c4/85/Introduction-to-LLMs-Prompt-Engineering-fundamentals-6-320.jpg)

Prompt: The cat sat on the [...]

Candidate next words:

- mat: most likely next word
- rug: most likely next word
- chair: less likely next word
- …
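
The next-word idea on this slide can be sketched in a few lines of Python. The probabilities below are invented for illustration (the slide only ranks the candidates), and `most_likely_next_word` and `complete` are hypothetical helpers, not part of any library.

```python
# Toy next-word distribution for the prompt "The cat sat on the".
# The numbers are made up for illustration; a real LLM computes them
# over its whole vocabulary.
next_word_probs = {"mat": 0.6, "rug": 0.3, "chair": 0.1}


def most_likely_next_word(probs):
    """Return the candidate with the highest probability (greedy choice)."""
    return max(probs, key=probs.get)


def complete(prompt, probs):
    """Append the most likely next word to the prompt."""
    return f"{prompt} {most_likely_next_word(probs)}"


completion = complete("The cat sat on the", next_word_probs)
print(completion)
```

Repeating this step, one word at a time, is roughly how a model produces longer text.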

![Slide 10: Input, Context, Examples](https://image.slidesharecdn.com/buildwithai-dipietrog-240325042655-e65c33c4/85/Introduction-to-LLMs-Prompt-Engineering-fundamentals-10-320.jpg)

Prompts can include one or more of the following types of content:

Input

- Question input: What's a good name for a flower shop that specializes in selling bouquets of dried flowers?
- Task input: Give me a list of things that I should bring with me to a camping trip.
- Entity input: Classify the following as [large, small]. Elephant, Mouse
- Completion input: Some strategies to overcome writer's block include …

Context

Add contextual information in your prompt when you need to give information to the model, or restrict the boundaries of the responses to only what's within the prompt.

    Marbles:
    Color: blue
    Number: 28
    Color: yellow
    Number: 15
    Color: green
    Number: 17
    How many green marbles are there?

Examples

Including examples in the prompt is an effective strategy for customizing the response format.

    Classify the following.
    Options:
    - red wine
    - white wine
    Text: Chardonnay
    The answer is: white wine
    Text: Cabernet
    The answer is: red wine
    Text: Riesling
    The answer is:
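
As a rough illustration of the few-shot pattern on this slide, the wine prompt can be assembled from its parts. `build_few_shot_prompt` is a hypothetical helper written for this sketch; it only builds the prompt string and does not call any model.

```python
def build_few_shot_prompt(instruction, options, examples, query):
    """Assemble a few-shot classification prompt in the slide's layout."""
    lines = [instruction, "Options:"]
    lines += [f"- {option}" for option in options]
    # Each worked example shows the model the expected answer format.
    for text, answer in examples:
        lines += [f"Text: {text}", f"The answer is: {answer}"]
    # The final block is left open so the model fills in the answer.
    lines += [f"Text: {query}", "The answer is:"]
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    instruction="Classify the following.",
    options=["red wine", "white wine"],
    examples=[("Chardonnay", "white wine"), ("Cabernet", "red wine")],
    query="Riesling",
)
print(prompt)
```

Keeping the examples as data makes it easy to add or swap them without retyping the prompt.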

![Slide 12: Knobs and levers (your impact on the “randomness”)](https://image.slidesharecdn.com/buildwithai-dipietrog-240325042655-e65c33c4/85/Introduction-to-LLMs-Prompt-Engineering-fundamentals-12-320.jpg)

- Temperature: Tune the degree of randomness. Takes a value between 0 and 1. 0 = always brings the most likely next token; ...; 1 = selects from a long list of options, more random or “creative”.
- Top P: Choose from the smallest set of words whose cumulative probability >= P. Example with P = 0.8: [flowers (0.5), trees (0.23), herbs (0.07), ... bugs (0.0003)]
- Top K: Only sample from the top K tokens. Example with K = 2: [flowers (0.5), trees (0.23), herbs (0.07), ... bugs (0.0003)]
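
The three knobs can be sketched over the slide's example distribution. This is a minimal illustration, not how any particular model API implements sampling: the function names are hypothetical, the entries the slide elides with "..." are simply left out, and temperature is modeled as the common rescaling of log-probabilities.

```python
import random

# Token probabilities listed on the slide. The slide elides the entries
# between "herbs" and "bugs", so this toy table does not sum to 1.
token_probs = {"flowers": 0.5, "trees": 0.23, "herbs": 0.07, "bugs": 0.0003}


def normalize(probs):
    """Rescale the values so they sum to 1."""
    total = sum(probs.values())
    return {token: p / total for token, p in probs.items()}


def apply_temperature(probs, temperature):
    """Rescale probabilities; 0 collapses onto the single most likely token."""
    if temperature == 0:
        best = max(probs, key=probs.get)
        return {token: float(token == best) for token in probs}
    # Equivalent to dividing log-probabilities by the temperature.
    return normalize({t: p ** (1.0 / temperature) for t, p in probs.items()})


def top_k(probs, k):
    """Keep only the k most likely tokens."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    return dict(ranked[:k])


def top_p(probs, p, eps=1e-9):
    """Keep the smallest set of top tokens whose cumulative probability >= p."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept, cumulative = {}, 0.0
    for token, prob in ranked:
        kept[token] = prob
        cumulative += prob
        if cumulative >= p - eps:  # eps guards against float rounding
            break
    return kept


def sample(probs, rng=random):
    """Draw one token from the renormalized candidate set."""
    probs = normalize(probs)
    return rng.choices(list(probs), weights=list(probs.values()), k=1)[0]


print(list(top_k(token_probs, 2)))
print(list(top_p(token_probs, 0.8)))
print(sample(top_k(token_probs, 2)))
```

With the slide's numbers, K = 2 keeps flowers and trees, while P = 0.8 keeps flowers, trees, and herbs.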

![Slide 31: HotpotQA example with Standard, CoT, and Act-Only prompting](https://image.slidesharecdn.com/buildwithai-dipietrog-240325042655-e65c33c4/85/Introduction-to-LLMs-Prompt-Engineering-fundamentals-31-320.jpg)

Source: Yao, Shunyu, et al. "ReAct: Synergizing Reasoning and Acting in Language Models." arXiv preprint arXiv:2210.03629 (2022). https://arxiv.org/abs/2210.03629, accessed 2023-09-03.

(1) HotpotQA

Question: Aside from the Apple Remote, what other device can control the program Apple Remote was originally designed to interact with?

(1a) Standard

- Answer: iPod

(1b) CoT (Reason-Only)

- Thought: Let’s think step by step. Apple Remote was originally designed to interact with Apple TV. Apple TV can be controlled by iPhone, iPad, and iPod Touch. So the answer is iPhone, iPad, and iPod Touch.
- Answer: iPhone, iPad, iPod Touch

(1c) Act-Only

- Act 1: Search [Apple Remote]
- Obs 1: The Apple Remote is a remote control…
- Act 2: Search [Front Row]
- Obs 2: Could not find [Front Row]. Similar: …
- Act 3: Search [Front Row (software)]
- Obs 3: Front Row is a discontinued media center software…
- Act 4: Finish [yes]

![Slide 32: (1d) ReAct (Reason + Act)](https://image.slidesharecdn.com/buildwithai-dipietrog-240325042655-e65c33c4/85/Introduction-to-LLMs-Prompt-Engineering-fundamentals-32-320.jpg)

- Thought 1: I need to search Apple Remote and find the program it was originally designed to interact with.
- Act 1: Search [Apple Remote]
- Obs 1: The Apple Remote is a remote control introduced in October 2005 by Apple… Originally designed to control the Front Row media center program…
- Thought 2: Apple Remote was originally designed to control the Front Row media center program. I need to search Front Row next and find what other device can control it.
- Act 2: Search [Front Row]
- Obs 2: Could not find [Front Row]. Similar: [‘Front Row Seat to Earth’, ‘Front Row Motorsports’, ‘Front Row (software)’, …]
- Thought 3: Front Row is not found. I need to search Front Row (software).
- Act 3: Search [Front Row (software)]
- Obs 3: Front Row is a discontinued media center software…
- Thought 4: Front Row (software) is controlled by an Apple Remote or the keyboard function keys. So the answer is keyboard function keys.
- Act 4: Finish [keyboard function keys]
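
The thought, act, observe cycle in this trace can be sketched as a small loop. Everything here is a stand-in chosen for illustration: `FAKE_WIKI` and `search` imitate a search tool using the slide's observations, and `scripted_model` replays the slide's thoughts and actions where a real system would call an LLM.

```python
import re

# Hypothetical stand-in for a search tool; the texts are the slide's observations.
FAKE_WIKI = {
    "Apple Remote": "The Apple Remote is a remote control introduced in October "
                    "2005 by Apple… Originally designed to control the Front Row "
                    "media center program…",
    "Front Row (software)": "Front Row is a discontinued media center software…",
}

# Scripted stand-in for the LLM: (thought, action) pairs taken from the slide.
SCRIPTED_STEPS = [
    ("I need to search Apple Remote and find the program it was originally "
     "designed to interact with.", "Search[Apple Remote]"),
    ("I need to search Front Row next and find what other device can control it.",
     "Search[Front Row]"),
    ("Front Row is not found. I need to search Front Row (software).",
     "Search[Front Row (software)]"),
    ("Front Row (software) is controlled by an Apple Remote or the keyboard "
     "function keys.", "Finish[keyboard function keys]"),
]

ACTION_PATTERN = re.compile(r"^(Search|Finish)\[(.*)\]$")


def search(query):
    """Return the stored text for a title, or suggest similar titles."""
    if query in FAKE_WIKI:
        return FAKE_WIKI[query]
    similar = [title for title in FAKE_WIKI if query in title]
    return f"Could not find [{query}]. Similar: {similar}"


def scripted_model(transcript, step):
    """Ignore the transcript and replay the slide's next thought and action."""
    return SCRIPTED_STEPS[step]


def react_loop(question, model, max_steps=8):
    """Alternate thoughts, actions, and observations until a Finish action."""
    transcript = [f"Question: {question}"]
    for step in range(max_steps):
        thought, action = model(transcript, step)
        transcript.append(f"Thought {step + 1}: {thought}")
        transcript.append(f"Act {step + 1}: {action}")
        match = ACTION_PATTERN.match(action)
        if match is None:
            raise ValueError(f"Unrecognized action: {action}")
        name, argument = match.groups()
        if name == "Finish":
            return argument, transcript
        # Feed the tool result back so the next thought can use it.
        transcript.append(f"Obs {step + 1}: {search(argument)}")
    return None, transcript


answer, transcript = react_loop(
    "Aside from the Apple Remote, what other device can control the program "
    "Apple Remote was originally designed to interact with?",
    scripted_model,
)
print("\n".join(transcript))
print("Answer:", answer)
```

The loop itself stays the same if `scripted_model` is replaced by a function that sends the transcript to a model and parses its reply.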

![Slide 44: Tip 9: Always remember Responsible AI and safety filters](https://image.slidesharecdn.com/buildwithai-dipietrog-240325042655-e65c33c4/85/Introduction-to-LLMs-Prompt-Engineering-fundamentals-44-320.jpg)

Gemini makes it easy to set safety settings in 3 steps:

    # Step 1: import the generative model classes and the safety enums.
    from vertexai.preview.generative_models import (
        GenerationConfig,
        GenerativeModel,
        HarmCategory,
        HarmBlockThreshold,
        Image,
    )

    # Step 2: map each harm category to a blocking threshold.
    safety_config = {
        HarmCategory.HARM_CATEGORY_HARASSMENT: HarmBlockThreshold.BLOCK_LOW_AND_ABOVE,
        HarmCategory.HARM_CATEGORY_HATE_SPEECH: HarmBlockThreshold.BLOCK_ONLY_HIGH,
        HarmCategory.HARM_CATEGORY_SEXUALLY_EXPLICIT: HarmBlockThreshold.BLOCK_ONLY_HIGH,
        HarmCategory.HARM_CATEGORY_DANGEROUS_CONTENT: HarmBlockThreshold.BLOCK_LOW_AND_ABOVE,
    }

    # Step 3: pass the safety settings when generating content.
    # `model`, `nice_prompt`, and `generation_config` are not defined on this slide.
    responses = model.generate_content(
        contents=[nice_prompt],
        generation_config=generation_config,
        safety_settings=safety_config,
        stream=True,
    )