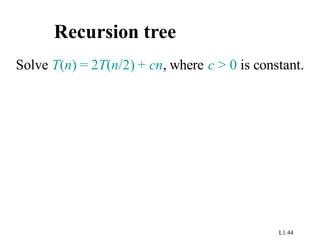

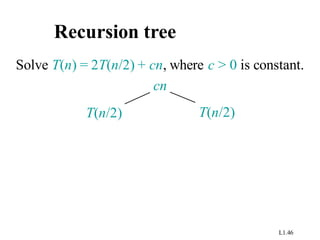

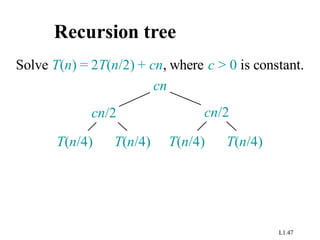

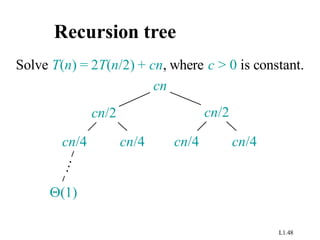

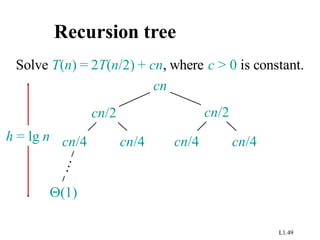

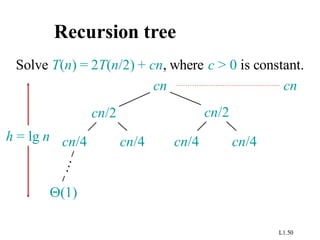

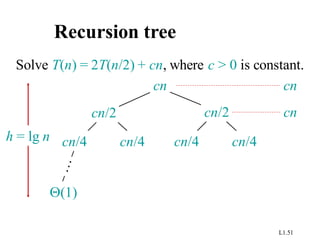

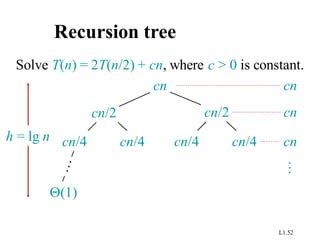

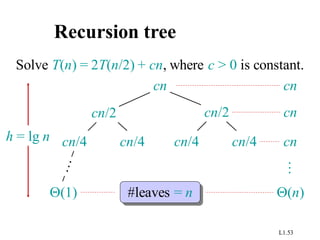

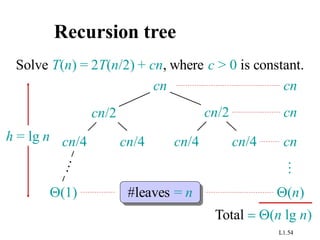

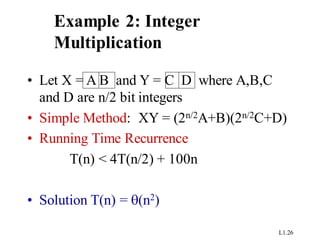

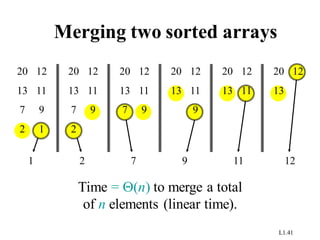

This document gives an overview of an introduction to algorithms course, focusing on insertion sort and merge sort. Insertion sort runs in Θ(n^2) time in the worst case, which makes it inefficient for large inputs. Merge sort runs in Θ(n log n) time, so it is asymptotically faster and beats insertion sort once n is sufficiently large. The document provides pseudocode and examples for both algorithms, analyzes insertion sort by summing the work done in each iteration, and uses recurrence relations and recursion trees to analyze merge sort.

![L1.8

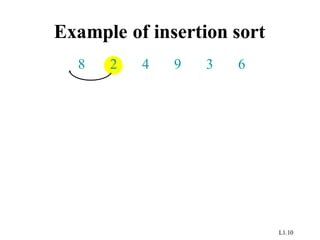

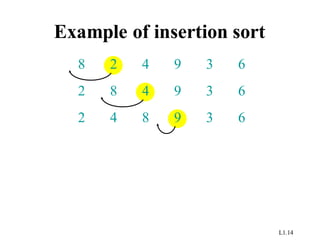

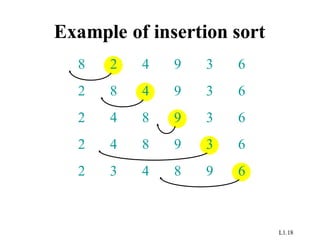

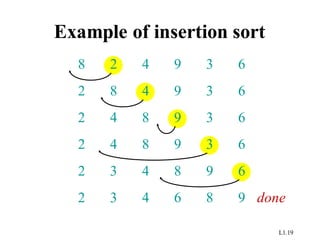

Insertion sort

INSERTION-SORT (A, n) ⊳ A[1 . . n]

for j ← 2 to n

do key ← A[ j]

i ← j – 1

while i > 0 and A[i] > key

do A[i+1] ← A[i]

i ← i – 1

A[i+1] = key

“pseudocode”

i j

key

sorted

A:

1 n](https://image.slidesharecdn.com/doc-20231004-wa0013-231005084003-98129751/85/Introduction-of-Algorithm-pdf-7-320.jpg)
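The pseudocode above can be sketched in Python as follows. This is a minimal translation, assuming 0-based list indexing (so the loop bounds shift by one relative to the slide); the function name `insertion_sort` is chosen here for illustration and does not come from the slides.

```python
def insertion_sort(a):
    """Sort the list `a` in place and return it (0-based INSERTION-SORT)."""
    for j in range(1, len(a)):        # slide: for j <- 2 to n
        key = a[j]
        i = j - 1
        # Shift every element of the sorted prefix a[0..j-1] that is
        # larger than key one position to the right.
        while i >= 0 and a[i] > key:  # slide: while i > 0 and A[i] > key
            a[i + 1] = a[i]
            i -= 1
        a[i + 1] = key                # drop key into the gap
    return a
```

Calling `insertion_sort([8, 2, 4, 9, 3, 6])` returns `[2, 3, 4, 6, 8, 9]`. The list is modified in place; returning it is only a convenience.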

![L1.25

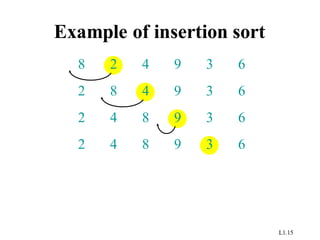

Insertion sort analysis

Worst case: Input reverse sorted.

( )

=

Q

=

Q

=

n

j

n

j

n

T

2

2

)

(

)

(

Average case: All permutations equally likely.

( )

=

Q

=

Q

=

n

j

n

j

n

T

2

2

)

2

/

(

)

(

Is insertion sort a fast sorting algorithm?

• Moderately so, for small n.

• Not at all, for large n.

[arithmetic series]](https://image.slidesharecdn.com/doc-20231004-wa0013-231005084003-98129751/85/Introduction-of-Algorithm-pdf-24-320.jpg)
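One way to check the two sums empirically is to count how many times the inner loop shifts an element. The sketch below assumes distinct keys and uses a hypothetical helper, `count_shifts`, that is not part of the slides; it runs insertion sort on a copy of the input and returns the number of shifts.

```python
from itertools import permutations


def count_shifts(values):
    """Run insertion sort on a copy of `values`; return the number of shifts."""
    a = list(values)
    shifts = 0
    for j in range(1, len(a)):
        key = a[j]
        i = j - 1
        while i >= 0 and a[i] > key:
            a[i + 1] = a[i]   # one shift of the inner loop
            i -= 1
            shifts += 1
        a[i + 1] = key
    return shifts


def average_shifts(n):
    """Average shift count over all n! permutations of n distinct keys."""
    perms = list(permutations(range(n)))
    return sum(count_shifts(p) for p in perms) / len(perms)
```

For a reverse-sorted input of n distinct keys the count is n(n-1)/2, for example 45 when n = 10, which matches the arithmetic series in the worst case. Averaged over all permutations it is n(n-1)/4, so `average_shifts(4)` gives 3.0: half the worst case, but still quadratic.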

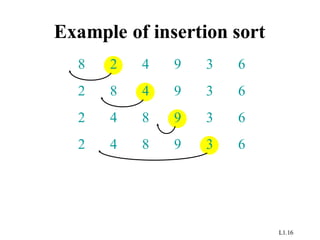

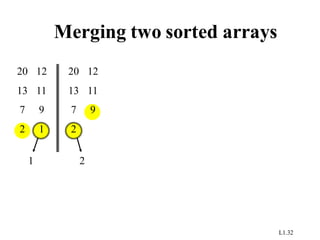

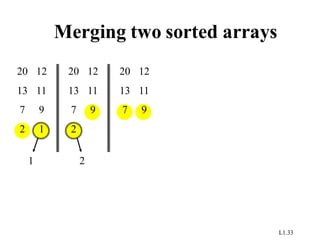

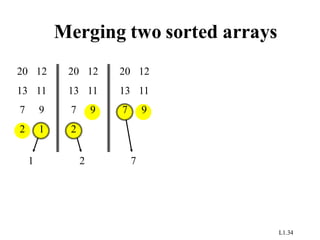

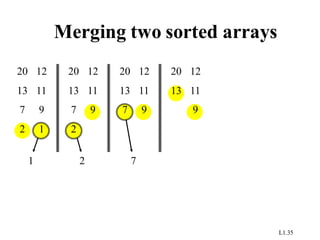

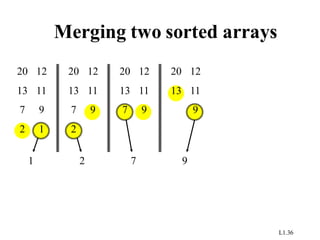

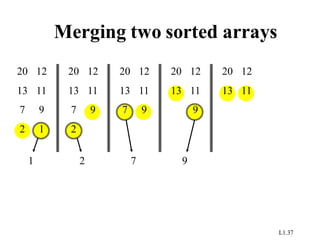

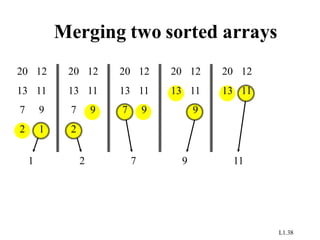

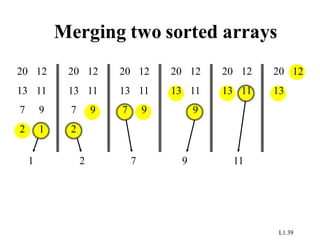

Example 3: Merge sort

MERGE-SORT A[1 . . n]

1. If n = 1, done.
2. Recursively sort A[1 . . ⌈n/2⌉] and A[⌈n/2⌉+1 . . n].
3. “Merge” the 2 sorted lists.

Key subroutine: MERGE

![Slide L1.28: Merge sort](https://image.slidesharecdn.com/doc-20231004-wa0013-231005084003-98129751/85/Introduction-of-Algorithm-pdf-27-320.jpg)
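The three steps can be sketched in Python as below. The slide names MERGE but does not spell it out here, so the `merge` function is an assumption: the usual two-pointer merge that repeatedly takes the smaller front element. This version returns a new list rather than sorting in place, and it treats the empty list as a base case as well.

```python
def merge(left, right):
    """Merge two sorted lists into one sorted list (assumed MERGE subroutine)."""
    out = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:   # <= keeps equal keys in their original order
            out.append(left[i])
            i += 1
        else:
            out.append(right[j])
            j += 1
    out.extend(left[i:])          # at most one of these two is non-empty
    out.extend(right[j:])
    return out


def merge_sort(a):
    """Return a sorted copy of `a`."""
    if len(a) <= 1:               # step 1: nothing to do
        return list(a)
    mid = (len(a) + 1) // 2       # ceil(n/2), matching the split on the slide
    # Step 2: sort both halves recursively; step 3: merge them.
    return merge(merge_sort(a[:mid]), merge_sort(a[mid:]))
```

For example, `merge([2, 7, 13, 20], [1, 9, 11, 12])` returns `[1, 2, 7, 9, 11, 12, 13, 20]`. Each call to `merge` does work linear in the total length of its inputs, which is where the per-level cost in the analysis comes from.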

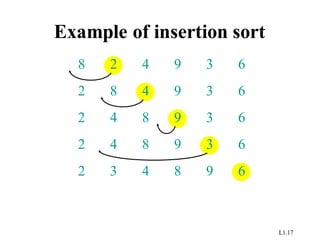

Analyzing merge sort

MERGE-SORT A[1 . . n], with total running time T(n) and the cost of each step:

1. If n = 1, done. Cost: Θ(1)
2. Recursively sort A[1 . . ⌈n/2⌉] and A[⌈n/2⌉+1 . . n]. Cost: 2T(n/2)
3. “Merge” the 2 sorted lists. Cost: Θ(n)

Sloppiness: Should be T(⌈n/2⌉) + T(⌊n/2⌋), but it turns out not to matter asymptotically.

![Slide L1.42: Analyzing merge sort](https://image.slidesharecdn.com/doc-20231004-wa0013-231005084003-98129751/85/Introduction-of-Algorithm-pdf-41-320.jpg)
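To see why the sloppiness is harmless, the exact recurrence with the ceiling and floor can be evaluated numerically. The sketch below makes two assumptions that are not on the slide: the Θ(1) base case is taken to cost exactly 1, and the Θ(n) merge is taken to cost exactly n. The function name `T` mirrors the slide's notation.

```python
from functools import lru_cache
from math import log2


@lru_cache(maxsize=None)
def T(n):
    """Exact cost T(n) = T(ceil(n/2)) + T(floor(n/2)) + n, with T(1) = 1."""
    if n == 1:
        return 1                  # assumed unit cost for the base case
    return T((n + 1) // 2) + T(n // 2) + n


def ratio(n):
    """T(n) divided by n * lg n; defined for n >= 2."""
    return T(n) / (n * log2(n))
```

Under these assumptions, when n is a power of 2 the value is exactly n lg n + n, which is what the simplified recurrence T(n) = 2T(n/2) + n gives. For other n the value stays between n lg n and n lg n + 2n, so `ratio(n)` tends to 1 as n grows and the rounding only affects lower-order terms.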