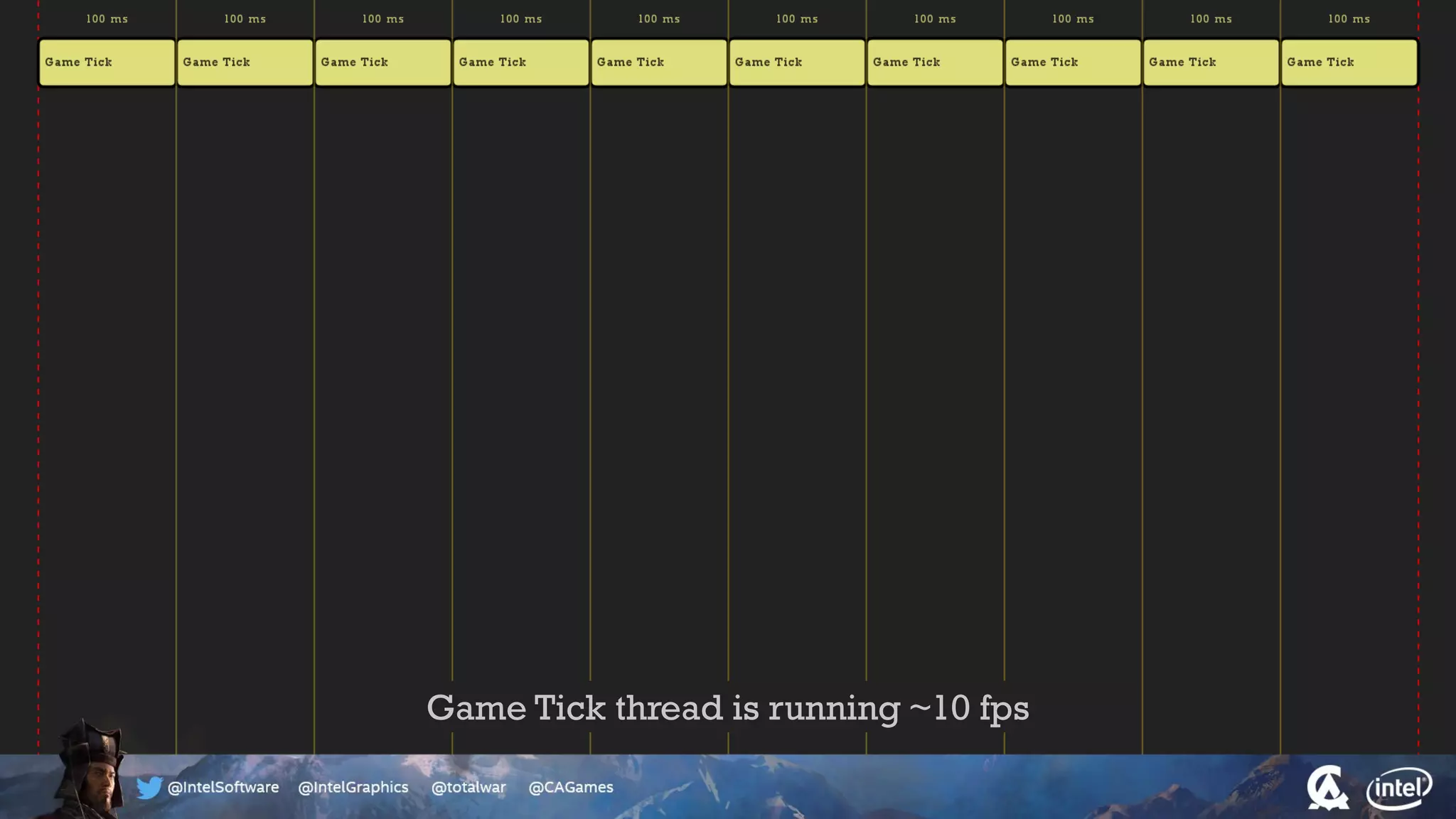

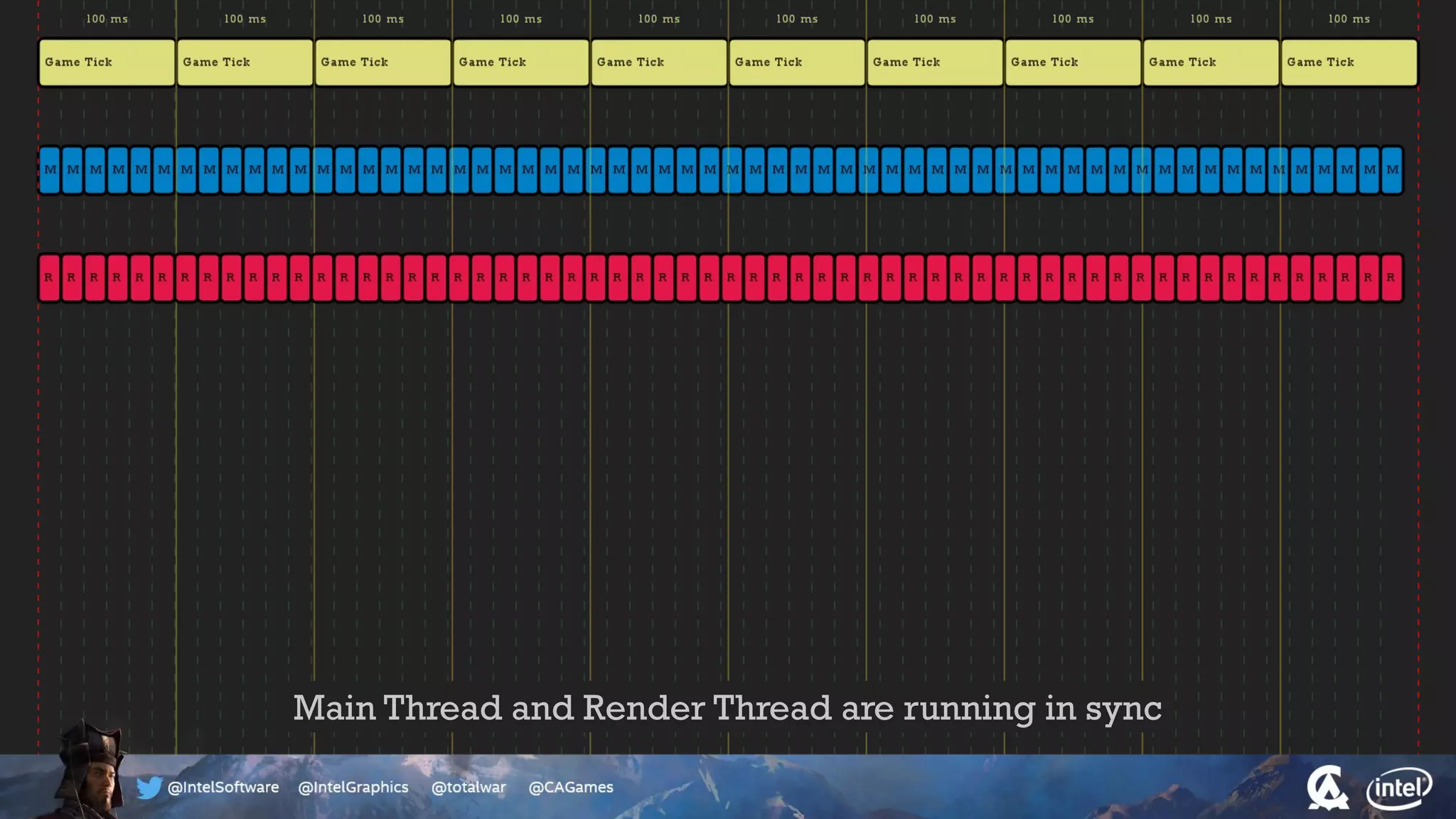

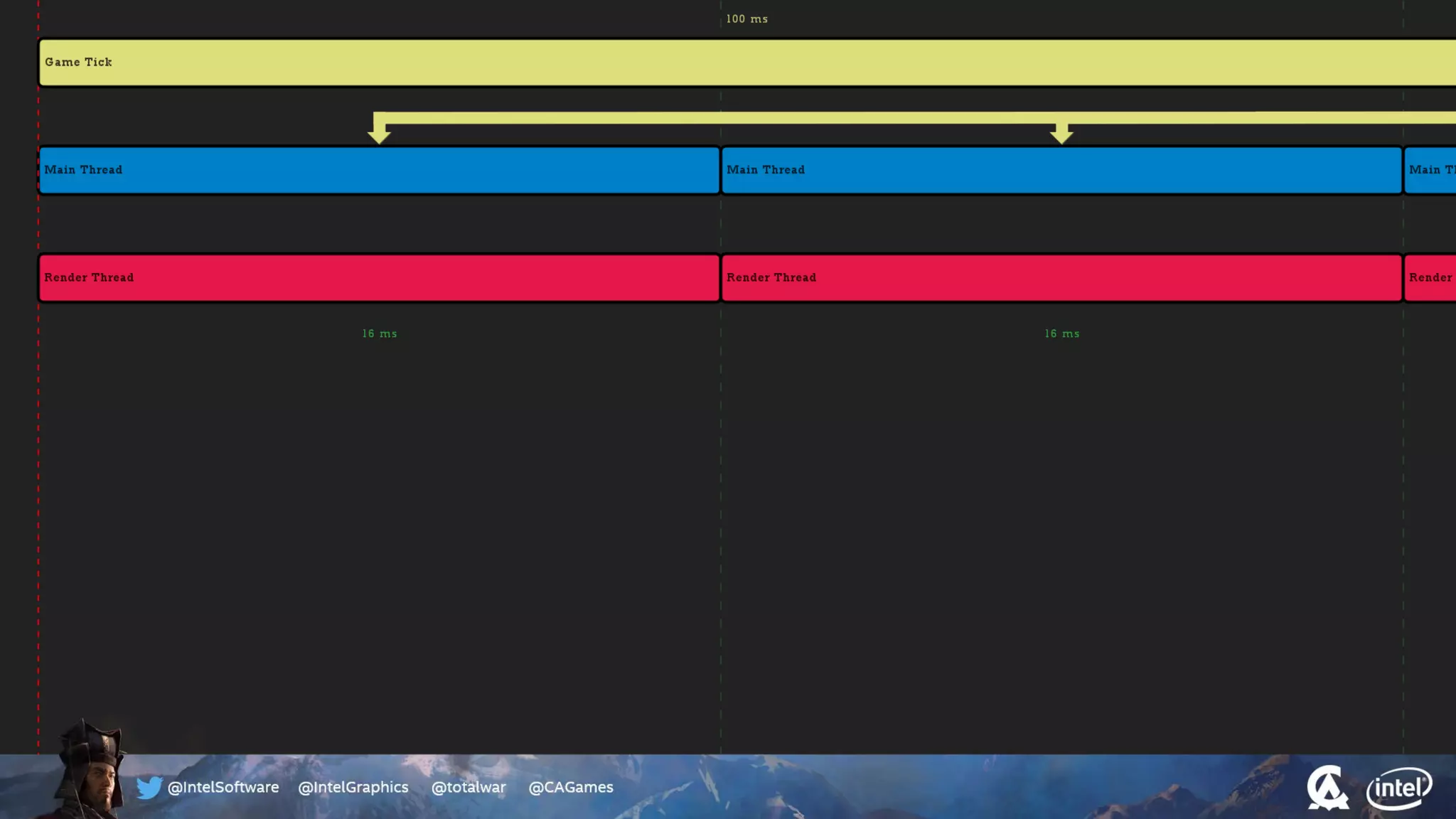

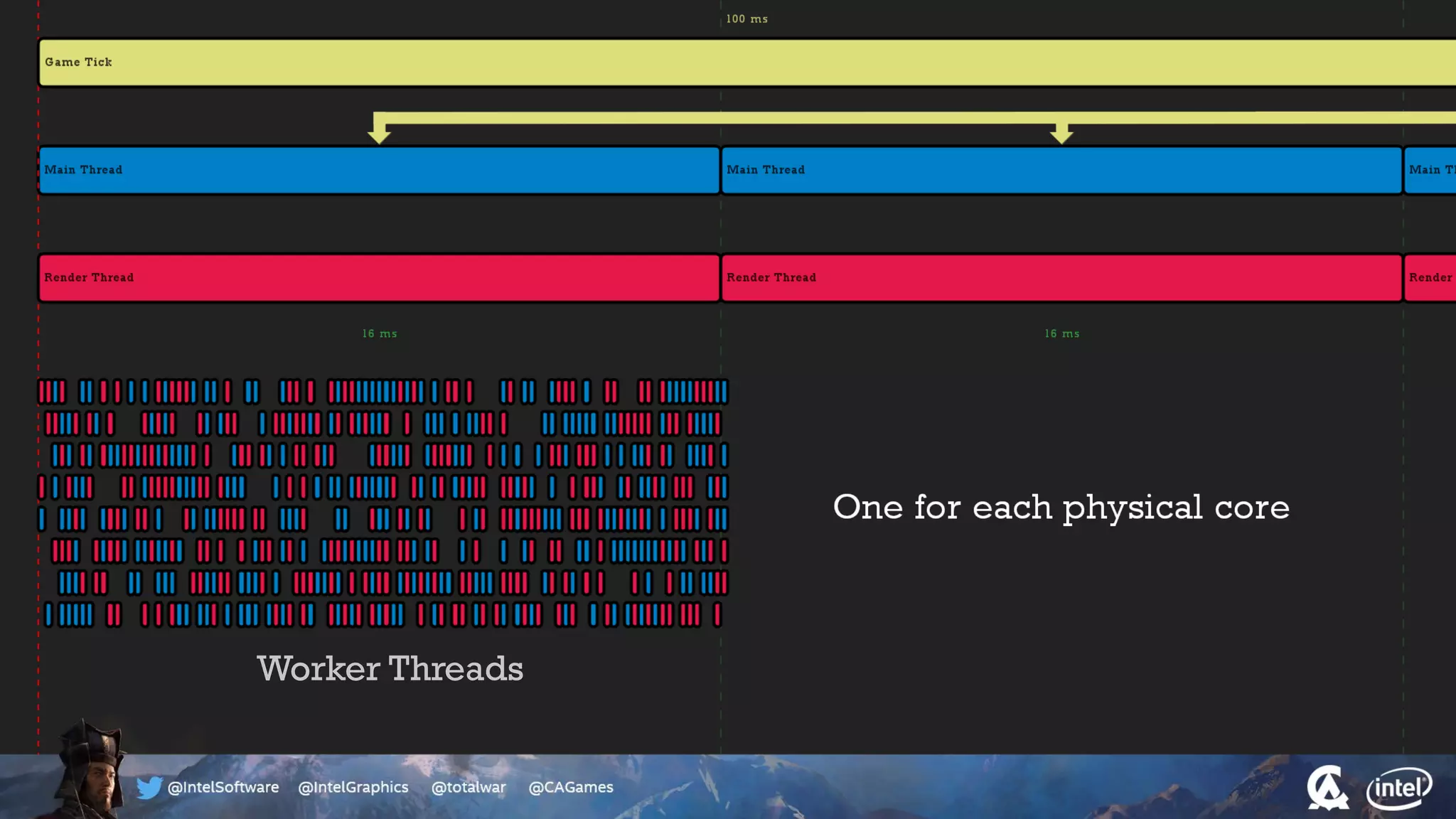

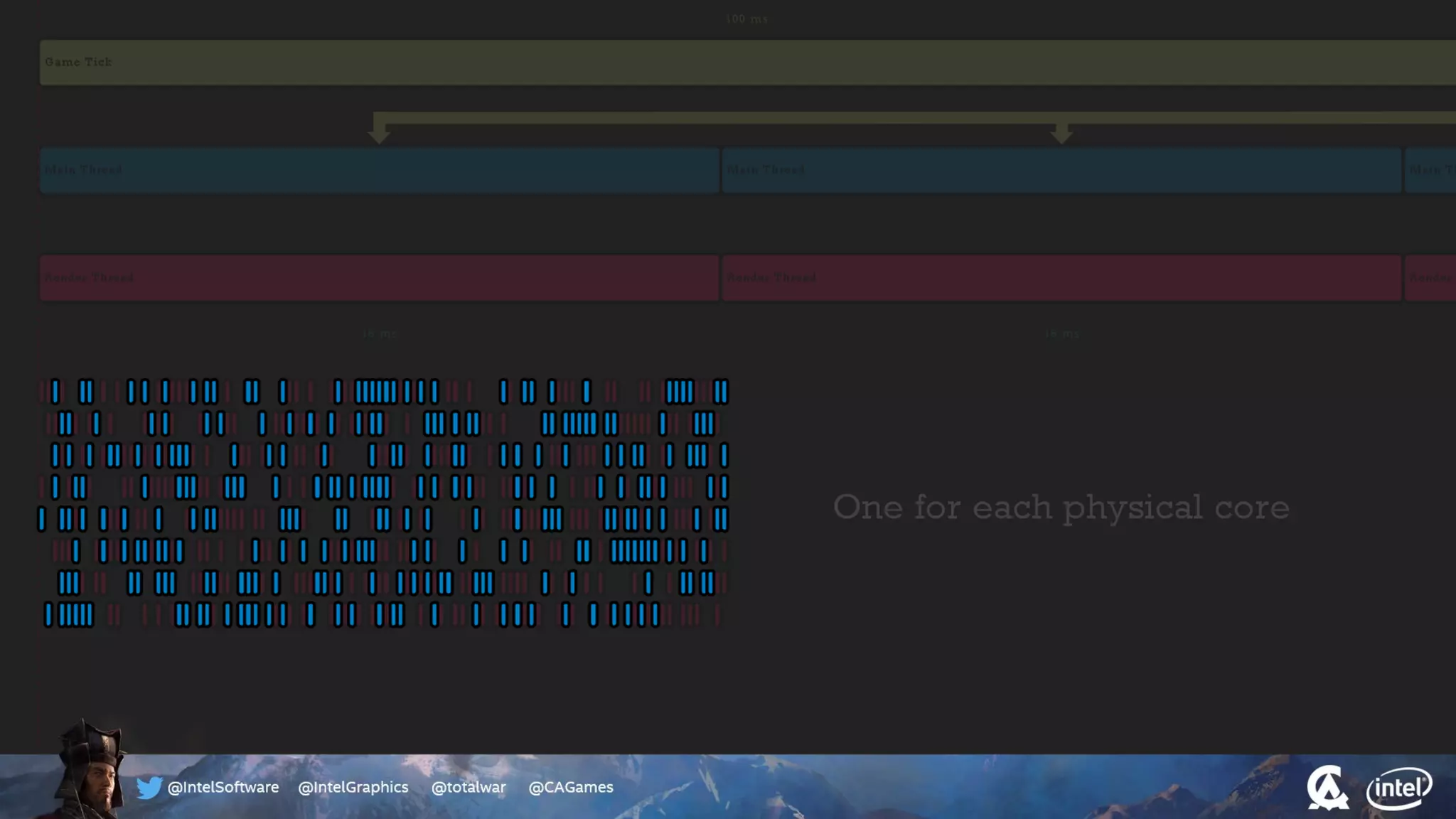

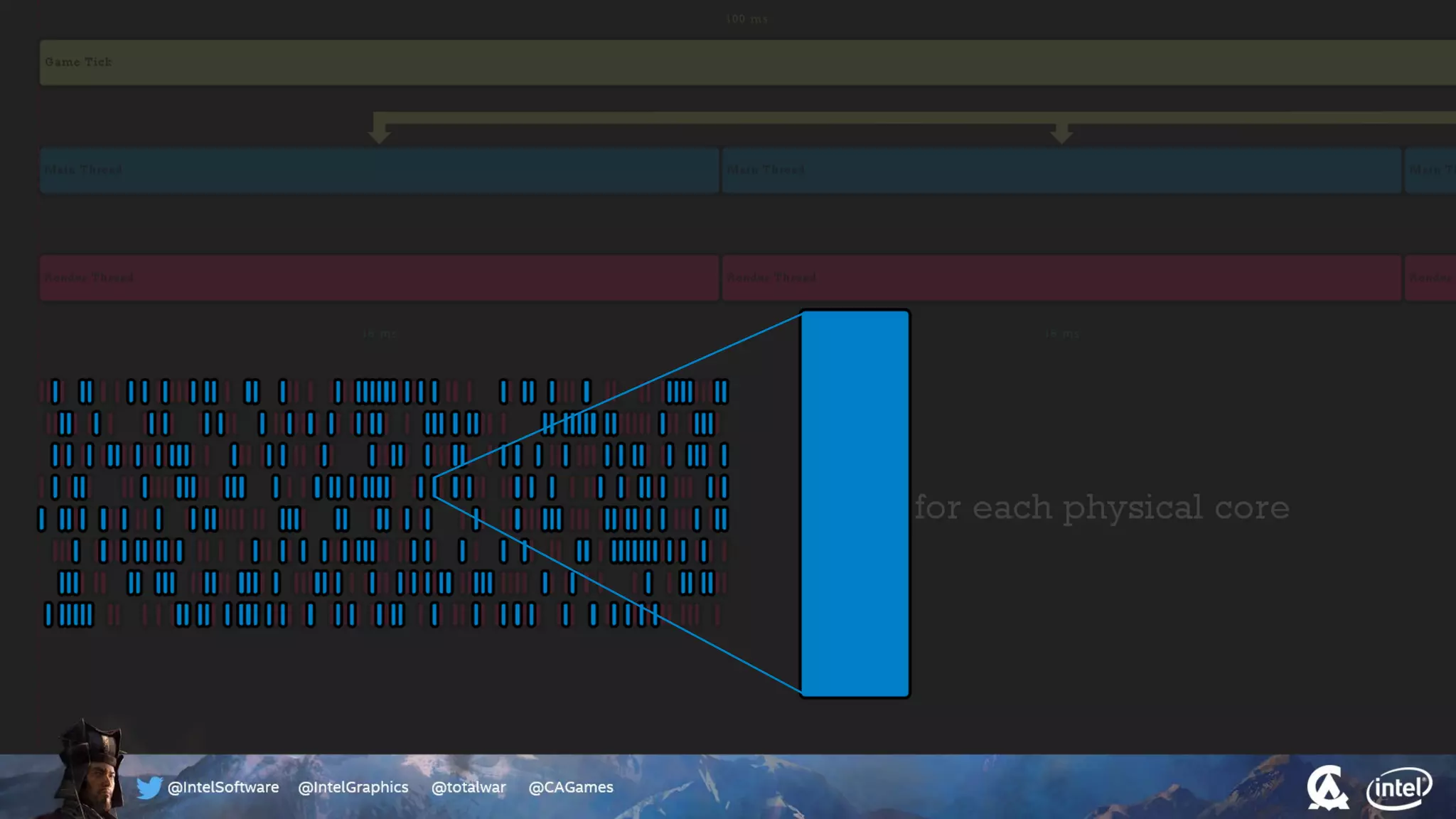

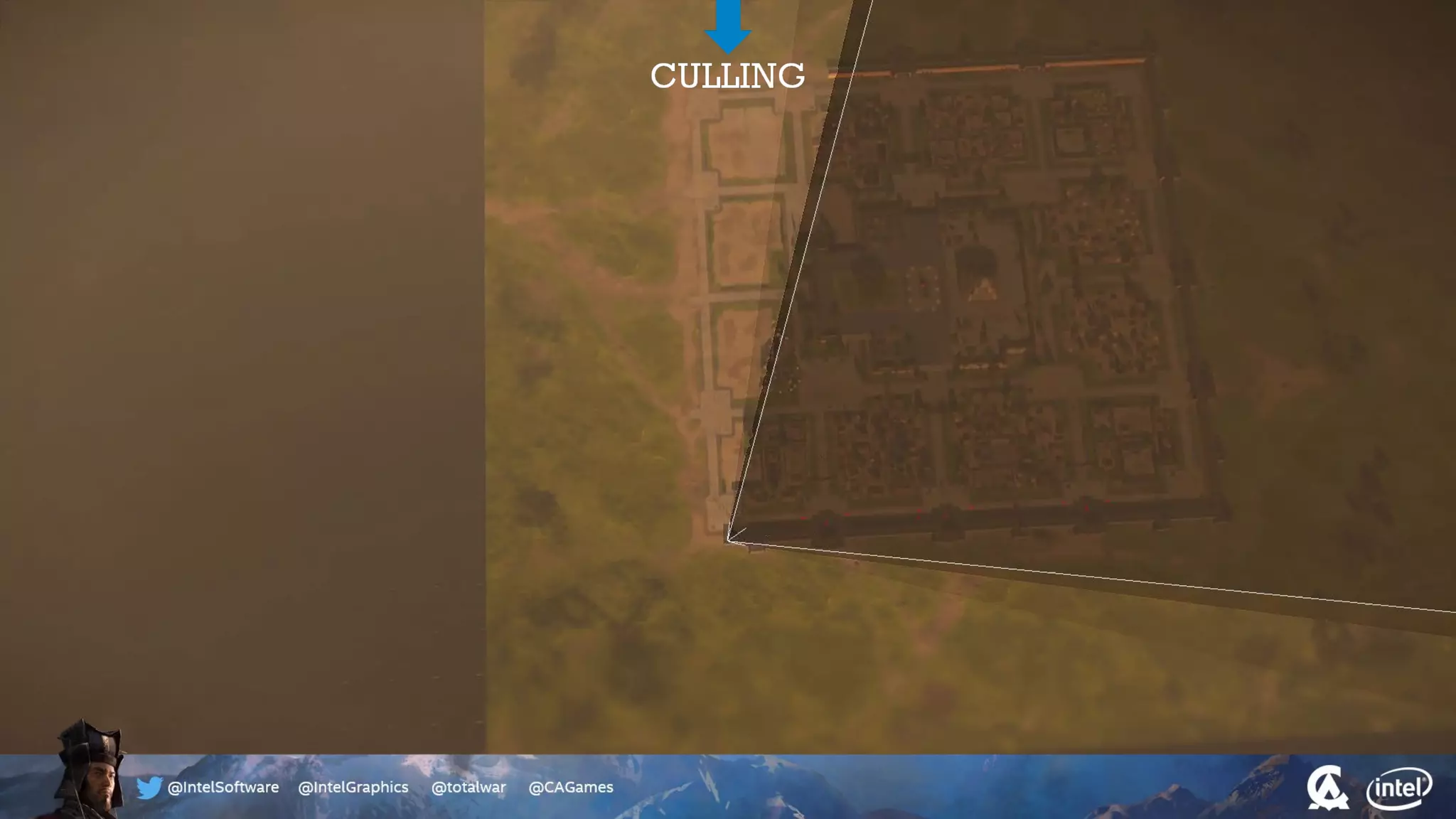

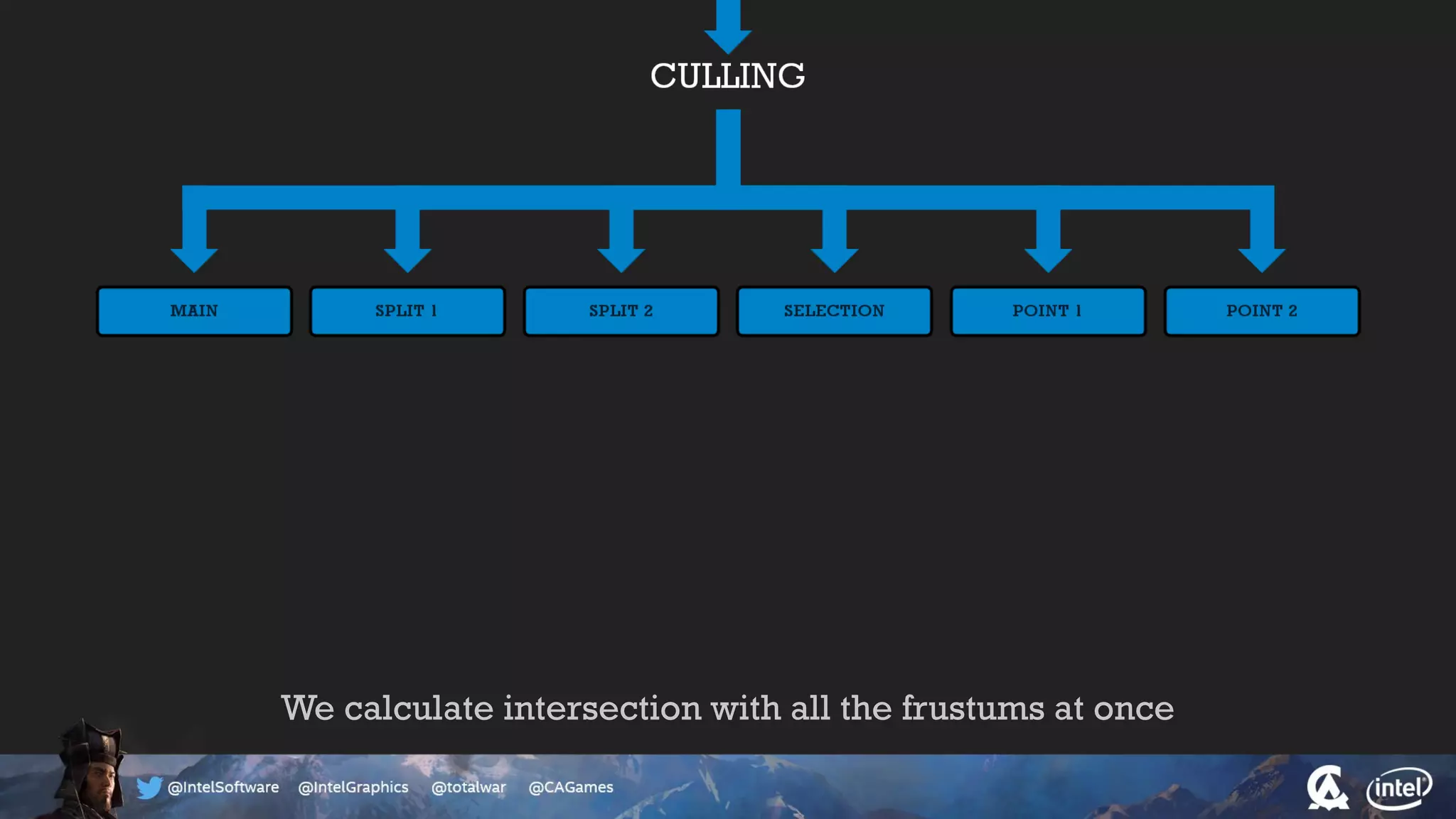

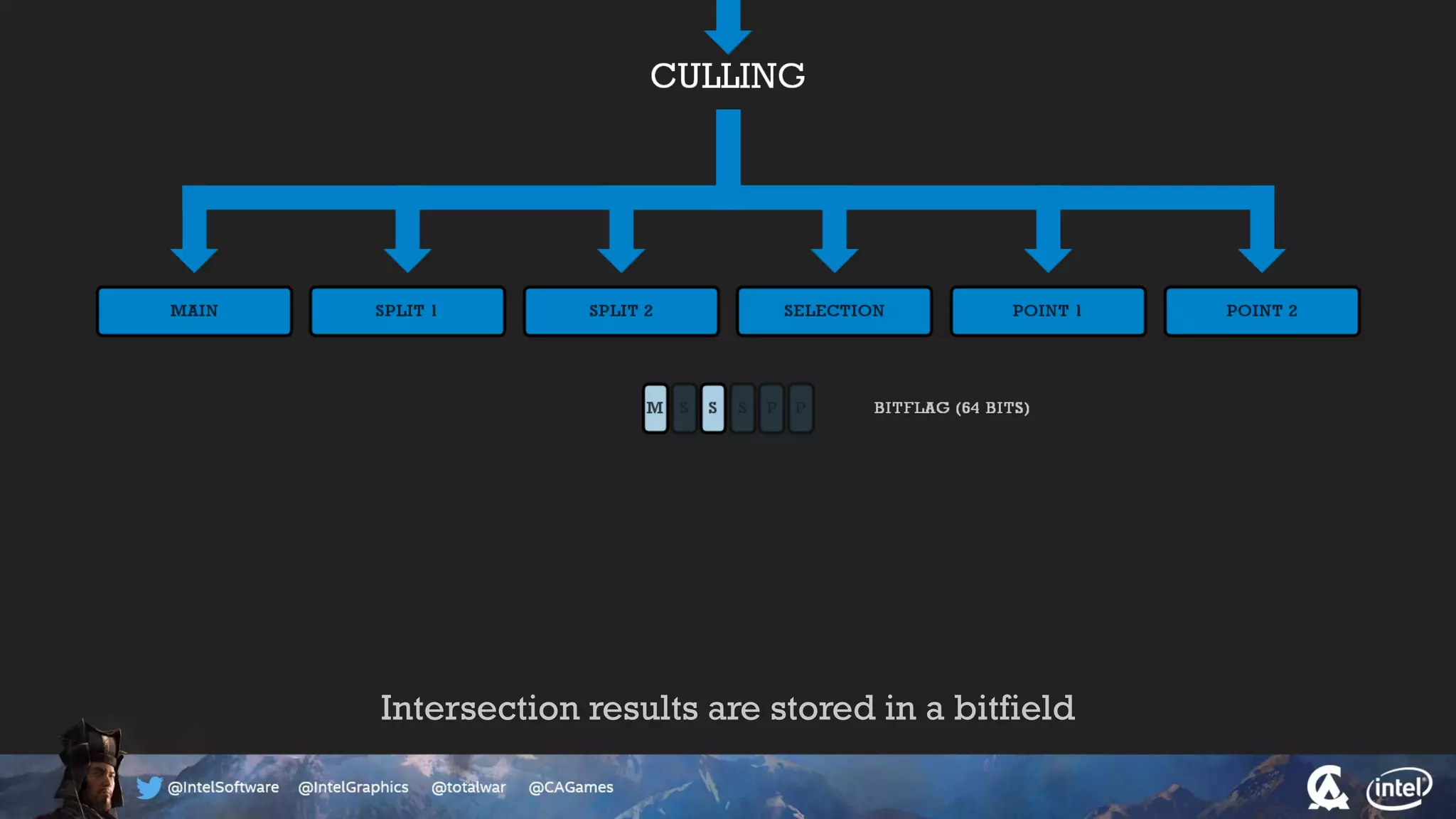

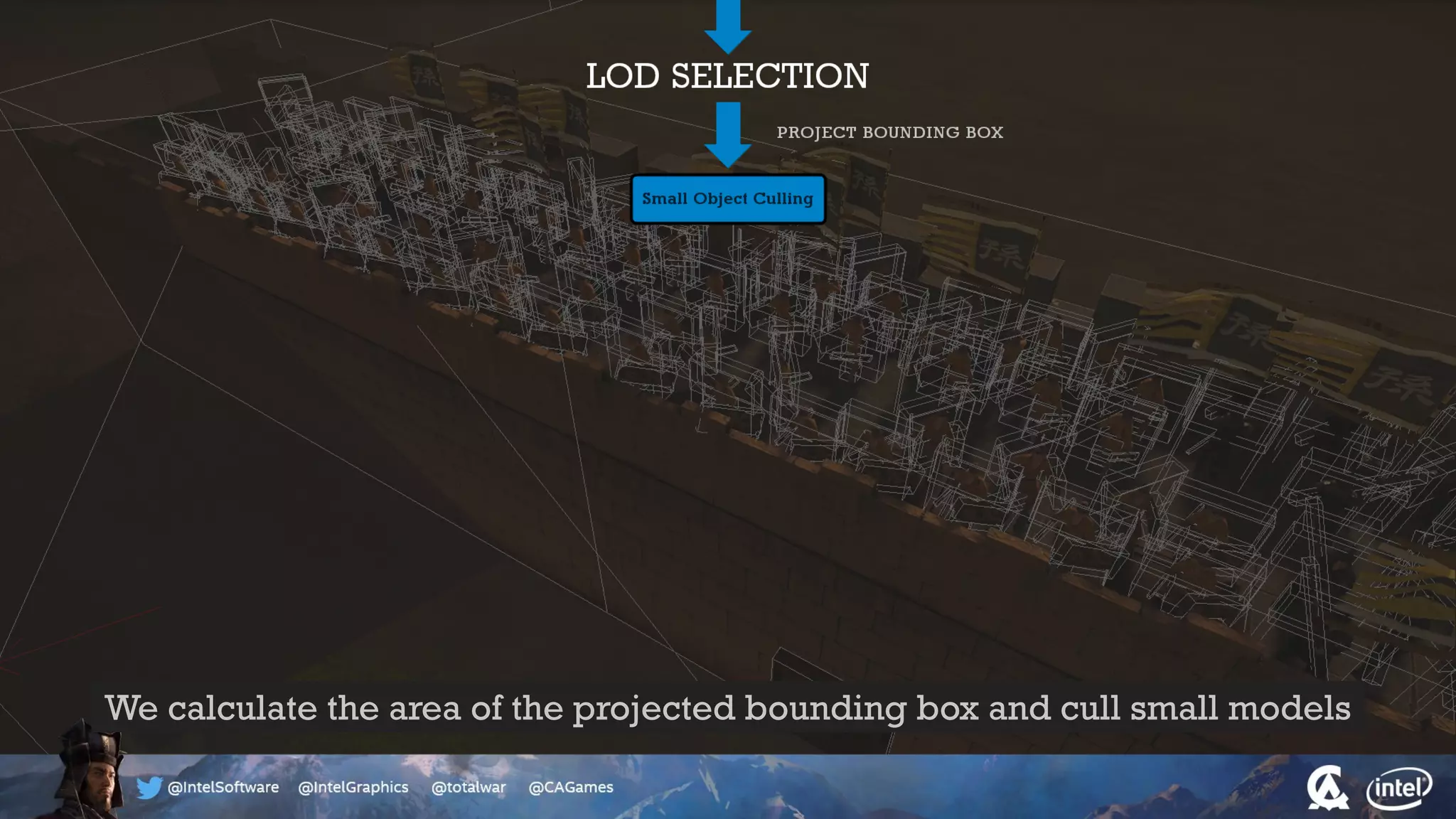

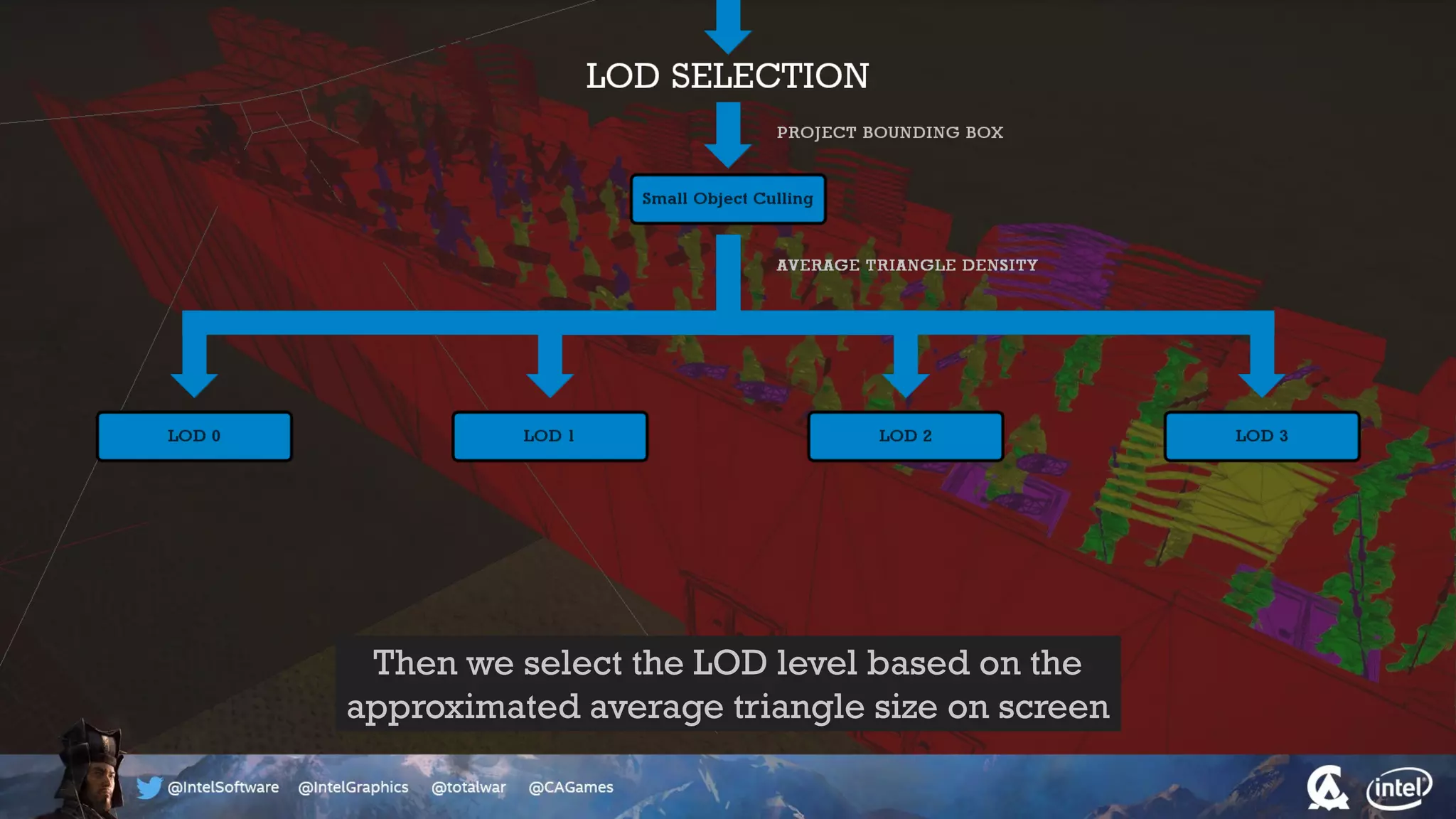

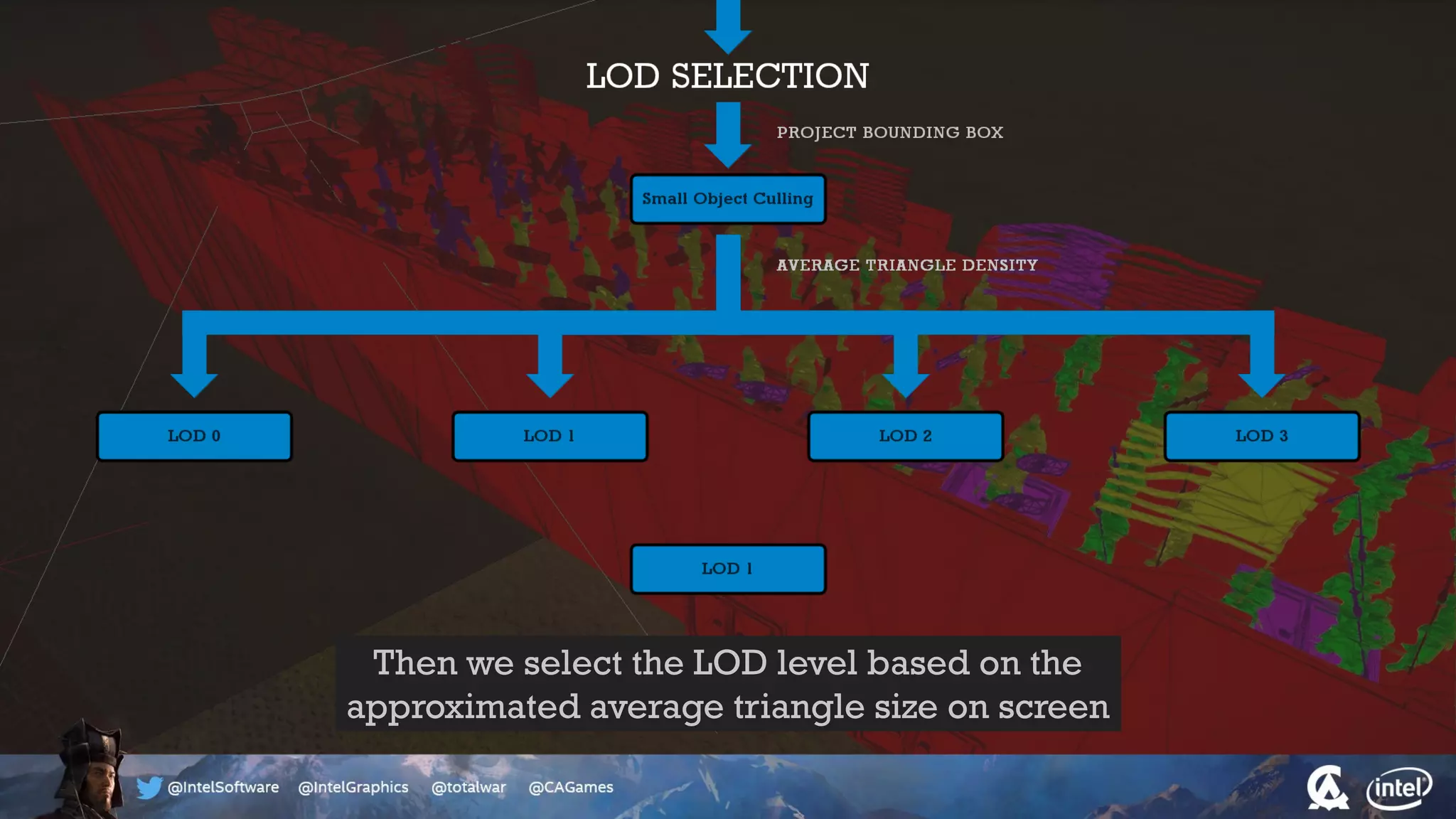

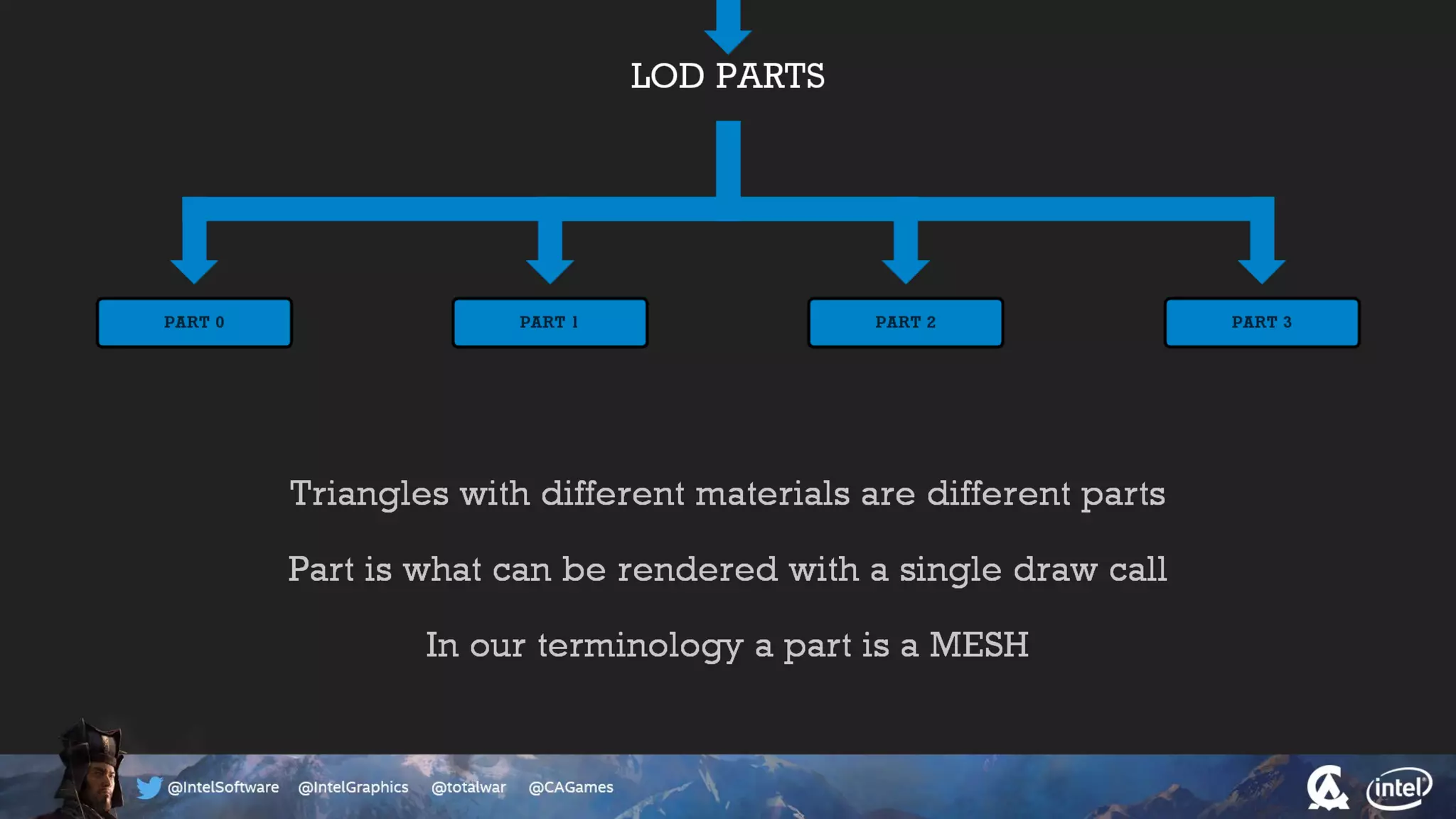

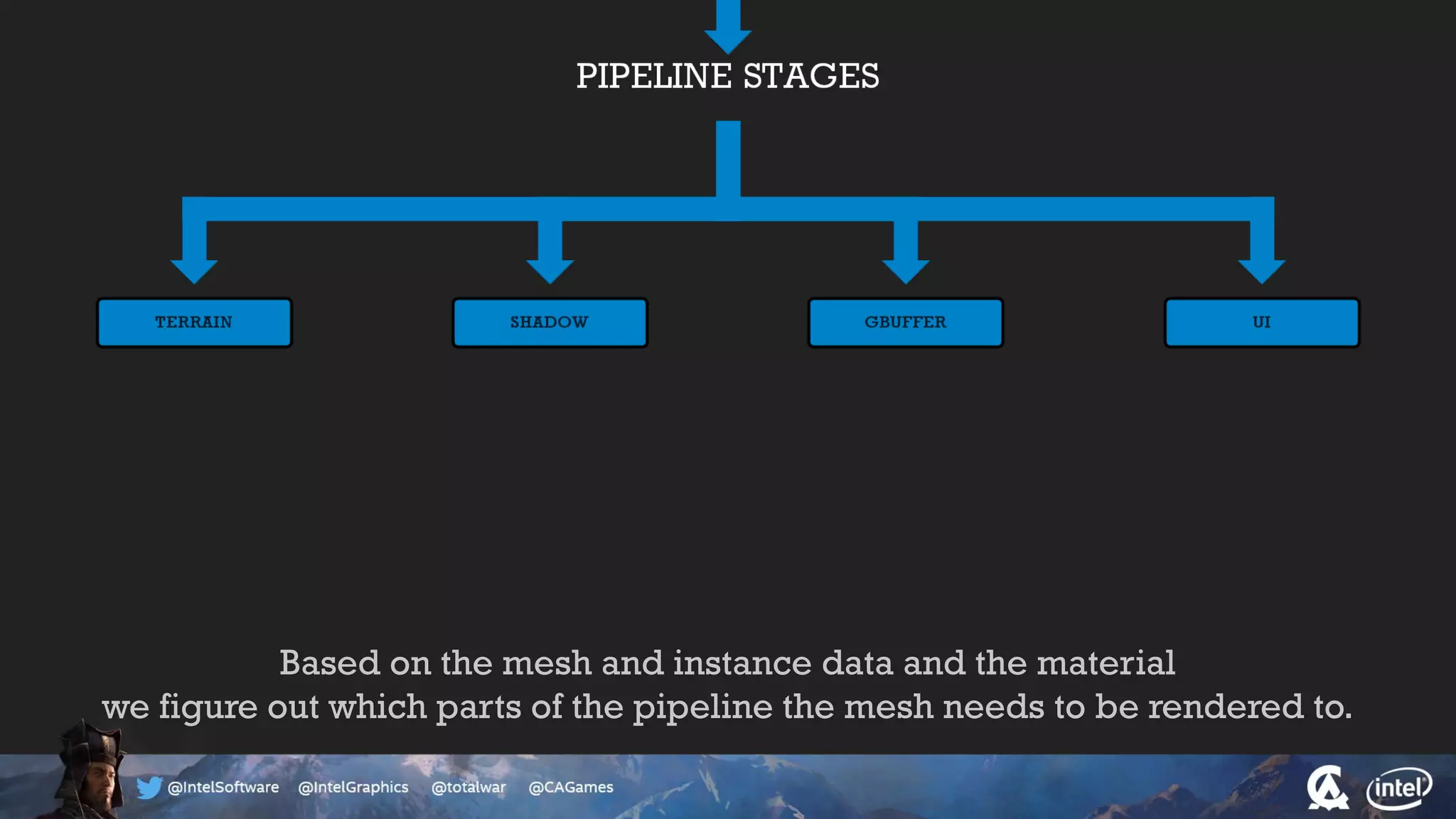

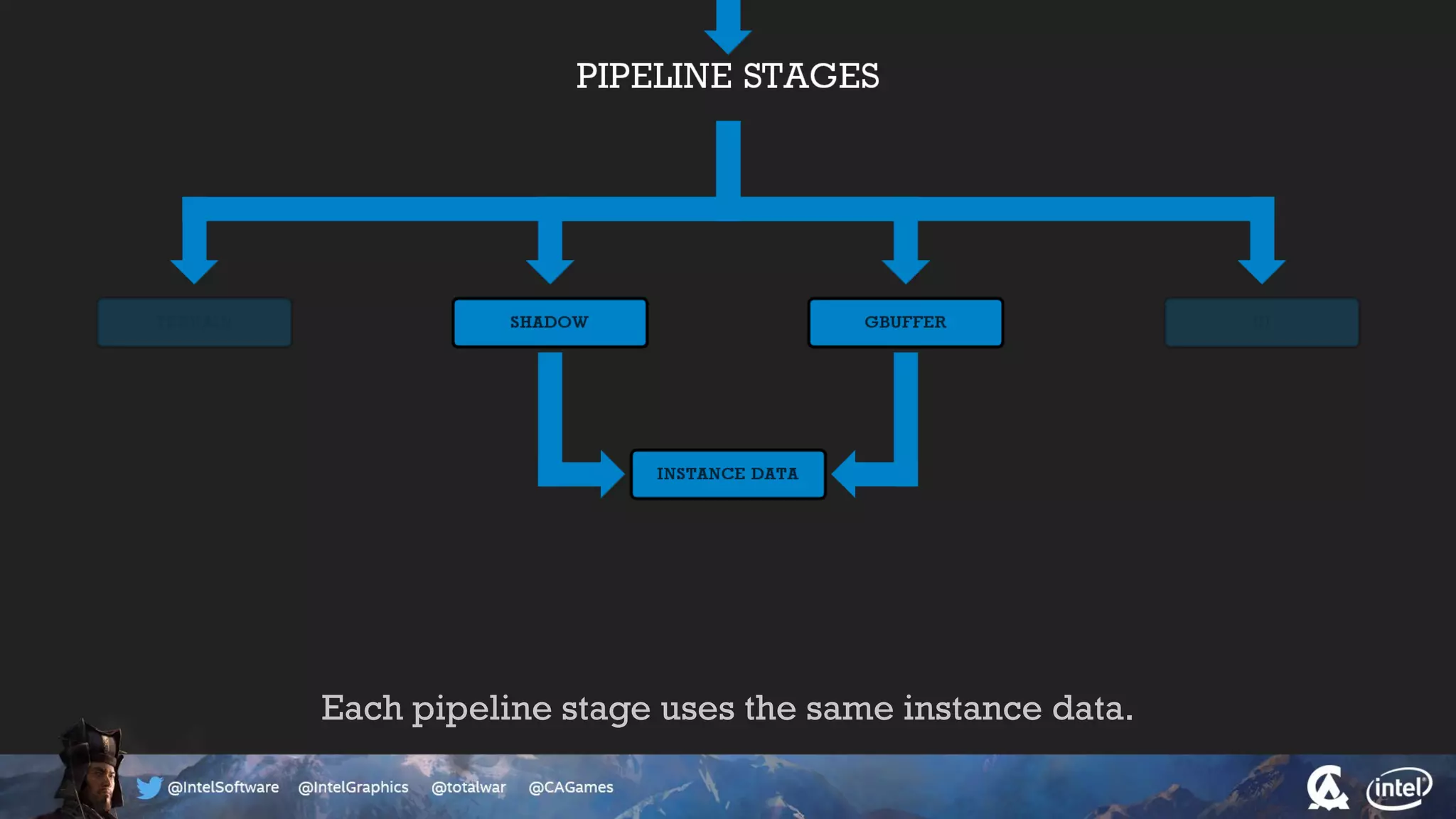

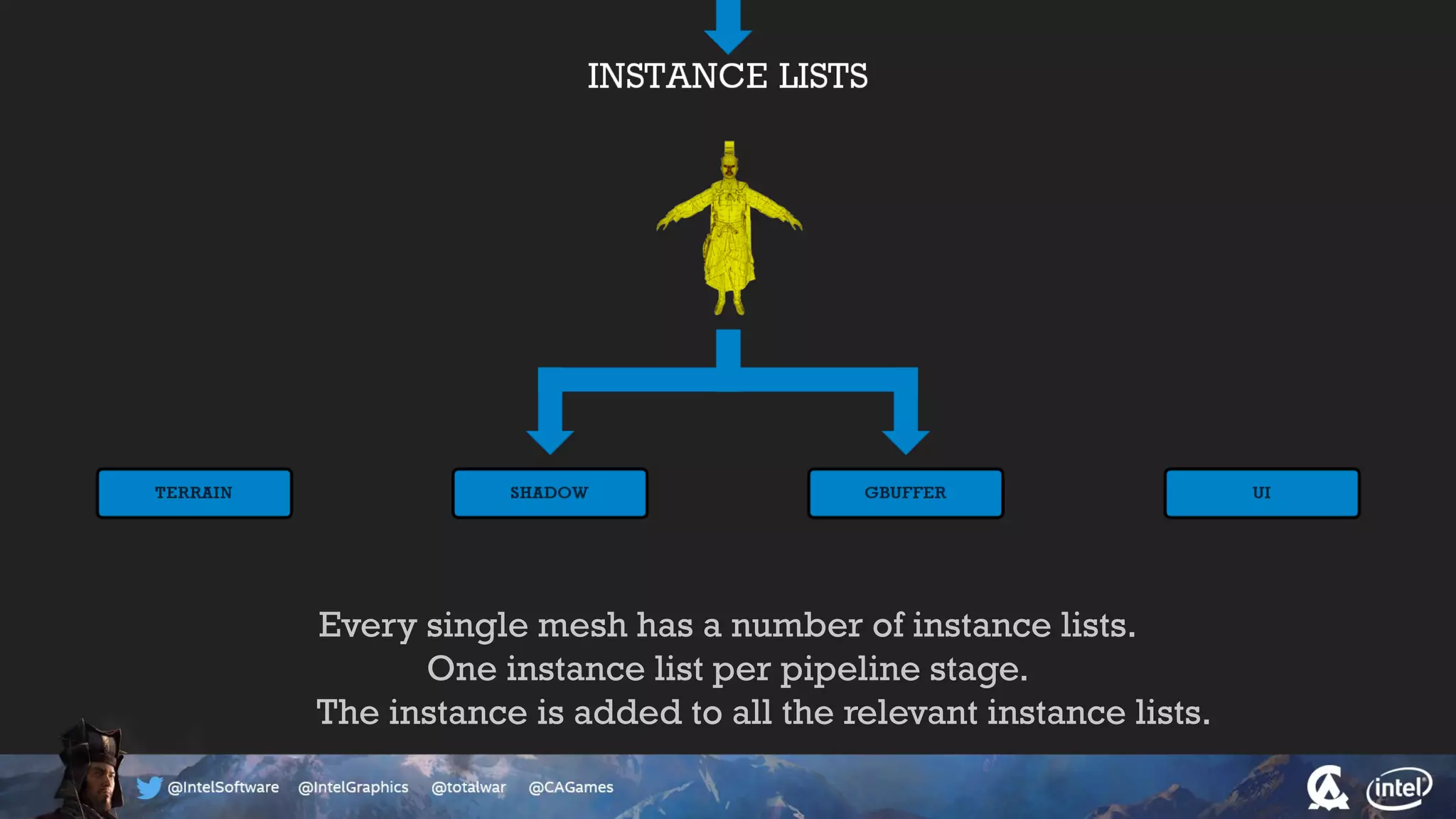

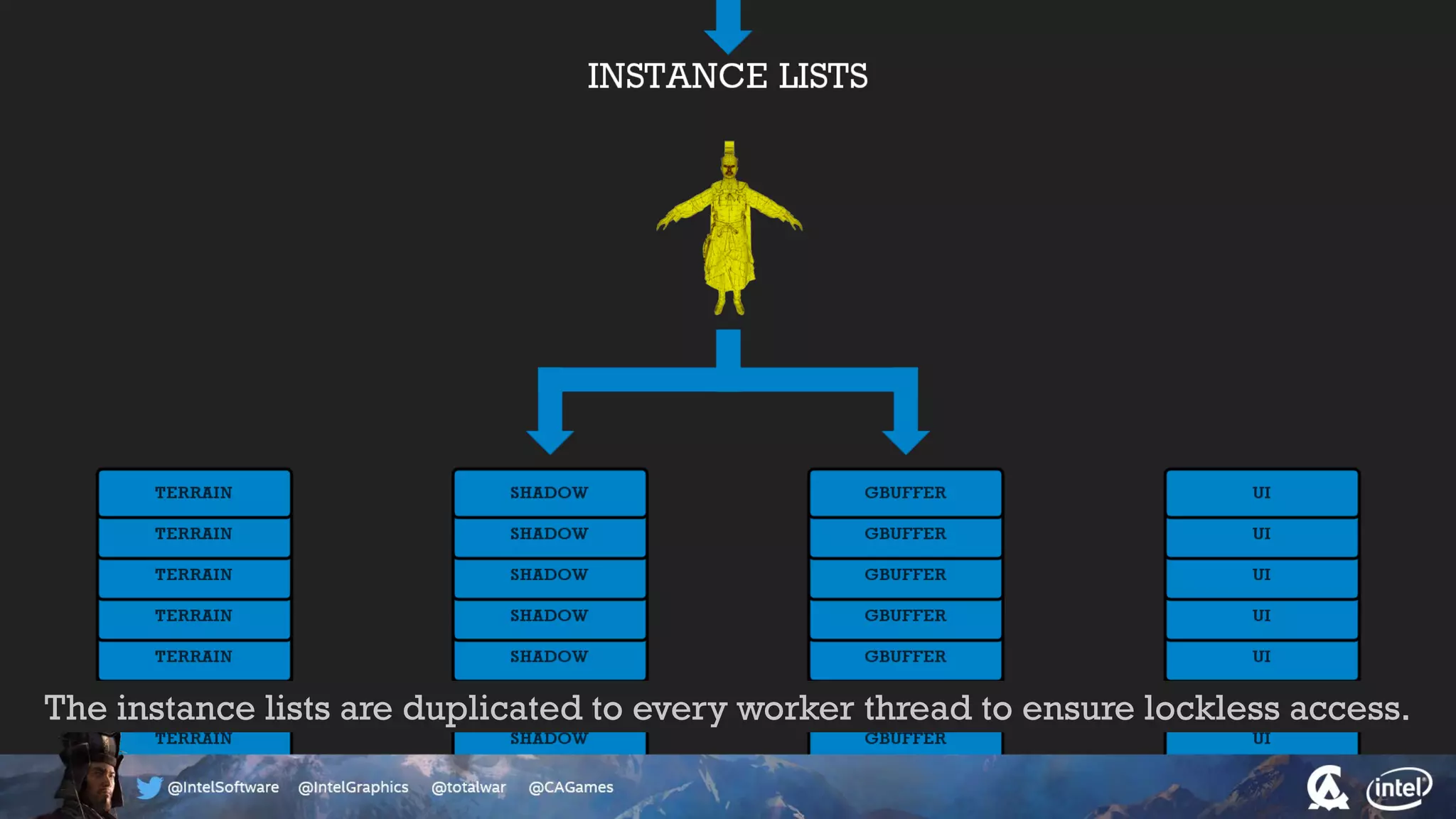

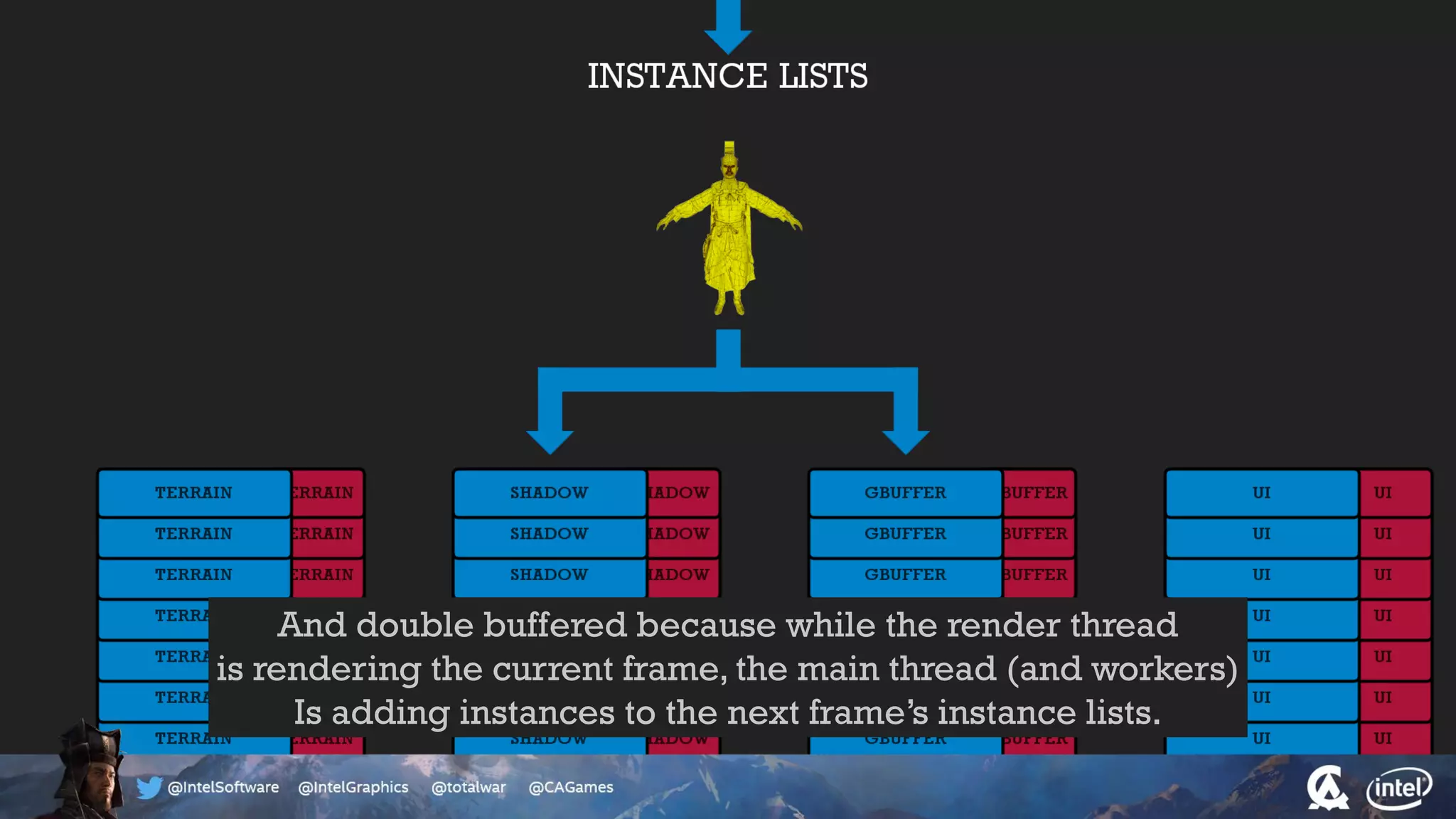

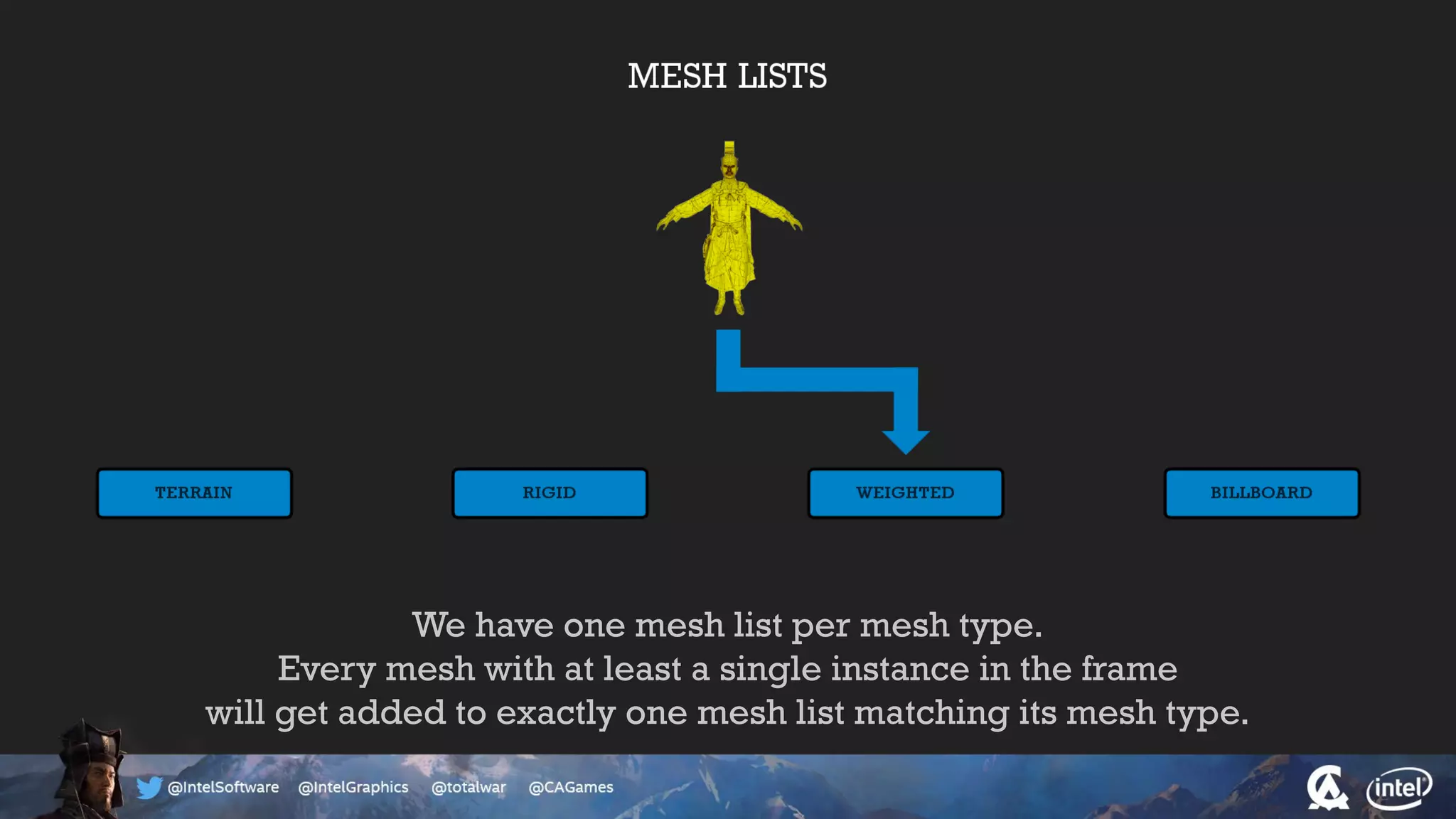

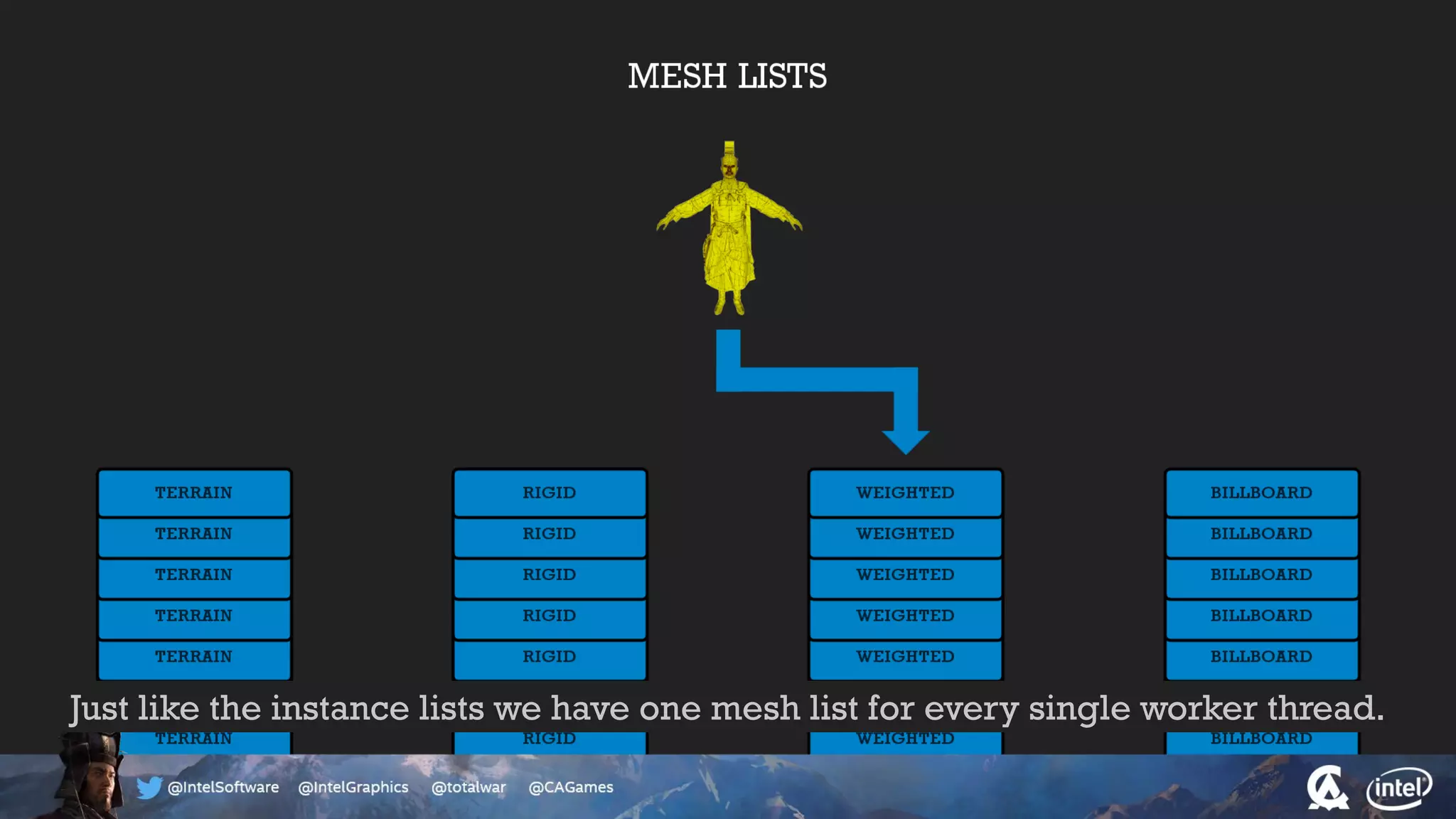

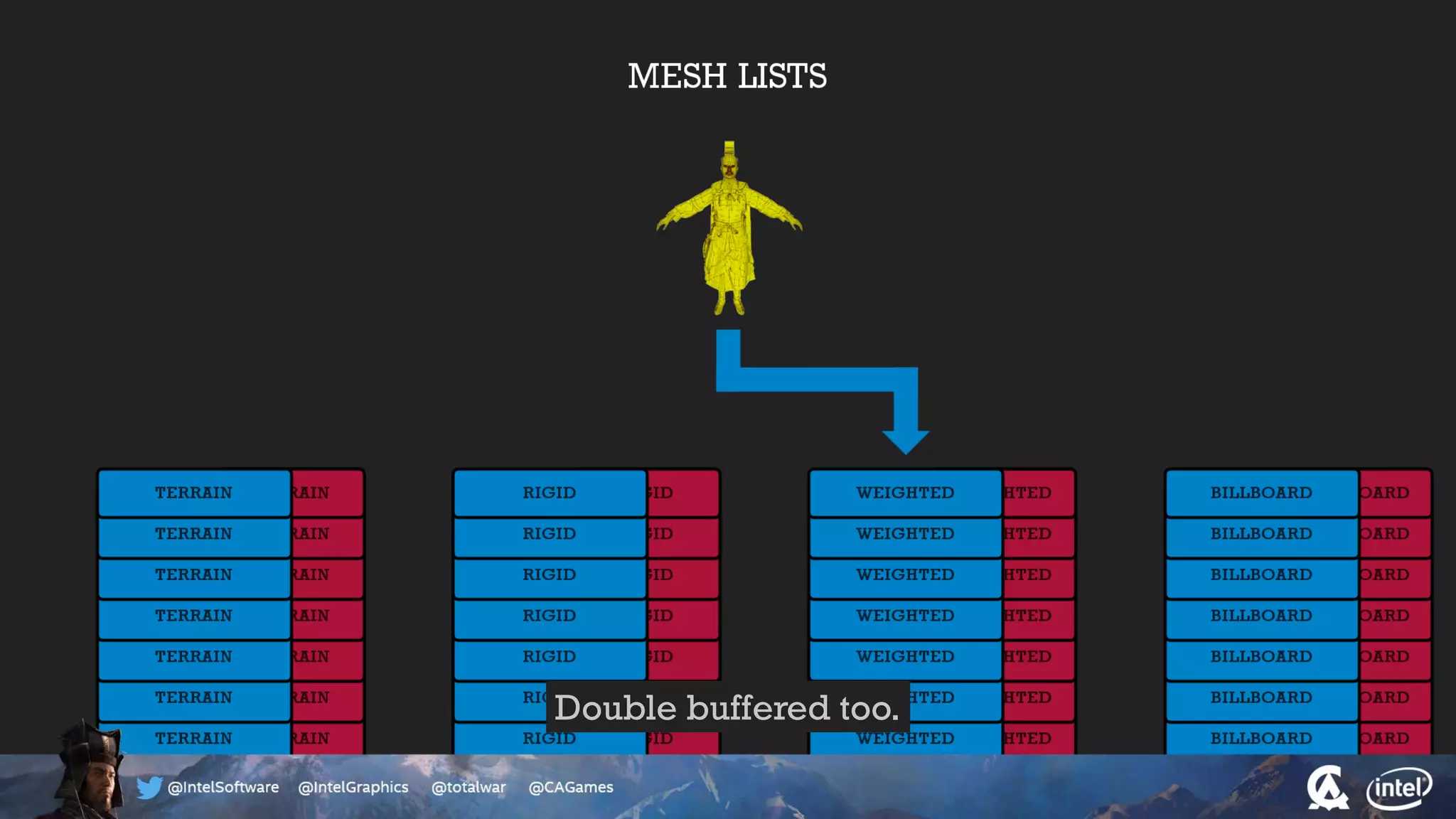

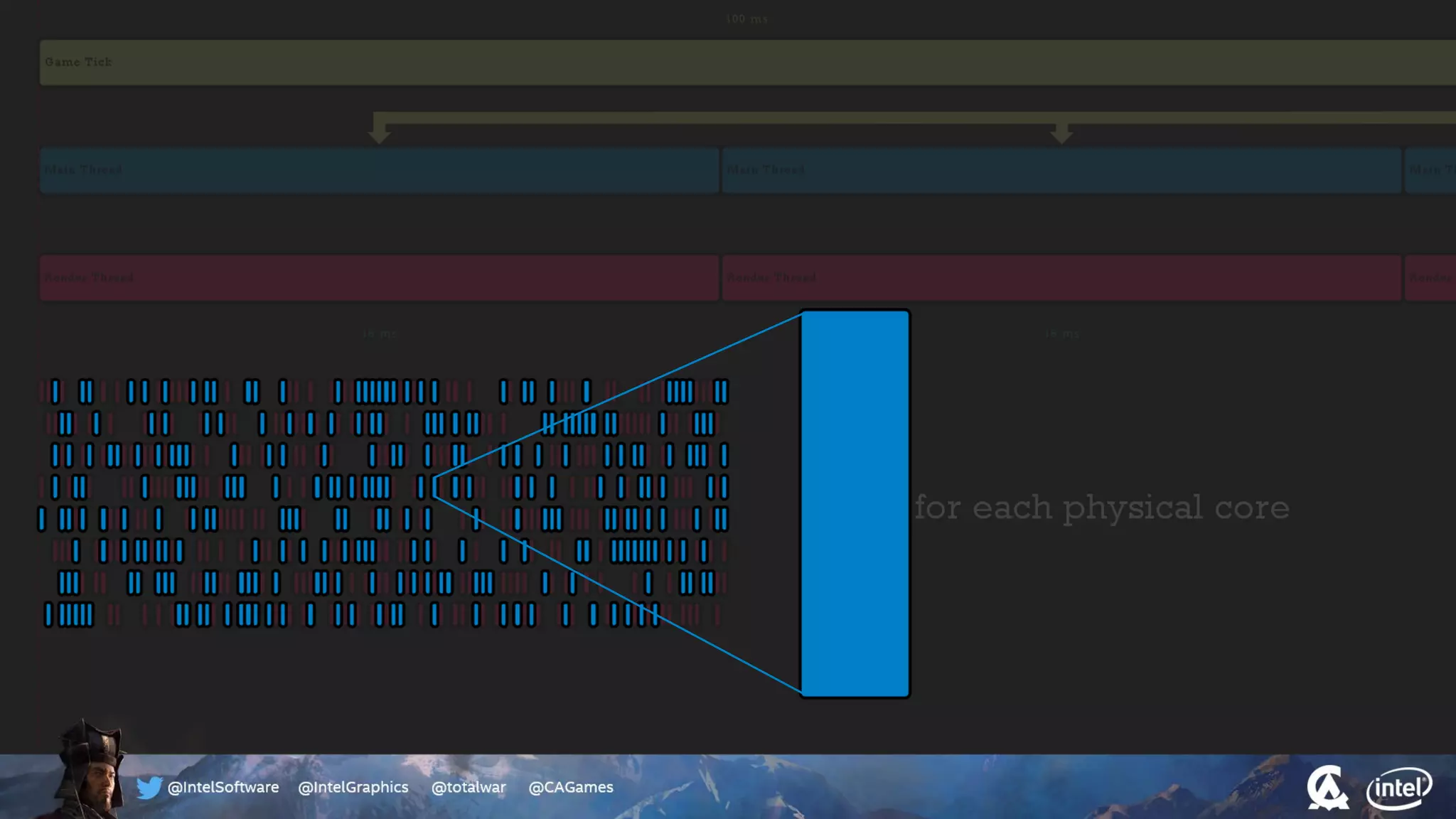

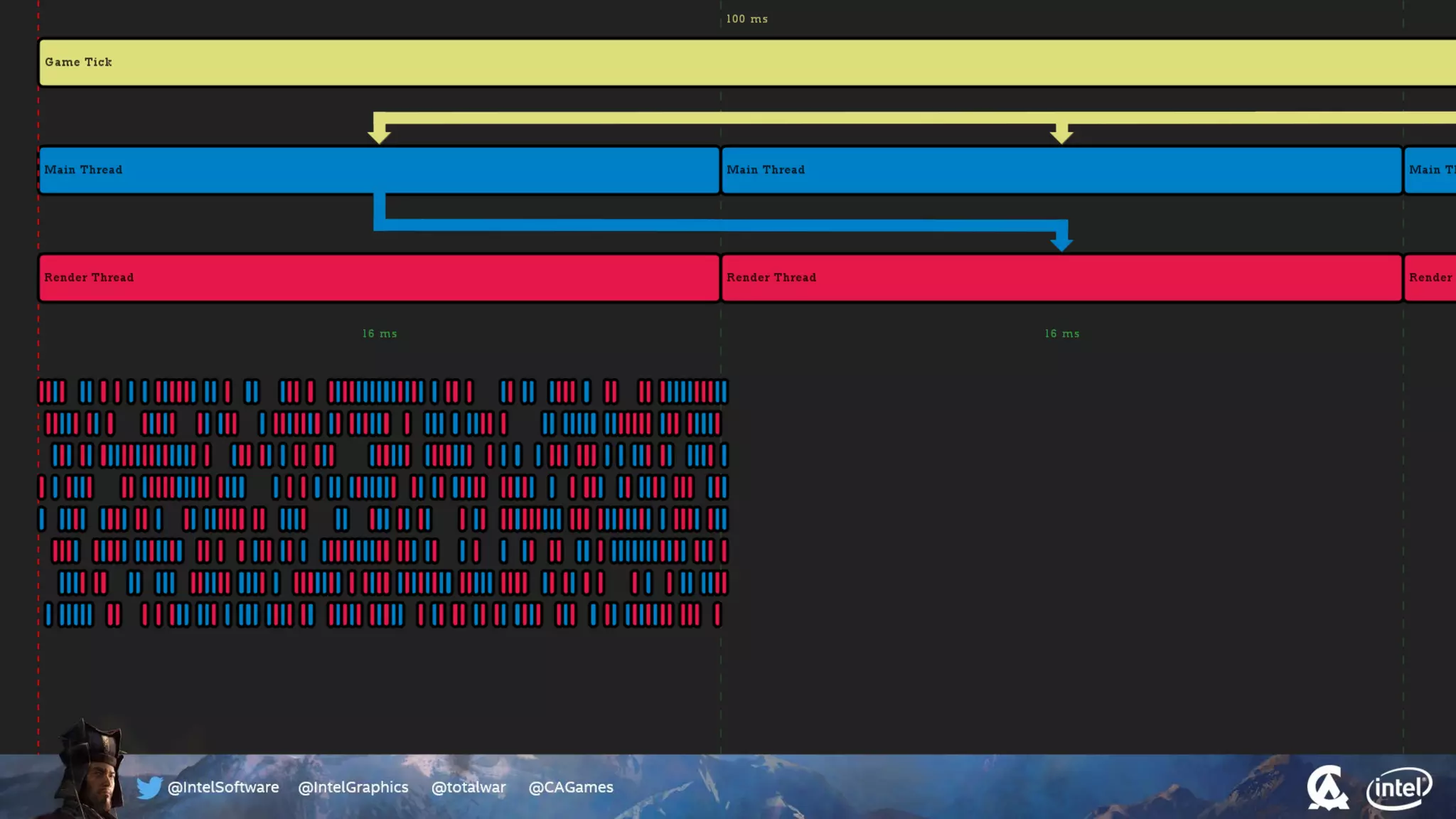

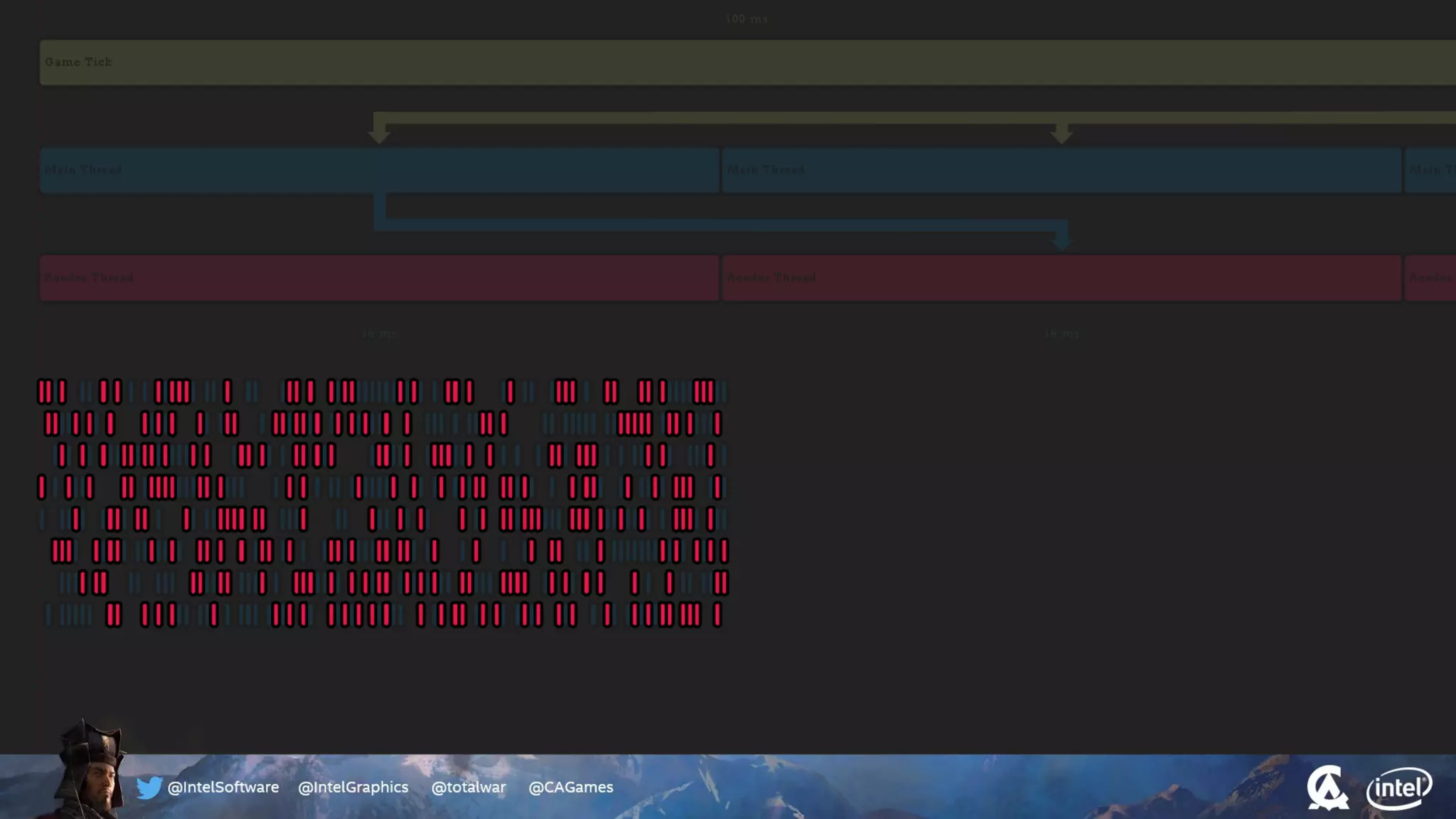

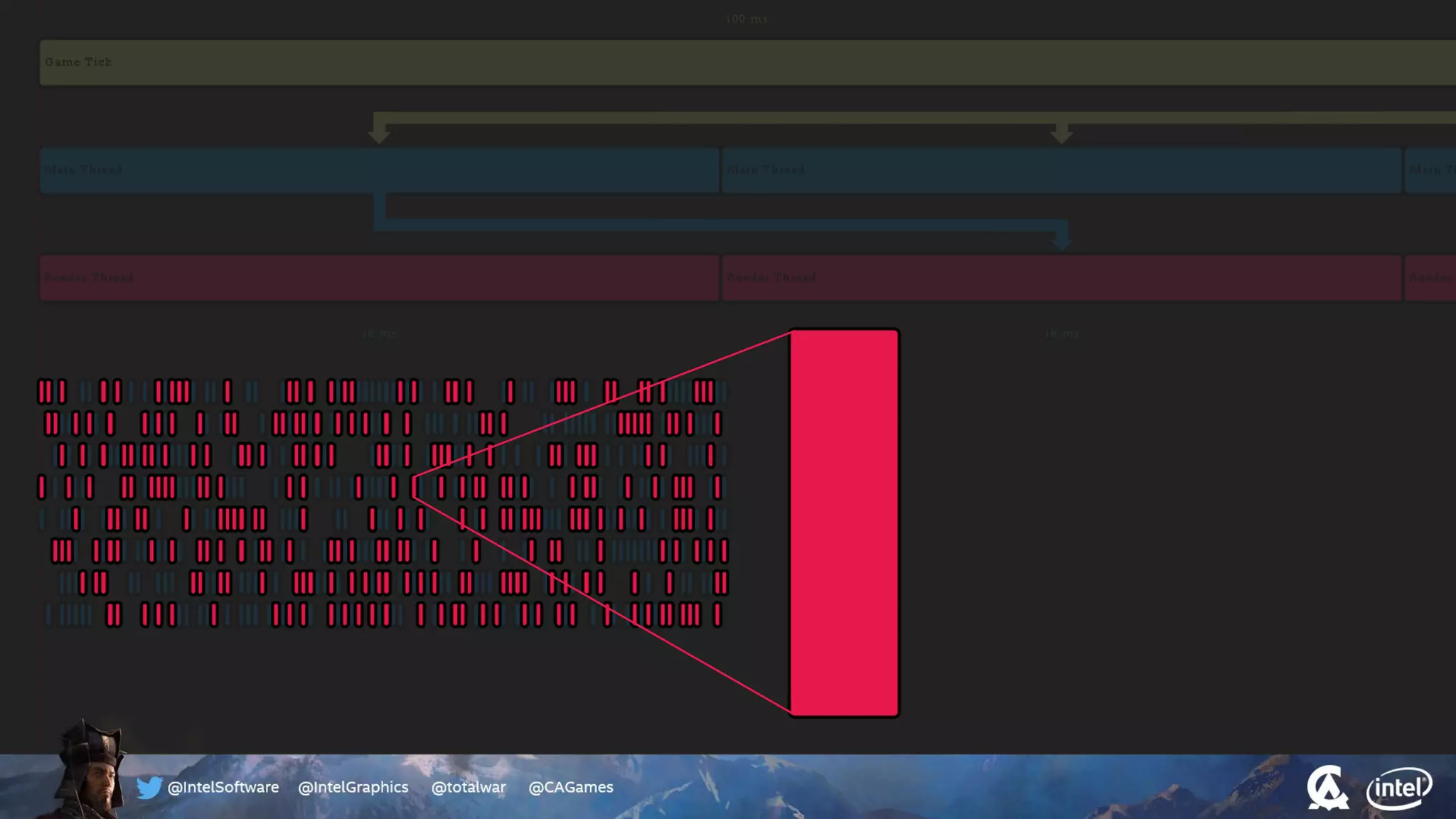

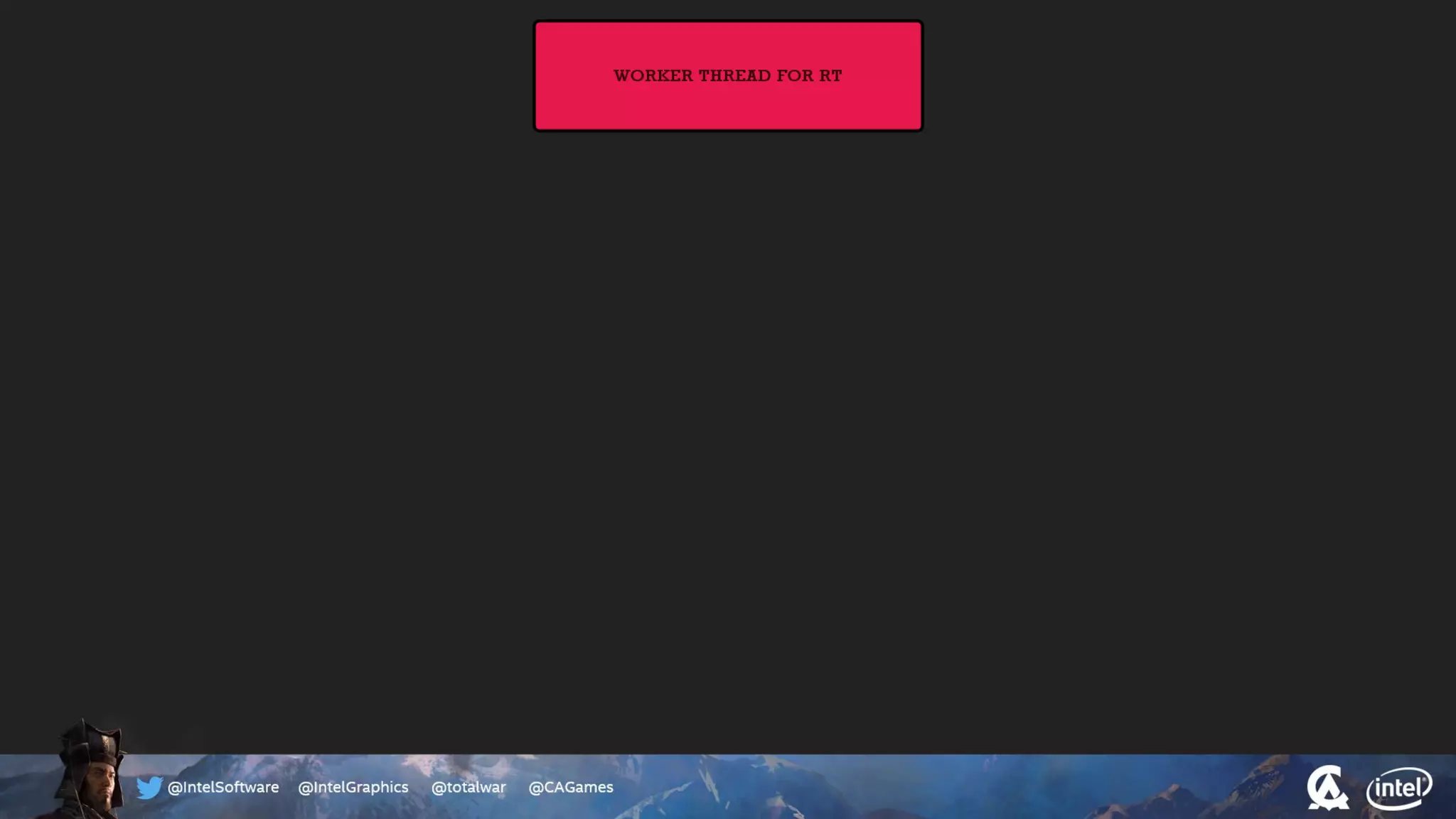

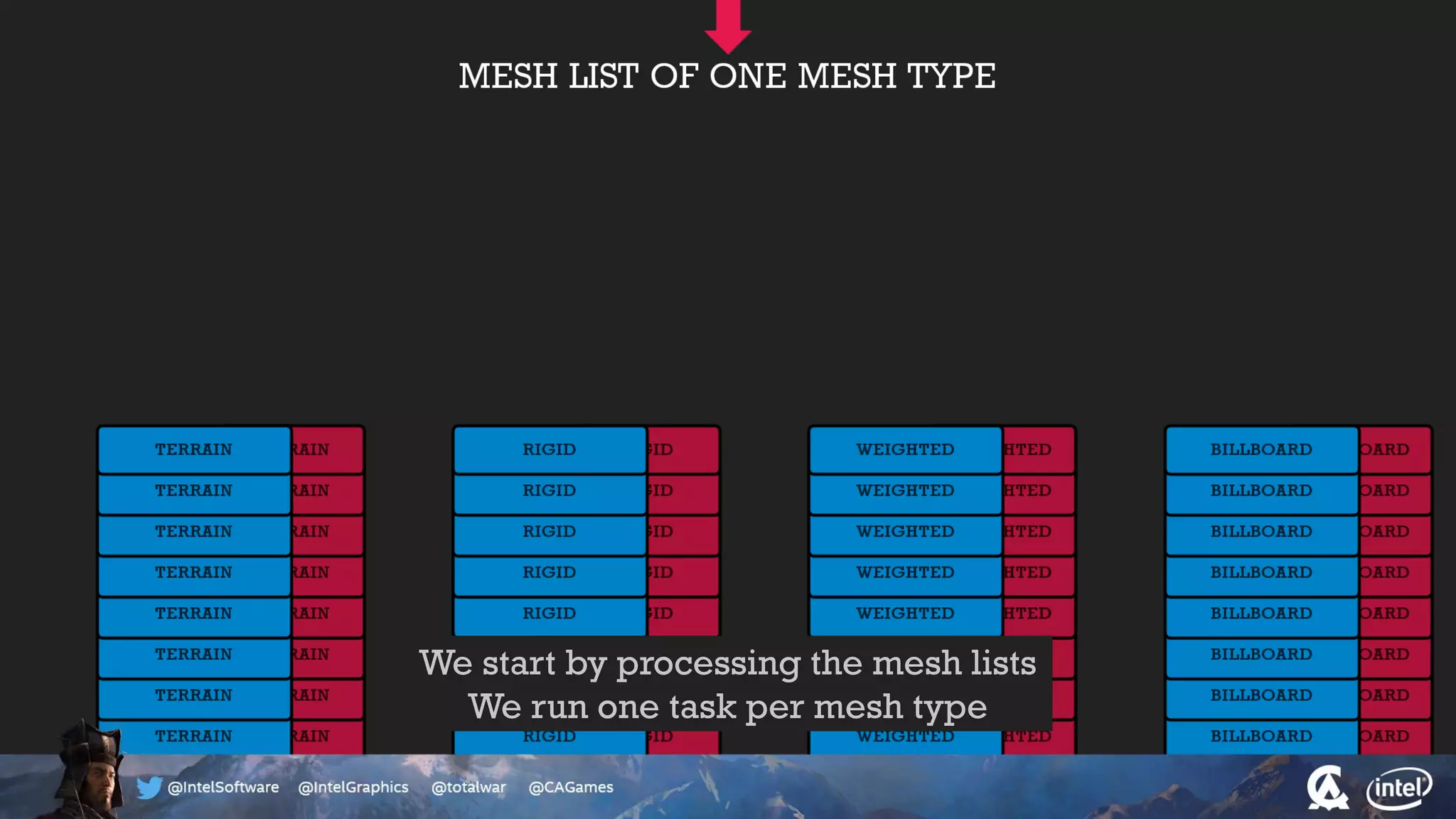

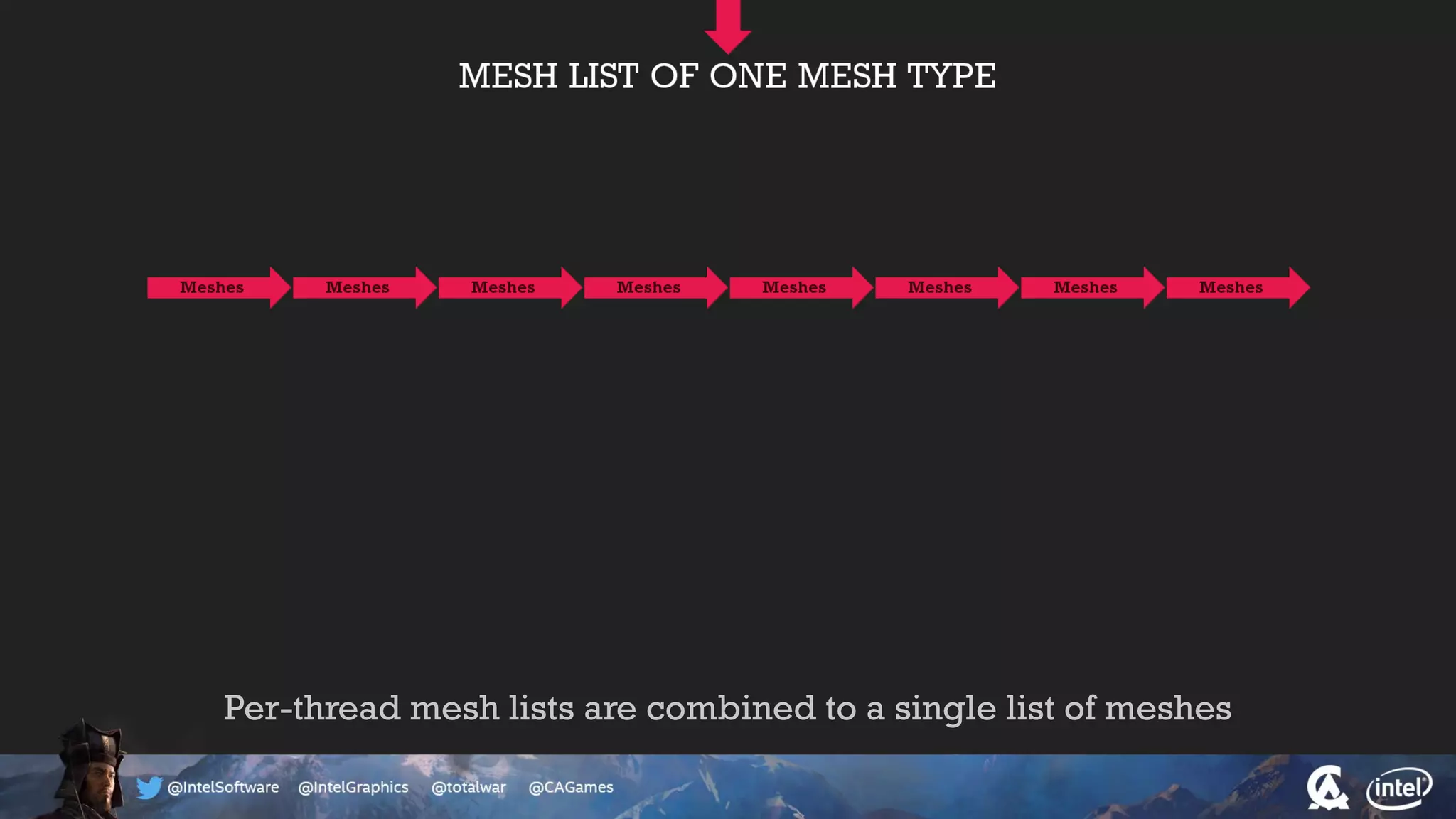

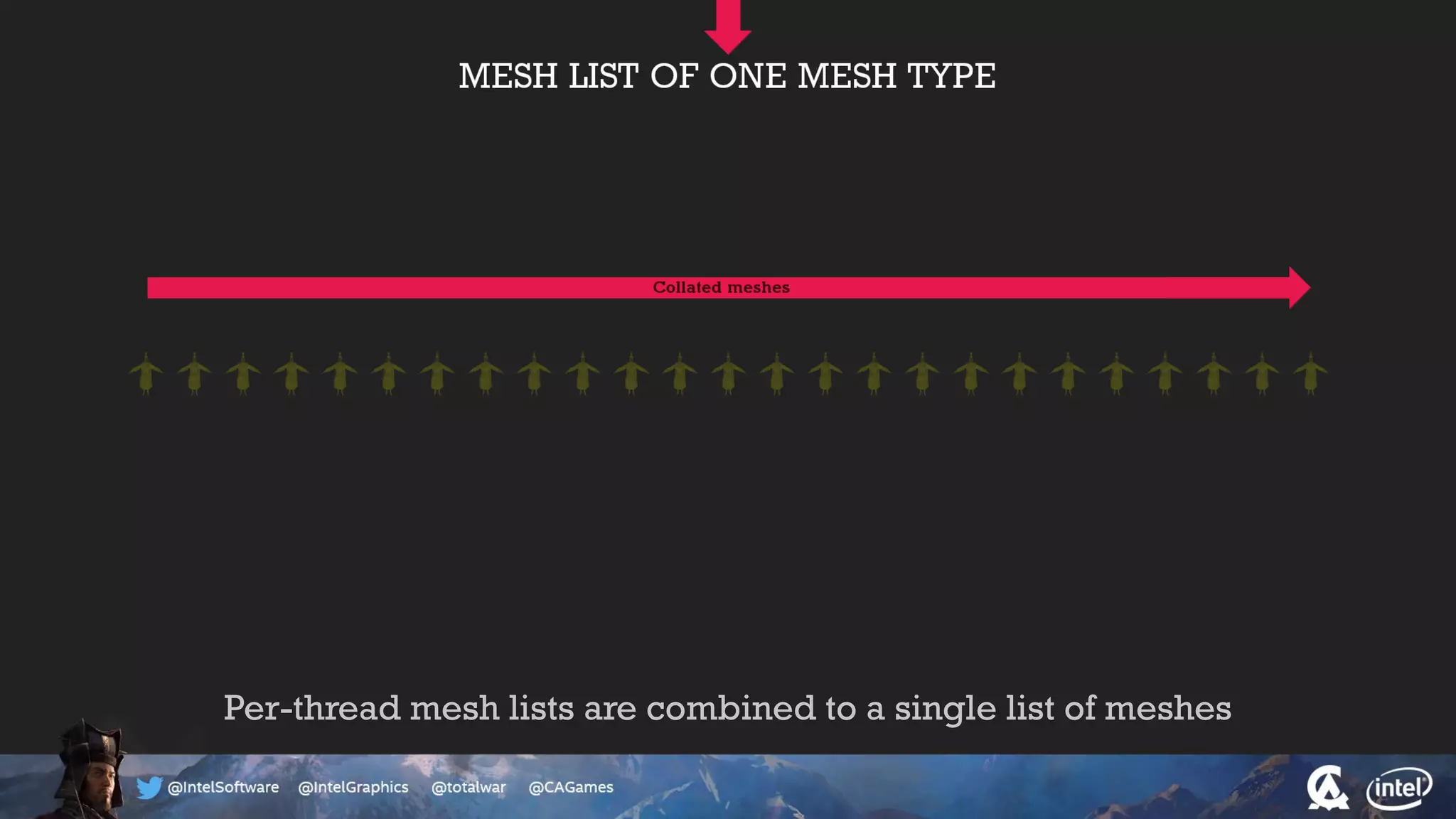

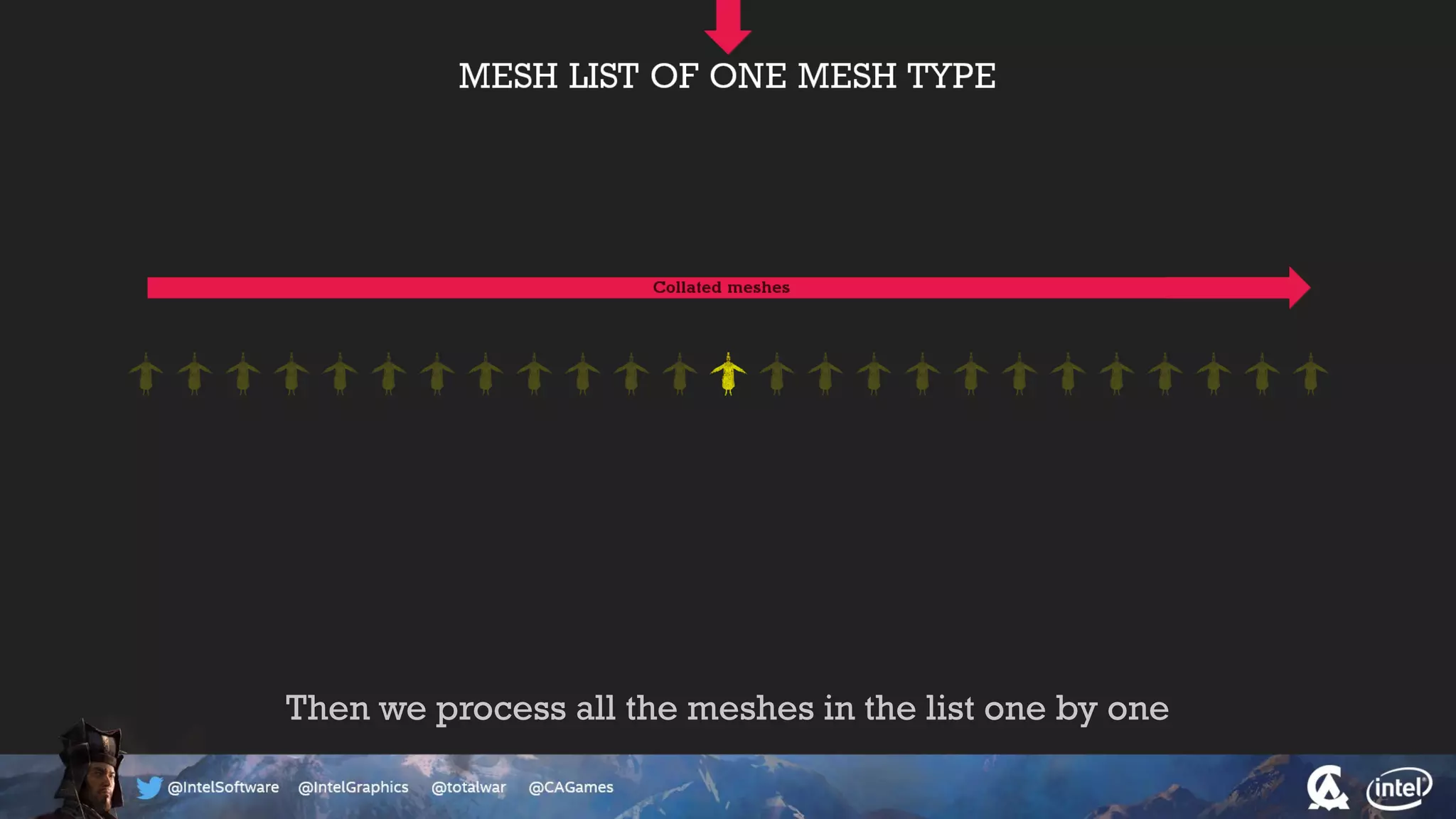

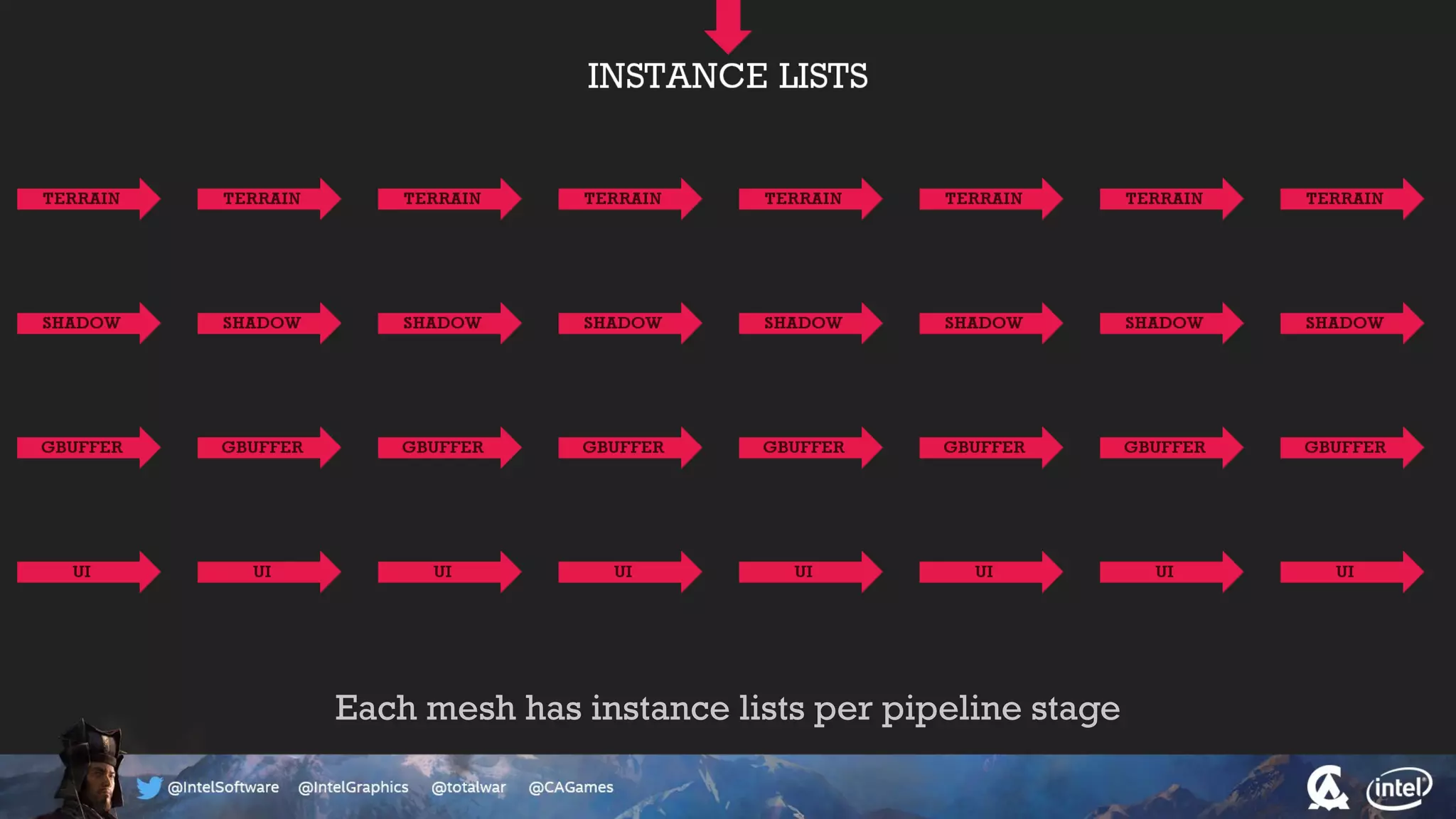

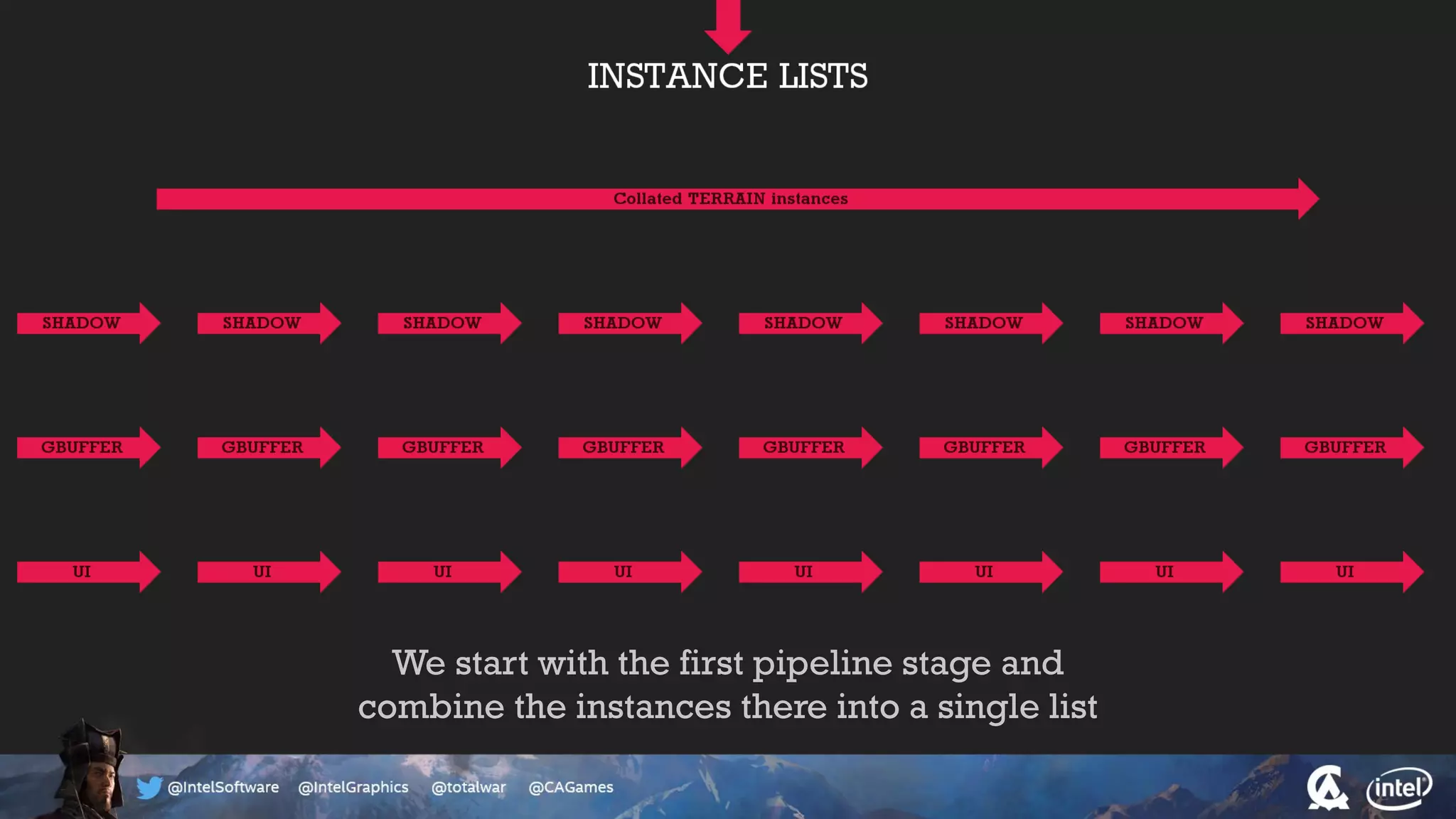

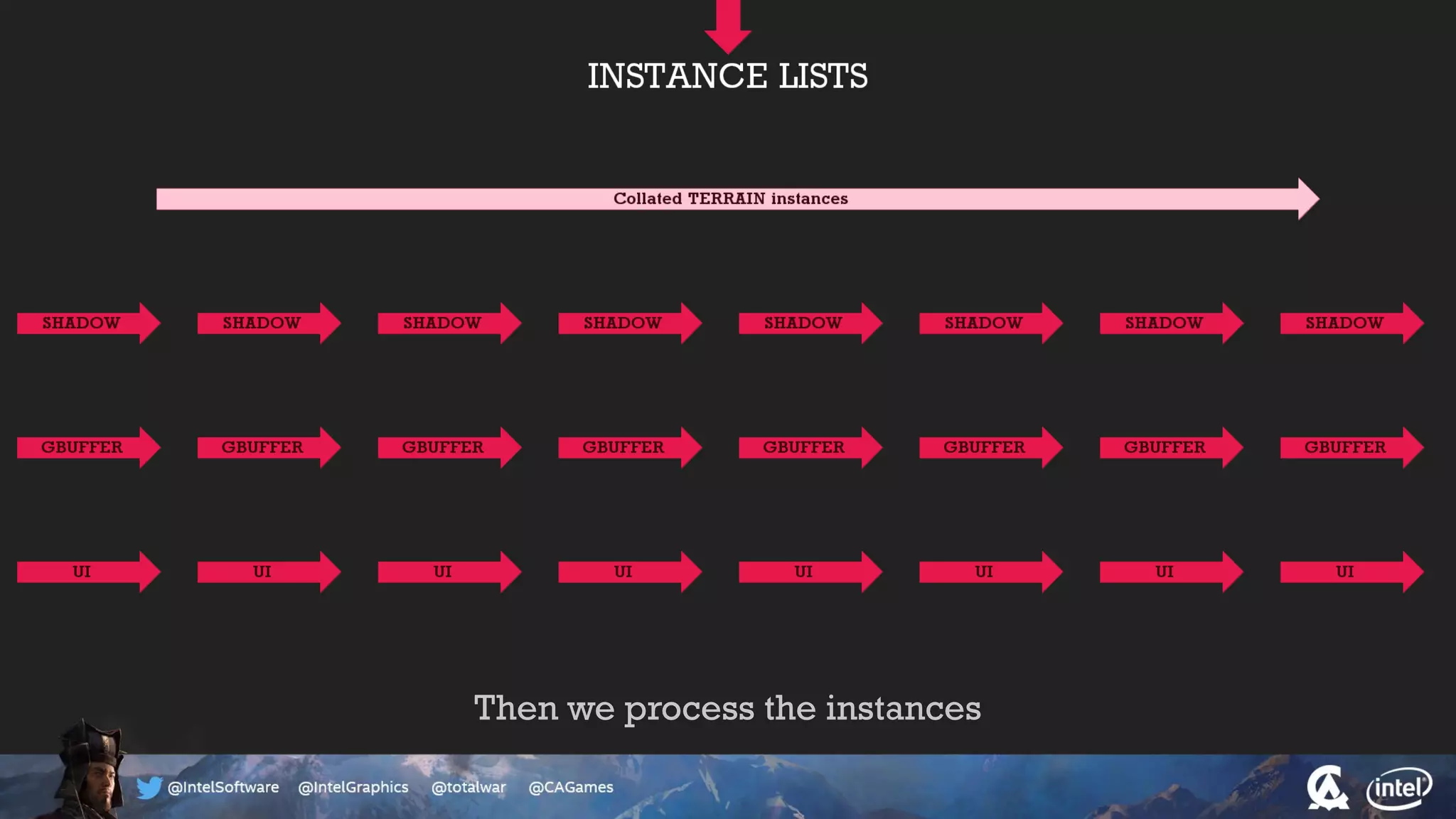

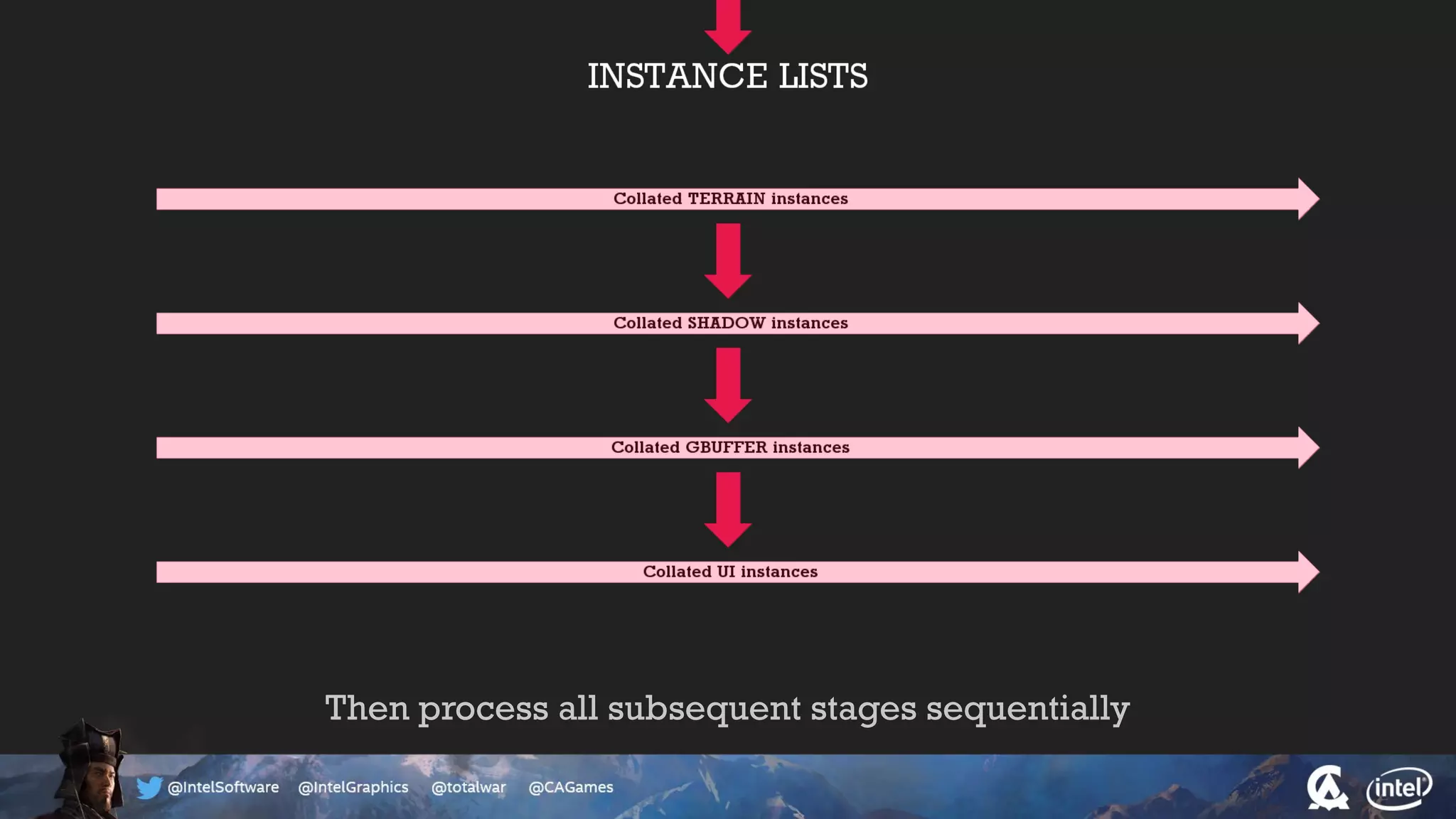

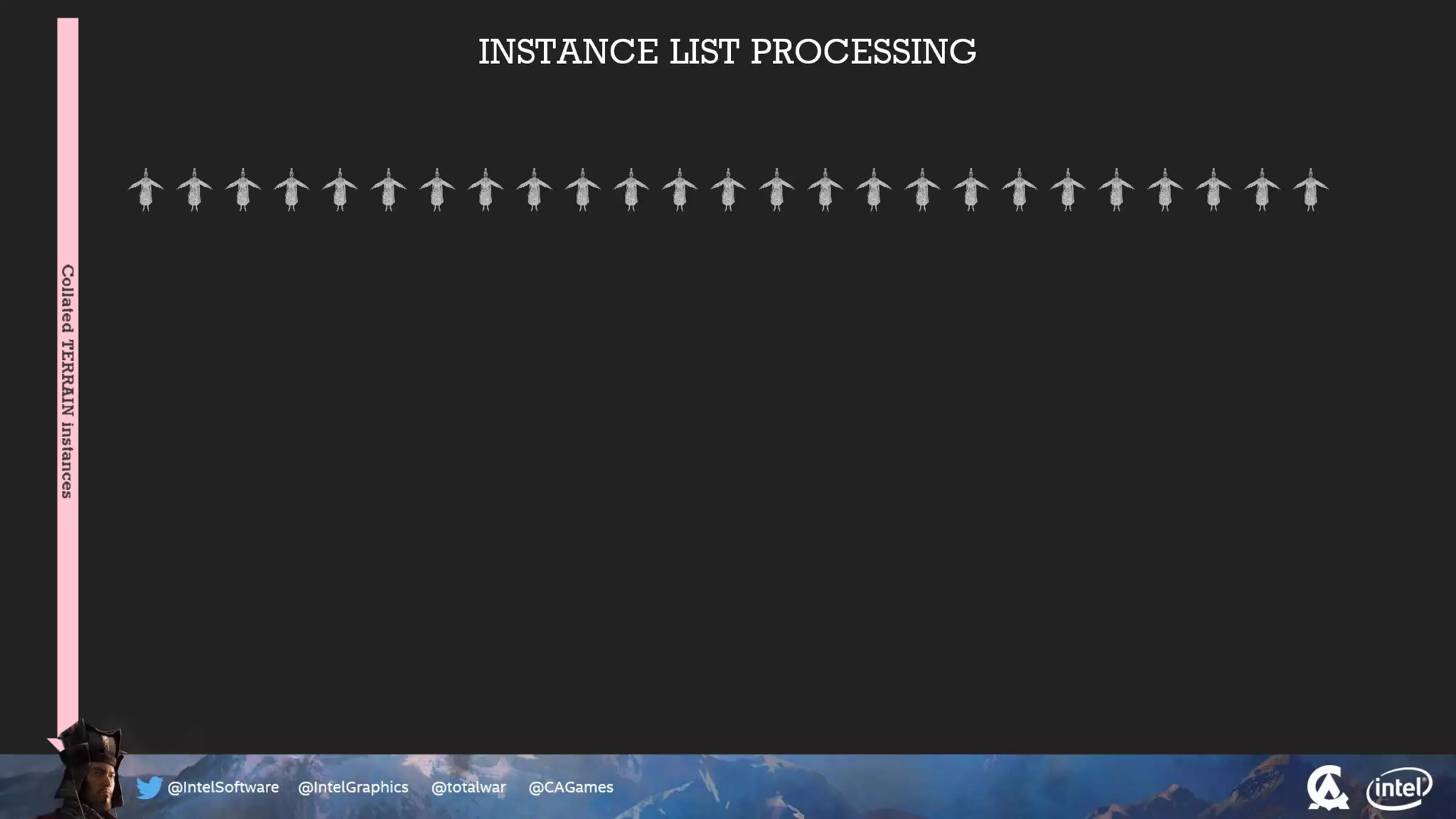

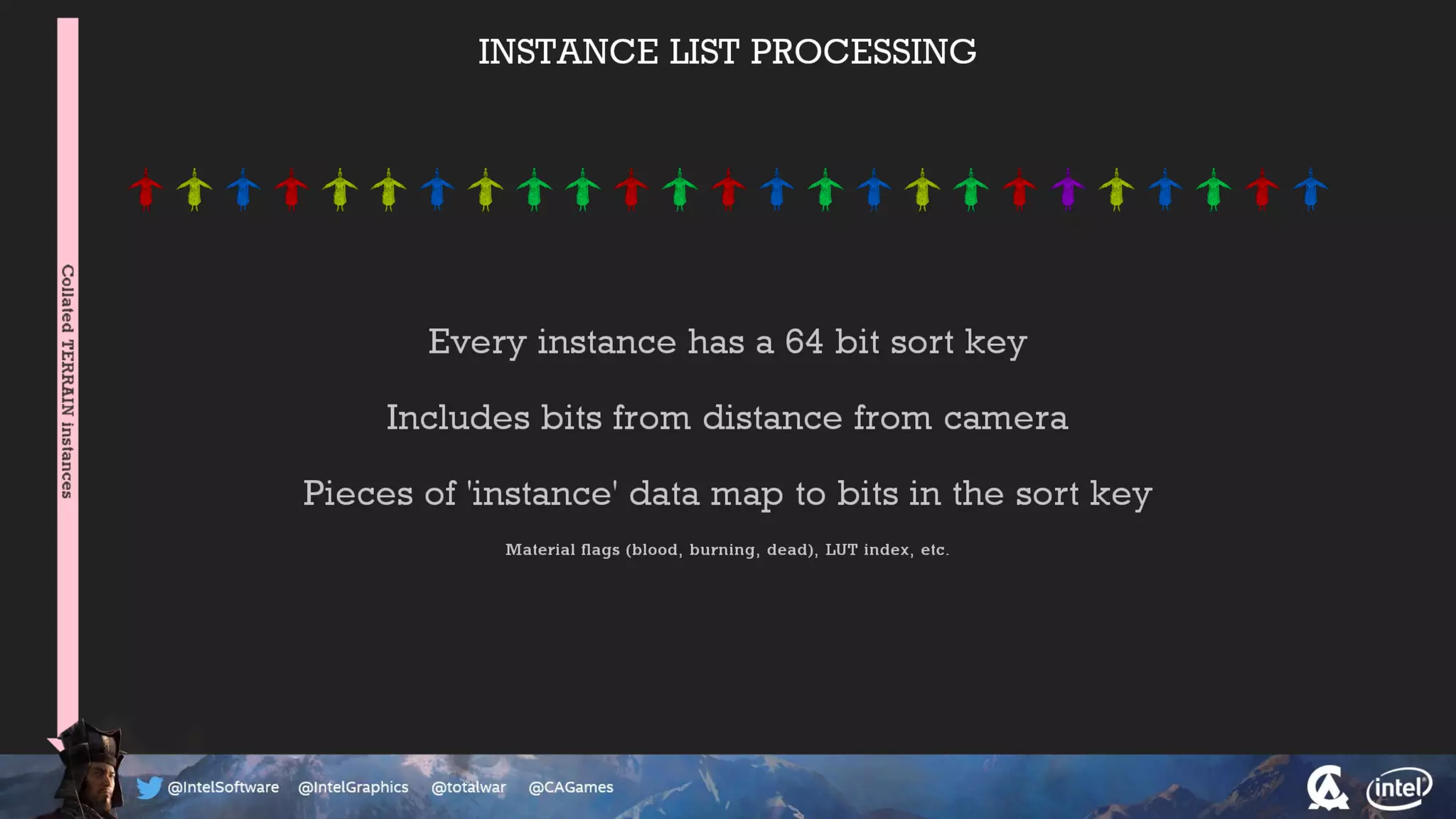

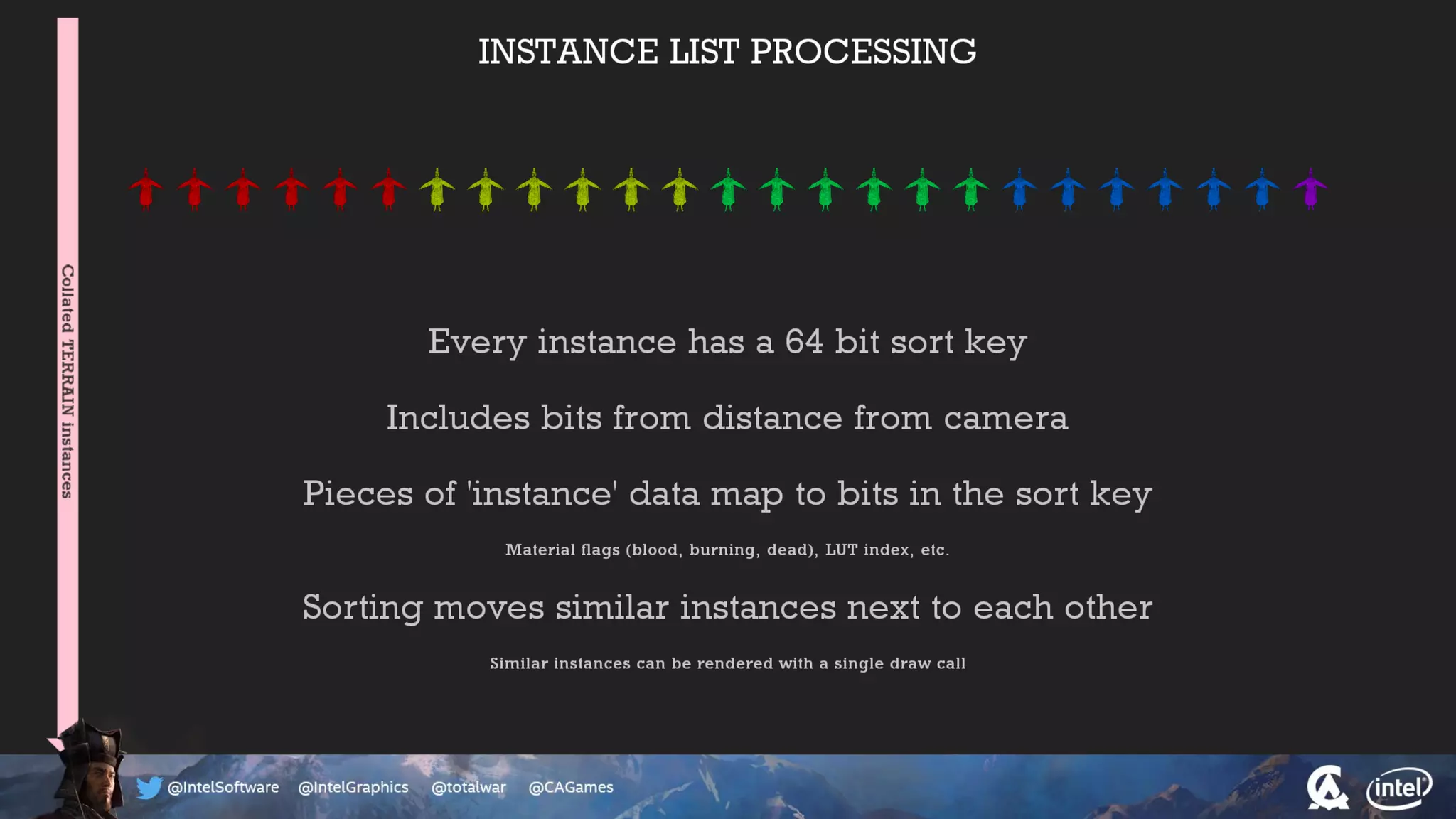

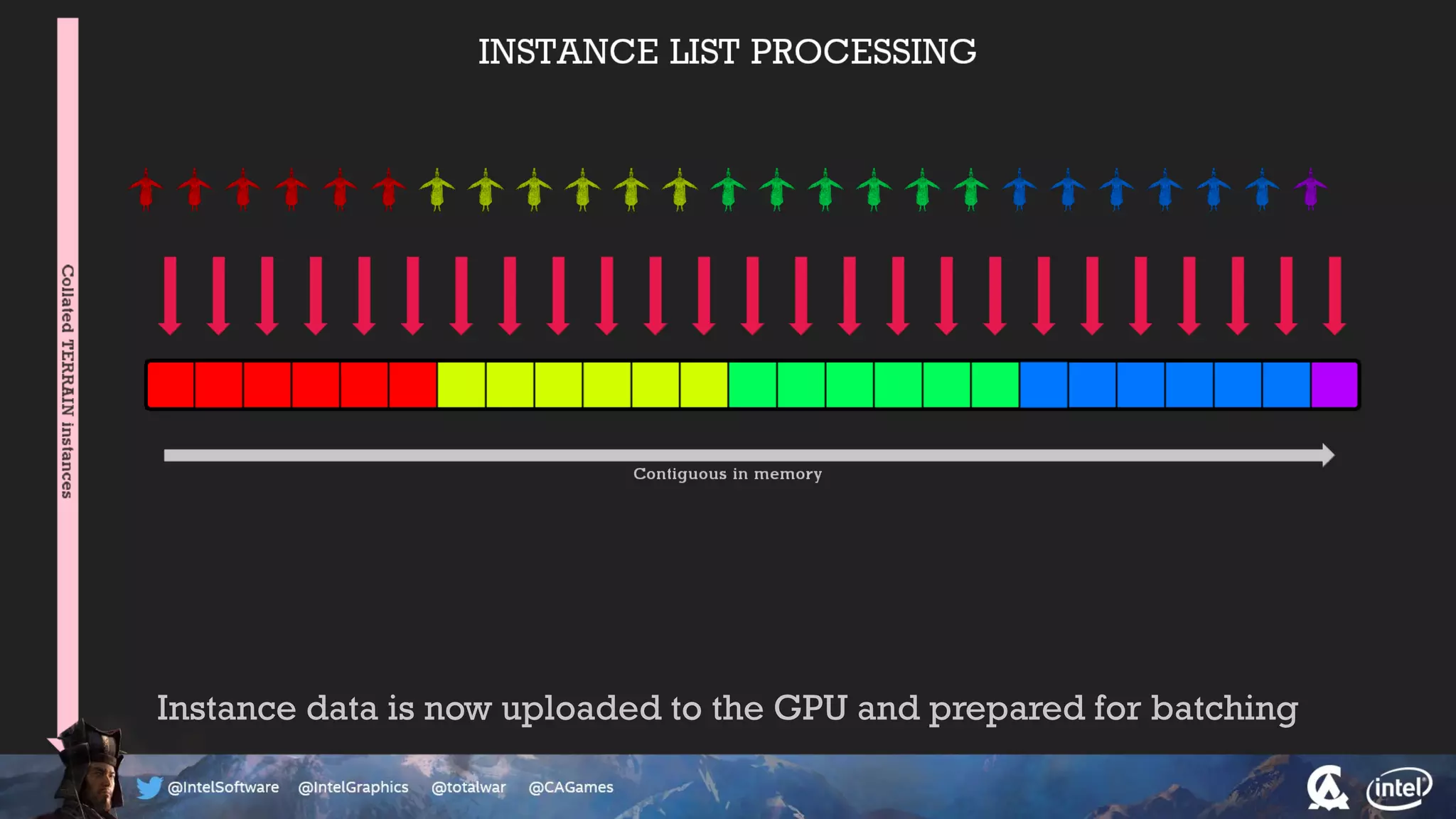

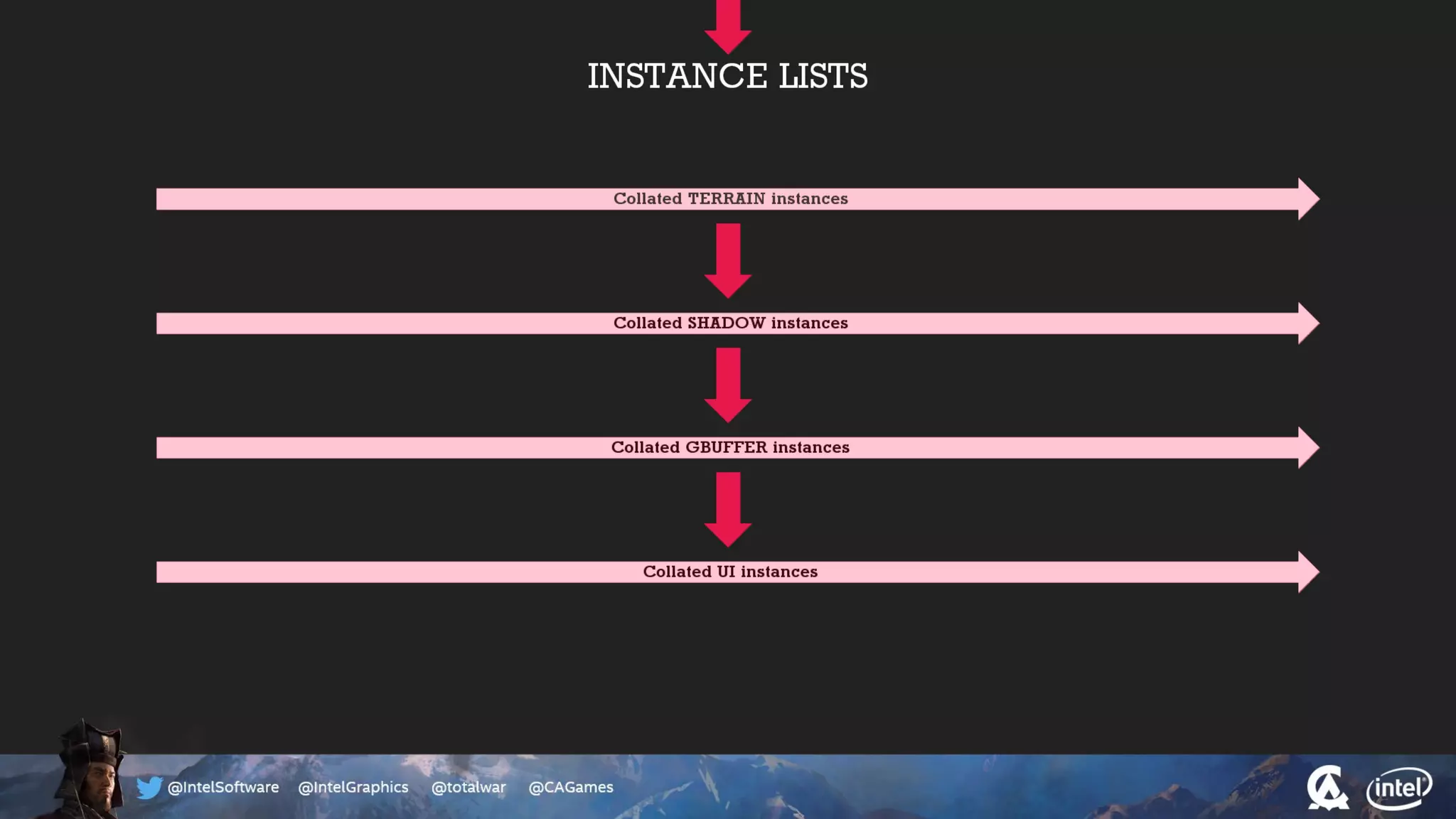

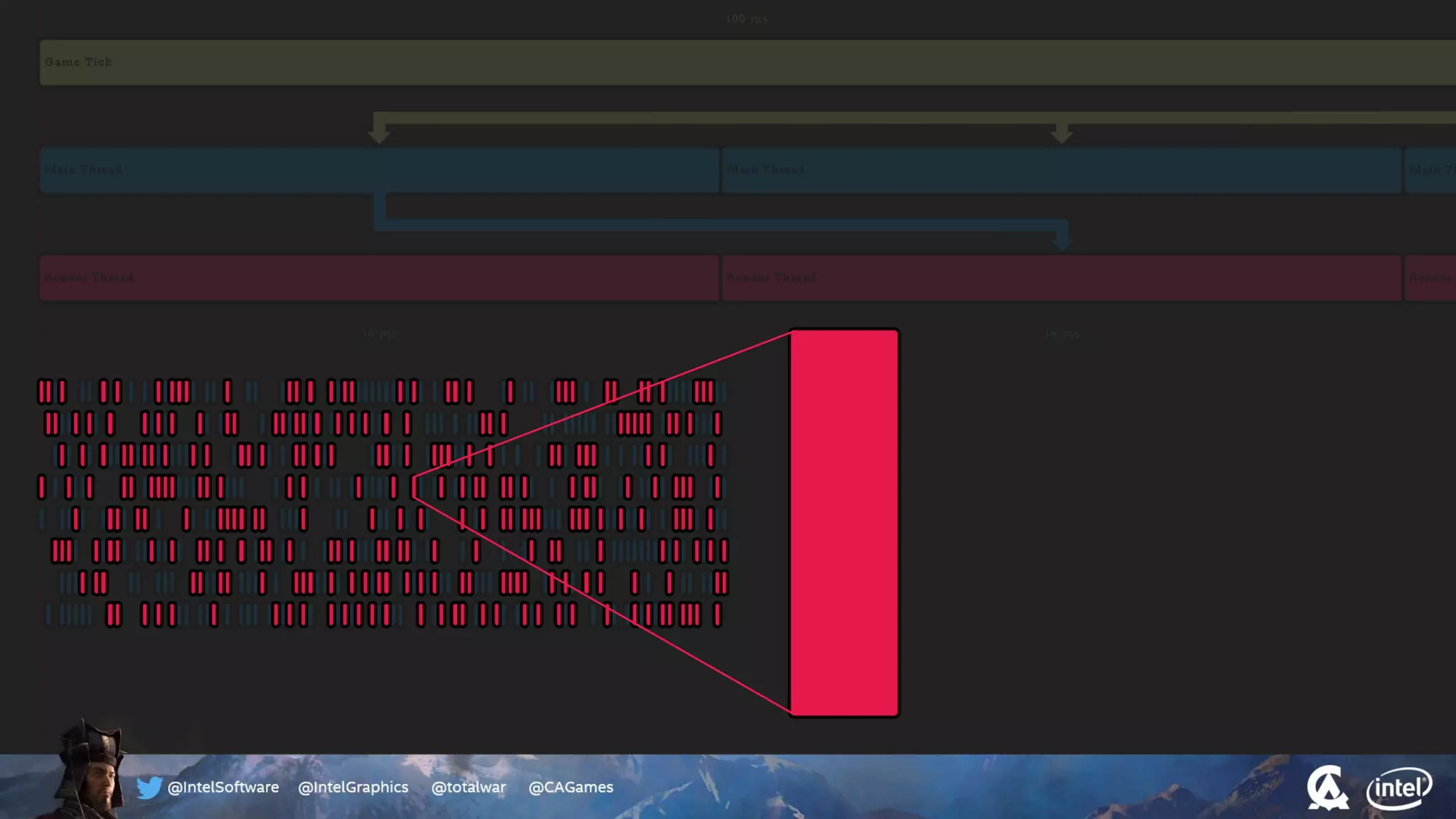

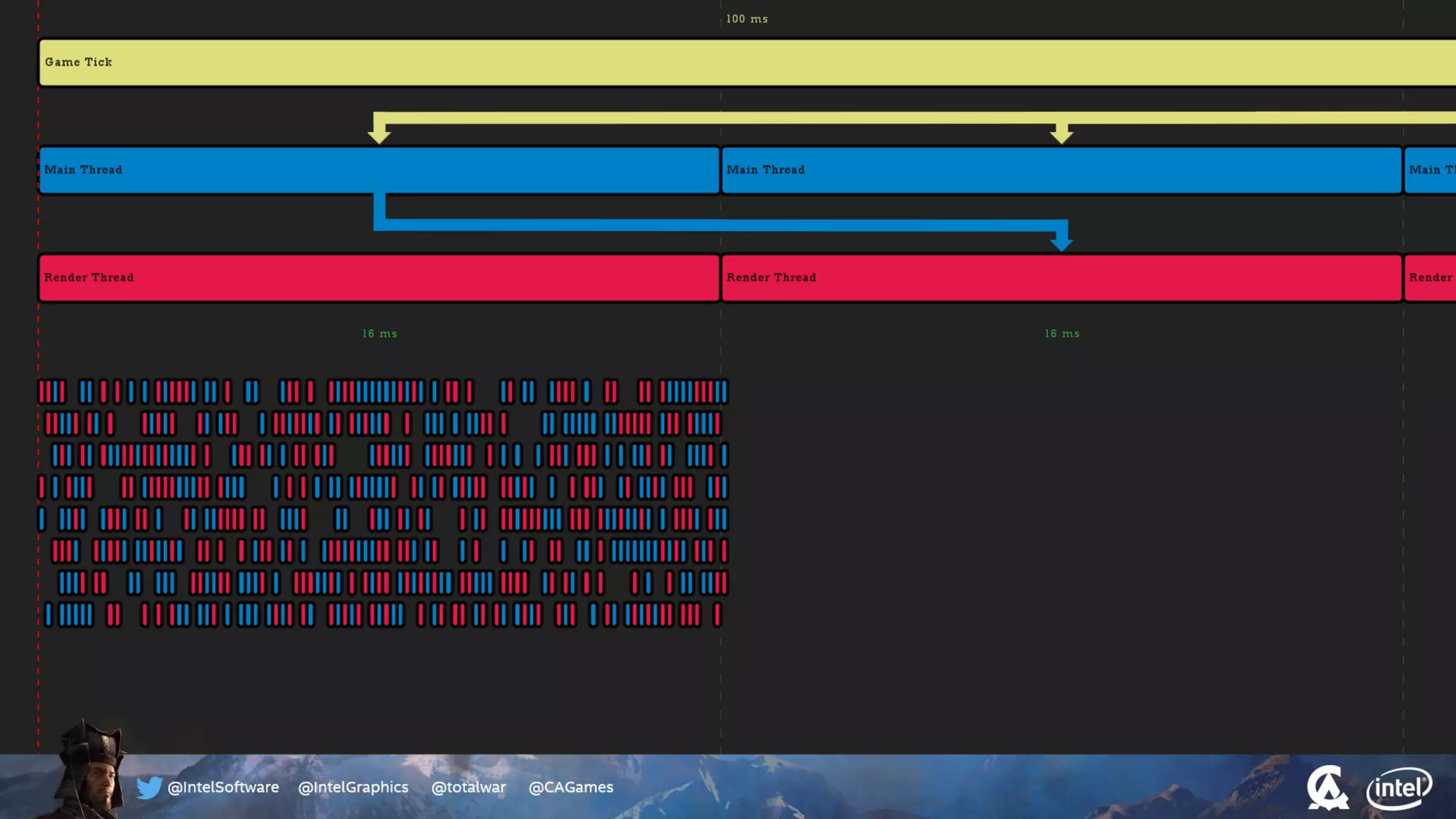

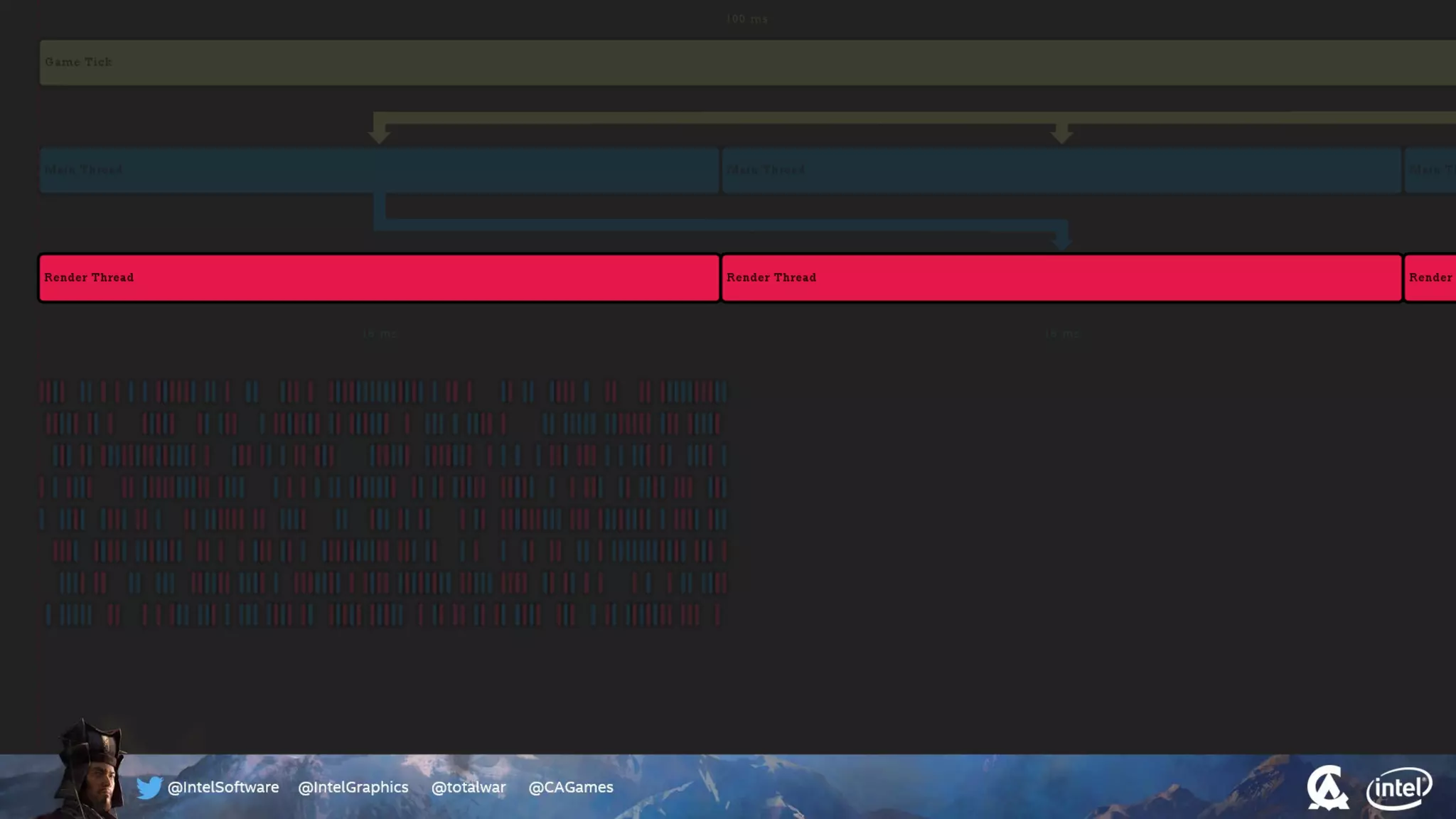

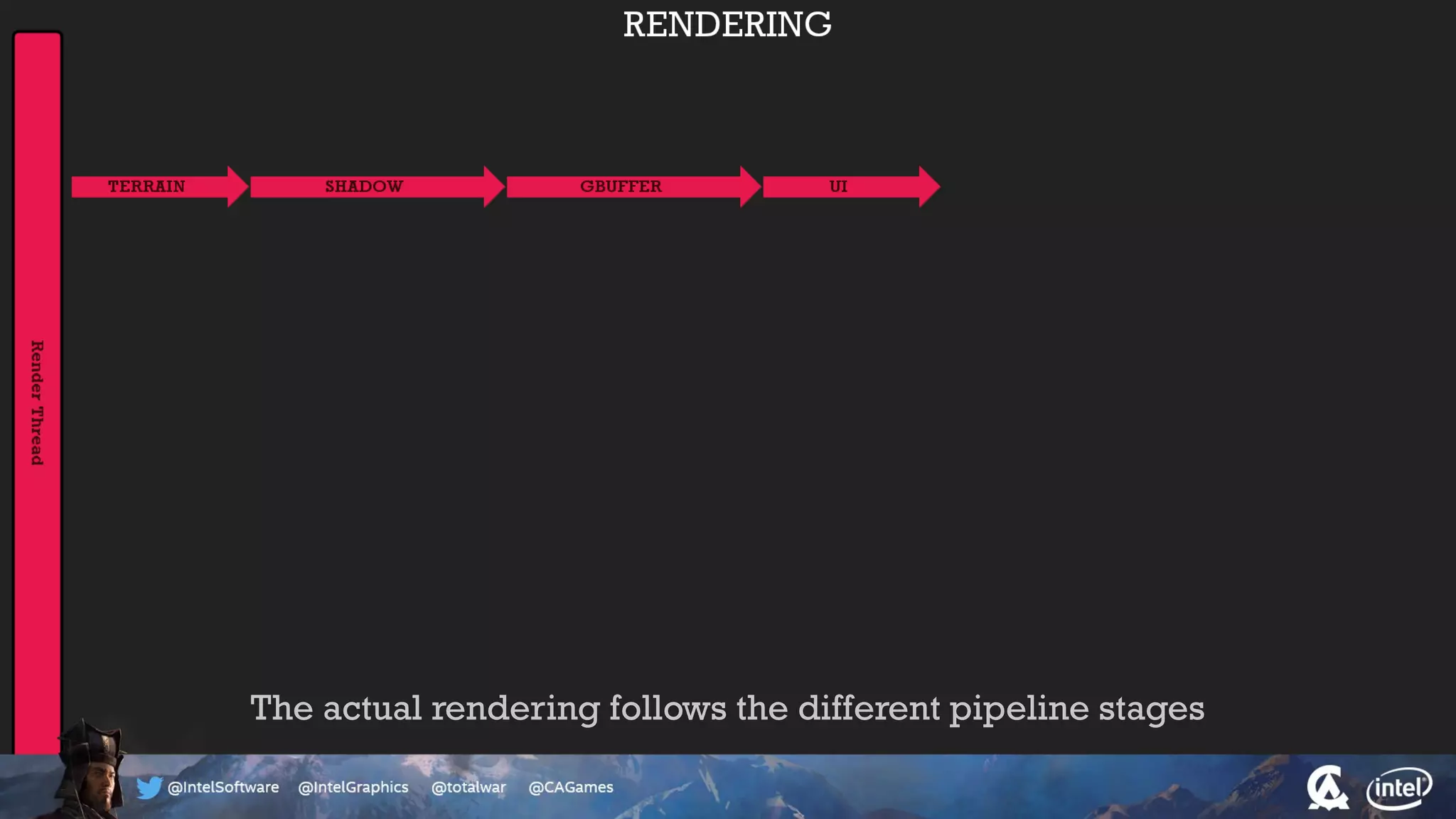

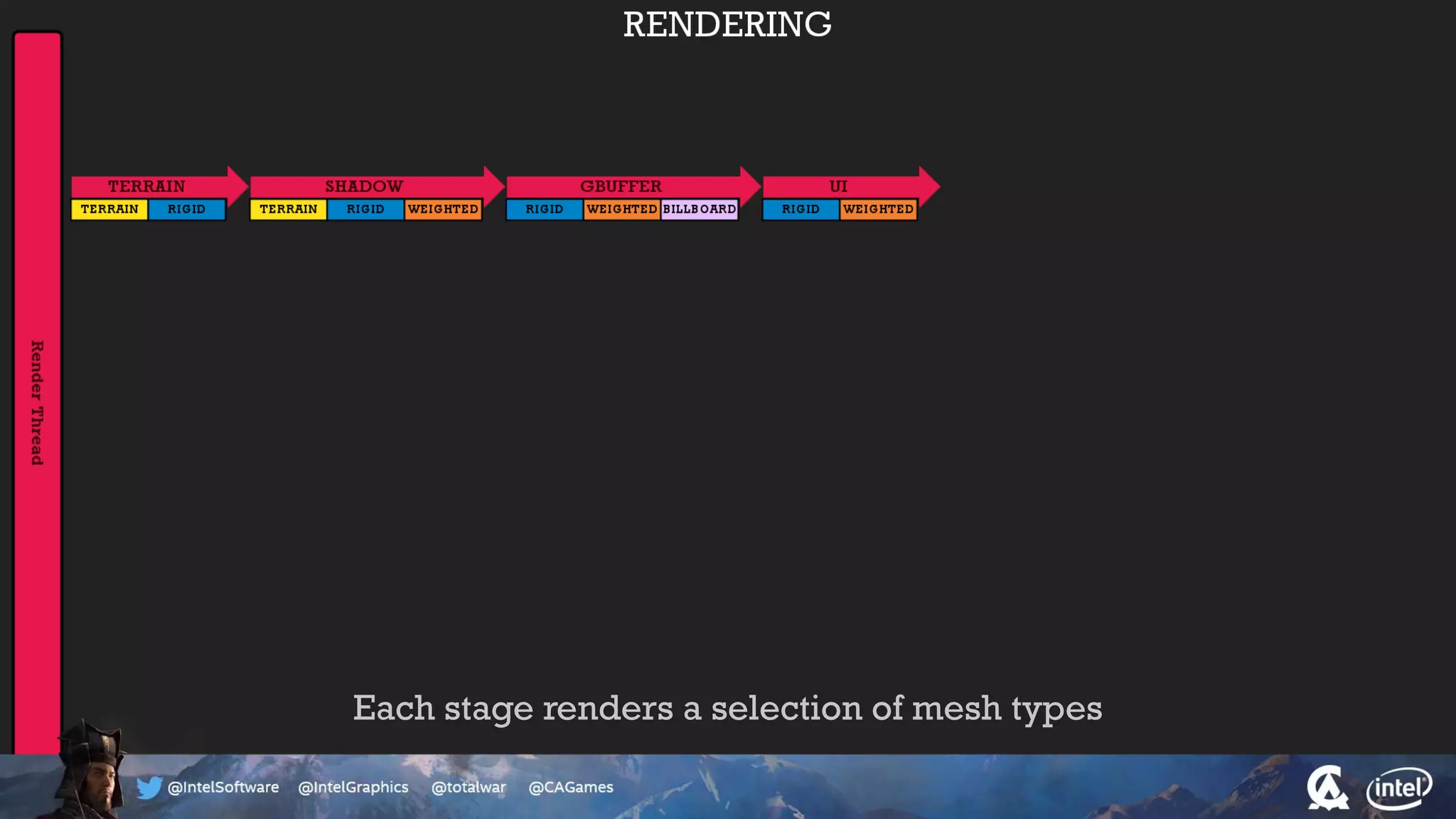

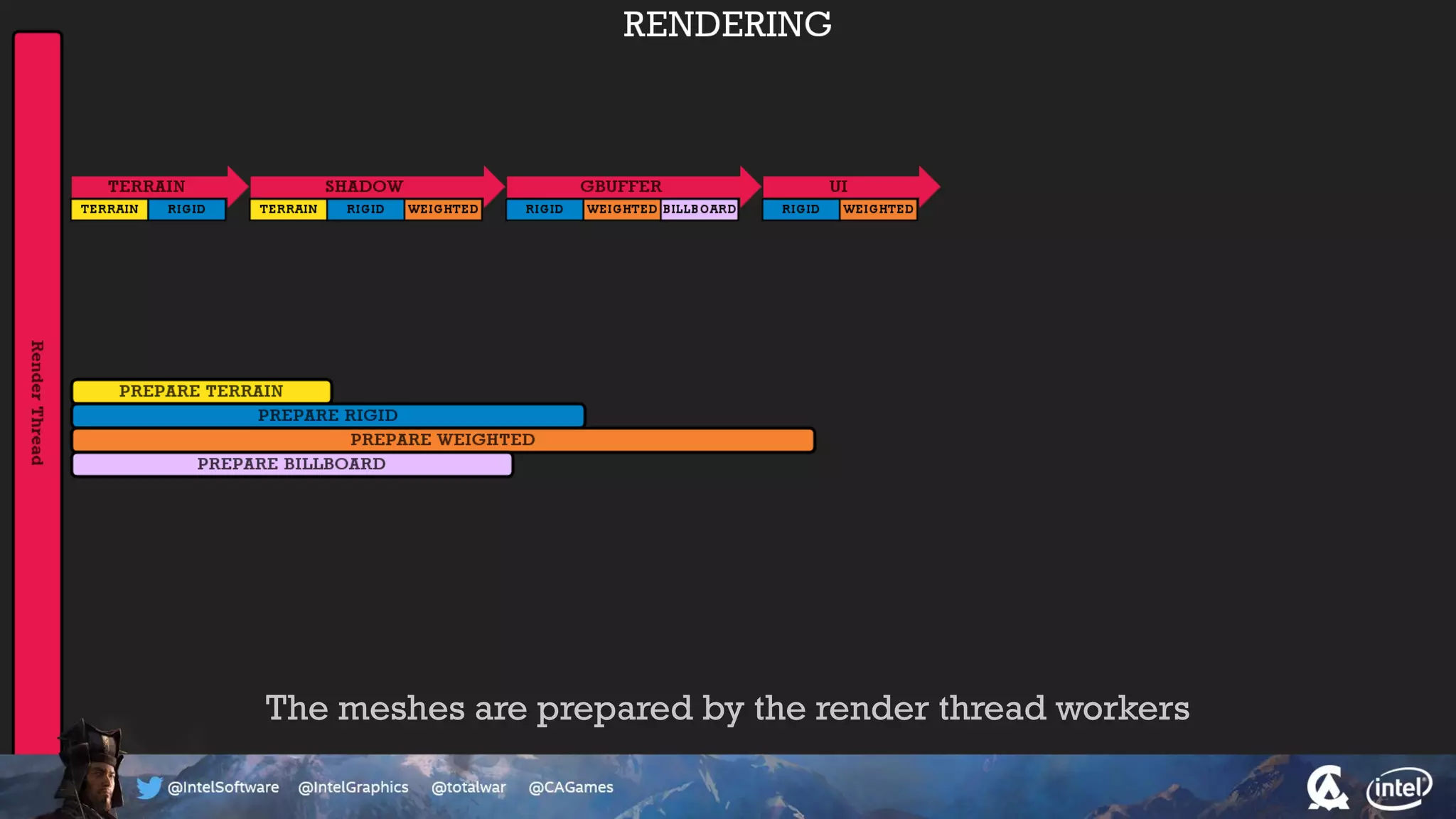

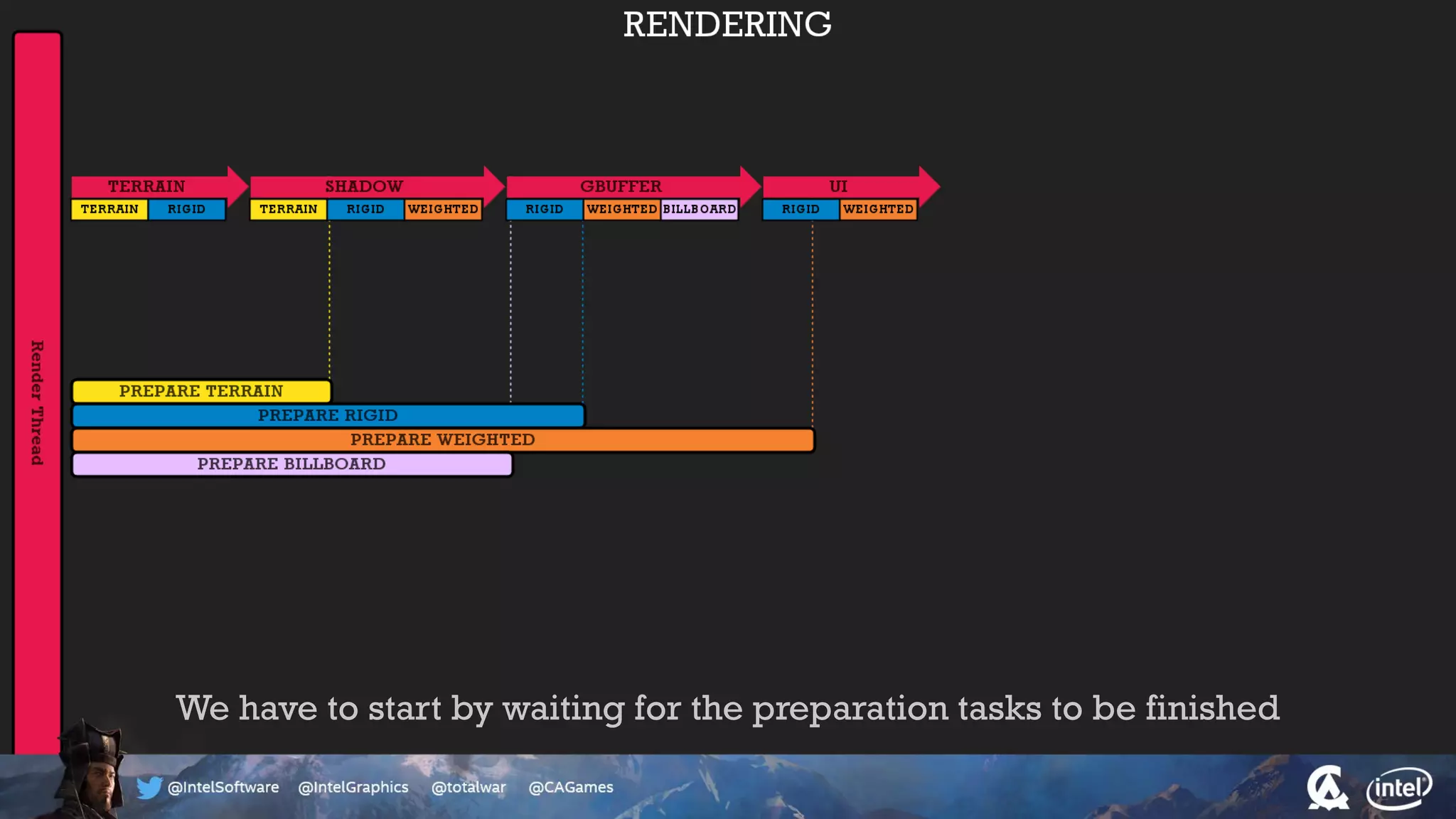

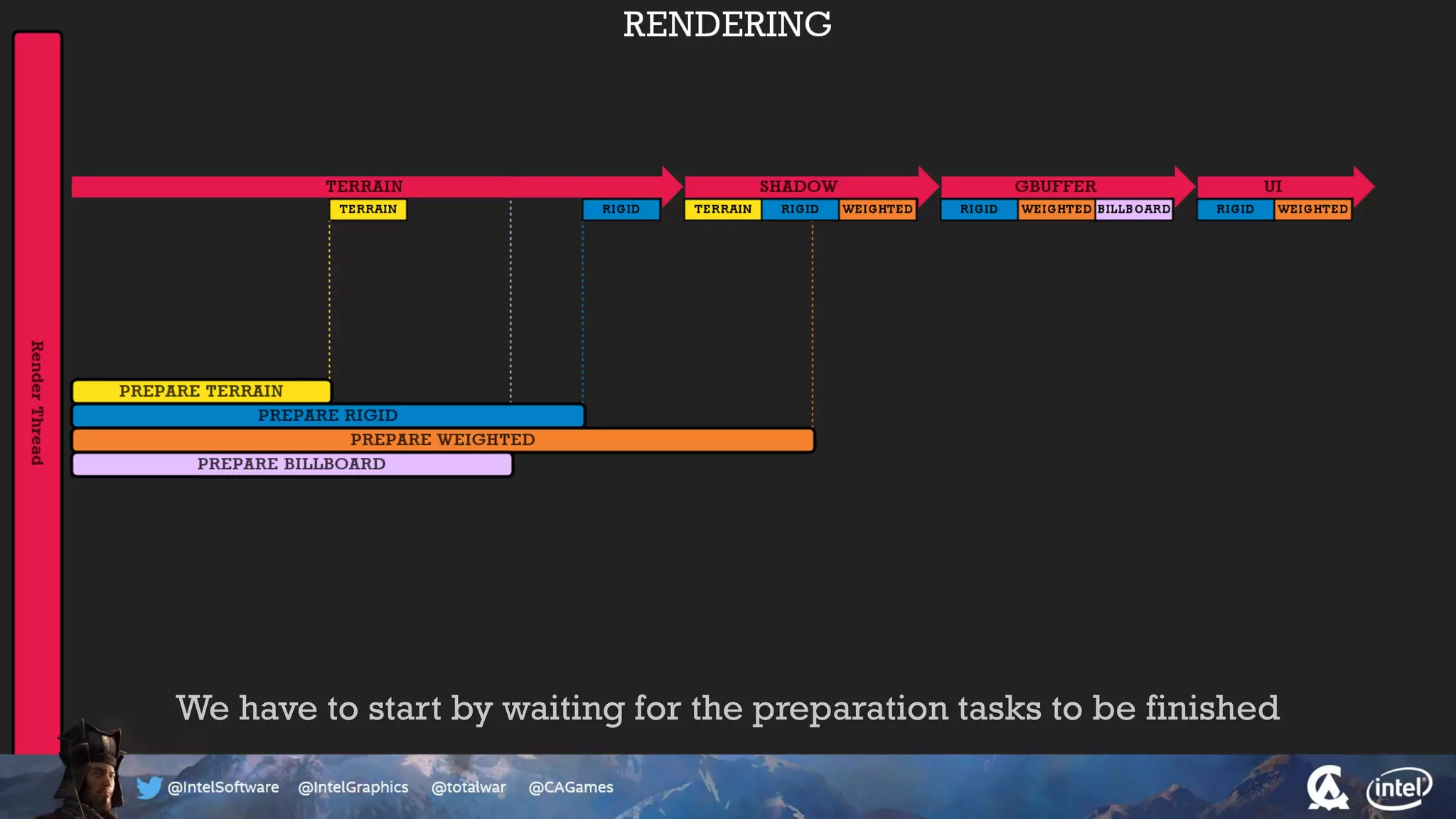

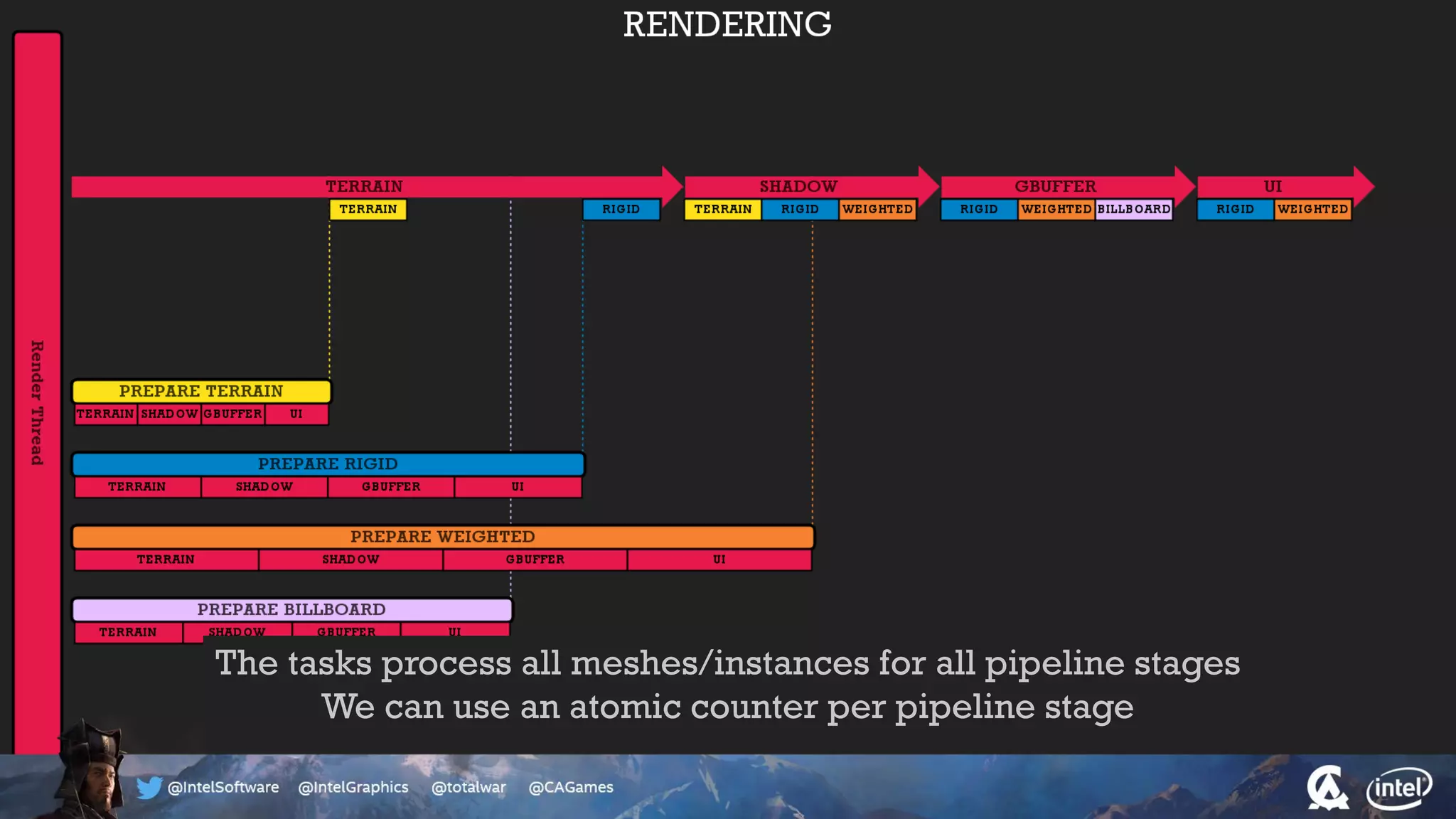

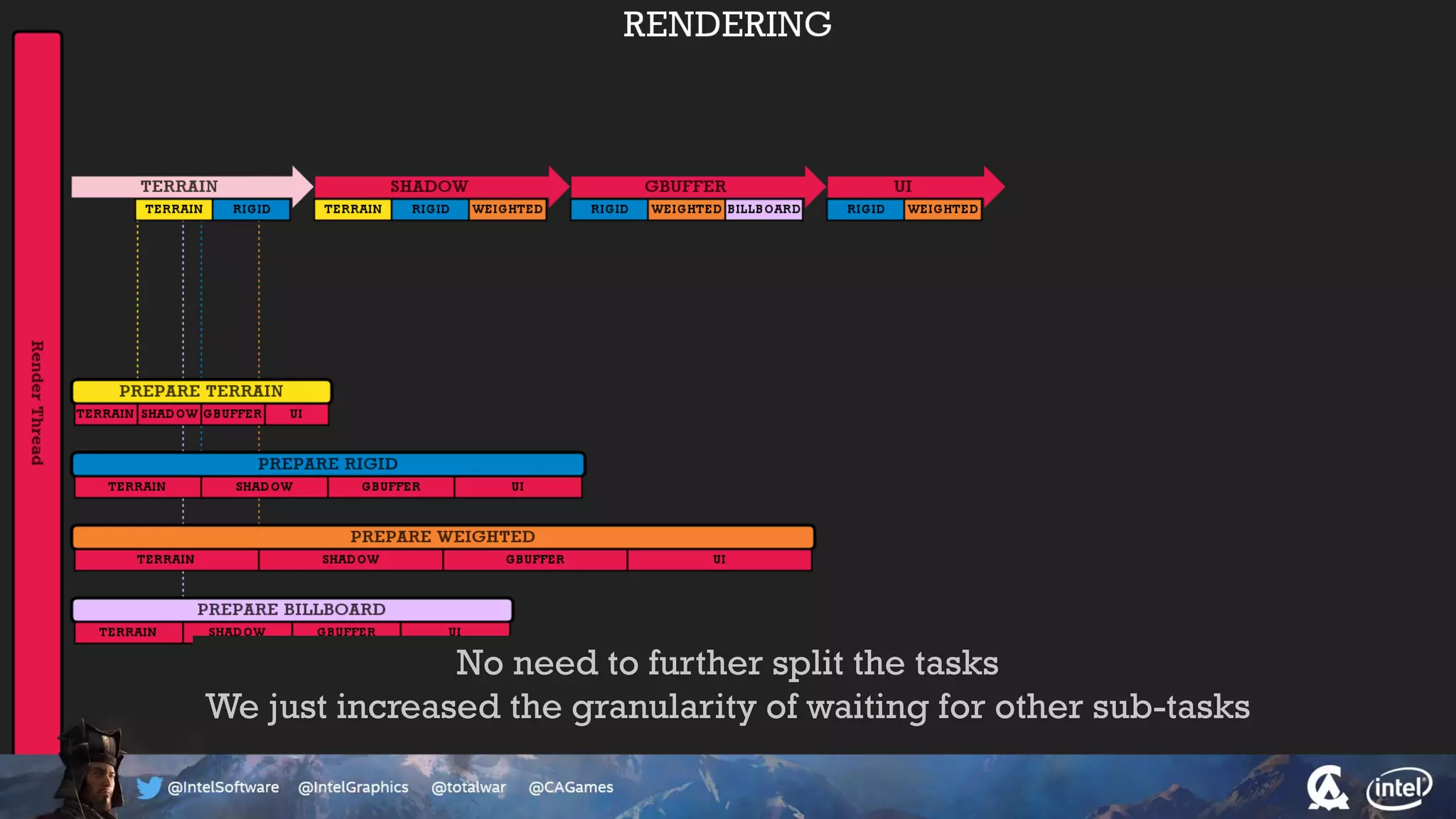

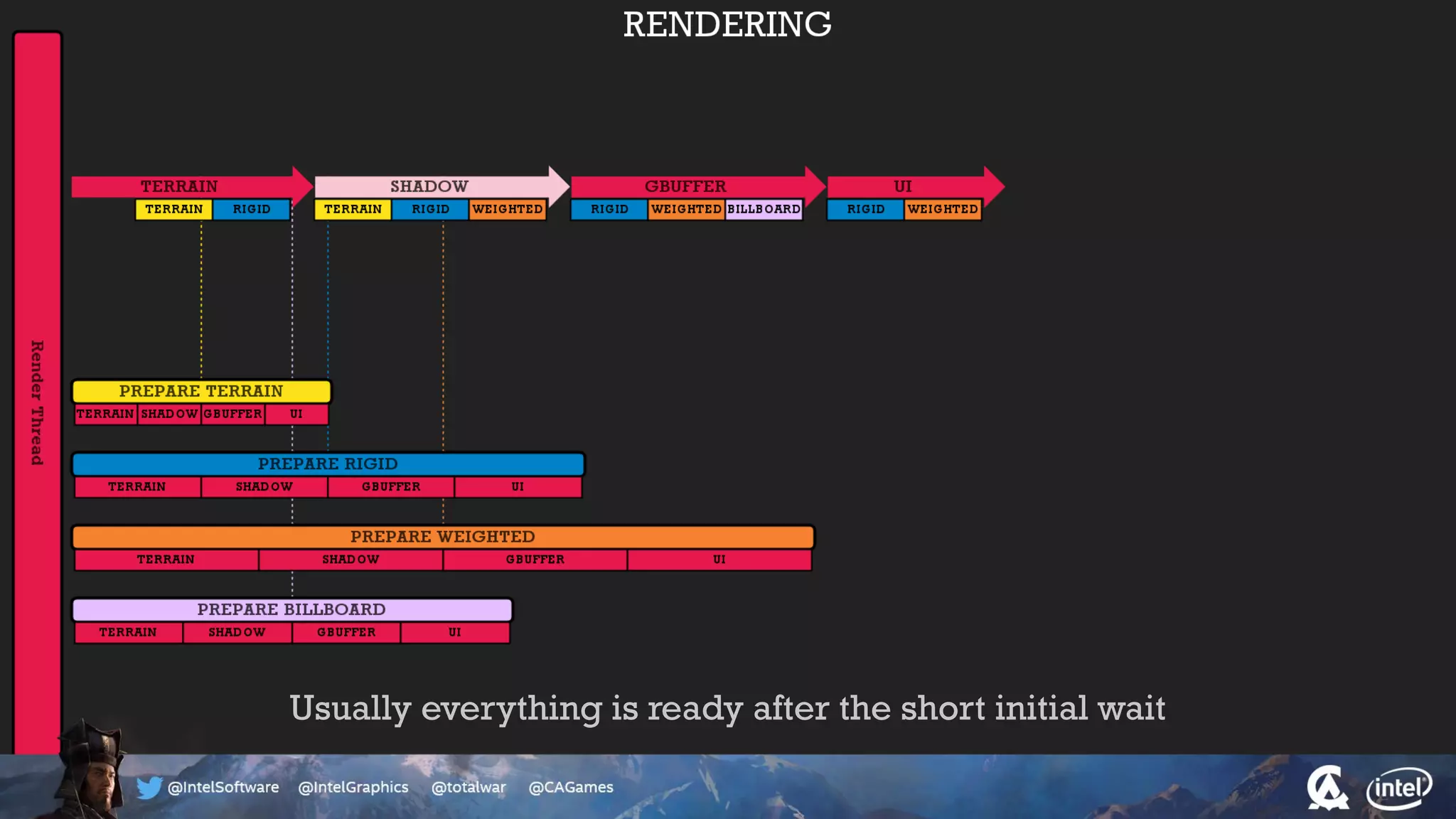

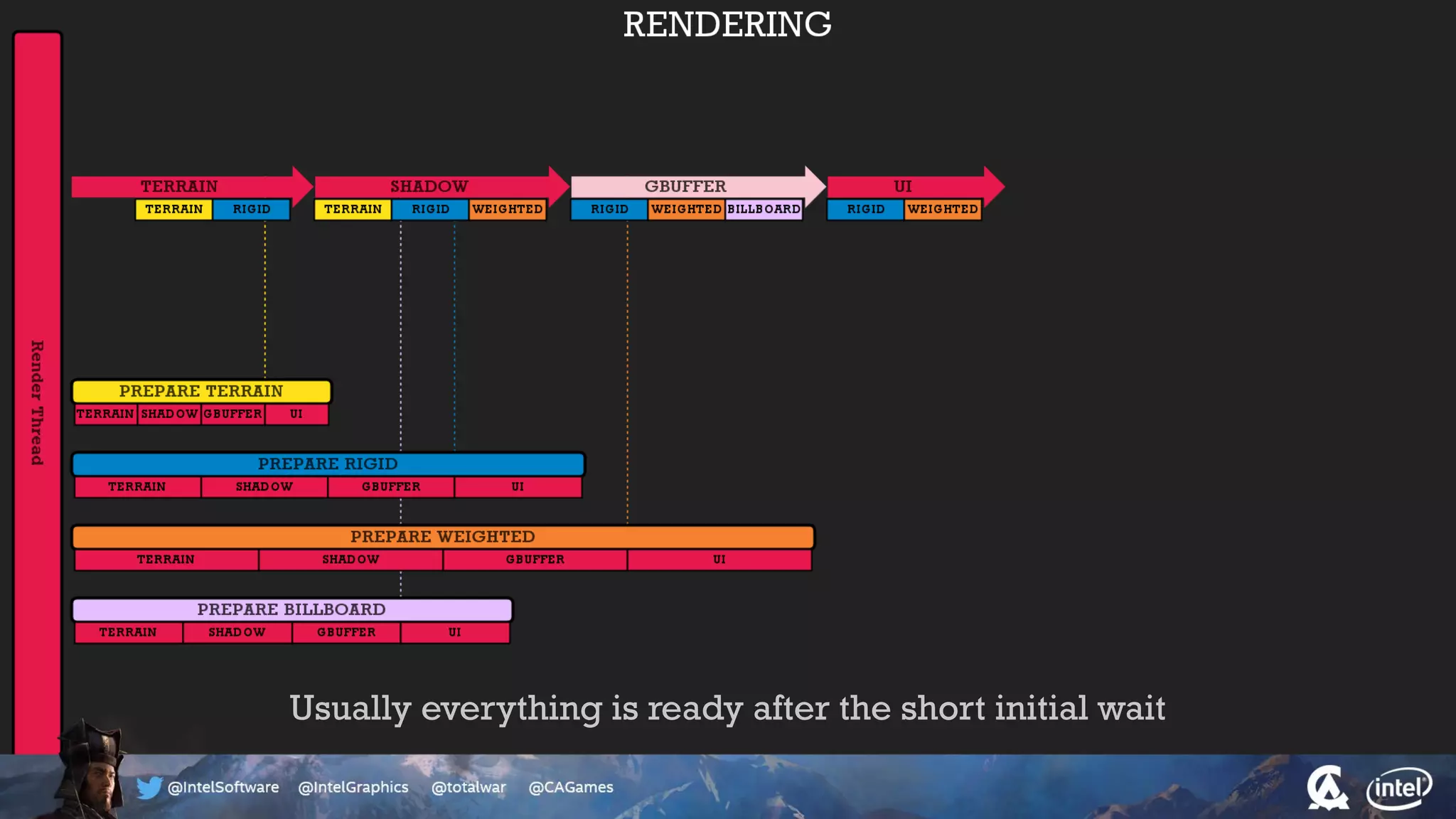

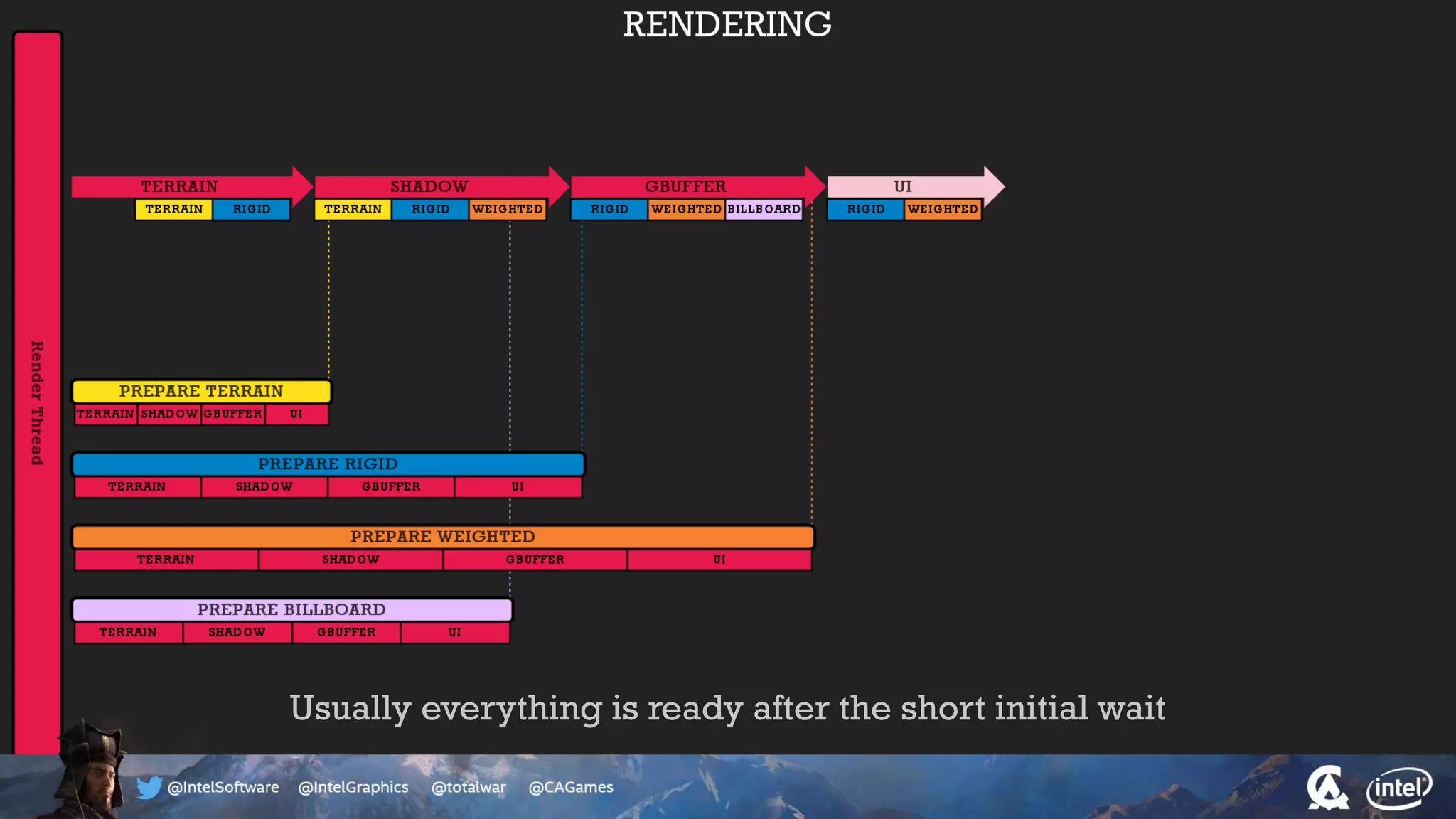

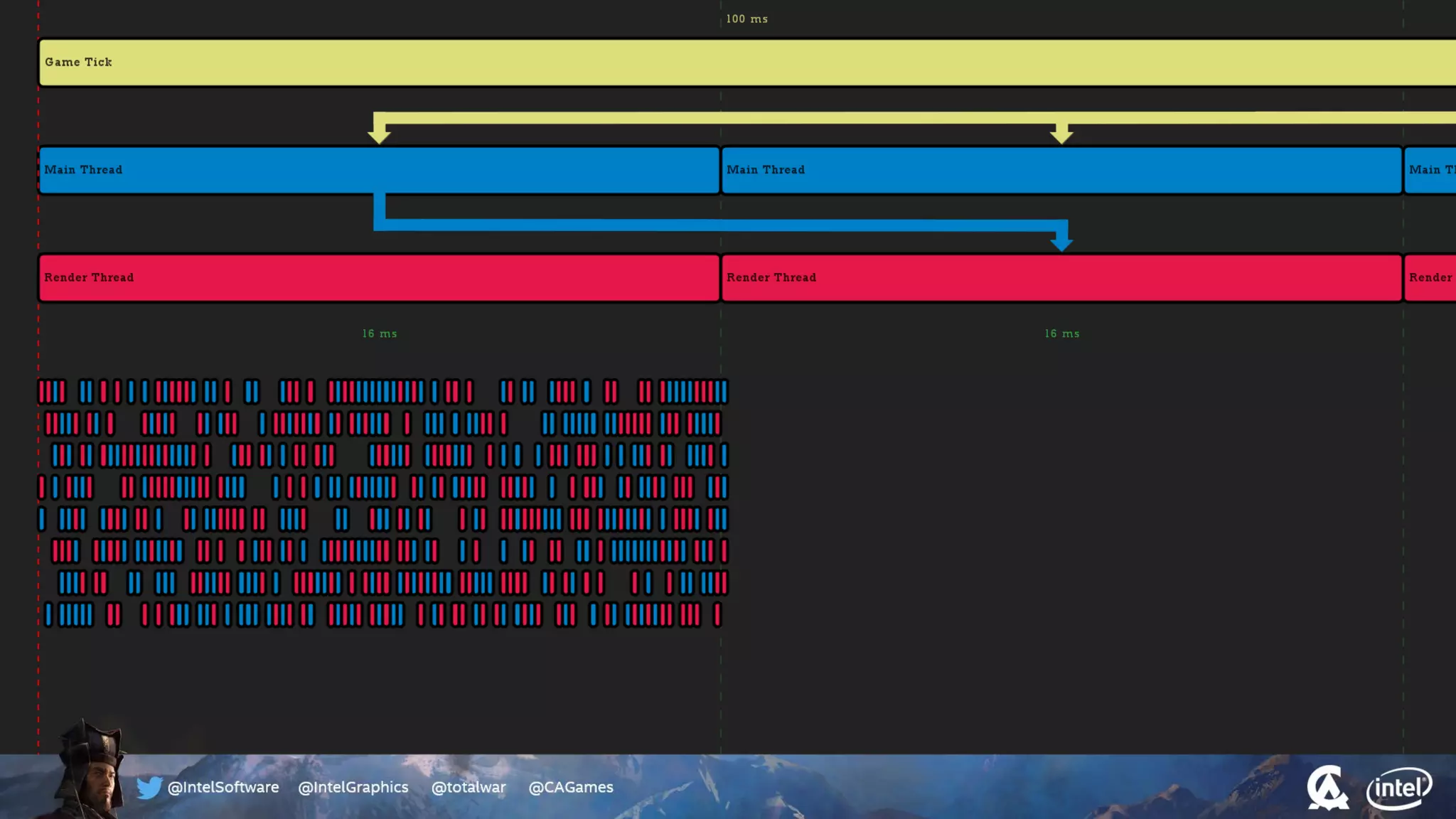

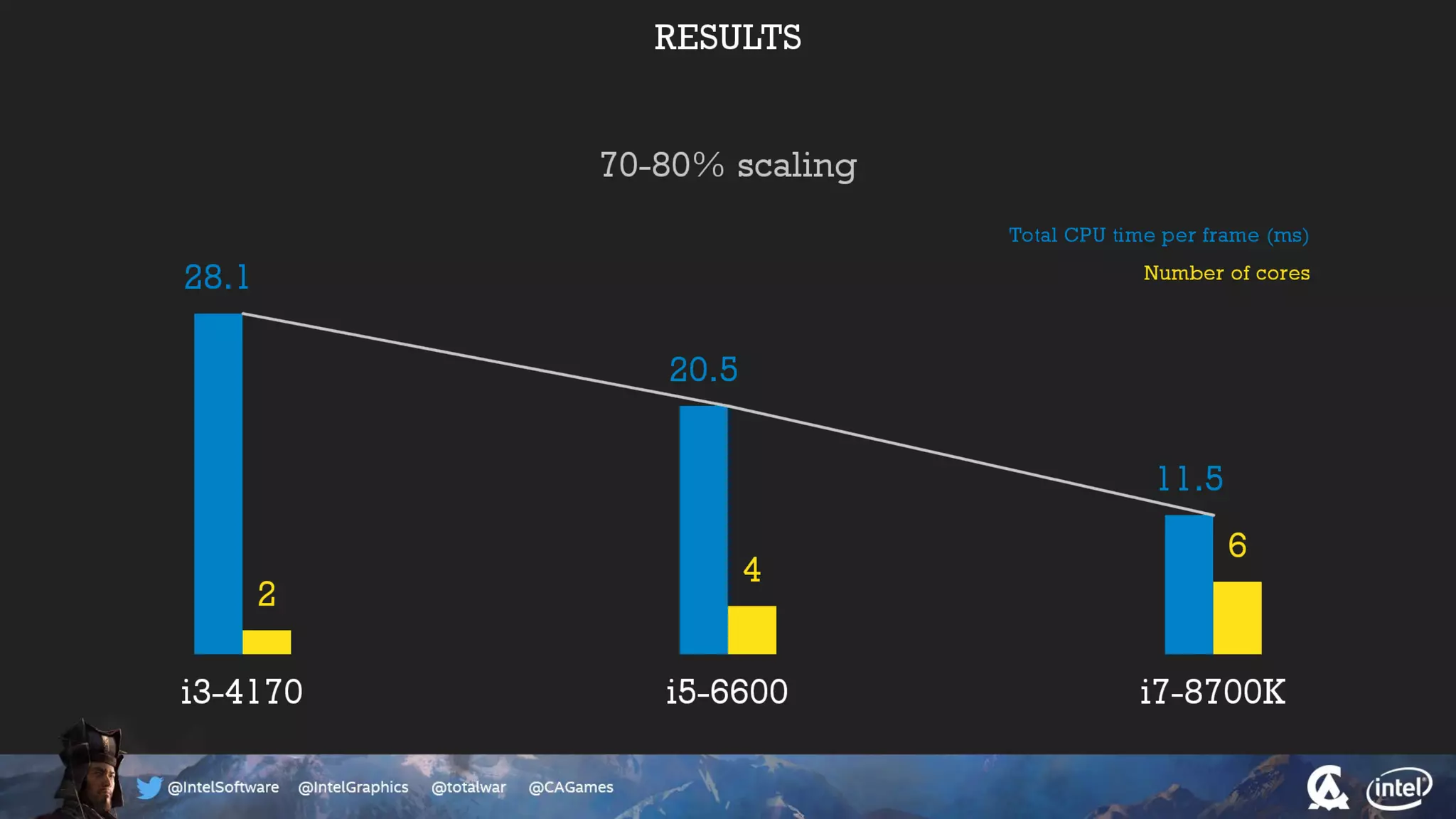

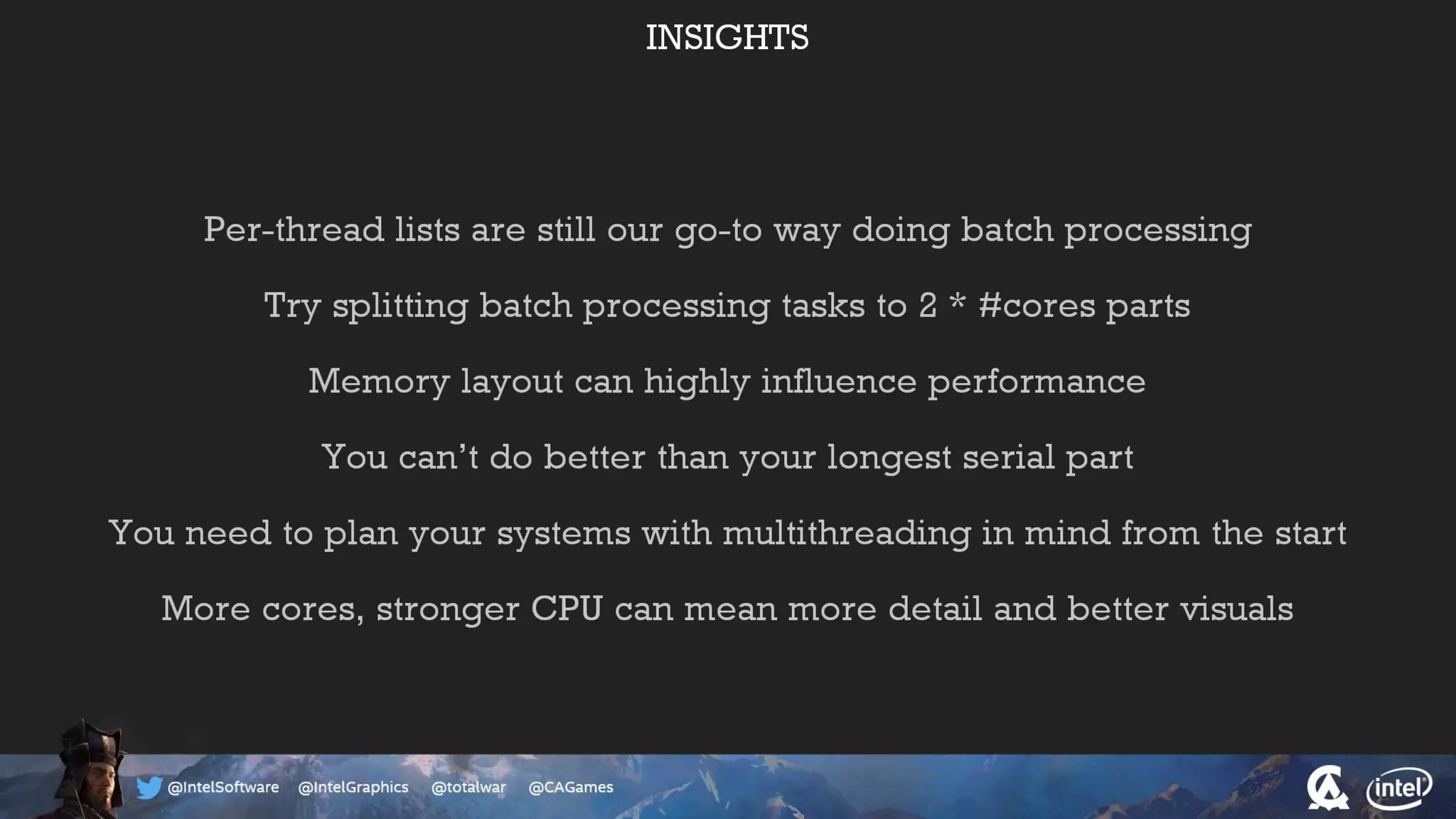

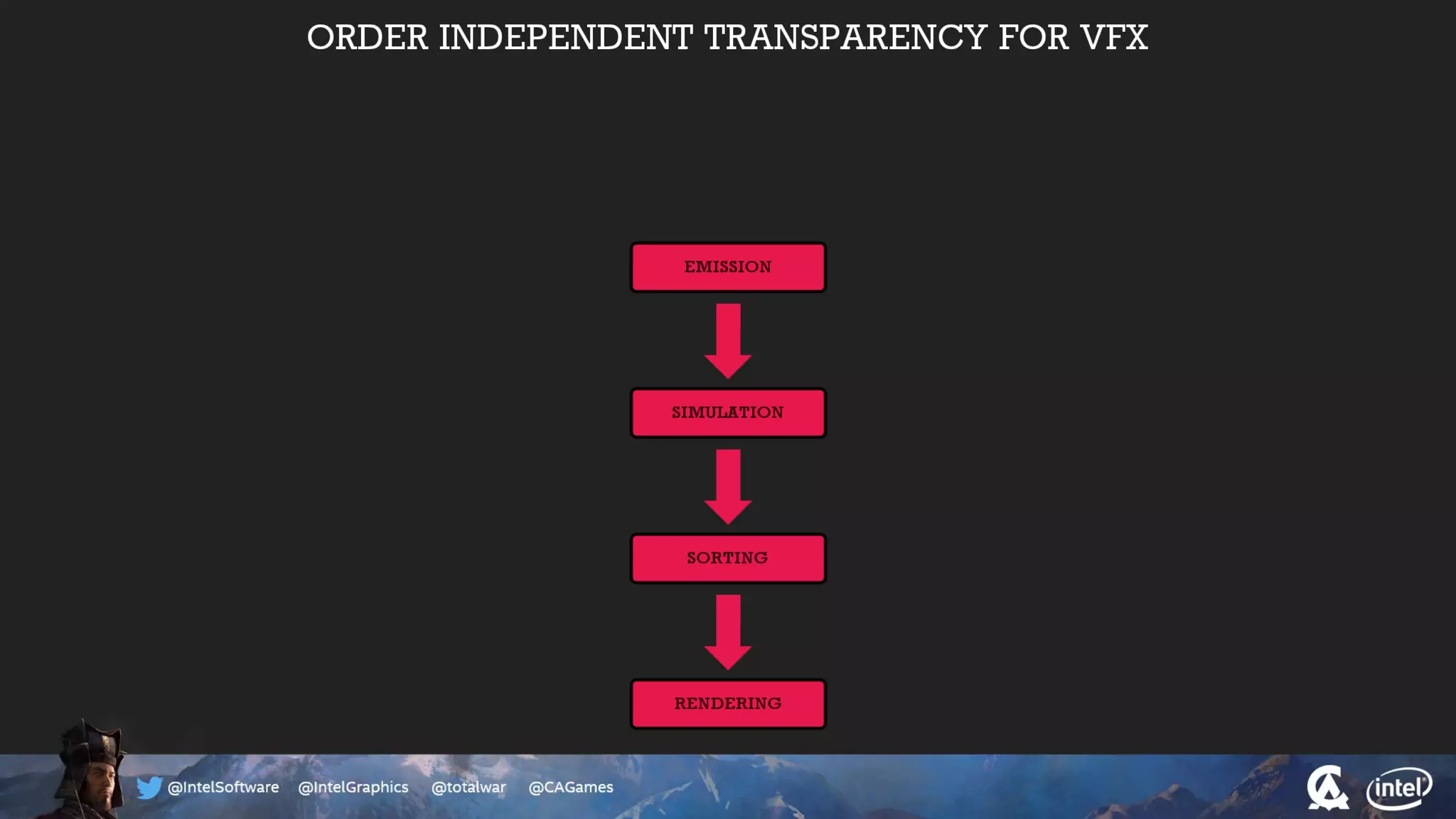

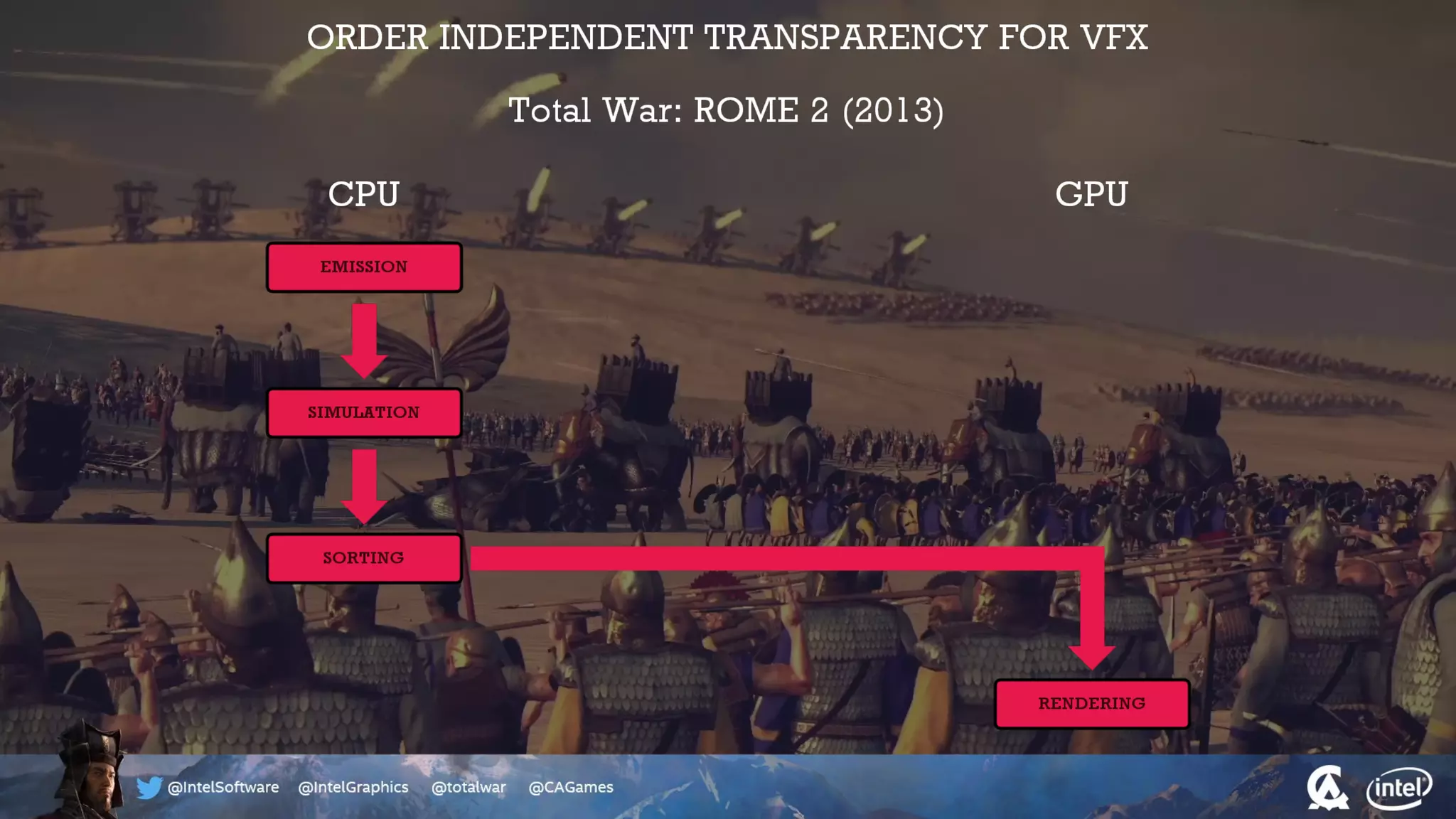

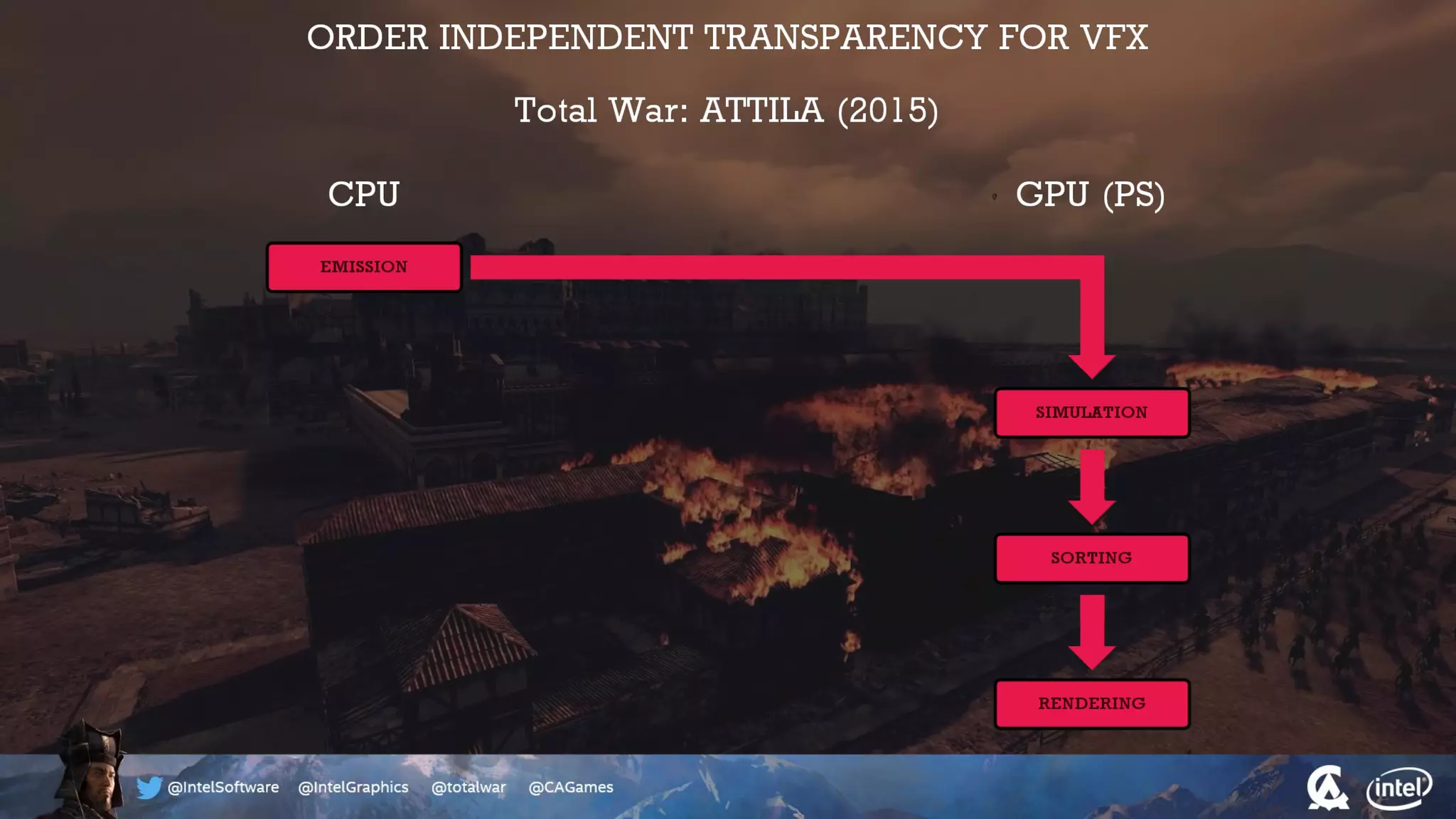

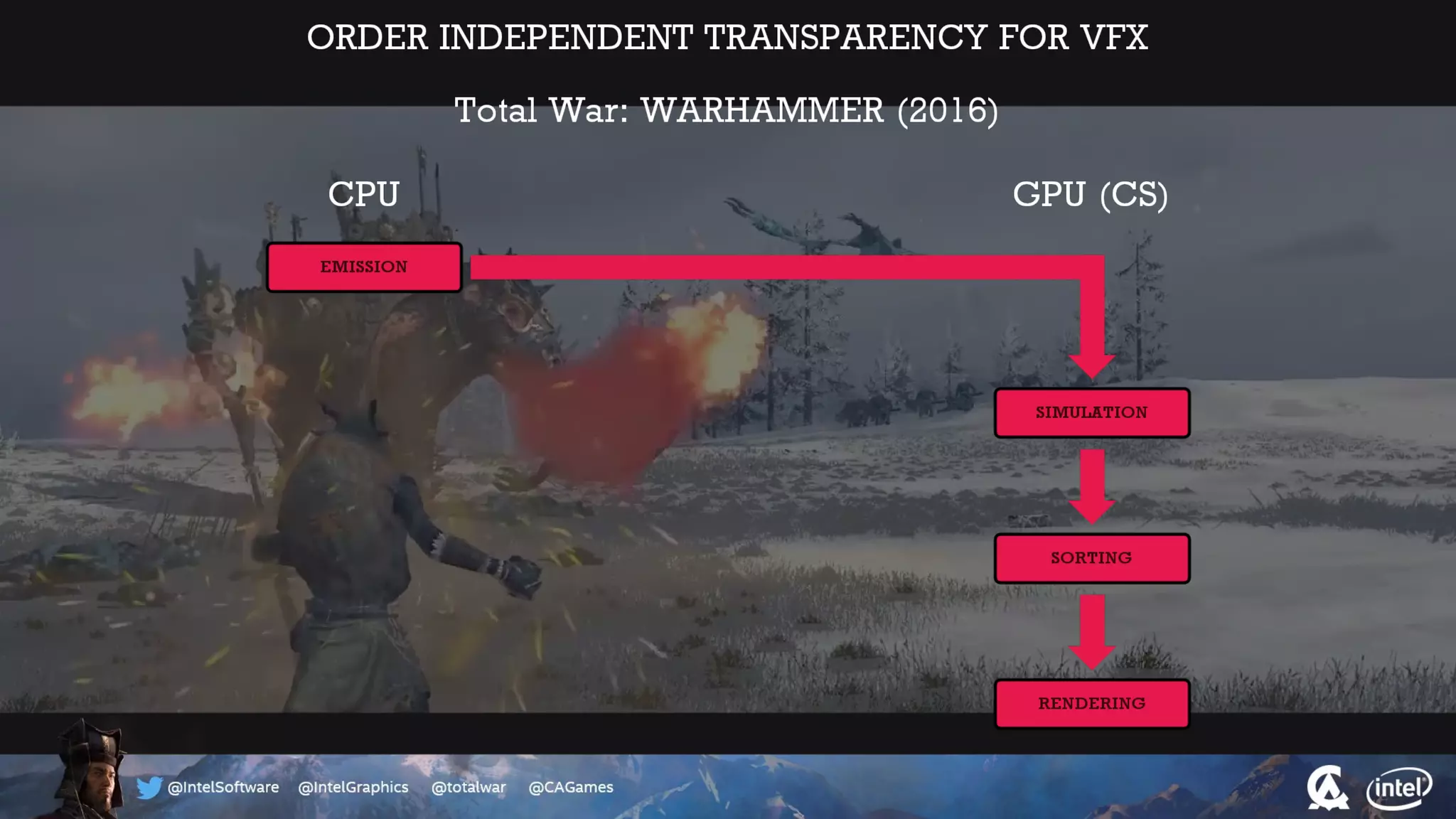

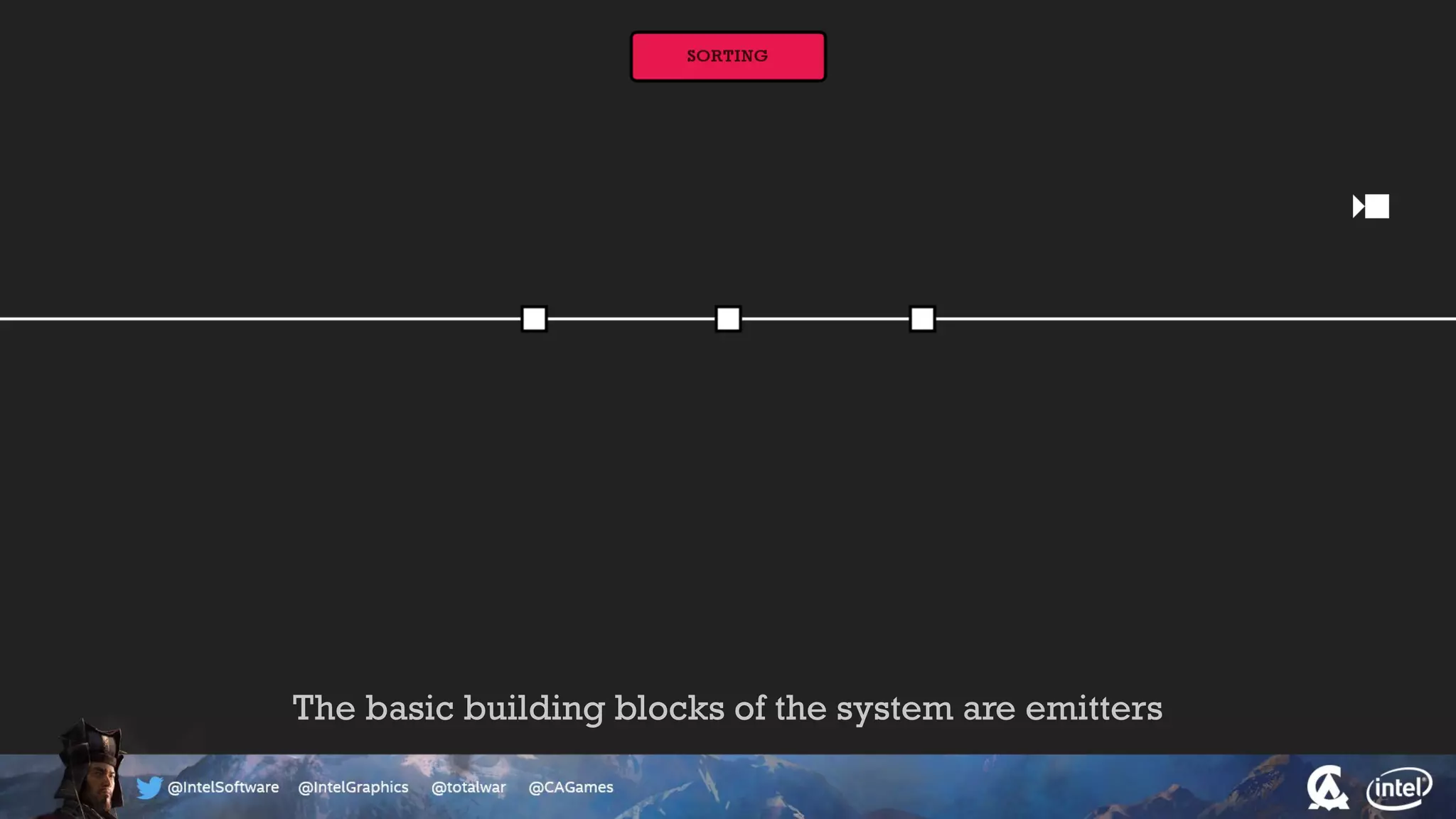

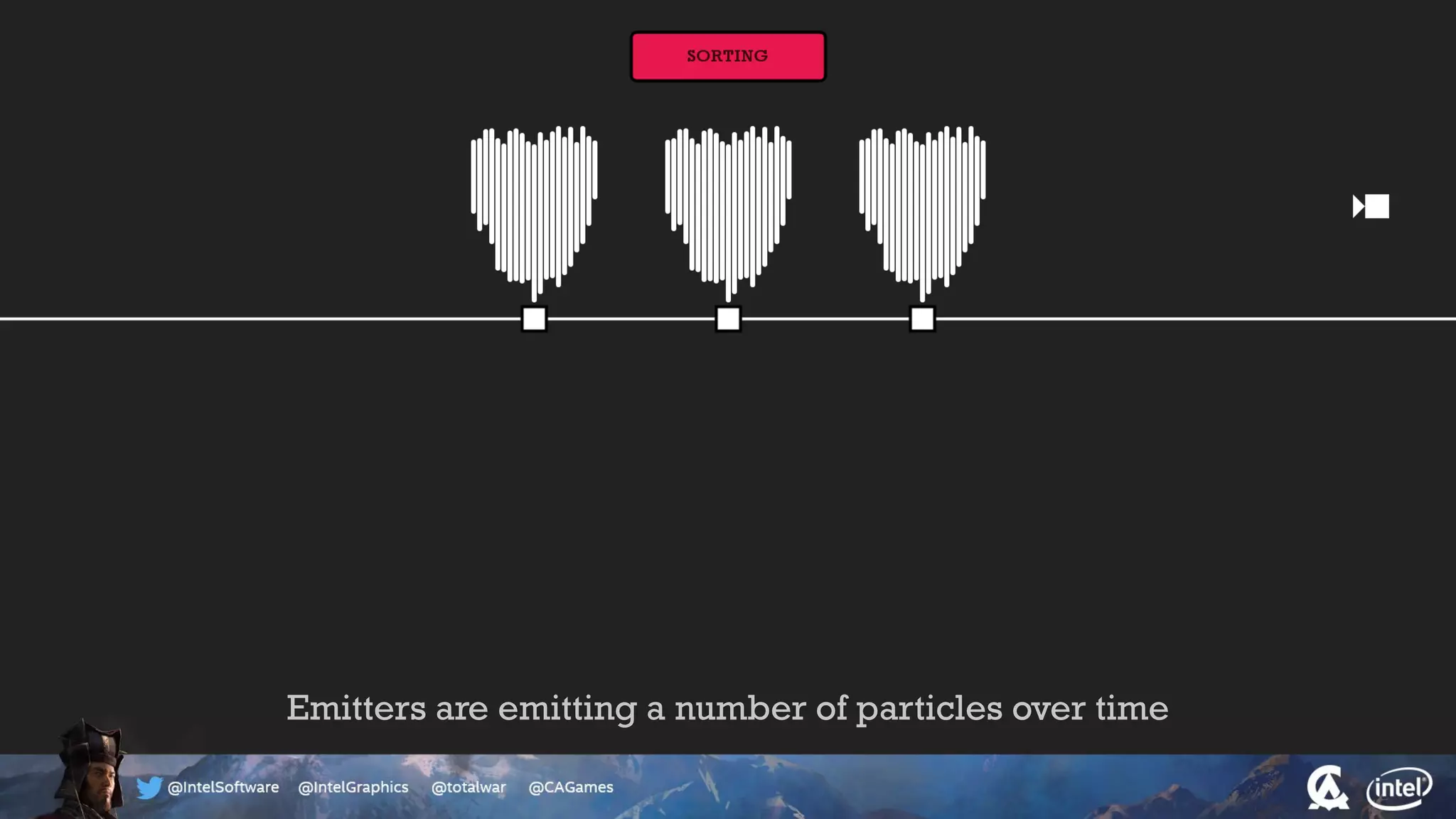

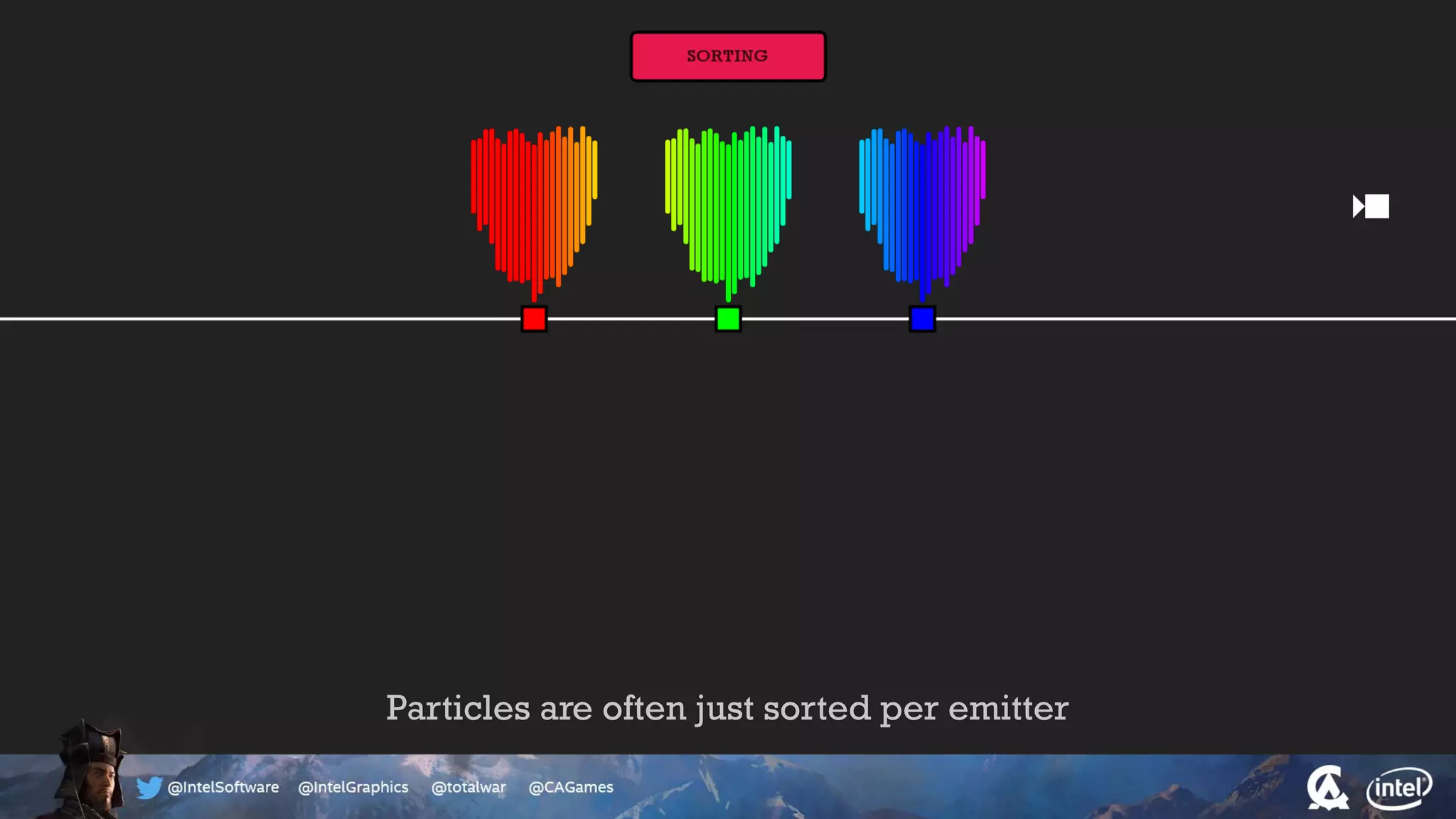

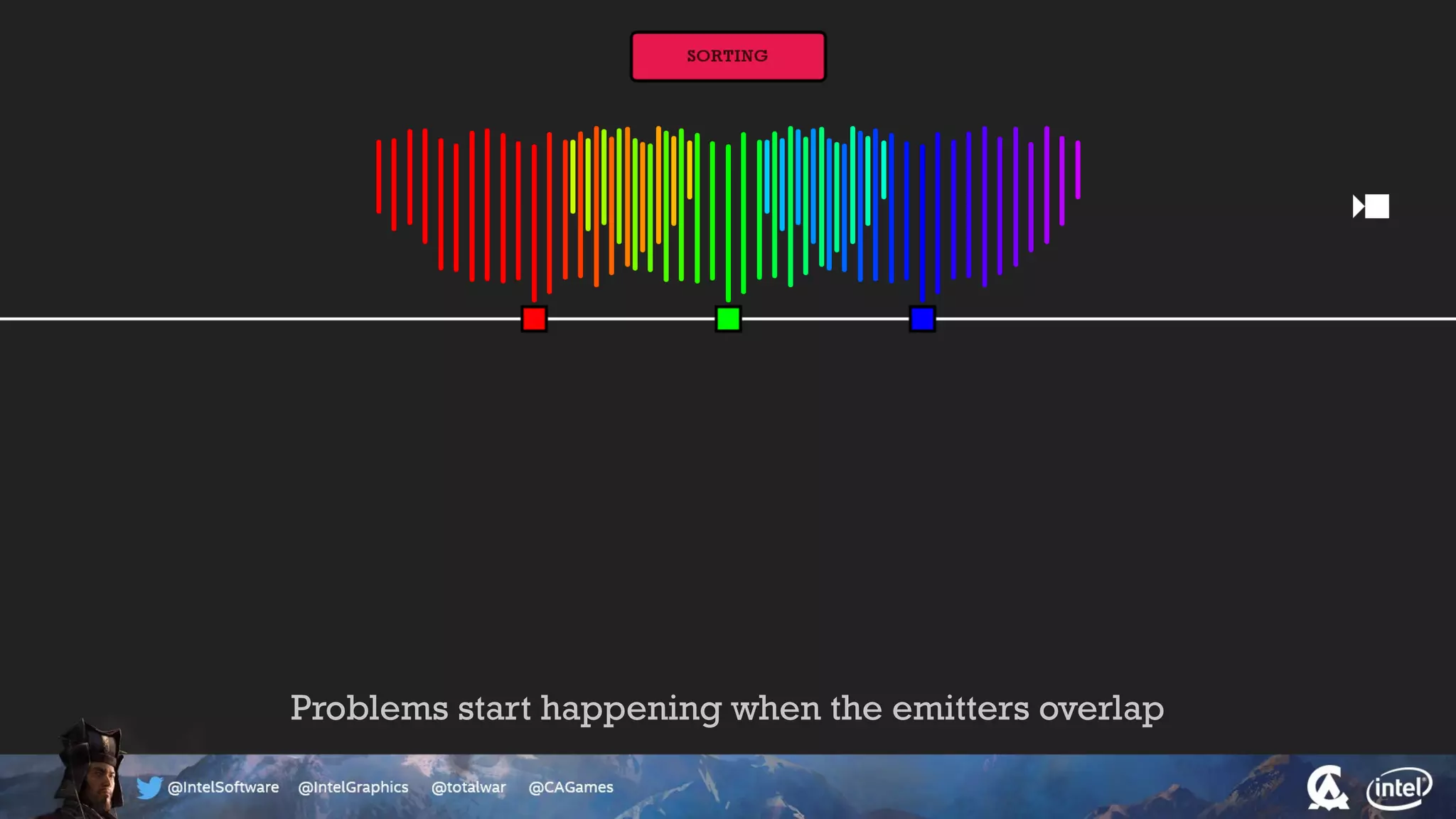

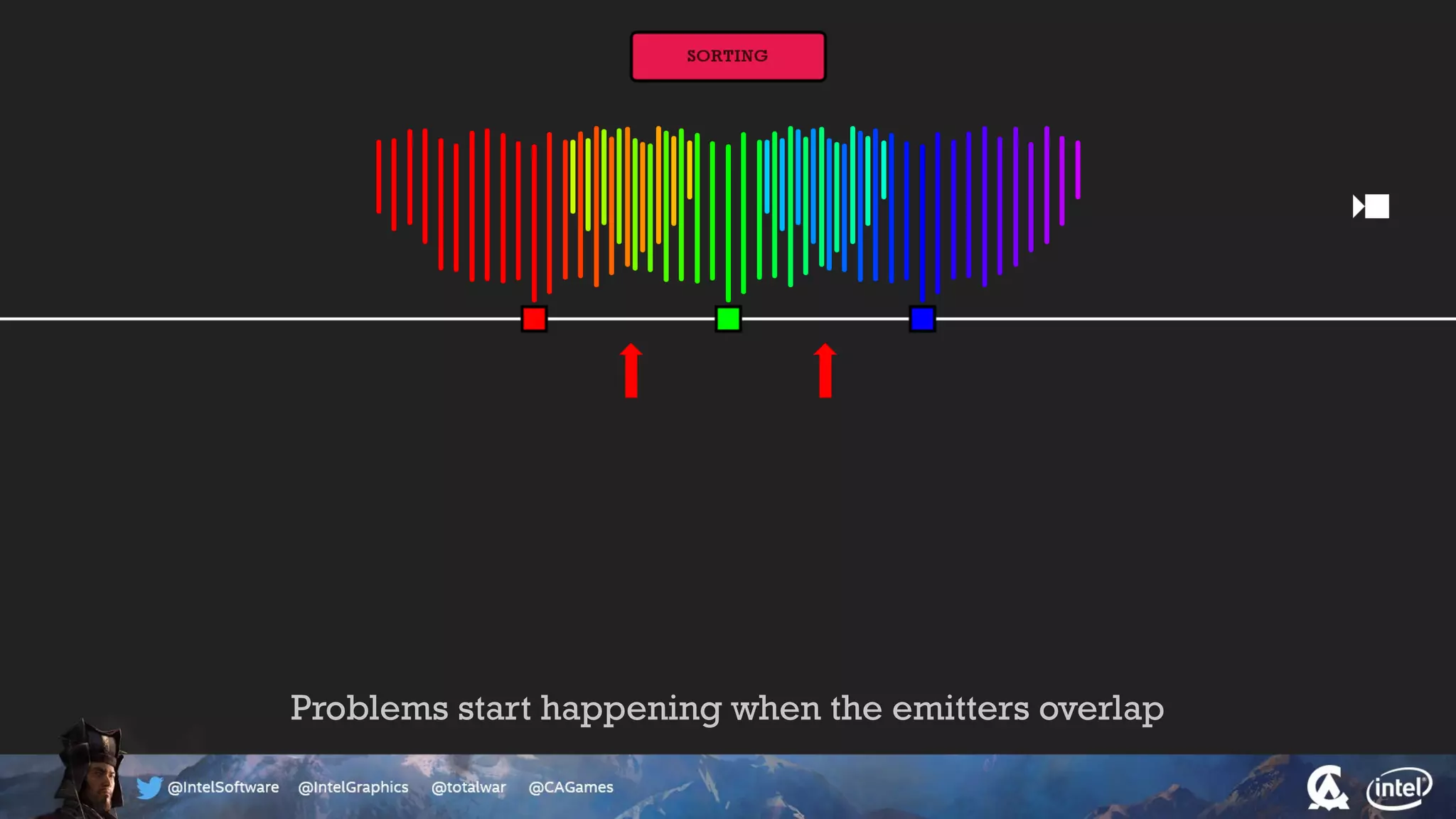

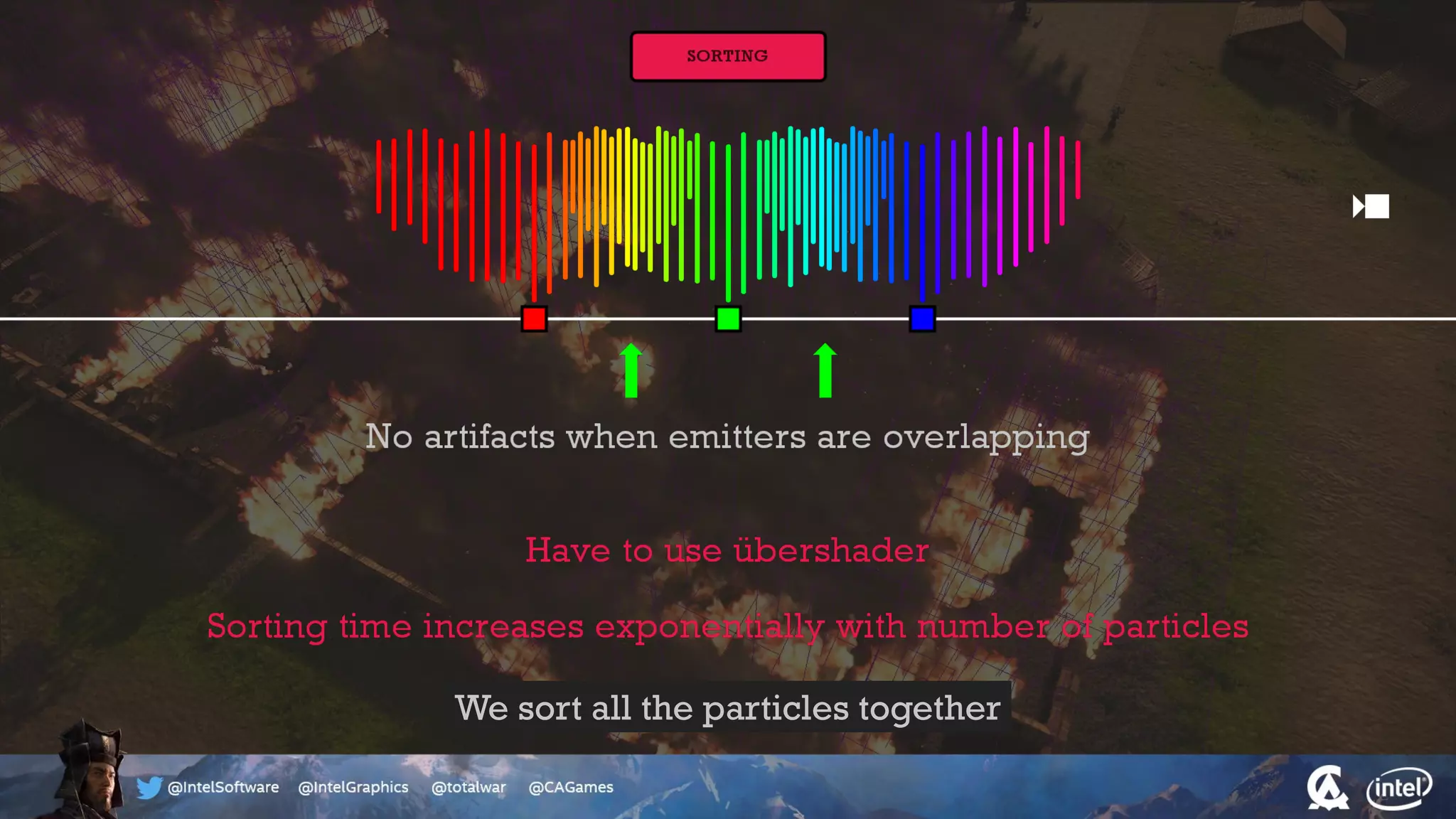

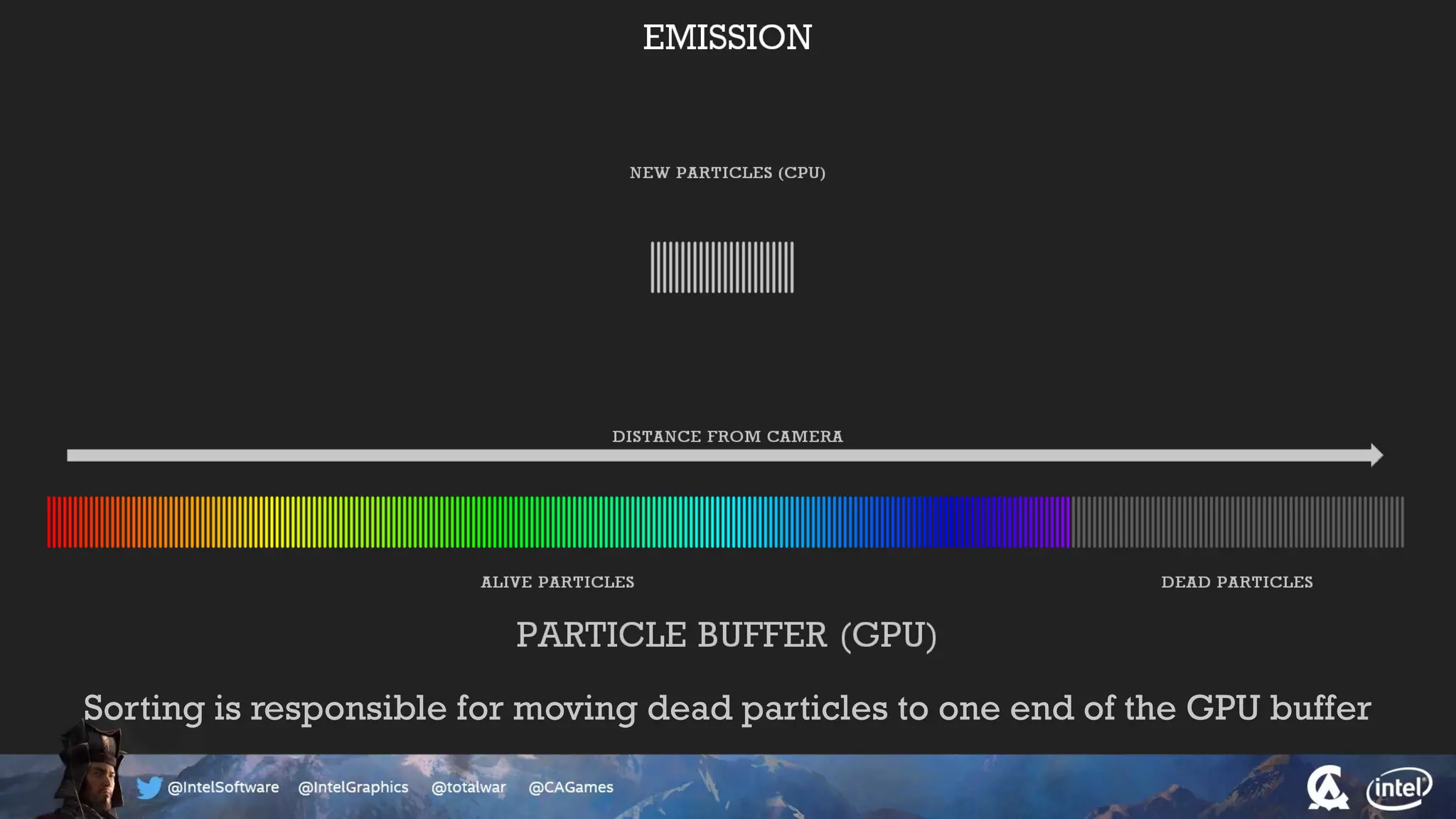

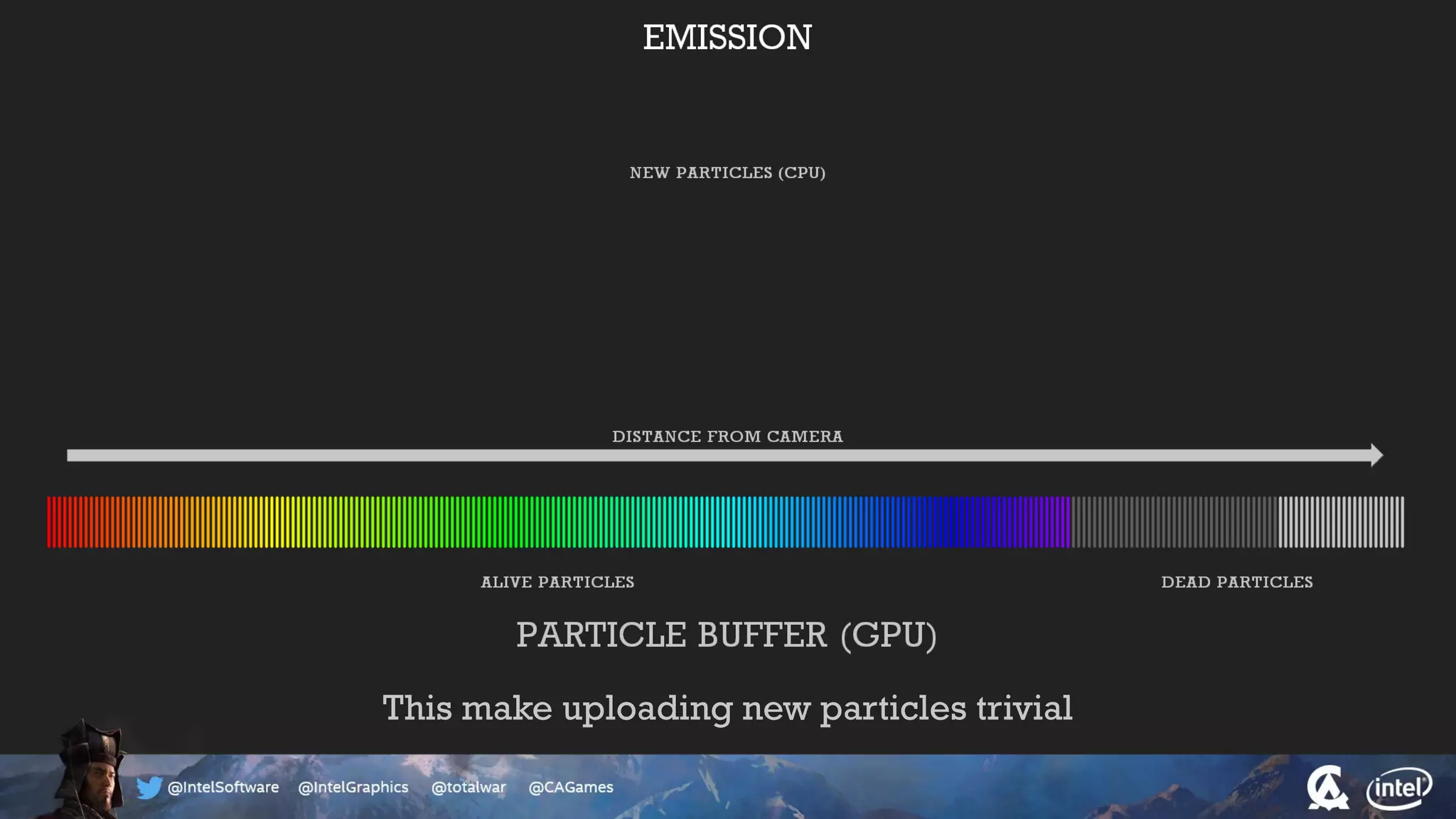

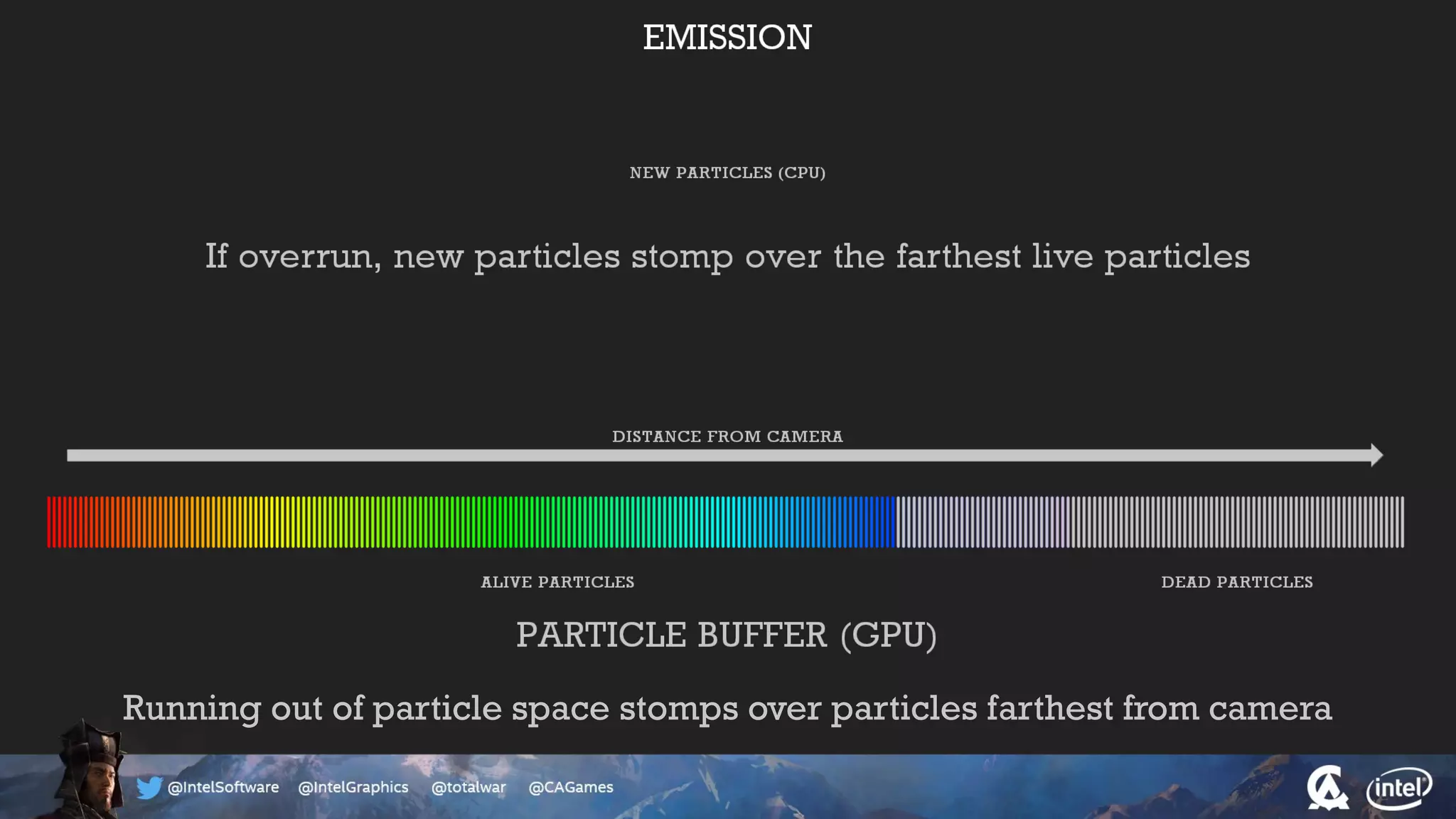

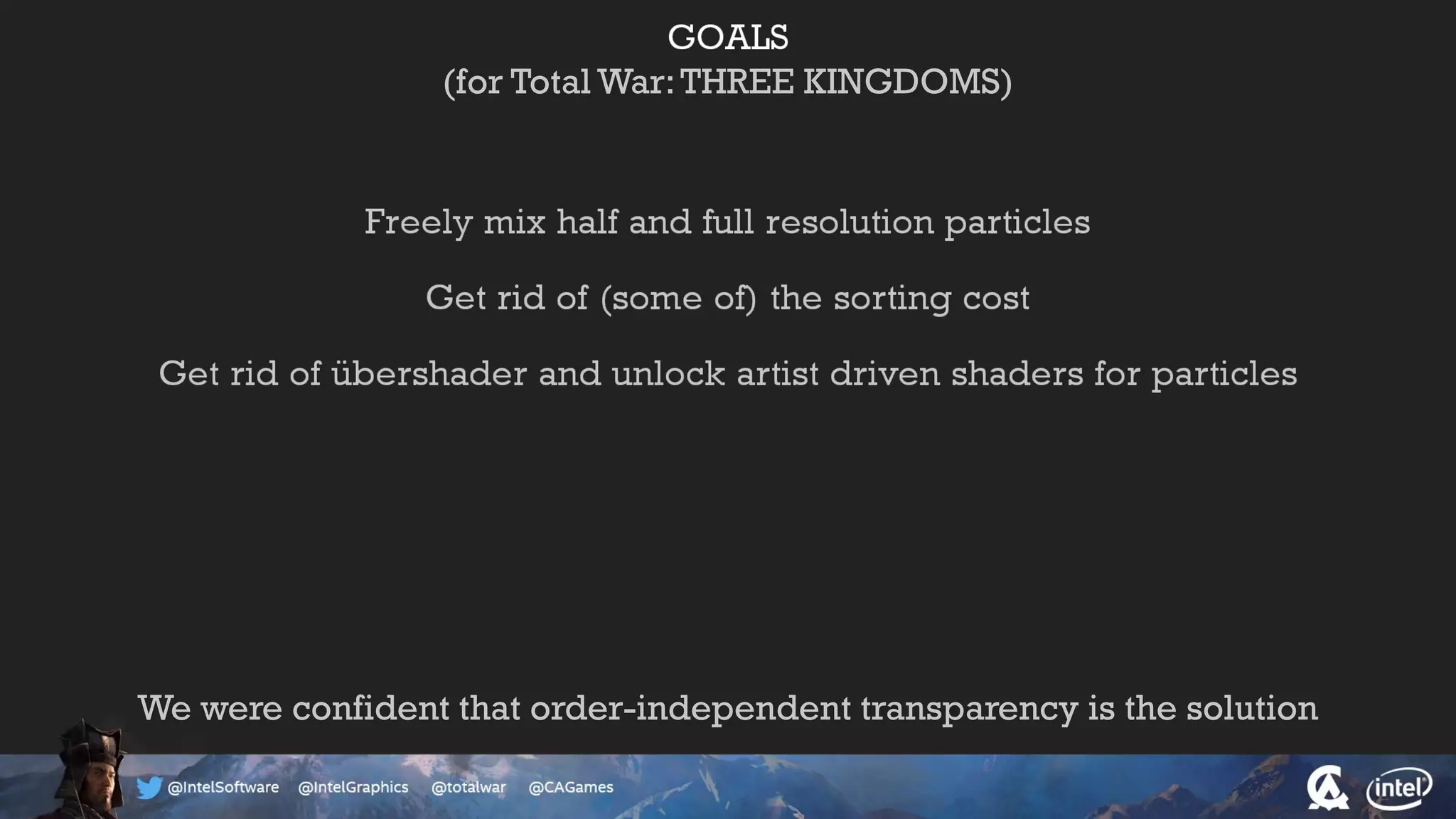

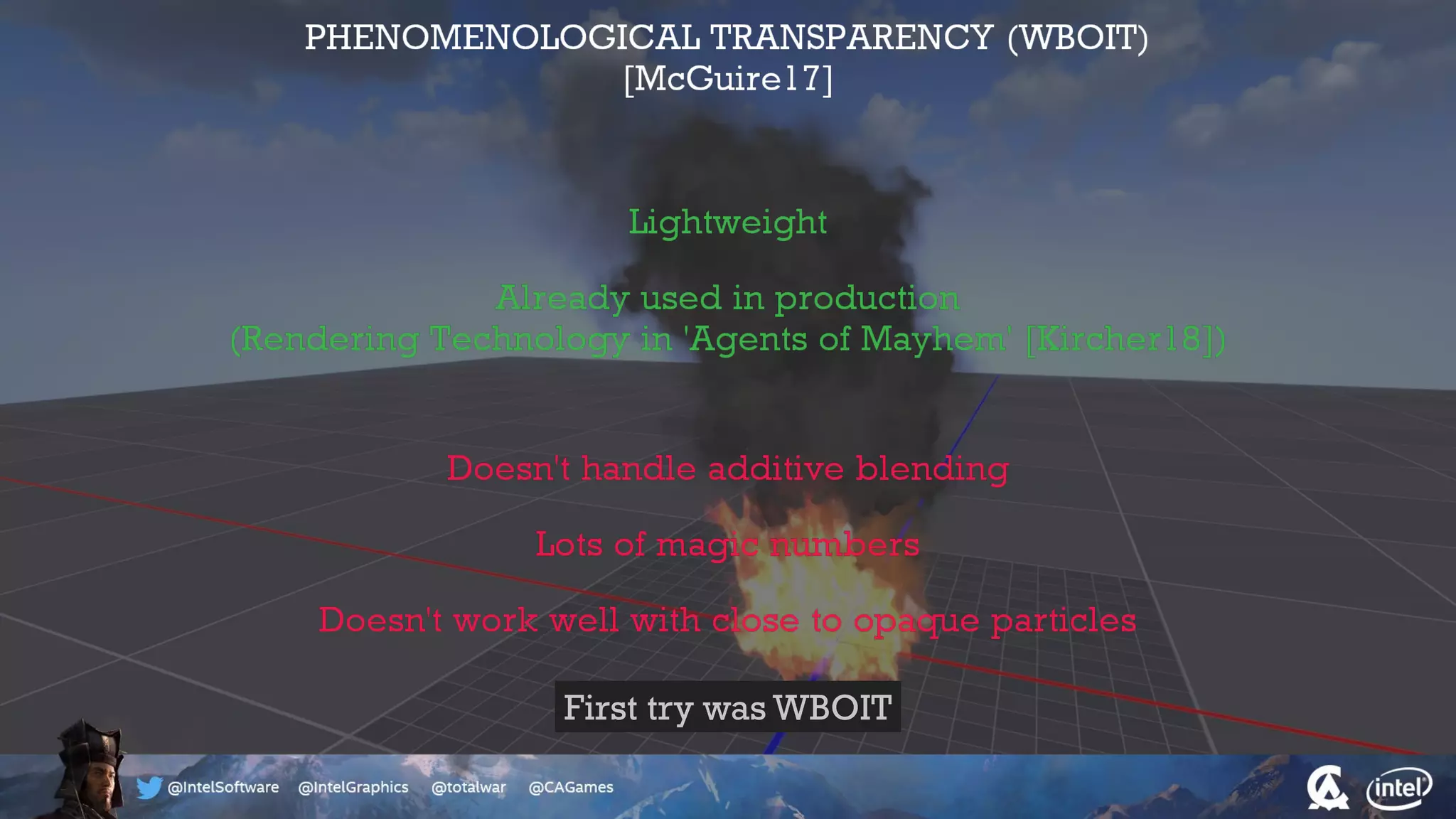

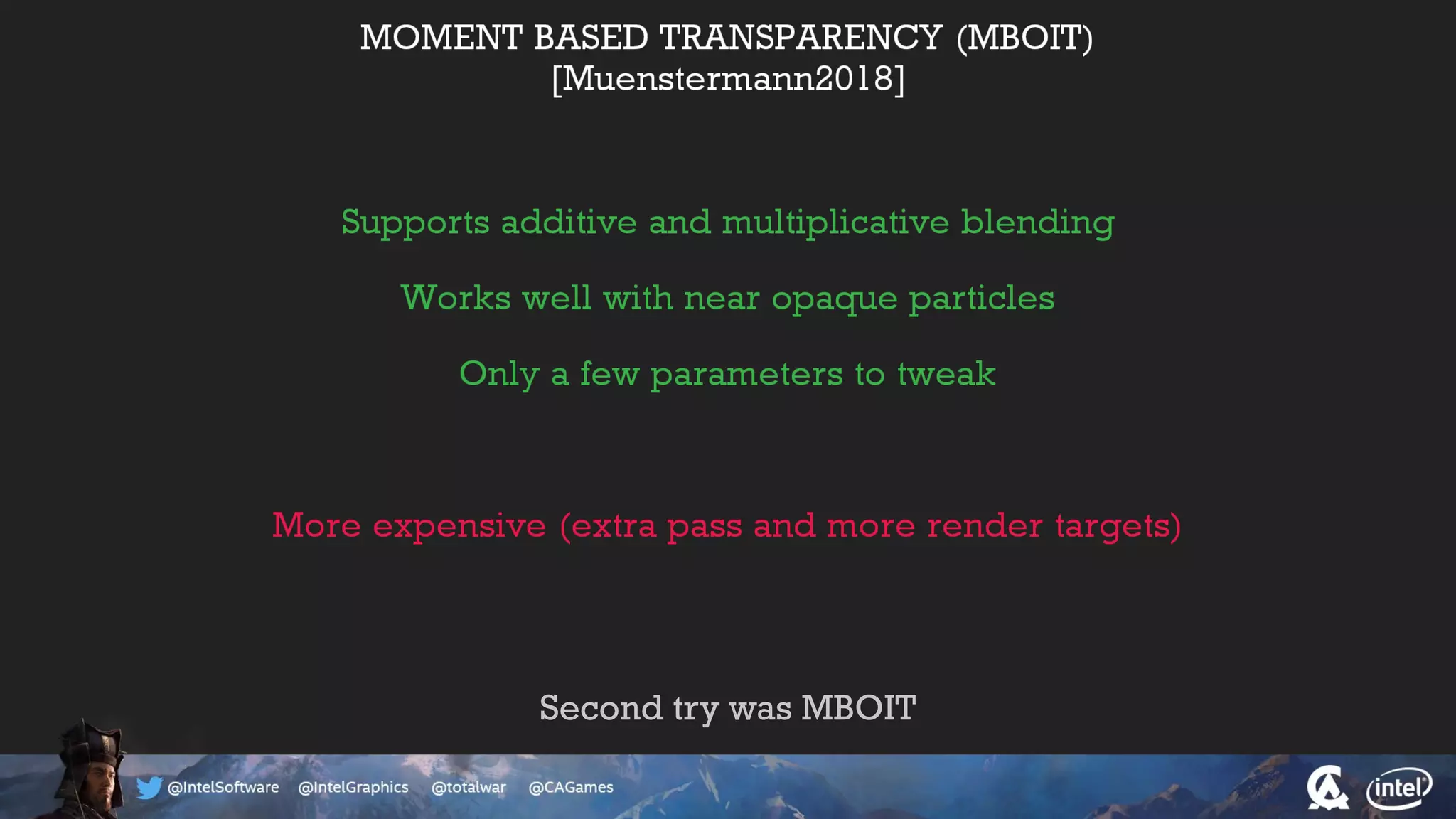

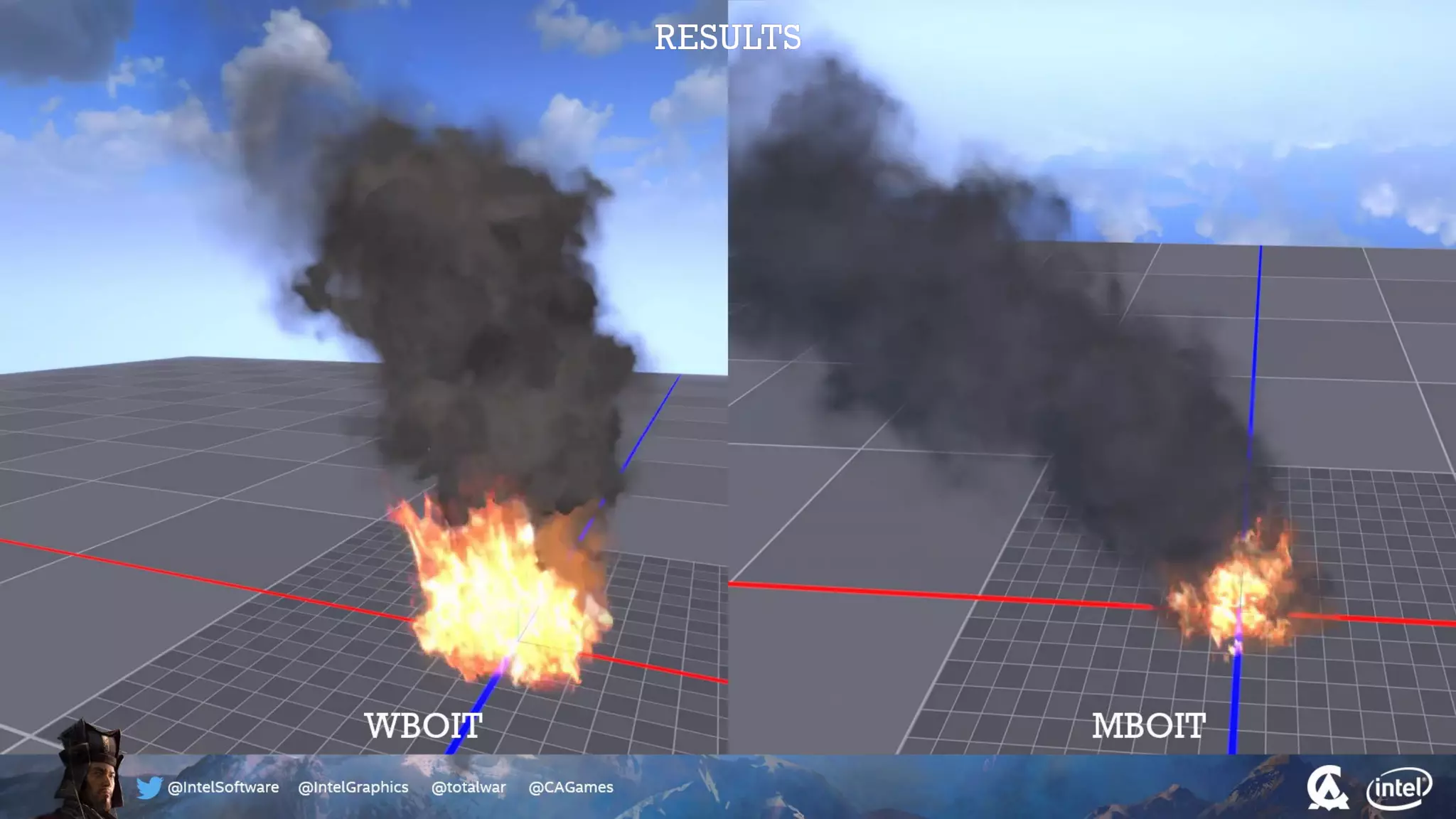

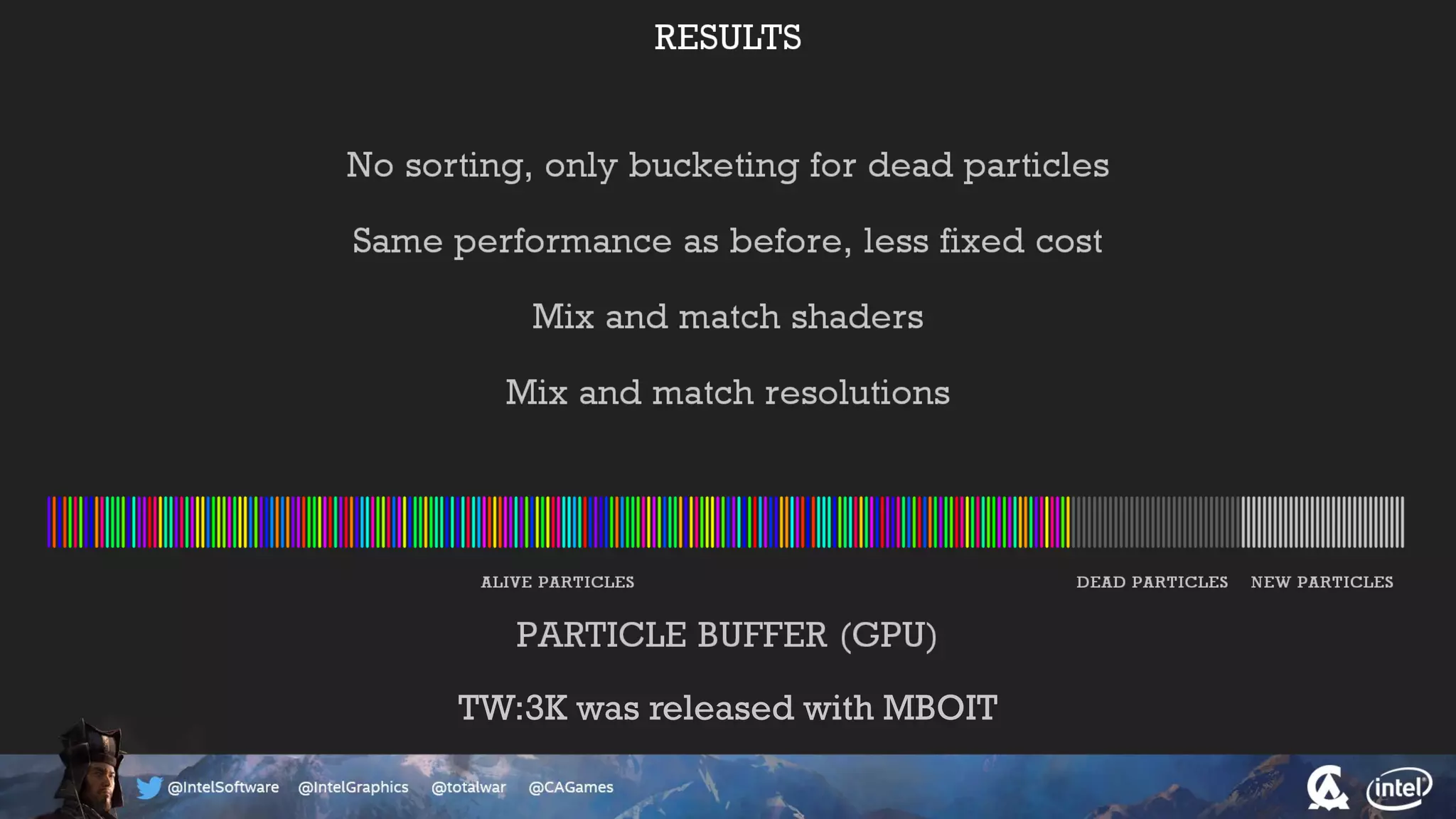

The document outlines the architecture and processing workflow of a turn-based battle RTS game, detailing how game state is built and rendered on worker threads for efficiency. It describes instance data management, mesh list handling, and the stages of the rendering pipeline, with a focus on optimizing tasks and keeping data access lockless. It also addresses the challenges that overlapping emitters cause for particle emissions, and the solutions explored, such as order-independent transparency techniques.